By Zheng Ziying (Nanmen)

How do you evaluate the sufficiency and effectiveness of software tests? How can you reduce a large number of test cases? In this article, Zheng Ziying, an Alibaba Cloud researcher, shares his insights into 18 challenges in software testing in the hope of providing you with some inspiration.

A dozen years ago, while working for my former employer, I came across a list named "Hard Problems in Testing" on the company's internal website. It contained 30 to 40 challenges collected from various departments. Unfortunately, I never found the list again, and I regret not keeping a copy. I searched for it many times, hoping to revisit the many areas for improvement in testing that those challenges pointed to. Ever since reading it, I have believed that software testing is at least as challenging as software development, if not more so.

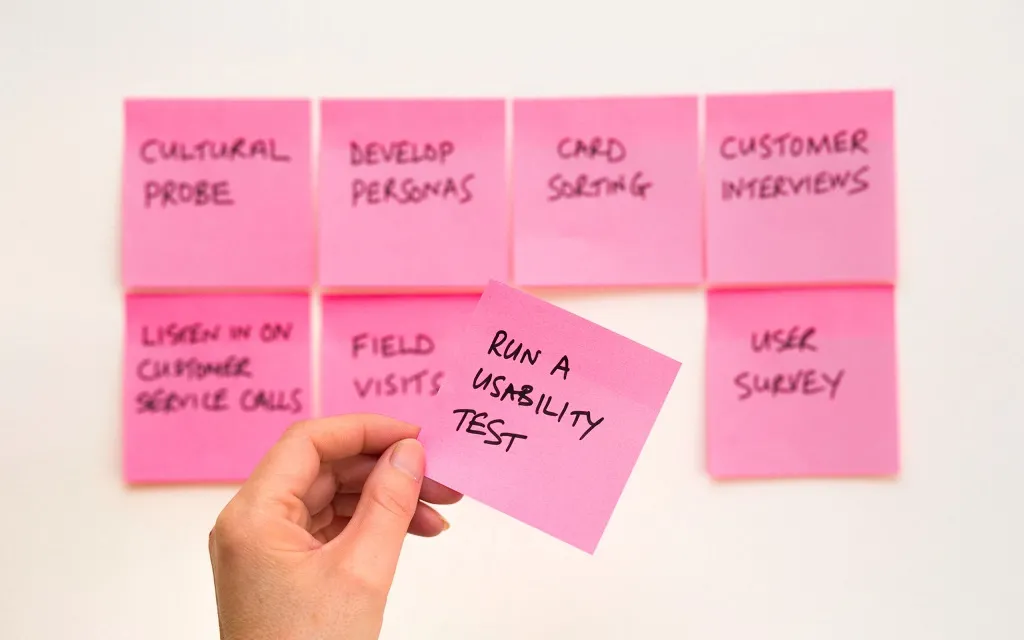

If I had to make another list of "Hard Problems in Testing", I would include the following items[1].

How do you measure the sufficiency of tests, for both new and existing code? The question may start with code coverage, but it goes far beyond it. A basic criterion of test sufficiency is that tests exercise all scenarios, states, state transition paths, event sequences, possible configurations, and possible data. Even if this criterion is met, you still cannot assume that testing is sufficient. Perhaps the best we can do is to approach sufficiency[2].
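Line coverage says nothing about which state transitions were exercised. As a minimal sketch of measuring one of the dimensions listed above, the Python code below computes state-transition coverage from the state traces recorded while test cases run. The order states and the `MODEL_TRANSITIONS` set are hypothetical; in practice the model would come from the system's design.

```python
# Hypothetical model of a payment order's legal state transitions (illustrative only).
MODEL_TRANSITIONS = {
    ("CREATED", "PAID"),
    ("CREATED", "CLOSED"),
    ("PAID", "REFUNDED"),
    ("PAID", "SETTLED"),
}


def transitions_in(trace):
    """Return the (from_state, to_state) pairs observed in one test's state trace."""
    return set(zip(trace, trace[1:]))


def transition_coverage(traces, model=MODEL_TRANSITIONS):
    """Return (fraction of model transitions exercised, sorted list of uncovered transitions)."""
    observed = set()
    for trace in traces:
        observed |= transitions_in(trace)
    covered = observed & model
    missing = sorted(model - covered)
    return len(covered) / len(model), missing


# State traces recorded while two test cases ran against the system under test.
traces = [
    ["CREATED", "PAID", "SETTLED"],
    ["CREATED", "CLOSED"],
]
ratio, missing = transition_coverage(traces)
print(f"transition coverage: {ratio:.0%}, uncovered: {missing}")
# prints: transition coverage: 75%, uncovered: [('PAID', 'REFUNDED')]
```

The same idea extends to event sequences and configuration combinations: each is another set of "requirements" that the recorded traces either hit or miss.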

How do you evaluate the effectiveness of test cases, that is, their ability to detect bugs? Effectiveness and sufficiency are orthogonal to each other. You can evaluate the effectiveness of test cases by using forward analysis. For example, you can analyze whether your test cases verify all the data that the system under test (SUT) stores during the test. A more common approach is mutation testing, in which we inject artificial bugs into the code under test and check how many of them our test cases detect. Mutation testing has already been implemented on a large scale, and we should now focus on (1) avoiding the pesticide paradox and (2) injecting bugs into configurations and data, not only into the code under test.
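The following Python sketch shows the core loop of mutation testing on a toy function. The function `is_eligible_for_refund` and its three hand-written mutants are hypothetical; real tools (for example, PIT for Java) generate mutants automatically by rewriting operators and constants. Surviving mutants point to weak spots in the suite, here the missing boundary cases `days_since_payment == 30` and `amount == 0`.

```python
def is_eligible_for_refund(amount, paid, days_since_payment):
    """Hypothetical code under test: refunds allowed for paid orders within 30 days."""
    return paid and amount > 0 and days_since_payment <= 30


# Each mutant is a copy of the function with exactly one artificial bug injected.
MUTANTS = {
    "<= became <": lambda a, p, d: p and a > 0 and d < 30,
    "and became or": lambda a, p, d: p or (a > 0 and d <= 30),
    "> 0 became >= 0": lambda a, p, d: p and a >= 0 and d <= 30,
}

# The test suite under evaluation: (arguments, expected result).
CASES = [
    ((100, True, 10), True),
    ((100, False, 10), False),
    ((100, True, 31), False),
]


def run_suite(func):
    """Return True if every test case passes against func."""
    return all(func(*args) == expected for args, expected in CASES)


def mutation_score(original, mutants):
    """Fraction of mutants killed (detected) by the suite, plus the names of survivors."""
    if not run_suite(original):
        raise ValueError("the suite must pass on the original code first")
    killed = [name for name, mutant in mutants.items() if not run_suite(mutant)]
    survived = [name for name in mutants if name not in killed]
    return len(killed) / len(mutants), survived


score, survived = mutation_score(is_eligible_for_refund, MUTANTS)
print(f"mutation score {score:.2f}; survivors: {survived}")
```

Adding test cases at `days_since_payment == 30` and `amount == 0` would kill the two survivors.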

Here is a famous quote from the advertising industry: Half the money I spend on advertising is wasted; the trouble is I don't know which half[3].

Software testing faces a similar problem: much of the time you spend on numerous test cases is wasted, but you do not know which part. Time is wasted in the following ways.

Equivalence class analysis involves extremely high costs. If you skip it, you may miss a particular piece of logic for a particular merchant in a particular country. Some services even require manual analysis. Are there more general, complete, and reliable technical means for equivalence class analysis?

Assume that you have N test cases, M of which can be removed without affecting the testing results. How do you determine whether such M test cases exist, and how do you identify them?

During an internal promotion review for a P9 role on the quality track, the committee asked: "How can we reduce the number of test cases in the future?" This seemingly simple question is actually very difficult to answer. In principle, if test sufficiency and test effectiveness can be measured well and at low cost, you can remove test cases by continuous trial and error. This is an engineering approach; other approaches may be derived from theory.
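The trial-and-error idea can be sketched in Python as follows, assuming each test case's profile (the requirements it covers and the mutants it kills) has already been measured, for example by coverage tooling and mutation testing. `PROFILE` and the requirement and mutant names are hypothetical.

```python
def suite_metrics(suite, profile):
    """Union of requirements covered and mutants killed by the given test cases."""
    covered, killed = set(), set()
    for test in suite:
        covered |= profile[test]["covers"]
        killed |= profile[test]["kills"]
    return covered, killed


def reduce_suite(tests, profile):
    """Try removing each test in turn; keep the removal only if neither metric drops."""
    kept = list(tests)
    baseline = suite_metrics(kept, profile)
    for test in tests:
        candidate = [t for t in kept if t != test]
        if suite_metrics(candidate, profile) == baseline:
            kept = candidate  # nothing measurable was lost, so this test is redundant
    return kept


# Hypothetical measurements: requirements each test covers and mutants each test kills.
PROFILE = {
    "t1": {"covers": {"pay", "refund"}, "kills": {"m1"}},
    "t2": {"covers": {"pay"}, "kills": {"m1"}},
    "t3": {"covers": {"close"}, "kills": {"m2"}},
    "t4": {"covers": {"refund"}, "kills": {"m3"}},
}
print(reduce_suite(["t1", "t2", "t3", "t4"], PROFILE))
```

The result depends on the order in which removals are tried (trying `t2` first would keep `t1` and drop `t2` instead), and it is only as trustworthy as the sufficiency and effectiveness metrics behind it.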

Many teams find it difficult to decide whether and how to perform end-to-end regression testing. The key question is whether integration testing can be skipped. Assume that the boundaries between systems are clearly and exhaustively defined. When you change the code of one system, can you skip integration testing with its upstream and downstream systems and verify the changed code only within the agreed boundaries?

Many people, including me, believe that this is possible. However, we can neither prove it nor dare to skip integration testing in practice. We have no readily replicable success stories in this area. We do not even have a methodology that tells development and quality assurance (QA) teams how to achieve the effect of regression testing without integrating upstream and downstream systems during testing.

I am trying to find a way to skip integration testing, but it is difficult and there are many dead ends. Maybe one day, someone will come to me with a theoretical proof that proves my idea to be impossible.
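One partial technique for "verifying the changed code within the agreed boundaries" is contract testing (consumer-driven contract tools such as Pact follow this idea). The Python sketch below assumes the boundary is written down as a contract of field names, types, and allowed status values; `CONTRACT`, the handlers, and the field names are hypothetical. It checks a changed provider in isolation, but it does not prove that integration testing can be skipped, which is exactly the open problem described above.

```python
# Hypothetical contract agreed between an order service and its downstream payment service.
CONTRACT = {
    "request": {"order_id": str, "amount_cents": int, "currency": str},
    "response": {"status": str, "payment_id": str},
    "allowed_status": {"SUCCESS", "FAILED", "PENDING"},
}


def violations(message, schema):
    """List the fields of message that are missing or have the wrong type."""
    problems = []
    for field, expected_type in schema.items():
        if field not in message:
            problems.append(f"missing {field}")
        elif not isinstance(message[field], expected_type):
            problems.append(f"{field} should be {expected_type.__name__}")
    return problems


def verify_provider(handler, sample_requests, contract=CONTRACT):
    """Call the changed provider with contract-conforming requests and check its responses."""
    problems = []
    for request in sample_requests:
        if violations(request, contract["request"]):
            raise ValueError(f"sample request breaks the contract: {request}")
        response = handler(request)
        problems += violations(response, contract["response"])
        if response.get("status") not in contract["allowed_status"]:
            problems.append(f"unexpected status {response.get('status')!r}")
    return problems


def new_handler(request):
    """Hypothetical changed provider code that renamed a status and changed an ID type."""
    return {"status": "OK", "payment_id": 42}


print(verify_provider(new_handler, [{"order_id": "o1", "amount_cents": 100, "currency": "USD"}]))
```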

Analysis omissions can cause a variety of failures. You may omit a corner case in system analysis during development, or forget a special scenario during testing. Your compatibility evaluation may fail to identify an existing compatibility problem. Most of the time, analysis omissions are unknown unknowns. Is there a method or technique that can be used to reduce analysis omissions?

Test cases can be generated automatically by using several techniques, such as fuzz testing, model-based testing (MBT), recording and playback, and traffic bifurcation. Some of these techniques, such as single-system recording and playback and traffic bifurcation, are quite mature, while others are not. For example, fuzz testing is still being explored by various teams, and MBT has not been implemented on a large scale. We have also explored generating test cases from product requirement documents (PRDs) by using natural language processing (NLP). However, generating test oracles can sometimes be more challenging than generating test steps. In any case, automatic test case generation has great potential and remains to be explored further.
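The oracle problem is easier for some functions than others. The Python sketch below pairs random input generation (a very basic form of fuzzing) with a round-trip property, `decode(encode(s)) == s`, as the test oracle. The run-length encoder is a hypothetical function under test with a deliberate bug: its output is ambiguous when the input contains digits. Libraries such as Hypothesis offer production-grade property-based testing for Python; finding such properties for business logic is much harder.

```python
import random
import re
from itertools import groupby


def rle_encode(s):
    """Hypothetical code under test: run-length encoding, e.g. 'aaab' -> '3a1b'."""
    return "".join(f"{len(list(group))}{ch}" for ch, group in groupby(s))


def rle_decode(text):
    """Inverse of rle_encode. Bug: it assumes the original input never contains digits."""
    return "".join(ch * int(count) for count, ch in re.findall(r"(\d+)(\D)", text))


def fuzz_round_trip(trials=500, seed=0, alphabet="ab1", max_len=8):
    """Generate random strings; the oracle is the property decode(encode(s)) == s."""
    rng = random.Random(seed)  # fixed seed so any failure is reproducible
    for _ in range(trials):
        s = "".join(rng.choice(alphabet) for _ in range(rng.randint(0, max_len)))
        if rle_decode(rle_encode(s)) != s:
            return s  # counterexample found
    return None


print("counterexample:", repr(fuzz_round_trip()))
```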

You need to troubleshoot problems both online and offline. Automatic troubleshooting solutions for basic problems tend to have two limitations. First, they are customized rather than universal. Second, they rely on manually accumulated rules, so-called expert experience, which are captured by recording troubleshooting steps and replaying them. However, each problem is unique, so a previously proven troubleshooting step may not work when a slightly different problem occurs. Some existing technologies, such as automatic trace comparison, help with troubleshooting and automatic bug localization.
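As a minimal sketch of automatic trace comparison, the Python code below aligns a failing call trace with a known-good one by using the standard library's `difflib.SequenceMatcher` and reports the first divergence. The span names are hypothetical, and the sketch assumes traces have already been normalized (request IDs, timestamps, and other volatile values stripped), which in practice is much of the work.

```python
from difflib import SequenceMatcher


def first_divergence(good_trace, bad_trace):
    """Return (tag, good_spans, bad_spans) for the first difference, or None if the traces match.

    tag is one of difflib's opcode tags: 'replace', 'delete', or 'insert'.
    """
    matcher = SequenceMatcher(a=good_trace, b=bad_trace, autojunk=False)
    for tag, i1, i2, j1, j2 in matcher.get_opcodes():
        if tag != "equal":
            return tag, good_trace[i1:i2], bad_trace[j1:j2]
    return None


# Hypothetical normalized traces: the failing request took a fallback path instead of debiting.
GOOD = ["gateway.route", "risk.check", "account.debit", "ledger.write"]
BAD = ["gateway.route", "risk.check", "risk.fallback", "ledger.write"]
print(first_divergence(GOOD, BAD))
# prints: ('replace', ['account.debit'], ['risk.fallback'])
```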

Well-known industry practices in automatic bug fixing include Precfix, developed by Alibaba Cloud, and SapFix, developed by Facebook. However, existing technical solutions have various limitations and deficiencies. We still have a long way to go in this field.

An important principle in test case design is avoiding dependencies between test cases: the execution result of one test case should not be affected by the execution process or result of another. Based on this principle, the traditional best practice is to keep each test case self-contained, so that it triggers the required background processing flows and prepares the required test data on its own. However, if every test case prepares its test data from scratch, execution is quite inefficient. How, then, do you reduce the time spent preparing test data without violating the principle of avoiding dependencies?

I recommend using a data bank. After the first test case finishes, it hands over the data it generated to the data bank, for example, a card-bound member who meets the Know Your Customer (KYC) rules of a specific region, a closed transaction, or a merchant that has completed the signing process. When the second test case is about to start, it asks the data bank for a merchant that meets specific conditions. If the merchant deposited by the first test case happens to meet those conditions, the data bank "lends" it to the second test case. Until the second test case returns the merchant, the data bank cannot lend it to any other test case.

After a period of operation, the data bank learns what data each test case needs and generates. It acquires this knowledge by learning, without any manual description, so the mechanism also applies to existing test cases in existing systems. This knowledge enables the data bank to implement two optimizations.

The test data bank can lend test data to test cases either exclusively or in a shared manner. For shared data, you can grant test cases read-write or read-only permission. For example, you can allow multiple test cases to use one merchant to test order placement and payment, while prohibiting them from modifying the merchant, such as by having it sign an agreement again.
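The lending rules described above can be sketched as a small in-memory Python class. `DataBank`, `deposit`, `borrow`, and `give_back` are hypothetical names for an idea the author has not implemented; the sketch omits the learning part and assumes test cases describe their needs with a predicate. Exclusive loans allow modification by one borrower; shared loans hand out read-only views to many borrowers.

```python
import threading
from types import MappingProxyType


class DataBank:
    """Hypothetical in-memory test data bank (an idea sketch, not an existing library)."""

    def __init__(self):
        self._lock = threading.Lock()  # test cases may run in parallel threads
        self._items = []  # each entry: {"data": dict, "owner": test id or None, "readers": set}

    def deposit(self, data):
        """A finished test case hands over data it created, e.g. a merchant that has signed."""
        with self._lock:
            self._items.append({"data": data, "owner": None, "readers": set()})

    def borrow(self, test_id, predicate, mode="exclusive"):
        """Lend the first available item matching predicate; return (ticket, data) or None.

        exclusive: a single borrower that may modify the data.
        shared: any number of borrowers, each receiving a read-only view.
        """
        with self._lock:
            for ticket, item in enumerate(self._items):
                if item["owner"] is not None or not predicate(item["data"]):
                    continue
                if mode == "exclusive" and not item["readers"]:
                    item["owner"] = test_id
                    return ticket, item["data"]
                if mode == "shared":
                    item["readers"].add(test_id)
                    # Read-only view; nested mutable values would still need deeper protection.
                    return ticket, MappingProxyType(item["data"])
        return None  # nothing suitable: the test case prepares its own data from scratch

    def give_back(self, test_id, ticket):
        """Return borrowed data so that other test cases can use it."""
        with self._lock:
            item = self._items[ticket]
            if item["owner"] == test_id:
                item["owner"] = None
            item["readers"].discard(test_id)
```

For example, after `bank.deposit({"kind": "merchant", "signed": True})`, an exclusive `borrow` blocks every other borrower until `give_back` is called, while two shared borrows can coexist but block exclusive ones.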

If resources such as switches and scheduled tasks are also managed by the data bank as test data, test cases can run in parallel as much as possible. For example, assume that N test cases need to modify the value of a switch. If they run in parallel, they will interfere with each other, so they must run serially. However, each of the N test cases can run in parallel with test cases outside this group. Once the data bank learns how each test case uses resources and how long each test case takes on average, it can arrange the execution order optimally and adjust it in real time during batch execution, maximizing parallelism.
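A simple static version of this scheduling can be sketched in Python: test cases that use the same switch or scheduled task conflict, so they are placed in different batches; batches run one after another, and tests inside a batch run in parallel. This is first-fit greedy graph coloring with longer tests placed first, a common heuristic (optimal coloring is NP-hard in general). It does not adjust the order in real time as the text envisions, and the test names, resources, and durations are hypothetical.

```python
def plan_batches(resource_usage, durations):
    """Group tests into batches in which no two tests share an exclusive resource.

    resource_usage: test -> set of exclusive resources (switches, scheduled tasks).
    durations: test -> average running time in seconds, learned from earlier runs.
    """
    batches = []  # each entry: (set of tests, set of resources already held by the batch)
    for test in sorted(resource_usage, key=lambda t: -durations[t]):
        needs = resource_usage[test]
        for tests, held in batches:
            if not (needs & held):
                tests.add(test)
                held |= needs
                break
        else:
            batches.append(({test}, set(needs)))
    return [sorted(tests) for tests, _ in batches]


def estimated_wall_time(batches, durations):
    """Batches run in series; each batch takes as long as its slowest test."""
    return sum(max(durations[t] for t in batch) for batch in batches)


usage = {"t_a": {"switch:new_risk"}, "t_b": {"switch:new_risk"}, "t_c": set(), "t_d": {"task:settle"}}
durations = {"t_a": 30, "t_b": 20, "t_c": 50, "t_d": 10}
batches = plan_batches(usage, durations)
print(batches, estimated_wall_time(batches, durations))
# prints: [['t_a', 't_c', 't_d'], ['t_b']] 70
```

Run serially, the same four tests would take 110 seconds; only `t_a` and `t_b` are forced apart because both modify the same switch.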

Such a data bank is universally applicable: services differ only in their business objects and resources, and these differences can be handled through plug-ins. If such a general-purpose data bank[4] were built and easy to adopt, it would significantly improve the testing efficiency of many small and medium-sized software teams. So far, the data bank is merely an idea of mine; I have not yet had a chance to put it into practice.

A distributed system can encounter a variety of exceptions in its internal and external interactions, including access timeouts, network connection and latency jitter, disconnections, Domain Name System (DNS) resolution failures, and exhaustion of resources such as disks, CPU, memory, and connection pools. How do you ensure that system behaviors, including business logic and self-protection measures such as downgrade and circuit breaking, meet expectations in all these situations? We have performed many online drills, which are essentially exception tests. However, how do you find problems earlier, for example, in the offline process? For a complex distributed system, you need a huge number of exception test cases to traverse all possible exception points and exception types. In addition, some exceptions leave system behavior unchanged, while others change it. For the latter, it is difficult to specify the expected result (test oracle) of each exception test case.
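An offline exception test can be sketched in Python by wrapping a dependency with a fault injector and asserting the expected downgrade behavior. `FaultInjector`, the simplified `CircuitBreaker` (no half-open state), and the risk-check functions are hypothetical. Note that the sketch only works because the expected result for each injected fault ("route to manual review") is stated explicitly; supplying such oracles for every exception point is the hard part described above.

```python
class InjectedTimeout(Exception):
    """Stands in for a real timeout raised by an RPC client."""


class FaultInjector:
    """Hypothetical helper: wraps a dependency and raises a timeout on chosen call numbers."""

    def __init__(self, target, fail_on_calls):
        self.target = target
        self.fail_on_calls = set(fail_on_calls)
        self.calls = 0

    def __call__(self, *args):
        self.calls += 1
        if self.calls in self.fail_on_calls:
            raise InjectedTimeout(f"injected timeout on call {self.calls}")
        return self.target(*args)


class CircuitBreaker:
    """Simplified breaker: opens after `threshold` consecutive failures (no half-open state)."""

    def __init__(self, call, fallback, threshold=3):
        self.call = call
        self.fallback = fallback
        self.threshold = threshold
        self.failures = 0

    def __call__(self, *args):
        if self.failures >= self.threshold:
            return self.fallback(*args)  # open: do not touch the failing dependency
        try:
            result = self.call(*args)
        except Exception:
            self.failures += 1
            return self.fallback(*args)  # downgrade on failure
        self.failures = 0
        return result


def risk_check(order_id):
    """Hypothetical downstream risk service."""
    return "PASS"


def manual_review(order_id):
    """Hypothetical downgrade: route the order to manual review."""
    return "MANUAL_REVIEW"


injector = FaultInjector(risk_check, fail_on_calls={2, 3, 4})
breaker = CircuitBreaker(injector, manual_review, threshold=3)
results = [breaker(f"order-{i}") for i in range(1, 7)]
print(results, "dependency calls:", injector.calls)
```

After three consecutive injected timeouts the breaker opens, so calls 5 and 6 never reach the dependency.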

Concurrency can occur at a variety of levels. For example, at the database level, read or write requests can be sent concurrently to a table or record. At the single-system level, concurrency can occur between multiple threads in one process, between multiple processes on one server, or between multiple instances of one service. At the service level, operations can be performed concurrently on one business object, such as a member, document, or account. Traditionally, concurrency testing has relied on performance testing, and even when a problem is found by luck, it is usually ignored or cannot be reproduced. Achievements in the field of concurrency testing include CHESS, developed by Microsoft, and the distributed model checking and Sparrow Search Algorithm (SSA) being explored by Tan Jinfa from Alibaba Cloud.
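The difference between relying on luck and exploring schedules systematically, the idea behind tools such as CHESS, can be shown with a toy Python model. Two hypothetical threads each perform a non-atomic increment (read, then write); instead of running real threads, the code enumerates every interleaving that preserves each thread's step order and replays it deterministically. Real tools must control actual thread scheduling and prune an exponentially growing number of schedules.

```python
from itertools import combinations


def interleavings(n_a, n_b):
    """Yield every schedule of n_a steps of thread A and n_b steps of thread B."""
    total = n_a + n_b
    for a_slots in combinations(range(total), n_a):
        yield tuple("A" if i in a_slots else "B" for i in range(total))


def run_schedule(schedule):
    """Replay one schedule of two non-atomic increments; return the final counter value."""
    shared = {"counter": 0}
    local = {"A": None, "B": None}
    pc = {"A": 0, "B": 0}  # per-thread program counter: step 0 reads, step 1 writes
    for tid in schedule:
        if pc[tid] == 0:
            local[tid] = shared["counter"]  # read
        else:
            shared["counter"] = local[tid] + 1  # write back read value + 1
        pc[tid] += 1
    return shared["counter"]


def find_bad_schedules(expected=2):
    """Every schedule that loses an update, each one exactly reproducible."""
    return [s for s in interleavings(2, 2) if run_schedule(s) != expected]


for schedule in find_bad_schedules():
    print("".join(schedule), "->", run_schedule(schedule))
```

Four of the six possible schedules lose an update, and each failing schedule can be replayed exactly, which addresses the reproducibility problem mentioned above.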

The three measures for ensuring business continuity have been in place for many years, and everyone in the Alibaba economy knows how to roll back a release. However, in my observation, we lack adequate preventive measures that guarantee the system behaves correctly after rollback. Instead, we rely on after-the-fact methods such as phased release and monitoring to catch rollback problems. Over the past two years, I have personally experienced several online failures caused by rollback. Rollback testing is difficult because a release may be rolled back at any point, leaving numerous possibilities to cover. Rollback may also cause compatibility problems. For example, after rollback, can the previous code correctly process data generated by the new code?

There are many types of compatibility problems between code and data. For example, how do you ensure that your new code correctly processes all your old data? Old data may have been generated by your previous code several months ago; for example, a refund may involve a forward payment document created months earlier. Old data may also have been generated just minutes ago, such as when code is upgraded while an operation initiated by a user is still in progress. Verifying compatibility in these scenarios is difficult because of the sheer number of possibilities. In the preceding examples, the forward payment document involved in a refund might have been generated by any of several earlier code versions, and the moment of an upgrade may fall at any of many workflow stages.
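One way to make these possibilities explicit is a compatibility matrix that feeds data written by every code version to every reader version. The Python sketch below covers both directions discussed above: old data read by new code, and new data read by old code after a rollback. The document versions, fields, default currency, and status values are all hypothetical, and the workflow-stage dimension is not modeled.

```python
# Hypothetical writers: each code version serializes a payment document slightly differently.
WRITERS = {
    1: lambda: {"v": 1, "amount": 500},
    2: lambda: {"v": 2, "amount": 500, "currency": "USD"},
    3: lambda: {"v": 3, "amount": 500, "currency": "USD", "status": "PARTIALLY_REFUNDED"},
}

KNOWN_STATUS_V2 = {"PAID", "REFUNDED"}


def reader_v2(doc):
    """v2 reader: tolerates a missing currency (v1 data) but only knows the old status values."""
    status = doc.get("status", "PAID")
    if status not in KNOWN_STATUS_V2:
        raise ValueError(f"unknown status {status}")
    return doc["amount"], doc.get("currency", "CNY"), status


def reader_v3(doc):
    """v3 reader: accepts any status, including the new PARTIALLY_REFUNDED value."""
    return doc["amount"], doc.get("currency", "CNY"), doc.get("status", "PAID")


READERS = {2: reader_v2, 3: reader_v3}


def compatibility_matrix(writers, readers):
    """Return {(writer_version, reader_version): 'ok' or a failure message}."""
    results = {}
    for writer_version, write in writers.items():
        for reader_version, read in readers.items():
            try:
                read(write())
                results[(writer_version, reader_version)] = "ok"
            except (KeyError, ValueError) as exc:
                results[(writer_version, reader_version)] = f"FAIL: {exc}"
    return results


for cell, outcome in compatibility_matrix(WRITERS, READERS).items():
    print(cell, outcome)
```

The only failing cell is (3, 2): data written by v3 cannot be read by v2, which is exactly the situation after rolling back from v3 to v2.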

Exception testing, concurrency testing, rollback testing, and compatibility testing have one thing in common: we know that problems may exist, but the sheer number of possibilities overwhelms us.

The effectiveness of testing also depends on the correctness of mocks. Mocks can behave differently from the services they mock, whether internal, second-party, or third-party, and such differences can cause bugs to be missed. A traffic comparison method has been proposed to address this problem. I once had another idea: use bundle and compiler directives so that one set of source code supports three compilation and build modes.

This idea has not been put into practice yet.
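The text does not describe the traffic comparison method in detail. One plausible reading, sketched here in Python under that assumption, is to replay requests recorded from the real service against the mock and diff the responses field by field while ignoring volatile fields. The recorded traffic, field names, and `naive_mock` are hypothetical.

```python
def compare_mock(mock, recorded_traffic, ignore_fields=("trace_id", "timestamp")):
    """Replay recorded requests against a mock and diff its responses with the real ones.

    recorded_traffic: list of (request, real_response) pairs captured from the real service.
    Returns (request, field, real_value, mock_value) tuples for every mismatching field.
    """
    mismatches = []
    for request, real in recorded_traffic:
        fake = mock(request)
        for field in sorted(set(real) | set(fake)):
            if field in ignore_fields:
                continue  # volatile fields differ on every call and carry no meaning here
            if real.get(field) != fake.get(field):
                mismatches.append((request, field, real.get(field), fake.get(field)))
    return mismatches


# Hypothetical traffic recorded from a third-party card service.
RECORDED = [
    ({"card": "4111", "amount": 100}, {"result": "APPROVED", "trace_id": "x1"}),
    ({"card": "4111", "amount": 0}, {"result": "INVALID_AMOUNT", "trace_id": "x2"}),
]


def naive_mock(request):
    """A hand-written mock that always approves."""
    return {"result": "APPROVED"}


for mismatch in compare_mock(naive_mock, RECORDED):
    print(mismatch)
```

Here the comparison reveals that the mock never returns `INVALID_AMOUNT`, so tests built on it could not catch bugs in zero-amount handling.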

For certain types of problems, regular software testing is costly and less effective, whereas static code analysis is more effective. For example, failing to clear a ThreadLocal variable can cause memory leaks that eventually lead to out-of-memory errors, and can also leak stale information between different upstream requests. As another example, you can detect NullPointerException (NPE) bugs by using fuzz testing and exception testing, or by checking logs during regression testing, but static code analysis can find them at an earlier stage. Static code analysis can also accurately locate some concurrency problems early on. In short, we recommend that you use static code analysis to prevent as many problems as possible.
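To make the ThreadLocal example concrete, the Python sketch below scans Java source text and flags ThreadLocal fields that are set but never removed anywhere in the file. It is a deliberately crude lexical heuristic, not real static analysis: a proper checker works on the syntax tree and control flow (for example, verifying that `remove()` runs in a `finally` block on every path). The Java snippet is hypothetical.

```python
import re

# Matches field declarations such as `ThreadLocal<Map<String, String>> TAGS =`.
DECL = re.compile(r"ThreadLocal<[^;=]*>\s+(\w+)\s*=")


def leaked_thread_locals(java_source):
    """Return ThreadLocal field names with a .set( call but no .remove() call in the source."""
    leaks = []
    for name in DECL.findall(java_source):
        field = re.escape(name)
        sets = re.search(rf"\b{field}\.set\(", java_source)
        removes = re.search(rf"\b{field}\.remove\(\)", java_source)
        if sets and not removes:
            leaks.append(name)
    return leaks


SOURCE = """
public class RequestFilter {
    private static final ThreadLocal<String> USER = new ThreadLocal<>();
    private static final ThreadLocal<Map<String, String>> TAGS = new ThreadLocal<>();

    void handle(Request r) {
        USER.set(r.user());
        TAGS.set(r.tags());
        try {
            process(r);
        } finally {
            TAGS.remove();
        }
    }
}
"""
print(leaked_thread_locals(SOURCE))
# prints: ['USER']
```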

Beyond protocols, chips, and key algorithms, can formal verification be applied to more business-oriented areas?

Strictly speaking, mistake-proofing is not within the scope of software testing. Experience shows, however, that many bugs and failures could have been avoided if the code had been better designed. Last year, I summarized the mistake-proofing design of payment systems. I hope that mistake-proofing design principles for many other kinds of software systems will be summarized and, better still, enforced by technical means. This may be more difficult than summarizing the design rules themselves.
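As an illustration of mistake-proofing through design (not the author's summarized principles, which the text does not list), the Python sketch below defines a hypothetical `Money` value type for a payment system. Amounts must be integer minor units, so floating-point rounding errors cannot creep in, and adding two amounts in different currencies fails immediately instead of silently producing a wrong total.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Money:
    """Hypothetical mistake-proof amount type: immutable, integer-based, currency-checked."""

    minor_units: int  # e.g. cents or fen; integers avoid binary floating-point rounding
    currency: str

    def __post_init__(self):
        if not isinstance(self.minor_units, int):
            raise TypeError("amounts must be integer minor units, not floats")

    def __add__(self, other):
        if not isinstance(other, Money):
            return NotImplemented
        if other.currency != self.currency:
            raise ValueError(f"cannot add {other.currency} to {self.currency}")
        return Money(self.minor_units + other.minor_units, self.currency)


print(Money(150, "USD") + Money(50, "USD"))
# prints: Money(minor_units=200, currency='USD')
```

Because the check lives in the type, every caller gets the protection without having to remember a coding rule.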

Development and QA engineers have some awareness of testability but lack a systematic understanding of it. Many engineers think that testability simply means opening interfaces and adding test hooks; they do not know what testability means in their specific fields, such as payment systems, public clouds, and Enterprise Resource Planning (ERP) systems. As a result, they fail to raise testability requirements effectively and systematically during requirement analysis and system design, so these requirements lag behind. For testability design, I hope we can compile a set of design principles, similar to DRY, KISS, Composition Over Inheritance, Single Responsibility, and the Rule of Three in programming, along with a catalog of anti-patterns, and even monographs like Design Patterns.
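One widely used testability principle is to inject dependencies that tests must control, such as the clock, instead of reading them directly inside business logic. The Python sketch below applies it to a hypothetical 30-day refund rule: production code uses the real clock by default, while tests pass a fixed clock and can check the exact boundary without waiting or patching globals.

```python
from datetime import datetime, timedelta, timezone
from typing import Callable


class RefundPolicy:
    """Hypothetical rule: refunds are allowed within 30 days of payment."""

    def __init__(self, now: Callable[[], datetime] = lambda: datetime.now(timezone.utc)):
        self._now = now  # production uses the real clock; tests inject a fixed one

    def can_refund(self, paid_at: datetime) -> bool:
        return self._now() - paid_at <= timedelta(days=30)


paid_at = datetime(2024, 1, 1, tzinfo=timezone.utc)
on_day_30 = RefundPolicy(now=lambda: paid_at + timedelta(days=30))
just_after = RefundPolicy(now=lambda: paid_at + timedelta(days=30, seconds=1))
print(on_day_30.can_refund(paid_at), just_after.can_refund(paid_at))
# prints: True False
```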

These are the items that I would add to the list of Hard Problems in Testing, and I am already working on some of them.

[1] I have encountered other challenges in the testing field, but I do not list them here because they are highly specific to particular business scenarios or technology stacks. The testing field also faces some very difficult challenges of a different kind. For example, the pass rate of regression testing is required to exceed 99%, and code changes in trunk-based development are required to pass the code admission threshold. However, these challenges have more to do with engineering than with software testing technology itself.

[2] Measuring test sufficiency and improving it are different issues. Some people treat them as one and the same, and use a single algorithm both to analyze data for measurement and to improve sufficiency based on that data. I disagree. Measurement and improvement are not necessarily based on the same algorithm, as many examples show, such as the measurement and improvement of test effectiveness and of O&M stability. Even if one algorithm can both measure and improve test sufficiency, I recommend adding other algorithms to avoid the closed loops and blind spots that can arise when only one algorithm is used.

[3] These words no longer hold true today. They reflect the situation a dozen years ago, before online advertising and big data were developed.

[4] The data bank is not necessarily a platform. It does not need to be a service or have a user interface (UI). It can be a .jar package or an independent process that is launched during test execution.
