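AIACC-Inference (AIACC inference acceleration) optimizes models built on the Torch framework and can significantly improve inference performance. This topic describes how to manually install the Torch version of AIACC-Inference and provides examples that demonstrate the acceleration.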

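Prerequisites

An Alibaba Cloud GPU instance has been created with the following configuration:

Instance type: equipped with an A10, V100, or T4 GPU.

Note: For more information, see Instance families.

Instance image: Ubuntu 16.04 LTS or CentOS 7.x.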
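As a quick way to check the GPU prerequisite, the following minimal sketch tests whether a GPU name string contains one of the models listed above. The helper name `is_supported_gpu` and the sample names are illustrative assumptions, not part of AIACC-Inference. On a real instance, the name could be taken from the output of `nvidia-smi --query-gpu=name --format=csv,noheader`.

```python
import re

# GPU models listed in the prerequisites above.
SUPPORTED_GPUS = ("A10", "V100", "T4")


def is_supported_gpu(gpu_name: str) -> bool:
    """Return True if the GPU name contains one of the supported model tokens."""
    # Word boundaries keep "A10" from matching "A100" or "A10G".
    pattern = r"\b(" + "|".join(SUPPORTED_GPUS) + r")\b"
    return re.search(pattern, gpu_name) is not None


if __name__ == "__main__":
    # Hypothetical sample names, for illustration only.
    for name in ("NVIDIA A10", "Tesla V100-SXM2-16GB", "Tesla T4", "NVIDIA A100-SXM4-40GB"):
        status = "listed" if is_supported_gpu(name) else "not listed"
        print(f"{name}: {status}")
```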

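Background information

The Torch version of AIACC-Inference partitions the model's computational graph, fuses layers, and uses high-performance operator (OP) implementations to substantially improve PyTorch inference performance. You do not need to specify the precision or the input size. Deep learning models in the PyTorch framework are optimized for inference through JIT compilation.

To accelerate inference, call the aiacctorch.compile(model) interface. Before you do so, convert the PyTorch model into a TorchScript model by using the torch.jit.script or torch.jit.trace interface. For more information, see the official PyTorch documentation. This topic provides one example for each of the torch.jit.script and torch.jit.trace interfaces.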
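The call order described above (convert to TorchScript first, then compile) can be wrapped so that a script still runs on machines where the package is not installed. The following is a minimal sketch under that assumption. The helper name `compile_if_available` and its `module_name` parameter are hypothetical. Only the `aiacctorch.compile(model)` call comes from this topic.

```python
import importlib


def compile_if_available(ts_model, module_name="aiacctorch"):
    """Return (model, accelerated).

    The model is passed through <module>.compile() when the module can be
    imported. Otherwise the TorchScript model is returned unchanged.
    """
    try:
        backend = importlib.import_module(module_name)
    except ImportError:
        # Package not installed: keep the plain TorchScript model.
        return ts_model, False
    return backend.compile(ts_model), True
```

A typical call would look like `mod_jit, accelerated = compile_if_available(torch.jit.script(mod).cuda())`, after which `accelerated` tells you whether the compiled model is in use.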

Prepare and install the AIACC-Inference Torch package

The Torch version of AIACC-Inference is available as a one-click Conda package and as a whl package. Choose the one that suits your business scenario.

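Conda package

The one-click Conda package comes with most dependencies preinstalled. You only need to install the CUDA driver manually and then install the Conda package. Perform the following steps:

Important: Do not arbitrarily change the preinstalled dependencies in the Conda package. Otherwise, version mismatches may cause the demos to fail.

1. Install the NVIDIA GPU (CUDA) driver, version 470.57.02 or later.

2. Download the Conda package.

    wget https://aiacc-inference-public.oss-cn-beijing.aliyuncs.com/aiacc-inference-torch/aiacc-inference-torch-miniconda-latest.tar.bz2

3. Decompress the Conda package.

    mkdir ./aiacc-inference-miniconda && tar -xvf ./aiacc-inference-torch-miniconda-latest.tar.bz2 -C ./aiacc-inference-miniconda

4. Load the Conda package.

    source ./aiacc-inference-miniconda/bin/activate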
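The driver requirement (470.57.02 or later) is easy to get wrong if version strings are compared as text, because "470.9" sorts after "470.57" alphabetically. The following minimal sketch compares versions numerically. The helper names are illustrative assumptions. The installed version could be read, for example, from `nvidia-smi --query-gpu=driver_version --format=csv,noheader`.

```python
MIN_DRIVER = "470.57.02"  # minimum driver version stated in this topic


def parse_version(text: str) -> tuple:
    """Turn a dotted version such as '470.57.02' into a tuple of ints."""
    return tuple(int(part) for part in text.strip().split("."))


def driver_is_new_enough(installed: str, minimum: str = MIN_DRIVER) -> bool:
    """Compare versions component by component, not as strings."""
    return parse_version(installed) >= parse_version(minimum)


if __name__ == "__main__":
    # Hypothetical sample versions, for illustration only.
    for version in ("470.57.02", "460.91.03", "515.65.01"):
        print(version, "OK" if driver_is_new_enough(version) else "too old")
```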

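whl package

You must manually install the dependencies before you install the whl package. Perform the following steps:

1. Install the dependencies by using either of the following methods. The whl package depends on a specific combination of many software components, so configure them carefully.

Method 1

Install the following versions of the dependencies yourself:

CUDA 11.1

cuDNN 8.3.1.22

TensorRT 8.2.3.0

Add the TensorRT and CUDA libraries to the LD_LIBRARY_PATH environment variable.

The following commands assume that the CUDA libraries are in /usr/local/cuda/ and the TensorRT libraries are in /usr/local/TensorRT/. Replace the paths with your actual ones.

    export LD_LIBRARY_PATH=/usr/local/cuda/lib64:$LD_LIBRARY_PATH
    export LD_LIBRARY_PATH=/usr/local/TensorRT/lib:$LD_LIBRARY_PATH

Apply the environment variables. This step takes effect only if the export lines above were added to ~/.bashrc. If you ran them directly in the current shell, they are already active.

    source ~/.bashrc

Method 2

Install the dependencies from NVIDIA's pip packages.

    pip install nvidia-pyindex && \
    pip install nvidia-tensorrt==8.2.3.0

2. Install PyTorch 1.9.0+cu111.

    pip3 install torch==1.9.0+cu111 torchvision==0.10.0+cu111 torchaudio==0.9.0 -f https://download.pytorch.org/whl/torch_stable.html

3. Download and install aiacctorch.

    pip install aiacctorch -f https://aiacc-inference-public.oss-cn-beijing.aliyuncs.com/aiacc-inference-torch/aiacctorch_stable.html -f https://download.pytorch.org/whl/torch_stable.html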
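Because the whl route depends on exact version combinations, it can help to confirm the installed PyTorch packages after the steps above. The following is a minimal sketch that assumes Python 3.8 or later (for `importlib.metadata`). The helper name `check_pins` and its `lookup` parameter are hypothetical. The pinned versions are the ones named in the PyTorch installation command.

```python
from importlib import metadata

# Versions named in the PyTorch installation command in this topic.
EXPECTED = {
    "torch": "1.9.0+cu111",
    "torchvision": "0.10.0+cu111",
    "torchaudio": "0.9.0",
}


def check_pins(expected=EXPECTED, lookup=metadata.version):
    """Return {package: (expected, installed_or_None)} for every mismatch."""
    mismatches = {}
    for name, want in expected.items():
        try:
            have = lookup(name)
        except metadata.PackageNotFoundError:
            have = None  # package is not installed at all
        if have != want:
            mismatches[name] = (want, have)
    return mismatches


if __name__ == "__main__":
    problems = check_pins()
    if not problems:
        print("All pinned packages match.")
    for name, (want, have) in problems.items():
        print(f"{name}: expected {want}, found {have}")
```

An empty result means that every listed package is installed at the expected version. The `lookup` parameter exists only so the check can be exercised without the packages being present.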

基于ResNet50模型执行推理

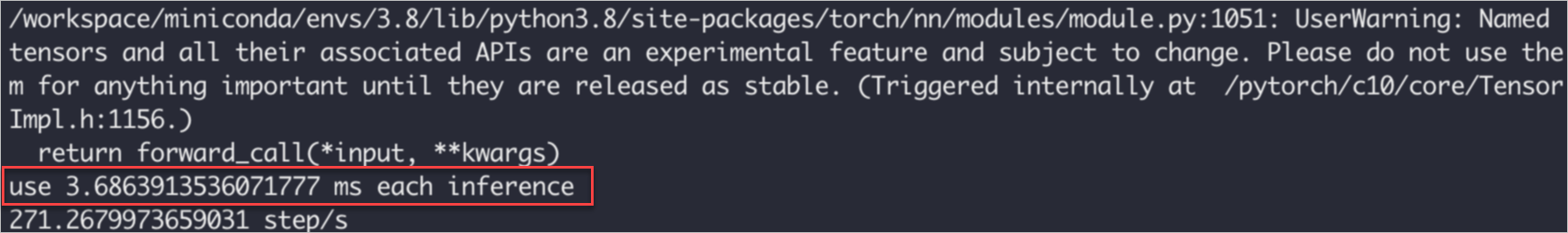

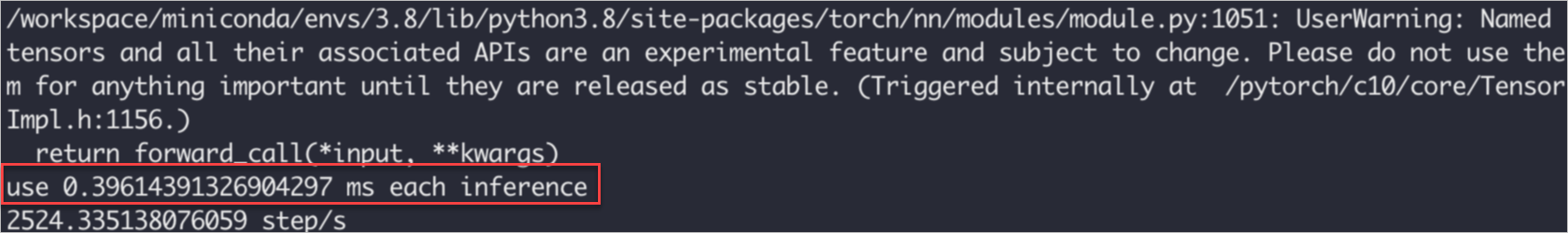

以下示例将以安装了Conda软件包为例,基于ResNet50模型,并调用torch.jit.script接口执行推理任务,执行1000次后取平均时间,将推理耗时从3.68 ms降低至0.396 ms以内。

原始版本

原始代码如下所示:

import time import torch import torchvision.models as models mod = models.resnet50(pretrained=True).eval() mod_jit = torch.jit.script(mod) mod_jit = mod_jit.cuda() in_t = torch.randn([1,3,224,224]).float().cuda() # Warming up for _ in range(10): mod_jit(in_t) inference_count = 1000 # inference test start = time.time() for _ in range(inference_count): mod_jit(in_t) end = time.time() print(f"use {(end-start)/inference_count*1000} ms each inference") print(f"{inference_count/(end-start)} step/s")执行结果如下,显示推理耗时大约为3.68 ms。

加速版本

您仅需要在原始版本的示例代码中增加如下两行内容,即可实现性能加速。

import aiacctorch aiacctorch.compile(mod_jit)更新后的代码如下:

import time import aiacctorch #import aiacc包 import torch import torchvision.models as models mod = models.resnet50(pretrained=True).eval() mod_jit = torch.jit.script(mod) mod_jit = mod_jit.cuda() mod_jit = aiacctorch.compile(mod_jit) #进行编译 in_t = torch.randn([1,3,224,224]).float().cuda() # Warming up for _ in range(10): mod_jit(in_t) inference_count = 1000 # inference test start = time.time() for _ in range(inference_count): mod_jit(in_t) end = time.time() print(f"use {(end-start)/inference_count*1000} ms each inference") print(f"{inference_count/(end-start)} step/s")执行结果如下,显示推理耗时为0.396 ms。相较于之前的3.68 ms,推理性能有了显著提升。
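The demos time the loop with time.time() on the host. CUDA work is queued asynchronously, so a host timer can stop before the queued work has finished. The following minimal sketch shows a timing helper with an optional synchronization hook. It is an alternative measurement approach offered as an assumption and is not the method that produced the figures in this topic. The helper name `mean_latency_ms` and its parameters are hypothetical.

```python
import time


def mean_latency_ms(fn, iterations=1000, warmup=10, sync=None):
    """Average wall-clock time of fn() in milliseconds.

    sync, if given, is called right before the clock starts and right before
    it stops, so that asynchronously queued work is included in the timing.
    """
    for _ in range(warmup):
        fn()
    if sync is not None:
        sync()
    start = time.perf_counter()
    for _ in range(iterations):
        fn()
    if sync is not None:
        sync()
    return (time.perf_counter() - start) / iterations * 1000


if __name__ == "__main__":
    # CPU-only stand-in workload, for illustration.
    print(f"{mean_latency_ms(lambda: sum(range(1000)), iterations=100):.4f} ms")
```

With the ResNet50 demo, a call could look like `mean_latency_ms(lambda: mod_jit(in_t), sync=torch.cuda.synchronize)`. Results measured this way may differ from the numbers printed by the demo scripts.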

基于Bert-Base模型执行推理

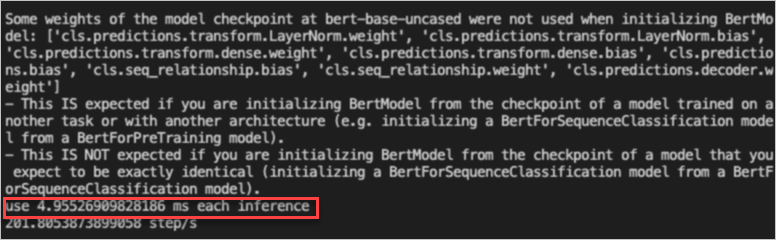

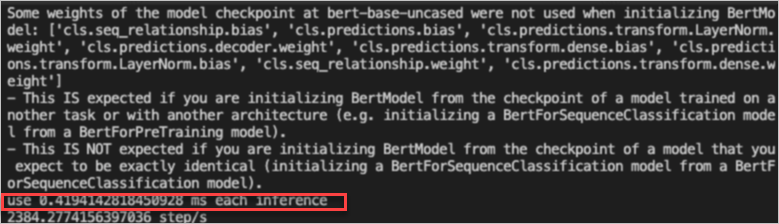

以下示例将基于Bert-Base模型,并调用torch.jit.trace接口执行推理任务,将推理耗时从4.95 ms降低至0.419 ms以内。

安装transformers包。

pip install transformers分别运行原始版本和加速版本的Demo,并查看运行结果。

原始版本

原始代码如下所示:

from transformers import BertModel, BertTokenizer, BertConfig import torch import time enc = BertTokenizer.from_pretrained("bert-base-uncased") # Tokenizing input text text = "[CLS] Who was Jim Henson ? [SEP] Jim Henson was a puppeteer [SEP]" tokenized_text = enc.tokenize(text) # Masking one of the input tokens masked_index = 8 tokenized_text[masked_index] = '[MASK]' indexed_tokens = enc.convert_tokens_to_ids(tokenized_text) segments_ids = [1, 1, 1, 1, 1, 1, 1, 0, 0, 0, 0, 0, 0, 0, ] # Creating a dummy input tokens_tensor = torch.tensor([indexed_tokens]).cuda() segments_tensors = torch.tensor([segments_ids]).cuda() dummy_input = [tokens_tensor, segments_tensors] # Initializing the model with the torchscript flag # Flag set to True even though it is not necessary as this model does not have an LM Head. config = BertConfig(vocab_size_or_config_json_file=32000, hidden_size=768, num_hidden_layers=12, num_attention_heads=12, intermediate_size=3072, torchscript=True) # Instantiating the model model = BertModel(config) # The model needs to be in evaluation mode model.eval() # If you are instantiating the model with `from_pretrained` you can also easily set the TorchScript flag model = BertModel.from_pretrained("bert-base-uncased", torchscript=True) model = model.eval().cuda() # Creating the trace traced_model = torch.jit.trace(model, dummy_input) # Warming up for _ in range(10): all_encoder_layers, pooled_output = traced_model(*dummy_input) inference_count = 1000 # inference test start = time.time() for _ in range(inference_count): traced_model(*dummy_input) end = time.time() print(f"use {(end-start)/inference_count*1000} ms each inference") print(f"{inference_count/(end-start)} step/s")执行结果如下,显示推理耗时大约为4.95 ms。

加速版本

您仅需要在原始版本的示例代码中增加如下两行内容,即可实现性能加速。

import aiacctorch aiacctorch.compile(traced_model)更新后的代码如下:

from transformers import BertModel, BertTokenizer, BertConfig import torch import aiacctorch #import aiacc包 import time enc = BertTokenizer.from_pretrained("bert-base-uncased") # Tokenizing input text text = "[CLS] Who was Jim Henson ? [SEP] Jim Henson was a puppeteer [SEP]" tokenized_text = enc.tokenize(text) # Masking one of the input tokens masked_index = 8 tokenized_text[masked_index] = '[MASK]' indexed_tokens = enc.convert_tokens_to_ids(tokenized_text) segments_ids = [1, 1, 1, 1, 1, 1, 1, 0, 0, 0, 0, 0, 0, 0, ] # Creating a dummy input tokens_tensor = torch.tensor([indexed_tokens]).cuda() segments_tensors = torch.tensor([segments_ids]).cuda() dummy_input = [tokens_tensor, segments_tensors] # Initializing the model with the torchscript flag # Flag set to True even though it is not necessary as this model does not have an LM Head. config = BertConfig(vocab_size_or_config_json_file=32000, hidden_size=768, num_hidden_layers=12, num_attention_heads=12, intermediate_size=3072, torchscript=True) # Instantiating the model model = BertModel(config) # The model needs to be in evaluation mode model.eval() # If you are instantiating the model with `from_pretrained` you can also easily set the TorchScript flag model = BertModel.from_pretrained("bert-base-uncased", torchscript=True) model = model.eval().cuda() # Creating the trace traced_model = torch.jit.trace(model, dummy_input) traced_model = aiacctorch.compile(traced_model) #进行编译 # Warming up for _ in range(10): all_encoder_layers, pooled_output = traced_model(*dummy_input) inference_count = 1000 # inference test start = time.time() for _ in range(inference_count): traced_model(*dummy_input) end = time.time() print(f"use {(end-start)/inference_count*1000} ms each inference") print(f"{inference_count/(end-start)} step/s")执行结果如下,显示推理耗时为0.419 ms。相较于之前的4.95 ms,推理性能有了显著提升。
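The two print statements in the demos report the same measurement in two units: milliseconds per inference and steps per second. For a loop that runs one inference per step, the two are related by step/s = 1000 / latency in ms. The following short sketch applies this relation to the latencies quoted in this section. The function name `steps_per_second` is an illustrative assumption.

```python
def steps_per_second(latency_ms: float) -> float:
    """Convert an average latency in milliseconds to steps per second."""
    return 1000.0 / latency_ms


if __name__ == "__main__":
    # Latencies quoted in this section.
    for label, ms in (("original", 4.95), ("accelerated", 0.419)):
        print(f"{label}: {ms} ms per inference = {steps_per_second(ms):.0f} step/s")
```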

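Run dynamic-size inference with the ResNet50 model

With AIACC-Inference-Torch, you do not need to handle dynamic sizes yourself, because it supports different input sizes. The following example is based on the ResNet50 model and feeds in inputs of three different sizes to show how acceleration with AIACC-Inference-Torch works.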

import time

import aiacctorch #import aiacc包

import torch

import torchvision.models as models

mod = models.resnet50(pretrained=True).eval()

mod_jit = torch.jit.script(mod)

mod_jit = mod_jit.cuda()

mod_jit = aiacctorch.compile(mod_jit) #进行编译

in_t = torch.randn([1,3,224,224]).float().cuda()

in_2t = torch.randn([1,3,448,448]).float().cuda()

in_3t = torch.randn([16,3,640,640]).float().cuda()

# Warming up

for _ in range(10):

mod_jit(in_t)

mod_jit(in_3t)

inference_count = 1000

# inference test

start = time.time()

for _ in range(inference_count):

mod_jit(in_t)

mod_jit(in_2t)

mod_jit(in_3t)

end = time.time()

print(f"use {(end-start)/(inference_count*3)*1000} ms each inference")

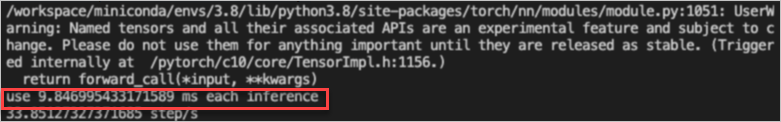

print(f"{inference_count/(end-start)} step/s")执行结果如下,显示推理耗时大约为9.84 ms。

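To shorten model compilation time, run inference on the largest and the smallest tensor sizes during the warm-up phase so that the model is not recompiled repeatedly at run time. For example, if the inference sizes are known to range from 1×3×224×224 to 16×3×640×640, run inference on these two sizes during warm-up.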
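When the expected input shapes are known in advance, the two warm-up shapes can be derived instead of written by hand. The following minimal sketch takes the per-dimension minimum and maximum across the expected shapes. Reading "smallest" and "largest" as per-dimension bounds is an assumption, and the helper name `warmup_shapes` is hypothetical.

```python
def warmup_shapes(expected_shapes):
    """Return (smallest, largest) as per-dimension bounds over the shapes."""
    shapes = [tuple(shape) for shape in expected_shapes]
    if not shapes:
        raise ValueError("expected_shapes must not be empty")
    if len({len(shape) for shape in shapes}) != 1:
        raise ValueError("all shapes must have the same number of dimensions")
    smallest = tuple(min(dims) for dims in zip(*shapes))
    largest = tuple(max(dims) for dims in zip(*shapes))
    return smallest, largest


if __name__ == "__main__":
    # The three input shapes used in the example above.
    shapes = [(1, 3, 224, 224), (1, 3, 448, 448), (16, 3, 640, 640)]
    print(warmup_shapes(shapes))
```

In the demo, the result could then drive the warm-up loop, for example `for shape in warmup_shapes(shapes): mod_jit(torch.randn(shape).cuda())`.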

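Performance comparison reference

The following data compares the performance of AIACC-Inference-Torch with that of PyTorch. The test environment is as follows:

Instance type: GPU instance equipped with an NVIDIA A10.

CUDA version: 11.5.

CUDA driver version: 470.57.02.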

| Model | Input size | AIACC-Inference-Torch (ms) | PyTorch Half (ms) | PyTorch Float (ms) | Speedup |
| --- | --- | --- | --- | --- | --- |
| resnet50 | 1x3x224x224 | 0.46974873542785645 | 3.4382946491241455 | 2.9194235801696777 | 6.22 |
| mobilenet-v2-100 | 1x3x224x224 | 0.23872756958007812 | 2.8045766353607178 | 2.0068271160125732 | 8.69 |
| SRGAN-X4 | 1x3x272x480 | 23.070229649543762 | 35.863523721694946 | 132.00348043441772 | 5.74 |
| YOLO-V3 | 1x3x640x640 | 3.869319200515747 | 8.807475328445435 | 15.704705834388735 | 4.06 |
| bert-base-uncased | 1x128,1x128 | 0.9421144723892212 | 3.1525989770889282 | 3.761411190032959 | 4.00 |
| bert-large-uncased | 1x128,1x128 | 1.3300731182098389 | 6.11789083480835 | 7.110695481300354 | 5.34 |
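The table does not state which baseline the speedup column is measured against. As a rough cross-check, the following sketch divides the PyTorch Float latency by the AIACC-Inference-Torch latency for each row. Using the Float column as the baseline is an assumption. For the rows shown, the ratio comes out close to the listed speedup, though not always identical to it.

```python
# (model, AIACC-Inference-Torch ms, PyTorch Float ms, speedup as listed),
# copied from the table above.
ROWS = [
    ("resnet50", 0.46974873542785645, 2.9194235801696777, 6.22),
    ("mobilenet-v2-100", 0.23872756958007812, 2.0068271160125732, 8.69),
    ("SRGAN-X4", 23.070229649543762, 132.00348043441772, 5.74),
    ("YOLO-V3", 3.869319200515747, 15.704705834388735, 4.06),
    ("bert-base-uncased", 0.9421144723892212, 3.761411190032959, 4.00),
    ("bert-large-uncased", 1.3300731182098389, 7.110695481300354, 5.34),
]


def speedup(baseline_ms: float, accelerated_ms: float) -> float:
    """Ratio of baseline latency to accelerated latency."""
    return baseline_ms / accelerated_ms


if __name__ == "__main__":
    for model, aiacc_ms, float_ms, listed in ROWS:
        computed = speedup(float_ms, aiacc_ms)
        print(f"{model}: computed {computed:.2f}x, listed {listed:.2f}x")
```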