If data does not appear in the Managed Service for OpenTelemetry console after you report data from an open source client, work through the following sections in order. Each section corresponds to a stage in the data pipeline where data can be lost.

Data flows through these stages before it appears in the console:

Client SDK or agent sends telemetry over HTTP or gRPC.

Network carries the request to the endpoint.

Ingestion endpoint accepts or rejects the request.

Storage backend (Simple Log Service) persists the data.

Console displays the data.

A failure at any stage prevents data from appearing. Start with network connectivity, then check ingestion settings, storage, and protocol-specific errors.

Check network connectivity

Identify whether your code uses a private endpoint or a public endpoint. Private endpoints must belong to the same virtual private cloud (VPC) as the server that reports data. Cross-region reporting is not supported.

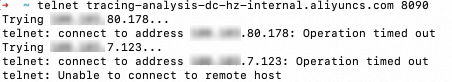

From the reporting environment, run curl or telnet to verify that the endpoint and port are reachable. Example for a gRPC endpoint in the China (Hangzhou) region:

telnet <endpoint> <port>

telnet tracing-analysis-dc-hz.aliyuncs.com 8090

Success: The terminal displays a Connected message.

Failure: The terminal stays at Trying [IP] or returns Unable to connect to remote host.

If the connection fails, check your Elastic Compute Service (ECS) security group settings. For more information, see Prepare for using Managed Service for OpenTelemetry and Security group overview.
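If telnet or curl is not installed on the reporting host, the same reachability check can be approximated with a short script. The following is a minimal sketch that uses only the Python standard library. The function name endpoint_reachable and the 5-second default timeout are illustrative choices, not part of the product.

```python
import socket


def endpoint_reachable(host: str, port: int, timeout_s: float = 5.0) -> bool:
    """Return True if a TCP connection to host:port succeeds within timeout_s."""
    try:
        # create_connection resolves the host name and opens a TCP connection.
        with socket.create_connection((host, port), timeout=timeout_s):
            return True
    except OSError:
        # Covers refused connections, DNS resolution failures, and timeouts.
        return False
```

Calling endpoint_reachable("tracing-analysis-dc-hz.aliyuncs.com", 8090) from the reporting host corresponds to the telnet example above. A False result points to the same causes as a failed telnet connection, such as security group rules.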

Check ingestion settings

Data ingestion can be disabled at the cluster level, the application level, or both. Ingestion also stops when the reported data volume reaches the configured quota.

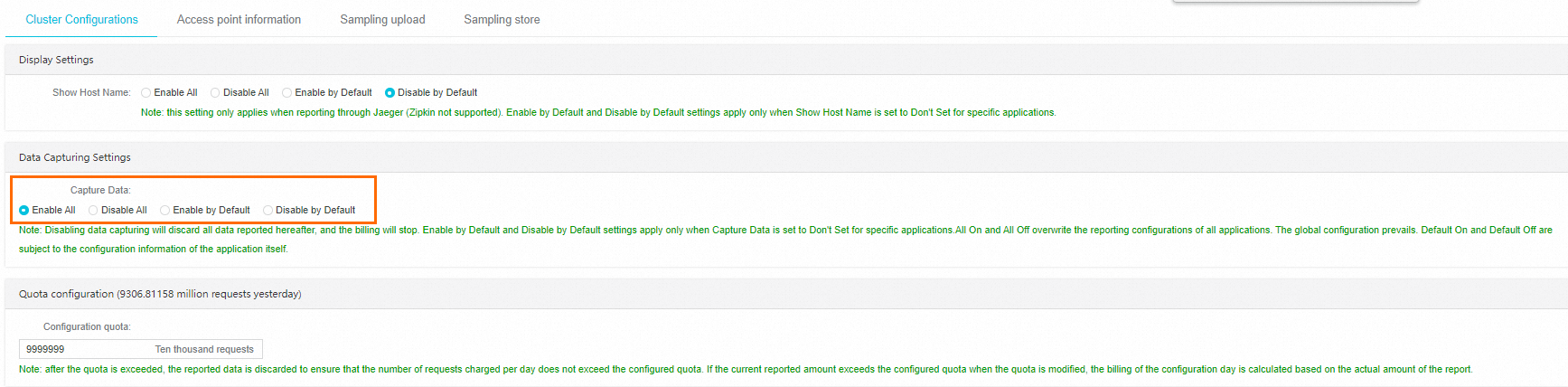

Global ingestion configuration

In the left-side navigation pane of the Managed Service for OpenTelemetry console, choose System Configuration > Cluster Configuration. In the Ingestion Configuration section, verify that Enable All or Enable by Default is selected.

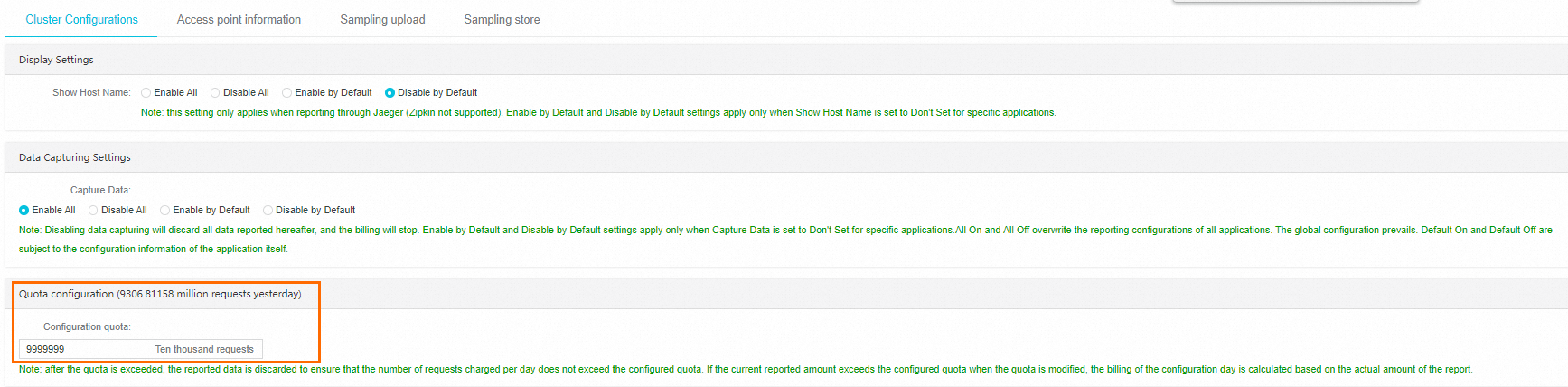

Quota

On the same Cluster Configuration page, scroll to the Quota Configuration section. Verify that the amount of reported data has not reached the configured quota. If it has, increase the quota.

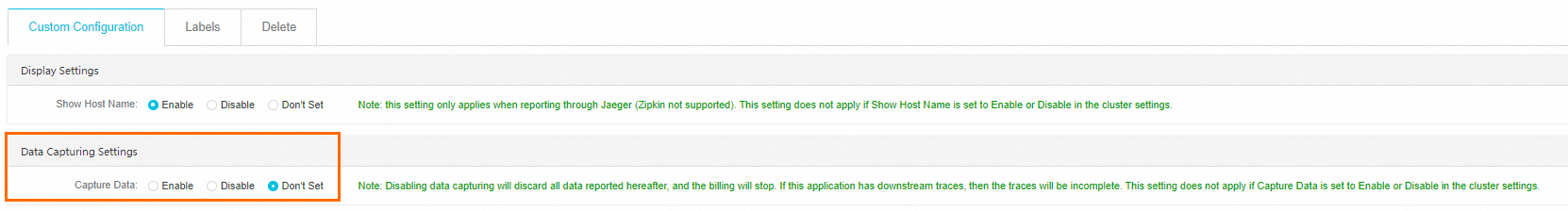

Application-level data capturing settings

On the Applications page, click the name of the application that you want to manage. In the left-side navigation pane of the application details page, click Application Settings. On the Custom Configuration tab, verify that Capture Data is set to Enable or Don't Set in the Data Capturing Settings section.

If Enable All or Disable All is selected in the global Ingestion Configuration, the application-level setting does not take effect. The global setting takes precedence. If Don't Set is selected for an application, the application inherits the cluster-level setting.
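The precedence rules can be summarized as a small decision function. This is only a sketch of the logic described here, not code from the service. The enum names are hypothetical, and the application-level Disable option is assumed to exist as the counterpart of Enable.

```python
from enum import Enum


class GlobalSetting(Enum):
    ENABLE_ALL = "Enable All"
    DISABLE_ALL = "Disable All"
    ENABLE_BY_DEFAULT = "Enable by Default"


class AppSetting(Enum):
    ENABLE = "Enable"
    DISABLE = "Disable"  # assumed counterpart of Enable
    DONT_SET = "Don't Set"


def ingestion_enabled(global_setting: GlobalSetting, app_setting: AppSetting) -> bool:
    """Return whether data from an application is ingested."""
    # Enable All and Disable All override any application-level setting.
    if global_setting is GlobalSetting.ENABLE_ALL:
        return True
    if global_setting is GlobalSetting.DISABLE_ALL:
        return False
    # Enable by Default: the application-level setting applies.
    if app_setting is AppSetting.DONT_SET:
        return True  # inherits the cluster-level default
    return app_setting is AppSetting.ENABLE
```

For example, an application set to Enable still reports nothing when the cluster is set to Disable All, which is why the global configuration is worth checking first.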

Check the Simple Log Service data source

Managed Service for OpenTelemetry stores data in Simple Log Service projects within your account. If the number of Simple Log Service projects reaches the upper limit, data reporting fails.

To resolve this issue:

Release Simple Log Service projects that are no longer in use.

Submit a ticket to increase the project limit.

Check the monitoring task status

If the console indicates that the monitoring task is abnormal or not enabled, submit a ticket.

Troubleshoot HTTP reporting failures

HTTP status codes

Check the HTTP status code returned in the console or log files, then follow the corresponding resolution.

| Status code | Meaning | Cause | Resolution |

|---|---|---|---|

| 400 | Unsupported data format | The Content-Type header value must be application/json, application/x-thrift, or Bad Request. Common causes: a tag value is a JSON array instead of a string, or spans are sent as a JSON object instead of a JSON array. | Fix the request body to match the required format. |

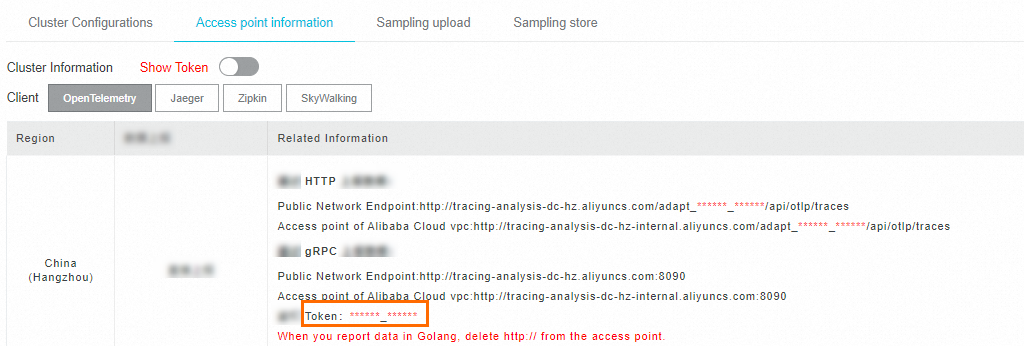

| 403 | Authorization refused | (1) The endpoint or token is incorrect. (2) The Zipkin client URL contains /v2/spans. | (1) Find the correct endpoint and token in the Managed Service for OpenTelemetry console under Cluster Configuration. (2) Remove /v2/spans from the URL. |

| 405 | Data quota exceeded | The reported data volume has reached the configured upper limit. | Increase the limit in the Quota Configuration section of the Cluster Configuration page. |

| 406 | Cluster collection disabled | Cluster data collection is disabled. | Enable it in the Ingestion Configuration section of the Cluster Configuration page. |
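The two common causes of a 400 response can be caught before the request is sent. The following is a minimal sketch of such a pre-flight check. It assumes a Zipkin-style JSON payload in which each span carries its tags under a "tags" key. The function name find_payload_problems is hypothetical.

```python
import json


def find_payload_problems(body: str) -> list[str]:
    """Return a list of format problems that are likely to cause HTTP 400."""
    try:
        payload = json.loads(body)
    except json.JSONDecodeError as exc:
        return [f"body is not valid JSON: {exc.msg}"]

    # Spans must be sent as a JSON array, even when there is only one span.
    if not isinstance(payload, list):
        return ["spans must be sent as a JSON array, not a single JSON object"]

    problems = []
    for index, span in enumerate(payload):
        if not isinstance(span, dict):
            problems.append(f"span {index} is not a JSON object")
            continue
        tags = span.get("tags", {})  # "tags" key is an assumption (Zipkin-style JSON)
        if not isinstance(tags, dict):
            problems.append(f"span {index}: tags must be a JSON object")
            continue
        for key, value in tags.items():
            # Tag values must be strings; arrays and numbers are rejected.
            if not isinstance(value, str):
                problems.append(
                    f"span {index}: tag {key!r} is {type(value).__name__}, expected a string"
                )
    return problems
```

An empty result means that neither of the two known causes is present. It does not guarantee that the server accepts the payload.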

APISIX reporting failure

The following error may appear when APISIX reports data through OpenTelemetry:

The origin server did not find a current representation for the target resource or is not willing to disclose that one exists.

APISIX cannot report data directly to Managed Service for OpenTelemetry through OpenTelemetry. Use OpenTelemetry Collector to forward the data instead.

Troubleshoot gRPC reporting failures

Check the gRPC status code returned in the console or log files. For a full list of status codes, see gRPC status codes.

Timeout

Error message:

Failed to export spans. The request could not be executed. Full error message: timeout

Resolution:

Verify that the endpoint is reachable, as described in Check network connectivity.

Increase the timeout period for data reporting in your SDK or agent configuration.

Authentication failure (gRPC status code 7)

Error message:

Failed to export spans. Server responded with gRPC status code 7. Error message:

Resolution:

Verify that the value of the Authentication field in the gRPC request header matches the token shown in the Managed Service for OpenTelemetry console.
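For applications that use the OpenTelemetry Python SDK, the token header and the export timeout are both set where the exporter is created. The following is a sketch under several assumptions: the opentelemetry-exporter-otlp-proto-grpc package is installed, the helper names are hypothetical, and the 30-second timeout is an arbitrary example value. The header key is written in lowercase because gRPC metadata keys are lowercase on the wire.

```python
def build_exporter_kwargs(endpoint: str, token: str, timeout_s: int = 30) -> dict:
    """Collect the exporter arguments that matter for timeouts and status code 7."""
    if not token:
        raise ValueError("token is empty; copy it from the console")
    return {
        "endpoint": endpoint,
        # Sent as the Authentication field of the gRPC request header.
        "headers": (("authentication", token),),
        # Export timeout in seconds; raise it if exports time out.
        "timeout": timeout_s,
    }


def make_exporter(endpoint: str, token: str, timeout_s: int = 30):
    """Create an OTLP gRPC span exporter (requires the OpenTelemetry packages)."""
    # Imported lazily so that build_exporter_kwargs works without the package.
    from opentelemetry.exporter.otlp.proto.grpc.trace_exporter import OTLPSpanExporter

    return OTLPSpanExporter(**build_exporter_kwargs(endpoint, token, timeout_s))
```

Printing the result of build_exporter_kwargs is a quick way to compare the token that the application actually sends with the one shown in the console.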

SkyWalking MeterSender error

Error message:

MeterSender : Send meters to collector fail with a grpc internal exception. org.apache.skywalking.apm.dependencies.io.grpc.StatusRuntimeException: UNIMPLEMENTED: Method not found: skywalking.v3.MeterReportService/collect

The SkyWalking client is sending metrics to the Managed Service for OpenTelemetry server, which is not supported. Disable metrics reporting in the SkyWalking client.

Troubleshoot unexpected trace data

SkyWalking agent or SDK

Missing framework or middleware events

Check the plug-in path of the SkyWalking agent: ${agent-path}/agent-8.x/plugins. Verify that plug-in versions match the framework versions used in your application. If a required plug-in is missing from the plugins directory, copy it from the bootstrap-plugins or optional-plugins folder, or download it from the SkyWalking community.

Verify that only one SkyWalking agent is attached to the application. Multiple agents can cause instrumentation conflicts.

Broken trace

Check whether asynchronous tracing is in use. For information about resolving broken traces in asynchronous tracing, see Trace Cross Thread.

Trace shorter than expected

Increase the maximum number of spans that the SkyWalking agent can report per segment by raising the agent.span_limit_per_segment value in the ${agent-path}/agent-8.x/config/agent.config file.

OpenTelemetry agent or SDK

Broken trace

Check whether asynchronous tracing is in use. To resolve broken traces in asynchronous tracing:

Update the OpenTelemetry version.

Use the SpanLinks API to correlate spans across traces.

Specify a span as the parent span of another span.

Pass the trace context explicitly through context propagation.
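The last item is the most common fix for thread pools, because a worker thread does not automatically see the context of the thread that submitted the task. The following sketch illustrates the principle with Python's standard contextvars module as a stand-in for a tracing context. The variable current_trace_id and the helper names are hypothetical. In the OpenTelemetry Python API, the comparable calls are get_current, attach, and detach in the opentelemetry.context module.

```python
import contextvars
from concurrent.futures import Future, ThreadPoolExecutor

# Stand-in for the active trace context (hypothetical name).
current_trace_id = contextvars.ContextVar("current_trace_id", default="none")


def do_work() -> str:
    """Return the trace ID that the worker thread can see."""
    return current_trace_id.get()


def submit_with_context(executor: ThreadPoolExecutor, fn) -> Future:
    """Submit fn so that it runs inside a copy of the caller's context."""
    ctx = contextvars.copy_context()  # snapshot taken in the submitting thread
    return executor.submit(ctx.run, fn)


def demo() -> tuple[str, str]:
    current_trace_id.set("trace-123")
    with ThreadPoolExecutor(max_workers=1) as pool:
        # Plain submit: the worker usually does not see the caller's value.
        detached = pool.submit(do_work).result()
        # Explicit propagation: the worker sees the caller's value.
        propagated = submit_with_context(pool, do_work).result()
    return detached, propagated
```

In a tracing setup, the detached case is what produces a broken trace: the span created in the worker has no parent and starts a new trace.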