Process large volumes of requests asynchronously at 50% of the cost of real-time inference. Batch inference is OpenAI-compatible and ideal for model evaluation, data labeling, and other bulk workloads.

Workflow

Submit a task: Upload a file with multiple requests.

Process asynchronously: The system processes tasks in a background queue. You can monitor task progress and status through the console or API.

Download results: When the task completes, the system generates a result file containing successful responses and an error file with details of any failures.

Availability

International

In the international deployment mode, both the endpoint and data storage are located in the Singapore region. Model inference compute resources are dynamically scheduled across global regions, excluding the Chinese mainland.

Supported models: qwen-max, qwen-plus, qwen-flash, qwen-turbo.

Chinese mainland

In the Chinese mainland deployment mode, both the endpoint and data storage are located in the Beijing region. Model inference compute resources are available only in the Chinese mainland.

Supported models:

Text generation models: Stable versions of Qwen-Max, Plus, Flash, and Long, and some `latest` versions. QwQ series (qwq-plus) and some third-party models (deepseek-r1, deepseek-v3) are also supported.

Multimodal models: Stable versions of Qwen-VL-Max, Plus, and Flash, plus some `latest` versions. The Qwen-OCR model is also supported.

Text embedding models: text-embedding-v4.

Some models support thinking mode. When enabled, this mode generates thinking tokens and increases costs.

Models in the `qwen3.5` series (such as `qwen3.5-plus` and `qwen3.5-flash`) enable thinking mode by default. When using hybrid-thinking models, explicitly set the `enable_thinking` parameter (`true` or `false`).

Usage steps

Step 1: Prepare the input file

Before creating a task, prepare a file that meets the following requirements:

Format: UTF-8 encoded JSONL (one independent JSON object per line).

Scale limits: Up to 50,000 requests per file, max 500 MB.

Split larger datasets into separate tasks.

Per-request limit: Each JSON object can be up to 6 MB and must fit within the model's context window.

Consistency: All requests must use the same model.

Unique identifier: Each request must include a `custom_id` field whose value is unique within the file. This identifier is used to match requests with their results.
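The requirements above can be checked locally before uploading. The following is a minimal sketch, not an official tool: the function name `validate_batch_lines` is hypothetical, "MB" is assumed to mean 1024 × 1024 bytes, and `custom_id` is assumed to be a string as in the sample file. It does not check the model context window.

```python
import json

MAX_REQUESTS = 50_000                # requests per file
MAX_FILE_BYTES = 500 * 1024 * 1024   # assumption: "500 MB" read as 500 MiB
MAX_LINE_BYTES = 6 * 1024 * 1024     # assumption: "6 MB" read as 6 MiB


def validate_batch_lines(lines):
    """Return a list of problems found in an iterable of JSONL lines (str)."""
    problems = []
    seen_ids = set()
    models = set()
    total_bytes = 0
    request_count = 0

    for number, line in enumerate(lines, start=1):
        size = len(line.encode("utf-8"))
        total_bytes += size
        text = line.strip()
        if not text:
            # Blank lines are skipped here; whether the service tolerates
            # them is not stated in this document.
            continue
        request_count += 1
        if size > MAX_LINE_BYTES:
            problems.append(f"line {number}: request exceeds 6 MB")
        try:
            request = json.loads(text)
        except json.JSONDecodeError as exc:
            problems.append(f"line {number}: invalid JSON ({exc.msg})")
            continue
        if not isinstance(request, dict):
            problems.append(f"line {number}: not a JSON object")
            continue

        custom_id = request.get("custom_id")
        if not isinstance(custom_id, str) or not custom_id:
            problems.append(f"line {number}: missing or non-string custom_id")
        elif custom_id in seen_ids:
            problems.append(f"line {number}: duplicate custom_id {custom_id!r}")
        else:
            seen_ids.add(custom_id)

        body = request.get("body")
        model = body.get("model") if isinstance(body, dict) else None
        if isinstance(model, str) and model:
            models.add(model)
        else:
            problems.append(f"line {number}: missing body.model")

    if request_count > MAX_REQUESTS:
        problems.append(f"file has {request_count} requests (limit {MAX_REQUESTS})")
    if total_bytes > MAX_FILE_BYTES:
        problems.append("file exceeds 500 MB")
    if len(models) > 1:
        problems.append(f"more than one model in file: {sorted(models)}")
    return problems
```

To check a file on disk, pass the open file object, for example `validate_batch_lines(open("batch.jsonl", encoding="utf-8"))`. An empty list means none of these checks failed.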

Sample file

{"custom_id":"1","method":"POST","url":"/v1/chat/completions","body":{"model":"qwen-max","messages":[{"role":"system","content":"You are a helpful assistant."},{"role":"user","content":"Hello!"}]}}

{"custom_id":"2","method":"POST","url":"/v1/chat/completions","body":{"model":"qwen-max","messages":[{"role":"system","content":"You are a helpful assistant."},{"role":"user","content":"What is 2+2?"}]}}

JSONL batch generation tool

Use this tool to quickly generate JSONL files.
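If you prefer to build the file in code, the sample file format can be reproduced with a few lines of Python. This is an illustrative sketch: `build_request` and `write_batch_file` are hypothetical helper names, and placing `enable_thinking` as a top-level field of `body` is an assumption that this page does not confirm.

```python
import json


def build_request(custom_id, model, user_text,
                  system_text="You are a helpful assistant.",
                  enable_thinking=None):
    """Build one request object shaped like the lines in the sample file."""
    body = {
        "model": model,
        "messages": [
            {"role": "system", "content": system_text},
            {"role": "user", "content": user_text},
        ],
    }
    if enable_thinking is not None:
        # Assumption: the parameter sits at the top level of the request body.
        body["enable_thinking"] = enable_thinking
    return {
        "custom_id": custom_id,
        "method": "POST",
        "url": "/v1/chat/completions",
        "body": body,
    }


def write_batch_file(path, model, prompts, enable_thinking=None):
    """Write one JSON object per line (UTF-8) and return the request count."""
    count = 0
    with open(path, "w", encoding="utf-8") as handle:
        for count, prompt in enumerate(prompts, start=1):
            # custom_id is the 1-based position, so it is unique within the file.
            request = build_request(str(count), model, prompt,
                                    enable_thinking=enable_thinking)
            handle.write(json.dumps(request, ensure_ascii=False) + "\n")
    return count
```

Calling `write_batch_file("batch.jsonl", "qwen-max", ["Hello!", "What is 2+2?"])` produces two lines equivalent to the sample file above. Every request uses the same model, which satisfies the consistency requirement.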

Step 2: Submit and view results

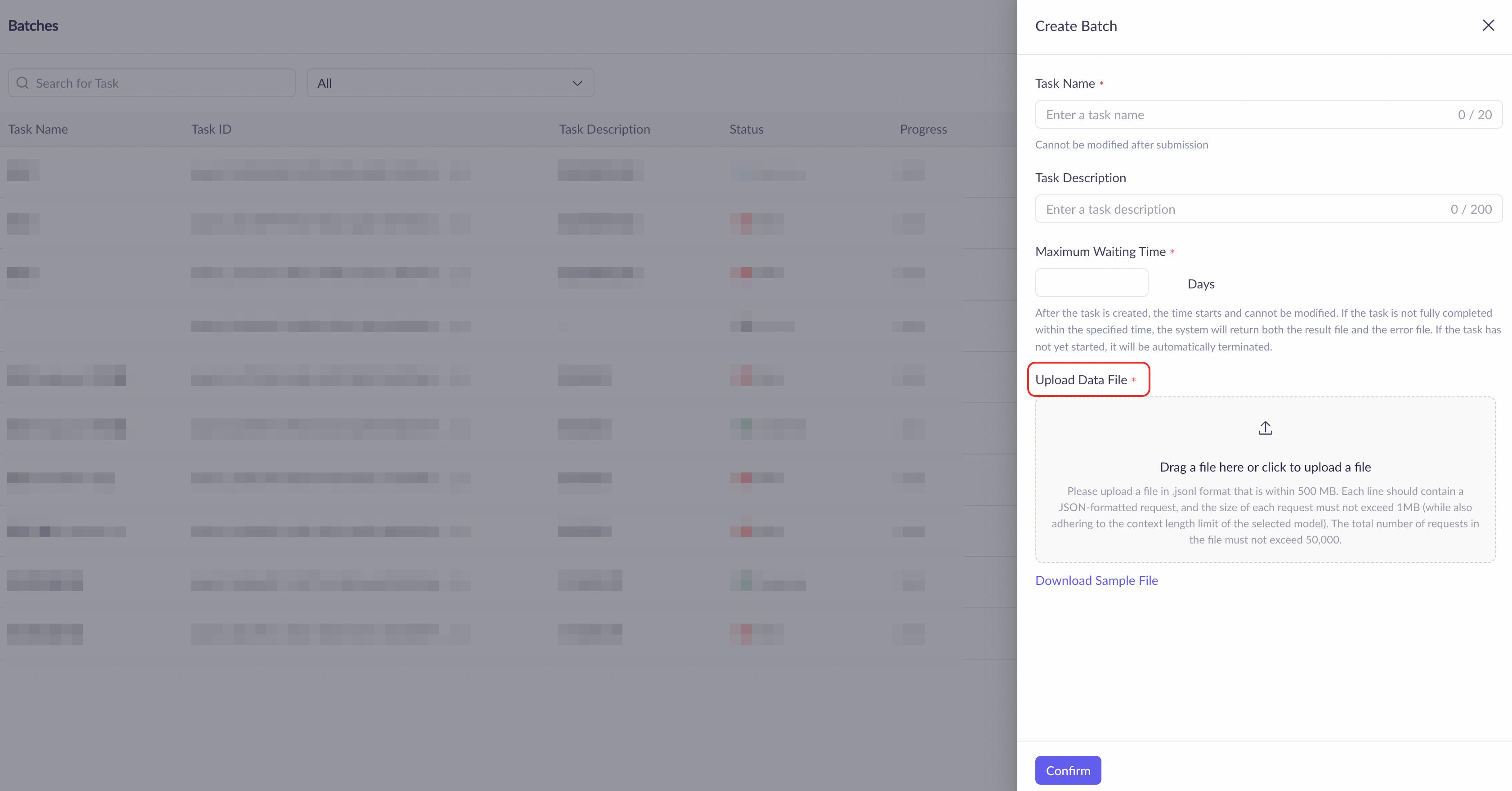

Create a task

On the Batch inference page, click Create Batch.

In the dialog box, enter a Task Name and Description, set the Maximum Waiting Time (1 to 14 days), and upload your JSONL file.

Click Download Sample File to download a template.

When ready, click Confirm.

View and manage tasks

View:

On the task list page, view the Progress (processed/total requests) and Status for each task.

Search by task name or ID, or filter by workspace to quickly locate a specific task.

Manage:

Cancel: Cancel a running task from the Actions column.

Troubleshoot: For failed tasks, hover over the status to view an error summary and download the error file for detailed information.

Download and analyze results

When a task completes, click View Results to download the output files:

Result file: Contains all successful requests and their `response` results.

Error file (if any): Contains all failed requests and their `error` details.

Both files include custom_id for matching results with input requests.
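Because both files carry `custom_id`, results can be joined back to the original requests. The sketch below assumes each output line is a JSON object with a `custom_id` field plus either a `response` or an `error` field; the exact line layout is not specified on this page, and `join_results` is a hypothetical helper name.

```python
import json


def index_by_custom_id(jsonl_text):
    """Parse JSONL text into a dict keyed by each record's custom_id."""
    records = {}
    for line in jsonl_text.splitlines():
        if line.strip():
            record = json.loads(line)
            records[record["custom_id"]] = record
    return records


def join_results(input_text, result_text, error_text=""):
    """Pair every input request with its result, its error, or neither."""
    results = index_by_custom_id(result_text)
    errors = index_by_custom_id(error_text)
    joined = []
    for custom_id, request in index_by_custom_id(input_text).items():
        if custom_id in results:
            # Assumed field name: "response"
            status, detail = "ok", results[custom_id].get("response")
        elif custom_id in errors:
            # Assumed field name: "error"
            status, detail = "error", errors[custom_id].get("error")
        else:
            status, detail = "missing", None
        joined.append({
            "custom_id": custom_id,
            "request": request,
            "status": status,
            "detail": detail,
        })
    return joined
```

Rows with status `error` or `missing` are candidates for resubmission in a new task.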

Step 3: View usage statistics (optional)

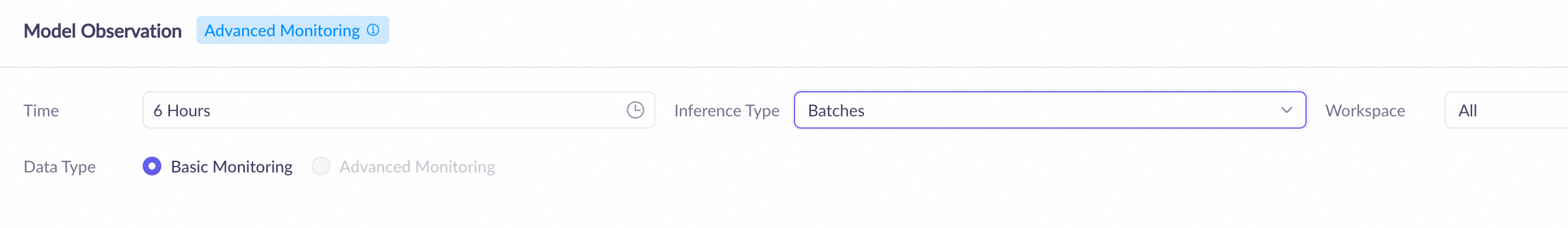

On the Model Monitoring page, filter and view usage statistics for batch inference.

View data overview: Select a Time (up to 30 days) and set Inference Type to Batches to display:

Monitoring data: Summary statistics for all models in the selected period, including total calls and failures.

Model list: Detailed metrics for each model, including total calls, failure rate, and average call duration.

To view inference data older than 30 days, go to the Bills page.

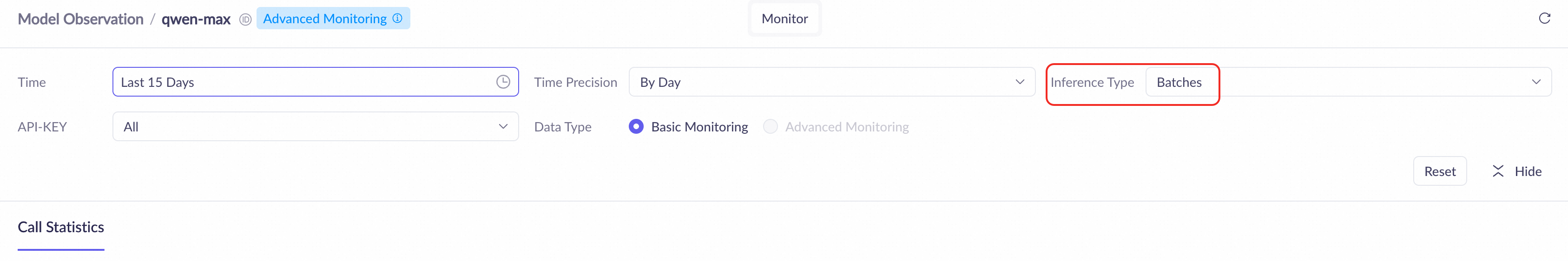

View model details: In the Model list, click Actions for a specific model, then select Monitor to view Call Statistics such as call count and usage volume.

Call data is recorded when tasks complete. Running tasks show no call data until finished.

Monitoring data has a 1 to 2 hour delay.

API reference

Use the OpenAI-compatible API to automate batch task creation and management. Core workflow:

1. Upload the file: Call `POST /v1/files` to upload your file and record the returned file ID.

2. Create a task: Call `POST /v1/batches` with the file ID from step 1, and record the returned `batch_id`.

3. Poll status: Use the `batch_id` to poll `GET /v1/batches/{batch_id}`. When `status` becomes `completed`, record the `output_file_id` and stop polling.

4. Download results: Use the `output_file_id` to call `GET /v1/files/{output_file_id}/content` and download the result file.

For complete Batch API definitions and examples, see OpenAI compatible: batch (file input).
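The four calls in the core workflow can be sketched with the OpenAI Python SDK. This is an illustration under stated assumptions, not the reference implementation: `run_batch` is a hypothetical helper, `client` is assumed to be an `openai.OpenAI` instance configured for your deployment, and the `purpose`, `endpoint`, and `completion_window` values follow the OpenAI Batch API rather than anything stated on this page.

```python
import time

# Statuses after which polling can stop (see Task lifecycle).
TERMINAL_STATUSES = {"completed", "failed", "expired", "cancelled"}


def run_batch(client, input_path, poll_seconds=60, sleep=time.sleep):
    """Upload a JSONL file, run it as a batch task, and return the result text.

    `client` is assumed to behave like an `openai.OpenAI` instance.
    """
    # Step 1: POST /v1/files
    with open(input_path, "rb") as handle:
        uploaded = client.files.create(file=handle, purpose="batch")

    # Step 2: POST /v1/batches
    batch = client.batches.create(
        input_file_id=uploaded.id,
        endpoint="/v1/chat/completions",
        completion_window="24h",  # assumption: value taken from the OpenAI API
    )

    # Step 3: GET /v1/batches/{batch_id} until the task reaches a final status
    while batch.status not in TERMINAL_STATUSES:
        sleep(poll_seconds)
        batch = client.batches.retrieve(batch.id)

    if batch.status != "completed":
        raise RuntimeError(f"batch {batch.id} ended with status {batch.status}")
    if batch.output_file_id is None:
        return ""  # no result file was produced

    # Step 4: GET /v1/files/{output_file_id}/content
    return client.files.content(batch.output_file_id).text
```

To use it, pass a client created with your API key and the base URL of your deployment (neither is specified on this page). The `sleep` parameter exists only so the polling delay can be replaced in tests.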

Task lifecycle

validating: The system is verifying file format (JSONL) and request validity.

in_progress: The system is processing requests.

completed: Result and error files are ready for download.

failed: Validation failed (incorrect format or oversized file). No requests were executed.

expired: The task exceeded the maximum waiting time. To retry, create a new task with a longer maximum waiting time.

cancelled: The task was manually cancelled. Unstarted requests were terminated.

Billing

Unit price: The input and output tokens for all successful requests are charged at 50% of the real-time inference price for the corresponding model. For more information, see Model list.

Billing scope:

Only requests successfully executed within a task are billed.

Requests that fail because of file parsing errors, task execution failures, or row-level errors do not incur charges.

For canceled tasks, requests successfully completed before the cancellation are still billed as normal.

Batch inference is a separate billing item. It supports the AI general-purpose savings plan but not other discounts, such as subscriptions (other savings plans) or free quotas for new users. Features such as context cache are also not supported.

Some models, such as qwen3.5-plus and qwen3.5-flash, have thinking mode enabled by default. This mode generates additional thinking tokens, which are billed at the output token price and increase costs. To control costs, set the `enable_thinking` parameter based on task complexity. For more information, see Deep thinking.
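The billing rules above reduce to simple arithmetic. The sketch below is an estimate only: the function name is hypothetical, prices are assumed to be quoted per 1,000,000 tokens, and the thinking-token count is passed separately from the output-token count. Look up the actual real-time prices in the Model list.

```python
def estimate_batch_cost(input_tokens, output_tokens, thinking_tokens,
                        realtime_input_price, realtime_output_price,
                        batch_rate=0.5):
    """Estimate the charge for the successful requests of a batch task.

    Prices are assumed to be per 1,000,000 tokens at real-time rates.
    Thinking tokens are billed at the output token price.
    """
    billed_output_tokens = output_tokens + thinking_tokens
    realtime_cost = (
        input_tokens * realtime_input_price
        + billed_output_tokens * realtime_output_price
    ) / 1_000_000
    # Batch inference is charged at 50% of the real-time price.
    return realtime_cost * batch_rate
```

For example, with hypothetical real-time prices of 1.0 (input) and 4.0 (output) per million tokens, 1,000,000 input tokens, 200,000 output tokens, and 300,000 thinking tokens give a real-time cost of 3.0 and a batch cost of 1.5. Disabling thinking mode for the same workload would remove the 300,000 thinking tokens from the total.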

FAQ

Do I need to purchase or enable anything extra?

No additional setup is required. Activate Model Studio and pay as you go.

Why does my task fail immediately after submission (status changes to `failed`)?

This typically indicates a file-level error. The task does not execute any inference requests. Check the following:

File format: Verify it uses strict JSONL format with one complete JSON object per line.

File scale: Ensure the file size and line count do not exceed limits. For details, see Prepare the input file.

Model consistency: Verify that the `body.model` field is identical across all requests and that the model is available in your region.

How long does a batch task take?

Processing time depends on system load, and tasks may queue during peak hours. Results are returned within the maximum waiting time set for the task; a task that exceeds it moves to `expired`.

Error codes

If a call fails with an error message, see Error messages.