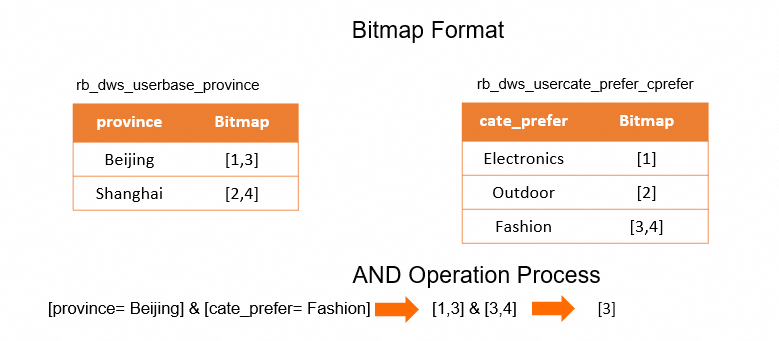

When a user profiling system has more than 1,000 tags, wide tables become impractical — update performance degrades as columns accumulate. Roaring bitmaps offer a better approach: encode user IDs as integers, store them in compact bitmap structures, and replace expensive JOIN operations with fast bitwise AND, OR, and NOT operations. For large-scale user property analysis, Hologres can respond to queries in sub-seconds using this approach.

Hologres is compatible with the PostgreSQL ecosystem and natively supports the roaringbitmap extension.

When to use roaring bitmaps

Roaring bitmaps are effective in two scenarios:

Large-scale tag filtering: Replace JOIN operations across many large tables with

BITMAP_ANDintersection operations. The bitmap extension uses Single Instruction Multiple Data (SIMD) optimization to improve CPU utilization by 1% to 2%.Deduplication at scale: Bitmaps have intrinsic deduplication built in, eliminating the overhead of unique vector (UV) computing.

Property tags vs. action tags

Tags in a user profiling system fall into two categories with different characteristics that determine whether bitmap storage makes sense:

| Tag type | Description | Examples | Suitable for bitmaps? |

|---|---|---|---|

| Property tags | Stable user attributes filtered by precise conditions | Gender, province, marital status | Yes — high compression ratio, efficient bitwise operations |

| Action tags | Behavioral data organized by time, frequently updated, requires range scanning and aggregate filtering | Page views, purchases, active logons | No — low compression ratio, data changes constantly |

Why action tags are not suitable: Fact tables are organized by time (for example, one partition per day), and each day's slice contains limited rows. Compressing sparse per-day slices into bitmaps wastes storage due to row-oriented overhead. Action data also changes frequently, and the [option→bitmap] storage structure cannot identify which records need updating. For action data queries, Hologres uses its original columnar storage format instead.

Prerequisites

Before you begin, make sure you have:

A Hologres instance

The

roaringbitmapextension enabled (runCREATE EXTENSION roaringbitmap;)Source data tables with user IDs and tag columns

Roaring bitmaps store only integers. If your user IDs are strings, encode them to integers before building bitmap tables. See Step 1: Encode user IDs for the encoding approach and trade-offs.

How it works

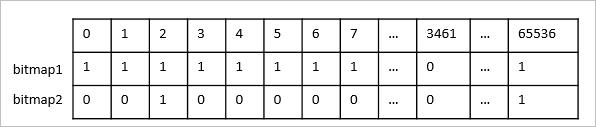

The bitmap approach has three layers:

Encoding: Map string user IDs to integers using a dictionary table with a

BIGSERIALauto-increment column.Bitmap table design: Split tag tables by column into bitmap tables. Each bitmap table stores

(tag_value, bucket, bitmap)— wherebucket = encode_uid / 65536distributes the data evenly across nodes.Querying: Combine bitmaps from multiple tables using

RB_AND,RB_OR, andrb_cardinalityto count matching users without JOIN or deduplication overhead.

Segmenting bitmaps into 65,536 buckets is critical for even resource distribution. Without segmentation, a column like gender (only Male and Female) produces just two bitmaps, concentrating compute and storage on two nodes.

Build a property tag bitmap system

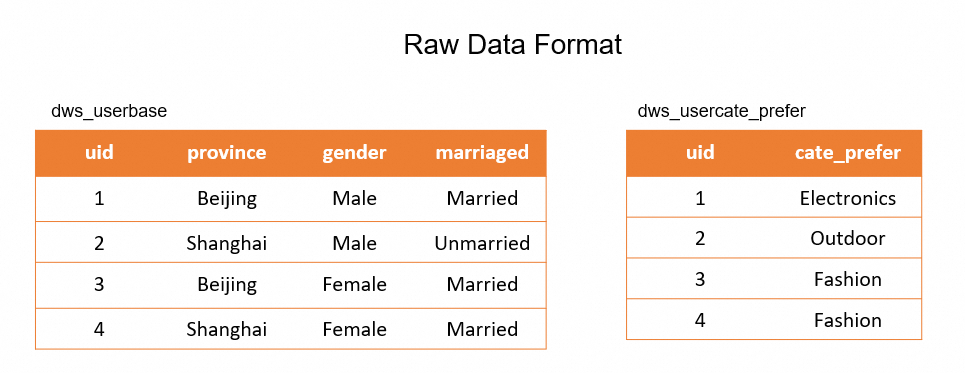

The following steps walk through a complete example using two source tables:

dws_userbase— basic user properties (province, gender)dws_shop_cust— shop customer types (shop_id, cust_type)

Step 1: Encode user IDs

Bitmaps require integer input. Create a dictionary table using BIGSERIAL to map string user IDs to sequential integers:

-- Create a UID dictionary table

CREATE TABLE dws_uid_dict (

encode_uid bigserial,

uid text primary key

);

-- Populate from your source tag table

INSERT INTO dws_uid_dict(uid)

SELECT uid

FROM dws_userbase ON CONFLICT DO NOTHING;This produces compact, sequential integer IDs — efficient to store as bitmaps. Sparse integer ranges consume significantly more space than dense ranges.

When to encode:

| User ID type | Recommendation |

|---|---|

| String user IDs | Encode (required) |

| Integer user IDs with infrequent ID restoration | Encode (recommended) |

| Integer user IDs that require frequent restoration (for example, real-time output of user details) | Skip encoding — decoding back requires an extra JOIN at query time |

Step 2: Design bitmap tables

Split tag columns into separate bitmap tables. Each table uses bucket as the distribution key so queries run in parallel across nodes.

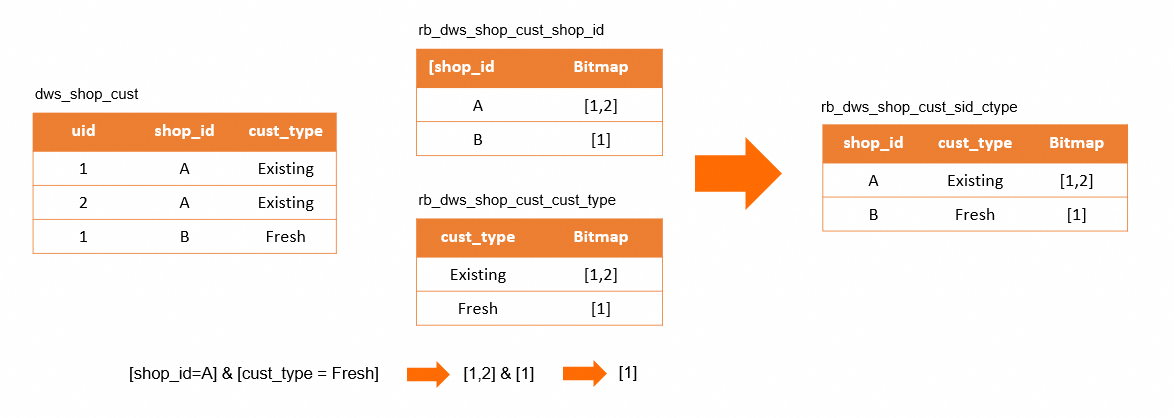

Do not split correlated columns into separate bitmap tables. If cust_type is a metric for shop_id (meaning a user's customer type only makes sense in the context of a specific shop), splitting them produces incorrect results. Combine correlated columns into one bitmap table.

Correct approach — combined correlated columns:

What goes wrong when splitting correlated columns:

For the dws_shop_cust table, cust_type depends on shop_id. Splitting them into separate bitmap tables and filtering [shop_id = A] & [cust_type = Fresh] returns UID [1] — but that user is not actually a Fresh customer of shop A. Use a combined bitmap table instead.

Create the bitmap tables:

-- Enable the roaringbitmap extension

CREATE EXTENSION roaringbitmap;

-- Bitmap table: province property

BEGIN;

CREATE TABLE rb_dws_userbase_province (

province text,

bucket int,

bitmap roaringbitmap

);

CALL set_table_property('rb_dws_userbase_province', 'distribution_key', 'bucket');

END;

-- Bitmap table: shop + customer type (correlated columns combined)

BEGIN;

CREATE TABLE rb_dws_shop_cust_sid_ctype (

shop_id text,

cust_type text,

bucket int,

bitmap roaringbitmap

);

CALL set_table_property('rb_dws_shop_cust_sid_ctype', 'distribution_key', 'bucket');

END;Step 3: Write data into bitmap tables

Use encode_uid / 65536 to assign each user to a bucket, then aggregate with rb_build_agg:

-- Write property bitmap data

INSERT INTO rb_dws_userbase_province

SELECT province,

encode_uid / 65536 AS bucket,

rb_build_agg(b.encode_uid) AS bitmap

FROM dws_userbase a

JOIN dws_uid_dict b ON a.uid = b.uid

GROUP BY province, bucket;

-- Write shop + customer type bitmap data

INSERT INTO rb_dws_shop_cust_sid_ctype

SELECT shop_id,

cust_type,

encode_uid / 65536 AS bucket,

rb_build_agg(b.encode_uid) AS bitmap

FROM dws_shop_cust a

JOIN dws_uid_dict b ON a.uid = b.uid

GROUP BY shop_id, cust_type, bucket;Step 4: Query with bitmap operations

To count users matching [shop_id = A] & [cust_type = Fresh] & [province = Beijing], combine bitmaps from both tables with RB_AND and count with RB_CARDINALITY:

SELECT SUM(RB_CARDINALITY(rb_and(ub.bitmap, uc.bitmap)))

FROM

(SELECT rb_or_agg(bitmap) AS bitmap,

bucket

FROM rb_dws_userbase_province

WHERE province = 'Beijing'

GROUP BY bucket) ub

JOIN

(SELECT rb_or_agg(bitmap) AS bitmap,

bucket

FROM rb_dws_shop_cust_sid_ctype

WHERE shop_id = 'A'

AND cust_type = 'Fresh'

GROUP BY bucket) uc ON ub.bucket = uc.bucket;Each subquery retrieves matching buckets and merges within-bucket bitmaps using rb_or_agg. The outer query intersects the two result sets per bucket and sums the cardinalities.

Handle action tags

For action data (behavioral fact tables), skip the bitmap conversion. Store the fact table in Hologres using columnar orientation, with clustering_key on shop_id and encode_uid for efficient filtering.

Create the action table and its encoded version:

-- Source action table

BEGIN;

CREATE TABLE dws_usershop_behavior (

uid int not null,

shop_id text not null,

pv_cnt int,

trd_cnt int,

ds integer not null

);

CALL set_table_property('dws_usershop_behavior', 'distribution_key', 'uid');

COMMIT;

-- Encoded action table (bucket-distributed for local join)

BEGIN;

CREATE TABLE dws_usershop_behavior_bucket (

encode_uid int not null,

shop_id text not null,

pv_cnt int,

trd_cnt int,

ds int not null,

bucket int

);

CALL set_table_property('dws_usershop_behavior_bucket', 'orientation', 'column');

CALL set_table_property('dws_usershop_behavior_bucket', 'distribution_key', 'bucket');

CALL set_table_property('dws_usershop_behavior_bucket', 'clustering_key', 'shop_id,encode_uid');

COMMIT;

-- Write fact data

INSERT INTO dws_usershop_behavior_bucket

SELECT encode_uid,

shop_id,

pv_cnt,

trd_cnt,

ds,

encode_uid / 65536

FROM dws_usershop_behavior a

JOIN dws_uid_dict b ON a.uid = b.uid;To query users matching [province = Beijing] & [shop_id = A, no purchase in 7 days], generate a bitmap from the filtered action table and join it with the property bitmap index. Because both tables use bucket as their distribution key, Hologres executes this as a local join:

SELECT sum(rb_cardinality(bitmap)) AS cnt

FROM

(SELECT rb_and(ub.bitmap, us.bitmap) AS bitmap,

ub.bucket

FROM

(SELECT rb_or_agg(bitmap) AS bitmap,

bucket

FROM rb_dws_userbase_province

WHERE province = 'Beijing'

GROUP BY bucket) AS ub

JOIN

(SELECT rb_build_agg(encode_uid) AS bitmap,

bucket

FROM

(SELECT encode_uid,

bucket

FROM dws_usershop_behavior_bucket

WHERE shop_id = 'A'

AND ds > to_char(current_date - 7, 'YYYYMMdd')::int

GROUP BY encode_uid, bucket

HAVING sum(trd_cnt) = 0) tmp

GROUP BY bucket) us ON ub.bucket = us.bucket) r;Load bitmap data offline

Process bitmap data offline to avoid impact on production queries. Both MaxCompute and Hive support loading data into Hologres via foreign tables.

Build bitmaps in MaxCompute

MaxCompute requires a JAR-based user-defined function (UDF) for bitmap operations. All steps mirror the online approach: encode UIDs, assign buckets, and aggregate into bitmaps.

-- Select the MaxCompute project

USE bitmap_demo;

-- Create source tables

CREATE TABLE mc_dws_uid_dict (

encode_uid bigint,

bucket bigint,

uid string

);

CREATE TABLE mc_dws_userbase (

uid string,

province string,

gender string,

marriaged string

);

-- Encode UID values (incremental — skips already-encoded UIDs)

WITH uids_to_encode AS (

SELECT DISTINCT(ub.uid),

CAST(ub.uid / 65536 AS BIGINT) AS bucket

FROM mc_dws_userbase ub

LEFT JOIN mc_dws_uid_dict d ON ub.uid = d.uid

WHERE d.uid IS NULL

),

uids_bucket_encode_offset AS (

SELECT bucket,

sum(cnt) OVER (ORDER BY bucket ASC) - cnt AS bucket_offset

FROM (

SELECT count(1) AS cnt, bucket

FROM uids_to_encode

GROUP BY bucket

) x

),

dict_used_id_offset AS (

SELECT max(encode_uid) AS used_id_offset FROM mc_dws_uid_dict

)

-- New encode_uid = max existing + bucket offset + row number within bucket

INSERT INTO mc_dws_uid_dict

SELECT COALESCE((SELECT used_id_offset FROM dict_used_id_offset), 0) + bucket_offset + rn,

bucket,

uid

FROM (

SELECT row_number() OVER (PARTITION BY ub.bucket ORDER BY ub.uid) AS rn,

ub.bucket,

bo.bucket_offset,

uid

FROM uids_to_encode ub

JOIN uids_bucket_encode_offset bo ON ub.bucket = bo.bucket

) j;

-- Register bitmap UDFs from the mc-bitmap JAR

ADD JAR function_jar_dir/mc-bitmap-functions.jar AS mc_bitmap_func.jar -f;

CREATE FUNCTION mc_rb_cardinality AS com.alibaba.hologres.RbCardinalityUDF USING mc_bitmap_func.jar;

CREATE FUNCTION mc_rb_build_agg AS com.alibaba.hologres.RbBuildAggUDAF USING mc_bitmap_func.jar;

-- Create and populate the bitmap table

CREATE TABLE mc_rb_dws_userbase_province (

province string,

bucket int,

bitmap string

);

INSERT INTO mc_rb_dws_userbase_province

SELECT province,

b.bucket_num,

mc_rb_build_agg(b.encode_uid) AS bitmap

FROM mc_dws_userbase a

JOIN mc_dws_uid_dict b ON a.uid = b.uid

GROUP BY province, b.bucket_num;Download the mc-bitmap JAR to use bitmap functions in MaxCompute.

Import into Hologres

Create a foreign table pointing to the MaxCompute bitmap table, then insert into the Hologres bitmap table using roaringbitmap_text to convert the serialized string format:

-- Create a MaxCompute foreign table in Hologres

CREATE TABLE mc_rb_dws_userbase_province (

province text,

bucket int,

bitmap text

) SERVER odps_server OPTIONS (project_name 'bitmap_demo', table_name 'mc_rb_dws_userbase_province');

-- Write bitmap data from MaxCompute into Hologres

INSERT INTO rb_dws_userbase_province

SELECT province,

bucket::INT,

roaringbitmap_text(bitmap, FALSE)

FROM mc_rb_dws_userbase_province;After the import completes, run the same bitwise queries described in Step 4: Query with bitmap operations.

For a detailed walkthrough of offline UV calculation, see Batch UV calculation.

Real-time bitmap updates with Flink

For real-time scenarios, use Hologres with Flink to maintain bitmap data as user actions arrive:

Use the UID dictionary table as a Flink dimension table. Use

INSERT ON CONFLICTin Hologres to add new user IDs as they appear, then join the dimension table with the incoming action stream in Flink.Aggregate the joined stream by tag using Roaring bitmaps.

Write the resulting bitmaps into the bitmap table in Hologres.

What's next

roaringbitmap functions — full reference for RB_AND, RB_OR, RB_CARDINALITY, and other bitmap functions

PostgreSQL SERIAL — auto-increment column setup in Hologres

Batch UV calculation — complete offline UV deduplication example