EMR Serverless Spark includes a built-in MaxCompute DataSource based on the Spark DataSource V2 API. MaxCompute, formerly known as Open Data Processing Service (ODPS), is a fast, fully managed data warehouse solution for exabyte-scale data. This topic shows you how to connect EMR Serverless Spark to MaxCompute and run read/write operations using SparkSQL and Notebooks.

Prerequisites

Before you begin, ensure that you have:

-

An EMR Serverless Spark workspace. See Create a workspace.

-

A MaxCompute project with open storage enabled. See Create a MaxCompute project and Enable open storage.

The examples in this topic use the pay-as-you-go billing method for open storage.

Limitations

-

Supported engine versions:

-

esr-4.x: esr-4.6.0 and later

-

esr-3.x: esr-3.5.0 and later

-

esr-2.x: esr-2.9.0 and later


-

The operations in this topic require you to enable the open storage feature for MaxCompute. See Enable open storage.

-

The MaxCompute endpoint you use must support the Storage API. If it does not, switch to a compatible endpoint. See Data transmission resources.
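The engine version rule above can be checked in a script before you create a session. The following minimal sketch uses a hypothetical helper, `supports_maxcompute_datasource`, that compares an engine version string against the minimums listed above. It assumes version strings contain the `esr-X.Y.Z` form used in this topic. It also treats any major version that is not listed as unsupported, which is a conservative choice.

```python
import re

# Minimum engine versions from the Limitations list, keyed by major version.
MIN_ESR_VERSIONS = {
    4: (4, 6, 0),
    3: (3, 5, 0),
    2: (2, 9, 0),
}

# Matches the "esr-X.Y.Z" part of a version string; any suffix is ignored.
_ESR_PATTERN = re.compile(r"esr-(\d+)\.(\d+)\.(\d+)")


def supports_maxcompute_datasource(engine_version: str) -> bool:
    """Return True if the engine version meets the minimum for its major version."""
    match = _ESR_PATTERN.search(engine_version)
    if match is None:
        raise ValueError(f"not an esr engine version: {engine_version!r}")
    version = tuple(int(part) for part in match.groups())
    minimum = MIN_ESR_VERSIONS.get(version[0])
    # Major versions that are not listed are treated as unsupported.
    return minimum is not None and version >= minimum


print(supports_maxcompute_datasource("esr-4.6.0"))  # True
print(supports_maxcompute_datasource("esr-3.4.1"))  # False
```

Comparing integer tuples rather than raw strings keeps a version such as esr-2.10.0 from being treated as older than esr-2.9.0.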

Billing

With pay-as-you-go open storage, charges apply to the logical size of data read or written after you exceed the 1 TB free quota.
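As a worked example of this rule, the following sketch computes the billable logical volume. It assumes that reads and writes draw down one shared 1 TB quota within the same quota period. See the MaxCompute billing documentation for how the quota is actually scoped and for unit prices, which this topic does not state.

```python
FREE_QUOTA_TB = 1.0  # free quota stated in the Billing section


def billable_tb(read_tb: float, written_tb: float, free_quota_tb: float = FREE_QUOTA_TB) -> float:
    """Return the logical volume (in TB) that is charged after the free quota is used up.

    Assumption: reads and writes share a single free quota.
    """
    if read_tb < 0 or written_tb < 0:
        raise ValueError("volumes must be non-negative")
    return max(0.0, read_tb + written_tb - free_quota_tb)


print(billable_tb(0.4, 0.3))  # 0.0 (within the free quota)
print(billable_tb(2.0, 0.5))  # 1.5
```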

Step 1: Create a session

Create a session to connect EMR Serverless Spark to MaxCompute. SQL sessions and Notebook sessions use the same Spark configuration parameters.

Spark configuration

Add the following configuration when creating either session type. Replace the placeholder values with your own.

spark.sql.catalog.odps org.apache.spark.sql.execution.datasources.v2.odps.OdpsTableCatalog

spark.sql.extensions org.apache.spark.sql.execution.datasources.v2.odps.extension.OdpsExtensions

spark.sql.sources.partitionOverwriteMode dynamic

spark.hadoop.odps.tunnel.quota.name pay-as-you-go

spark.hadoop.odps.project.name <project_name>

spark.hadoop.odps.end.point <endpoint>

spark.hadoop.odps.access.id <accessId>

spark.hadoop.odps.access.key <accessKey>

| Placeholder | Description | Example |
|---|---|---|
| <project_name> | Your MaxCompute project name | my_project |
| <endpoint> | Your MaxCompute endpoint. See Endpoints. | https://service.cn-hangzhou-vpc.maxcompute.aliyun-inc.com/api |
| <accessId> | AccessKey ID of the account used to access MaxCompute | LTAI5tXxx |
| <accessKey> | AccessKey secret of the account used to access MaxCompute | xXxXxXx |
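If you create sessions for several projects or endpoints, generating the configuration text can be less error-prone than editing it by hand. The following sketch uses a hypothetical helper, `render_spark_conf`, that fills in the four placeholders and rejects values that are empty or still look like `<placeholder>`. It can also append the schema parameter described in the next paragraph. It assumes that the Spark Configuration field accepts one `key value` pair per line, as in the configuration above.

```python
def render_spark_conf(project_name: str, endpoint: str, access_id: str, access_key: str,
                      enable_namespace_schema: bool = False) -> str:
    """Build the Spark configuration text, one 'key value' pair per line."""
    values = {
        "project_name": project_name,
        "endpoint": endpoint,
        "access_id": access_id,
        "access_key": access_key,
    }
    # Fail early if a value is missing or is still a '<...>' placeholder.
    for name, value in values.items():
        if not value or (value.startswith("<") and value.endswith(">")):
            raise ValueError(f"{name} is not set")

    conf = [
        ("spark.sql.catalog.odps", "org.apache.spark.sql.execution.datasources.v2.odps.OdpsTableCatalog"),
        ("spark.sql.extensions", "org.apache.spark.sql.execution.datasources.v2.odps.extension.OdpsExtensions"),
        ("spark.sql.sources.partitionOverwriteMode", "dynamic"),
        ("spark.hadoop.odps.tunnel.quota.name", "pay-as-you-go"),
        ("spark.hadoop.odps.project.name", project_name),
        ("spark.hadoop.odps.end.point", endpoint),
        ("spark.hadoop.odps.access.id", access_id),
        ("spark.hadoop.odps.access.key", access_key),
    ]
    if enable_namespace_schema:
        # Only for projects with the schema feature (three-layer model) enabled.
        conf.append(("spark.sql.catalog.odps.enableNamespaceSchema", "true"))
    return "\n".join(f"{key} {value}" for key, value in conf)


# Sample values taken from the placeholder table.
print(render_spark_conf(
    project_name="my_project",
    endpoint="https://service.cn-hangzhou-vpc.maxcompute.aliyun-inc.com/api",
    access_id="LTAI5tXxx",
    access_key="xXxXxXx",
))
```

In practice, load the AccessKey pair from a secure source rather than hard-coding it in scripts.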

To access a MaxCompute project with the schema feature (three-layer model) enabled, also add the following parameter. For more information, see Spark Connector and Schema operations.

spark.sql.catalog.odps.enableNamespaceSchema true

Create a SQL session

-

Log on to the EMR console.

-

In the left-side navigation pane, choose EMR Serverless > Spark.

-

Click the name of your workspace.

-

In the left-side navigation pane, choose Operation Center > Sessions.

-

On the SQL Session tab, click Create SQL Session.

-

Enter a name (for example, mc_sql_compute), paste the Spark configuration above into the Spark Configuration field, and click Create.

Create a Notebook session

-

Log on to the EMR console.

-

In the left-side navigation pane, choose EMR Serverless > Spark.

-

Click the name of your workspace.

-

In the left-side navigation pane, choose Operation Center > Sessions.

-

Click the Notebook Sessions tab, then click Create Notebook Session.

-

Enter a name (for example, mc_notebook_compute), paste the Spark configuration above into the Spark Configuration field, and click Create.

For more information about session management, see Session Manager.

Step 2: Read and write data

Use SparkSQL

-

On the EMR Serverless Spark page, click Data Development in the left navigation pane.

-

On the Development tab, click the icon.

-

In the dialog box, enter a name such as mc_load_task, keep the type as SparkSQL, and click OK.

-

Paste the following code into the new tab:

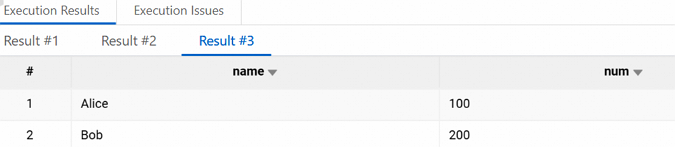

CREATE TABLE odps.default.mc_table (name STRING, num BIGINT);
INSERT INTO odps.default.mc_table (name, num) VALUES ('Alice', 100), ('Bob', 200);
SELECT * FROM odps.default.mc_table;

-

From the database drop-down list, select a database. From the Compute drop-down list, select the SQL session you created in Step 1 (for example, mc_sql_compute).

-

Click Run. After the job completes, results appear on the Execution Results tab.

-

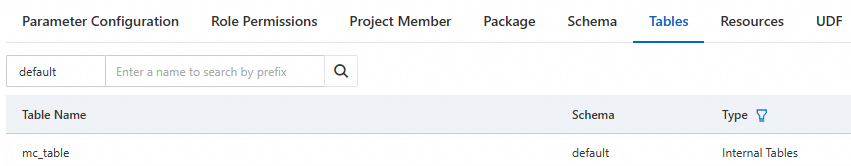

To verify, open the MaxCompute console, navigate to your project, and click the Tables tab. The mc_table table should appear.
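The SparkSQL task runs three semicolon-separated statements as one script, while `spark.sql()` in a Notebook session expects one statement per call, so the Notebook example places each statement in its own cell. To reuse a script like the one above in a notebook, one option is to split it into statements first. The helper `split_sql_statements` below is hypothetical. It splits only on semicolons outside single quotes, double quotes, and backticks, and it does not understand SQL comments, so strip comments first.

```python
def split_sql_statements(script: str) -> list[str]:
    """Split a SQL script on semicolons that are outside quoted text."""
    statements: list[str] = []
    current: list[str] = []
    quote = None      # the quote character we are currently inside, or None
    escaped = False   # True right after a backslash inside a quoted string
    for ch in script:
        if quote is not None:
            current.append(ch)
            if escaped:
                escaped = False
            elif ch == "\\" and quote != "`":
                # Backslash escapes apply to string literals, not backtick identifiers.
                escaped = True
            elif ch == quote:
                quote = None
        elif ch in ("'", '"', "`"):
            quote = ch
            current.append(ch)
        elif ch == ";":
            statement = "".join(current).strip()
            if statement:
                statements.append(statement)
            current = []
        else:
            current.append(ch)
    tail = "".join(current).strip()
    if tail:
        statements.append(tail)
    return statements


script = """
CREATE TABLE odps.default.mc_table (name STRING, num BIGINT);
INSERT INTO odps.default.mc_table (name, num) VALUES ('Alice', 100), ('Bob', 200);
SELECT * FROM odps.default.mc_table;
"""
for statement in split_sql_statements(script):
    # In a Notebook session, you would pass each statement to spark.sql(statement).
    print(statement)
```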

Use a Notebook

-

On the EMR Serverless Spark page, click Data Development in the left navigation pane.

-

On the Development tab, click the icon.

-

In the dialog box, enter a name such as mc_load_task, set the type to Interactive Development > Notebook, and click OK.

-

From the session drop-down list, select the Notebook session you created in Step 1 (for example, mc_notebook_compute).

-

Run the following code cells in sequence: Create a table:

spark.sql(""" CREATE TABLE odps.default.mc_table (name STRING, num BIGINT); """)Insert data:

spark.sql("INSERT INTO odps.default.mc_table (name, num) VALUES ('Alice', 100),('Bob', 200);")Query data:

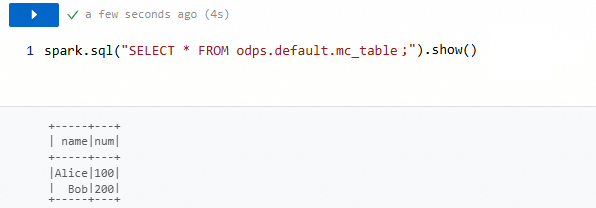

spark.sql("SELECT * FROM odps.default.mc_table;").show()Results appear on the Execution Results tab.

-

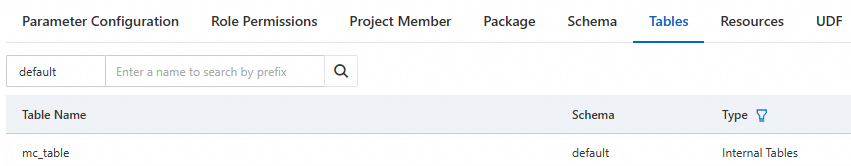

To verify, open the MaxCompute console, navigate to your project, and click the Tables tab. The mc_table table should appear.


What's next

The examples in this topic use SparkSQL and Notebook jobs. To use other job types (batch or streaming), see Develop a batch or streaming job.