When cluster workloads fluctuate, manually resizing node counts is slow and error-prone. Auto scaling in E-MapReduce (EMR) adds or removes task nodes automatically based on rules you define — keeping jobs running smoothly during peaks and reducing costs during off-peak hours.

Prerequisites

Before you begin, ensure that you have:

- A DataLake, Dataflow, online analytical processing (OLAP), DataServing, or custom cluster. For more information, see Create a cluster.
- A task node group containing pay-as-you-go instances or preemptible instances. For more information, see Create a node group.

Step 1: Select a trigger mode

Choose a trigger mode based on your workload characteristics:

| Scenario | Trigger mode |
|---|---|
| Workloads fluctuate on a predictable schedule, or you need a fixed node count within a specific period. | Time-based scaling |
| Workloads fluctuate without a predictable schedule, and the required node count changes with the actual workload. | Load-based scaling |
| Workloads have both predictable patterns and dynamic fluctuations. | Time-based scaling combined with load-based scaling |

Step 2: Configure auto scaling rules

When multiple auto scaling rules are configured and their conditions are met simultaneously, the system applies the following execution order:

- Scale-out rules take precedence over scale-in rules.
- Time-based and load-based rules run in the order in which they are triggered.
- Load-based rules are triggered at the time their cluster load metrics are evaluated.
- Load-based rules that use the same cluster load metrics are triggered in the order in which they were configured.
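
The ordering above can be read as a sort key. The following Python sketch is one interpretation of it, not the actual EMR scheduler: the `TriggeredRule` class, its fields, and the rule names are hypothetical and exist only for illustration.

```python
from dataclasses import dataclass


@dataclass
class TriggeredRule:
    # All fields are hypothetical names used only for this illustration.
    name: str
    kind: str            # "scale_out" or "scale_in"
    triggered_at: float  # time (in seconds) at which the rule's condition was met
    config_index: int    # position in which the rule was configured


def execution_order(rules):
    """Sort rules: scale-out first, then by trigger time, then by configuration order."""
    return sorted(
        rules,
        key=lambda r: (r.kind != "scale_out", r.triggered_at, r.config_index),
    )


rules = [
    TriggeredRule("shrink-idle", "scale_in", 10.0, 0),
    TriggeredRule("grow-on-containers", "scale_out", 12.0, 2),
    TriggeredRule("grow-on-pending", "scale_out", 12.0, 1),
]
ordered_names = [r.name for r in execution_order(rules)]
print(ordered_names)
# ['grow-on-pending', 'grow-on-containers', 'shrink-idle']
```

Both scale-out rules come before the scale-in rule even though the scale-in rule was triggered earlier, and the two scale-out rules with the same trigger time fall back to configuration order.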

Time-based scaling

Configure a time-based scale-out rule to run repeatedly or once at a specific time when workload is expected to increase. Pair it with a scale-in rule to reduce nodes during off-peak hours.

For a rule that repeats, set Rule Expiration Time to stop triggering scaling after a specified date.

Example: If workloads increase at 22:00 and decrease at 04:00 daily, configure a repeated scale-out rule at 22:00 and a repeated scale-in rule at 04:00.
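
To make the schedule in this example concrete, the following Python sketch checks whether a given time of day falls inside the scaled-out window. It only illustrates the window logic, including the wrap past midnight. The function and constant names are assumptions and are not part of EMR.

```python
from datetime import time

# Times taken from the example above; the names are hypothetical.
SCALE_OUT_AT = time(22, 0)
SCALE_IN_AT = time(4, 0)


def in_scaled_out_window(now, start=SCALE_OUT_AT, end=SCALE_IN_AT):
    """Return True if `now` is at or after the scale-out time and before the scale-in time."""
    if start <= end:
        # Window is contained within a single day.
        return start <= now < end
    # Window wraps past midnight, as with 22:00 to 04:00.
    return now >= start or now < end


print(in_scaled_out_window(time(23, 30)))  # True
print(in_scaled_out_window(time(12, 0)))   # False
```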

For parameter details, see Configure custom auto scaling rules.

Load-based scaling

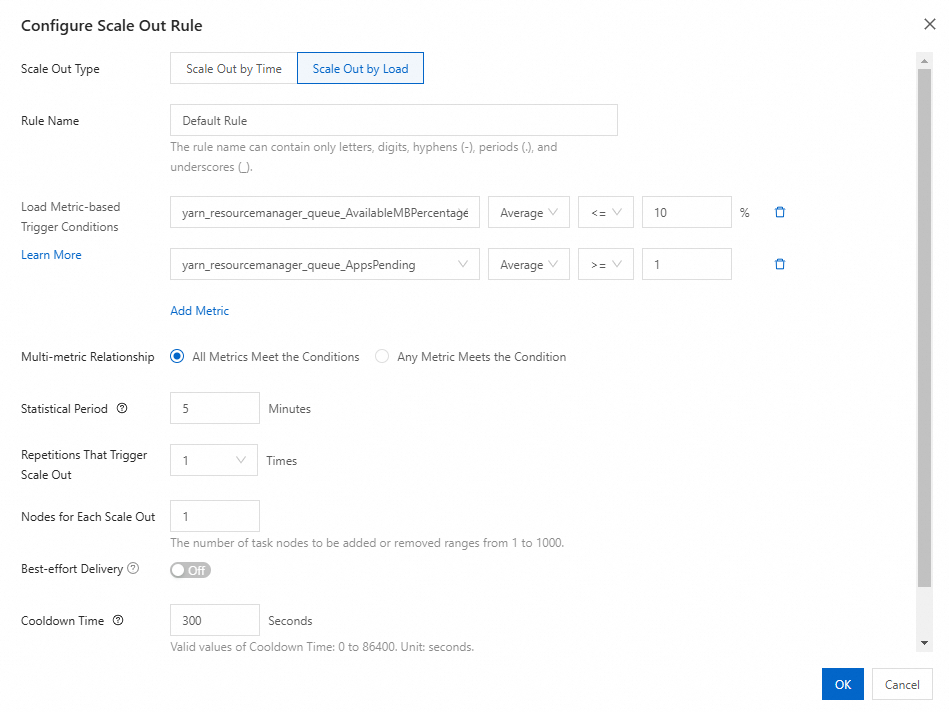

Load-based scaling monitors cluster load metrics and triggers scaling when thresholds are crossed. After configuration, click OK, then click Save and Apply in the Configure Auto Scaling panel.

1. Select cluster load metrics

On the Metric Monitoring subtab of the Monitoring tab, select YARN-HOME from the Dashboard drop-down list. Observe how metrics change across different periods and business workloads.

Select metrics whose values are inversely related to cluster capacity — after a scaling activity, metric values should decrease as the number of instances increases.

The following YARN metrics are recommended:

| Metric | Service | Description |
|---|---|---|
| yarn_resourcemanager_queue_AvailableMBPercentage | YARN | Percentage of available memory to total memory in the root queue |
| yarn_resourcemanager_queue_AvailableVCores | YARN | Number of available vCPUs in the root queue |
| yarn_resourcemanager_queue_AvailableMB | YARN | Available memory in the root queue (MB) |
| yarn_resourcemanager_queue_AppsPending | YARN | Number of pending tasks in the root queue |
| yarn_resourcemanager_queue_PendingContainers | YARN | Number of containers waiting to be allocated in the root queue |
| yarn_resourcemanager_queue_AvailableVCoresPercentage | YARN | Percentage of available vCPUs in the root queue |

For your first load-based rule, use pending-related metrics (such as yarn_resourcemanager_queue_AppsPending) for scale-out rules and available-related metrics for scale-in rules.

Example: Set a scale-out rule to trigger when yarn_resourcemanager_queue_AppsPending is greater than or equal to 1 over 60 seconds. When the condition is met, one node is added and the number of pending tasks decreases.
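
The trigger condition in this example can be sketched as a threshold check over the samples collected in one statistical period. How EMR aggregates samples within the period is not stated here, so the use of the average below is an assumption, and the function name is hypothetical.

```python
from statistics import mean


def scale_out_triggered(samples, threshold=1.0, aggregate=mean):
    """Return True if the aggregated metric value meets or exceeds the threshold.

    `samples` holds the yarn_resourcemanager_queue_AppsPending values observed
    within one statistical period (60 seconds in the example above).
    Aggregating by average is an assumption, not documented behavior.
    """
    if not samples:
        return False  # no data in the period, so do not trigger
    return aggregate(samples) >= threshold


print(scale_out_triggered([0, 0, 0]))  # False
print(scale_out_triggered([2, 1, 1]))  # True
```

Passing a different `aggregate`, such as `max`, shows how sensitive the rule is to the aggregation choice: `[0, 1, 0]` does not trigger with the average but does trigger with the maximum.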

2. Configure the rule parameters

| Parameter | Description | Recommendation |
|---|---|---|
| Trigger conditions | One or more metric thresholds with AND or OR logic | Use multiple conditions with AND to reduce false triggers |
| Statistical Period | Time window for evaluating metric values | Set to 1 minute. Larger values may trigger scaling on stale data. |
| Cooldown Time | Minimum time between scaling activities | Set to 100–300 seconds for scale-out rules. Adding nodes takes an average of 1.55 minutes (or 1.83 minutes for 100 nodes), so a cooldown lets new nodes stabilize before re-evaluating. |
| Number of nodes | Instances to add or remove per activity | Estimate based on existing node capacity and expected workload growth |
| Effective time period | Hours within a day when the rule is active | Configure different rules for different time periods as needed |
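
As an illustration of how the trigger conditions and the cooldown interact, the following Python sketch combines several metric thresholds with AND or OR logic and checks whether the cooldown has elapsed. The `Condition` class, the function names, and the threshold values are hypothetical. Only the metric names come from the table of recommended metrics.

```python
import operator
from dataclasses import dataclass

# Comparison operators a condition may use (an illustrative subset).
OPS = {">=": operator.ge, "<=": operator.le, ">": operator.gt, "<": operator.lt}


@dataclass
class Condition:
    metric: str
    op: str
    threshold: float


def conditions_met(conditions, metrics, logic="AND"):
    """Evaluate every condition against current metric values and combine the results."""
    results = [OPS[c.op](metrics[c.metric], c.threshold) for c in conditions]
    return all(results) if logic == "AND" else any(results)


def cooldown_elapsed(now, last_activity, cooldown_seconds):
    """Return True if no scaling has happened yet or the cooldown has passed."""
    return last_activity is None or now - last_activity >= cooldown_seconds


# Hypothetical thresholds, chosen only for this example.
conditions = [
    Condition("yarn_resourcemanager_queue_AppsPending", ">=", 1),
    Condition("yarn_resourcemanager_queue_AvailableMBPercentage", "<=", 10),
]
metrics = {
    "yarn_resourcemanager_queue_AppsPending": 3,
    "yarn_resourcemanager_queue_AvailableMBPercentage": 40,
}

print(conditions_met(conditions, metrics, "AND"))  # False
print(conditions_met(conditions, metrics, "OR"))   # True
print(cooldown_elapsed(now=250, last_activity=100, cooldown_seconds=200))  # False
```

With AND, pending tasks alone do not trigger a scale-out while 40% of memory is still available, which is the kind of false trigger the recommendation aims to avoid.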

3. Set node group limits

The Limits on Node Quantity of Current Node Group section defines the node count boundaries:

- Maximum Number of Instances: upper limit for the node group, preventing indefinite scale-out.
- Minimum Number of Instances: lower limit; if instances are released unexpectedly, the system adds them back to meet this minimum.
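
The effect of these two limits can be sketched as clamping the requested node count to a range. This is a simplified model of the behavior described above, and the function and parameter names are hypothetical.

```python
def target_node_count(current, delta, minimum, maximum):
    """Apply a scaling change, then clamp the result to the node group limits.

    `delta` is positive for scale-out and negative for scale-in.
    """
    return max(minimum, min(maximum, current + delta))


print(target_node_count(current=8, delta=5, minimum=2, maximum=10))   # 10
print(target_node_count(current=3, delta=-5, minimum=2, maximum=10))  # 2
print(target_node_count(current=1, delta=0, minimum=2, maximum=10))   # 2
```

The last call models the case where instances were released unexpectedly: with no scaling change requested, the count is still raised back to the minimum.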

4. Tune after initial deployment

After the rule is active, review metric trends and scaling activity records to adjust the configuration:

- Too many scaling events, with added nodes idling and then being removed: add more metric conditions using the AND operator, or increase the Cooldown Time value.
- Scale-out too slow or insufficient: increase the number of instances added per scale-out activity.
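
One way to spot the first symptom when reviewing scaling activity records is to count scale-out activities that are quickly followed by a scale-in. The sketch below assumes the records have been exported as `(timestamp_seconds, kind)` tuples sorted by time. That record format, the function name, and the 600-second window are all assumptions made for illustration.

```python
def count_flaps(activities, window_seconds=600):
    """Count scale-out activities followed by a scale-in within `window_seconds`.

    `activities` is a time-sorted list of (timestamp_seconds, kind) tuples,
    where kind is "scale_out" or "scale_in" (a hypothetical export format).
    """
    flaps = 0
    for (t_prev, k_prev), (t_next, k_next) in zip(activities, activities[1:]):
        if (
            k_prev == "scale_out"
            and k_next == "scale_in"
            and t_next - t_prev <= window_seconds
        ):
            flaps += 1
    return flaps


history = [
    (0, "scale_out"),
    (300, "scale_in"),    # removed 5 minutes after being added: counted
    (4000, "scale_out"),
    (9000, "scale_in"),   # removed much later: not counted
]
print(count_flaps(history))  # 1
```

A count that grows over time suggests that the scale-out conditions are too loose or that the cooldown is too short.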

What's next

- Configure custom auto scaling rules: define advanced rule parameters and review all available cluster load metrics.