NIC multi-queue allows you to configure multiple transmit and receive queues on a network interface. Each queue can be processed by a different CPU core. Its purpose is to improve network I/O throughput and reduce latency by processing network packets in parallel across multiple CPU cores.

Why use NIC multi-queue

A traditional single-queue network interface relies on one CPU core to process all packets. This can lead to CPU overload, increased latency, and packet loss. Modern servers have multi-core CPUs, and NIC multi-queue distributes network traffic across these cores for more efficient resource utilization.

Under the same network PPS and bandwidth conditions, tests show that using two queues instead of one improves network performance by 50% to 100%. Using four queues provides even larger gains. NIC multi-queue offers the following benefits:

- Better utilization of multi-core CPU architecture: Distributes network traffic across multiple CPU cores for a more balanced load and higher CPU utilization.

- Higher throughput: Processing multiple packets simultaneously significantly increases network throughput, especially under high loads.

- Lower latency: Reduces congestion by distributing packets across multiple queues.

- Reduced packet loss: Mitigates packet loss caused by an overloaded single queue during periods of high traffic.

Although NIC multi-queue offers these benefits, improper configuration can decrease performance or cause other issues. For example, incorrect settings for the queue count or CPU affinity can cause unnecessary context-switching overhead. Setting the queue count too low can underutilize your hardware resources.

Typically, when an Elastic Network Interface (ENI) is attached to an instance, its queue count is automatically set to the default value for that instance type, and this setting takes effect within the operating system. If you need to manually adjust the queue count for the ENI, you should first carefully consider your specific use case and hardware conditions to determine the optimal configuration.

How NIC multi-queue works

- Queue architecture

  An Elastic Network Interface (ENI) supports multiple Combined queues. Each Combined queue is processed by an independent CPU core, enabling parallel packet processing, reducing lock contention, and fully utilizing multi-core performance.

  Receive (RX) and Transmit (TX) queues are the two types of queues used for processing packets. Each Combined queue consists of one RX queue and one TX queue:

  - RX queue: An RX queue is used to process incoming data packets from the network. When a packet arrives, the network interface distributes it to a specific RX queue based on a distribution strategy, such as round-robin or flow-based hashing.

  - TX queue: A TX queue is used to manage outgoing data packets. An application places generated packets into a TX queue, and the network interface then sends them based on factors like sequence or priority.

- IRQ affinity support

  Each queue is associated with an independent interrupt. IRQ affinity distributes interrupt handling across different CPU cores to prevent any single core from being overloaded.

  IRQ affinity is enabled by default on all public images except for Red Hat Enterprise Linux (RHEL). For more information, see Configure IRQ affinity.
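To make the idea of spreading queue interrupts across cores concrete, the following is a minimal sketch of a round-robin plan. The helper names `queue_to_cpu` and `cpu_mask` are hypothetical, and the queue and core counts are made-up values. The mask is a hexadecimal CPU bitmask of the kind Linux accepts in /proc/irq/<IRQ>/smp_affinity; the sketch only prints a plan and does not change any system setting.

```bash
# Hypothetical helper: assign queue indexes to cores in round-robin order.
queue_to_cpu() {
  local queue="$1" ncpus="$2"
  echo "$((queue % ncpus))"
}

# Hypothetical helper: hexadecimal bitmask that selects one core.
# The sketch handles cores 0..31 only; larger systems use comma-separated
# 32-bit groups, which are out of scope here.
cpu_mask() {
  local cpu="$1"
  if [ "$cpu" -lt 0 ] || [ "$cpu" -gt 31 ]; then
    echo "cpu index $cpu is outside the range this sketch handles" >&2
    return 1
  fi
  printf '%x\n' "$((1 << cpu))"
}

# Print a plan for 4 queues on 2 cores (illustrative numbers).
for q in 0 1 2 3; do
  cpu=$(queue_to_cpu "$q" 2)
  echo "queue $q -> cpu $cpu (mask $(cpu_mask "$cpu"))"
done
```

With more queues than cores, several queues share a core, which is one reason the queue count and the core count are usually tuned together.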

Limitations

- Only some instance types support NIC multi-queue. For more information, see Instance type families. If the value in the NIC queues column is greater than 1, the instance type supports NIC multi-queue.

- For instance types that support NIC multi-queue, the feature is enabled automatically after you attach an ENI to an instance.

- The queue count listed for an instance type represents the maximum queues per ENI supported by that type.

- You can call the DescribeInstanceTypes API operation to query queue-related metrics for an instance type family by specifying the InstanceTypeFamily parameter:

  - Default queue count: The PrimaryEniQueueNumber response parameter indicates the default queue count for the primary ENI. The SecondaryEniQueueNumber parameter indicates the default queue count for a secondary ENI.

  - Maximum queues per ENI: The MaximumQueueNumberPerEni response parameter indicates the maximum queues per ENI allowed for the instance type family.

  - Total queue quota: The TotalEniQueueQuantity response parameter indicates the total queue quota allowed for the instance type family.

- Some older public images with kernel versions earlier than 2.6 may not support NIC multi-queue. We recommend that you use the latest public images.

View ENI queue count

Console

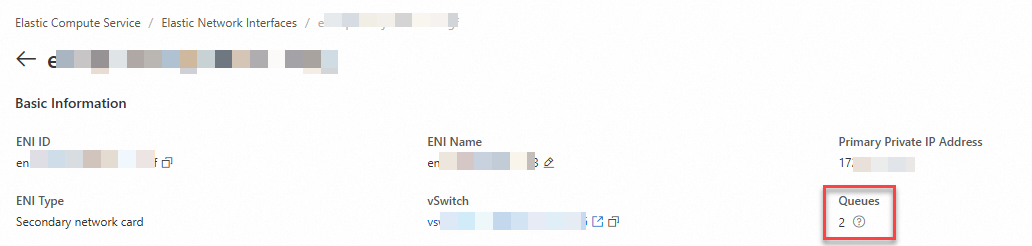

- In the top navigation bar, select the region and resource group of the resource that you want to manage.

- Click the ID of the target secondary ENI to go to its details page.

- In the Basic Information section, find the Queues parameter. The value indicates the current queue count of the ENI.

  - If you modified the queue count for the ENI, the modified value is displayed.

  - If you have not modified the queue count for the ENI:

    - If the ENI is not attached to an instance, no value is displayed.

    - If the ENI is attached to an instance, the default queue count for the instance type is displayed.

API

You can call the DescribeNetworkInterfaceAttribute operation to view the queue count of an ENI. The QueueNumber parameter in the response indicates the queue count.

- If you modified the queue count for the ENI, the modified value is returned.

- If you have not modified the queue count for the ENI:

  - If the ENI is not attached to an instance, no value is returned.

  - If the ENI is attached to an instance, the default queue count for the instance type is returned.

Within the instance

-

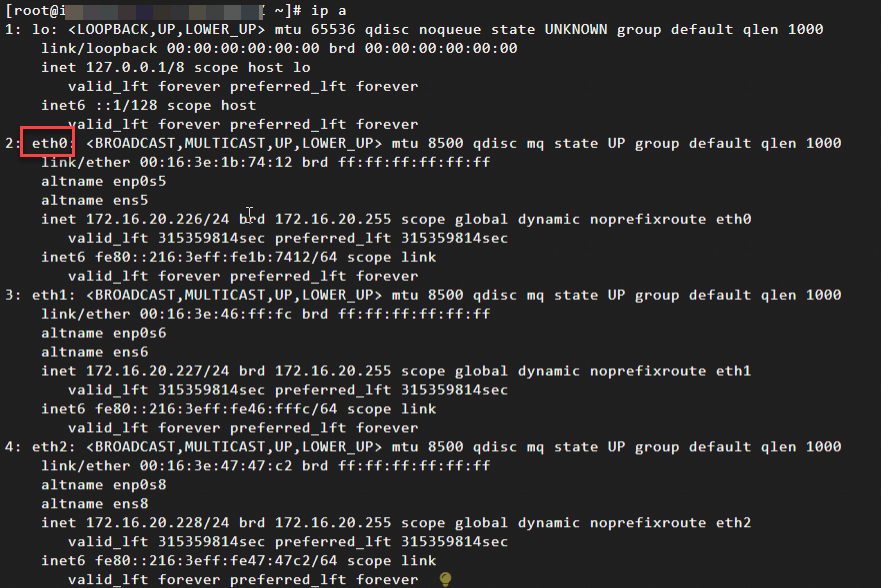

Connect to a Linux instance.

NoteFor Windows instances, you can view the ENI queue count in the console or by calling an API operation.

For more information, see Log on to a Linux instance by using Workbench.

-

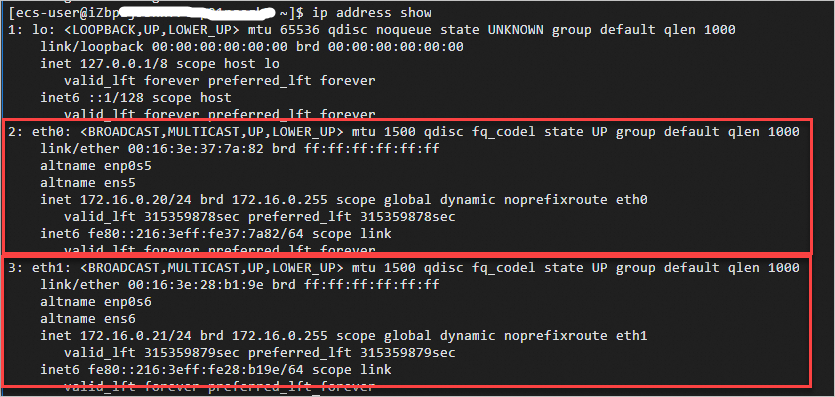

Run the

ip acommand to view network configuration information.

-

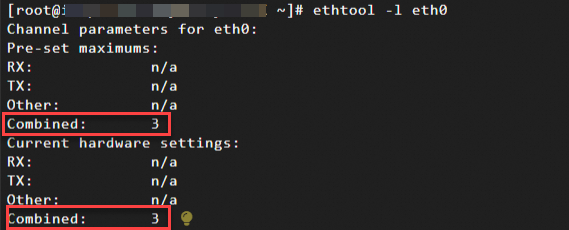

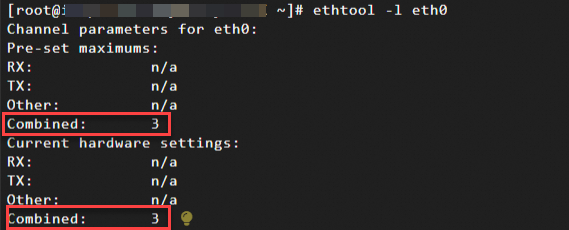

Run the following command to check if the primary ENI eth0 supports NIC multi-queue.

This example uses the primary ENI. To check a secondary ENI, replace the network interface identifier with eth1, eth2, or another value.

ethtool -l eth0Check the command output to determine if NIC multi-queue is supported:

-

If the value of "Combined" under "Pre-set maximums" is greater than 1, the ENI supports NIC multi-queue. This value indicates the maximum queue count supported by the ENI.

-

The value of "Combined" under "Current hardware settings" indicates the current queue count in use.

In this example, the output indicates that the ENI supports a maximum of three combined (RX+TX) queues and is currently using three queues.

-

To modify the maximum number of queues that an ENI supports, see Modify the queue count for an ENI.

-

To modify the number of queues in use by the OS, see Modify the queue count used by the OS.

-
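The two "Combined" values described above can also be read with a script. The following is a minimal sketch, assuming the usual `ethtool -l` text layout in which a "Combined:" line follows each of the "Pre-set maximums:" and "Current hardware settings:" headings. The function name `combined_counts` and the embedded sample output are illustrative and were not captured from a real instance.

```bash
# Hypothetical helper: read `ethtool -l <iface>` output on stdin and print
# "<maximum> <current>" for the Combined channel count.
combined_counts() {
  awk '
    /^Pre-set maximums:/          { section = "max" }
    /^Current hardware settings:/ { section = "cur" }
    /^Combined:/                  { value[section] = $2 }
    END { print value["max"], value["cur"] }
  '
}

# Illustrative sample that matches the three-queue example in the text.
sample_output() {
  cat <<'EOF'
Channel parameters for eth0:
Pre-set maximums:
RX:		0
TX:		0
Other:		0
Combined:	3
Current hardware settings:
RX:		0
TX:		0
Other:		0
Combined:	3
EOF
}

sample_output | combined_counts
```

On an instance, the same function can be fed live output, for example `ethtool -l eth0 | combined_counts`.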

Modify ENI queue count

Although an ENI's queue count is set to a default value when attached to an instance, you can manually adjust it through the console or by using the API.

- You can modify the queue count for an ENI only when it is Available, or when it is InUse and its attached instance is Stopped.

- The queue count cannot exceed the maximum queues per ENI for the instance type.

- The total queue count of all ENIs on an instance cannot exceed the total queue quota for the instance type.
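The two numeric limits above can be checked before you change anything. The following is a minimal sketch with a hypothetical helper, `check_queue_plan`, that takes the maximum queues per ENI, the total queue quota, and the planned queue count of each ENI on the instance. The lower bound of 1 and the sample numbers are assumptions made for illustration; the real limits come from the values reported for your instance type.

```bash
# Hypothetical helper.
# Usage: check_queue_plan MAX_PER_ENI TOTAL_QUOTA COUNT [COUNT...]
# Succeeds only if every count is within 1..MAX_PER_ENI (lower bound assumed)
# and the sum of all counts does not exceed TOTAL_QUOTA.
check_queue_plan() {
  local max_per_eni="$1" total_quota="$2" total=0 count
  shift 2
  for count in "$@"; do
    if [ "$count" -lt 1 ] || [ "$count" -gt "$max_per_eni" ]; then
      echo "queue count $count is outside 1..$max_per_eni" >&2
      return 1
    fi
    total=$((total + count))
  done
  if [ "$total" -gt "$total_quota" ]; then
    echo "total $total exceeds the quota of $total_quota" >&2
    return 1
  fi
  echo "ok: $total of $total_quota queues used"
}

# Illustrative plan: three ENIs on a type with 8 queues per ENI and a quota of 16.
check_queue_plan 8 16 8 4 4
```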

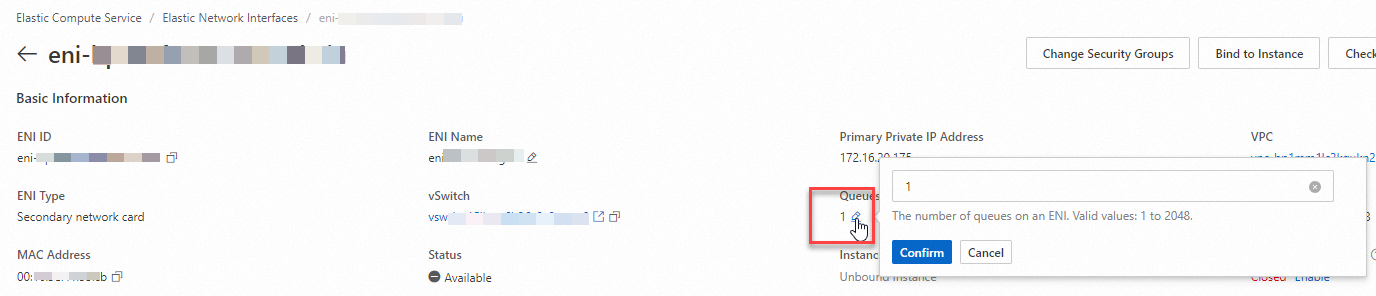

Console

- In the top navigation bar, select the region and resource group of the resource that you want to manage.

- Click the ID of the target secondary ENI to go to its details page.

- Click Modify Queue Count.

- Click OK to complete the modification.

API

You can call the ModifyNetworkInterfaceAttribute operation and set the QueueNumber parameter to modify the queue count of an ENI.

After you modify the queue count for an ENI that is attached to an instance, the new setting takes effect after the instance starts.

Modify OS queue count

After you modify the queue count for an ENI in the console or by using the API, the new setting automatically takes effect within the OS. The number of queues the ENI uses within the OS matches the queue count you set.

You can also tune the number of queues that the ENI actively uses within the OS. This value can be lower than the configured queue count for the ENI.

- Changing the queue count used by the OS inside an instance does not affect the maximum queue count supported by the ENI, and this change does not appear in the console or API responses.

- This change is temporary and does not persist after an instance restart.

The following example shows how to adjust the number of queues used by an ENI on an Alibaba Cloud Linux 3 instance that supports NIC multi-queue.

- Connect to a Linux instance. For more information, see Log on to a Linux instance by using Workbench.

- Run the `ip address show` command to view network configuration information.

- Run the following command to check if the primary ENI eth0 supports NIC multi-queue.

  This example uses the primary ENI. To check a secondary ENI, replace the network interface identifier with eth1, eth2, or another value.

  ethtool -l eth0

- Check the command output to determine if NIC multi-queue is supported:

  - If the value of "Combined" under "Pre-set maximums" is greater than 1, the ENI supports NIC multi-queue. This value indicates the maximum queue count supported by the ENI.

  - The value of "Combined" under "Current hardware settings" indicates the current queue count in use.

  In this example, the output indicates that the ENI supports a maximum of three combined (RX+TX) queues and is currently using three queues.

- Run the following command to set the number of active queues for the primary ENI eth0.

  This example uses the primary ENI. To adjust a secondary ENI, replace the network interface identifier with eth1, eth2, or another value.

  sudo ethtool -L eth0 combined N

  N is the number of queues that you want the ENI to use. N must be less than or equal to the "Combined" value under "Pre-set maximums". In this example, set the number of queues for the primary ENI to 2:

  sudo ethtool -L eth0 combined 2
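Besides rerunning `ethtool -l`, one way to confirm the change is to count the per-queue entries that Linux exposes under /sys/class/net/<interface>/queues (rx-0, rx-1, and so on). The following is a minimal sketch. The function name `count_queues` and the optional third argument are hypothetical, and the sketch assumes that the driver updates these sysfs entries when the active queue count changes.

```bash
# Hypothetical helper.
# Usage: count_queues IFACE KIND [SYSFS_NET_ROOT]
# KIND is "rx" or "tx". SYSFS_NET_ROOT defaults to /sys/class/net and exists
# as a parameter only so the function can be pointed at another directory.
count_queues() {
  local iface="$1" kind="$2" root="${3:-/sys/class/net}"
  local n=0 entry
  for entry in "$root/$iface/queues/$kind"-*; do
    # An unmatched glob stays literal, so count only entries that exist.
    [ -e "$entry" ] && n=$((n + 1))
  done
  echo "$n"
}
```

For example, after the change above, `count_queues eth0 rx` and `count_queues eth0 tx` would each be expected to print 2 under the stated assumption.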

Configure IRQ affinity

When using NIC multi-queue, you typically need to configure IRQ affinity. This process assigns interrupts from different queues to specific CPU cores instead of allowing any core to handle them, which helps reduce CPU contention and improve network performance.

- All public images except for Red Hat Enterprise Linux (RHEL) have IRQ affinity enabled by default and require no additional configuration.

- RHEL images support IRQ affinity for NIC multi-queue, but it is disabled by default. Follow the steps in this topic to configure it.

The following steps show how to use the ecs_mq script to automatically configure IRQ affinity for NIC multi-queue on a Red Hat Enterprise Linux 9.2 image. If your instance does not use an RHEL image, IRQ affinity is enabled by default and requires no configuration.

- Connect to a Linux instance. For more information, see Log on to a Linux instance by using Workbench.

- (Optional) Stop the irqbalance service.

  The irqbalance service dynamically adjusts IRQ affinity, which conflicts with the `ecs_mq` script. We recommend that you stop the irqbalance service.

  systemctl stop irqbalance.service

- Run the following command to download the latest version of the `ecs_mq` automatic configuration script.

  wget https://ecs-image-tools.oss-cn-hangzhou.aliyuncs.com/ecs_mq/ecs_mq_latest.tgz

- Run the following command to decompress the `ecs_mq` script package.

  tar -xzf ecs_mq_latest.tgz

- Run the following command to change the working directory.

  cd ecs_mq/

- Run the following command to run the `ecs_mq` installation script.

  bash install.sh redhat 9

  Note: Replace `redhat` and `9` with the name and major version number of your operating system.

- Run the following command to start the `ecs_mq` service.

  systemctl start ecs_mq

  After the service starts, it automatically enables IRQ affinity.
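To see which cores the queue interrupts are bound to after the service starts, you can read the standard Linux files /proc/interrupts and /proc/irq/<IRQ>/smp_affinity_list. The following is a minimal sketch. The function name `irq_affinity_report` is hypothetical, and the interrupt name pattern in the usage note assumes a virtio network device whose queue interrupts are named like virtio0-input.0; the names on your instance may differ.

```bash
# Hypothetical helper.
# Usage: irq_affinity_report PATTERN [PROC_ROOT]
# Prints one line per interrupt whose name (the last column of
# PROC_ROOT/interrupts) matches PATTERN, together with the cores it may run on.
# PROC_ROOT defaults to /proc.
irq_affinity_report() {
  local pattern="$1" proc="${2:-/proc}" irq name
  awk -v pat="$pattern" '$NF ~ pat { sub(":", "", $1); print $1, $NF }' "$proc/interrupts" |
  while read -r irq name; do
    echo "$name irq=$irq cpus=$(cat "$proc/irq/$irq/smp_affinity_list")"
  done
}
```

For example, `irq_affinity_report 'virtio[0-9]+-(input|output)'` lists the input and output queue interrupts under the naming assumption above. If every line reports the same core, IRQ affinity is probably not taking effect.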

Modifying the NIC queue count and configuring IRQ affinity are different methods for optimizing network performance. You should test different configuration combinations based on your system's actual load. Monitor performance metrics such as throughput and latency. Use this data to allocate queues to different CPU cores and set IRQ affinity to achieve optimal load balancing.