After you deploy a model in a Serverless resource group, you can call it from any DataWorks workspace in the same region. This page shows how to call a deployed large language model (LLM) or vector model from Data Integration and Data Studio.

Prerequisites

Before you begin, ensure that you have:

- A Serverless resource group attached to the DataWorks workspace
- A model service deployed in the Serverless resource group (see Deploy a large language model)

Set up before calling

Before making your first call, complete three steps: verify network connectivity, get the endpoint, and get the API key.

1. Verify network connectivity

The virtual private cloud (VPC) attached to the resource group you use for calling must be in the list of VPCs allowed to connect to the model service.

- Open the DataWorks resource group list and switch to the region where the resource group is located.
- In the Actions column for the target resource group, click Network Settings to open the VPC Binding page.
- Under Data Scheduling & Data Integration, note the vSwitch CIDR Block.

Important: When you call a model, the resource group uses the first VPC listed in its network configuration. Make sure that this VPC is in the model service's list of connectable VPCs.

- To view or update the list of connectable VPCs for the model service, see Manage model networks.
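The connectivity requirement above can be checked from inside a node before you attempt a full model call. The following Python sketch only tries to open a TCP connection to the endpoint host. It assumes that the endpoint serves plain HTTP on port 80, as its `http://` scheme implies, and `can_connect` is a hypothetical helper name. A successful connection shows that the network path is open; it says nothing about whether the API key is valid.

```python
import socket


def can_connect(host: str, port: int = 80, timeout: float = 5.0) -> bool:
    """Return True if a TCP connection to host:port succeeds within `timeout` seconds.

    Hypothetical helper: assumes the model endpoint listens on plain HTTP (port 80).
    """
    try:
        # create_connection resolves the host name and performs the TCP handshake.
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers DNS resolution failures, refused connections, and timeouts.
        return False
```

For example, run `print(can_connect("<model-service-id>.<region>.dataworks-model.aliyuncs.com"))` in a Python node on the resource group, with the placeholder replaced by your endpoint host. A `False` result usually points to the network configuration, such as the VPC binding, rather than to the request body or the API key.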

2. Get the endpoint

After deployment, an internal same-region endpoint is automatically generated. The endpoint follows this format:

```
http://<model-service-id>.<region>.dataworks-model.aliyuncs.com
```

To find your endpoint, see View a model service.
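Because the Shell and Python examples on this page prepend `http://` to `<endpoint>` themselves, it is easy to end up with a doubled scheme. The following Python sketch is one way to normalize the value you copied into a base URL and derive the two request URLs used on this page (`/v1/completions` and `/v1/embeddings`). The helper names are hypothetical, and the format check only encodes the pattern shown above.

```python
import re

# Pattern taken from the documented endpoint format:
# http://<model-service-id>.<region>.dataworks-model.aliyuncs.com
ENDPOINT_PATTERN = re.compile(
    r"^http://[^./]+\.[^./]+\.dataworks-model\.aliyuncs\.com$"
)


def normalize_endpoint(raw: str) -> str:
    """Return 'http://<host>' whether or not `raw` already includes the scheme."""
    value = raw.strip().rstrip("/")
    if value.startswith("http://"):
        value = value[len("http://"):]
    return "http://" + value


def api_urls(raw: str) -> dict:
    """Build the completion and embedding URLs used by the examples on this page."""
    base = normalize_endpoint(raw)
    if not ENDPOINT_PATTERN.match(base):
        raise ValueError(f"Unexpected endpoint format: {base}")
    return {
        "completions": base + "/v1/completions",
        "embeddings": base + "/v1/embeddings",
    }
```

With this approach, you pass whatever you copied from the console to `api_urls` and never concatenate `http://` by hand.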

3. Get the API key

To get the API key for authentication, see Manage API keys.

API keys start with DW.
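In every example on this page, the API key is sent as the raw value of the `Authorization` header, with no `Bearer` prefix. Rather than pasting the key into node code, you might read it from an environment variable if your node environment provides one. The following Python sketch assumes that; the variable name `DW_MODEL_API_KEY` and both helper names are hypothetical, and the prefix check only encodes the rule that API keys start with `DW`.

```python
import os


def build_headers(api_key: str) -> dict:
    """Build request headers in the same form as the examples on this page."""
    key = api_key.strip()
    if not key.startswith("DW"):
        # DataWorks model service API keys start with "DW".
        raise ValueError("API key should start with 'DW'; check Manage API keys.")
    return {
        "Authorization": key,  # Raw key, exactly as in the curl and Python examples.
        "Content-Type": "application/json",
    }


def headers_from_env(var_name: str = "DW_MODEL_API_KEY") -> dict:
    """Read the key from a hypothetical environment variable instead of hardcoding it."""
    api_key = os.environ.get(var_name)
    if not api_key:
        raise KeyError(f"Environment variable {var_name} is not set")
    return build_headers(api_key)
```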

Call a large language model service

Call an LLM service from Data Integration or Data Studio to perform intelligent data processing.

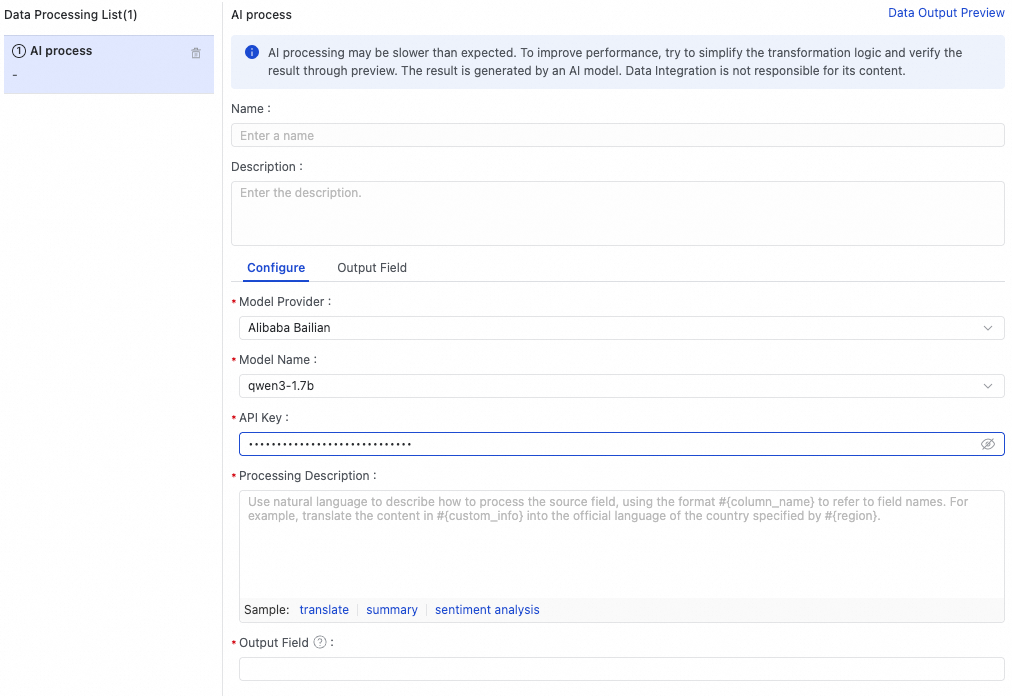

Call from Data Integration

In a single-table offline sync task, use the LLM service to run AI-assisted processing on data during synchronization.

Call from Data Studio

Data Studio offers three ways to call an LLM service. Use the large language model node for the simplest setup, or Shell and Python nodes for custom scripting.

Use a large language model node

The large language model node in Data Studio (new version) provides a dedicated interface for LLM services: configure the model service in the node, then call the model directly.

Use a Shell node

This example calls an LLM service from a Shell node to answer a question.

- Create a Shell node and add the following command. Replace `<endpoint>` and `<api-key>` with the values from Set up before calling. Because the command already includes `http://`, use the endpoint without that prefix for `<endpoint>`.

```shell
curl -X POST http://<endpoint>/v1/completions \
  -H "Authorization: <api-key>" \
  -H "Content-Type: application/json" \
  -d '{"prompt": "What are the differences and connections between AI, machine learning, and deep learning?", "stream": false, "max_tokens": 1024}' \
  -v
```

- In the Run Configuration section on the right, select the resource group whose network connectivity to the model service is configured.
- Click Run.

Schedule or publish this node:

- Scheduling: In the Scheduling section, select the same resource group and configure scheduling properties under Scheduling Policies.
- Publishing: Click the publish icon to publish the node. A node runs on a schedule only after it is published to the production environment. For details, see Node scheduling configuration and Node publishing.

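For reference, the same non-streaming request can be sent from Python using only the standard library, so it does not depend on a custom image. This sketch assumes that the response follows the OpenAI-compatible completions schema, where the generated text is in `choices[0].text` (the same field the streaming Python example on this page reads). `build_completion_body`, `extract_completion_text`, and `complete` are hypothetical helper names. Note that `stream` is sent as a JSON boolean, not as a string.

```python
import json
import urllib.request


def build_completion_body(prompt: str, max_tokens: int = 1024) -> bytes:
    """Serialize a non-streaming completion request; `stream` is a JSON boolean."""
    return json.dumps(
        {"prompt": prompt, "stream": False, "max_tokens": max_tokens}
    ).encode("utf-8")


def extract_completion_text(response_json: dict) -> str:
    """Return the generated text, assuming the OpenAI-compatible completions schema."""
    choices = response_json.get("choices") or []
    return choices[0].get("text", "") if choices else ""


def complete(base_url: str, api_key: str, prompt: str, timeout: float = 60.0) -> str:
    """POST to <base_url>/v1/completions and return the generated text."""
    request = urllib.request.Request(
        base_url.rstrip("/") + "/v1/completions",
        data=build_completion_body(prompt),
        headers={"Authorization": api_key, "Content-Type": "application/json"},
        method="POST",
    )
    # urlopen raises urllib.error.HTTPError for non-2xx responses such as 401.
    with urllib.request.urlopen(request, timeout=timeout) as response:
        return extract_completion_text(json.load(response))
```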

Use a Python node

This example calls an LLM service from a Python node with streaming output.

Step 1: Create a custom image with the `requests` library.

This example requires the Python requests library. Create a custom image based on an official DataWorks image using these parameters:

| Custom image parameter | Configuration |
|---|---|
| Image Name/ID | Select a Python-compatible image from the DataWorks image list |
| Supported Task Type | Python |
| Package | Python 3: `requests`; Script: `/home/tops/bin/pip3 install 'urllib3<2.0'` |
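After the custom image is built, you can confirm that it contains what the table specifies by running a short check in a Python node that uses the image. The following sketch assumes the image's Python is 3.8 or later, because it reads installed versions with the standard-library `importlib.metadata` module. It also checks that urllib3 is below 2.0, matching the `'urllib3<2.0'` pin in the table. That pin is presumably for compatibility with the image's OpenSSL version, since urllib3 2.x requires OpenSSL 1.1.1 or later. The helper names are hypothetical.

```python
from importlib.metadata import PackageNotFoundError, version


def major_version(version_string: str) -> int:
    """Return the leading integer of a version string such as '1.26.18'."""
    return int(version_string.split(".")[0])


def check_image(packages=("requests", "urllib3")) -> dict:
    """Map each package name to its installed version, or None if it is missing."""
    found = {}
    for name in packages:
        try:
            found[name] = version(name)
        except PackageNotFoundError:
            found[name] = None
    return found


if __name__ == "__main__":
    installed = check_image()
    print(installed)
    urllib3_version = installed.get("urllib3")
    if urllib3_version is None or major_version(urllib3_version) >= 2:
        print("urllib3 is missing or not pinned below 2.0")
```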

Step 2: Create a Python node with the following code.

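Create a Python node and add this code. Replace `<endpoint>` and `<api-key>` with the values from Set up before calling. Because the code already includes `http://`, use the endpoint without that prefix for `<endpoint>`.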

```python
import json
import time

import requests

# Replace these values with your endpoint host and API key.
httpUrl = "http://<endpoint>"
apikey = "<api-key>"

url = httpUrl + "/v1/completions"
headers = {
    "Authorization": apikey,
    "Content-Type": "application/json",
}
data = {
    "prompt": "Please write a poem about spring",
    "stream": True,
    "max_tokens": 512,
}

try:
    response = requests.post(url, headers=headers, json=data, stream=True)
    response.raise_for_status()

    full_text = ""  # Accumulate the full response so that no chunk is lost.

    # Each streamed chunk arrives as a line of the form "data: {...}".
    for line in response.iter_lines():
        if not line:
            continue  # Skip empty lines.
        line_str = line.decode("utf-8").strip()
        if line_str.startswith("data:"):
            data_str = line_str[5:].strip()  # Remove the "data:" prefix.
            if data_str == "[DONE]":
                print("\n[Stream response ended]")
                break
            try:
                parsed = json.loads(data_str)
                choices = parsed.get("choices", [])
                if choices:
                    delta_text = choices[0].get("text", "")
                    if delta_text:
                        full_text += delta_text
                        for char in delta_text:
                            print(char, end="", flush=True)
                            time.sleep(0.03)  # Typewriter effect.
            except json.JSONDecodeError:
                continue  # Skip chunks that are not valid JSON.

    print(f"\n\n[Full response length: {len(full_text)} characters]")
    print(f"[Full content]:\n{full_text}")

except requests.exceptions.RequestException as e:
    print(f"Request failed: {e}")
except Exception as e:
    print(f"Error: {e}")
```

Step 3: Run the node.

In the Run Configuration section on the right, select the resource group with network connectivity configured and the custom Image from Step 1.

Click Run.

Schedule or publish this node:

- Scheduling: In the Scheduling section, select the same resource group and image, then configure scheduling properties under Scheduling Policies.
- Publishing: Click the publish icon to publish the node. A node runs on a schedule only after it is published to the production environment. For details, see Node scheduling configuration and Node publishing.

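The streaming loop in the Python example above mixes parsing and printing. If you reuse it in other nodes, you might factor the per-line logic into a pure function that is easy to test. This is a sketch that assumes the same framing that example handles: `data: {...}` lines whose payload carries `choices[0].text`, terminated by `data: [DONE]`. `parse_stream_line` and `collect_text` are hypothetical names.

```python
import json
from typing import Iterable, Optional, Tuple


def parse_stream_line(raw: bytes) -> Tuple[bool, Optional[str]]:
    """Parse one streamed line and return (done, text).

    `done` is True on the "[DONE]" sentinel; `text` is the chunk's
    choices[0].text, or None if the line carries no text.
    """
    line = raw.decode("utf-8").strip()
    if not line.startswith("data:"):
        return False, None  # Blank keep-alive lines and other fields.
    payload = line[len("data:"):].strip()
    if payload == "[DONE]":
        return True, None
    try:
        chunk = json.loads(payload)
    except json.JSONDecodeError:
        return False, None  # Skip malformed chunks, as the original loop does.
    if not isinstance(chunk, dict):
        return False, None
    choices = chunk.get("choices") or []
    text = choices[0].get("text") if choices else None
    return False, text or None


def collect_text(lines: Iterable[bytes]) -> str:
    """Concatenate chunk texts until [DONE], e.g. over response.iter_lines()."""
    parts = []
    for raw in lines:
        done, text = parse_stream_line(raw)
        if done:
            break
        if text:
            parts.append(text)
    return "".join(parts)
```

In a node, `collect_text(response.iter_lines())` would replace the loop when you only need the final text and not the typewriter output.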

Call a vector model service

Call a vector model service from Data Integration or Data Studio to convert text into embeddings. The following examples use BGE-M3 deployed in a DataWorks resource group.

Call from Data Integration

In a single-table offline sync task, use the model service to run vectorization on data during synchronization.

Call from Data Studio

Use a large language model node

The large language model node in Data Studio (new version) supports vector models. Configure the model service in the node and call the vector model directly.

Use a Shell node

This example converts text to a vector using BGE-M3 from a Shell node.

- Create a Shell node and add the following command. Replace `<endpoint>` and `<api-key>` with the values from Set up before calling. Because the command already includes `http://`, use the endpoint without that prefix for `<endpoint>`.

```shell
curl -X POST "http://<endpoint>/v1/embeddings" \
  -H "Authorization: <api-key>" \
  -H "Content-Type: application/json" \
  -d '{
    "input": "This is a piece of text that needs to be converted into a vector",
    "model": "bge-m3"
  }'
```

- In the Run Configuration section on the right, select the resource group whose network connectivity to the model service is configured.
- Click Run.

Schedule or publish this node:

- Scheduling: In the Scheduling section, select the same resource group and configure scheduling properties under Scheduling Policies.
- Publishing: Click the publish icon to publish the node. A node runs on a schedule only after it is published to the production environment. For details, see Node scheduling configuration and Node publishing.

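The curl command prints the raw JSON response. Assuming that the service returns the OpenAI-compatible embeddings schema, a `data` list whose items each carry an `embedding` array, a sketch like the following extracts the vector so that you can, for example, check its dimension. `extract_embedding` is a hypothetical helper. Confirm the exact response fields against the OpenAI-compatible server reference before relying on them.

```python
import json
from typing import List


def extract_embedding(response_text: str, position: int = 0) -> List[float]:
    """Return the embedding vector at `position` from an embeddings response body.

    Assumes the OpenAI-compatible shape: {"data": [{"embedding": [...], ...}], ...}.
    """
    body = json.loads(response_text)
    items = body.get("data") or []
    if not items:
        raise ValueError(f"No embeddings in response: {response_text[:200]}")
    return [float(x) for x in items[position]["embedding"]]
```

For example, `len(extract_embedding(raw))` gives the vector dimension, where `raw` is the response text returned by the service.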

Use a Python node

This example converts text to a vector using BGE-M3 from a Python node.

Step 1: Create a custom image with the `requests` library.

Follow the same steps as in the LLM Python node example to create a custom image with the requests library installed.

Step 2: Create a Python node with the following code.

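Create a Python node and add this code. Replace `<endpoint>` and `<api-key>` with the values from Set up before calling. Because the code already includes `http://`, use the endpoint without that prefix for `<endpoint>`.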

```python
import json

import requests

# Replace these values
api_url = "http://<endpoint>/v1/embeddings"
token = "<api-key>"

headers = {
    "Authorization": token,
    "Content-Type": "application/json",
}
payload = {
    "input": "Test text",
    "model": "bge-m3",
}

try:
    response = requests.post(api_url, headers=headers, data=json.dumps(payload))
    print("Response status code:", response.status_code)
    print("Response content:", response.text)
except Exception as e:
    print("Request exception:", e)
```

Step 3: Run the node.

In the Run Configuration section on the right, select the resource group with network connectivity configured and the custom Image from Step 1.

Click Run.

Schedule or publish this node:

- Scheduling: In the Scheduling section, select the same resource group and image, then configure scheduling properties under Scheduling Policies.
- Publishing: Click the publish icon to publish the node. A node runs on a schedule only after it is published to the production environment. For details, see Node scheduling configuration and Node publishing.

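OpenAI-compatible embeddings endpoints generally also accept a list of strings as `input` and return one item per string, each with an `index` field. Assuming that the BGE-M3 service behaves the same way (check the OpenAI-compatible server reference before relying on it), the Python example above could be extended to embed several texts in one request. This sketch uses only the standard library, so it does not depend on the custom image. `embed_batch` and `order_by_index` are hypothetical names.

```python
import json
import urllib.request
from typing import Dict, List


def order_by_index(body: Dict) -> List[List[float]]:
    """Return embeddings in input order, using each item's 'index' field."""
    items = sorted(body.get("data", []), key=lambda item: item["index"])
    return [item["embedding"] for item in items]


def embed_batch(api_url: str, api_key: str, texts: List[str],
                model: str = "bge-m3", timeout: float = 60.0) -> List[List[float]]:
    """POST several texts in one request and return one vector per text.

    `api_url` is the full embeddings URL, e.g. "http://<endpoint>/v1/embeddings".
    Assumes the server accepts a list for `input` (OpenAI-compatible behavior).
    """
    request = urllib.request.Request(
        api_url,
        data=json.dumps({"input": texts, "model": model}).encode("utf-8"),
        headers={"Authorization": api_key, "Content-Type": "application/json"},
        method="POST",
    )
    with urllib.request.urlopen(request, timeout=timeout) as response:
        body = json.load(response)
    vectors = order_by_index(body)
    if len(vectors) != len(texts):
        raise ValueError(f"Expected {len(texts)} embeddings, got {len(vectors)}")
    return vectors
```

Sorting by `index` rather than trusting list order keeps each vector paired with its input text even if the server returns items in a different order.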

Troubleshooting

| Symptom | Likely cause | Resolution |
|---|---|---|
| 401 Unauthorized | Incorrect or missing API key | Verify that the API key starts with DW and that you copied it correctly from Manage API keys. |
| Connection refused or timeout | VPC not bound to the model service | Confirm that the first VPC listed in the resource group's network configuration is in the allowed VPC list. See Manage model networks. |
| Endpoint not reachable | Wrong endpoint format | Make sure the endpoint follows the format `http://<model-service-id>.<region>.dataworks-model.aliyuncs.com`. |
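When a call fails inside a scheduled node, it can help to log which row of the table above most likely applies. The following Python sketch maps a failure to the causes listed in the table. `diagnose` is a hypothetical helper, and its rules simply restate the table: it cannot inspect your VPC configuration, so a connection hint only tells you where to look.

```python
import re
from typing import List, Optional

# Documented endpoint format: http://<model-service-id>.<region>.dataworks-model.aliyuncs.com
ENDPOINT_PATTERN = re.compile(
    r"^http://[^./]+\.[^./]+\.dataworks-model\.aliyuncs\.com$"
)


def diagnose(endpoint: str, api_key: str, status_code: Optional[int] = None,
             connection_failed: bool = False) -> List[str]:
    """Return troubleshooting hints that restate the table rows matching the failure."""
    hints = []
    if not ENDPOINT_PATTERN.match(endpoint.strip().rstrip("/")):
        hints.append("Endpoint does not match "
                     "http://<model-service-id>.<region>.dataworks-model.aliyuncs.com.")
    if status_code == 401 or not api_key.startswith("DW"):
        hints.append("Check the API key: it should start with DW and be copied "
                     "from Manage API keys.")
    if connection_failed:
        hints.append("Connection refused or timed out: confirm that the first VPC of the "
                     "resource group is in the allowed VPC list (Manage model networks).")
    return hints
```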

What's next

For the full list of supported API parameters and request formats, see OpenAI-compatible server.