Use the StarRocks node in DataWorks to develop, schedule, and integrate StarRocks tasks. This topic describes the development workflow.

Overview

StarRocks is a massively parallel processing (MPP) OLAP database that is compatible with the MySQL protocol. It is designed for fast analytics in scenarios such as multi-dimensional analysis, data lake analytics, high-concurrency queries, and real-time analytics.

Prerequisites

Create a Business Flow.

DataStudio organizes nodes into Business Flows, so you must create a Business Flow before you create a node. For more information, see Create a workflow.

Create a StarRocks data source.

Add your StarRocks database as a data source in DataWorks. For more information, see Create a StarRocks data source.

Note: The StarRocks node supports only StarRocks data sources that are created via JDBC.

(Required only if you use a RAM user) Add the RAM user to the workspace as a member and assign it the Develop or Workspace Administrator role. The Workspace Administrator role has extensive permissions, so grant it with caution. For more information, see Add members to a workspace.

Limitations

Supported regions: China (Hangzhou), China (Shanghai), China (Beijing), China (Shenzhen), China (Chengdu), China (Hong Kong), Singapore, Malaysia (Kuala Lumpur), Germany (Frankfurt), US (Silicon Valley), and US (Virginia).

Step 1: Create a StarRocks node

Go to the DataStudio page.

Log on to the DataWorks console. In the top navigation bar, select the desired region. In the left-side navigation pane, choose . On the page that appears, select the desired workspace from the drop-down list and click Go to Data Development.

Right-click the target Business Flow and select .

In the Create Node dialog box, enter the node Name and click Confirm.

Step 2: Develop a StarRocks task

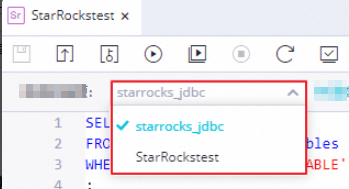

(Optional) Select a StarRocks data source

Select the target data source on the configuration tab. If only one StarRocks data source exists, it is selected by default.

The StarRocks node only supports StarRocks data sources created via JDBC.

Develop SQL code: Simple example

Enter your task code in the editor. This example queries information_schema for all base tables, excluding views:

```sql
SELECT * FROM information_schema.tables
WHERE table_type = 'BASE TABLE';
```

Develop SQL code: Switch the catalog and database

```sql
SET CATALOG catalog_name;   -- Switch the catalog for the current session
USE catalog_name.db_name;   -- Specify the database for the current session
```

If a catalog, database, table, or column name is a reserved keyword, enclose it in backticks (`) to prevent parsing errors.
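A minimal sketch that combines these statements is shown below. It assumes a hypothetical catalog `my_hive_catalog`, database `sales_db`, and table `orders` with hypothetical columns `order` (a reserved keyword) and `amount`; none of these names come from this topic, so replace them with your own. It also shows the alternative of referencing a table by its fully qualified `catalog.database.table` name instead of switching the session.

```sql
-- List the catalogs that are visible to the current user
SHOW CATALOGS;

-- Switch the session to the hypothetical catalog and database
SET CATALOG my_hive_catalog;
USE my_hive_catalog.sales_db;

-- `order` is a reserved keyword, so it is quoted with backticks
SELECT `order`, amount
FROM orders
LIMIT 10;

-- Same query using the fully qualified name; this form does not
-- depend on which catalog or database the session has switched to
SELECT `order`, amount
FROM my_hive_catalog.sales_db.orders
LIMIT 10;
```

The fully qualified form is convenient when a single node touches tables in more than one catalog, because each reference is unambiguous regardless of the session state.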

Develop SQL code: Use scheduling parameters

Use Scheduling Parameters to define dynamic inputs. Define variables in your code as ${variable_name}, then assign values in Scheduling Configuration > Scheduling Parameter on the right. For more information about the supported formats and configuration details, see Supported formats for scheduling parameters and Configure and use scheduling parameters.
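A node can also reference several parameters at once. The sketch below assumes a hypothetical table `ods_orders` whose `dt` column stores dates as yyyymmdd strings, and two hypothetical parameters assigned in Scheduling Parameter as `start_date=$[yyyymmdd-1] end_date=$[yyyymmdd]` (the day before the scheduled date, and the scheduled date). When the node runs, each `${...}` placeholder is replaced with its resolved value before the code is executed.

```sql
-- start_date and end_date are hypothetical scheduling parameters;
-- the placeholders below are replaced with yyyymmdd strings at run time
SELECT dt, COUNT(*) AS order_cnt
FROM ods_orders
WHERE dt >= '${start_date}'
  AND dt <= '${end_date}'
GROUP BY dt
ORDER BY dt;
```

Keeping the date boundaries in parameters rather than hard-coding them lets the same code be rerun or backfilled for other dates without edits.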

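For example, if parameter a is set to $[yyyymmdd], which resolves to the scheduled date of the instance (for a daily task, the current day), the following code queries the tables created on that day: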

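```sql
-- Compare only the date part of CREATE_TIME with the yyyymmdd value of parameter a
SELECT * FROM information_schema.tables
WHERE DATE_FORMAT(CREATE_TIME, '%Y%m%d') = '${a}';
```

Step 3: Configure task scheduling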

To schedule the task, click Scheduling Configuration on the right and configure the properties. For more information, see Overview.

Configure the Rerun Property and Upstream Dependent Node before submitting.

Step 4: Debug the task code

Debug the task to ensure correct execution:

(Optional) Select a debugging resource group and assign parameter values.

Click the  icon in the toolbar. In the Parameters dialog box, select a resource group.

Assign values to any scheduling parameters used in the code. For more information about the parameter assignment logic, see Task debugging process.

Save and run the task code.

Click the  icon to save the code, and then click the  icon to run it.

(Optional) Run a smoke test.

Run a smoke test during or after submission to verify execution in the development environment. For more information, see Perform smoke testing.

Step 5: Submit and publish the task

Submit and publish the node to activate the schedule.

Click the  icon in the toolbar to save the node.

Click the  icon in the toolbar to submit the node.

In the Submit dialog box, enter a Change Description and configure the code review options.

Note: Configure the Rerun Property and Upstream Dependent Node before you submit the node.

Code review helps control code quality. If code review is enabled, the submitted code can be published only after a reviewer approves it. For more information, see Code review.

In a workspace in standard mode, click Publish in the upper-right corner to deploy the task to the production environment. For more information, see Publish tasks.

Next steps

For information about how to monitor and maintain the task in Operation Center, see Manage auto triggered tasks.