A Function Compute node lets you run custom serverless functions as part of a DataWorks data pipeline. Use it when your pipeline needs custom business logic, data transformation, or third-party API calls that go beyond what built-in node types support. Function Compute nodes support periodic scheduling, so you can trigger functions on a timed basis and combine them with other node types to build end-to-end data processing flows.

Prerequisites

Before you begin, make sure that you have:

Function Compute activated. See Activate Function Compute and What is Function Compute?

A service created. A service is the basic resource unit in Function Compute. You configure permissions, logs, and functions at the service level. See Create a service.

An event function created within that service. DataWorks supports only event functions, not HTTP functions. Write code against the Function Compute function interface and deploy it as an event function. See Create a function and Function types.

Limitations

| Limitation | Details |
|---|---|
| Function type | DataWorks can invoke only event functions. HTTP functions are not supported. To schedule a function in DataWorks, create it as an event function. |
| Region availability | China (Hangzhou), China (Shanghai), China (Beijing), China (Shenzhen), China (Chengdu), China (Hong Kong), Singapore, Malaysia (Kuala Lumpur), Indonesia (Jakarta), Germany (Frankfurt), UK (London), US (Silicon Valley), US (Virginia) |

Usage notes

Service list not appearing

When you select a service on the node configuration page, the service list may be empty for the following reasons:

Your account has an overdue payment. Add funds to your account, then refresh the page and try again.

Your account lacks the fc:ListServices permission or the AliyunFCFullAccess policy. Contact your Alibaba Cloud account owner to grant the permission. See Grant permissions to a RAM user.

Long-running functions

If a function runs for more than 1 hour in a DataWorks Function Compute node, you must set the invocation method to asynchronous invocation. For more information about asynchronous invocations in Function Compute, see Asynchronous invocation.
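The 1-hour rule above can be written down as a small decision helper. This is only a sketch for reasoning about the rule: `choose_invocation_method` and `SYNC_LIMIT` are hypothetical names, not part of any DataWorks or Function Compute API, and the expected runtime is something you estimate yourself.

```python
from datetime import timedelta

# Threshold taken from the usage note above: a function that runs for more
# than 1 hour must be invoked asynchronously.
SYNC_LIMIT = timedelta(hours=1)


def choose_invocation_method(expected_runtime: timedelta) -> str:
    """Return the invocation method suggested by the 1-hour rule.

    Hypothetical helper for illustration only.
    """
    if expected_runtime > SYNC_LIMIT:
        return "asynchronous"
    return "synchronous"


print(choose_invocation_method(timedelta(minutes=20)))  # synchronous
print(choose_invocation_method(timedelta(hours=3)))     # asynchronous
```

The helper only encodes the runtime threshold. Other reasons to prefer asynchronous invocation, such as heavy resource use, still need a manual decision.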

RAM user permissions

If a RAM user develops a Function Compute node, grant the user the following permissions:

| Policy type | Permissions |
|---|---|
| System policy | AliyunFCFullAccess, AliyunFCReadOnlyAccess, AliyunFCInvocationAccess |
| Custom policy | fc:GetAsyncTask, fc:StopAsyncTask, fc:GetService, fc:ListServices, fc:GetFunction, fc:InvokeFunction, fc:ListFunctions, fc:GetFunctionAsyncInvokeConfig, fc:ListServiceVersions, fc:ListAliases, fc:GetAlias, fc:ListFunctionAsyncInvokeConfigs, fc:GetStatefulAsyncInvocation, fc:StopStatefulAsyncInvocation |

For the full list of permission policies, see Permission policies and examples (Function Compute 2.0) and Permission policies and examples (Function Compute 3.0).
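If you grant the custom policy rather than a system policy, the actions listed above can be assembled into a RAM policy document. The following is a minimal sketch, assuming the standard RAM policy layout (`Version`, `Statement`, `Effect`, `Action`, `Resource`). The `build_policy` helper is hypothetical, and `"Resource": "*"` is an assumption made for brevity that you would normally narrow to specific services and functions.

```python
import json

# Actions copied from the "Custom policy" row of the permissions table.
ACTIONS = [
    "fc:GetAsyncTask",
    "fc:StopAsyncTask",
    "fc:GetService",
    "fc:ListServices",
    "fc:GetFunction",
    "fc:InvokeFunction",
    "fc:ListFunctions",
    "fc:GetFunctionAsyncInvokeConfig",
    "fc:ListServiceVersions",
    "fc:ListAliases",
    "fc:GetAlias",
    "fc:ListFunctionAsyncInvokeConfigs",
    "fc:GetStatefulAsyncInvocation",
    "fc:StopStatefulAsyncInvocation",
]


def build_policy(actions):
    """Build a policy document that allows the given actions.

    Hypothetical helper; the wildcard resource is an assumption.
    """
    return {
        "Version": "1",
        "Statement": [
            {
                "Effect": "Allow",
                "Action": list(actions),
                "Resource": "*",  # assumption: restrict this in real use
            }
        ],
    }


policy = build_policy(ACTIONS)
print(json.dumps(policy, indent=2))
```

The printed JSON is the text you would paste into a custom policy editor. Review it against the permission policy references above before attaching it to a RAM user.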

Step 1: Go to the node creation page

Log on to the DataWorks console. In the top navigation bar, select the desired region. In the left-side navigation pane, choose Data Development and O&M > Data Development. On the page that appears, select the desired workspace from the drop-down list and click Go to Data Development.

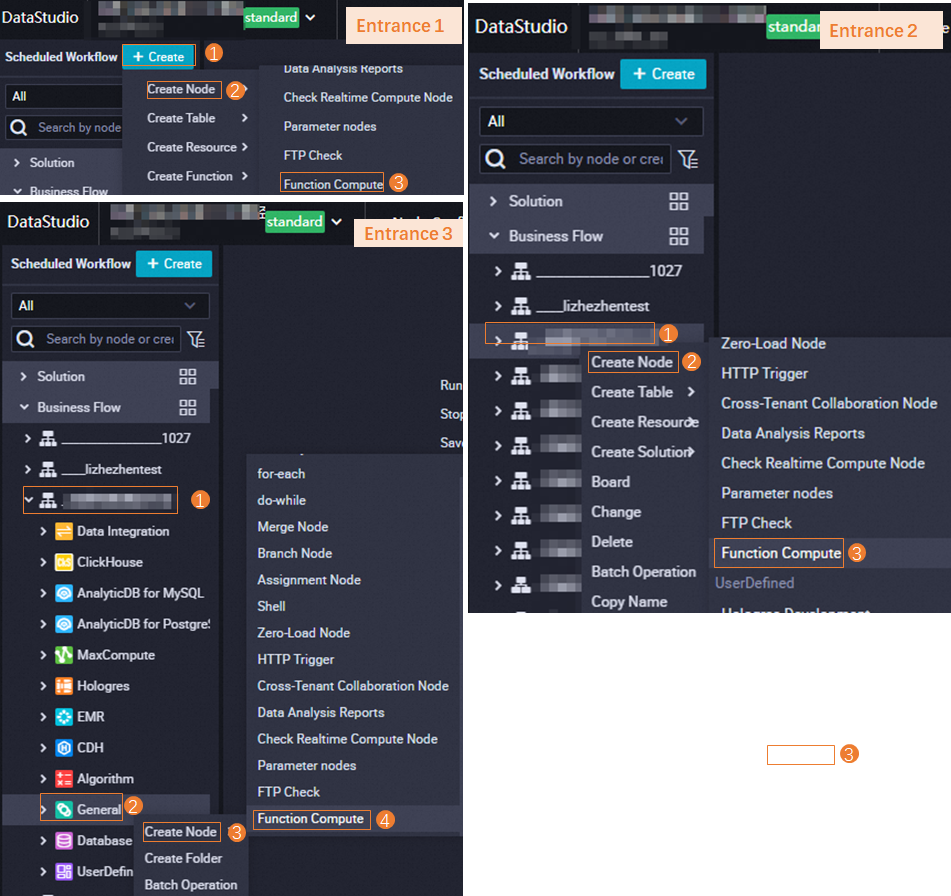

Go to the node creation page. On the DataStudio page, you can create a Function Compute node using one of the following methods.

Step 2: Create and configure a Function Compute node

Create a Function Compute node. After you go to the node creation page, follow the on-screen instructions to configure basic information for the new node, such as its path and name. Then, create the node.

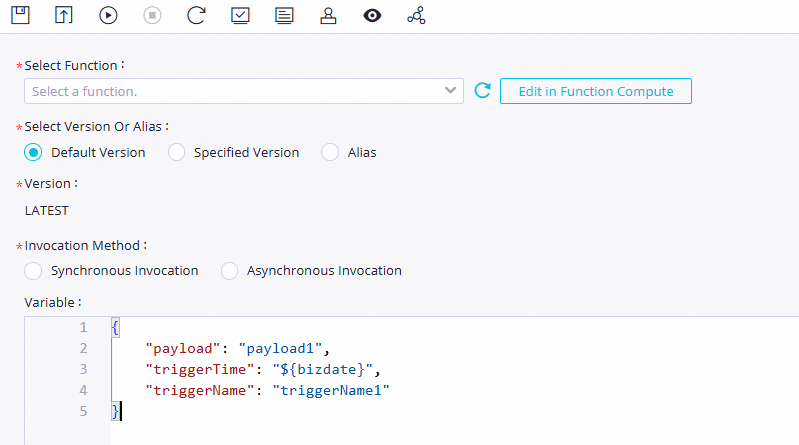

Configure the node parameters. On the node editing page, select the function to invoke and configure the invocation method and variables based on your requirements.

| Parameter | Description |
|---|---|
| Select Function | The event function to invoke for this node. If no function is available, create one. See Create a function. |
| Select Version or Alias | The service version or alias to use when invoking the function. Defaults to LATEST. See Publish a version and Create an alias. |
| Invocation Method | How DataWorks invokes the function. See Choosing an invocation method for guidance on which method to use. |
| Variables | The JSON payload passed to the function, corresponding to Test Function > Configure Test Event in the Function Compute console. Define DataWorks scheduling variables in `${}` format for dynamic values. |

Choosing an invocation method

| Invocation method | How it works | When to use |
|---|---|---|
| Synchronous call | DataWorks triggers the function and waits for a result before continuing. | Functions that complete within 1 hour. |
| Asynchronous invocation | DataWorks submits the event and receives an immediate acknowledgment. The function runs independently. | Functions that run longer than 1 hour, consume large resources, or have error-prone logic. |

Example: synchronous invocation with a time-triggered function

This example uses the `para_service_01_by_time_triggers` function, created from the platform's Time-triggered Function sample code:

    import json
    import logging

    logger = logging.getLogger()


    def handler(event, context):
        logger.info('event: %s', event)

        # Parse the JSON event passed in from the Variables field
        evt = json.loads(event)
        triggerName = evt["triggerName"]
        triggerTime = evt["triggerTime"]
        payload = evt["payload"]

        logger.info('triggerName: %s', triggerName)
        logger.info("triggerTime: %s", triggerTime)
        logger.info("payload: %s", payload)

        return 'Timer Payload: ' + payload

The Variables field passes a JSON payload to the function. The `${bizdate}` variable must be assigned a value when you configure the scheduling properties later in this step:

    {
        "payload": "payload1",
        "triggerTime": "${bizdate}",
        "triggerName": "triggerName1"
    }

For more function code samples, see Sample code.
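To check the handler logic before wiring it into a node, you can dry-run it locally with a rendered payload. The sketch below makes several assumptions: `render_variables` is a hypothetical stand-in for the substitution that DataWorks performs on `${}` variables, the value `20240101` is an arbitrary sample, the event is passed as UTF-8 bytes, and the context argument is replaced with `None` because this handler never reads it.

```python
import json
import logging

logger = logging.getLogger()


def handler(event, context):
    # Same logic as the sample function, condensed for a local dry run.
    evt = json.loads(event)
    logger.info("triggerTime: %s", evt["triggerTime"])
    return 'Timer Payload: ' + evt["payload"]


# Variables template from the example; ${bizdate} is normally resolved by
# DataWorks at run time.
VARIABLES_TEMPLATE = (
    '{"payload": "payload1", "triggerTime": "${bizdate}", '
    '"triggerName": "triggerName1"}'
)


def render_variables(template: str, values: dict) -> str:
    """Replace each ${name} placeholder with its value.

    Hypothetical stand-in for DataWorks variable substitution.
    """
    rendered = template
    for name, value in values.items():
        rendered = rendered.replace("${" + name + "}", value)
    return rendered


# Assumption: the event reaches the handler as UTF-8 encoded bytes.
event = render_variables(VARIABLES_TEMPLATE, {"bizdate": "20240101"}).encode("utf-8")
result = handler(event, None)
print(result)  # Timer Payload: payload1
```

A local run like this only validates the parsing and return value. Permissions, timeouts, and the real context object still need to be verified in Function Compute itself.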

(Optional) Test the node. After configuring the node, click the toolbar icon for test runs. Specify the resource group and assign constant values to the variables to test the node logic. The parameter format is `key=value`. Separate multiple parameters with commas (,). For details on testing a task, see Test a task.

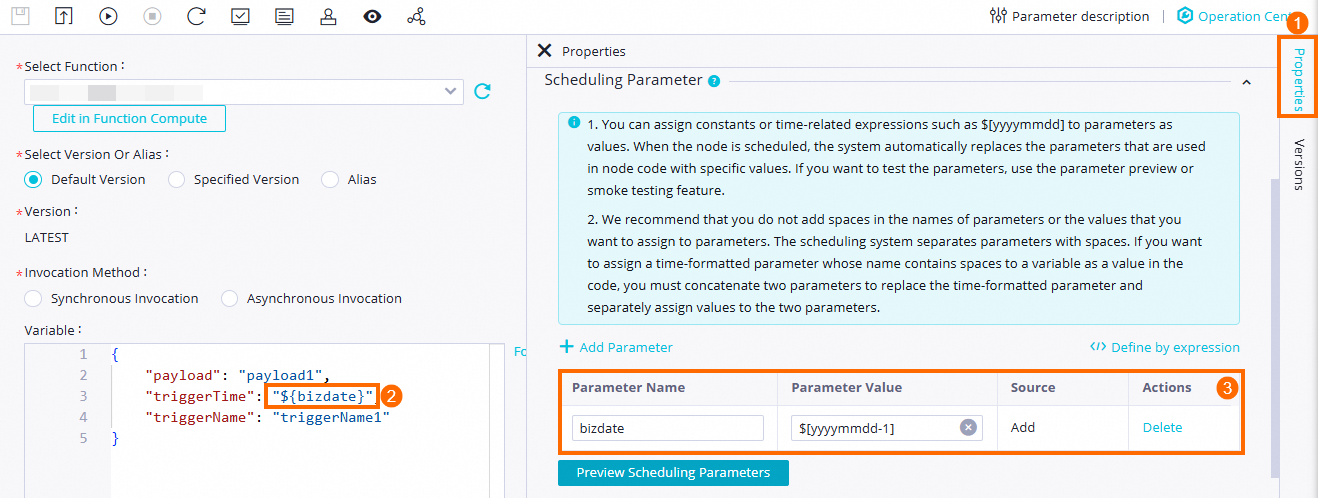
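The `key=value` test parameter format with comma separators can be illustrated with a small parser. This is a sketch of the format only, not of how DataWorks parses the input internally: `parse_test_parameters` is a hypothetical helper, and it assumes values do not themselves contain commas.

```python
def parse_test_parameters(text: str) -> dict:
    """Parse 'key=value,key2=value2' into a dictionary.

    Hypothetical helper; assumes values contain no commas.
    """
    params = {}
    for pair in text.split(","):
        pair = pair.strip()
        if not pair:
            continue  # tolerate trailing or doubled commas
        key, sep, value = pair.partition("=")
        if not sep or not key.strip():
            raise ValueError(f"expected key=value, got {pair!r}")
        params[key.strip()] = value.strip()
    return params


print(parse_test_parameters("bizdate=20240101, env=test"))
# {'bizdate': '20240101', 'env': 'test'}
```

Here `bizdate` matches the variable used in the example payload, while `env` is an invented second parameter that only demonstrates the separator.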

Configure the periodic scheduling properties. DataWorks scheduling parameters let you pass dynamic values to function variables at runtime. After defining variables in the Variables field using `${}` syntax, go to the Scheduling Configuration tab to assign their values. In this example, `bizdate` is set to the previous day, so the function processes data from the previous day on each scheduled run. See Configure scheduling parameters. For details on all scheduling properties, see Overview of task scheduling properties.
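To make the "previous day" assignment concrete, the following sketch computes the value that `bizdate` would take for a given run date. It assumes the value is formatted as `yyyymmdd`; the function name `previous_day_bizdate` is hypothetical, and the real value is produced by DataWorks scheduling, not by your code.

```python
from datetime import date, timedelta


def previous_day_bizdate(run_date: date) -> str:
    """Return the day before run_date formatted as yyyymmdd.

    Hypothetical helper; the yyyymmdd format is an assumption.
    """
    return (run_date - timedelta(days=1)).strftime("%Y%m%d")


# A run scheduled for 1 January 2024 would process data for 31 December 2023.
print(previous_day_bizdate(date(2024, 1, 1)))  # 20231231
```

A helper like this is mainly useful in local tests, where you need a realistic `triggerTime` value without running the scheduler.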

Step 3: Commit and publish the node

A Function Compute node must be committed and published to the production environment before it can be automatically scheduled.

Save and commit the node. Click the save and commit icons in the toolbar. When prompted, enter a change description.

(Optional) Enable code review so that a reviewer must approve the node code before it can be published. See Code review.

(Optional) Run a smoke test on the task before publishing to verify it runs as expected. See Smoke testing.

Before committing, set the Rerun Property and Upstream Dependencies in the scheduling configuration.

(Optional) Publish the node. If your workspace is in standard mode, click Publish in the upper-right corner to publish the node after committing. See Workspaces in standard mode and Publish a task.

What's next

Manage and monitor your scheduled tasks in the DataWorks Operation Center. See Operation Center.

Explore advanced scenarios using Function Compute nodes. See Dynamically add watermarks to PDFs using a Function Compute node in DataWorks.