This tutorial shows you how to run a word count MapReduce job on an E-MapReduce (EMR) cluster using DataWorks Data Studio. The job reads a text file from Object Storage Service (OSS) and counts how many times each word occurs. MapReduce splits a large dataset into chunks that multiple Map tasks process in parallel, which speeds up processing of large inputs. You will prepare source data, build a JAR package, configure an EMR MR node, run the job, and view the results.

Prerequisites

Before you begin, make sure you have:

- An EMR cluster created and registered with DataWorks. See Data Studio: Associate an EMR computing resource.
- (Optional) If you use a Resource Access Management (RAM) user, add the user to the workspace with the Developer or Workspace Administrator role. The Workspace Administrator role has extensive permissions, so grant it with caution. See Add members to a workspace. Alibaba Cloud account users can skip this step.
- An OSS bucket. See Create buckets.

Limitations

- EMR MR nodes can run only on a Serverless Resource Group (recommended) or an Exclusive Scheduling Resource Group.
- For DataLake or custom clusters, configure EMR-HOOK on the cluster before managing metadata in DataWorks. See Configure Hive EMR-HOOK.

Note: Without EMR-HOOK, DataWorks cannot display metadata in real time, generate audit logs, display Data Lineage, or perform EMR-related data governance tasks.

How it works

This tutorial has five stages:

| Stage | Description |
|---|---|
| 1. Prepare source data | Create a sample text file and upload it to OSS |
| 2. Build the JAR package | Write and compile a MapReduce word count program that reads from OSS |
| 3. Configure the EMR MR node | Upload or reference the JAR package in Data Studio, then enter the run command |
| 4. Run the job | Execute the node and configure scheduling if needed |
| 5. View results | Check the output files in OSS or query them with a Hive external table |

Step 1: Prepare source data

Create the input file

Create a file named input01.txt with the following content:

```
hadoop emr hadoop dw
hive hadoop
dw emr
```

Upload source data to OSS

- Log in to the OSS console. In the left navigation pane, click Bucket List.
- Click the target bucket name to open the File Management page. This tutorial uses onaliyun-bucket-2.
- Click Create Directory to create two directories:
  - emr/datas/wordcount02/inputs: for the source data file
  - emr/jars: for the JAR package
- Navigate to the emr/datas/wordcount02/inputs directory, click Upload File, then click Scan for Files in the Files to upload section. Select input01.txt and click Upload File.

Step 2: Build the JAR package

Add Maven dependencies

In your IntelliJ IDEA project, add the following dependencies to pom.xml. Use version 2.8.5 so that the dependencies match the Hadoop runtime of the EMR cluster; mismatched versions can cause class compatibility errors.

```xml
<dependencies>
    <dependency>
        <groupId>org.apache.hadoop</groupId>
        <artifactId>hadoop-mapreduce-client-common</artifactId>
        <version>2.8.5</version>
    </dependency>
    <dependency>
        <groupId>org.apache.hadoop</groupId>
        <artifactId>hadoop-common</artifactId>
        <version>2.8.5</version>
    </dependency>
</dependencies>
```

Write the MapReduce program

The program uses three OSS configuration parameters to access files:

| Parameter | Description |
|---|---|
| fs.oss.accessKeyId | Your AccessKey ID |
| fs.oss.accessKeySecret | Your AccessKey secret |
| fs.oss.endpoint | The OSS endpoint for the region where your cluster and bucket reside. See Regions and endpoints. The OSS bucket must be in the same region as the EMR cluster. |

An Alibaba Cloud account's AccessKey grants full permissions for all API operations. Use a RAM user's AccessKey for API calls and daily operations. Never hardcode credentials in your code or commit them to a repository.
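One way to keep the AccessKey pair out of the code is to read it from the environment at startup. The following is a minimal sketch under stated assumptions: the environment variable names ALIBABA_CLOUD_ACCESS_KEY_ID, ALIBABA_CLOUD_ACCESS_KEY_SECRET, and OSS_ENDPOINT and the class OssCredentialConfig are hypothetical, and the sketch collects the values into java.util.Properties so that it runs without Hadoop on the classpath. In the real job you would copy each entry into the Hadoop Configuration with conf.set(key, value) before creating the job.

```java
import java.util.Map;
import java.util.Properties;

// Hypothetical helper: collects the three fs.oss.* settings from environment
// variables so that no credential appears in the job's source code.
final class OssCredentialConfig {

    // Assumed environment variable names; adapt them to your own setup.
    static final String ENV_KEY_ID = "ALIBABA_CLOUD_ACCESS_KEY_ID";
    static final String ENV_KEY_SECRET = "ALIBABA_CLOUD_ACCESS_KEY_SECRET";
    static final String ENV_ENDPOINT = "OSS_ENDPOINT";

    private OssCredentialConfig() {}

    // Maps the environment values onto the configuration keys the program uses.
    static Properties fromEnv(Map<String, String> env) {
        Properties props = new Properties();
        props.setProperty("fs.oss.accessKeyId", require(env, ENV_KEY_ID));
        props.setProperty("fs.oss.accessKeySecret", require(env, ENV_KEY_SECRET));
        props.setProperty("fs.oss.endpoint", require(env, ENV_ENDPOINT));
        return props;
    }

    // Fails fast with a clear message instead of submitting a job with empty credentials.
    private static String require(Map<String, String> env, String name) {
        String value = env.get(name);
        if (value == null || value.trim().isEmpty()) {
            throw new IllegalStateException("Missing environment variable: " + name);
        }
        return value;
    }

    public static void main(String[] args) {
        Properties props = fromEnv(System.getenv());
        // Print only the key names, never the secret values.
        System.out.println("Loaded OSS settings: " + props.stringPropertyNames());
    }
}
```

Taking a Map rather than calling System.getenv() inside fromEnv keeps the helper easy to test with fake values.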

The following sample code adapts the standard Hadoop WordCount example with OSS access. Note the package name (cn.apache.hadoop.onaliyun.examples) and class name (EmrWordCount) — you will use both when submitting the job in Step 3.
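The snippet below is not the EmrWordCount sample code and does not use the Hadoop API. It is a plain-Java sketch that illustrates the flow the standard WordCount implements: a map phase that emits (word, 1) for every whitespace-separated token (as TokenizerMapper does with StringTokenizer), a shuffle that groups values by word, and a reduce phase that sums them (as IntSumReducer does). The class name LocalWordCount is hypothetical. Running it on the content of input01.txt shows the counts the EMR job should produce.

```java
import java.util.AbstractMap.SimpleEntry;
import java.util.ArrayList;
import java.util.Arrays;
import java.util.List;
import java.util.Map;
import java.util.StringTokenizer;
import java.util.TreeMap;

// Local, Hadoop-free illustration of the WordCount map -> shuffle -> reduce flow.
final class LocalWordCount {

    private LocalWordCount() {}

    // Map phase: split each line on whitespace and emit (word, 1) for every token.
    static List<Map.Entry<String, Integer>> map(List<String> lines) {
        List<Map.Entry<String, Integer>> pairs = new ArrayList<>();
        for (String line : lines) {
            StringTokenizer tokens = new StringTokenizer(line);
            while (tokens.hasMoreTokens()) {
                pairs.add(new SimpleEntry<>(tokens.nextToken(), 1));
            }
        }
        return pairs;
    }

    // Shuffle phase: group emitted values by key. TreeMap keeps the keys sorted,
    // similar to the sorted keys a reducer receives.
    static Map<String, List<Integer>> shuffle(List<Map.Entry<String, Integer>> pairs) {
        Map<String, List<Integer>> grouped = new TreeMap<>();
        for (Map.Entry<String, Integer> pair : pairs) {
            grouped.computeIfAbsent(pair.getKey(), k -> new ArrayList<>()).add(pair.getValue());
        }
        return grouped;
    }

    // Reduce phase: sum the values collected for each word.
    static Map<String, Integer> reduce(Map<String, List<Integer>> grouped) {
        Map<String, Integer> counts = new TreeMap<>();
        for (Map.Entry<String, List<Integer>> entry : grouped.entrySet()) {
            int sum = 0;
            for (int value : entry.getValue()) {
                sum += value;
            }
            counts.put(entry.getKey(), sum);
        }
        return counts;
    }

    public static void main(String[] args) {
        // Same content as input01.txt from Step 1.
        List<String> input = Arrays.asList("hadoop emr hadoop dw", "hive hadoop", "dw emr");
        Map<String, Integer> counts = reduce(shuffle(map(input)));
        // Print one word<TAB>count pair per line, the layout of Hadoop's default text output.
        counts.forEach((word, count) -> System.out.println(word + "\t" + count));
    }
}
```

For input01.txt, main prints dw 2, emr 2, hadoop 3, and hive 1, one tab-separated pair per line. On the cluster, the shuffle is distributed across nodes, but the per-word result is the same.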

Build the JAR

Package the project to build the JAR, for example by running mvn clean package in the project directory. This tutorial uses onaliyun_mr_wordcount-1.0-SNAPSHOT.jar as the file name.

Step 3: Configure the EMR MR node

Data Studio provides two ways to make the JAR available to the EMR MR node. Choose based on your situation:

| Method | When to use |
|---|---|
| Upload and reference | The JAR is developed locally and you want to manage it inside DataWorks. Use this when the file is small enough to upload through the UI. |
| Reference from OSS | The JAR is already in OSS, or it is too large to upload through the DataWorks UI. DataWorks automatically downloads the JAR to the execution environment at runtime. |

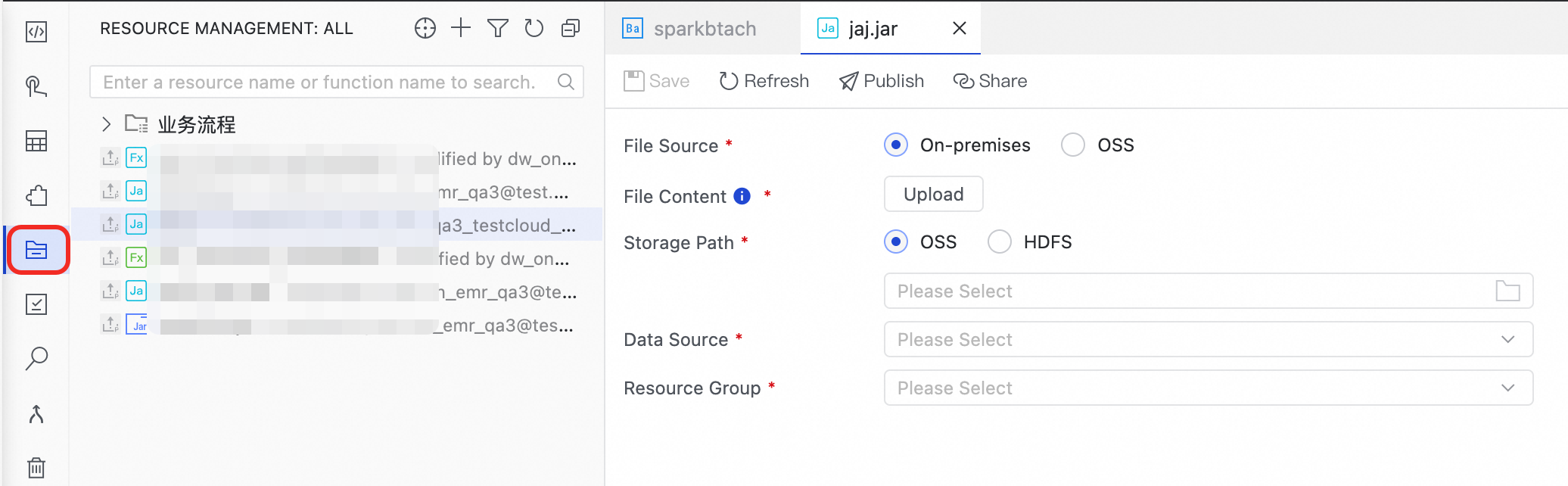

Method 1: Upload and reference

- Create an EMR JAR resource in Data Studio. See Resource management. Store the JAR in the emr/jars directory, click Click to upload, then select onaliyun_mr_wordcount-1.0-SNAPSHOT.jar.
- Set the Storage Path, Data Source, and Resource Group, then click Save.
- Open the EMR MR node and go to the code editor page.
- In the resource management pane on the left, right-click onaliyun_mr_wordcount-1.0-SNAPSHOT.jar and select Reference Resource.
- After the reference annotation appears, enter the run command. The fully qualified class name is cn.apache.hadoop.onaliyun.examples.EmrWordCount, which combines the package name and class name from the Java code in Step 2. Replace the bucket name and paths with your actual values.

Note: Comment statements are not supported in the EMR MR node editor.

```
##@resource_reference{"onaliyun_mr_wordcount-1.0-SNAPSHOT.jar"}
onaliyun_mr_wordcount-1.0-SNAPSHOT.jar cn.apache.hadoop.onaliyun.examples.EmrWordCount oss://onaliyun-bucket-2/emr/datas/wordcount02/inputs oss://onaliyun-bucket-2/emr/datas/wordcount02/outputs
```
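The two OSS paths after the class name are passed to the program's main method as arguments. Assuming EmrWordCount keeps the argument handling of the standard Hadoop WordCount example, every argument except the last is treated as an input path and the last one as the output path. The plain-Java sketch below mirrors that rule; the class WordCountArgs is hypothetical, and it throws an exception where the Hadoop example prints the usage text and exits with status 2.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.Collections;
import java.util.List;

// Hypothetical mirror of how the standard WordCount driver reads its
// positional arguments: all but the last are inputs, the last is the output.
final class WordCountArgs {

    final List<String> inputs;
    final String output;

    private WordCountArgs(List<String> inputs, String output) {
        this.inputs = inputs;
        this.output = output;
    }

    static WordCountArgs parse(String[] args) {
        if (args.length < 2) {
            // The Hadoop example prints this usage text and exits; the sketch throws instead.
            throw new IllegalArgumentException("Usage: wordcount <in> [<in>...] <out>");
        }
        List<String> inputs = new ArrayList<>(Arrays.asList(args).subList(0, args.length - 1));
        return new WordCountArgs(Collections.unmodifiableList(inputs), args[args.length - 1]);
    }

    public static void main(String[] args) {
        // The two paths that follow the class name in the run command above.
        WordCountArgs parsed = parse(new String[] {
            "oss://onaliyun-bucket-2/emr/datas/wordcount02/inputs",
            "oss://onaliyun-bucket-2/emr/datas/wordcount02/outputs"
        });
        System.out.println("inputs=" + parsed.inputs + " output=" + parsed.output);
    }
}
```

Hadoop's FileOutputFormat rejects an output directory that already exists, so remove the outputs directory before you rerun the job with the same command.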

Method 2: Reference from OSS

You can directly reference an OSS resource from the node by using OSS REF. DataWorks automatically downloads the referenced OSS resource to the local execution environment at runtime.

- Upload the JAR to OSS. Log in to the OSS console and navigate to the emr/jars directory in onaliyun-bucket-2. Click Upload File, then click Scan for Files to add onaliyun_mr_wordcount-1.0-SNAPSHOT.jar.
- On the EMR MR node configuration page, enter the run command. The format is:

```
hadoop jar <path_to_JAR> <fully_qualified_class_name> <input_directory> <output_directory>
```

For example:

```
hadoop jar ossref://onaliyun-bucket-2/emr/jars/onaliyun_mr_wordcount-1.0-SNAPSHOT.jar cn.apache.hadoop.onaliyun.examples.EmrWordCount oss://onaliyun-bucket-2/emr/datas/wordcount02/inputs oss://onaliyun-bucket-2/emr/datas/wordcount02/outputs
```

The ossref:// prefix tells DataWorks to download the JAR before execution. The path format is ossref://{endpoint}/{bucket}/{object}:

| Component | Description |
|---|---|
| endpoint | The OSS endpoint. If left blank, DataWorks can only access OSS in the same region as the EMR cluster. The bucket must be in the same region as the cluster. |
| bucket | The OSS bucket name. |
| object | The file path within the bucket. |
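If you generate run commands from a script or template, the ossref:// path can be assembled from its three components. The sketch below is an illustration only: the class OssRef is hypothetical, and it assumes that a blank endpoint means the endpoint segment is omitted entirely, as in the example command above.

```java
// Hypothetical helper that assembles an ossref:// path in the
// ossref://{endpoint}/{bucket}/{object} format.
final class OssRef {

    private OssRef() {}

    // A null or blank endpoint is omitted (assumption based on the example command).
    static String build(String endpoint, String bucket, String object) {
        if (bucket == null || bucket.trim().isEmpty()) {
            throw new IllegalArgumentException("bucket is required");
        }
        if (object == null || object.trim().isEmpty()) {
            throw new IllegalArgumentException("object is required");
        }
        // Object paths are relative to the bucket, so drop any leading slashes.
        String key = object.replaceFirst("^/+", "");
        StringBuilder ref = new StringBuilder("ossref://");
        if (endpoint != null && !endpoint.trim().isEmpty()) {
            ref.append(endpoint.trim()).append('/');
        }
        return ref.append(bucket).append('/').append(key).toString();
    }

    public static void main(String[] args) {
        // Reproduces the JAR path used in the example command.
        System.out.println(build(null, "onaliyun-bucket-2",
            "emr/jars/onaliyun_mr_wordcount-1.0-SNAPSHOT.jar"));
    }
}
```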

Configure advanced parameters (optional)

Set additional parameters in the EMR Node Parameter or DataWorks Parameter section on the right side of the node configuration pane. Available parameters depend on the cluster type.

DataLake or custom cluster

| Parameter | Default | Description |
|---|---|---|
| queue | default | The YARN scheduling queue for the job. See Basic queue configurations. |
| priority | 1 | Job priority. |
| FLOW_SKIP_SQL_ANALYZE | false | Set to true to execute multiple SQL statements at once, or false to execute one SQL statement at a time. Applies only to the data development environment. |
| Others | None | Custom MR job parameters, added as -D key=value statements when DataWorks submits the job. |
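To see what the Others row produces, the sketch below turns a set of custom parameters into Hadoop generic options of the form -D key=value. It is an illustration, not DataWorks code: the class NodeParamArgs is hypothetical, exactly where DataWorks places these options in the submitted command is not covered here, and the two property names are ordinary Hadoop 2.x settings used only as examples.

```java
import java.util.ArrayList;
import java.util.LinkedHashMap;
import java.util.List;
import java.util.Map;

// Hypothetical illustration: turn custom node parameters into "-D key=value" options.
final class NodeParamArgs {

    private NodeParamArgs() {}

    static List<String> toDefineArgs(Map<String, String> params) {
        List<String> args = new ArrayList<>();
        for (Map.Entry<String, String> e : params.entrySet()) {
            args.add("-D");
            args.add(e.getKey() + "=" + e.getValue());
        }
        return args;
    }

    public static void main(String[] args) {
        // LinkedHashMap keeps the parameters in insertion order.
        Map<String, String> params = new LinkedHashMap<>();
        params.put("mapreduce.job.reduces", "2");
        params.put("mapreduce.map.memory.mb", "2048");
        System.out.println(String.join(" ", toDefineArgs(params)));
    }
}
```

Hadoop drivers that use GenericOptionsParser or ToolRunner, as the standard WordCount example does, strip these options from the argument list and apply them to the job configuration.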

Hadoop cluster

| Parameter | Default | Description |
|---|---|---|
| queue | default | The YARN scheduling queue for the job. See Basic queue configurations. |
| priority | 1 | Job priority. |
| USE_GATEWAY | false | Set to true to submit the job through a gateway cluster, or false to submit it to the header node. If this is set to true but the cluster has no associated gateway cluster, job submission fails. |

Step 4: Run the job

- In the Run Configuration section, set Compute Resources and Resource Group.

  Note:

  - You can also configure Scheduling CUs based on the resource requirements of the task. The default value is 0.25 CU.
  - To access data sources over the public internet or a VPC, use a scheduling resource group that has passed the connectivity test with the data source. See Network connectivity solutions.

- In the parameter dialog in the toolbar, select the data source and click Run.
- To run the node on a schedule, configure scheduling properties. See Node scheduling configuration.
- Deploy the node to make it operational. See Node and workflow deployment.

Step 5: View results

After the job completes, check the output in two ways:

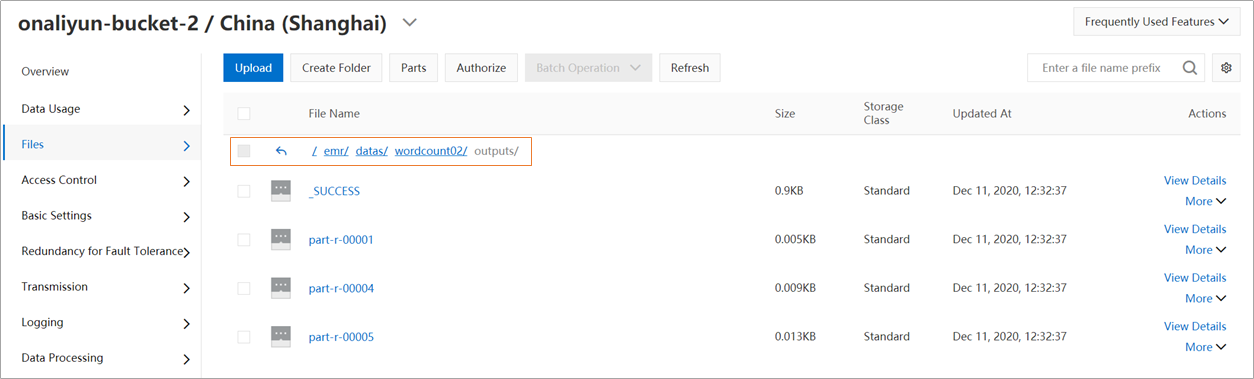

In the OSS console: Navigate to the emr/datas/wordcount02/outputs directory in onaliyun-bucket-2 to find the output files.

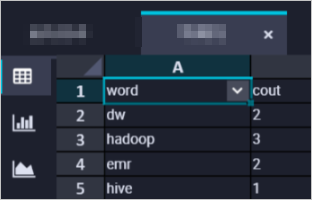

In DataWorks using Hive: Create an EMR Hive node (see Create a node for a scheduled workflow), then create an external Hive table that points to the output path and query it:

```sql
CREATE EXTERNAL TABLE IF NOT EXISTS wordcount02_result_tb
(
  `word` STRING COMMENT 'word',
  `count` BIGINT COMMENT 'count'
)
ROW FORMAT DELIMITED FIELDS TERMINATED BY '\t'
LOCATION 'oss://onaliyun-bucket-2/emr/datas/wordcount02/outputs/';

SELECT * FROM wordcount02_result_tb;
```

The query returns the word count results stored in the output directory.
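If you download an output file (for example part-r-00000) from the OSS console instead of querying it through Hive, you can read it with a few lines of code. The sketch below assumes each line has the word<TAB>count layout that the Hive table declares with fields terminated by '\t'. The class WordCountOutput is hypothetical, and the sample lines in main are the counts expected for input01.txt, not captured job output.

```java
import java.util.Arrays;
import java.util.LinkedHashMap;
import java.util.List;
import java.util.Map;

// Hypothetical reader for word count output lines in the form "word<TAB>count".
final class WordCountOutput {

    private WordCountOutput() {}

    static Map<String, Long> parse(List<String> lines) {
        Map<String, Long> counts = new LinkedHashMap<>();
        for (String line : lines) {
            if (line.isEmpty()) {
                continue; // skip blank lines
            }
            int tab = line.indexOf('\t');
            if (tab < 0) {
                throw new IllegalArgumentException("Expected word<TAB>count: " + line);
            }
            counts.put(line.substring(0, tab), Long.parseLong(line.substring(tab + 1).trim()));
        }
        return counts;
    }

    public static void main(String[] args) {
        // Expected counts for input01.txt, written in the output layout.
        List<String> sample = Arrays.asList("dw\t2", "emr\t2", "hadoop\t3", "hive\t1");
        System.out.println(parse(sample));
    }
}
```

In practice, read the lines with java.nio.file.Files.readAllLines after downloading the file, then pass them to parse.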

What's next

- Monitor the job's running status in Operation Center. See Getting started with Operation Center.
- Schedule the node to run periodically. See Node scheduling configuration.
- Explore other EMR node types in Data Studio, such as EMR Hive or EMR Spark, for different workload patterns.