Impala is an SQL query engine built for fast, real-time, interactive queries on petabyte-scale data. Use an EMR Impala node in DataWorks Data Studio to run Impala SQL as part of a scheduled data pipeline.

EMR Impala nodes run only on compute resources of the legacy Data Lake (Hadoop) cluster type. DataWorks no longer accepts new Hadoop-type cluster bindings, but clusters already bound continue to work.

Prerequisites

Before you begin, ensure that you have:

- An Alibaba Cloud EMR cluster bound to DataWorks. For setup instructions, see Data Studio: Associate an EMR computing resource.

- A Hive data source configured in DataWorks with connectivity verified. For setup instructions, see Data Source Management.

- (RAM users only) Membership in the workspace with the Developer or Workspace Administrator role. The Workspace Administrator role has extensive permissions, so grant it with caution. For instructions, see Add members to a workspace. Alibaba Cloud account users can skip this step.

Limitations

| Constraint | Value | Notes |
|---|---|---|
| Supported resource groups | Serverless (recommended) or exclusive resource groups for scheduling | Other resource group types are not supported |
| Maximum SQL statement size | 130 KB | Applies per statement |
| Maximum query result rows | 10,000 records | Platform-enforced limit on interactive query results, not a configuration error |
| Maximum query result size | 10 MB | Platform-enforced limit on interactive query results, not a configuration error |
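Because the size limit applies per statement, it can help to check a script locally before pasting it into the node. The following Python snippet is a minimal sketch, not part of DataWorks: the helper names are hypothetical, it assumes 1 KB = 1024 bytes and UTF-8 encoding (the limit's exact definition is not stated here), and it splits on semicolons naively, so semicolons inside string literals or comments would be miscounted.

```python
# Assumption: 130 KB is interpreted as 130 * 1024 bytes of UTF-8 text.
MAX_STATEMENT_BYTES = 130 * 1024


def split_statements(script: str) -> list[str]:
    """Naively split a script on semicolons; quotes and comments are not parsed."""
    return [part.strip() for part in script.split(";") if part.strip()]


def oversized_statements(
    script: str, limit: int = MAX_STATEMENT_BYTES
) -> list[tuple[int, int]]:
    """Return (1-based statement index, size in bytes) for statements over the limit."""
    oversized = []
    for index, statement in enumerate(split_statements(script), start=1):
        size = len(statement.encode("utf-8"))
        if size > limit:
            oversized.append((index, size))
    return oversized


if __name__ == "__main__":
    script = "SHOW TABLES; SELECT * FROM userinfo;"
    # Both statements are far below the limit, so this prints an empty list.
    print(oversized_statements(script))
```

An empty result means no statement exceeds the assumed limit. Any reported statement would need to be shortened or split before it is run.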

Create an EMR Impala node

Step 1: Write SQL code

In the SQL editing area on the node editor page, write your SQL code.

To pass dynamic values at runtime, define variables using the ${variable name} format, then assign values to each variable in Scheduling Parameters under Scheduling Configuration on the right panel. For supported formats, see Supported formats for scheduling parameters.
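To make the placeholder mechanism concrete, the following Python snippet mimics the substitution with the standard library's `string.Template`, which happens to use the same `${name}` syntax. It is only an illustration of the idea, not how DataWorks resolves scheduling parameters internally. The `render_sql` helper and the value `20240101` are assumptions made for this example.

```python
from string import Template


def render_sql(sql: str, params: dict[str, str]) -> str:
    """Replace ${name} placeholders; raises KeyError if a value is missing."""
    return Template(sql).substitute(params)


if __name__ == "__main__":
    statement = "ALTER TABLE userinfo ADD IF NOT EXISTS PARTITION(dt='${bizdate}');"
    # Illustrative value only; at runtime the scheduler supplies the real one.
    print(render_sql(statement, {"bizdate": "20240101"}))
```

Using `substitute` rather than `safe_substitute` makes a missing assignment fail loudly, which mirrors the need to assign a value to every variable you define.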

The following example creates a partitioned table and uses the ${bizdate} scheduling parameter to add a partition:

    SHOW TABLES;

    CREATE TABLE IF NOT EXISTS userinfo (
        ip STRING COMMENT 'IP address',
        uid STRING COMMENT 'User ID'
    ) PARTITIONED BY (
        dt STRING
    );

    -- ${bizdate} is a scheduling parameter
    ALTER TABLE userinfo ADD IF NOT EXISTS PARTITION(dt='${bizdate}');

    SELECT * FROM userinfo;

Step 2: Configure advanced parameters (optional)

Under Scheduling Configuration on the right panel, go to EMR Node Parameters > DataWorks Parameters to configure the following parameters. Available parameters differ by cluster type.

For open-source Spark properties, configure them under EMR Node Parameters > Spark Parameters instead.

DataLake/Custom clusters: EMR on ECS

| Parameter | Type | Description | Default |
|---|---|---|---|
| FLOW_SKIP_SQL_ANALYZE | Boolean | Controls how SQL statements execute. Set to true to run multiple statements at once; false runs them one at a time. Applies to test runs in the development environment only. | false |
| DATAWORKS_SESSION_DISABLE | Boolean | Controls Java Database Connectivity (JDBC) connection reuse during test runs. Set to true to create a new JDBC connection for each SQL run; this is required if you need the Hive YARN applicationId printed in logs. Set to false to reuse the same connection across statements in a node. | false |
| priority | Integer | Priority level for job scheduling. | 1 |
| queue | String | The YARN scheduling queue for job submission. Use this to route jobs to a specific queue when your cluster has multiple queues configured. For queue configuration details, see Basic queue configurations. | default |

Hadoop clusters: EMR on ECS

| Parameter | Type | Description | Default |
|---|---|---|---|
| FLOW_SKIP_SQL_ANALYZE | Boolean | Controls how SQL statements execute. Set to true to run multiple statements at once; false runs them one at a time. Applies to test runs in the development environment only. | false |
| USE_GATEWAY | Boolean | Specifies whether to submit jobs through a gateway cluster. Set to true to route through a gateway cluster; false submits directly to the header node. If your cluster has no associated gateway cluster, keep this set to false; setting it to true causes subsequent EMR job submissions to fail. | false |
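As a way to reason about overrides before entering them in the console, the following Python snippet models the DataLake/Custom parameters as a dictionary and merges user overrides with a type check. It is a hedged sketch only: the defaults are copied from the DataLake/Custom table in this section, while `resolve_parameters` and the queue name `etl` are hypothetical and do not correspond to any DataWorks API.

```python
# Defaults copied from the DataLake/Custom parameter table in this section.
DATALAKE_DEFAULTS = {
    "FLOW_SKIP_SQL_ANALYZE": False,
    "DATAWORKS_SESSION_DISABLE": False,
    "priority": 1,
    "queue": "default",
}


def resolve_parameters(overrides: dict, defaults: dict = DATALAKE_DEFAULTS) -> dict:
    """Merge overrides into defaults, rejecting unknown names and wrong types."""
    resolved = dict(defaults)
    for name, value in overrides.items():
        if name not in defaults:
            raise KeyError(f"unknown parameter: {name}")
        expected = type(defaults[name])
        # Exact type match so that True is not accepted for an Integer parameter.
        if type(value) is not expected:
            raise TypeError(f"{name} expects {expected.__name__}")
        resolved[name] = value
    return resolved


if __name__ == "__main__":
    # "etl" is a hypothetical queue name used only for illustration.
    print(resolve_parameters({"queue": "etl", "DATAWORKS_SESSION_DISABLE": True}))
```

The same pattern would apply to the Hadoop cluster parameters by passing a different defaults dictionary.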

Step 3: Run the node

- In Run Configuration, set the Computing Resource and Resource Group.

  - To adjust compute capacity, configure Schedule CUs based on task requirements. The default is 0.25 CU.

  - To access a data source over the public network or through a VPC, select the scheduling resource group that passed the connectivity test for that data source. For details, see Network connection solutions.

- In the Parameters dialog box, select your Hive data source and click Run.

- Click Save.

Next steps

After verifying the node runs correctly, complete the following steps to put it into production:

- Configure scheduling: Set how often the node runs. For details, see Node scheduling configuration.

- Publish the node: Deploy it to the production environment. For details, see Publish nodes or workflows.

- Monitor in Operation Center: After publishing, track auto-triggered task runs in Operation Center. For details, see Get started with Operation Center.

Troubleshooting

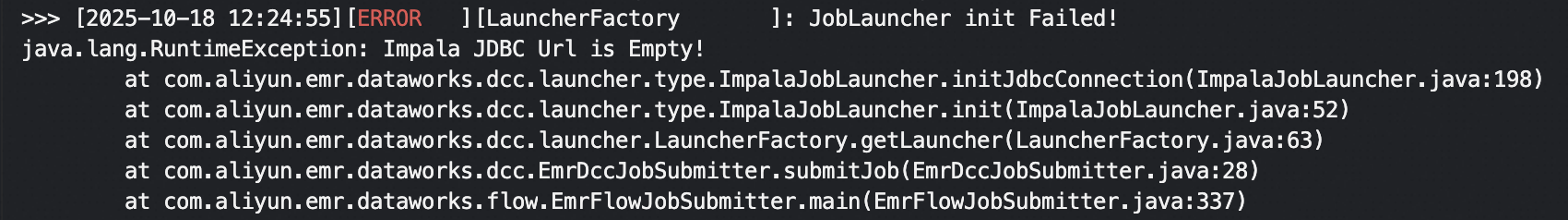

"Impala JDBC Url is Empty" error

The Impala service is not added to your cluster. Add the Impala service to your cluster to resolve this.

The Impala service is available to existing users only.

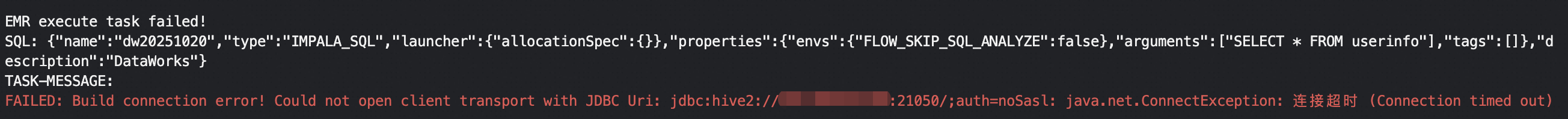

Connection timeout when running a node

This indicates a connectivity issue between the resource group and the cluster. Go to the computing resource list page to initialize the resource. In the dialog box that appears, click Re-initialize and verify that initialization completes successfully.