A data quality monitoring node lets you configure monitoring rules to check the quality of tables in a data source — detecting dirty data, validating row counts, checking null values, and more. The node runs as part of your scheduling pipeline and automatically blocks downstream tasks when data quality issues are detected, preventing dirty data from propagating through your Extract, Transform, and Load (ETL) pipeline.

How it works

After a data quality monitoring node runs, it evaluates each configured rule against the monitored table. If a rule check returns an anomaly, the node applies your handling policy: either stop the current node (blocking downstream tasks) or continue while sending an alert.
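The blocking behavior described above can be sketched as a toy pipeline. This is only an illustration under stated assumptions: `Node`, `run_pipeline`, and `make_quality_check` are hypothetical names, not part of the platform, and the check itself (an empty table counts as an anomaly) is invented for the example.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class Node:
    """Hypothetical pipeline node; run() returns True on success."""
    name: str
    run: Callable[[], bool]


def run_pipeline(nodes: List[Node]) -> List[str]:
    """Run nodes in order and stop at the first node that fails."""
    executed = []
    for node in nodes:
        executed.append(node.name)
        if not node.run():
            break  # downstream nodes are not run
    return executed


def make_quality_check(row_count: int, stop_on_anomaly: bool) -> Callable[[], bool]:
    """Hypothetical rule: an empty table is treated as an anomaly."""
    def check() -> bool:
        anomaly = row_count == 0
        if anomaly and stop_on_anomaly:
            return False  # node fails, which blocks downstream tasks
        return True  # no anomaly, or continue and only alert
    return check


def build(stop_on_anomaly: bool) -> List[Node]:
    return [
        Node("extract", lambda: True),
        Node("quality_check", make_quality_check(0, stop_on_anomaly)),
        Node("load", lambda: True),
    ]


blocked = run_pipeline(build(stop_on_anomaly=True))
continued = run_pipeline(build(stop_on_anomaly=False))
```

With the stop policy, the `load` node never runs; with the continue policy, all three nodes run and the anomaly would only be reported.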

Limitations

- Supported data sources: MaxCompute, E-MapReduce, Hologres, CDH Hive, AnalyticDB for PostgreSQL, AnalyticDB for MySQL, and StarRocks.

- One table per node: Each node monitors one table, but you can configure multiple monitoring rules for the node. To monitor multiple tables, create multiple data quality monitoring nodes.

- Workspace binding: Monitored tables must belong to a data source bound to the same workspace as the node.

- Partition monitoring: For partitioned tables, specify a partition filter expression. For non-partitioned tables, the entire table is monitored by default.

- Rules managed in DataStudio only: Rules created in DataStudio can be run, modified, published, and managed only in DataStudio. In the Data Quality module, you can view these rules but cannot trigger scheduled runs or manage them.

- Publishing replaces rules: If you modify monitoring rules in a node and republish the node, the original monitoring rules are replaced.

Prerequisites

Before you begin, ensure that you have:

- A business flow created in Data Development (DataStudio). For more information, see Create a business flow.

- A data source created and bound to the current workspace, with the target table already created in the data source. For more information, see Data Source Management, Resource Management, and Node development.

- A Serverless resource group created. Data quality monitoring nodes run only on Serverless resource groups. For more information, see Resource Management.

- (Optional, for RAM users) The Resource Access Management (RAM) user for task development added to the workspace with the Development or Workspace Administrator role. The Workspace Administrator role has extensive permissions, so grant it with caution. For more information, see Add workspace members.

Step 1: Create a data quality monitoring node

- Log on to the DataWorks console. In the top navigation bar, select the target region. In the left-side navigation pane, choose Data Development and O\&M \> Data Development. Select the target workspace from the drop-down list and click Go to Data Development.

- In DataStudio, right-click the target business flow and choose Create Node \> Data Quality \> Data Quality Monitoring.

- In the Create Node dialog box, enter a name and click Confirm.

Step 2: Configure monitoring rules

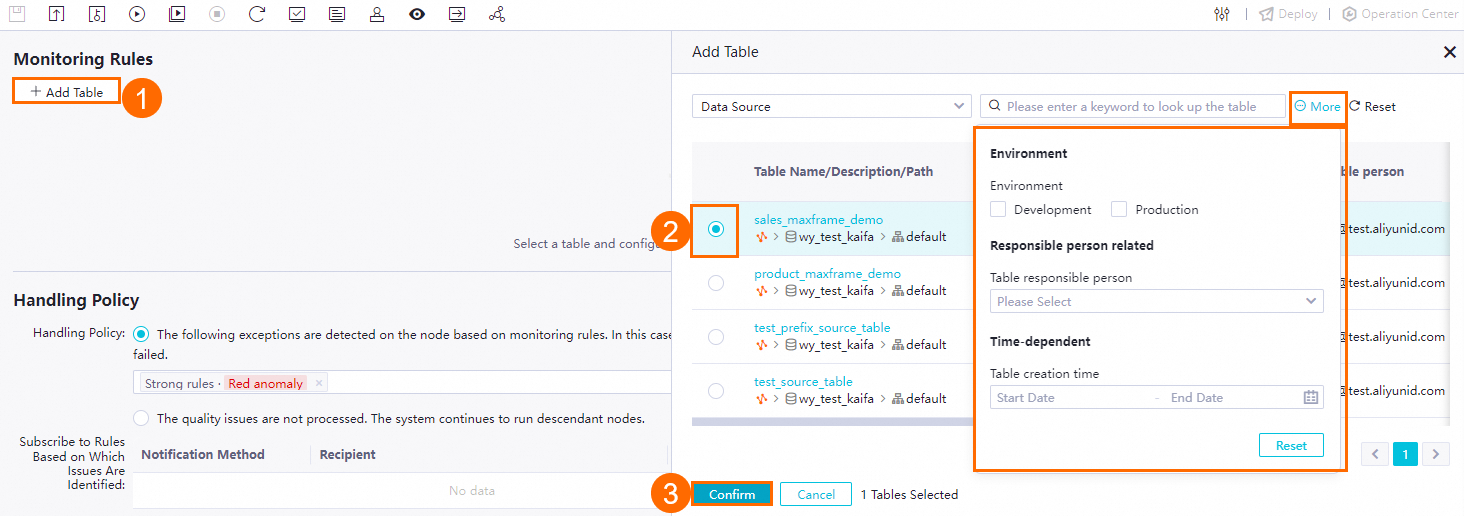

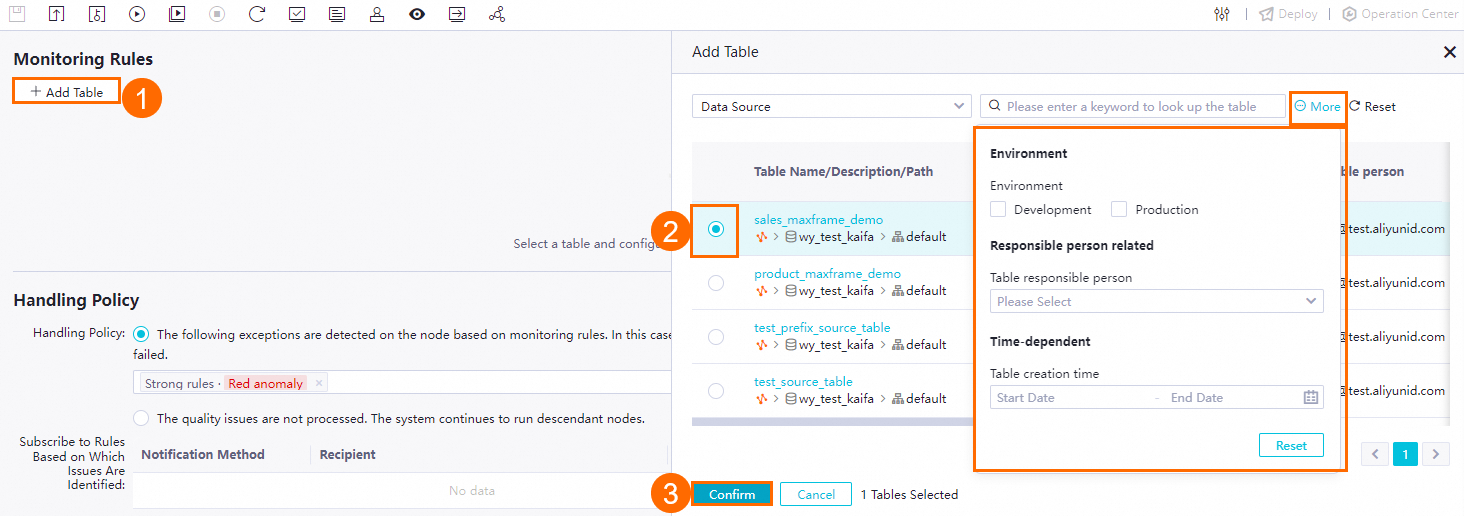

1. Select the table to monitor

Click Add Table. In the Add Table dialog box, search for and select the target table.

2. Set the data range

- Non-partitioned tables: The entire table is monitored by default. Skip this step.

- Partitioned tables: Select the partition to monitor. Use scheduling parameters if needed. Click Preview to verify the result of the partition filter expression.
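To make the idea of a partition filter expression concrete, the sketch below resolves a date placeholder against a scheduled time, roughly what Preview would show. It is an assumption-laden illustration: `resolve_partition` is a hypothetical helper, and the only syntax it models is `$[yyyymmdd]` with an optional day offset such as `$[yyyymmdd-1]`. The platform's scheduling parameters support more forms than this.

```python
import re
from datetime import datetime, timedelta

# Matches $[yyyymmdd], $[yyyymmdd-1], $[yyyymmdd+2], ... (assumed subset only)
_DATE_PLACEHOLDER = re.compile(r"\$\[yyyymmdd(?:([+-])(\d+))?\]")


def resolve_partition(expr: str, scheduled: datetime) -> str:
    """Replace date placeholders with concrete yyyymmdd values."""
    def _substitute(match: re.Match) -> str:
        days = int(match.group(2)) if match.group(2) else 0
        if match.group(1) == "-":
            days = -days
        return (scheduled + timedelta(days=days)).strftime("%Y%m%d")

    return _DATE_PLACEHOLDER.sub(_substitute, expr)


scheduled_time = datetime(2024, 3, 1)
same_day = resolve_partition("ds=$[yyyymmdd]", scheduled_time)
previous_day = resolve_partition("ds=$[yyyymmdd-1]", scheduled_time)
```

Here `same_day` resolves to `ds=20240301` and `previous_day` to `ds=20240229`, which is the kind of value to verify before relying on the expression in a scheduled run.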

3. Create or import rules

Configured rules are enabled by default. Create new rules or import existing ones.

Create a rule

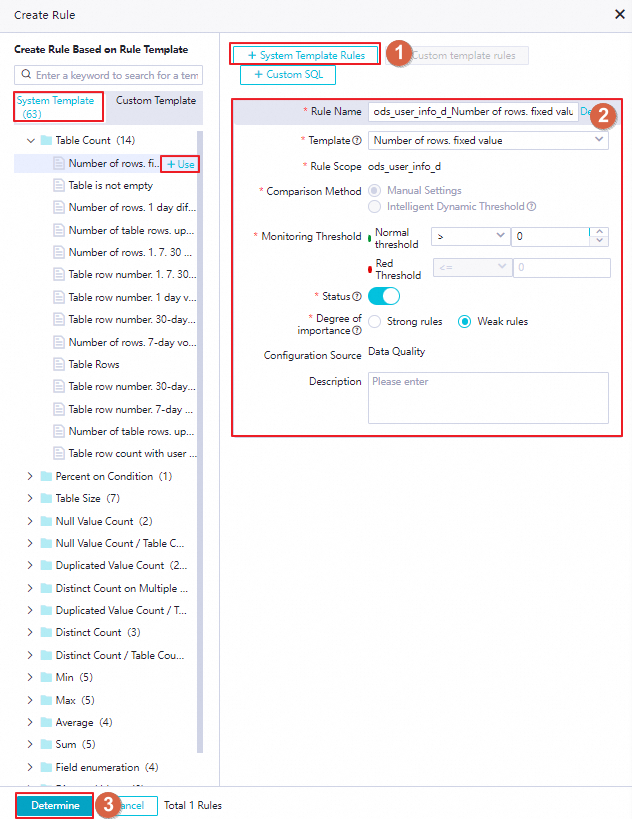

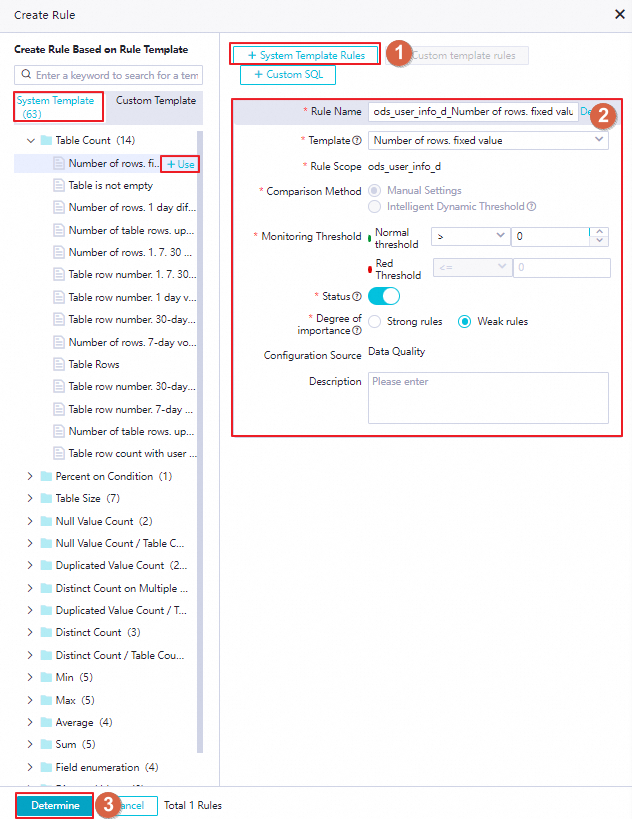

Click Create Rule to create a monitoring rule from a template or a custom SQL statement.

From a system template

The platform provides built-in table-level and field-level rule templates. Select a template to create a rule quickly. You can also find a template in the system template list on the left and click +Use.

Parameters for configuring a rule based on a built-in rule template

The following table describes the rule parameters.

| Parameter | Description |
| --- | --- |
| Rule name | The name of the monitoring rule. |
| Template | The type of rule validation to perform. For available built-in templates, see View built-in rule templates. Note: Field-level rules for average value, sum, minimum value, and maximum value apply only to numeric fields. |
| Rule scope | The scope of the rule. Table-level rules apply to the current table. Field-level rules apply to a specific field. |
| Comparison method | How the rule determines whether data meets expectations. Select Manual settings or Intelligent dynamic threshold. See the comparison methods table below for details. |
| Monitoring threshold | The threshold values used in conjunction with the comparison method. See the comparison methods table below for details. |
| Retain problem data | When enabled and a check fails, the system creates a table to store the problematic rows. Available only for MaxCompute tables and specific rule types. Problem data is not retained if the rule is Disabled. |
| Status | Whether the rule is active in the production environment. A Disabled rule cannot be triggered by test runs or scheduling nodes. |
| Degree of importance | Determines whether a rule violation blocks the associated scheduling node. Strong rules block the node when the critical threshold is exceeded; weak (soft) rules do not. See Step 3: Configure a handling policy for how the two rule strengths behave differently. |
| Configuration source | The source of the rule configuration. The default value is Data Quality. |
| Description | Optional description for the rule. |

Comparison methods and thresholds

| Comparison method | How it works | Threshold parameters |
| --- | --- | --- |
| Manual settings | Compares the check output against a fixed expected value or a fluctuation range that you define. For numeric outputs, the supported operators are Greater Than, Greater Than Or Equal To, Equal To, Not Equal To, Less Than, and Less Than Or Equal To. For fluctuation outputs, the supported operators are Absolute value, Raise, and Drop. | Normal threshold: the check result is within this range, so data is as expected. Red threshold: the check result crosses this boundary, so data is not as expected. For fluctuation rules, you can also set an orange threshold for warnings that do not affect business operations. |
| Intelligent dynamic threshold | The system determines a reasonable threshold automatically using intelligent algorithms. No manual threshold configuration is required. Applies only to custom SQL, custom range, or dynamic threshold rules. | Orange threshold: the check result indicates an anomaly that does not affect business operations. |

When the comparison method is set to Intelligent dynamic threshold, configure the Degree of importance parameter.
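As a rough illustration of how manual fluctuation thresholds separate normal, orange, and red results, consider the sketch below. The formula and the function names `fluctuation_rate` and `classify` are assumptions made for this example; the platform's exact calculation is not specified in this topic.

```python
def fluctuation_rate(current: float, baseline: float) -> float:
    """Percentage change of the current check value relative to a baseline."""
    if baseline == 0:
        raise ValueError("baseline must be non-zero")
    return (current - baseline) * 100.0 / baseline


def classify(rate: float, orange: float, red: float, mode: str = "absolute") -> str:
    """Map a fluctuation rate to 'normal', 'orange', or 'red'.

    mode mirrors the three operators named above (assumed semantics):
      absolute - compare the magnitude of the change
      raise    - only increases count toward the thresholds
      drop     - only decreases count toward the thresholds
    """
    if mode == "absolute":
        magnitude = abs(rate)
    elif mode == "raise":
        magnitude = max(rate, 0.0)
    elif mode == "drop":
        magnitude = max(-rate, 0.0)
    else:
        raise ValueError(f"unknown mode: {mode}")

    if magnitude > red:
        return "red"
    if magnitude > orange:
        return "orange"
    return "normal"


# Row count went from 100 to 120: a 20% increase.
rise = fluctuation_rate(120, 100)
rise_level = classify(rise, orange=10, red=50)

# Row count went from 100 to 40: a 60% decrease.
fall = fluctuation_rate(40, 100)
fall_as_raise = classify(fall, orange=10, red=50, mode="raise")
fall_as_drop = classify(fall, orange=10, red=50, mode="drop")
```

In this sketch the 20% increase lands in the orange band, while the 60% decrease is red under Drop and normal under Raise, which shows why the operator choice matters as much as the threshold values.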

From a custom template

Before using this method, go to Data Quality \> Quality Assets \> Rule Template Library to create a custom rule template. Then create a monitoring rule based on that template. For more information, see Create and manage custom rule templates.

You can also find a custom template in the template list on the left and click +Use.

Parameters for configuring a rule based on a custom rule template

The following parameters are specific to custom template rules. For other parameters, see the system template parameter table above.

| Parameter | Description |
| --- | --- |
| FLAG parameter | A SET statement to execute before the rule's SQL statement runs. |
| SQL | The SQL statement that defines the complete check logic. The statement must return a single numeric value (one row, one column). Enclose partition filter expressions in brackets `[]`. Example: `SELECT count(*) FROM ${tableName} WHERE ds=$[yyyymmdd];` The `${tableName}` variable is automatically replaced with the monitored table name. When this parameter is set, the Data range partition setting in the monitor configuration is overridden. The rule determines the partition to check based on the WHERE clause in this SQL statement. |
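The `${tableName}` substitution can be pictured as a plain text replacement performed before the statement runs. The helper below is hypothetical and only mimics that step; the table name `sales_orders` is an invented example, and the partition expression is deliberately left untouched because it is resolved separately.

```python
def render_rule_sql(template_sql: str, table_name: str) -> str:
    """Replace the ${tableName} variable with the monitored table name."""
    return template_sql.replace("${tableName}", table_name)


template_sql = "SELECT count(*) FROM ${tableName} WHERE ds=$[yyyymmdd];"
rendered_sql = render_rule_sql(template_sql, "sales_orders")
```

Because the template carries no hard-coded table name, the same custom template can be reused across nodes that monitor different tables.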

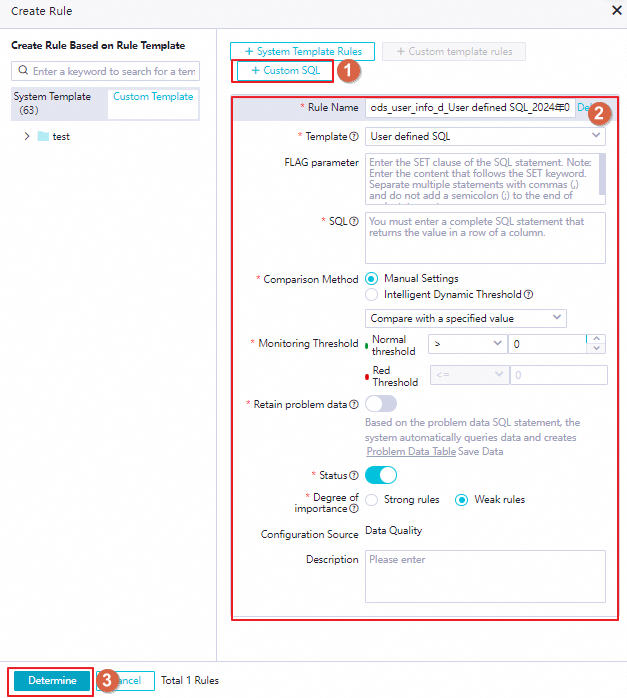

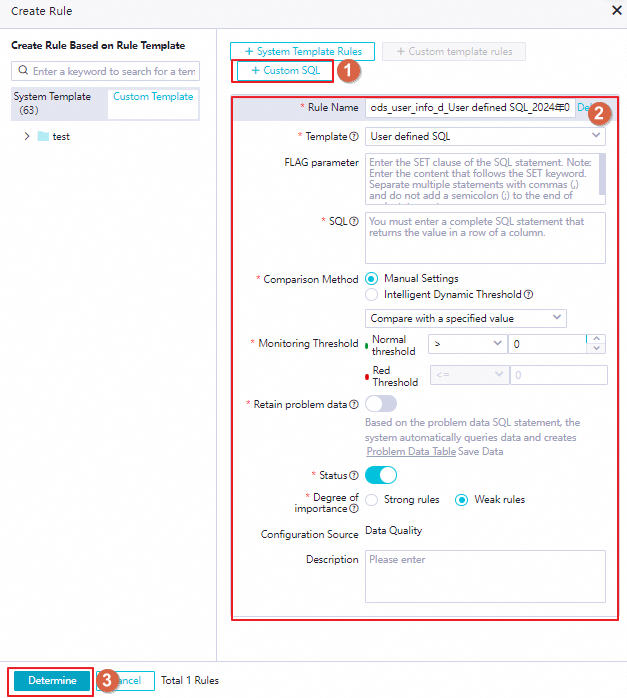

From a custom SQL statement

This method lets you define custom check logic directly without a template.

Parameters for configuring a rule based on a custom SQL statement

The following parameters are specific to custom SQL rules. For other parameters, see the system template parameter table above.

| Parameter | Description |
| --- | --- |
| FLAG parameter | A SET statement to execute before the rule's SQL statement runs. |
| SQL | The SQL statement that defines the complete check logic. The statement must return a single numeric value (one row, one column). Enclose partition filter expressions in brackets `[]`. Example: `SELECT count(*) FROM <table_name> WHERE ds=$[yyyymmdd];` Replace `<table_name>` with the actual table name. When this parameter is set, the Data range partition setting in the monitor configuration is overridden. The rule determines the partition to check based on the WHERE clause in this SQL statement. |
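The single-value requirement can be tried out locally before you paste a statement into the rule. The sketch below uses an in-memory SQLite database purely as a stand-in for the real engine; the `orders` table, its columns, and the `run_check` helper are all invented for illustration, and the partition value is written out literally because SQLite does not understand `$[...]` expressions.

```python
import sqlite3


def run_check(conn: sqlite3.Connection, sql: str) -> float:
    """Run a check statement and enforce the one-row, one-column numeric shape."""
    rows = conn.execute(sql).fetchall()
    if len(rows) != 1 or len(rows[0]) != 1:
        raise ValueError("check SQL must return exactly one row and one column")
    value = rows[0][0]
    if not isinstance(value, (int, float)):
        raise ValueError("check SQL must return a numeric value")
    return float(value)


conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE orders (id INTEGER, amount REAL, ds TEXT)")
conn.executemany(
    "INSERT INTO orders VALUES (?, ?, ?)",
    [(1, 10.0, "20240301"), (2, None, "20240301"), (3, 5.0, "20240229")],
)

# Null-value check restricted to one partition: a valid single-value result.
null_count = run_check(
    conn,
    "SELECT count(*) FROM orders WHERE ds = '20240301' AND amount IS NULL",
)

# A statement that returns several columns is rejected by the shape check.
try:
    run_check(conn, "SELECT id, amount FROM orders")
    rejected = False
except ValueError:
    rejected = True
```

The aggregate query yields a single number that a threshold can be compared against, whereas the row-returning query has no single value to compare and is therefore unusable as a rule.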

Import existing rules

If monitoring rules for the target table already exist in the Data Quality module, import them to clone those rules quickly. Table-level and field-level rules can both be imported in batches. If no rules exist, first create them in the Data Quality module. For more information, see Configure rules for a single table.

Click Import Rule. Search for and select rules to import by rule ID or name, rule template, or associated scope.

After you publish a data quality monitoring node, rule details are visible in the Data Quality module, but management operations such as modifying or deleting rules are not available there.

4. Configure compute resources

Select the compute resource group to use for the quality rule check. This specifies which data source runs the monitoring task. By default, the data source of the monitored table is used.

If you select a different data source, confirm that it has access to the monitored table.

Step 3: Configure a handling policy

In the Handling policy section of the node configuration page, configure how the node responds to check anomalies and how notifications are delivered.

Exception categories and handling policy

The following table shows each exception type, what triggers it, and how each policy affects pipeline execution.

| Exception category | What it means | Policy: Do not ignore | Policy: Ignore |
| --- | --- | --- | --- |
| Strong rule - Error alert | The strong rule's red threshold was exceeded. Monitored data does not meet expectations and is likely to affect downstream operations. | Current node stops and is set to failed. Downstream nodes do not run. | Pipeline continues. |
| Strong rule - Warning alert | The strong rule's orange threshold was triggered. Anomalies are present, but downstream operations are not affected. | Current node stops. Downstream nodes do not run. | Pipeline continues. |
| Strong rule - Check failed | The monitor failed to run (for example, the monitored partition was not generated, or the SQL statement failed). | Current node stops. Downstream nodes do not run. | Pipeline continues. |
| Soft rule - Error alert | The soft rule's red threshold was exceeded. | Current node stops. Downstream nodes do not run. | Pipeline continues. |
| Soft rule - Warning alert | The soft rule's orange threshold was triggered. | Current node stops. Downstream nodes do not run. | Pipeline continues. |
| Soft rule - Check failed | The monitor failed to run for a soft rule. | Current node stops. Downstream nodes do not run. | Pipeline continues. |

Configure the handling policy based on your business impact assessment:

- Do not ignore: When the specified exception category is detected, the node stops and its status is set to failed. Downstream nodes do not run, blocking the pipeline and preventing dirty data from spreading. Add multiple exception categories as needed. Use this policy when an exception has major downstream impact.

- Ignore: The exception is recorded, downstream nodes continue to run, and the pipeline is not blocked.
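The relationship between exception categories and the two policies can be expressed as a small decision function. This is a sketch, not the platform's implementation: the enum and function names are hypothetical, and the category strings simply reuse the labels from the table above.

```python
from enum import Enum
from typing import Iterable, Set


class Strength(Enum):
    STRONG = "Strong rule"
    SOFT = "Soft rule"


class Outcome(Enum):
    ERROR = "Error alert"        # red threshold exceeded
    WARNING = "Warning alert"    # orange threshold triggered
    CHECK_FAILED = "Check failed"  # the monitor itself failed to run


def category(strength: Strength, outcome: Outcome) -> str:
    """Build the exception category label, for example 'Strong rule - Error alert'."""
    return f"{strength.value} - {outcome.value}"


def node_should_stop(detected: Iterable[str], do_not_ignore: Set[str]) -> bool:
    """The node stops if any detected category is configured as Do not ignore."""
    return any(item in do_not_ignore for item in detected)


# Example policy: block only on strong-rule errors and strong-rule check failures.
policy = {
    category(Strength.STRONG, Outcome.ERROR),
    category(Strength.STRONG, Outcome.CHECK_FAILED),
}

soft_warning_only = [category(Strength.SOFT, Outcome.WARNING)]
with_strong_error = soft_warning_only + [category(Strength.STRONG, Outcome.ERROR)]

stops_on_soft_warning = node_should_stop(soft_warning_only, policy)
stops_on_strong_error = node_should_stop(with_strong_error, policy)
```

Under this example policy a soft-rule warning is recorded without blocking, while a strong-rule error stops the node and everything downstream of it.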

Notification settings

Configure how exception notifications are delivered. When an exception occurs, the platform sends a notification using the specified method.

- Email, email and text message, or phone call: Select recipients from users within the current account. Confirm that email addresses and phone numbers are configured correctly. For more information, see View and set alert contacts.

- Webhook: Enter the webhook URL. For more information about obtaining a webhook URL, see Obtain a webhook URL.

Step 4: Configure scheduling

To run the node periodically, click Properties in the right-side pane and configure the scheduling properties. For more information, see Configure scheduling properties for a node.

Set the Rerun and Parent nodes properties before submitting the node.

Step 5: Debug the task

- (Optional) Select a resource group and assign values to scheduling parameters. Click the icon in the toolbar that opens the Parameters dialog box. In the dialog box, select the scheduling resource group and assign values to any scheduling parameters used by the task. For more information, see Task debugging process.

- Save and run the task. Click the save icon to save the task, then click the run icon to run it. After the run completes, view the results at the bottom of the node configuration page. If the run fails, check the error message to troubleshoot.

- (Optional) Perform smoke testing. To verify that the scheduling node task runs as expected in the development environment, perform smoke testing when you submit the node or after submission. For more information, see Perform smoke testing.

Step 6: Submit and publish the task

When you submit and publish the node, the configured quality rules are also submitted and published. After publishing, the node runs periodically according to its scheduling configuration.

- Click the save icon to save the node.

- Click the submit icon to submit the node. In the Submit dialog box, enter a Change description. If needed, select whether to perform a code review after submission.

  Set the Rerun and Parent nodes properties before submitting. Code review helps ensure configuration quality: when it is enabled, a submitted node can be published only after a reviewer approves it. For more information, see Code review.

- If you use a workspace in standard mode, click Deploy in the upper-right corner of the node configuration page to publish the task to the production environment. For more information, see Publish tasks.

What's next

- Task O\&M: After the task is published, it runs periodically. Click Operation Center in the upper-right corner of the node configuration page to view the scheduling and running status of the auto triggered task, including node status and triggered rule details. For more information, see Manage auto triggered tasks.

- Data Quality: After quality rules are published, go to the Data Quality module to view rule details. Management operations such as modifying or deleting rules are not available there. For more information, see Data Quality.
