Create a Hive data source to allow Dataphin to read business data from Hive or write data to Hive. This topic describes how to create a Hive data source.

Background information

Hive is a data warehouse tool built on Hadoop. It maps structured data files to database tables and supports SQL queries. Hive converts HQL or SQL statements into MapReduce, Tez, or other programs. If you use Hive, create a Hive data source before integrating Hive with Dataphin for data development or writing Dataphin data to Hive.

Limits

If you use an OSS-based Hive external table for offline integration in an Alibaba Cloud E-MapReduce 5.x Hadoop compute engine, configure the required settings before using it. For configuration details, see Use an OSS-based Hive external table for offline integration.

If you use a Hive data source as an input or output component for integration, confirm that the Dataphin IP address is in the Hive network whitelist. This ensures Dataphin can access Hive data.

Ensure that both the Dataphin application cluster and the Dataphin scheduling cluster can connect to the following Hive services: Hive server, HDFS NameNode (including web UI and IPC), DataNode, KDC server, metadatabase or Hive Metastore, and ZooKeeper.

Permissions

Only users with the New Data Source Permission Point in a custom global role or users with the super administrator, data source administrator, domain architect, or project administrator role can create a data source.

Procedure

In the top menu bar on the Dataphin homepage, choose Management Hub > Datasource Management.

On the Datasource page, click + New Data Source.

On the New Data Source page, in the Big Data section, select Hive.

If you recently used Hive, select Hive in the Recently Used section. You can also enter Hive-related keywords in the search box to filter quickly.

On the New Hive Data Source page, configure the data source connection parameters.

The Hive data source configuration includes three parts: Integration Configuration for data integration, Real-time Development Configuration for real-time development, and Metadatabase Configuration for basic metadata retrieval.

Note: Production and development data sources should usually be separate to isolate environments and reduce impact on production. However, Dataphin also supports using the same data source for both environments by setting identical parameters.

Hive Data Source Basic Information

Parameter

Description

Datasource Name

Enter a data source name. The name must meet these rules:

You can use only Chinese characters, uppercase and lowercase English letters, digits, underscores (_), or hyphens (-).

It cannot exceed 64 characters.
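The naming rules above can be checked before you submit the form. The following is a minimal sketch, not Dataphin's own validation: the function name is hypothetical, the Unicode range used for Chinese characters is an assumption, and rejecting an empty name is also an assumption.

```python
import re

# Allowed: Chinese characters (assumed here to mean the CJK Unified
# Ideographs range U+4E00 to U+9FFF), English letters, digits,
# underscores, and hyphens. Length: 1 to 64 characters (the lower
# bound of 1 is an assumption; the rules only state the maximum).
_NAME_PATTERN = re.compile(r"[\u4e00-\u9fffA-Za-z0-9_-]{1,64}")


def is_valid_datasource_name(name: str) -> bool:
    """Return True if the name satisfies the rules listed above."""
    return _NAME_PATTERN.fullmatch(name) is not None


print(is_valid_datasource_name("hive_prod-01"))  # allowed characters only
print(is_valid_datasource_name("hive prod"))     # contains a space
```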

Datasource Code

After you configure a data source code, reference tables in Flink SQL tasks using the format datasource_code.table_name or datasource_code.schema.table_name. To automatically access the correct environment's data source, use the variable format ${datasource_code}.table or ${datasource_code}.schema.table. For more information, see Dataphin data source table development.

Important: The data source code cannot be modified after it is configured.

You can preview data on the object details page in the asset directory and asset checklist only after the data source code is configured.

In Flink SQL, only MySQL, Hologres, MaxCompute, Oracle, StarRocks, Hive, SelectDB, and GaussDB data warehouse service (DWS) data sources are currently supported.
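To illustrate the reference formats described for the data source code, the following sketch builds the plain and variable forms as strings. The helper name and the sample code, schema, and table names are hypothetical; only the resulting formats come from this topic.

```python
from typing import Optional


def table_reference(datasource_code: str, table: str,
                    schema: Optional[str] = None,
                    use_variable: bool = True) -> str:
    """Build a table reference in one of the formats described above."""
    # The ${...} form lets the reference resolve to the data source of the
    # current environment; the bare code is the plain format.
    prefix = "${" + datasource_code + "}" if use_variable else datasource_code
    parts = [prefix] + ([schema] if schema else []) + [table]
    return ".".join(parts)


print(table_reference("hive_ods", "orders"))
print(table_reference("hive_ods", "orders", schema="sales", use_variable=False))
```

The first call produces the variable form, and the second produces the plain three-part form with a schema.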

Version

Select the Hive version. Dataphin supports these versions:

CDH 5.x Hive 1.1.0

Alibaba Cloud EMR 3.x Hive 2.3.5

Alibaba Cloud EMR 5.x Hive 3.1.x

CDH 6.x Hive 2.1.1

FusionInsight 8.x Hive 3.1.0

Cloudera Data Platform 7.x Hive 3.1.3

AsiaInfo DP 5.x Hive 3.1.0

Amazon EMR

Data Source Description

Enter a brief description of the data source. Maximum length: 128 characters.

Data Lake Table Format

Enable or disable data lake table format. Default: disabled. When enabled, select a table format.

When Version is Cloudera Data Platform 7.x Hive 3.1.3, only Hudi is supported.

When Version is Alibaba Cloud EMR 5.x Hive 3.1.x, select Iceberg or Paimon.

Note: This option is available only when Version is Cloudera Data Platform 7.x Hive 3.1.3 or Alibaba Cloud EMR 5.x Hive 3.1.x.

Enable Modules

Integration: Enable to use this Hive data source for data integration.

Real-time Development: Enable to use this Hive data source for real-time development.

Note: Real-time development is supported for CDH 6.x Hive 2.1.1, Cloudera Data Platform 7.x Hive 3.1.3, and AsiaInfo DP 5.x Hive 3.1.0.

Real-time development is supported for Alibaba Cloud EMR 5.x Hive 3.1.x when Paimon is selected as the data lake table format.
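The version-dependent rules for Data Lake Table Format and Real-time Development can be summarized as a small lookup. This is only a sketch of the rules stated in this topic; the dictionary and function names are hypothetical and are not part of any Dataphin API.

```python
from typing import Optional

# Table formats that can be selected per version. Versions that do not
# appear here do not offer the Data Lake Table Format option.
TABLE_FORMATS = {
    "Cloudera Data Platform 7.x Hive 3.1.3": {"Hudi"},
    "Alibaba Cloud EMR 5.x Hive 3.1.x": {"Iceberg", "Paimon"},
}

# Versions that support Real-time Development regardless of table format.
REALTIME_VERSIONS = {
    "CDH 6.x Hive 2.1.1",
    "Cloudera Data Platform 7.x Hive 3.1.3",
    "AsiaInfo DP 5.x Hive 3.1.0",
}


def supports_realtime(version: str, table_format: Optional[str] = None) -> bool:
    """Apply the Real-time Development rules described in the notes above."""
    if version in REALTIME_VERSIONS:
        return True
    # EMR 5.x qualifies only when Paimon is the data lake table format.
    return version == "Alibaba Cloud EMR 5.x Hive 3.1.x" and table_format == "Paimon"


print(supports_realtime("CDH 6.x Hive 2.1.1"))
print(supports_realtime("Alibaba Cloud EMR 5.x Hive 3.1.x", "Iceberg"))
```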

Data Source Configuration

Select the configuration environment:

If your business uses separate production and development data sources, select Production + Development Data Source.

If your business uses one data source for both production and development, select Production Data Source.

Tag

Add tags to classify your data source. For instructions on creating tags, see Manage Data Source Tags.

Production/Development Data Source Configuration

Required parameters vary by Hive version.

Versions Other Than Amazon EMR

Hive Configuration

Metadata Retrieval Method: Choose one of these methods: Metadata Database, HMS, or DLF. Each method requires different configuration details.

Metadata Database

Note: When only Real-time Development is enabled, only the metadata database method is supported.

Parameter

Description

Database Type

Select the corresponding database type based on the metadatabase type used in your cluster. Dataphin supports MySQL. The MySQL database type supports MySQL 5.1.43, MySQL 5.6/5.7, and MySQL 8.

JDBC URL

Enter the JDBC connection URL for the target database. Format: jdbc:mysql://host:port/dbname.

Username and Password

Enter the username and password to log on to Hive.

Hive JDBC URL

Enter the Hive JDBC connection URL. The following formats are supported:

Hive Server: Format is jdbc:hive://host:port/dbname.

ZooKeeper: Example: jdbc:hive2://zk01:2181,zk02:2181,zk03:2181/;serviceDiscoveryMode=zooKeeper;zooKeeperNamespace=hiveserver2.

Hive with Kerberos enabled: Format is jdbc:hive2://host:port/dbname;principal=hive/_HOST@xx.com.

Note: When the version is E-MapReduce 3.x, E-MapReduce 5.x, or Cloudera Data Platform, the JDBC URL does not support multiple IP addresses after Kerberos authentication is enabled.
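The three Hive JDBC URL formats differ only in how the host part and the trailing parameters are assembled. The sketch below builds each form from its parts so that the separators are easy to see. The function names and the sample host names, port, and database are hypothetical; the URL shapes follow the formats listed in this topic.

```python
from typing import Iterable, Tuple


def hive_server_url(host: str, port: int, dbname: str) -> str:
    """Hive Server format as given in this topic."""
    return f"jdbc:hive://{host}:{port}/{dbname}"


def zookeeper_url(quorum: Iterable[Tuple[str, int]],
                  namespace: str = "hiveserver2") -> str:
    """ZooKeeper service discovery format: comma-separated host:port pairs."""
    hosts = ",".join(f"{host}:{port}" for host, port in quorum)
    return (f"jdbc:hive2://{hosts}/;serviceDiscoveryMode=zooKeeper;"
            f"zooKeeperNamespace={namespace}")


def kerberos_url(host: str, port: int, dbname: str, principal: str) -> str:
    """Kerberos format: the principal follows the database name after ';'."""
    return f"jdbc:hive2://{host}:{port}/{dbname};principal={principal}"


print(zookeeper_url([("zk01", 2181), ("zk02", 2181), ("zk03", 2181)]))
print(kerberos_url("hive01", 10000, "default", "hive/_HOST@xx.com"))
```

Building the URL from parts makes it harder to drop the slash before `;serviceDiscoveryMode` or to mistype a separator when copying a URL by hand.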

HMS

Parameter

Description

Authentication Type

HMS supports No Authentication, LDAP, and Kerberos. For Kerberos, upload a keytab file and configure a principal.

hive-site.xml

Upload the Hive hive-site.xml configuration file.

Note: If Real-time Development is enabled, this configuration file is reused.

Hive JDBC URL

Enter the Hive JDBC connection URL. The following formats are supported:

Hive Server: Format is jdbc:hive://host:port/dbname.

ZooKeeper: Example: jdbc:hive2://zk01:2181,zk02:2181,zk03:2181/;serviceDiscoveryMode=zooKeeper;zooKeeperNamespace=hiveserver2.

Hive with Kerberos enabled: Format is jdbc:hive2://host:port/dbname;principal=hive/_HOST@xx.com.

DLF

Note: DLF is supported only for Alibaba Cloud EMR 5.x Hive 3.1.x.

Parameter

Description

Endpoint

Enter the DLF endpoint for the region where your cluster is located. For instructions, see DLF Region and Endpoint Reference.

AccessKey ID and AccessKey Secret

Enter the AccessKey ID and AccessKey Secret for the account that owns the cluster.

You can get them on the User Information Management page.

hive-site.xml

Upload the Hive hive-site.xml configuration file.

Note: If Real-time Development is enabled, this configuration file is reused.

Hive JDBC URL

Enter the Hive JDBC connection URL. The following formats are supported:

Hive Server: Format is jdbc:hive://host:port/dbname.

ZooKeeper: Example: jdbc:hive2://zk01:2181,zk02:2181,zk03:2181/;serviceDiscoveryMode=zooKeeper;zooKeeperNamespace=hiveserver2.

Hive with Kerberos enabled: Format is jdbc:hive2://host:port/dbname;principal=hive/_HOST@xx.com.

Integration

Note: Configure integration settings only if you enable the Integration module.

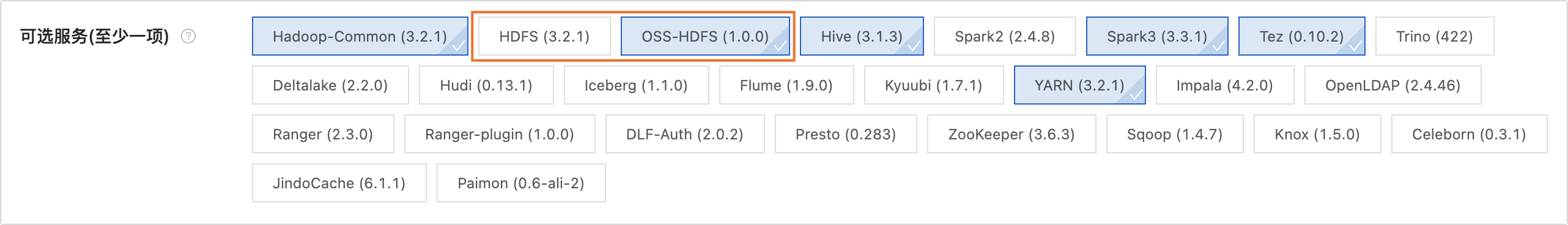

Integration Configuration

Choose HDFS or OSS-HDFS Cluster Storage. Required settings depend on your choice.

HDFS

Parameter

Description

NameNode

The NameNode manages metadata in an HDFS cluster. Click Add and then configure NameNode node information in the Add NameNode dialog box.

Web UI Port: Port to access the web interfaces of components in the Hadoop cluster.

IPC Port: Port for inter-process communication (IPC).

In a CDH5 environment, Web UI Port and IPC Port are 50070 and 8020 by default. You can specify the ports according to your actual configuration.

Note: The Test Connection succeeds if either the Web UI port or IPC port is correct.
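Before filling in the NameNode ports, it can help to confirm that they are reachable from a machine in the same network as Dataphin. The following sketch uses only the Python standard library and checks TCP reachability. It is not how Dataphin's Test Connection works internally; it only mirrors the "either port" rule described in the note. The function names are hypothetical, and the defaults are the CDH5 ports mentioned in this topic.

```python
import socket


def port_open(host: str, port: int, timeout: float = 3.0) -> bool:
    """Return True if a TCP connection to host:port can be established."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers refused connections, timeouts, and name resolution errors.
        return False


def namenode_reachable(host: str, web_ui_port: int = 50070,
                       ipc_port: int = 8020, timeout: float = 3.0) -> bool:
    """Mirror the either-port rule: one reachable port is enough."""
    return (port_open(host, web_ui_port, timeout)
            or port_open(host, ipc_port, timeout))
```

For example, `namenode_reachable("namenode01")` with a hypothetical host name returns True if either default port accepts a connection. For the other services listed under Limits, call `port_open` with the port that your cluster actually uses.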

Configuration File

Upload the Hadoop configuration files hdfs-site.xml and core-site.xml. Export them from your Hadoop cluster.

Note: If you enable Data Lake Table Format and select Hudi, and you use the Hive data source for Real-time Development with the dp-hudi Connector, upload the hive-site.xml configuration file.

Enable Kerberos

Kerberos is an identity authentication protocol based on symmetric keys. It provides authentication for other services and supports SSO (single sign-on). After authenticating once, a client can access multiple services such as HBase and HDFS.

If your Hadoop cluster uses Kerberos, complete these configurations.

Kerberos Configuration Method

KDC Server: Address of the KDC server, used to assist Kerberos authentication.

Note: You can enter multiple KDC server addresses separated by commas.

krb5.conf Configuration: Upload the krb5.conf file for Kerberos authentication.

HDFS Configuration

HDFS Keytab File: Upload the keytab authentication file. Get it from your HDFS server.

HDFS Principal: Enter the principal name for the HDFS keytab file. Example:

xxx/hdfsclient@xxx.xxx.
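A Kerberos principal generally has the form primary/instance@REALM, where the instance part is optional. The sketch below splits a principal into these parts so that you can check the value before entering it. The class and function names are hypothetical, and the sample principal is a placeholder.

```python
from typing import NamedTuple, Optional


class Principal(NamedTuple):
    primary: str
    instance: Optional[str]
    realm: str


def parse_principal(text: str) -> Principal:
    """Split 'primary/instance@REALM' into its parts."""
    name, sep, realm = text.rpartition("@")
    if not sep or not name or not realm:
        raise ValueError(f"not a valid principal: {text!r}")
    primary, _, instance = name.partition("/")
    # The instance is optional, for example 'hdfs@EXAMPLE.COM'.
    return Principal(primary, instance or None, realm)


print(parse_principal("hdfs/hdfsclient@EXAMPLE.COM"))
```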

Hive Configuration

JDBC URL: Enter the Hive JDBC connection URL. The following formats are supported:

Hive Server: jdbc:hive://host:port/dbname.

ZooKeeper: jdbc:hive2://zk01:2181,zk02:2181,zk03:2181/;serviceDiscoveryMode=zooKeeper;zooKeeperNamespace=hiveserver2.

Kerberos-enabled: jdbc:hive2://host:port/dbname;principal=hive/_HOST@xx.com.

Note: When the version is E-MapReduce 3.x, E-MapReduce 5.x, or Cloudera Data Platform, the JDBC URL does not support multiple IP addresses after Kerberos authentication is enabled.

Hive Keytab File: Upload the keytab authentication file. Get it from your Hive server.

Hive Principal: Enter the principal name for the Hive keytab file. Example:

xxx/hdfsclient@xxx.xxx.

Disable Kerberos

If your Hadoop cluster does not use Kerberos, complete these configurations.

JDBC URL: Enter the Hive JDBC connection URL. The following formats are supported:

Hive Server: jdbc:hive://host:port/dbname.

ZooKeeper: jdbc:hive2://zk01:2181,zk02:2181,zk03:2181/;serviceDiscoveryMode=zooKeeper;zooKeeperNamespace=hiveserver2.

Kerberos-enabled: jdbc:hive2://host:port/dbname;principal=hive/_HOST@xx.com.

Note: When the version is E-MapReduce 3.x, E-MapReduce 5.x, or Cloudera Data Platform, the JDBC URL does not support multiple IP addresses after Kerberos authentication is enabled.

Username and Password: Enter the username and password to log on to Hive.

Note: To ensure tasks run correctly, confirm the user has permission to access Hive data.

OSS-HDFS

Note: OSS-HDFS cluster storage is supported only for Alibaba Cloud EMR 5.x Hive 3.1.x.

Parameter

Description

Cluster Storage

Check your cluster storage type using one of these methods:

For a cluster that is not yet created: You can view the cluster storage type on the E-MapReduce 5.x Hadoop cluster creation page. The following figure shows the cluster storage type:

For an existing cluster: You can view the cluster storage type on the product page of the E-MapReduce 5.x Hadoop cluster. The following figure provides an example.

Cluster Storage Root Directory

Enter the cluster storage root directory. Get it from your E-MapReduce 5.x Hadoop cluster information. See the image below.

Important: If the path includes an endpoint, Dataphin uses that endpoint. If not, Dataphin uses the bucket-level endpoint from core-site.xml. If no bucket-level endpoint is configured, Dataphin uses the global endpoint from core-site.xml. For more information, see Alibaba Cloud OSS-HDFS Service (JindoFS) Endpoint Configuration.
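The endpoint priority described above (path, then bucket-level, then global) can be sketched as a small resolver. This is an illustration of the order only, not Dataphin's implementation. It assumes that the root directory looks like oss://bucket.endpoint/path when it embeds an endpoint, and that core-site.xml uses the property names fs.oss.bucket.<bucket>.endpoint and fs.oss.endpoint. Verify both assumptions against your cluster's configuration. The function name is hypothetical, and core-site.xml is represented as an already-parsed dictionary.

```python
from typing import Mapping, Optional
from urllib.parse import urlparse


def resolve_endpoint(root_dir: str,
                     core_site: Mapping[str, str]) -> Optional[str]:
    """Pick the endpoint following the priority order described above."""
    # netloc is "bucket.endpoint" when the path embeds an endpoint,
    # or just "bucket" when it does not (assumed path layout).
    netloc = urlparse(root_dir).netloc
    bucket, _, path_endpoint = netloc.partition(".")
    if path_endpoint:
        return path_endpoint                      # 1. endpoint in the path
    bucket_key = f"fs.oss.bucket.{bucket}.endpoint"  # assumed property name
    if bucket_key in core_site:
        return core_site[bucket_key]              # 2. bucket-level endpoint
    return core_site.get("fs.oss.endpoint")       # 3. global endpoint (assumed name)


conf = {"fs.oss.endpoint": "global.example.com"}
print(resolve_endpoint("oss://my-bucket/warehouse", conf))
```

With this sample configuration, no endpoint is embedded in the path and no bucket-level property exists, so the global value is returned.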

Configuration File

Upload the cluster configuration files core-site.xml and hive-metastore-site.xml. Export them from your Hadoop cluster.

AccessKey ID and AccessKey Secret

Enter the AccessKey ID and AccessKey Secret to access OSS in your cluster. Use an existing AccessKey or create a new one by following the Create an AccessKey guide.

Important: These settings take priority over AccessKeys in core-site.xml.

To reduce the risk of exposing your AccessKey, the AccessKey secret appears only once during creation and cannot be viewed later. Store it securely.

Enable Kerberos

Kerberos is an identity authentication protocol based on symmetric keys. It provides authentication for other services and supports SSO (single sign-on). After authenticating once, a client can access multiple services such as HBase and HDFS.

If your Hadoop cluster uses Kerberos, complete these configurations.

Kerberos Configuration Method

krb5.conf Configuration: Upload the krb5.conf file for Kerberos authentication.

Hive Configuration

JDBC URL: Enter the Hive JDBC connection URL. The following formats are supported:

Hive Server: jdbc:hive://host:port/dbname.

ZooKeeper: jdbc:hive2://zk01:2181,zk02:2181,zk03:2181/;serviceDiscoveryMode=zooKeeper;zooKeeperNamespace=hiveserver2.

Kerberos-enabled: jdbc:hive2://host:port/dbname;principal=hive/_HOST@xx.com.

Note: When the version is E-MapReduce 3.x, E-MapReduce 5.x, or Cloudera Data Platform, the JDBC URL does not support multiple IP addresses after Kerberos authentication is enabled.

Hive Keytab File: Upload the keytab authentication file. Get it from your Hive server.

Hive Principal: Enter the principal name for the Hive keytab file. Example:

xxx/hdfsclient@xxx.xxx.

Disable Kerberos

If your Hadoop cluster does not use Kerberos, complete these configurations.

JDBC URL: Enter the Hive JDBC connection URL. The following formats are supported:

Hive Server: jdbc:hive://host:port/dbname.

ZooKeeper: jdbc:hive2://zk01:2181,zk02:2181,zk03:2181/;serviceDiscoveryMode=zooKeeper;zooKeeperNamespace=hiveserver2.

Kerberos-enabled: jdbc:hive2://host:port/dbname;principal=hive/_HOST@xx.com.

Username and Password: Enter the username and password to log on to Hive.

Note: To ensure tasks run correctly, confirm the user has permission to access Hive data.

Spark Configuration

Note: Spark configuration is supported for Cloudera Data Platform 7.x Hive 3.1.3 with Hudi as the data lake table format.

Spark configuration is supported for Alibaba Cloud EMR 5.x Hive 3.1.x with Iceberg or Paimon as the data lake table format.

Parameter

Description

Spark

Select Enable or Disable. Default: enabled. If enabled, configure the parameters below.

Note: Spark cannot be disabled if Paimon is selected as the data lake table format.

Service Type

Select Kyuubi or Livy. Default: Kyuubi.

Spark JDBC URL

Enter the JDBC connection URL. Format: jdbc:hive2://{host}:{port}/{database name}.

Authentication Type

Select No Authentication, LDAP, or Kerberos.

Username and Password

Enter the authentication username and password. To ensure tasks run correctly, confirm the user has the required data permissions.

Note: Enter a username for No Authentication. Enter both username and password for LDAP.

Spark Keytab File

Upload the keytab authentication file. Get it from your Spark server.

Note: This setting is available only when Kerberos is selected as the authentication type.

Spark Principal

Enter the principal name for the Spark keytab file. Example: xxx/hadoopclient@xxx.xxx.

Note: This setting is available only when Kerberos is selected as the authentication type.
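The Spark settings required for each authentication type can be expressed as a simple checklist. The following is a minimal sketch of the rules in this table. The field keys (username, password, keytab_file, principal) and the function name are hypothetical and do not correspond to Dataphin API fields.

```python
from typing import Dict, List

# Settings that must be filled in for each authentication type,
# following the notes in the table above.
REQUIRED_FIELDS: Dict[str, List[str]] = {
    "No Authentication": ["username"],
    "LDAP": ["username", "password"],
    "Kerberos": ["keytab_file", "principal"],
}


def missing_fields(auth_type: str, config: Dict[str, str]) -> List[str]:
    """Return the required settings that are absent or empty."""
    return [name for name in REQUIRED_FIELDS[auth_type]
            if not config.get(name)]


print(missing_fields("LDAP", {"username": "etl_user"}))
```

In this example, the LDAP configuration lacks a password, so the function reports that one setting is still missing.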

Real-time Development

Note: Only CDH 6.x Hive 2.1.1, Cloudera Data Platform 7.x Hive 3.1.3, and AsiaInfo DP 5.x Hive 3.1.0 support real-time development. Configure this section only if you selected Real-time Development under Enable Modules.

Configuration File: Upload the hive-site.xml file. Flink SQL tasks ignore authentication information from integration and use authentication information from the Flink engine to access the Hive data source.

Amazon EMR

Parameter

Description

Master Node Public DNS

Get the VPC private DNS from the public DNS. Both Hive and Spark connect using the private DNS. Format: ec2-{public_ip}.{region}.compute.amazonaws.com.

Key File (*.pem)

The key pair to access the master node EC2 instance. This is the key pair you set when creating the EMR cluster.

Configuration File

Upload cluster configuration files such as core-site.xml, yarn-site.xml, hive-site.xml, and hdfs-site.xml. Or click Get Cluster Configuration (you must first enter the master node public DNS and upload the key file) to download them from the master node.

Database

Enter the database name for the Amazon EMR compute engine.

Engine Type

Select Hive or Spark. Default: Hive. If you select Hive, enter the Hive JDBC URL. If you select Spark, enter the Spark JDBC URL.

Hive JDBC URL: Enter the Hive JDBC connection URL or click Auto Retrieve (you must first enter the master node public DNS and upload the key file). Format: jdbc:hive2://host1:port1,host2:port2/. Do not include the database name.

Spark JDBC URL: Enter the Spark JDBC connection URL. Format: jdbc:hive2://{host}:{port}/{database name}.

Username

The username for Hive or Spark. This username is used as the JDBC username.

Cluster Storage

Only HDFS is supported.

Metadata Retrieval Method

Select HMS or Amazon Glue.

HMS: Default selection.

Amazon Glue: If you select Amazon Glue, configure the Glue Region Code, Glue AccessKey ID, and Glue AccessKey Secret.

Glue Region Code: Enter the Amazon Glue region code. Examples: ap-northeast-3, us-east-1, us-west-1.

Glue AccessKey ID and Glue AccessKey Secret: Enter the Amazon Glue AccessKey ID and AccessKey Secret.

Select a default resource group. This resource group runs tasks related to the current data source, including database SQL, offline full-database migration, and data preview.

Click Test Connection or OK to save and complete the Hive data source creation.

Click Test Connection to verify connectivity between the data source and Dataphin. If you click OK, Dataphin tests all selected clusters. Even if all tests fail, the data source is still created successfully.