This topic describes the process of generating a logical fact table by adding multiple attributes to a business activity. The resulting table includes primary keys, measures, and fact attribute fields. The primary key defines the data extraction logic from the source table, while measures are numeric fields that quantify various aspects of the data.

Limits

The Data Standard module requires you to set the Data Standard field in the table.

The Asset Security module is necessary to set the Data Classification and Data Categorization fields in the table.

Without the Asset Quality module, you cannot implement Unique and Not Null validation for primary key fields.

Prerequisites

You have created business activity objects. For more information, see Create and manage business entities.

Procedure

Step 1: Create a logical fact table

On the Dataphin homepage, click Development > Data Development in the top menu bar.

To access the Create Logical Fact Table dialog box, follow these steps:

Select Project (Dev-Prod mode requires selecting an environment) -> click Logical Fact Table -> click the

icon.

icon.

On the Create Logical Fact Table page, configure the parameters.

Parameter

Description

Business Activity

Select the business activity for which you want to create a logical fact table. Business activities are categorized into three types: Business Event, Business Snapshot, and Business Process.

Table Type

The logical table type is determined by the business activity type.

Business Activity selected as Business Event sets the Table Type to Event Logical Fact Table.

Business Activity selected as Business Snapshot sets the Table Type to Snapshot Logical Fact Table.

Business Activity selected as Business Process sets the Table Type to Process Logical Fact Table.

Data

By default, this is the name of the data block where the business activity is located and cannot be modified.

Data Domain

By default, this is the name of the subject area where the business activity is located and cannot be modified.

Computing Engine

Tenants with Dataphin instances set to Hadoop compute engine can select compute engines, including Hive, Impala, and Spark.

ImportantThe corresponding compute engine must be enabled before it can be selected. For more information, see create a Hadoop compute source.

The compute engines have the following limitations:

Hive: Cannot read source tables stored in Kudu format.

Impala: Can read source tables stored in Kudu format but does not support storing logical tables as Kudu. Not recommended if you do not have source tables in Kudu format.

Spark: Spark cannot read source tables stored in Kudu format.

Data Latency

Data latency defines the scheduling type of the subsequent logical fact table task in the production environment. The logical fact table supports Offline T+1 (daily task), Offline T+h (hourly task), and Offline T+m (minute task).

NoteArgoDB, StarRocks, SelectDB, and Doris compute engines only support offline T+1 (daily table).

Logical Table Name

Input the logical table name, ensuring it does not exceed 100 characters. Upon selecting the business object, the system autofills the logical table name according to the following pattern:

<data block name>.fct_<business object code>_<data latency>.ImportantOnly letters, numbers, and underscores (_) are permitted, with the first character being a letter. The system is case-insensitive and automatically converts uppercase letters to lowercase.

label_is a system-reserved prefix, and names cannot start withlabel_.For AnalyticDB for PostgreSQL, the table name must be within 50 characters.

Different data latencies have distinct naming formats for logical table names, with

<data latency>indicating different information:di: T+1 latency, daily incremental, stores the incremental data of the day every day.

hi: T+h latency, hourly incremental, stores the incremental data of the current hour every hour.

thi: T+h latency, hourly incremental, stores the incremental data from 0:00 to the current hour every hour.

mi: T+m latency, minute incremental, stores the incremental data of the last 15 minutes every 15 minutes.

tmi: T+m latency, minute incremental, stores the incremental data from 0:00 to the current minute every 15 minutes.

df/da: T+1 latency, daily full, stores the full data up to the current day every day.

hf: T+h latency, hourly full, stores the full data up to the current hour every hour.

mf: T+m latency, minute full, stores the full data up to the last 15 minutes every 15 minutes.

Chinese Name

The naming convention for custom names is:

May include Chinese characters, letters, numbers, underscores (_), or hyphens (-).

Cannot exceed 64 characters.

Description

Provide a brief description of the logical fact table, within a 1000-character limit.

Click OK.

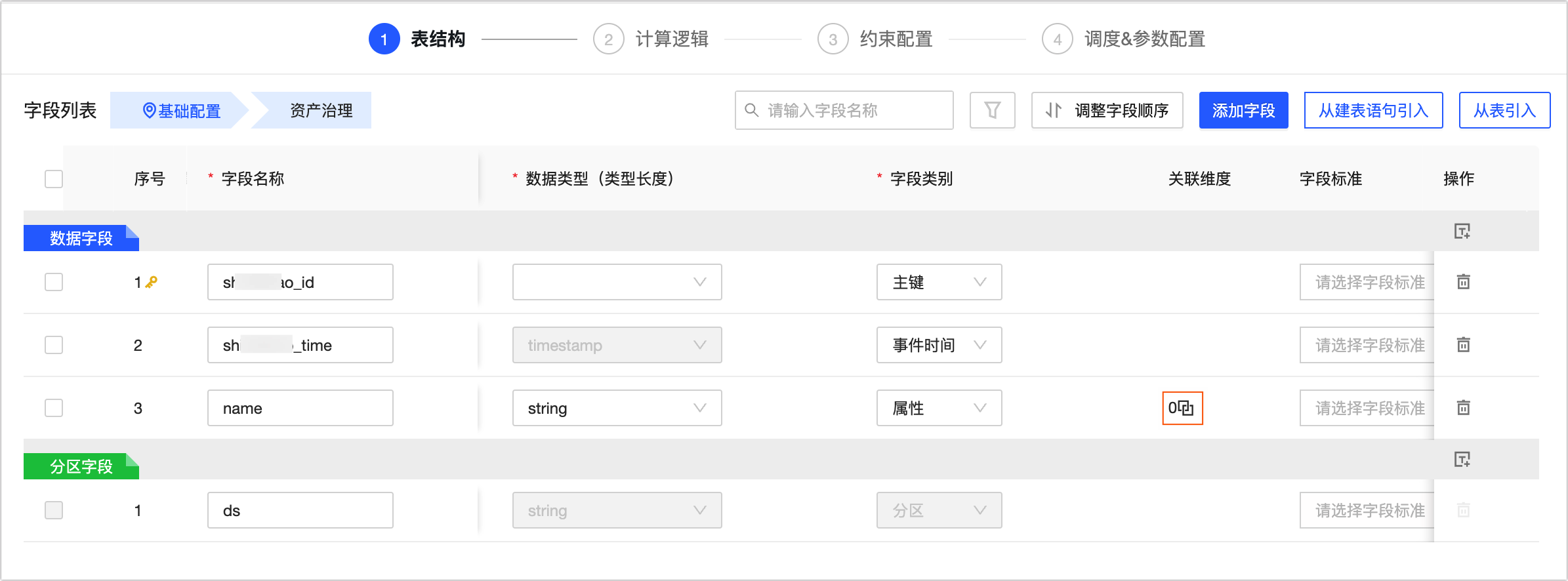

Step 2: Configure the structure of the logical fact table

On the Table Structure page, configure the parameters.

Area

Description

①Table Field Positioning

Click to locate the Basic Configuration or Asset Administration configuration in the field list.

①Search and Filter Area

You can search for fields by name within the table field list.

Click

to apply filters such as Data Type, Field Category, Whether There Is an Associated Dimension, Associated Dimension, Field Constraint, Data Classification conditions.

to apply filters such as Data Type, Field Category, Whether There Is an Associated Dimension, Associated Dimension, Field Constraint, Data Classification conditions.②List Operations

Reorder Columns: Before adjusting the field order, ensure that downstream references to this logical table do not use

select *to query this logical table to prevent data errors.Import Fields: This feature lets you add new fields to a table. Supports importing from Create Table Statement and Import From Table. For more information, see Import fields for a logical fact table.

Add Fields: Supports adding Data Fields and Partition Fields. You can edit the field's Name, Description, Data Type, Column Type, Associated Dimension, Recommend Standards, Field Constraints, Data Class, Data Sensitivity Level, and Remarks information based on business requirements.

NoteMaxCompute engine supports up to 6-level partition fields.

ArgoDB, StarRocks, SelectDB, and Doris compute engines do not support adding partition fields.

③Field List

The field list displays detailed information about the fields, including Serial Number, Column Name, Description, Data Type, Column Type, Associated Dimension, Recommend Standards, Field Constraints, Data Class, Data Sensitivity Level, and Remarks.

Serial Number: The serial number of the table field. Each new field increments by 1.

Field Name: The name of the table field. You can enter the field name or Chinese keywords, and the system will automatically match the standard preset field name.

Description: The description information of the table field, limited to 512 characters.

Data Type: Supports string, bigint, double, timestamp, decimal, Text, Numeric, Datetime Variable, and Other data types.

Column Type: Supports setting as Primary Key, Partition, Property.

NoteOnly one primary key field is allowed.

Only fields of type string, varchar, bigint, int, tinyint, smallinit are supported as partition fields.

Associated Dimension: For specific operations, see adding associated dimensions.

Recommend Standards: Select the field standard for the field. To create a standard, see create and manage data standards.

Field Constraints: Select the field constraints for the field. Supports Unique and Not Null constraints.

Data Class: Select the data classification for the field. To create a data classification, see create data classifications.

Data Sensitivity Level: After selecting the data classification, the system will automatically identify the data level.

Remarks: Enter remarks for the field, limited to 2048 characters.

You can also perform Delete operations on fields in the operation column.

NoteField deletions cannot be undone.

④Batch Operations

You can batch select table fields to perform the following operations.

Delete: Click the

icon to batch delete the selected data fields.

icon to batch delete the selected data fields.Data Type: Click the

icon to batch modify the data types of the selected fields.

icon to batch modify the data types of the selected fields.Column Type: Click the

icon to batch modify the column types of the selected fields.

icon to batch modify the column types of the selected fields.Recommend Names: Click the

icon. The system will tokenize the content in the field's description and match it with created roots to recommend field names. You can replace the names of selected fields with modified values in the root naming dialog box. The following figure shows an example:

icon. The system will tokenize the content in the field's description and match it with created roots to recommend field names. You can replace the names of selected fields with modified values in the root naming dialog box. The following figure shows an example: Note

NoteIf the recommended field names do not meet your requirements, you can modify them in the Modified Field Name input box.

Click Reset to reset the Modified Field Name to the system's matched root.

Recommend Standards: Click the

icon. The system will recommend field standards based on field names. You can set the fields to the recommended field standards in the field standards dialog box.

icon. The system will recommend field standards based on field names. You can set the fields to the recommended field standards in the field standards dialog box.Field Constraints: Click the

icon to batch set field constraints.Important

icon to batch set field constraints.ImportantSub-dimension logical tables do not support setting field constraints.

Click Save And Next.

Import fields for a logical fact table

Import from table

On the logical fact table configuration page, click Import From Table.

In the Import From Table dialog box, select the Source Table, and then choose the fields that you want to add.

Parameter

Description

Source Table

Supports selecting all physical tables (non-Dataphin automatically generated physical tables), logical tables, and views (non-parameterized views) with read permissions under the current tenant.

For information on how to obtain read permissions for physical tables, see apply for, renew, and return table permissions.

Field List

Select the fields you need to add.

NoteYou can select fields from multiple source tables by switching the source table.

Selected Fields

Added fields will be included with the selected fields. You can perform Delete operations on the selected fields.

Click Add to import fields from a physical table into the logical fact table.

After the fields are added to the New Fields area, you can edit their name, data type, field category, and associated dimensions as needed.

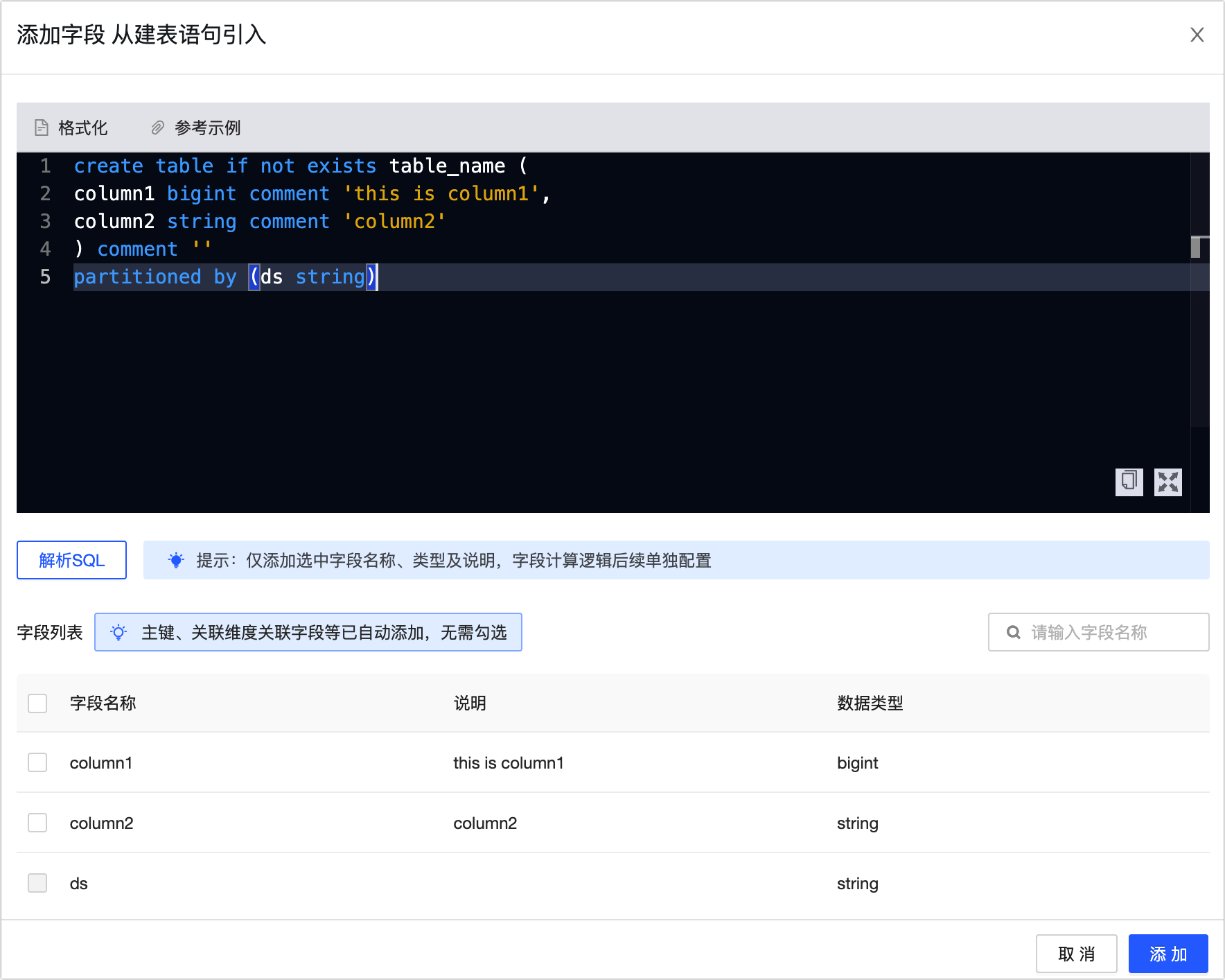

Import from create table statement

Depending on whether the logical fact table has a primary key, select the source table for the measure fields. If the logical fact table has a primary key, measure fields can only be selected from the main source table. If there is no primary key, measure fields can be selected from the main source table and other data tables.

On the configuration page of the logical fact table, click Import From Create Table Statement.

In the Import From Create Table Statement dialog box, enter the CREATE TABLE statement and click Parse SQL.

Select the fields you need from the field list and click Add to create new fields for the logical fact table.

Add associated dimensions

Associate foreign key fields in the fact attributes of the logical fact table with dimensions.

On the logical fact table's configuration page, click the

icon to open the edit model relationship dialog box.

icon to open the edit model relationship dialog box.

In the Edit Model Relationship dialog box, configure the parameters.

Area

Parameter

Description

Null Value Replacement

If the foreign key field of the logical fact table cannot be associated with the dimension table, the system will set the Default Value to -110.

Associated Dimension

Associate Entity, Logical Dimension Table

Select the Associated Entity and Logical Dimension Table you have created.

Edit Association Logic

Association Logic

Select fields in the logical fact table and configure the relationship between the logical fact table fields and the primary key in the dimension logical table that needs to be associated.

Dimension Table Version Policy

Select the version (partition) of the associated dimension table. By default, the same cycle partition version as the primary table is used.

Missing Association Policy

The missing association policy defines the calculation logic for fields that exist in the main source table (left table) but do not exist in the dimension logical table (right table), meaning fields that cannot be associated. You can choose Retain Original Missing Association Data and Use Default Value For Missing Association Data:

Retain Original Missing Association Data: When creating derived metrics, the original data from the left table is retained.

Use Default Value For Missing Association Data: If fields in the dimension table are not associated with the logical fact table, the default value -110 is used.

Edit Dimension Role

Role English Name, Role Name

Dimension roles serve as dimension aliases. When referencing the same dimension multiple times, unique role names must be defined, creating a role assumption effect. You need to define the Role English Name and Role Name:

The prefix of the Role English Name is by default

dim, and the naming convention for the custom part is:Contains letters, numbers, or underscores (_).

Cannot exceed 64 characters.

The naming convention for the Role Name:

Contains Chinese characters, numbers, letters, underscores (_), or hyphens (-).

Cannot exceed 64 characters.

Click OK.

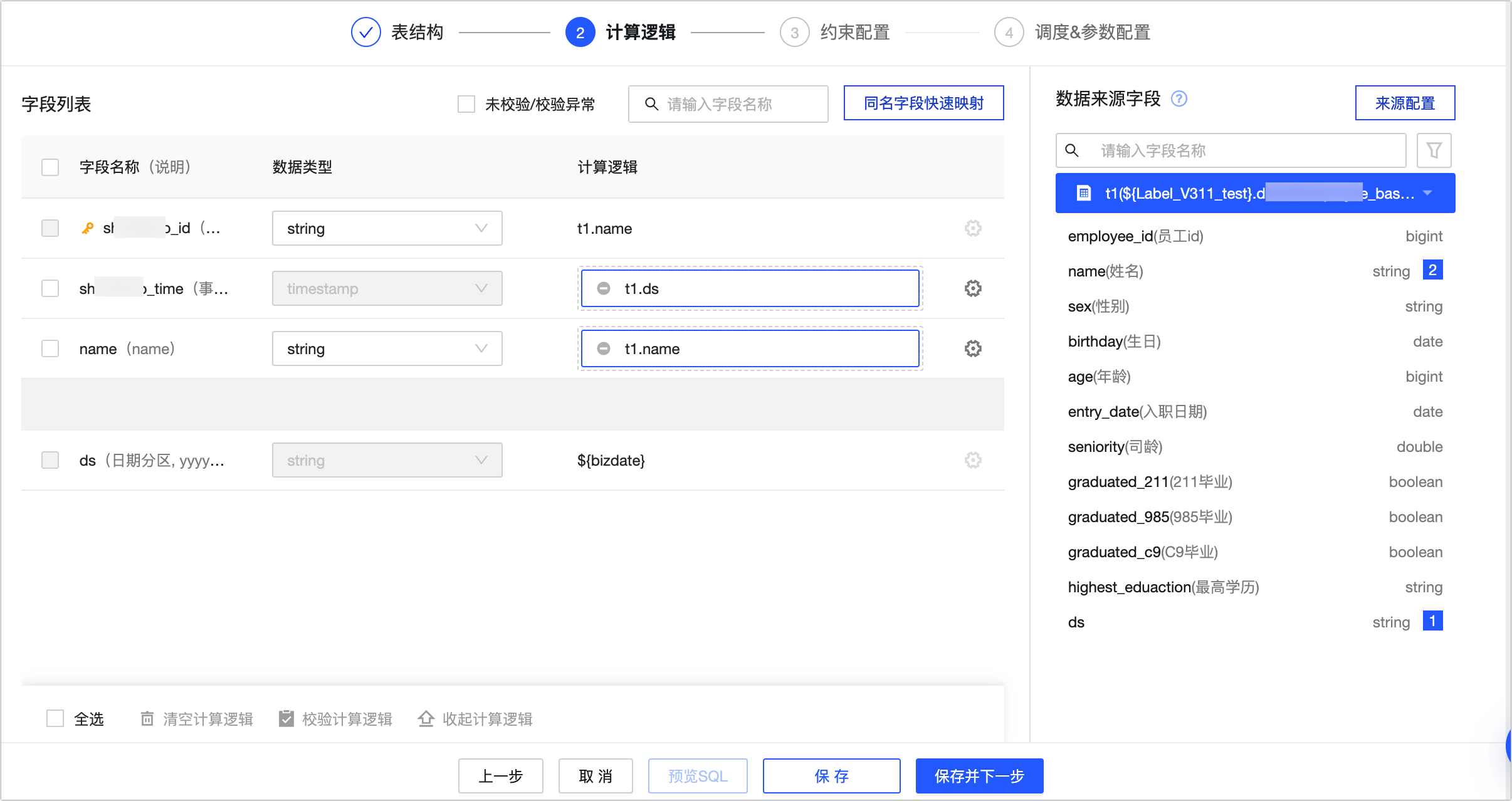

Step 3: Configure the computation logic of the logical fact table

On the Computation Logic page, configure the mapping between the source data and the primary key of the logical fact table.

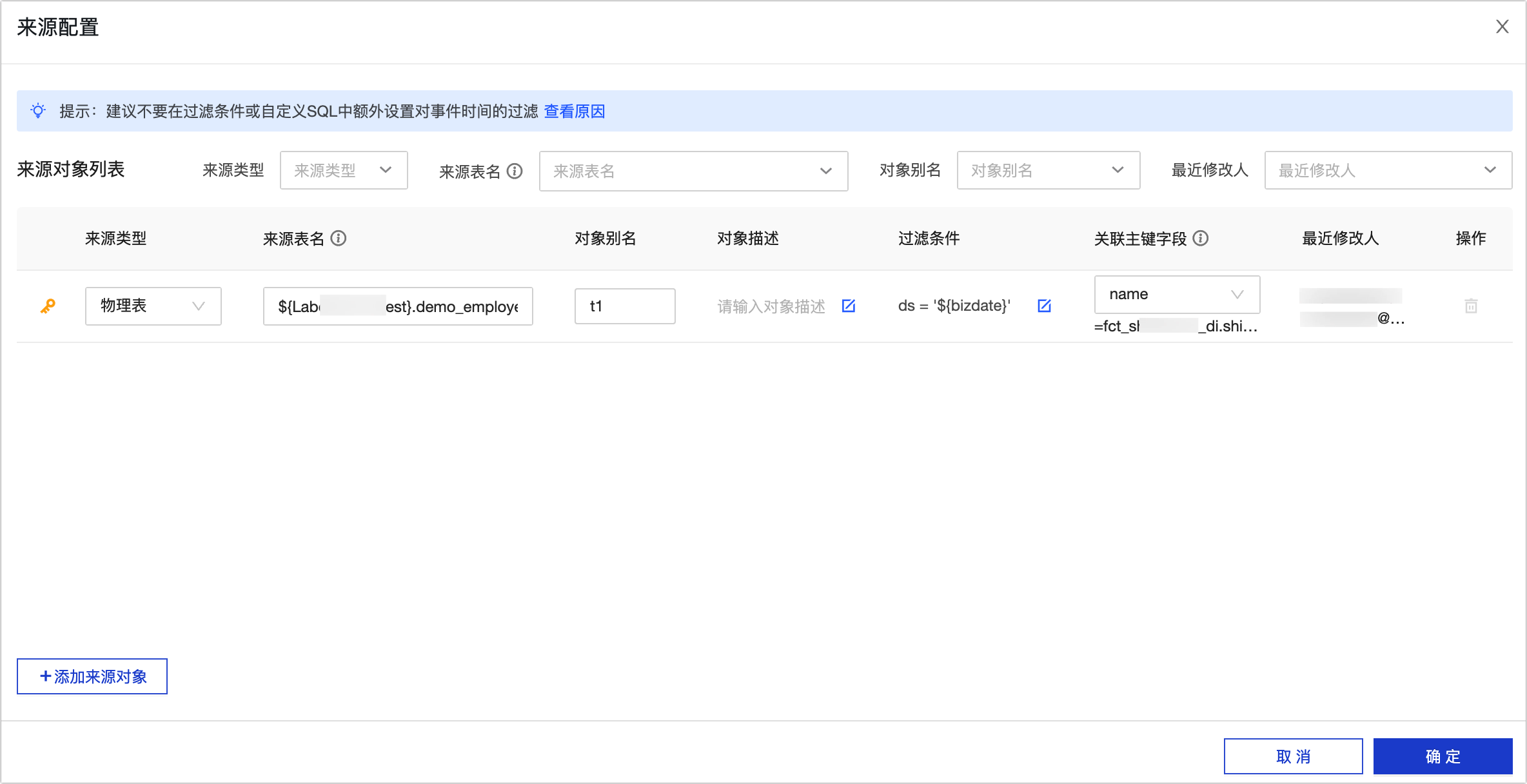

Click Source Configuration to open the Source Configuration dialog box, and in the Source Configuration dialog box, configure the source parameters.

Note

NoteAvoid setting additional filters for event time in filter conditions or custom SQL.

If the logical table lacks a primary key and has multiple source tables, use custom SQL to pre-associate the tables.

Parameter

Description

Source Type

Supports three source types: Physical Table, SQL, and Logical Table.

Source Table Type Description:

Primary Key Source Table: Logical tables with primary keys can configure multiple sources, with the first one being the main source. The total data volume (rows) in the logical table is determined by this source.

No Primary Key Source Table: Logical tables without primary keys can only configure one source. If there are multiple source tables, use custom SQL to pre-associate the tables.

NoteTo configure multiple source objects, you can click Add Source Object to add more.

Source Object

Select Physical Table: Choose from all physical tables (non-Dataphin generated) and physical views (non-parameterized) with read permissions under the current tenant.

For more information on how to obtain read permissions for physical tables, see apply for, renew, and return table permissions.

Select SQL: Click the

icon and enter the content in the editor, for example:

icon and enter the content in the editor, for example:select id, name from project_name_dev.table_name1 t1 join project_name2_dev.table_name2 t2 on t1.id = t2.idSelect Logical Table: Choose from all logical tables with read permissions under the current tenant.

For more information on how to obtain read permissions for physical tables, see apply for, renew, and return table permissions.

ImportantUsing a logical table as a data source for another logical table increases the complexity of the computation logic and the difficulty of operation and maintenance. Please choose carefully.

Object Alias

Customize the alias of the source table. For example: t1, t2.

Object Description

Enter the description information of the object, limited to 1000 characters.

Filter Condition

Click the

icon and enter the content in the editor, for example:

icon and enter the content in the editor, for example:ds=${bizdate} and condition1=value1.Custom SQL has no filter conditions.

Foreign Key Field

The field in the source object that corresponds to the primary key of the logical table and can be associated with the primary key through an equality relationship.

Delete

The main source does not support deletion.

For logical tables without primary keys, deleting the source will clear the computation logic of the fields.

Click OK to complete the source configuration.

After completing the source configuration, drag the source fields into the computation logic.

You can also click the Map By Name button to batch place the source fields into the computation logic of logical table fields with the same name.

Click the

icon to edit the computation logic expression in the editor (the expression does not support aggregate functions: sum, count, min, etc.), for example:

icon to edit the computation logic expression in the editor (the expression does not support aggregate functions: sum, count, min, etc.), for example:Example 1:

substr(t1.column2, 3, 10Example 2:

case when t1.column2 != '1' then 'Y' else 'N' endExample 3:

t1.column2 + t2.column1

Click the

icon at the bottom to validate the expression.

icon at the bottom to validate the expression.Click the Preview SQL button to view the computation logic SQL.

Click Save And Next.

Step 4: Configure constraints

Based on field constraints, the system creates quality rules for the current logical table in the quality module. You can set the rule strength for the fields, including strong rules and soft rules. For more information, see Data table quality rules.

Click Save And Next.

Step 5: Configure the scheduling configuration of the logical fact table

On the Scheduling & Parameter Configuration page, configure the Data Latency, Scheduling Properties, Scheduling Dependencies, and Scheduling Parameters and Runtime Configuration for the logical fact table.

Parameter

Description

Data latency

When data latency is enabled, the system will automatically reprocess all data within the Maximum Latency Days period for the specified logical table. For more information, see Configure data latency for logical tables.

Scheduling Properties

Used to define the scheduling method for the logical fact table in the production environment. Through scheduling properties, you can configure the scheduling type, scheduling cycle, scheduling logic, and execution of the logical fact table. For more information, see Configure scheduling properties for logical tables.

Upstream dependency

Used to define the node of the logical table in the scheduling task. Dataphin runs each node in the business flow in an orderly manner through the configuration results of the scheduling dependencies of each node, ensuring that business data is produced effectively and in a timely manner. For more information, see configure upstream dependencies for logical tables.

Parameter Configuration

Parameter configuration involves assigning values to variables within the code, enabling automatic substitution of these variables with their respective values during node scheduling. On the parameter configuration page for scheduling, you have the option to either Ignore or Convert To Global Variables. For further details, see configure parameters for logical tables.

Runtime Configuration

You can configure task-level runtime timeouts and rerun strategies for the logical fact table based on your business scenarios. This prevents resource waste from prolonged computing tasks while enhancing the reliability of task operations. For more information, see configure runtime for logical tables

Resource Configuration

You can configure the scheduling resource group for the current logical table task. The logical table task will occupy the resource quota of this resource group during scheduling. For configuration instructions, see Configure resources for logical tables.

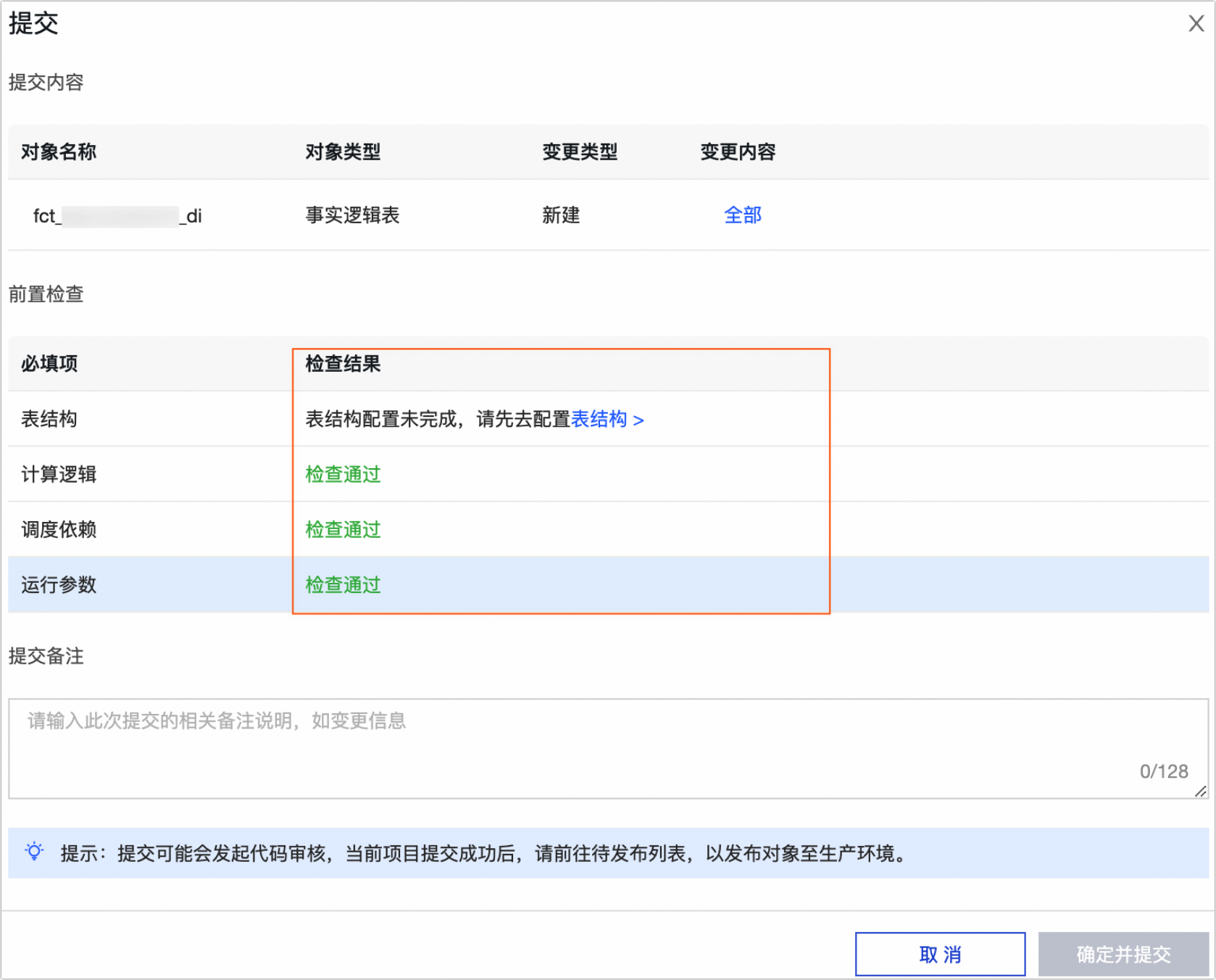

Step 6: Save and submit the logical table

After configuring the logical fact table, click Save and Submit. The system will validate the Table Structure, Computation Logic, Scheduling Dependencies, and Runtime Parameters. Review the configuration and address any issues indicated by the check results.

Once all checks pass, enter the submission remarks and click Confirm and Submit.

Upon submission, Dataphin performs lineage analysis and submission checks for the task. For more information, see Submission guidelines for standardized modeling tasks or .

What to do next

If your project is in Dev-Prod mode, you must publish the logical table to the production environment. For more information, see Manage publish tasks.

After the logical table is published to the production environment, you can view and maintain the logical table tasks in the Operation Center. For more information, see Operation Center.