This topic describes how to view the details of physical tables and fields when the compute engine is MaxCompute.

Accessing physical table details

On the Dataphin home page, click Administration > Asset Checklist in the top menu bar.

Click the Data Table tab, select the target physical table, and click the name of the physical table or the icon in the Actions column to open the object details page.

Physical table details

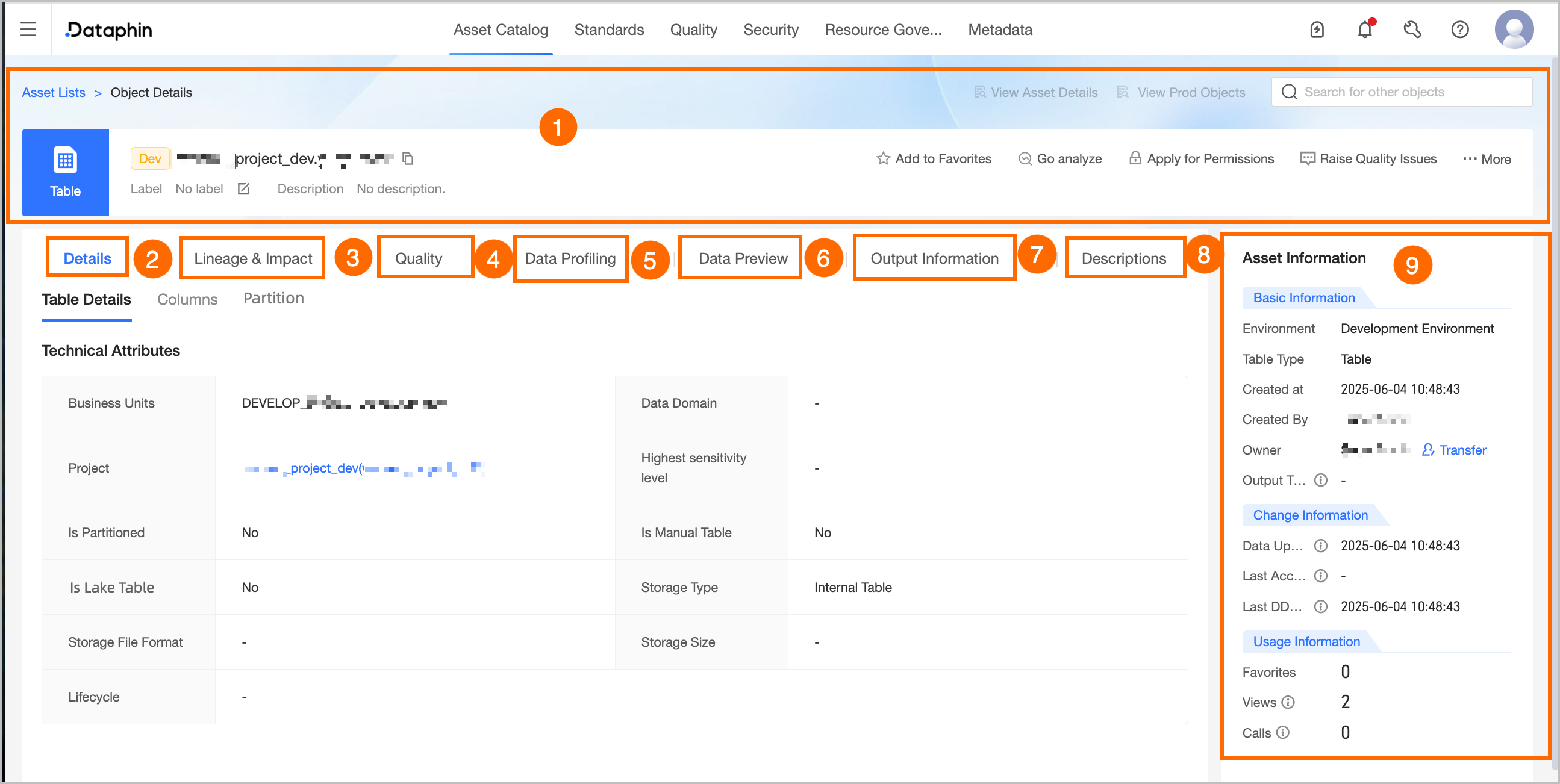

Area | Description |

① Summary information | Displays information about the table, such as its type, environment, name, tags, and description. You can also perform the following operations:

Note: Analysis platform tables do not support the Go Analysis, Request Permission, Feedback quality issues, Edit Table, View Transfer-out Records, or View Permission List operations. |

② Detail information | Displays information about the table, fields, and partitions. |

③ Lineage & impact | |

④ Quality overview | If you enable the Data Quality feature, an overview of rule validation and a list of quality monitoring rules for the current data table are displayed. Click View Report Details or View Rule Details to go to the corresponding page in the Data Quality module for more details. Note: You cannot view the quality overview for analysis platform tables. |

⑤ Data exploration | If you enable the Data Quality feature, you can configure data exploration tasks for the data table to quickly understand the data profile and assess data availability and potential threats in advance. If you want to enable automatic exploration, you can enable the corresponding configuration in Administration > Metadata Center > Exploration and Analysis. For more information, see Create a data exploration task. |

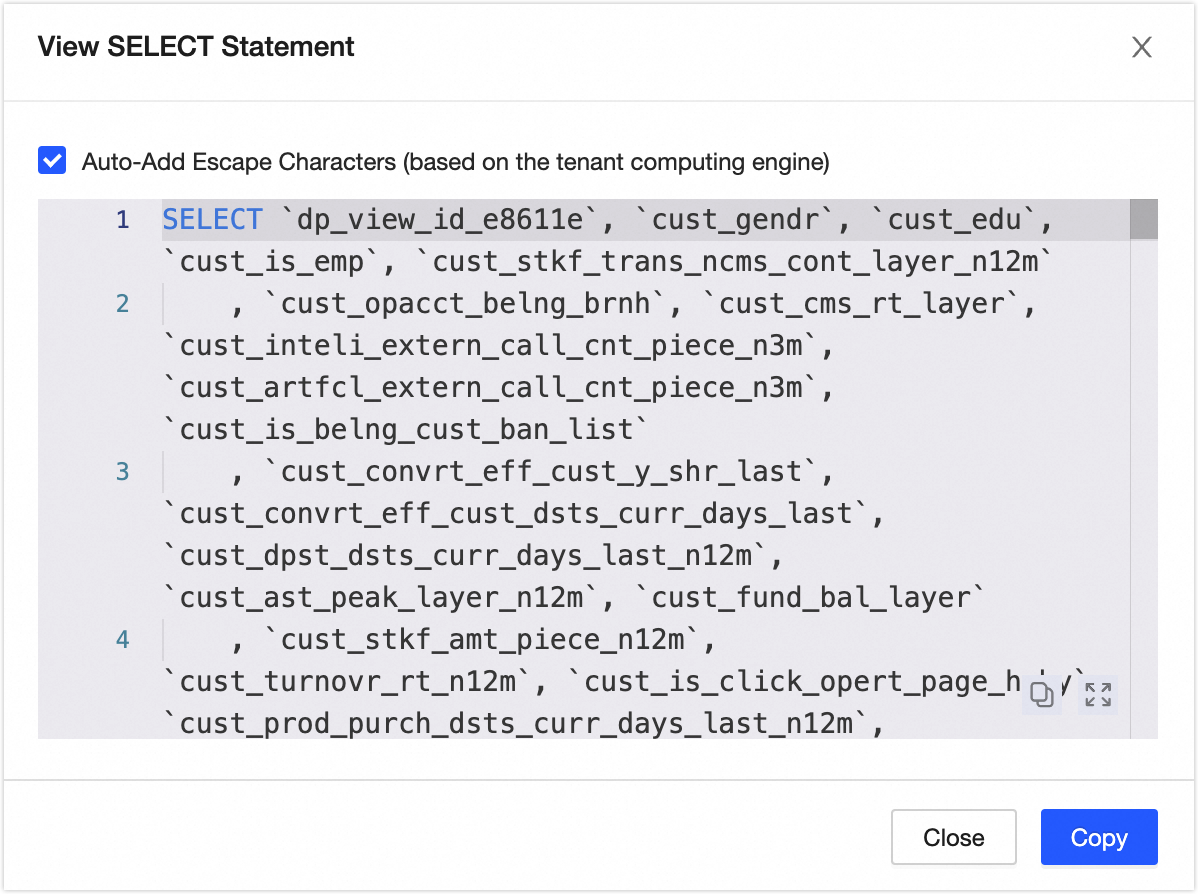

⑥ Data preview | If sample data exists for the data table, the sample data is displayed by default. You can also manually trigger a query to obtain the latest data. If no sample data exists, a data preview query is automatically triggered.

You can search or filter the data by field, view the details of a single row, adjust the column width, and transpose rows and columns. You can also click the sort icon next to a field to select No Sort, Ascending, or Descending. Double-click a field value to copy it. |

⑦ Output information | Output tasks include tasks that write data to the object, tasks whose automatically parsed or custom-configured lineage has the current table as the output table, and tasks whose output name is in the `Project name.Table name` format. The output task list is updated in near real time, and the output details are updated on a T+1 basis. |

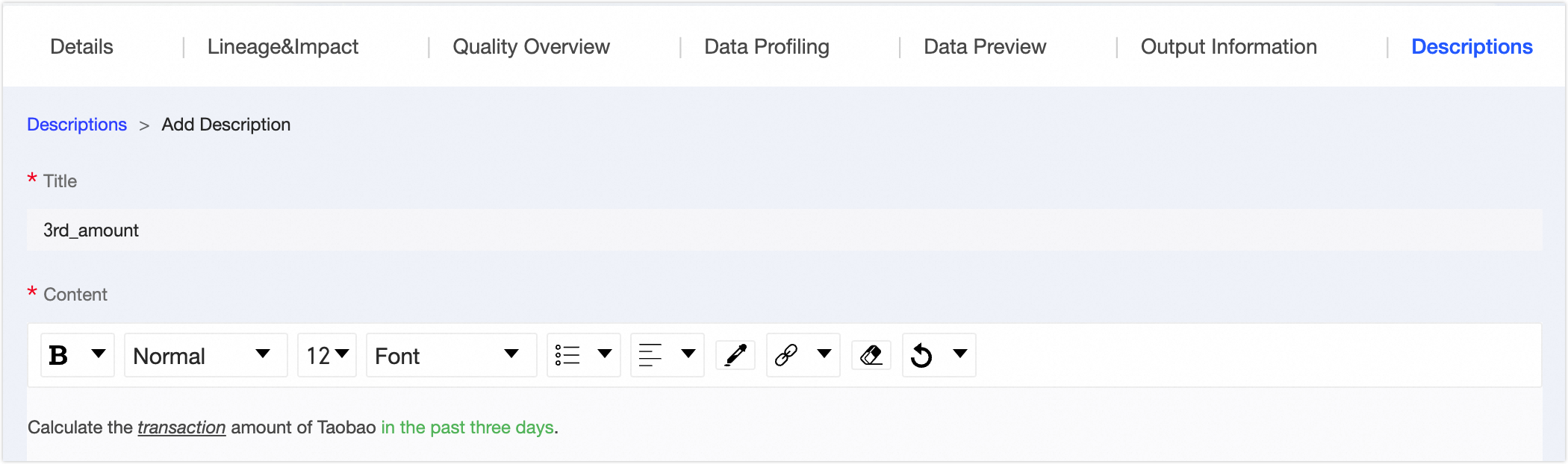

⑧ Usage instructions | You can add usage instructions for the data table to provide information for data viewers and consumers. Click Add Usage Instructions, and enter a title and content. |

⑨ Asset information | Displays detailed information about the physical table, such as Basic Information, Change Information, and Usage Information. |

Table-level lineage

The table-level lineage page displays a lineage graph. The graph includes lineage from sync tasks, SQL compute tasks, and logical table tasks that is automatically parsed by the system, custom lineage that is manually configured for compute tasks, and external lineage that is registered using OpenAPI.

Area | Description |

① Search and quick operation area | |

② Legend area | The data tables supported by table-level lineage include Physical Table, Logical Dimension Table, Logical Fact Table, Logical Summary Table, Logical Tag Table, View, Materialized View, Logical View, Meta Table, Mirror Table, and Datasource Table. |

③ Lineage graph display area | Displays the complete event chain diagram. You can manually expand multiple levels of upstream or downstream dependencies. You can perform a fuzzy search by data table name keyword. |

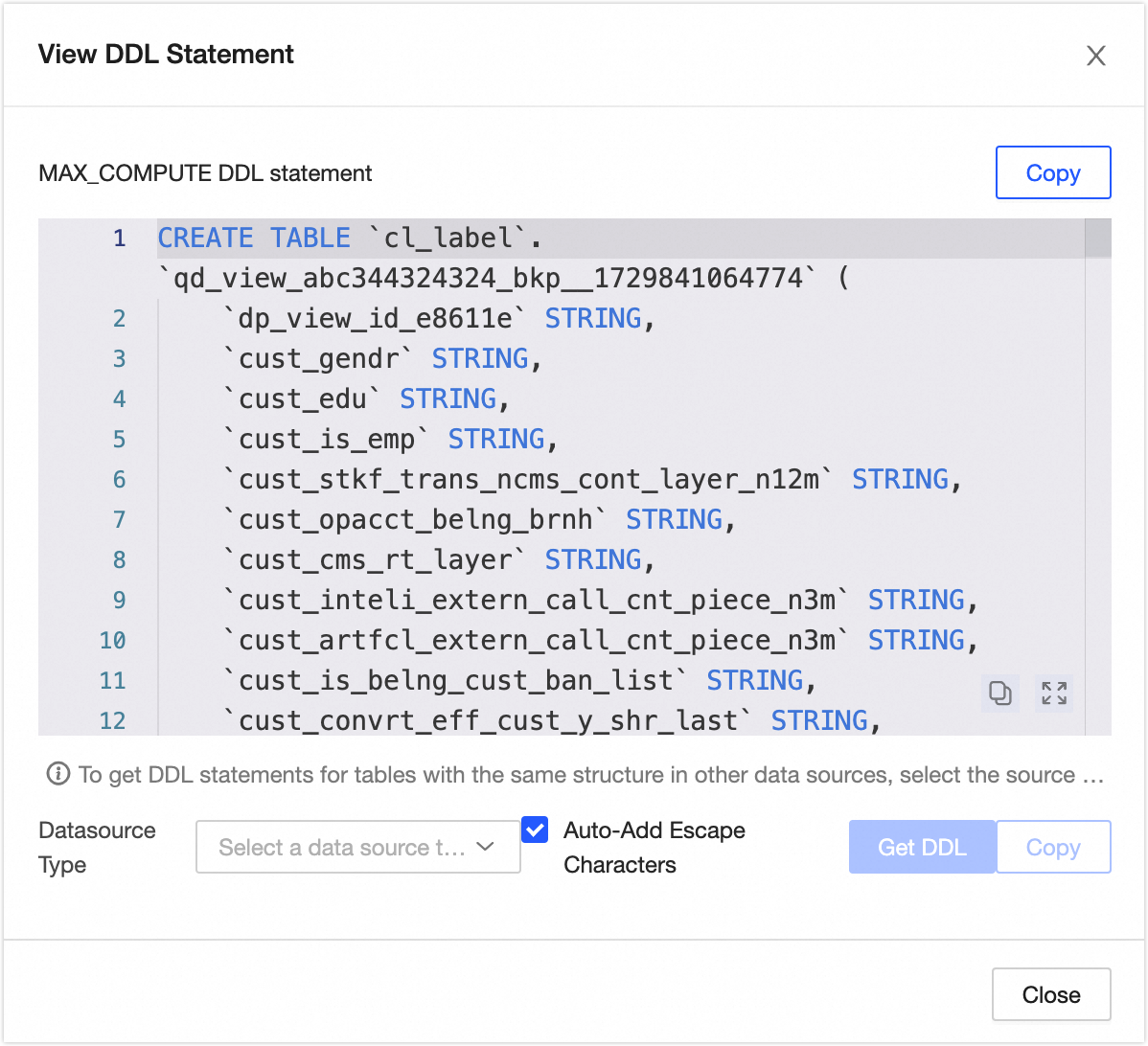

④ Object details area | Hover over a table to view its details. Data source table: Displays the table's Name, Object Type, Storage Format, Data Source, and Lineage Source. Dataphin data table: Displays the table's Name, File Format, Storage File Format, the Subject Area of logical tables and logical views or the Project of physical tables and physical views, Owner, Storage Size, Lifecycle (this information is not displayed for compute engines on Hadoop clusters), Description, and Lineage Source. You can also perform the View Lineage, View DDL, and Request Permission operations. |
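To make the idea of expanding multiple levels of upstream or downstream dependencies concrete, here is a minimal Python sketch. It assumes that lineage is available as a list of (source table, target table) pairs. The table names and the `expand_levels` helper are hypothetical illustrations and are not part of Dataphin or its OpenAPI.

```python
from collections import defaultdict


def expand_levels(edges, start, levels, direction="downstream"):
    """Return the tables reached from `start`, grouped by level.

    edges: iterable of (source_table, target_table) pairs (hypothetical data).
    direction: "downstream" follows source -> target, "upstream" the reverse.
    """
    neighbors = defaultdict(set)
    for source, target in edges:
        if direction == "downstream":
            neighbors[source].add(target)
        else:
            neighbors[target].add(source)

    seen = {start}
    frontier = [start]
    result = []
    for _ in range(levels):
        # Collect every not-yet-seen neighbor of the current level.
        next_frontier = sorted(
            {n for node in frontier for n in neighbors[node]} - seen
        )
        if not next_frontier:
            break
        result.append(next_frontier)
        seen.update(next_frontier)
        frontier = next_frontier
    return result


# Hypothetical lineage chain: ods -> dwd -> dws -> ads
EDGES = [
    ("proj.ods_orders", "proj.dwd_orders"),
    ("proj.dwd_orders", "proj.dws_orders_1d"),
    ("proj.dws_orders_1d", "proj.ads_sales_report"),
]

downstream = expand_levels(EDGES, "proj.dwd_orders", levels=2)
upstream = expand_levels(EDGES, "proj.dwd_orders", levels=2, direction="upstream")
```

Each inner list corresponds to one level of expansion from the central table, which mirrors expanding the graph one click at a time.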

Field-level lineage

The field lineage page displays a lineage graph. The graph includes lineage from sync tasks, SQL compute tasks, and logical table tasks that is automatically parsed by the system, custom lineage that is manually configured for compute tasks, and external lineage that is registered using OpenAPI.

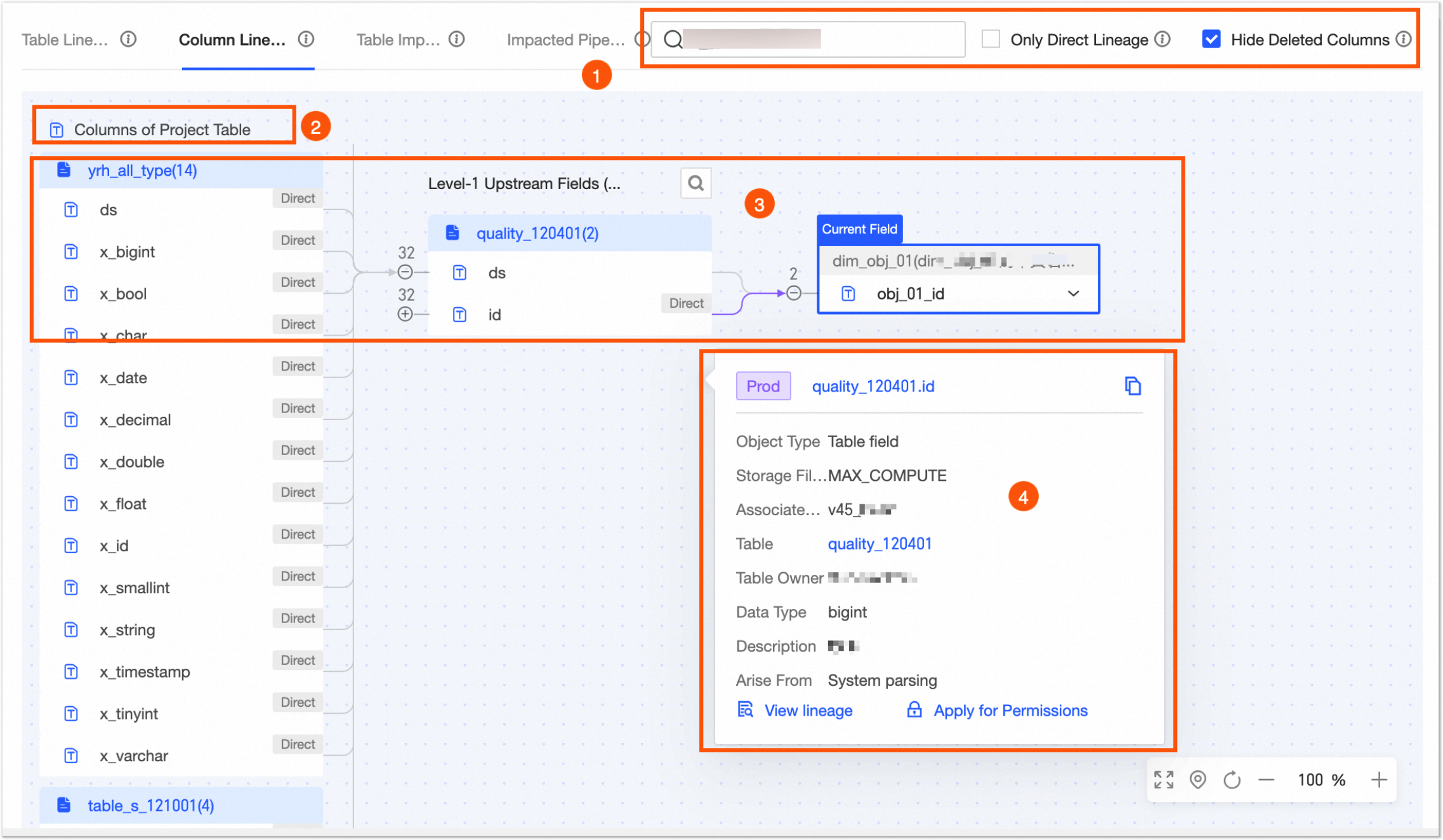

Area | Description |

① Search and quick operation area | |

② Legend area | The fields supported by field lineage include Compute Source Table Fields and Data Source Table Fields. |

③ Lineage graph display area | Displays the complete event chain diagram. You can manually expand multiple levels of upstream or downstream dependencies. You can perform a fuzzy search by field name keyword. If a circular dependency exists, you cannot expand it further. You must view the downstream dependencies from the starting node. Central node: Displays the current field and its table name, and is marked with Current Field in the upper-left corner. You can perform a fuzzy search by field keyword to switch to and view the lineage graphs of different fields. |

④ Object details area | Hover over a field to display its Name, Object Type, Storage Format, the Subject Area of the logical table or logical view or the Project of the physical table or physical view, the Table, the Table Owner, the Data Type, the Description, and the Lineage Source. You can also perform the View Lineage and Request Permission operations. |

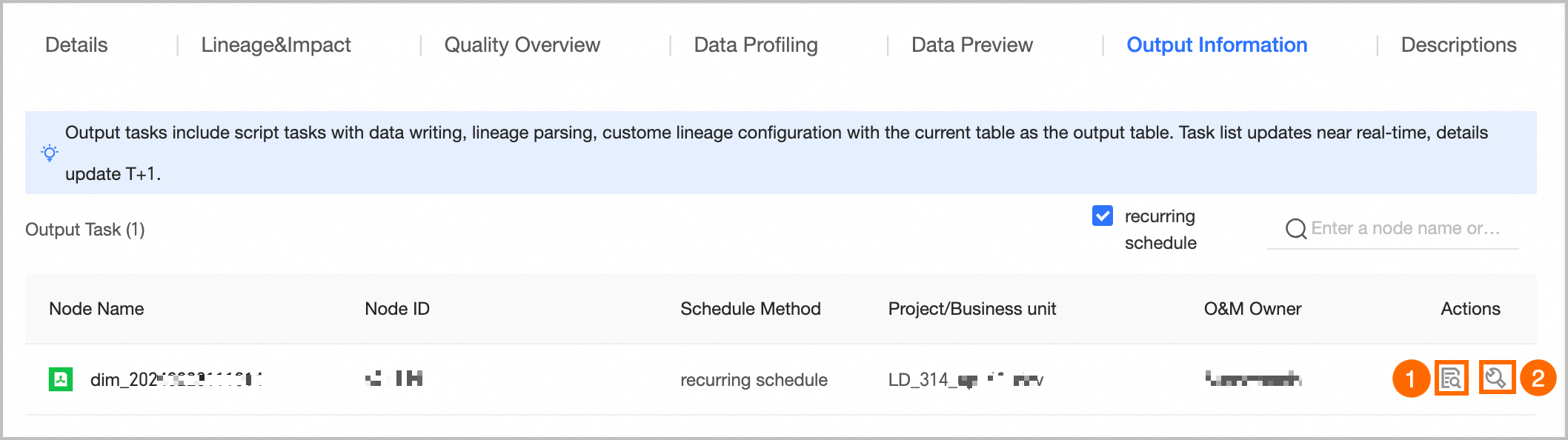
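The note that a circular dependency cannot be expanded further can be illustrated with a short Python sketch. It walks downstream from a starting field and records, rather than follows, any edge that points back to a field already on the current path. The field names and the `walk_downstream` helper are hypothetical, and the sketch does not claim to reproduce how Dataphin itself detects cycles.

```python
def walk_downstream(edges, start):
    """Depth-first walk that stops at circular dependencies.

    Returns (visited_fields_in_order, cycle_edges), where cycle_edges are
    the edges that point back to a field already on the current path.
    """
    downstream = {}
    for source, target in edges:
        downstream.setdefault(source, []).append(target)

    order, cycle_edges = [], []
    on_path = set()  # fields on the path from `start` to the current field
    done = set()     # fields whose downstream side is fully explored

    def visit(field):
        order.append(field)
        on_path.add(field)
        for nxt in downstream.get(field, []):
            if nxt in on_path:
                cycle_edges.append((field, nxt))  # circular: do not expand
            elif nxt not in done:
                visit(nxt)
        on_path.discard(field)
        done.add(field)

    visit(start)
    return order, cycle_edges


# Hypothetical field lineage with one loop back to the starting field.
FIELD_EDGES = [
    ("t_a.amount", "t_b.amount"),
    ("t_b.amount", "t_c.total"),
    ("t_c.total", "t_a.amount"),
    ("t_b.amount", "t_d.amount"),
]

order, cycle_edges = walk_downstream(FIELD_EDGES, "t_a.amount")
```

In this example the walk reaches all four fields once, and the edge from `t_c.total` back to `t_a.amount` is reported as circular instead of being expanded again.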

Output details

You can view the output tasks for the data table. These tasks include data write tasks for the object, tasks that are parsed or configured to use the current table as the output table, and tasks whose output name is in the `Project name.Table name` format.
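As an illustration of the `Project name.Table name` output name format, the following Python sketch splits an output name into its two parts and checks whether it refers to a given table. The helper names and sample values are hypothetical, and the case-insensitive comparison is an assumption of this sketch rather than documented Dataphin behavior.

```python
def split_output_name(output_name):
    """Split 'Project name.Table name' into (project, table).

    Splits on the first dot only and raises ValueError if a part is missing.
    """
    project, sep, table = output_name.partition(".")
    if not sep or not project or not table:
        raise ValueError(f"expected 'project.table', got {output_name!r}")
    return project, table


def matches_table(output_name, project, table):
    """Return True if the output name refers to the given project and table.

    Assumption: names are compared case-insensitively.
    """
    try:
        parsed_project, parsed_table = split_output_name(output_name)
    except ValueError:
        return False
    return (parsed_project.lower(), parsed_table.lower()) == (
        project.lower(),
        table.lower(),
    )


parts = split_output_name("sales_prj.dwd_orders")
```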

Area | Description |

① Task details | Displays the Node Name, Task ID, Subject Area, and Owner. |

② Recurring instance | Displays the Average Start Time, Average Output Time, and Average Running Duration. |

③ Running details | Displays the Data Timestamp, Status, Scheduled Time, Start Time, End Time, and Running Duration. You can also perform the View Instance and View Log operations in the Actions column. |

Field details

This section displays the details of the data table that contains the current field. For more information, see Physical table details.