Database Autonomy Service (DAS) can help enterprises save up to 90% of database administration costs and reduce O&M risks by 80%, so that they can focus on business innovation and growth. This topic uses examples from Double 11 to describe the five core autonomy features of DAS: 24/7 real-time anomaly detection, fault self-healing, automatic optimization, auto scaling, and intelligent stress testing.

24/7 real-time anomaly detection

DAS provides 24/7 real-time anomaly detection based on machine learning algorithms. Unlike traditional threshold-based alerting, which reports failures only after they occur, this feature detects database workload anomalies in real time. DAS collects data of various types, such as hundreds of database performance metrics and end-to-end SQL query logs, and processes and stores large amounts of this data both online and offline. DAS then applies machine learning and forecasting algorithms to this data to implement continuous model training, real-time model-based forecasting, and real-time anomaly detection and analysis. Compared with the traditional rule-based and threshold-based methods, the real-time anomaly detection feature has the following advantages:

Wide detection scope. In addition to various monitoring metrics, DAS monitors items such as SQL queries, logs, and locks.

Near-real-time detection. Anomalies are detected in near real time, whereas traditional methods report problems only after a failure has occurred.

AI-based, anomaly-driven detection. Traditional alerting is failure-driven and fires only after a failure occurs. DAS is driven by anomalies, which it identifies with AI models as they emerge.

Periodic anomaly identification, adaptation to different service characteristics, and forecasting.

The real-time anomaly detection feature can accurately and automatically identify common workload characteristics and anomalies, such as spikes (glitches), periodic characteristics, trend characteristics, and mean shifts. This feature detects anomalies by analyzing the characteristics of multiple time series. After an anomaly is detected, the feature triggers global diagnostics and root cause analysis, followed by failure recovery and optimization.
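To make the idea of spike and mean-shift detection concrete, the following minimal sketch uses plain rolling statistics. It is not the machine learning approach that DAS uses; the function names, window size, and thresholds are all assumptions chosen for illustration.

```python
from statistics import mean, stdev


def find_spikes(series, window=10, threshold=3.0):
    """Return indexes whose value deviates from the mean of the trailing
    window by more than `threshold` standard deviations (a rolling z-score).
    Hypothetical helper, not a DAS API."""
    spikes = []
    for i in range(window, len(series)):
        history = series[i - window:i]
        mu = mean(history)
        sigma = stdev(history)
        if sigma == 0:
            # Flat history: treat any change as a deviation.
            if series[i] != mu:
                spikes.append(i)
            continue
        if abs(series[i] - mu) / sigma > threshold:
            spikes.append(i)
    return spikes


def has_mean_shift(series, split, min_shift):
    """Report whether the average level after `split` differs from the
    average level before it by at least `min_shift`."""
    return abs(mean(series[split:]) - mean(series[:split])) >= min_shift


# Illustrative CPU utilization samples (percent) with one obvious spike.
cpu = [20, 21, 19, 20, 22, 20, 21, 19, 20, 21, 20, 95, 21, 20]
spike_indexes = find_spikes(cpu)

# Illustrative series whose level jumps from 10 to 30 halfway through.
shifted = has_mean_shift([10] * 5 + [30] * 5, split=5, min_shift=10)
```

A fixed z-score threshold like this cannot adapt to periodic workloads, which is one reason a model-based approach is described above.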

Automatic self-healing

The 24/7 real-time anomaly detection feature ensures that database instance exceptions are detected in real time. DAS automatically analyzes root causes. Then, DAS stops operations that may impair the system or repairs the system. This way, your database can be automatically restored. This reduces the impact on enterprise business.

At 12:31:00 on November 5, 2020, the number of active sessions and the CPU utilization of a database instance that was connected to DAS surged. At 12:33:00, the DAS anomaly detection center confirmed that the increase was caused by a database exception rather than transient jitter. This exception triggered automatic SQL throttling and root cause diagnostics. At 12:34:00, the diagnostics were complete and had identified two SQL statements that caused the exception. Automatic SQL throttling was immediately applied to these statements, and the number of active sessions started to decrease. After the problematic SQL statements that were already running finished executing, the number of active sessions and the CPU utilization quickly returned to normal values. The entire process meets the 1-5-10 requirement of the self-healing capability: anomalies are detected within 1 minute, located within 5 minutes, and handled within 10 minutes.
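The 1-5-10 requirement can be expressed as a simple check over incident timestamps. The sketch below is only an illustration: the function name is hypothetical, it assumes that all three limits are measured from the moment the anomaly starts, and the sample timestamps are made-up values rather than measurements from the incident above.

```python
from datetime import datetime, timedelta


def meets_1_5_10(started, detected, located, handled):
    """Check the 1-5-10 target, assuming every limit is measured from
    the anomaly start time (an assumption, not a documented definition)."""
    return (
        detected - started <= timedelta(minutes=1)
        and located - started <= timedelta(minutes=5)
        and handled - started <= timedelta(minutes=10)
    )


# Hypothetical incident timeline.
started = datetime(2020, 11, 5, 12, 31, 0)
ok = meets_1_5_10(
    started,
    detected=started + timedelta(seconds=50),
    located=started + timedelta(minutes=3),
    handled=started + timedelta(minutes=7),
)
too_slow = meets_1_5_10(
    started,
    detected=started + timedelta(seconds=50),
    located=started + timedelta(minutes=3),
    handled=started + timedelta(minutes=14),
)
```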

External automatic SQL optimization

In most cases, approximately 80% of database issues can be solved by SQL optimization. However, SQL optimization is a complex process that requires expert knowledge of and experience with databases. It is also time-consuming because the workloads that execute the SQL statements are constantly changing. Together, these factors make SQL optimization a demanding task that requires high expertise and incurs high costs.

DAS continuously performs SQL review and optimization for databases based on global workloads and actual business scenarios. DAS works like a professional database administrator (DBA) to manage your databases around the clock. This makes SQL optimization effective. Compared with the traditional methods, the SQL diagnostics capability of DAS has the following advantages:

SQL diagnostics uses an external cost-based model to provide indexing and statement rewriting recommendations, identify performance bottlenecks, and make specification recommendations. This avoids the problems of the traditional rule-based methods, which can produce inappropriate recommendations and cannot quantify the expected performance improvement.

SQL diagnostics is backed by a wide range of scenarios: a formalized signature database used for testing, automatic feedback extraction from online use cases, and diverse scenarios within Alibaba Group.

SQL optimization is implemented based on data generated from global workloads, such as the execution frequency and read/write ratio of SQL statements. This avoids the problems that arise when optimization is based on only a small sample of the workload.
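One way to picture workload-based prioritization is to rank SQL templates by their total cost across the whole workload instead of by their individual latency. The following sketch is an assumption-laden illustration: the `SqlStats` type, its fields, and the cost formula (executions multiplied by average response time) are hypothetical and do not describe the cost model that DAS uses.

```python
from dataclasses import dataclass


@dataclass
class SqlStats:
    """Hypothetical per-template statistics gathered from the workload."""
    template: str
    executions: int
    avg_rt_ms: float


def rank_by_total_cost(stats):
    """Sort templates so that the largest total time consumer comes first.
    Total cost is approximated as executions * average response time."""
    return sorted(stats, key=lambda s: s.executions * s.avg_rt_ms, reverse=True)


workload = [
    SqlStats("SELECT ... FROM report WHERE ...", executions=10, avg_rt_ms=900.0),
    SqlStats("SELECT ... FROM orders WHERE user_id = ?", executions=50_000, avg_rt_ms=5.0),
]
ranked = rank_by_total_cost(workload)
```

In this made-up workload, the frequent 5 ms statement outranks the rare 900 ms statement because it consumes far more total time, which a review of a few slow queries in isolation would miss.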

The following example shows a use case of automatic SQL optimization during Double 11. On November 7, the load anomaly detection feature of DAS detected a load exception caused by slow SQL statements. This exception automatically triggered a closed-loop SQL optimization process. After the optimized SQL statements were published, DAS tracked the result for 24 consecutive hours to evaluate the optimization, which was successful. Before the optimization, the average number of scanned rows for the SQL statements was 148,889.198 and the average response time (RT) was 505.561 milliseconds. After the optimization, the average number of scanned rows was 12.132, which is approximately one ten-thousandth of the value before the optimization. The average RT decreased to 0.471 milliseconds, which is approximately one thousandth of the average RT before the optimization.

Average RT and the number of scanned rows before the automatic SQL optimization

Average RT and the number of scanned rows after the automatic SQL optimization
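The improvement factors quoted in the example can be checked with a few lines of arithmetic. The variable names below are illustrative; the four numbers are taken from the example.

```python
# Values reported in the Double 11 example.
rows_before = 148_889.198
rows_after = 12.132
rt_before_ms = 505.561
rt_after_ms = 0.471

# How many times smaller each metric became after the optimization.
rows_ratio = rows_before / rows_after
rt_ratio = rt_before_ms / rt_after_ms

print(f"Scanned rows reduced by a factor of about {rows_ratio:,.0f}")
print(f"Average RT reduced by a factor of about {rt_ratio:,.0f}")
```

The scanned-row ratio is on the order of ten thousand and the RT ratio is on the order of one thousand, which matches the "one ten-thousandth" and "one thousandth" descriptions.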

Auto scaling

Alibaba Cloud databases provide diverse computing specifications and storage capacities. When your business workloads change, auto scaling can be enabled for your databases. For cloud-native applications, databases automatically determine the optimal specifications based on the changes in your business workloads, so that the minimum amount of resources is used to meet database capacity requirements. The time series forecasting feature of DAS uses AI to automatically calculate and forecast the business model and capacity requirements of a database. This allows auto scaling to meet your business requirements in real time.

The auto scaling feature of DAS implements a closed data loop. The loop consists of the following modules: performance data collection, the decision center, algorithm modeling, specification recommendation, management and execution, and task tracking and evaluation.

The module for performance data collection collects real-time performance data of instances, such as various performance metrics, specification configuration, and information about active sessions on instances.

The decision center module derives a global trend from instance sessions and performance data, so that DAS can choose an autonomy action based on the root cause. For example, DAS can enable SQL throttling to relieve insufficient computing resources, and it enables auto scaling if the trend shows a surge in business traffic.

Algorithm modeling is the core of the DAS auto scaling feature. The algorithms detect business workload exceptions and recommend capacity specifications for database instances. This addresses difficulties such as choosing when to scale, how to scale, and which computing specifications to use.

The module for specification recommendation and verification makes specification recommendations and checks whether the recommended specifications are suitable for the deployment types and running environments of database instances. This module also checks whether the recommended specifications are available in the current region. This ensures that the recommended specifications can be used on the client.

The management and execution module uses the generated recommended specifications to distribute and execute tasks.

The task tracking and evaluation module measures and tracks the changes in database instance performance before and after the specifications are changed.
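The decision and specification-verification steps described above can be sketched roughly as follows. Everything in this block is hypothetical: the action names, the thresholds, the idea of attributing CPU usage to a single dominant SQL statement, and the representation of a specification as a `(cpu_cores, memory_gb)` tuple are assumptions for illustration, not the DAS algorithms.

```python
from enum import Enum


class Action(Enum):
    NONE = "none"
    THROTTLE_SQL = "throttle_sql"
    SCALE = "scale"


def decide(cpu_util, traffic_growth, top_sql_cpu_share,
           cpu_high=0.8, growth_high=0.5, sql_share_high=0.6):
    """Pick an action from simple signals (all thresholds are assumed).

    cpu_util and top_sql_cpu_share are fractions in [0, 1];
    traffic_growth is the relative increase in traffic (0.5 = +50%).
    """
    if cpu_util < cpu_high:
        return Action.NONE
    # CPU is high but traffic is flat and one statement dominates:
    # throttling that statement is assumed to be the better response.
    if top_sql_cpu_share >= sql_share_high and traffic_growth < growth_high:
        return Action.THROTTLE_SQL
    return Action.SCALE


def pick_spec(min_cores, available_specs):
    """Return the smallest (cpu_cores, memory_gb) spec that is available
    in the region and has at least `min_cores`, or None if none fits."""
    candidates = [spec for spec in available_specs if spec[0] >= min_cores]
    return min(candidates) if candidates else None


region_specs = [(4, 8), (8, 16), (16, 32)]
action = decide(cpu_util=0.92, traffic_growth=0.8, top_sql_cpu_share=0.2)
spec = pick_spec(6, region_specs)
```

Checking the recommendation against the list of specifications that are actually available mirrors the verification step: a recommendation that cannot be provisioned is of no use.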

In one case, the business traffic of a PolarDB instance that was connected to DAS increased steadily, and the CPU utilization of the instance climbed to a high level. DAS detected the instance exception by using the auto scaling algorithm and automatically added two read-only nodes to the instance, after which the CPU utilization dropped. After the CPU utilization remained low for two hours, the instance traffic continued to increase and the auto scaling feature was triggered again. This time, it upgraded the instance specifications from 4 CPU cores and 8 GB of memory to 8 CPU cores and 16 GB of memory. The instance then ran as expected for more than 10 hours, which ensured its availability during peak hours.

Intelligent stress testing

The intelligent stress testing feature of DAS allows you to evaluate the required database specifications and capacity before you deploy your service in the cloud or before business promotions. The auto scaling feature helps you automatically scale your resources based on the specified performance threshold of your database or the built-in intelligent policies of DAS. This reduces the effort required for specification evaluation and management.

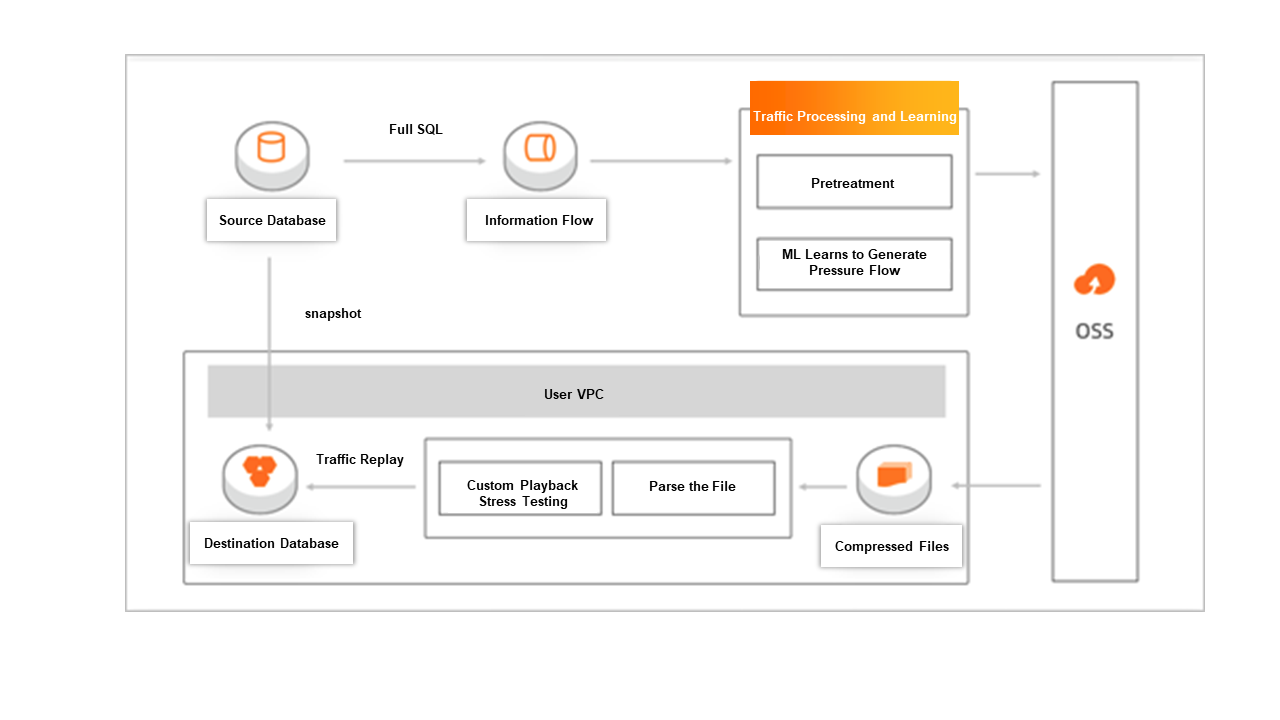

Most traditional stress testing solutions use existing stress testing tools and benchmarks such as Sysbench and TPC-C. One of the major problems of these solutions is that the SQL statements they use differ significantly from those in actual business scenarios. As a result, the stress testing results cannot show the actual performance and stability of your business. The intelligent stress testing feature of DAS is implemented based on actual business workloads. Therefore, the stress testing results reflect the performance and stability changes of the database instances for different workloads. For this purpose, the intelligent stress testing feature must overcome the following challenges:

Long-term stress testing, such as 24/7 stress testing for evaluating business stability, must be possible even when a large number of SQL statements cannot be collected, because SQL statement collection takes time and incurs storage costs. When only some SQL statements are provided, DAS needs to generate SQL statements that match your business.

Traffic must be played back by using concurrent threads, and DAS must ensure that the concurrency is consistent with that of the actual business. DAS also needs to provide playback rate options, such as 2x and 10x, and peak stress testing. The rate 2x specifies that the playback rate is two times the normal rate. The rate 10x specifies that the playback rate is 10 times the normal rate.
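The playback rate option can be illustrated by rescaling the time gaps between captured statements. This is a minimal sketch under stated assumptions: the function name and the representation of captured traffic as a list of timestamps in seconds are hypothetical, and the sketch does not address how concurrency is preserved across threads.

```python
def replay_offsets(capture_times, rate=1.0):
    """Convert capture timestamps (seconds) into replay offsets from the
    start of the playback. A rate of 2.0 halves every gap between
    statements, so the same traffic is replayed twice as fast."""
    if rate <= 0:
        raise ValueError("rate must be positive")
    if not capture_times:
        return []
    start = capture_times[0]
    return [(t - start) / rate for t in capture_times]


# Three statements captured at t = 100 s, 102 s, and 110 s.
captured = [100.0, 102.0, 110.0]
normal = replay_offsets(captured)            # original pacing
double = replay_offsets(captured, rate=2.0)  # 2x playback
```

At 2x, the statement that originally arrived 10 seconds after the first one is replayed after 5 seconds, so the same workload is compressed into half the time.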

DAS automatically learns the business model to generate actual business workloads that fall within the stress testing period. DAS also provides diverse stress testing scenarios to help you overcome challenges in promotions and database selection.