DataHub is a real-time data distribution platform that is designed to process streaming data. You can publish and subscribe to streaming data in DataHub and distribute the data to other platforms. DataHub allows you to analyze streaming data and build applications based on streaming data. This topic describes how to synchronize data from a PolarDB for MySQL cluster to a DataHub instance by using Data Transmission Service (DTS). After you synchronize data to DataHub, you can use big data services such as Realtime Compute for Apache Flink to analyze data in real time.

Prerequisites

The DataHub instance resides in the China (Hangzhou), China (Shanghai), China (Beijing), or China (Shenzhen) region.

A DataHub project is created to receive the synchronized data. For more information, see Create a project.

The binary logging feature is enabled for the PolarDB for MySQL cluster. For more information, see Enable binary logging.

The tables to be synchronized from the PolarDB for MySQL cluster have PRIMARY KEY or UNIQUE constraints.
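The key requirement above can be checked before you configure the task. The following is a minimal sketch, assuming you have already fetched rows from the standard MySQL `information_schema.TABLE_CONSTRAINTS` view with a client of your choice. The helper name `tables_missing_keys` and the sample table names are hypothetical and are not part of DTS.

```python
from typing import Iterable, List, Set, Tuple

# Query you could run against the source cluster to collect the input rows.
# TABLE_CONSTRAINTS is a standard MySQL information_schema view; pass your
# own database name as the parameter.
CONSTRAINT_QUERY = """
SELECT TABLE_NAME, CONSTRAINT_TYPE
FROM information_schema.TABLE_CONSTRAINTS
WHERE TABLE_SCHEMA = %s
"""

# Constraint types that satisfy the prerequisite.
KEY_TYPES = {"PRIMARY KEY", "UNIQUE"}


def tables_missing_keys(
    tables: Iterable[str],
    constraint_rows: Iterable[Tuple[str, str]],
) -> List[str]:
    """Return the tables that have neither a PRIMARY KEY nor a UNIQUE constraint."""
    keyed: Set[str] = {
        name for name, ctype in constraint_rows if ctype.upper() in KEY_TYPES
    }
    return sorted(t for t in tables if t not in keyed)


if __name__ == "__main__":
    # Hypothetical rows in the shape returned by CONSTRAINT_QUERY.
    rows = [
        ("customer", "PRIMARY KEY"),
        ("orders", "UNIQUE"),
        ("audit_log", "FOREIGN KEY"),
    ]
    print(tables_missing_keys(["customer", "orders", "audit_log", "staging"], rows))
```

Tables reported by the helper would need a PRIMARY KEY or UNIQUE constraint before you select them as objects to be synchronized.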

Limits

Initial full data synchronization is not supported. DTS does not synchronize historical data of the required objects from the source PolarDB cluster to the destination DataHub instance.

You can select only tables as the objects to be synchronized.

After a data synchronization task is started, DTS does not synchronize columns that are created in the source PolarDB cluster to the destination DataHub instance.

We recommend that you do not perform data definition language (DDL) operations on the required objects during data synchronization. Otherwise, data synchronization may fail.

SQL operations that can be synchronized

Operation type | SQL statement |

DML | INSERT, UPDATE, and DELETE |

DDL | ADD COLUMN |

Procedure

Purchase a data synchronization instance. For more information, see Purchase a DTS instance.

Note: On the buy page, set Source Instance to PolarDB, set Destination Instance to DataHub, and set Synchronization Topology to One-way Synchronization.

Log on to the DTS console.

Note: If you are redirected to the Data Management (DMS) console, you can click the icon to go to the previous version of the DTS console.

In the left-side navigation pane, click Data Synchronization.

In the upper part of the Synchronization Tasks page, select the region in which the destination instance resides.

Find the data synchronization instance and click Configure Task in the Actions column.

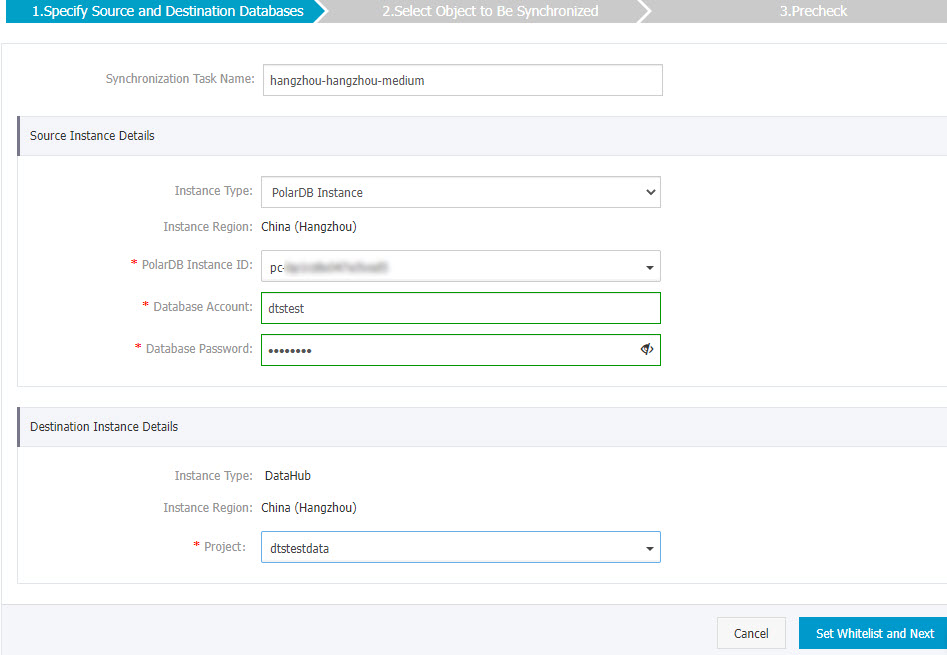

Configure the source and destination instances.

Section | Parameter | Description |

N/A | Synchronization Task Name | DTS automatically generates a task name. We recommend that you specify an informative name to identify the task. The task name does not need to be unique. |

Source Instance Details | Instance Type | The value of this parameter is set to PolarDB Instance and cannot be changed. |

Source Instance Details | Instance Region | The source region that you selected on the buy page. You cannot change the value of this parameter. |

Source Instance Details | PolarDB Instance ID | Select the ID of the source PolarDB cluster. |

Source Instance Details | Database Account | Enter the database account of the source PolarDB cluster. |

Source Instance Details | Database Password | Enter the password of the database account. |

Destination Instance Details | Instance Type | The value of this parameter is set to DataHub and cannot be changed. |

Destination Instance Details | Instance Region | The destination region that you selected on the buy page. You cannot change the value of this parameter. |

Destination Instance Details | Project | Select the name of the DataHub project. |

In the lower-right corner of the page, click Set Whitelist and Next.

If the source or destination database is an Alibaba Cloud database instance, such as an ApsaraDB RDS for MySQL or ApsaraDB for MongoDB instance, DTS automatically adds the CIDR blocks of DTS servers to the IP address whitelist of the instance. If the source or destination database is a self-managed database hosted on an Elastic Compute Service (ECS) instance, DTS automatically adds the CIDR blocks of DTS servers to the security group rules of the ECS instance, and you must make sure that the ECS instance can access the database. If the self-managed database is hosted on multiple ECS instances, you must manually add the CIDR blocks of DTS servers to the security group rules of each ECS instance. If the source or destination database is a self-managed database that is deployed in a data center or provided by a third-party cloud service provider, you must manually add the CIDR blocks of DTS servers to the IP address whitelist of the database to allow DTS to access the database. For more information, see Add the CIDR blocks of DTS servers.

Warning: If the CIDR blocks of DTS servers are automatically or manually added to the whitelist of the database or instance, or to the ECS security group rules, security risks may arise. Therefore, before you use DTS to synchronize data, you must understand and acknowledge the potential risks and take preventive measures, including but not limited to the following measures: enhancing the security of your username and password, limiting the ports that are exposed, authenticating API calls, regularly checking the whitelist or ECS security group rules and forbidding unauthorized CIDR blocks, or connecting the database to DTS by using Express Connect, VPN Gateway, or Smart Access Gateway.
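The regular whitelist check mentioned in the warning can be partly automated. The following is a minimal sketch that uses the Python standard library `ipaddress` module to flag whitelist entries that fall outside a set of expected CIDR blocks. The function name `unexpected_entries` is hypothetical, and the CIDR blocks in the example are reserved documentation ranges used as placeholders, not the actual CIDR blocks of DTS servers.

```python
import ipaddress
from typing import Iterable, List


def unexpected_entries(whitelist: Iterable[str], allowed_cidrs: Iterable[str]) -> List[str]:
    """Return whitelist entries that are not fully contained in any allowed CIDR block."""
    allowed = [ipaddress.ip_network(cidr, strict=False) for cidr in allowed_cidrs]
    flagged = []
    for entry in whitelist:
        # A bare IP address is treated as a single-host network (/32 or /128).
        net = ipaddress.ip_network(entry, strict=False)
        contained = any(
            net.version == block.version and net.subnet_of(block) for block in allowed
        )
        if not contained:
            flagged.append(entry)
    return flagged


if __name__ == "__main__":
    # Placeholder ranges (reserved for documentation), NOT real DTS CIDR blocks.
    expected = ["192.0.2.0/24", "198.51.100.0/24"]
    current = ["192.0.2.10", "198.51.100.0/25", "203.0.113.7", "0.0.0.0/0"]
    print(unexpected_entries(current, expected))
```

Entries that the helper returns are candidates for review. Whether an entry is actually unauthorized depends on your own access requirements.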

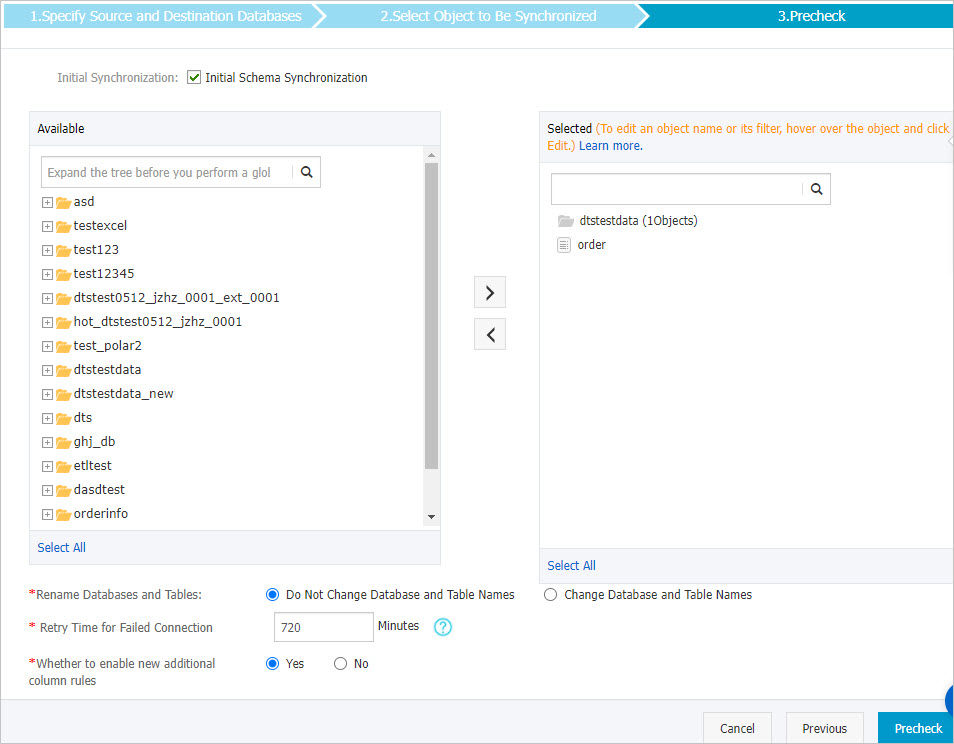

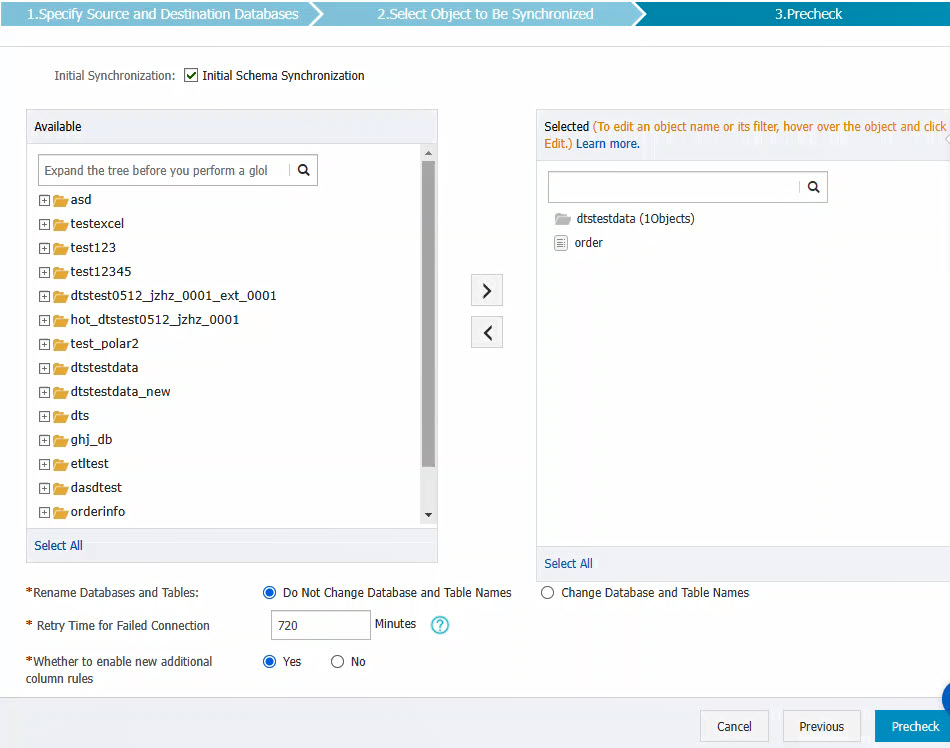

Select the synchronization policy and the objects to be synchronized.

Parameter | Description |

Initial Synchronization | Select Initial Schema Synchronization. Note: After you select Initial Schema Synchronization, DTS synchronizes the schemas of the required objects (such as tables) to the destination DataHub project. |

Select the objects to be synchronized | Select one or more objects in the Available section and click the icon to move the objects to the Selected section. Note: You can select only tables as the objects to be synchronized. By default, after an object is synchronized to the destination database, the name of the object remains unchanged. You can use the object name mapping feature to change the names of the objects that are synchronized to the destination instance. For more information, see Rename an object to be synchronized. |

Enable new naming rules for additional columns | After DTS synchronizes data to the destination DataHub project, DTS adds additional columns to the destination topic. If the names of additional columns are the same as those of existing columns in the destination topic, the data synchronization fails. Select Yes or No to specify whether to enable the new naming rules for additional columns. Warning: Before you configure this parameter, check whether the names of the additional columns are the same as those of existing columns in the destination topic. Otherwise, the data synchronization task may fail or data may be lost. For more information, see the Naming rules for additional columns section of the Modify the naming rules for additional columns topic. |

Rename Databases and Tables | You can use the object name mapping feature to rename the objects that are synchronized to the destination instance. For more information, see Object name mapping. |

Replicate Temporary Tables When DMS Performs DDL Operations | If you use DMS to perform online DDL operations on the source database, you can specify whether to synchronize temporary tables generated by online DDL operations. Yes: DTS synchronizes the data of temporary tables generated by online DDL operations. Note: If online DDL operations generate a large amount of data, the data synchronization task may be delayed. No: DTS does not synchronize the data of temporary tables generated by online DDL operations. Only the original DDL data of the source database is synchronized. Note: If you select No, the tables in the destination database may be locked. |

Retry Time for Failed Connections | By default, if DTS fails to connect to the source or destination database, DTS retries within the next 720 minutes (12 hours). You can specify the retry time based on your needs. If DTS reconnects to the source and destination databases within the specified time, DTS resumes the data synchronization task. Otherwise, the data synchronization task fails. Note: When DTS retries a connection, you are charged for the DTS instance. We recommend that you specify the retry time based on your business needs. You can also release the DTS instance at your earliest opportunity after the source and destination instances are released. |

Optional. In the Selected section, move the pointer over the name of a table to be synchronized and click Edit. In the dialog box that appears, configure the shard key used to partition the table.

In the lower-right corner of the page, click Precheck.

Note: DTS performs a precheck before the data synchronization task starts. You can start the data synchronization task only after the task passes the precheck.

If the task fails to pass the precheck, you can click the icon next to each failed item to view details. After you troubleshoot the issues based on the details, initiate a new precheck.

If you do not need to troubleshoot the issues, ignore the failed items and initiate a new precheck.

Close the Precheck dialog box after the following message is displayed: Precheck Passed. Then, the data synchronization task starts.

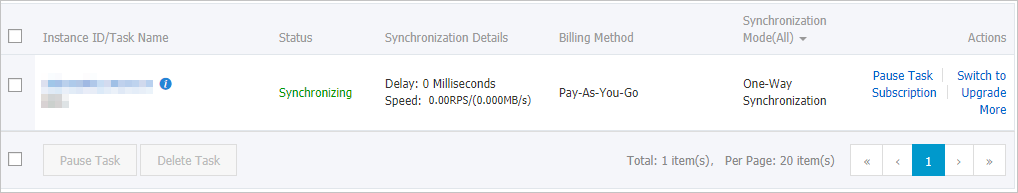

Wait until initial synchronization is complete and the data synchronization task enters the Synchronizing state.

You can view the status of the data synchronization task on the Synchronization Tasks page.

Schema of a DataHub topic

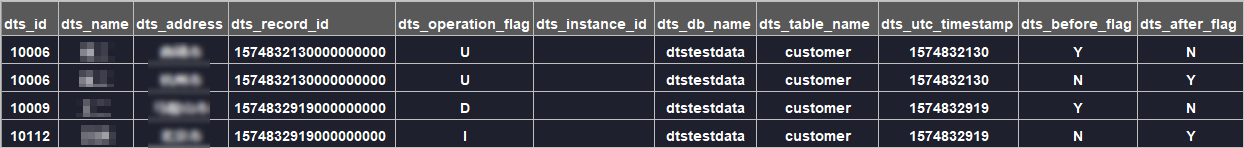

When DTS synchronizes incremental data to a DataHub topic, DTS adds additional columns to store metadata in the topic. The following figure shows the schema of a DataHub topic.

In this example, id, name, and address are data fields. DTS adds the dts_ prefix to data fields, including the original data fields that are synchronized from the source database to the destination database, because the previous version of naming rules for additional columns is used. If you use the new naming rules for additional columns, DTS does not add prefixes to the original data fields that are synchronized from the source database to the destination database.

The following table describes the additional columns in the DataHub topic.

Previous additional column name | New additional column name | Type | Description |

dts_record_id | | String | The unique ID of the incremental log entry. |

dts_operation_flag | | String | The operation type. |

| | String | The server ID of the database. |

| | String | The database name. |

| | String | The table name. |

dts_utc_timestamp | | String | The operation timestamp displayed in UTC. It is also the timestamp of the log file. |

dts_before_flag | | String | Indicates whether the column values are pre-update values. Valid values: Y and N. |

dts_after_flag | | String | Indicates whether the column values are post-update values. Valid values: Y and N. |
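Because a name clash between additional columns and existing topic columns causes the synchronization to fail, it can help to compare the two sets of names before you start the task. The following is a minimal sketch. The helper name `conflicting_columns` is hypothetical, the list contains only the additional column names that this topic mentions by name (it is not exhaustive), and the case-insensitive comparison is an assumption chosen to err on the side of caution.

```python
from typing import Iterable, List

# Additional column names mentioned in this topic (previous naming rules).
# Not exhaustive: extend the tuple with the names that apply to your task.
ADDITIONAL_COLUMNS = (
    "dts_record_id",
    "dts_operation_flag",
    "dts_utc_timestamp",
    "dts_before_flag",
    "dts_after_flag",
)


def conflicting_columns(
    topic_fields: Iterable[str],
    additional: Iterable[str] = ADDITIONAL_COLUMNS,
) -> List[str]:
    """Return topic field names that clash with additional column names.

    Names are compared case-insensitively (an assumption, to be conservative).
    """
    reserved = {name.lower() for name in additional}
    return sorted(field for field in topic_fields if field.lower() in reserved)


if __name__ == "__main__":
    # Hypothetical field names of an existing destination topic.
    print(conflicting_columns(["id", "name", "address", "dts_record_id"]))
```

An empty result means that none of the listed names clash. Any returned name should be resolved before you configure the Enable new naming rules for additional columns parameter.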

Additional information about the dts_before_flag and dts_after_flag fields

The values of the dts_before_flag and dts_after_flag fields in an incremental log entry vary based on operation types:

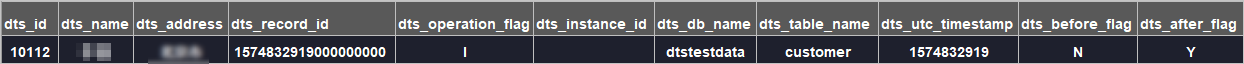

INSERT

For an INSERT operation, the column values are the newly inserted record values (post-update values). The value of the dts_before_flag field is N, and the value of the dts_after_flag field is Y.

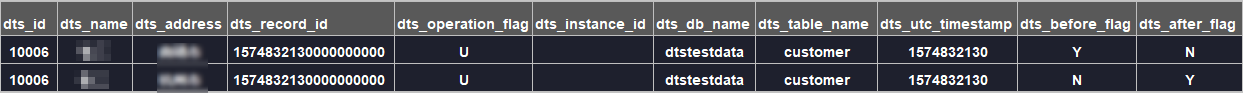

UPDATE

DTS generates two incremental log entries for an UPDATE operation. The two incremental log entries have the same values for the dts_record_id, dts_operation_flag, and dts_utc_timestamp fields.

The first log entry records the pre-update values. Therefore, the value of the dts_before_flag field is Y, and the value of the dts_after_flag field is N. The second log entry records the post-update values. Therefore, the value of the dts_before_flag field is N, and the value of the dts_after_flag field is Y.

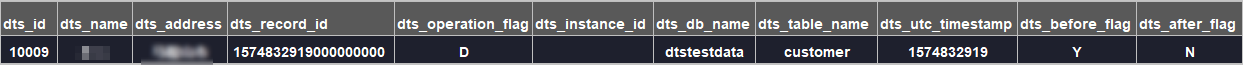

DELETE

For a DELETE operation, the column values are the deleted record values (pre-update values). The value of the dts_before_flag field is Y, and the value of the dts_after_flag field is N.
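The flag rules above can be applied on the consumer side to rebuild one change per operation. The following is a minimal sketch, assuming that the log entries have already been read from the topic into Python dictionaries keyed by field name, and that entries of different operations never share a dts_record_id. The function names and the sample records (including the dts_id and dts_name data fields, which follow the previous naming rules) are hypothetical. The sketch relies only on the two flags and does not interpret dts_operation_flag.

```python
from typing import Any, Dict, Iterable, List, Optional

Record = Dict[str, Any]
Change = Dict[str, Optional[Record]]


def image_kind(record: Record) -> str:
    """Classify a log entry as a 'before' or 'after' image based on its flags."""
    before = record["dts_before_flag"] == "Y"
    after = record["dts_after_flag"] == "Y"
    if before and not after:
        return "before"
    if after and not before:
        return "after"
    raise ValueError(f"unexpected flag combination in record {record['dts_record_id']}")


def collapse_changes(records: Iterable[Record]) -> List[Change]:
    """Group entries by dts_record_id into {'before': ..., 'after': ...} changes."""
    changes: Dict[Any, Change] = {}
    for rec in records:
        change = changes.setdefault(rec["dts_record_id"], {"before": None, "after": None})
        change[image_kind(rec)] = rec
    return list(changes.values())  # dicts keep insertion order


def change_type(change: Change) -> str:
    """Infer the operation from which images are present."""
    if change["before"] is None:
        return "INSERT"
    if change["after"] is None:
        return "DELETE"
    return "UPDATE"


if __name__ == "__main__":
    sample = [
        {"dts_record_id": "1", "dts_before_flag": "N", "dts_after_flag": "Y", "dts_id": "1", "dts_name": "a"},
        {"dts_record_id": "2", "dts_before_flag": "Y", "dts_after_flag": "N", "dts_id": "1", "dts_name": "a"},
        {"dts_record_id": "2", "dts_before_flag": "N", "dts_after_flag": "Y", "dts_id": "1", "dts_name": "b"},
        {"dts_record_id": "3", "dts_before_flag": "Y", "dts_after_flag": "N", "dts_id": "1", "dts_name": "b"},
    ]
    for change in collapse_changes(sample):
        print(change_type(change), change["before"], change["after"])
```

Grouping by dts_record_id works because the two entries of an UPDATE operation share the same record ID, so an UPDATE ends up with both images, whereas an INSERT has only an after image and a DELETE has only a before image.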

What to do next

After you configure the data synchronization task, you can use Alibaba Cloud Realtime Compute for Apache Flink to analyze the data that is synchronized to the DataHub project. For more information, see What is Alibaba Cloud Realtime Compute for Apache Flink?