The Natural Language Generation (NLG) component uses large language models (LLMs) to support multi-turn conversations, knowledge retrieval, and content generation.

Component information

AI-generated content may contain inaccuracies. Review and verify the content carefully before use.

| Icon | Name |
| --- | --- |
|  | Natural Language Generation |

Preparations

Go to the canvas page of an existing flow or a new flow.

- Existing flow: Log on to the . Choose Chat Flow > Flow Management. Click the name of the flow that you want to edit. The canvas page of the flow appears.
- New flow: Create a flow. The canvas page of the new flow appears. For more information, see Create a flow.

Procedure

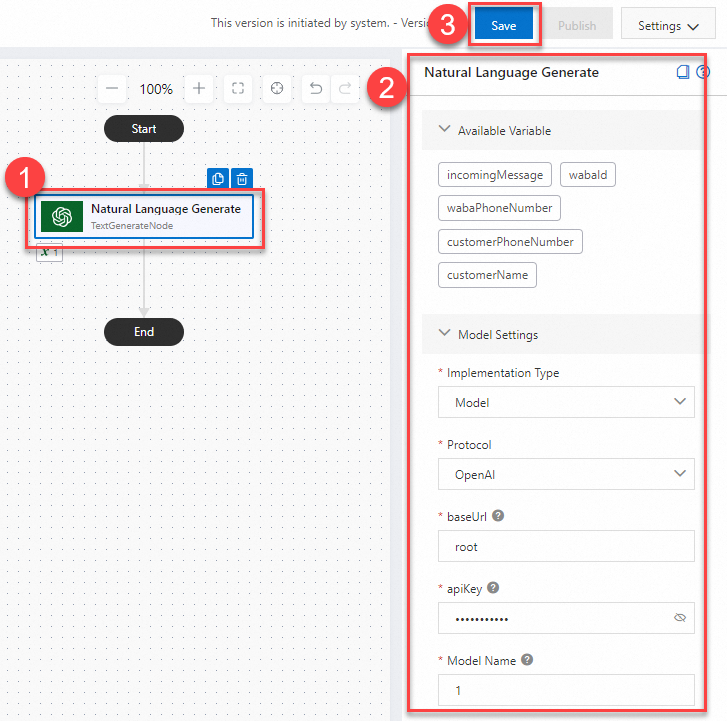

Click the Natural Language Generation component on the canvas. The component configuration panel appears on the right.

Configure the component as needed. For detailed instructions, see Parameters.

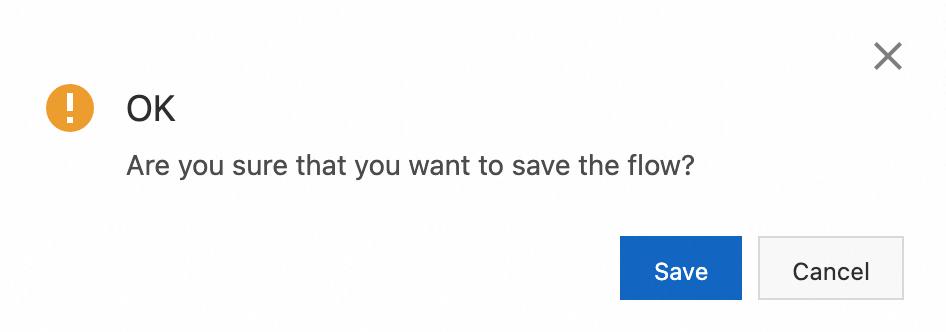

Click Save in the upper-right corner. In the message that appears, click Save.

Parameters

You can set Implementation Type to Model or Application.

Model

| Parameter | Description |
| --- | --- |
| Protocol | The vendor protocol. When Implementation Type is set to Model, only OpenAI is supported. |
| baseUrl | The access point for the model service, such as |
| apiKey | The key for the model service. |
| Model Name | The name of the model to use, such as |
| Initial Prompt | The initial prompt for the model session, which guides the model's output. For example: "You are a witty comedian. Please use humorous language in subsequent Q&A." |
| Model Input | The input for the current session. You can reference a variable directly or embed one or more variables in text, such as "{{incomingMessage}}" or "Please help me find information about {{topic}}." |
| Model Output Variable Name | The name of the variable that stores the model's response. You can reuse this variable in subsequent steps or send it as a reply. |
| Fallback Text | The message that is sent if the model service is unavailable or returns an error. For example: "I'm sorry, I can't answer your question right now." |
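
The Model parameters can be read as the pieces of an OpenAI-style chat completion request. The sketch below is an illustration only, not the component's actual implementation: it assumes that Initial Prompt maps to a `system` message, that Model Input maps to a `user` message after `{{variable}}` substitution, and that the service exposes the conventional `/chat/completions` path under baseUrl. The helper names `render` and `build_chat_request`, the URL, the key, and the model name are hypothetical placeholders.

```python
import json
import re

# Matches {{name}} placeholders. Allowing whitespace inside the braces is an assumption.
PLACEHOLDER = re.compile(r"\{\{\s*(\w+)\s*\}\}")


def render(template, variables):
    """Replace each {{name}} with its value; unknown names are left untouched."""
    def substitute(match):
        name = match.group(1)
        return str(variables[name]) if name in variables else match.group(0)
    return PLACEHOLDER.sub(substitute, template)


def build_chat_request(base_url, api_key, model_name, initial_prompt, model_input, variables):
    """Assemble the URL, headers, and JSON body for an OpenAI-style chat completion call."""
    return {
        "url": base_url.rstrip("/") + "/chat/completions",
        "headers": {
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        "body": {
            "model": model_name,
            "messages": [
                # Assumption: Initial Prompt is sent as the system message.
                {"role": "system", "content": initial_prompt},
                # Assumption: Model Input is sent as the user message after substitution.
                {"role": "user", "content": render(model_input, variables)},
            ],
        },
    }


request = build_chat_request(
    base_url="https://example.com/v1",  # placeholder, not a real endpoint
    api_key="sk-placeholder",           # placeholder key
    model_name="example-model",         # placeholder model name
    initial_prompt="You are a witty comedian. Please use humorous language in subsequent Q&A.",
    model_input="Please help me find information about {{topic}}.",
    variables={"topic": "tides"},
)
print(json.dumps(request["body"], indent=2))
```

The sketch only builds the request and sends nothing over the network, so it can be used to check how a Model Input template resolves before the values are entered in the configuration panel.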

Application

| Parameter | Description |
| --- | --- |
| Protocol | The vendor protocol. When Implementation Type is set to Application, only Dashscope is supported. Note: For more information about applications, see Application development. |
| apiKey | The key for the application service. Note: For more information, see Get an API key. |
| workspaceId | The ID of the workspace where the agent, workflow, or application resides. Required when you call an application in a sub-workspace. Not required for an application in the default workspace. Note: For information about workspaces, see Workspace Permission Management. |
| appId | The application ID. |
| Application Input | The input for the current session. You can reference a variable directly or embed one or more variables in text, such as "{{incomingMessage}}" or "Please help me find information about {{topic}}." |
| Custom Pass-through Parameters | Custom parameters to pass through to the application, such as {"city": "Hangzhou"}. |
| Application Output Variable Name | The name of the variable that stores the application's response. You can reuse this variable in subsequent steps or send it as a reply. |
| Fallback Text | The message that is sent if the application service is unavailable or returns an error. For example: "I'm sorry, I can't answer your question right now." |
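
As a rough illustration of how the Application parameters fit together, the sketch below assembles an application call and applies the fallback text when the call fails. It is not the component's implementation. The endpoint path, the `X-DashScope-WorkSpace` header, and the `biz_params` field reflect one reading of the DashScope application API and should be checked against the Application development documentation. The helper names `build_app_request` and `reply_or_fallback`, and all IDs and keys, are hypothetical.

```python
import json


def build_app_request(api_key, app_id, app_input, biz_params=None, workspace_id=None):
    """Assemble the URL, headers, and JSON body for an application call."""
    headers = {
        "Authorization": f"Bearer {api_key}",
        "Content-Type": "application/json",
    }
    # Assumption: the workspace ID travels in a header and is omitted
    # for applications in the default workspace.
    if workspace_id:
        headers["X-DashScope-WorkSpace"] = workspace_id

    payload_input = {"prompt": app_input}
    # Assumption: Custom Pass-through Parameters are sent as biz_params.
    if biz_params:
        payload_input["biz_params"] = biz_params

    return {
        "url": f"https://dashscope.aliyuncs.com/api/v1/apps/{app_id}/completion",
        "headers": headers,
        "body": {"input": payload_input, "parameters": {}},
    }


def reply_or_fallback(call, fallback_text):
    """Return the text produced by call(), or fallback_text if the call raises."""
    try:
        return call()
    except Exception:
        return fallback_text


def unavailable_service():
    # Stand-in for a request that fails because the service is down.
    raise ConnectionError("service unavailable")


request = build_app_request(
    api_key="sk-placeholder",       # placeholder key
    app_id="app-123",               # placeholder application ID
    app_input="What is the weather like today?",
    biz_params={"city": "Hangzhou"},
    workspace_id="ws-1",            # placeholder; only for sub-workspaces
)
print(json.dumps(request["body"], indent=2))
print(reply_or_fallback(unavailable_service, "I'm sorry, I can't answer your question right now."))
# The second print shows the fallback text, because the stand-in call raises.
```

Keeping the fallback in a small wrapper separates the error path from request construction, which mirrors how Fallback Text is configured independently of the other parameters.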