Export blockchain transaction data to an external relational database for business analytics, reporting, or downstream system integration — without affecting on-chain performance. The cloud service integration module streams write sets from your blockchain channel to a staging table, then a SQL trigger automatically propagates the data to your business table.

This topic covers:

Setting up the database schema (staging table and business table)

Deploying and instantiating the sample chaincode

Configuring a Database Trigger in the BaaS console

Generating sample transactions and verifying data export

Prerequisites

Before you begin, ensure that you have:

The cloud service integration module installed in your blockchain instance

The cloud service integration sample code downloaded locally

A database instance with public network access (RDS for MySQL v5.6 or later is used in this topic; other supported database types are also available)

Node.js v8.17.0 or later installed locally

How it works

Each blockchain transaction on Hyperledger Fabric produces a write set — a record of the key-value state changes committed to the ledger. The cloud service integration module captures these write sets from the Tx event stream and inserts them into a staging table (write_set) in your database. A SQL trigger then reads from the staging table and writes the parsed task data into a business table (taskmgr).

This two-table design separates raw ledger data from your application data, making it straightforward to extend the transformation logic without touching the chaincode.

Set up the database schema

Log in to the DMS console and connect to your database instance.

Open a SQL window: in the upper menu bar, click SQL Operation > SQL Window.

Copy the contents of

./blockchain2db/taskmgr.sqlfrom the sample code into the SQL window and run the script. Confirm that all statements execute successfully. The script creates theoctopusdatabase, thewrite_setstaging table, thetaskmgrbusiness table, and the trigger that transfers records between them.

Deploy the sample chaincode

Deploy the taskmgr chaincode to your channel by following Chaincode Deployment. Install and instantiate the chaincode before proceeding.

Create a Database Trigger

In the BaaS console, navigate to the cloud service integration section and create a Database Trigger with the following settings:

| Setting | Value |

|---|---|

| Channel | taskmgr |

| Smart contract | taskmgr |

| Event type | Tx |

| Database type | MySQL (or another supported type) |

| Database address | Your database instance endpoint |

| User ID | Your database username |

| Password | Your database password |

| Database name | octopus |

| Table name | write_set |

Generate sample transactions

Go to the

blockchain2smsdirectory in the sample code.Open

main.jsand fill in the following parameters based on the inline comments:REST API address

Refresh token

Channel name

Smart contract name

For more information on obtaining these values, see Use REST APIs.

Install dependencies and run the script:

Note: This sample script is for demonstration purposes only. The

contentanddatafields in the output are base64-encoded payloads representing the write set of each transaction. A production implementation should add error handling and adapt the transaction submission logic to your application's requirements.npm install node main.jsA successful run outputs a transaction ID and status for each submitted transaction:

Data pushed to blockchain with transaction 701c7006f26aed8457273a00bbfcc8cea4d75eac958996e07837036ea7e2fdac { id: '701c7006f26aed8457273a00bbfcc8cea4d75eac958996e07837036ea7e2fdac', status: '200', events: [ { type: 'Contract', platform: 'Fabric', instance_id: 'csi-e2ehmfqasth-bcw7tzao2dzeo', network: '', id: '', name: 'event-create-task', content: 'eyJuY...TAz' } Data pushed to blockchain with transaction 60a06a189415db587b49cbf91b46467bce1ea16490b19f6dfc8d520aa31240bc { id: '60a06a189415db587b49cbf91b46467bce1ea16490b19f6dfc8d520aa31240bc', status: '200', events: [ { type: 'Contract', platform: 'Fabric', instance_id: 'csi-e2ehmfqasth-bcw7tzao2dzeo', network: '', id: '', name: 'event-task-finished', content: 'eyJuY...p7In0=' } ], data: 'eyJu...In0=' } Data pushed to blockchain with transaction 7810e0496a1c91a16102736b00a6f26da0baa42874a907fff26bff1b7eb3bf27 { id: '7810e0496a1c91a16102736b00a6f26da0baa42874a907fff26bff1b7eb3bf27', status: '200', events: [], data: 'eyJu...nIn0=' }

Verify data export

After the transactions complete, query both tables to confirm the data was exported correctly.

Check the staging table for raw write set records:

SELECT * FROM write_set

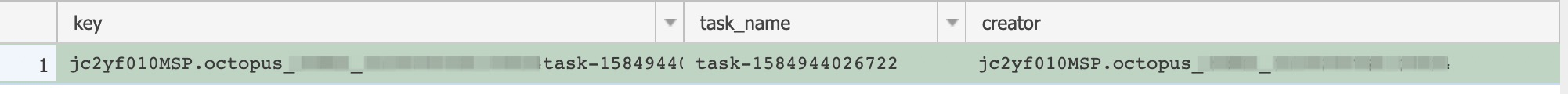

Check the business table for parsed task records:

SELECT * FROM taskmgr

Records appearing in both tables confirm that the cloud service integration module successfully captured the blockchain transactions and the SQL trigger propagated them to the business table.

What's next

Modify the SQL trigger logic in

taskmgr.sqlto match your own business table schema.Replace the sample

main.jswith your application's transaction-submission logic using the REST APIs.To integrate with a different database type, select the appropriate database type when creating the Database Trigger in the BaaS console.