Notebook is an interactive development environment built into Data Management (DMS) that lets you write, run, and iterate on Spark SQL jobs directly in your browser — without leaving the AnalyticDB for MySQL ecosystem. Use it to explore data, build pipelines, and visualize results in a single interface connected to your cluster.

Notebook is available only in the China (Hangzhou) region.

Prerequisites

Before you begin, ensure that you have:

An AnalyticDB for MySQL cluster (Enterprise Edition, Basic Edition, or Data Lakehouse Edition)

A job resource group created for the cluster

A database account for the cluster:

Alibaba Cloud account: create a privileged account

Resource Access Management (RAM) user: create a privileged account and a standard account, then associate the standard account with the RAM user

Authorization for AnalyticDB for MySQL to assume the AliyunADBSparkProcessingDataRole role

Spark application log storage configured for the cluster

Note: To configure log storage, log on to the AnalyticDB for MySQL console and click the cluster ID. In the left-side navigation pane, choose Job Development > Spark JAR Development and click Log Settings. Select the default path or specify a custom path. A custom path cannot be the root directory of an Object Storage Service (OSS) bucket; it must include at least one subfolder.

An OSS bucket in the same region as the AnalyticDB for MySQL cluster
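The log storage note above requires a custom path to include at least one subfolder rather than pointing at the bucket root. The following is a minimal sketch of that rule as a local check. The function name is_valid_log_path is hypothetical, and it only inspects the shape of the string (for example oss://my-bucket/spark-logs/); it does not contact OSS or verify that the bucket exists.

```python
def is_valid_log_path(oss_path: str) -> bool:
    """Return True if oss_path names at least one subfolder inside an OSS bucket.

    Hypothetical helper: checks only the path shape described in the note,
    not whether the bucket or folder actually exists.
    """
    prefix = "oss://"
    if not oss_path.startswith(prefix):
        return False
    # Split "bucket/folder/..." into the bucket name and the rest of the key.
    bucket, _, key = oss_path[len(prefix):].partition("/")
    if not bucket:
        return False
    # A key that is empty or only slashes means the bucket root, which is not allowed.
    return key.strip("/") != ""


print(is_valid_log_path("oss://my-bucket/"))             # bucket root
print(is_valid_log_path("oss://my-bucket/spark-logs/"))  # has a subfolder
```

The bucket name my-bucket and the folder spark-logs are placeholders.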

Set up the environment

Step 1: Create a workspace

Log on to the DMS console V5.0.

In the upper-left corner, hover over the icon and choose All Features > Data+AI > Notebook.

Note: In normal mode, choose Data+AI > Notebook in the top navigation bar.

Click Create Workspace. In the dialog box, set the Workspace Name and Region, then click OK.

In the Actions column of the workspace, click Go to Workspace.

Step 2: (Optional) Add workspace members

If multiple users share the workspace, add members and assign roles before proceeding.

Step 3: Configure code storage

Open the tab shown as an icon in the workspace and click Storage Management. In the Code Storage section, configure the OSS path where notebook code will be stored.

Step 4: Add a compute resource

Open the tab shown as an icon in the workspace and click Resource Configuration. Click Add Resource and configure the following parameters.

| Parameter | Required | Description |
|---|---|---|
| Resource Name | Yes | A custom name for this resource |
| Resource Introduction | Yes | A description of the resource's purpose |
| Image | Yes | Only Spark3.5+Python3.9 is supported |
| AnalyticDB Instance | Yes | The ID of the AnalyticDB for MySQL cluster. If the cluster is not listed, register it with DMS first |
| AnalyticDB Resource Group | Yes | The name of the job resource group |
| Executor Spec | Yes | The resource specification for Spark executors. This example uses the default medium specification. For all available specifications, see Spark application configuration parameters |
| Max Executors / Min Executors | Yes | The number of Spark executors. After you select Spark3.5+Python3.9, Min Executors is automatically set to 2 and Max Executors to 8 |
| Notebook Spec | Yes | The Notebook instance specification. This example uses General_Tiny_v1 (1 core, 4 GB) |
| VPC ID | Yes | The virtual private cloud (VPC) of the AnalyticDB for MySQL cluster, required for Notebook-to-cluster communication |
| Zone ID | Yes | The zone of the AnalyticDB for MySQL cluster |
| vSwitch ID | Yes | The vSwitch connected to the AnalyticDB for MySQL cluster |
| Security Group ID | Yes | A security group that allows Notebook to communicate with the cluster |
| Release Resource | Yes | The idle period after which the resource is automatically released |
| Dependent Jars | No | The OSS path of the JAR package. Required only when submitting Python jobs that use a JAR package |
| SparkConf | No | Spark configuration parameters in key: value format. For AnalyticDB-specific parameters, see Spark application configuration parameters |

If you change the VPC and vSwitch of the AnalyticDB for MySQL cluster, update VPC ID and vSwitch ID in this resource to match. Otherwise, job submissions will fail.

Click Save.

In the Actions column of the resource, click Start.
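Two resource settings are easy to get wrong: SparkConf entries must use the key: value format, and VPC ID and vSwitch ID must match the cluster's current network, or job submissions fail. The following is a minimal sketch of a local pre-check for both, not a DMS API. The function names, the dictionary keys vpc_id and vswitch_id, and the IDs in the example are all hypothetical placeholders; spark.sql.shuffle.partitions is a standard Spark property used only for illustration.

```python
def parse_spark_conf(text: str) -> dict:
    """Parse SparkConf lines written as 'key: value' into a dict (hypothetical helper)."""
    conf = {}
    for raw_line in text.splitlines():
        line = raw_line.strip()
        if not line:
            continue  # ignore blank lines
        # Split on the first colon only, so values such as oss:// paths keep their colons.
        key, sep, value = line.partition(":")
        if not sep or not key.strip():
            raise ValueError(f"not in 'key: value' format: {raw_line!r}")
        conf[key.strip()] = value.strip()
    return conf


def network_mismatches(cluster: dict, resource: dict) -> list:
    """Return the network fields whose values differ between the cluster and the resource."""
    return [
        field
        for field in ("vpc_id", "vswitch_id")  # hypothetical key names
        if cluster.get(field) != resource.get(field)
    ]


conf = parse_spark_conf("spark.sql.shuffle.partitions: 200")
stale = network_mismatches(
    {"vpc_id": "vpc-new", "vswitch_id": "vsw-1"},  # cluster after a network change
    {"vpc_id": "vpc-old", "vswitch_id": "vsw-1"},  # resource still using the old VPC
)
print(conf, stale)
```

A non-empty list from network_mismatches corresponds to the case in the note above where VPC ID and vSwitch ID must be updated before submitting jobs.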

Step 5: Register an OSS instance

This step connects your OSS bucket to the workspace so that Notebook can read and write data lake files.

Hover over the icon and choose All Features > Data Assets > Instances.

Click +New. In the Add Instance dialog box, configure the following parameters.

| Section | Parameter | Value |
|---|---|---|
| Data Source | Data source | On the Alibaba Cloud tab, select OSS |
| Basic Information | File and log storage | Automatically set to OSS |
| | Instance region | The region of the AnalyticDB for MySQL cluster |
| | Connection method | Automatically set to Connection String Address |
| | Connection string address | oss-cn-hangzhou.aliyuncs.com |
| | Bucket | The name of your OSS bucket |
| | Access mode | This example uses Security Hosting - Manual |
| | AccessKey ID | The AccessKey ID of an Alibaba Cloud account or RAM user with OSS access. See Accounts and permissions |
| | AccessKey Secret | The AccessKey secret of the same account. See Accounts and permissions |
| Advanced Information | (Optional) | See Register an Alibaba Cloud database instance for details |

Click Test Connection in the lower-left corner.

Note: If the connection test fails, check the instance information based on the error message.

After the Successful connection message appears, click Submit.

Go back to the workspace and open the tab shown as an icon. On the Data Lake Data tab, click Add OSS and select the bucket you registered.
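The Connection string address used when registering the OSS instance, oss-cn-hangzhou.aliyuncs.com, follows the OSS public endpoint pattern oss-<region-id>.aliyuncs.com. The following sketch derives that value from a region ID. The function name oss_endpoint is hypothetical, and the internal (VPC) variant oss-<region-id>-internal.aliyuncs.com is included only as the general OSS naming pattern; this topic does not state whether DMS accepts it.

```python
def oss_endpoint(region_id: str, internal: bool = False) -> str:
    """Build an OSS endpoint host name from a region ID such as cn-hangzhou.

    Hypothetical helper based on the OSS endpoint naming pattern.
    """
    suffix = "-internal" if internal else ""
    return f"oss-{region_id}{suffix}.aliyuncs.com"


print(oss_endpoint("cn-hangzhou"))  # the value used in this example
```

Because Notebook is available only in the China (Hangzhou) region, the region ID in this walkthrough is always cn-hangzhou.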

Develop a Spark SQL job

Step 6: Create a notebook

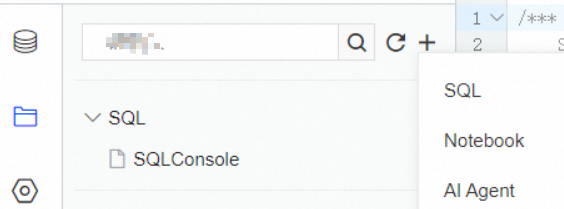

On the corresponding tab, click the icon and select Notebook.

Step 7: Write and run Spark SQL

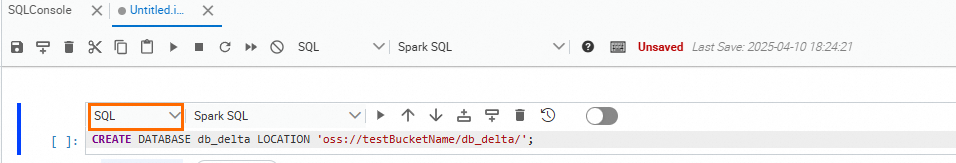

Each notebook cell runs either Python code or SQL. Switch the cell type using the language selector in the cell toolbar.

For a full reference of Notebook buttons and keyboard shortcuts, see the Use Notebook to query and analyze data topic.

In a Code cell, install the Delta Lake Python library:

pip install delta

Switch the cell type to SQL and create a database with an OSS storage path. Replace testBucketName with the name of your OSS bucket.

Note: The db_delta database and the sample_data external table created in the next step automatically appear in the AnalyticDB for MySQL cluster. You can query the sample_data table directly from the AnalyticDB for MySQL console.

CREATE DATABASE db_delta LOCATION 'oss://testBucketName/db_delta/'; -- Specify the storage path for data in the db_delta database.

Switch the cell type to Code and run the following script to create the sample_data external table and load sample data. The data is stored in the OSS path specified by the db_delta database location.

# -*- coding: utf-8 -*-
import pyspark
from delta import *
from pyspark.sql.types import *
from pyspark.sql.functions import *

print("Starting Delta table creation")

# Sample rows: first name, last name, house, location, age
data = [
    ("Robert", "Baratheon", "Baratheon", "Storms End", 48),
    ("Eddard", "Stark", "Stark", "Winterfell", 46),
    ("Jamie", "Lannister", "Lannister", "Casterly Rock", 29),
    ("Robert", "Baratheon", "Baratheon", "Storms End", 48),
    ("Eddard", "Stark", "Stark", "Winterfell", 46),
    ("Jamie", "Lannister", "Lannister", "Casterly Rock", 29),
    ("Robert", "Baratheon", "Baratheon", "Storms End", 48),
    ("Eddard", "Stark", "Stark", "Winterfell", 46),
    ("Jamie", "Lannister", "Lannister", "Casterly Rock", 29)
]

# Explicit schema for the sample_data table
schema = StructType([
    StructField("firstname", StringType(), True),
    StructField("lastname", StringType(), True),
    StructField("house", StringType(), True),
    StructField("location", StringType(), True),
    StructField("age", IntegerType(), True)
])

# `spark` is the active SparkSession, already defined in the notebook.
sample_dataframe = spark.createDataFrame(data=data, schema=schema)

# Write the DataFrame as a Delta table in db_delta; "overwrite" replaces any existing data.
sample_dataframe.write.format('delta').mode("overwrite").option('mergeSchema', 'true').saveAsTable("db_delta.sample_data")

Switch the cell type to SQL and query the table to verify the data was loaded:

SELECT * FROM db_delta.sample_data;
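The CREATE DATABASE statement in this step derives its OSS location from the bucket name and the database name (oss://testBucketName/db_delta/). If you create several databases this way, a small builder keeps the paths consistent. The following is a minimal sketch; create_database_sql is a hypothetical helper, and restricting names to letters, digits, and underscores is an assumption made to keep the generated SQL simple, not a documented AnalyticDB rule.

```python
def create_database_sql(database: str, bucket: str) -> str:
    """Build a CREATE DATABASE ... LOCATION statement for a database stored in OSS.

    Hypothetical helper; the name check is a simplifying assumption.
    """
    # Accept only letters, digits, and underscores in the database name.
    if not database.replace("_", "").isalnum():
        raise ValueError(f"unsupported database name: {database!r}")
    # Store each database under its own folder in the bucket, with a trailing slash.
    location = f"oss://{bucket}/{database}/"
    return f"CREATE DATABASE {database} LOCATION '{location}';"


print(create_database_sql("db_delta", "testBucketName"))
```

In a Code cell, the returned string could be passed to spark.sql(), dropping the trailing semicolon first, instead of switching the cell type to SQL.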

Analyze data from the AnalyticDB for MySQL console

The sample_data external table is automatically visible in the AnalyticDB for MySQL cluster. To run Spark SQL queries against it from the console:

Log on to the AnalyticDB for MySQL console. In the upper-left corner, select a region. In the left-side navigation pane, click Clusters, select an edition tab, find the cluster, and click the cluster ID.

In the left-side navigation pane, choose Job Development > SQL Development. Select the Spark engine and an interactive resource group.

Query the table:

SELECT * FROM db_delta.sample_data LIMIT 1000;
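Whether you query from Notebook or from the console, you can check the result against the rows the script wrote. The script inserts three distinct rows, each three times, and writes in overwrite mode, so rerunning it should not add rows. The following pure-Python sketch computes the counts you would expect; it reuses the sample rows from the script and does not connect to Spark or AnalyticDB for MySQL.

```python
from collections import Counter

# The same rows the script writes to db_delta.sample_data, in the same order.
data = [
    ("Robert", "Baratheon", "Baratheon", "Storms End", 48),
    ("Eddard", "Stark", "Stark", "Winterfell", 46),
    ("Jamie", "Lannister", "Lannister", "Casterly Rock", 29),
] * 3

expected_rows = len(data)                         # rows returned by SELECT *
distinct_rows = len(set(data))                    # rows returned by SELECT DISTINCT *
rows_per_house = Counter(row[2] for row in data)  # mirrors GROUP BY house

print(expected_rows, distinct_rows, dict(rows_per_house))
# prints: 9 3 {'Baratheon': 3, 'Stark': 3, 'Lannister': 3}
```

If the query returns a multiple of 9 rows instead of 9, the table was likely written in append mode rather than overwrite mode.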

What's next

Notebook: introduces the Notebook feature.