When multiple workloads share a cluster, unrestricted pod-to-pod communication creates security risks. Kubernetes network policies let you define which pods, namespaces, and IP addresses can send traffic to—or receive traffic from—specific pods. This topic covers enabling network policies in ACK Serverless Pro clusters and walks through four common scenarios: label-based access control, IP allowlisting through an SLB instance, egress restrictions to specific addresses, and namespace-level Internet access control.

Prerequisites

Before you begin, ensure that you have:

An ACK managed cluster or ACK dedicated cluster. See Create an ACK managed cluster and Create an ACK dedicated cluster (discontinued)

The ACK Virtual Node component updated to version 2.10.0 or later. See Manage components

A security group configured for the cluster

The kubeconfig file of the cluster obtained and kubectl connected to the cluster. See Connect to ACK clusters by using kubectl

ACK Pro clusters and ACK Serverless clusters use different components to implement network policies.

In ACK Pro clusters (without elastic container instances), network policies are implemented by Terway. See Enable network policies in ACK Pro clusters.

In elastic container instances of ACK Serverless clusters or ACK Pro clusters, network policies are implemented by Poseidon. This topic covers the Poseidon-based implementation.

Limitations

Network policies are supported only in ACK Serverless Pro clusters and ACK Pro clusters.

Network policies do not support IPv6 addresses.

Network policies do not support the `endPort` field.

Network policies select pods by label, and every policy adds to the matching work the cluster must do. Keep the total number of network policies below 40 to maintain performance and simplify troubleshooting.
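Because `endPort` is not supported, a port range cannot be expressed as `port` plus `endPort`. One workaround, sketched below, is to list each port as its own entry. The policy name `allow-port-list`, the `app: backend` label, and ports 8080 to 8082 are hypothetical values chosen for illustration.

```yaml
# Hypothetical example: allow TCP 8080-8082 without using endPort.
kind: NetworkPolicy
apiVersion: networking.k8s.io/v1
metadata:
  name: allow-port-list          # hypothetical policy name
spec:
  podSelector:
    matchLabels:
      app: backend               # hypothetical label of the protected pods
  ingress:
  - ports:                       # no "from" clause, so any source may use these ports
    - protocol: TCP
      port: 8080                 # each port is listed individually instead of port + endPort
    - protocol: TCP
      port: 8081
    - protocol: TCP
      port: 8082
```

This stays practical only for short ranges; a long range produces a long list and counts toward the same evaluation cost as any other rule.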

Step 1: Enable network policies

Install the Poseidon component to enable network policies for an ACK Serverless Pro cluster.

Log on to the ACK console. In the left navigation pane, click Clusters.

On the Clusters page, click the name of the cluster you want to manage. In the left navigation pane, click Add-ons.

On the Add-ons page, click the Networking tab. In the Poseidon card, click Install.

In the Install Poseidon dialog box, select Enable the NetworkPolicy feature for elastic container instances, then click OK. After installation completes, Installed appears in the upper-right corner of the card.

Step 2: Create an nginx application and verify baseline connectivity

Create an nginx application and expose it as a Service. Then create a busybox client pod to confirm connectivity before applying any network policies. This baseline lets you verify that policies take effect in the following steps.

Use the ACK console

Log on to the ACK console. In the left navigation pane, click Clusters.

Click the name of the cluster you want to manage, then choose Workloads > Deployments in the left navigation pane.

On the Deployments tab, click Create from Image. Create an application named nginx and expose it as a Service. Configure the following parameters and use default values for the rest. For parameter details, see Parameters.

| Section | Parameter | Value |
|---|---|---|
| Basic information | Name | nginx |
| Basic information | Replicas | 1 |
| Container | Image name | nginx:latest |
| Advanced > Services | Name | nginx |
| Advanced > Services | Service type | Cluster IP, SLB, or Node Port |
| Advanced > Services | Port mapping | Name: nginx, Service port: 80, Container port: 80, Protocol: TCP |

Back on the Deployments tab, click Create from Image again. Create a client application named busybox to test nginx connectivity. Configure the following parameters:

| Section | Parameter | Value |
|---|---|---|
| Basic information | Name | busybox |
| Basic information | Replicas | 1 |
| Container | Image name | busybox:latest |
| Container | Start parameters | Select stdin and tty |

Verify that busybox can reach the nginx Service:

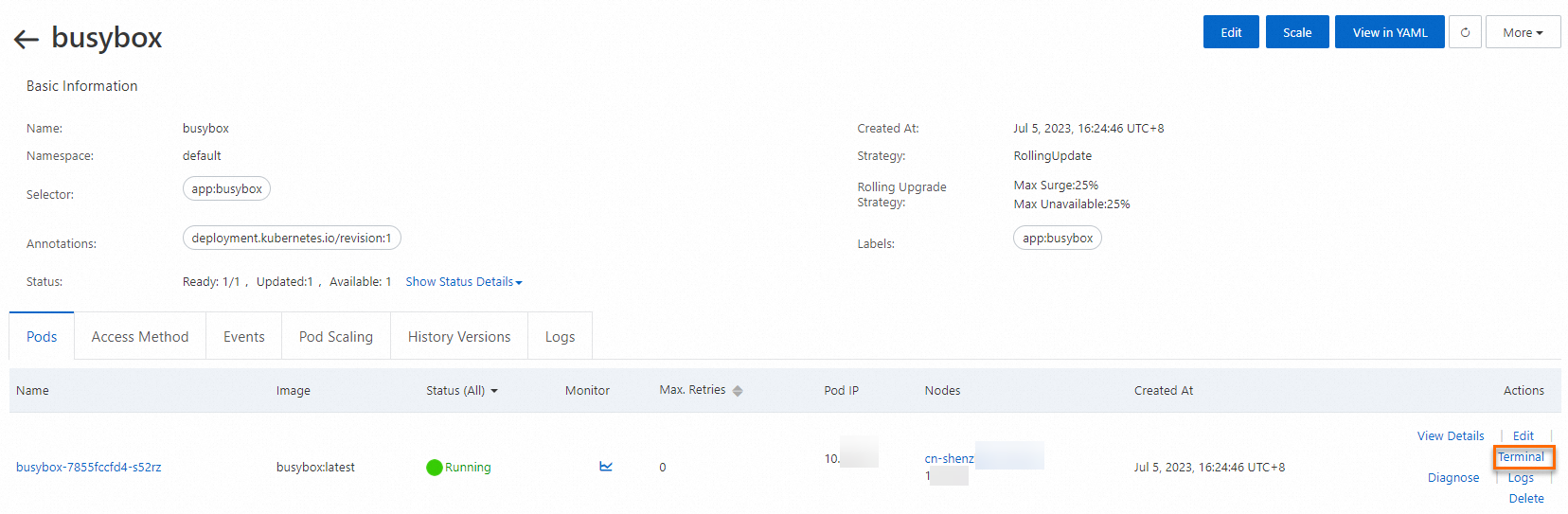

On the Deployments page, click the name of the busybox application.

On the Pods page, select the pod named `busybox-{hash}` and click Terminal in the Actions column.

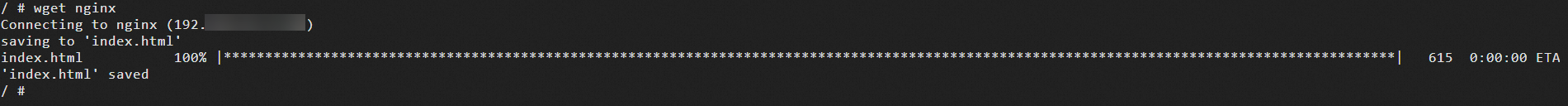

In the terminal, run `wget nginx`. A successful response confirms that the nginx Service is reachable.
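For reference, the console settings for the nginx application roughly correspond to the manifest below. This is a sketch, not the exact object the console generates: the `run: nginx` label is an assumption chosen so that the `podSelector` fields in Step 3 match these pods. The console may apply different labels; if it does, adjust the selectors in Step 3 to match.

```yaml
apiVersion: apps/v1
kind: Deployment
metadata:
  name: nginx
spec:
  replicas: 1
  selector:
    matchLabels:
      run: nginx                # assumed label, matched by the policies in Step 3
  template:
    metadata:
      labels:
        run: nginx
    spec:
      containers:
      - name: nginx
        image: nginx:latest
        ports:
        - containerPort: 80     # container port from the console settings
---
apiVersion: v1
kind: Service
metadata:
  name: nginx
spec:
  type: ClusterIP               # the console also offers SLB (LoadBalancer) or Node Port
  selector:
    run: nginx
  ports:
  - name: nginx
    port: 80                    # Service port
    targetPort: 80              # container port
    protocol: TCP
```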

Use the CLI

Create an nginx pod:

```shell
kubectl run nginx --image=nginx
```

Expected output:

```
pod/nginx created
```

Verify that the pod is running:

```shell
kubectl get pod
```

Expected output:

```
NAME    READY   STATUS    RESTARTS   AGE
nginx   1/1     Running   0          45s
```

Expose the pod as a Service:

```shell
kubectl expose pod nginx --port=80
```

Expected output:

```
service/nginx exposed
```

Confirm that the Service is created:

```shell
kubectl get service
```

Expected output:

```
NAME         TYPE        CLUSTER-IP     EXTERNAL-IP   PORT(S)   AGE
kubernetes   ClusterIP   172.XX.XX.1    <none>        443/TCP   30m
nginx        ClusterIP   172.XX.XX.48   <none>        80/TCP    12s
```

Verify baseline connectivity by confirming that busybox can reach the nginx Service before you apply any network policies:

```shell
kubectl run busybox --rm -ti --image=busybox -- /bin/sh
```

Expected output:

```
If you don't see a command prompt, try pressing enter.
/ #
```

Inside the busybox shell, run:

```shell
wget nginx
```

Expected output:

```
Connecting to nginx (172.XX.XX.48:80)
saving to 'index.html'
index.html           100% |****|   612  0:00:00 ETA
'index.html' saved
```

This confirms that the nginx Service is reachable without any network policies in place.

Step 3: Configure network policies

The following scenarios cover common network policy patterns. Each scenario builds on the nginx application from Step 2.

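By default, Kubernetes allows all pod-to-pod traffic. Network policies are additive. Once a policy selects a pod for a given direction (ingress or egress), only traffic in that direction that at least one matching policy explicitly allows is permitted. Policies never conflict, because each policy can only add to the set of allowed traffic.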
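To illustrate how policies combine, the sketch below defines a second, hypothetical policy for the same nginx pods. If it existed alongside `access-nginx` from Scenario 1, the allowed sources would be the union of both policies: pods labeled `access=true` or pods labeled `role=monitoring`. The policy name `allow-monitoring` and the `role: monitoring` label are assumptions for illustration only.

```yaml
kind: NetworkPolicy
apiVersion: networking.k8s.io/v1
metadata:
  name: allow-monitoring        # hypothetical second policy
spec:
  podSelector:
    matchLabels:
      run: nginx                # selects the same pods as access-nginx
  ingress:
  - from:
    - podSelector:              # without a namespaceSelector, matches pods in this policy's namespace
        matchLabels:
          role: monitoring      # hypothetical label; widens, never narrows, what other policies allow
```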

Scenario 1: Restrict access by label

Use case: Allow only pods with a specific label—such as a designated microservice—to reach the nginx Service, and block all other pods.

Create `policy.yaml` with the following content:

```yaml
kind: NetworkPolicy
apiVersion: networking.k8s.io/v1
metadata:
  name: access-nginx
spec:
  podSelector:
    matchLabels:
      run: nginx
  ingress:
  - from:
    - podSelector:
        matchLabels:
          access: "true"
```

Apply the policy:

```shell
kubectl apply -f policy.yaml
```

Expected output:

```
networkpolicy.networking.k8s.io/access-nginx created
```

Verify that the policy blocks unlabeled pods. Run busybox without the required label:

```shell
kubectl run busybox --rm -ti --image=busybox -- /bin/sh
```

Inside the shell, attempt to reach the nginx Service:

```shell
wget nginx
```

Expected output:

```
Connecting to nginx (172.19.XX.XX:80)
wget: can't connect to remote host (172.19.XX.XX): Connection timed out
```

The connection times out because the pod does not have the `access=true` label.

Verify that the policy allows pods with the correct label. Run busybox with `access=true`:

```shell
kubectl run busybox --rm -ti --labels="access=true" --image=busybox -- /bin/sh
```

Inside the shell:

```shell
wget nginx
```

Expected output:

```
Connecting to nginx (172.21.XX.XX:80)
saving to 'index.html'
index.html           100% |****|   612  0:00:00 ETA
'index.html' saved
```

The 100% progress confirms that the labeled pod can access the nginx Service.
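Entries in a `from` list are combined with OR, while a `namespaceSelector` and a `podSelector` placed in the same entry are combined with AND. As a sketch, the variant of `access-nginx` below would admit only pods labeled `access=true` that also run in a namespace named `frontend`. The namespace name is hypothetical; `kubernetes.io/metadata.name` is the label that Kubernetes 1.21 and later sets automatically on every namespace.

```yaml
kind: NetworkPolicy
apiVersion: networking.k8s.io/v1
metadata:
  name: access-nginx
spec:
  podSelector:
    matchLabels:
      run: nginx
  ingress:
  - from:
    # One list entry holding both selectors: the source must match BOTH (AND).
    - namespaceSelector:
        matchLabels:
          kubernetes.io/metadata.name: frontend   # hypothetical namespace
      podSelector:
        matchLabels:
          access: "true"
```

Adding a hyphen before `podSelector` would instead create two separate entries, admitting any pod in `frontend` or any `access=true` pod in the policy's own namespace.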

Scenario 2: Restrict access by source IP address through an SLB instance

Use case: Expose the nginx Service through an Internet-facing SLB instance, but allow traffic only from specific IP addresses—such as your corporate network.

Create `nginx-service.yaml` with the following content. Setting `externalTrafficPolicy: Local` keeps the client source IP address visible to the pods, which the `ipBlock` rules later in this scenario need in order to match it.

```yaml
apiVersion: v1
kind: Service
metadata:
  labels:
    run: nginx
  name: nginx-slb
spec:
  externalTrafficPolicy: Local
  ports:
  - port: 80
    protocol: TCP
    targetPort: 80
  selector:
    run: nginx
  type: LoadBalancer
```

Apply the Service:

```shell
kubectl apply -f nginx-service.yaml
```

Expected output:

```
service/nginx-slb created
```

Get the external IP address assigned to the SLB instance:

```shell
kubectl get service nginx-slb
```

Expected output:

```
NAME        TYPE           CLUSTER-IP      EXTERNAL-IP      PORT(S)        AGE
nginx-slb   LoadBalancer   172.19.xx.xxx   47.110.xxx.xxx   80:32240/TCP   8m
```

Verify the current state. From your local machine, access the SLB IP address. It is not reachable because the `access-nginx` policy from Scenario 1 is still active and blocks external traffic:

```shell
wget 47.110.xxx.xxx
```

Expected output:

```
Connecting to 47.110.XX.XX:80... failed: Connection refused.
```

The connection fails because the existing policy allows only pods with the `access=true` label. Traffic from your local machine arrives from an external IP address, not from a pod, so it is blocked.

Get the public IP address of your local machine:

```shell
curl myip.ipip.net
```

Expected output:

```
IP address: 10.0.x.x. From: China Beijing Beijing
```

Update `policy.yaml` to also allow traffic from your IP address and from the SLB health check range.

Note: Use a /24 CIDR block to account for requests that may originate from different IP addresses. The `100.64.0.0/10` block covers SLB health check IP addresses and is required for the SLB to report the Service as healthy.

```yaml
kind: NetworkPolicy
apiVersion: networking.k8s.io/v1
metadata:
  name: access-nginx
spec:
  podSelector:
    matchLabels:
      run: nginx
  ingress:
  - from:
    - podSelector:
        matchLabels:
          access: "true"
    - ipBlock:
        cidr: 100.64.0.0/10
    - ipBlock:
        cidr: 10.0.0.0/24 # Replace with your actual IP CIDR block
```

Apply the updated policy:

```shell
kubectl apply -f policy.yaml
```

Expected output:

```
networkpolicy.networking.k8s.io/access-nginx configured
```

Verify that the SLB instance is now reachable. Launch a labeled busybox pod and connect through the SLB IP address:

```shell
kubectl run busybox --rm -ti --labels="access=true" --image=busybox -- /bin/sh
```

Inside the shell:

```shell
wget 47.110.XX.XX
```

Expected output:

```
Connecting to 47.110.XX.XX (47.110.XX.XX:80)
index.html           100% |****|   612  0:00:00 ETA
```

The 100% progress confirms that the nginx Service is accessible through the SLB instance.
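The standard `ipBlock` field also accepts an `except` list, and a `ports` list limits which ports the allowed sources may reach. The sketch below tightens the Scenario 2 policy so that every allowed source can reach only TCP port 80 and one sub-range of the client block is excluded. The CIDR values are placeholders, this topic does not state whether Poseidon honors `except`, and the port restriction assumes the SLB health check also targets port 80; verify both before relying on it.

```yaml
kind: NetworkPolicy
apiVersion: networking.k8s.io/v1
metadata:
  name: access-nginx
spec:
  podSelector:
    matchLabels:
      run: nginx
  ingress:
  - from:
    - podSelector:
        matchLabels:
          access: "true"
    - ipBlock:
        cidr: 100.64.0.0/10      # SLB health check addresses
    - ipBlock:
        cidr: 10.0.0.0/24        # placeholder: your client CIDR block
        except:
        - 10.0.0.128/25          # placeholder: sub-range to exclude
    ports:                       # applies to every source listed in this rule
    - protocol: TCP
      port: 80
```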

Scenario 3: Restrict a pod's egress to specific addresses

Use case: Limit a pod's outbound traffic so it can access only specific external addresses—for example, www.aliyun.com—while blocking all other destinations.

Look up the IP addresses for the target domain:

```shell
dig +short www.aliyun.com
```

Expected output:

```
www-jp-de-intl-adns.aliyun.com.
www-jp-de-intl-adns.aliyun.com.gds.alibabadns.com.
v6wagbridge.aliyun.com.
v6wagbridge.aliyun.com.gds.alibabadns.com.
106.XX.XX.21
140.XX.XX.4
140.XX.XX.13
140.XX.XX.3
```

Create `busybox-policy.yaml` with egress rules that allow only those IP addresses, plus UDP port 53 for DNS resolution.

Note: Always include a rule that allows UDP port 53. Without it, DNS resolution fails and the pod cannot connect to any domain, including allowed ones.

```yaml
kind: NetworkPolicy
apiVersion: networking.k8s.io/v1
metadata:
  name: busybox-policy
spec:
  podSelector:
    matchLabels:
      run: busybox
  egress:
  - to:
    - ipBlock:
        cidr: 106.XX.XX.21/32
    - ipBlock:
        cidr: 140.XX.XX.4/32
    - ipBlock:
        cidr: 140.XX.XX.13/32
    - ipBlock:
        cidr: 140.XX.XX.3/32
  - to:
    - ipBlock:
        cidr: 0.0.0.0/0
    - namespaceSelector: {}
    ports:
    - protocol: UDP
      port: 53
```

Apply the policy:

```shell
kubectl apply -f busybox-policy.yaml
```

Expected output:

```
networkpolicy.networking.k8s.io/busybox-policy created
```

Start a busybox pod and verify that traffic to non-allowed addresses is blocked:

```shell
kubectl run busybox --rm -ti --image=busybox -- /bin/sh
```

Inside the shell, attempt to reach a blocked domain:

```shell
wget www.taobao.com
```

Expected output:

```
Connecting to www.taobao.com (64.13.XX.XX:80)
wget: can't connect to remote host (64.13.XX.XX): Connection timed out
```

Verify that access to the allowed domain succeeds:

```shell
wget www.aliyun.com
```

Expected output:

```
Connecting to www.aliyun.com (140.205.XX.XX:80)
Connecting to www.aliyun.com (140.205.XX.XX:443)
wget: note: TLS certificate validation not implemented
index.html           100% |****|   462k  0:00:00 ETA
```

The 100% progress confirms that the pod can reach `www.aliyun.com` and is blocked from all other addresses.
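The first egress rule above allows every port on the listed addresses. If the pod only needs HTTP and HTTPS, as the `wget` output above suggests, a `ports` list can be added to that rule. A sketch that reuses one placeholder address from the `dig` output; list every address you actually need:

```yaml
kind: NetworkPolicy
apiVersion: networking.k8s.io/v1
metadata:
  name: busybox-policy
spec:
  podSelector:
    matchLabels:
      run: busybox
  egress:
  - to:
    - ipBlock:
        cidr: 140.XX.XX.4/32     # placeholder: replace with a real address from dig
    ports:                       # only HTTP and HTTPS to the allowed address
    - protocol: TCP
      port: 80
    - protocol: TCP
      port: 443
  - to:                          # DNS rule unchanged from the scenario
    - ipBlock:
        cidr: 0.0.0.0/0
    - namespaceSelector: {}
    ports:
    - protocol: UDP
      port: 53
```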

Scenario 4: Control Internet access by namespace

Use case: Restrict all pods in a namespace to internal services by default, with explicit exceptions for pods that need Internet access—for example, pods that call external APIs.

These policies affect all pods in the namespace and may disrupt services that communicate with the Internet. Use a dedicated test namespace before applying to production workloads.

Create a test namespace:

```shell
kubectl create ns test-np
```

Expected output:

```
namespace/test-np created
```

Create a default network policy that allows pods in the test-np namespace to access only internal services. Create `default-deny.yaml`:

```yaml
kind: NetworkPolicy
apiVersion: networking.k8s.io/v1
metadata:
  namespace: test-np
  name: deny-public-net
spec:
  podSelector: {}
  ingress:
  - from:
    - ipBlock:
        cidr: 0.0.0.0/0
  egress:
  - to:
    - ipBlock:
        cidr: 192.168.0.0/16
    - ipBlock:
        cidr: 172.16.0.0/12
    - ipBlock:
        cidr: 10.0.0.0/8
```

Apply the policy:

```shell
kubectl apply -f default-deny.yaml
```

Expected output:

```
networkpolicy.networking.k8s.io/deny-public-net created
```

Confirm that the policy is in place:

```shell
kubectl get networkpolicy -n test-np
```

Expected output:

```
NAME              POD-SELECTOR   AGE
deny-public-net   <none>         1m
```

Create an exception policy that allows pods with the `public-network=true` label to access the Internet. Create `allow-specify-label.yaml`:

```yaml
kind: NetworkPolicy
apiVersion: networking.k8s.io/v1
metadata:
  name: allow-public-network-for-labels
  namespace: test-np
spec:
  podSelector:
    matchLabels:
      public-network: "true"
  ingress:
  - from:
    - ipBlock:
        cidr: 0.0.0.0/0
  egress:
  - to:
    - ipBlock:
        cidr: 0.0.0.0/0
    - namespaceSelector:
        matchLabels:
          # Allows access to critical services in kube-system, such as CoreDNS.
          # This label is set automatically on namespaces in Kubernetes 1.21 and later.
          kubernetes.io/metadata.name: kube-system
```

Apply the policy:

```shell
kubectl apply -f allow-specify-label.yaml
```

Expected output:

```
networkpolicy.networking.k8s.io/allow-public-network-for-labels created
```

Confirm that both policies are active:

```shell
kubectl get networkpolicy -n test-np
```

Expected output:

```
NAME                              POD-SELECTOR          AGE
allow-public-network-for-labels   public-network=true   1m
deny-public-net                   <none>                3m
```

Verify that a pod without the label cannot access the Internet:

```shell
kubectl run -it --namespace test-np --rm --image registry-cn-hangzhou.ack.aliyuncs.com/ack-demo/busybox:1.28 busybox-intranet
```

Inside the shell:

```shell
ping aliyun.com
```

Expected output:

```
PING aliyun.com (106.11.2xx.xxx): 56 data bytes
^C
--- aliyun.com ping statistics ---
9 packets transmitted, 0 packets received, 100% packet loss
```

The 100% packet loss confirms that the `deny-public-net` policy blocks Internet access for pods without the exception label.

Verify that a pod with the `public-network=true` label can access the Internet:

```shell
kubectl run -it --namespace test-np --labels public-network=true --rm --image registry-cn-hangzhou.ack.aliyuncs.com/ack-demo/busybox:1.28 busybox-internet
```

Inside the shell:

```shell
ping aliyun.com
```

Expected output:

```
PING aliyun.com (106.11.1xx.xx): 56 data bytes
64 bytes from 106.11.1xx.xx: seq=0 ttl=47 time=4.235 ms
64 bytes from 106.11.1xx.xx: seq=1 ttl=47 time=4.200 ms
64 bytes from 106.11.1xx.xx: seq=2 ttl=47 time=4.182 ms
^C
--- aliyun.com ping statistics ---
3 packets transmitted, 3 packets received, 0% packet loss
```

The 0% packet loss confirms that the `allow-public-network-for-labels` policy grants Internet access to pods with the `public-network=true` label.
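To give an existing workload in `test-np` Internet access, the label must be on the pods themselves, so it belongs in the pod template rather than only in the Deployment's own metadata. A minimal sketch in which the Deployment name `api-caller` and its `app` label are hypothetical; the image reuses the test image above:

```yaml
apiVersion: apps/v1
kind: Deployment
metadata:
  name: api-caller                  # hypothetical workload
  namespace: test-np
spec:
  replicas: 1
  selector:
    matchLabels:
      app: api-caller
  template:
    metadata:
      labels:
        app: api-caller
        public-network: "true"      # matched by allow-public-network-for-labels
    spec:
      containers:
      - name: app
        image: registry-cn-hangzhou.ack.aliyuncs.com/ack-demo/busybox:1.28
        command: ["sleep", "3600"]  # placeholder process that keeps the pod running
```

Removing the label from the template, and letting the Deployment roll out new pods, returns them to the namespace default.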

Best practices

Default-deny first, then open exceptions. The safest pattern for network policy design is:

Apply a deny-all policy to a namespace to block all traffic by default.

Add specific allow policies for traffic you need to permit.

Any unintended traffic is blocked unless explicitly allowed. Scenario 4 demonstrates this pattern with namespace-scoped Internet access control.
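A namespace-wide deny-all policy can be sketched as follows: an empty `podSelector` selects every pod in the namespace, and declaring both policy types without any rules blocks all ingress and egress until another policy allows it. The namespace name is a placeholder. Pair this policy with an explicit DNS allowance, otherwise name resolution stops as well.

```yaml
kind: NetworkPolicy
apiVersion: networking.k8s.io/v1
metadata:
  name: default-deny-all
  namespace: my-namespace        # placeholder: the namespace to lock down
spec:
  podSelector: {}                # selects every pod in the namespace
  policyTypes:                   # this policy governs both directions...
  - Ingress
  - Egress
  # ...and lists no ingress or egress rules, so nothing is allowed by it
```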

Keep policy count manageable. Network policies use label selectors to match pods, and every new policy adds to the evaluation cost for each connection. Keep the total number of policies in a cluster below 40 to maintain performance and simplify troubleshooting.

Always allow DNS egress. When applying egress restrictions, include a rule for UDP port 53. Without it, pods cannot resolve domain names—even connections to explicitly allowed IP addresses may fail if they depend on DNS.
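A DNS exception narrower than the `0.0.0.0/0` rule used in Scenario 3 could target only the cluster DNS pods. This sketch assumes that CoreDNS runs in `kube-system` with the conventional `k8s-app: kube-dns` label and that the namespace carries the automatic `kubernetes.io/metadata.name` label; check the labels in your cluster before relying on it. TCP port 53 is included because DNS falls back to TCP for large responses.

```yaml
kind: NetworkPolicy
apiVersion: networking.k8s.io/v1
metadata:
  name: allow-dns-egress
  namespace: my-namespace              # placeholder
spec:
  podSelector: {}                      # every pod in the namespace
  policyTypes:
  - Egress
  egress:
  - to:
    - namespaceSelector:
        matchLabels:
          kubernetes.io/metadata.name: kube-system
      podSelector:                     # same entry as namespaceSelector: both must match
        matchLabels:
          k8s-app: kube-dns            # assumed CoreDNS label
    ports:
    - protocol: UDP
      port: 53
    - protocol: TCP
      port: 53
```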

Validate before and after. Confirm baseline connectivity from Step 2 before creating any policies. After applying each policy, run the same connectivity test to confirm the policy takes effect as expected. Use kubectl describe networkpolicy <name> to inspect how Kubernetes interpreted a policy if behavior is unexpected.