Ray is an open-source framework for building scalable AI and Python applications, widely used for distributed machine learning workloads. This guide walks you through deploying a Ray Cluster on Alibaba Cloud Container Service for Kubernetes (ACK) using the KubeRay Operator.

Prerequisites

Before you begin, ensure that you have:

-

An ACK Managed Cluster Pro running Kubernetes v1.24 or later. For setup instructions, see Create an ACK managed cluster. To upgrade an existing cluster, see Manually upgrade a cluster.

-

At least one node with a minimum of 8 vCPUs and 32 GB of memory (test environment). For production environments, size nodes to match your actual workload. For GPU-accelerated workloads, configure GPU-accelerated nodes. For supported Elastic Compute Service (ECS) instance types, see Instance family.

-

kubectl installed on your local machine and connected to your cluster. For connection instructions, see Connect to a cluster using kubectl.

-

(Optional) An ApsaraDB for Tair instance for GCS fault tolerance. See Set up GCS fault tolerance below.

(Optional) Set up GCS fault tolerance

To provide fault tolerance and high availability for the Ray Cluster, create an ApsaraDB for Tair (Redis-compatible) instance that meets the following requirements:

-

Same region and Virtual Private Cloud (VPC) as your ACK Managed Cluster Pro. For setup instructions, see Step 1: Create an instance.

-

A whitelist group that allows access from the VPC CIDR block. For configuration instructions, see Step 2: Configure a whitelist.

-

A VPC endpoint (recommended). For instructions, see View connection addresses.

-

The instance password. For instructions, see Change or reset the password.

Install the KubeRay Operator

-

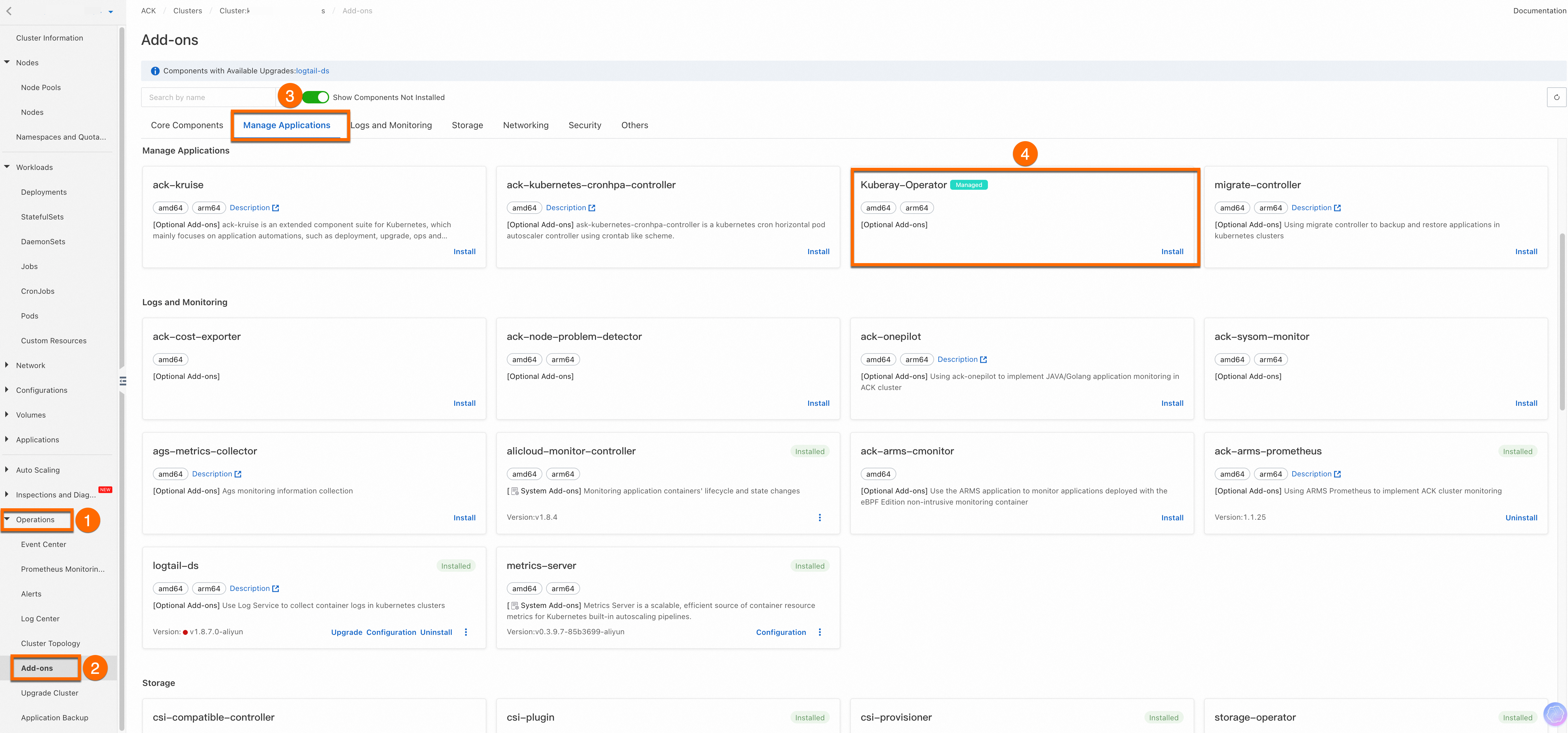

Log on to the Container Service for Kubernetes (ACK) console.

-

In the left-side navigation pane, click Clusters, then click the name of your cluster.

-

Navigate to Operations > Add-ons > Manage Applications.

-

Under Kuberay-Operator, click Install.

Deploy the Ray Cluster

This example uses the official Ray image rayproject/ray:2.36.1, which is hosted on Docker Hub. Due to network conditions, pulling from Docker Hub may fail. If the pull fails, use one of the following alternatives:

-

Subscribe to the image through Container Registry to mirror it from outside the Chinese mainland. For instructions, see Subscribe to images outside China.

-

Create a Global Accelerator instance to pull images directly from overseas sources. For instructions, see Use GA to accelerate cross-domain container image pulling in ACK.

Run the following command to create a Ray Cluster named myfirst-ray-cluster.

The manifest configures:

| Component | Setting | Notes |

|---|---|---|

| Head node | num-cpus: "0" |

Prevents Ray from scheduling tasks on the head, keeping it free for cluster management |

Worker group work1 |

1 replica, scalable up to 1,000 | Adjust replicas to match your workload |

| Autoscaling | Disabled (enableInTreeAutoscaling: false) |

Enable autoscaling only after you understand your workload's resource patterns |

Expand to view the complete command code

cat <<EOF | kubectl apply -f -

apiVersion: ray.io/v1

kind: RayCluster

metadata:

name: myfirst-ray-cluster

namespace: default

spec:

suspend: false

autoscalerOptions:

env: []

envFrom: []

idleTimeoutSeconds: 60

imagePullPolicy: Always

resources:

limits:

cpu: 2000m

memory: 2024Mi

requests:

cpu: 2000m

memory: 2024Mi

securityContext: {}

upscalingMode: Default

enableInTreeAutoscaling: false

headGroupSpec:

rayStartParams:

dashboard-host: 0.0.0.0

num-cpus: "0"

serviceType: ClusterIP

template:

spec:

containers:

- image: rayproject/ray:2.36.1

imagePullPolicy: Always

name: ray-head

resources:

limits:

cpu: "4"

memory: 4G

requests:

cpu: "1"

memory: 1G

workerGroupSpecs:

- groupName: work1

maxReplicas: 1000

minReplicas: 0

numOfHosts: 1

rayStartParams: {}

replicas: 1

template:

spec:

containers:

- image: rayproject/ray:2.36.1

imagePullPolicy: Always

name: ray-worker

resources:

limits:

cpu: "4"

memory: 4G

requests:

cpu: "4"

memory: 4G

EOFVerify the deployment

Run the following commands to confirm the cluster is running.

-

Check the Ray Cluster status:

kubectl get rayclusterExpected output:

NAME DESIRED WORKERS AVAILABLE WORKERS CPUS MEMORY GPUS STATUS AGE myfirst-ray-cluster 1 1 5 5G 0 ready 4m19s -

Check the Pods:

kubectl get podExpected output:

NAME READY STATUS RESTARTS AGE myfirst-ray-cluster-head-5q2hk 1/1 Running 0 4m37s myfirst-ray-cluster-work1-worker-zkjgq 1/1 Running 0 4m31s -

Check the Services:

kubectl get svcExpected output:

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE kubernetes ClusterIP 192.168.0.1 <none> 443/TCP 21d myfirst-ray-cluster-head-svc ClusterIP None <none> 10001/TCP,8265/TCP,8080/TCP,6379/TCP,8000/TCP 6m57s

When the Ray Cluster status is ready and all Pods show 1/1 Running, the deployment is complete.