When a Java application runs out of heap memory, the JVM exits without writing a heap dump by default. This topic shows you how to mount a Container Network File System (CNFS)-backed NAS volume into your Java pod so that the JVM automatically writes .hprof heap dumps when it runs out of memory (OOM). You then use File Browser to access and download those files.

Prerequisites

Before you begin, ensure that you have:

- A CNFS-managed NAS file system. See Manage NAS file systems using CNFS (recommended).
- A Container Registry Enterprise Edition instance. See Create an Enterprise instance.

Usage notes

Set the JVM max heap below the pod memory limit. Configure -Xmx to a value smaller than the pod's memory limit, and leave room for non-heap JVM memory such as metaspace and thread stacks. If the container's total memory usage reaches the pod memory limit before the heap is exhausted, the Linux OOM killer terminates the container immediately, and the JVM never gets a chance to write the dump file.
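The rule above comes down to simple arithmetic that you can check before choosing `-Xmx` and the memory limit. The helper below is a hypothetical sketch, not an ACK or JVM tool. It assumes that non-heap JVM memory can be approximated by a fixed overhead; the 64 MiB default is a placeholder that you should replace with a value measured for your workload.

```shell
# Hypothetical helper: succeeds if the heap plus an assumed non-heap overhead
# stays below the container memory limit. All values are in MiB.
# The default overhead of 64 MiB is a placeholder, not a JVM constant.
heap_fits_limit() {
  xmx_mib=$1
  limit_mib=$2
  overhead_mib=${3:-64}
  total_mib=$((xmx_mib + overhead_mib))
  if [ "$total_mib" -lt "$limit_mib" ]; then
    echo "ok: ${total_mib} MiB < ${limit_mib} MiB limit"
    return 0
  fi
  echo "risk: ${total_mib} MiB >= ${limit_mib} MiB limit; the container may be OOM-killed before the JVM writes a dump"
  return 1
}

# The sample's -Xmx80m with a hypothetical 256 MiB container limit.
heap_fits_limit 80 256   # prints "ok: 144 MiB < 256 MiB limit"
```

Under the same assumptions, `heap_fits_limit 80 128` reports a risk and returns a non-zero status, because 80 MiB of heap plus 64 MiB of overhead does not fit in 128 MiB.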

Use a dedicated CNFS for heap dumps. Keep the CNFS used for business data separate from the one used for JVM heap dumps. A single large OOM event can produce a multi-gigabyte .hprof file. If that file shares quota with your application's working data, it can exhaust the quota and disrupt normal operations.
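To estimate how quickly dumps consume a quota, the following sketch divides a quota by the maximum heap size. It assumes, as a rough rule of thumb, that each .hprof file is about as large as `-Xmx`; real files track the live heap at the time of the dump plus HPROF format overhead, so treat the result as an estimate only.

```shell
# Rough estimate: number of worst-case heap dumps that fit in a quota.
# Assumption: one dump is about the size of the -Xmx heap.
# Shell arithmetic uses integer division, so the result rounds down.
dumps_that_fit() {
  quota_gib=$1
  xmx_mib=$2
  echo $((quota_gib * 1024 / xmx_mib))
}

dumps_that_fit 70 80     # 70 GiB quota, sample 80 MiB heap: prints 896
dumps_that_fit 70 8192   # 70 GiB quota, hypothetical 8 GiB heap: prints 8
```

With a production-sized heap, a handful of OOM events can fill the quota, which is why heap dumps should not share it with business data.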

Sync the File Browser image before you start. The container image docker.io/filebrowser/filebrowser:v2.18.0 may fail to pull due to network restrictions. Sync it to your ACR Enterprise Edition instance by subscribing to images from outside China with the following settings:

| Field | Value |
|---|---|
| Artifact source | Docker Hub |
| Source repository coordinates | filebrowser/filebrowser |
| Subscription policy | v2.18.0 |

After the subscription completes, configure password-free image pulling between the ACR Enterprise Edition instance and your ACK cluster. See Pull images from the same account.

Deploy the Java application

Create a Deployment that runs the Java application and uses CNFS-backed storage as the heap dump destination. The example uses the sample image registry.cn-hangzhou.aliyuncs.com/acs1/java-oom-test:v1.0, which runs a program called Mycode with an 80 MiB heap limit.

The Deployment uses `subPathExpr: $(POD_NAMESPACE).$(POD_NAME)` to create a per-pod subdirectory inside the shared NAS volume. Each pod writes its dump files to its own directory, so dumps from different pods, such as replicas or pods recreated during a rollout, do not overwrite each other. Container restarts within the same pod reuse the same directory.

`subPathExpr` uses round brackets, as in `$(POD_NAME)`, not curly brackets. The variable values come from the Downward API environment variables `POD_NAME` and `POD_NAMESPACE` defined in the same container spec.
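Because `$(...)` is also command-substitution syntax in POSIX shells, the way the heredoc delimiter is written matters when you pipe this manifest to `kubectl`. With an unquoted delimiter (`<< EOF`), the shell tries to run `POD_NAMESPACE` and `POD_NAME` as commands before `kubectl` sees the text. With a quoted delimiter (`<< 'EOF'`), the text passes through unchanged. The following sketch reproduces both cases with only `cat`, so it runs without a cluster.

```shell
# POD_NAMESPACE and POD_NAME are not commands, so the command substitutions
# in the unquoted heredoc fail and expand to empty strings. The brace group
# sends the resulting "command not found" messages to /dev/null.
{
  unquoted=$(cat << EOF
subPathExpr: $(POD_NAMESPACE).$(POD_NAME)
EOF
)
} 2>/dev/null

# With a quoted delimiter, the shell performs no expansion in the body.
quoted=$(cat << 'EOF'
subPathExpr: $(POD_NAMESPACE).$(POD_NAME)
EOF
)

echo "unquoted: $unquoted"   # unquoted: subPathExpr: .
echo "quoted:   $quoted"     # quoted:   subPathExpr: $(POD_NAMESPACE).$(POD_NAME)
```

The unquoted form collapses the line to `subPathExpr: .`, which loses the per-pod subdirectory. The quoted form leaves `$(POD_NAMESPACE).$(POD_NAME)` intact for the kubelet to expand.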

```shell
# Quote the heredoc delimiter ('EOF') so that the shell passes $(POD_NAMESPACE)
# and $(POD_NAME) to Kubernetes unchanged instead of running them as commands.
cat << 'EOF' | kubectl apply -f -
apiVersion: apps/v1
kind: Deployment
metadata:
  name: java-application
spec:
  selector:
    matchLabels:
      app: java-application
  template:
    metadata:
      labels:
        app: java-application
    spec:
      containers:
      - name: java-application
        image: registry.cn-hangzhou.aliyuncs.com/acs1/java-oom-test:v1.0
        imagePullPolicy: Always
        env:
        - name: POD_NAME # Inject pod name via Downward API
          valueFrom:
            fieldRef:
              apiVersion: v1
              fieldPath: metadata.name
        - name: POD_NAMESPACE # Inject pod namespace via Downward API
          valueFrom:
            fieldRef:
              apiVersion: v1
              fieldPath: metadata.namespace
        args:
        - java
        - -Xms80m # Minimum heap size
        - -Xmx80m # Maximum heap size (keep below pod memory limit)
        - -XX:HeapDumpPath=/mnt/oom/logs # Write heap dumps to the CNFS-backed mount
        - -XX:+HeapDumpOnOutOfMemoryError # Trigger heap dump on OOM
        - Mycode
        volumeMounts:
        - name: java-oom-pv
          mountPath: "/mnt/oom/logs"
          subPathExpr: $(POD_NAMESPACE).$(POD_NAME) # Round brackets, not curly brackets
      volumes:
      - name: java-oom-pv
        persistentVolumeClaim:
          claimName: cnfs-nas-pvc
---
kind: PersistentVolumeClaim
apiVersion: v1
metadata:
  name: cnfs-nas-pvc
spec:
  accessModes:
  - ReadWriteMany
  storageClassName: alibabacloud-cnfs-nas
  resources:
    requests:
      storage: 70Gi # If directory quota is enabled, limits the subdirectory to 70 GiB
EOF
```

Verify the OOM event

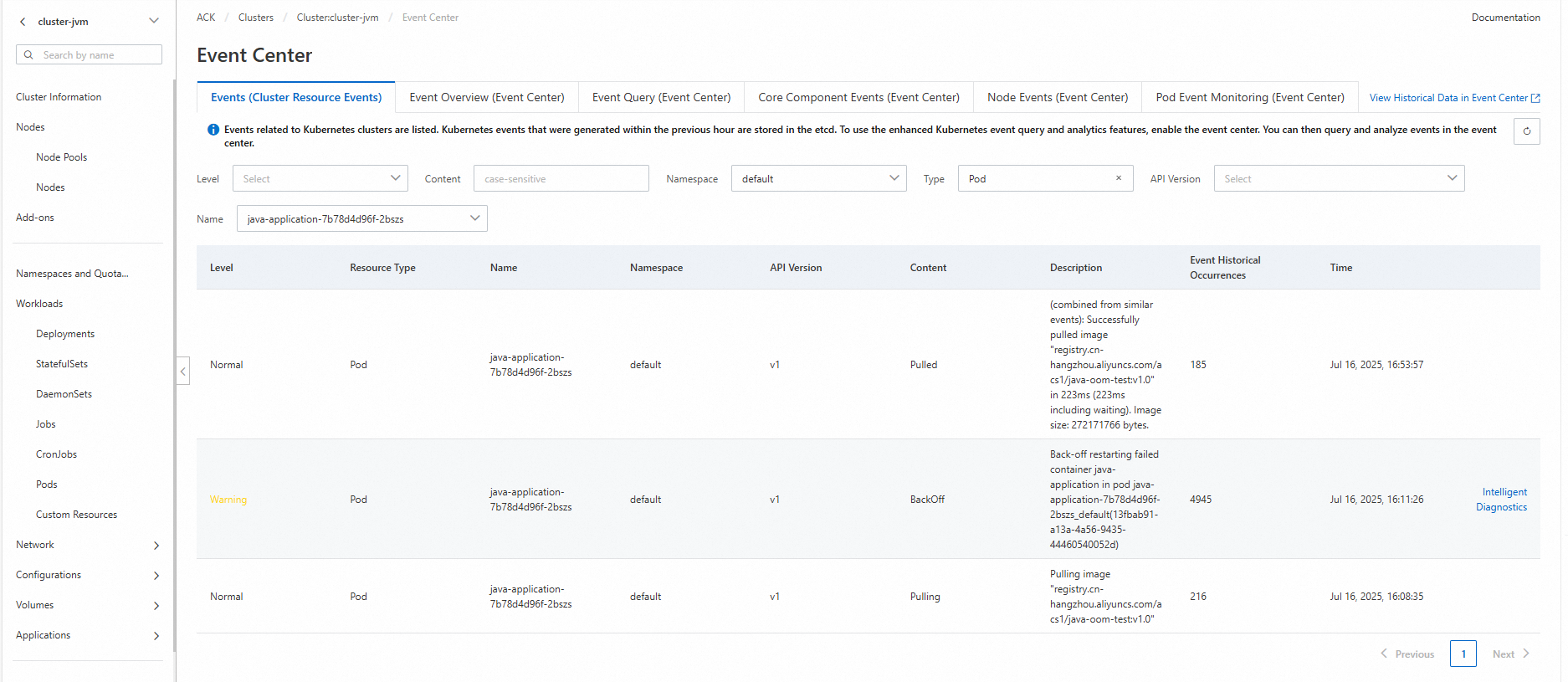

After the Deployment is running, the Mycode program allocates memory until it exhausts the 80 MiB heap and the JVM throws OutOfMemoryError. The container exits and Kubernetes restarts it; as the restarts repeat, ACK records a back-off restarting alert in Event Center.

To confirm the OOM occurred:

- Log on to the ACK console. In the left navigation pane, click Clusters.
- Find your cluster and click its name. In the left navigation pane, choose Operations > Event Center.
- Check the event list for a back-off restarting alert on the `java-application` pod.
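You can also inspect the failure from the command line. The helper below is a hypothetical sketch that interprets a container's last termination state. It assumes that Mycode lets `OutOfMemoryError` escape `main`, in which case the `java` launcher typically exits with status 1 and Kubernetes reports the reason `Error`. A container killed by the kernel for exceeding its memory limit is reported as `OOMKilled` (exit code 137) instead, and no heap dump is written.

```shell
# Hypothetical helper: map a container's last termination state to a likely cause.
# $1 = lastState.terminated.reason, $2 = lastState.terminated.exitCode
classify_termination() {
  reason=$1
  exit_code=$2
  case "$reason" in
    OOMKilled)
      echo "kernel OOM kill: memory limit reached, no heap dump" ;;
    Error)
      if [ "$exit_code" -eq 1 ]; then
        echo "JVM exited with status 1: check the previous logs for OutOfMemoryError"
      else
        echo "container exited with status $exit_code"
      fi ;;
    *)
      echo "other termination: $reason (exit code $exit_code)" ;;
  esac
}

classify_termination Error 1
classify_termination OOMKilled 137
```

To use it on a live pod, pass the output of `kubectl get pod <pod-name> -o jsonpath='{.status.containerStatuses[0].lastState.terminated.reason}'` and the matching `exitCode` field. For a JVM heap OOM, `kubectl logs <pod-name> --previous` should also show the JVM's `java.lang.OutOfMemoryError: Java heap space` and `Dumping heap to ...` lines.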

Browse the heap dump files

NAS does not have a built-in web interface for browsing files. Deploy File Browser to access the heap dump files through a browser: mount the same CNFS PVC into the File Browser container at `/rootdir`, expose File Browser through a Service, and open it in a browser.

Deploy File Browser

```shell
cat << EOF | kubectl apply -f -
apiVersion: v1
kind: ConfigMap
metadata:
  name: filebrowser
  namespace: default
  labels:
    app.kubernetes.io/instance: filebrowser
    app.kubernetes.io/name: filebrowser
data:
  .filebrowser.json: |
    {
      "port": 80,
      "address": "0.0.0.0"
    }
---
apiVersion: apps/v1
kind: Deployment
metadata:
  name: filebrowser
  namespace: default
  labels:
    app.kubernetes.io/instance: filebrowser
    app.kubernetes.io/name: filebrowser
spec:
  replicas: 1
  selector:
    matchLabels:
      app.kubernetes.io/instance: filebrowser
      app.kubernetes.io/name: filebrowser
  template:
    metadata:
      labels:
        app.kubernetes.io/instance: filebrowser
        app.kubernetes.io/name: filebrowser
    spec:
      containers:
      - name: filebrowser
        # Replace with your ACR image address after syncing filebrowser/filebrowser:v2.18.0
        image: XXXX-registry-vpc.cn-hangzhou.cr.aliyuncs.com/test/test:v2.18.0
        imagePullPolicy: IfNotPresent
        ports:
        - containerPort: 80
          name: http
          protocol: TCP
        volumeMounts:
        - mountPath: /.filebrowser.json
          name: config
          subPath: .filebrowser.json
        - mountPath: /db
          name: rootdir
        - mountPath: /rootdir
          name: rootdir
      volumes:
      - name: config
        configMap:
          name: filebrowser
          defaultMode: 420
      - name: rootdir
        persistentVolumeClaim:
          claimName: cnfs-nas-pvc # Same PVC as the java-application Deployment
EOF
```

Expected output:

```text
configmap/filebrowser created
deployment.apps/filebrowser created
```

If the resources already exist, kubectl reports `unchanged` or `configured` instead.

Create a Service

- Log on to the ACK console. In the left navigation pane, click Clusters.
- Find your cluster and click its name. In the left navigation pane, choose Network > Services.
- On the Services page, select the `default` namespace and click Create. Configure the following parameters:

  | Parameter | Value |
  |---|---|
  | Name | filebrowser |
  | Service type | SLB. Set SLB type to NLB, select Create Resource, and set Access method to Public Access. For NLB pricing, see NLB billing overview. |
  | Backend | Click +Reference Workload Label. Set Resource type to Deployments and Resources to filebrowser. |
  | Port mapping | Service port: 8080, Container port: 80, Protocol: TCP |

- Submit the configuration.

Access File Browser

-

After the Service is created, copy the endpoint address from the Services page.

-

Open a browser and go to

<endpoint-address>:8080. The File Browser login page appears. -

Log in with the default credentials: username

admin, passwordadmin.

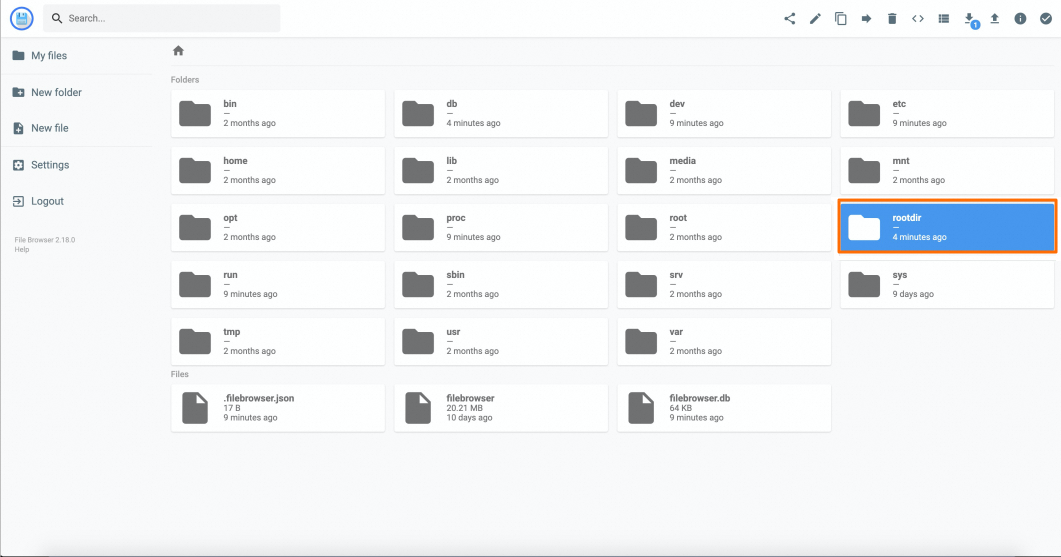

-

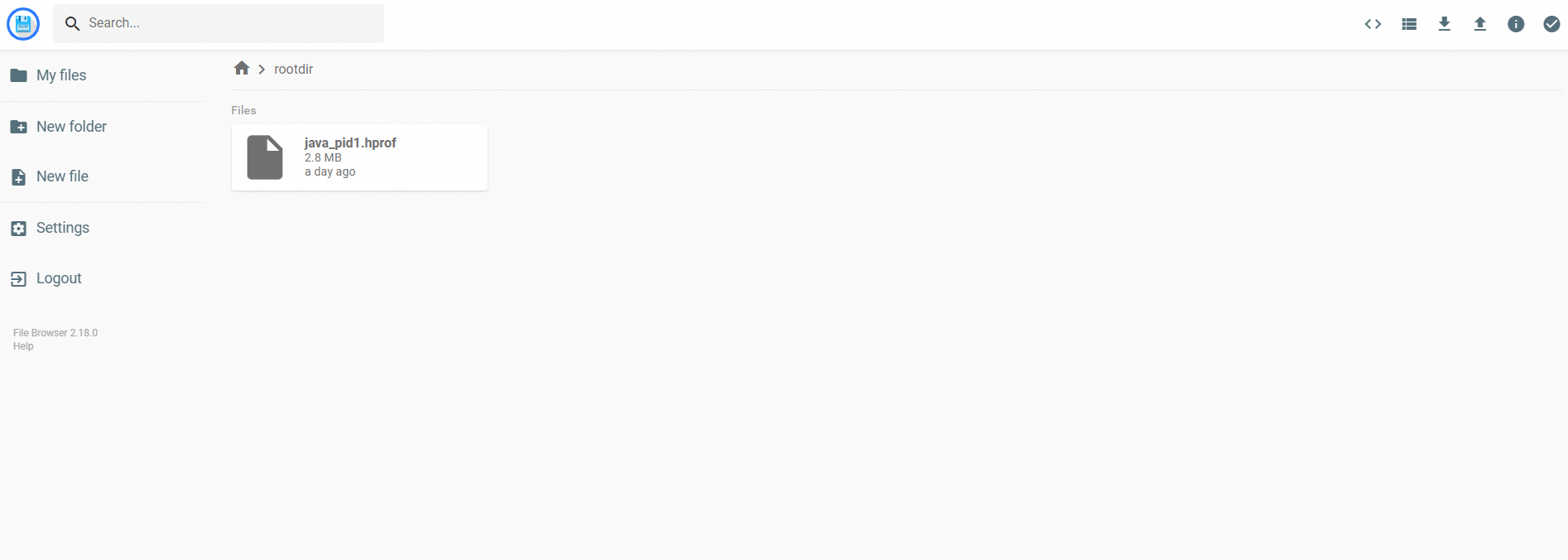

Double-click rootdir to enter the NAS mount point.

Result

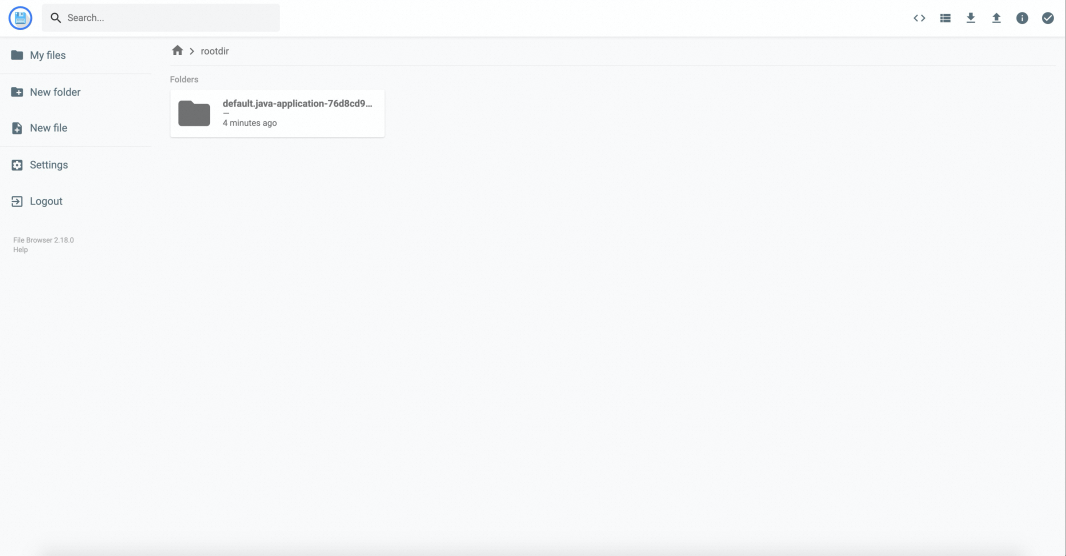

Inside `rootdir`, you will find a directory named after the pod that produced the OOM, for example `default.java-application-76d8cd95b7-prrl2`. The directory name comes from the `subPathExpr: $(POD_NAMESPACE).$(POD_NAME)` rule in the Deployment.

Open the directory to find `java_pid1.hprof`. Download it to your local machine and analyze it with Eclipse Memory Analyzer (MAT) to find which objects filled the heap and trace them back to the code that allocated them.

How it works

This setup reliably captures heap dumps across pod restarts because of three cooperating mechanisms:

- CNFS PVC with `ReadWriteMany`: The NAS-backed PVC is mounted into the `java-application` pod and the File Browser pod at the same time. NAS stores files independently of the pod lifecycle, so when the Java container crashes and restarts, the dump files remain on NAS.
- `subPathExpr` with Downward API: Each pod mounts its own subdirectory (`<namespace>.<pod-name>`) instead of the NAS root. Dumps from different pods never share a directory, and the directory name shows which pod produced each dump.
- `-XX:+HeapDumpOnOutOfMemoryError`: When the JVM throws `OutOfMemoryError`, it writes the heap contents to the path specified by `-XX:HeapDumpPath` before the error propagates and the process exits. Because that path is backed by NAS, the file survives the container exit.
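The file name in `rootdir` follows from how HotSpot interprets `-XX:HeapDumpPath`: when the value is an existing directory, the JVM creates `java_pid<pid>.hprof` inside it; otherwise it uses the value as the file name. The shell function below only mirrors that rule for illustration. It is a sketch, not part of the JVM.

```shell
# Sketch of HotSpot's naming rule for -XX:HeapDumpPath (illustration only).
# $1 = value of -XX:HeapDumpPath, $2 = PID of the JVM process
resolve_dump_file() {
  dump_path=$1
  pid=$2
  if [ -d "$dump_path" ]; then
    # Directory: the JVM appends java_pid<pid>.hprof.
    printf '%s/java_pid%s.hprof\n' "${dump_path%/}" "$pid"
  else
    # Anything else is treated as the full file name.
    printf '%s\n' "$dump_path"
  fi
}

# A temporary directory stands in for the /mnt/oom/logs mount point.
demo_dir=$(mktemp -d)
resolve_dump_file "$demo_dir" 1   # prints <demo_dir>/java_pid1.hprof
rmdir "$demo_dir"
```

In the `java-application` container, `/mnt/oom/logs` is a directory (the mount point) and the JVM runs as PID 1, which is why the dump appears as `java_pid1.hprof`.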