This topic answers common questions about the backup center.

Understand job status

Before troubleshooting, understand what each status means. A Completed status does not guarantee that all resources were processed successfully — always check the Errors and Warnings fields.

| Status | Meaning |
|---|---|
| Completed | The job finished. If resources are missing from the restore cluster, check the Warnings field. Resources may have been excluded by configuration or recycled by business logic. |
| PartiallyFailed | The job completed but failed to process some resources. Check the Errors and Warnings fields in the job details. |
| Failed | The job did not complete. Retrieve error details using the methods below. |
| InProgress | The job is still running. If a job stays in this state for an extended period, see Why is my job stuck in InProgress? |

Get error details

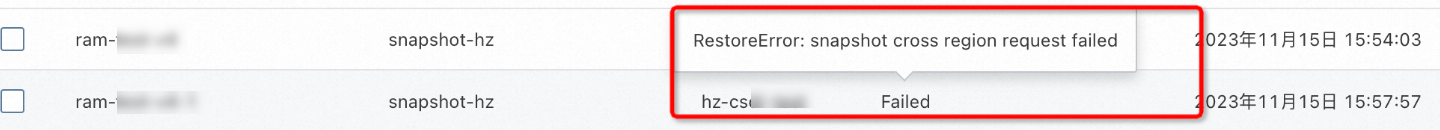

When a backup job, StorageClass conversion task, or restore job shows Failed or PartiallyFailed, use the following methods to retrieve error details.

Quick view: Hover over Failed or PartiallyFailed in the Status column for a brief error message, such as RestoreError: snapshot cross region request failed.

Full error details: Run the command for your task type to view all events, including detailed error messages.

Backup job:

kubectl -n csdr describe applicationbackup <backup-name>

StorageClass conversion task:

kubectl -n csdr describe converttosnapshot <backup-name>

Restore job:

kubectl -n csdr describe applicationrestore <restore-name>

If you use the backup center with kubectl, upgrade the migrate-controller component to the latest version before troubleshooting. This does not affect existing backups. For more information, see Manage components.
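The three describe commands above differ only in the resource kind, so they can be wrapped in a small helper. The following is a minimal sketch: the function names and the short task-type keywords (backup, convert, restore) are illustrative assumptions, not part of the backup center tooling. Only the resource kinds and the csdr namespace come from the commands above.

```shell
#!/bin/sh
# Map a task type keyword (hypothetical: backup, convert, restore)
# to the csdr resource kind used in the describe commands above.
csdr_kind_for() {
  case "$1" in
    backup)  echo "applicationbackup" ;;
    convert) echo "converttosnapshot" ;;
    restore) echo "applicationrestore" ;;
    *)       echo "unknown task type: $1" >&2; return 1 ;;
  esac
}

# Describe a task by type and name. Requires kubectl access to the cluster.
csdr_describe_task() {
  kind=$(csdr_kind_for "$1") || return 1
  kubectl -n csdr describe "$kind" "$2"
}
```

For example, `csdr_describe_task restore <restore-name>` would run the restore job command shown above.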

Console issues

Why does the console show "The working component is abnormal" or "Failed to fetch current data"?

The backup center component was not installed correctly. Check the following:

The cluster has nodes. The backup center cannot be deployed if no nodes exist.

If the cluster uses FlexVolume, switch to CSI (Container Storage Interface) first. See The migrate-controller component in a FlexVolume cluster cannot start.

If you use kubectl, verify that your YAML configurations are correct. See Use kubectl to back up and restore applications.

For ACK dedicated clusters and registered clusters, verify that the required permissions are granted. See ACK dedicated cluster and Registered cluster.

Check whether the csdr-controller and csdr-velero deployments in the csdr namespace failed due to insufficient resources or scheduling constraints, and resolve any issues.

Why does the console show "The name has been used. Change the name and try again"?

When you delete a task, the system creates a deleterequest resource in the cluster and runs a series of deletion operations — not just deleting the backup resource itself. If the deletion fails or is interrupted, some resources may remain in the cluster with the same name, causing this error.

Run the following command to delete the conflicting resource. For example, if the error is deleterequests.csdr.alibabacloud.com "xxxxx-dbr" already exists:

kubectl -n csdr delete deleterequests xxxxx-dbr

Then create the task with a new name.
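If the error text is copied from the console, the name of the conflicting resource can be pulled out of it instead of being retyped. This is a minimal sketch that assumes the message has the same shape as the example above. The function name is hypothetical.

```shell
#!/bin/sh
# Extract the quoted resource name from a message of the form
#   deleterequests.csdr.alibabacloud.com "<name>" already exists
# Prints nothing if the message does not match.
conflicting_deleterequest() {
  printf '%s\n' "$1" |
    sed -n 's/.*deleterequests\.csdr\.alibabacloud\.com "\([^"]*\)" already exists.*/\1/p'
}

# Usage (requires kubectl access to the cluster):
#   name=$(conflicting_deleterequest "$error_message")
#   [ -n "$name" ] && kubectl -n csdr delete deleterequests "$name"
```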

Why can't I select an existing backup when restoring across clusters?

The most common cause is that the backup vault has not been initialized in the target cluster. On the Restore page, find Backup Vault and click Initialize Backup Vault. After initialization completes, select the backup and restore.

If initialization fails, the backuplocation resource in the target cluster shows an Unavailable status. Run the following command to check:

kubectl get -n csdr backuplocation <backuplocation-name>

Expected output:

NAME                    PHASE       LAST VALIDATED   AGE
<backuplocation-name>   Available   3m36s            38m

If the status is Unavailable, see Why does my job fail with "VaultError: xxx"?.

If the backup vault status is fine, confirm in the source cluster console that the backup job shows Completed. Failed or in-progress backup jobs cannot be selected for cross-cluster restoration.
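When a cluster has several backup vaults, the PHASE column of the command output can be filtered rather than read by eye. The sketch below assumes the column layout shown in the expected output above (NAME first, PHASE second). The function name is hypothetical.

```shell
#!/bin/sh
# Read `kubectl -n csdr get backuplocation` output on stdin and print
# "NAME PHASE" for every row whose PHASE column is not "Available".
# Assumes PHASE is the second column, as in the expected output above.
unavailable_vaults() {
  awk 'NR > 1 && $2 != "Available" { print $1, $2 }'
}

# Usage (requires kubectl access to the cluster):
#   kubectl -n csdr get backuplocation | unavailable_vaults
```

A row with an empty PHASE would also be reported, because the next column shifts into second position. That is acceptable for this check, since such a vault is not yet validated.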

Why does the console show "The service role required by the current component has not been authorized" (AddonRoleNotAuthorized)?

Starting from migrate-controller 1.8.0, the cloud resource authentication logic for ACK managed clusters was updated. The first time you install or upgrade to this version, the Alibaba Cloud account must complete authorization.

If you are logged in with an Alibaba Cloud account, click Authorize.

If you are logged in as a RAM user, click Copy Authorization Link and send it to the Alibaba Cloud account holder for authorization.

Why does the console show "The current account has not been granted the cluster RBAC permissions required for this operation" (APISERVER.403)?

The console interacts with the API server to submit and monitor backup and restore jobs. The default permission sets for O&M engineers and developers are missing some permissions required by the backup center.

Grant the following ClusterRole permissions to backup center operators. For instructions, see Use custom RBAC roles to restrict resource operations in a cluster.

kind: ClusterRole
apiVersion: rbac.authorization.k8s.io/v1
metadata:
  name: csdr-console
rules:
  - apiGroups: ["csdr.alibabacloud.com","velero.io"]
    resources: ['*']
    verbs: ["get","create","delete","update","patch","watch","list","deletecollection"]
  - apiGroups: [""]
    resources: ["namespaces"]
    verbs: ["get","list"]
  - apiGroups: ["storage.k8s.io"]
    resources: ["storageclasses"]
    verbs: ["get","list"]

Why does the backup center component fail to upgrade or uninstall, and the csdr namespace stays in Terminating?

The backup center exited abnormally, leaving jobs in the InProgress state. The finalizers field on these jobs is blocking resource deletion.

Run the following command to identify what is blocking the namespace:

kubectl describe ns csdr

Confirm the stuck jobs are no longer needed and delete their finalizers. After the csdr namespace is deleted:

For upgrades, reinstall the migrate-controller component.

For uninstalls, the component is now removed.
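One common way to delete finalizers with kubectl is a merge patch that sets metadata.finalizers to null. The sketch below only prints such a command so that it can be reviewed before it is run. The function name is hypothetical, and the resource kind and name must be taken from the kubectl describe ns csdr output. Clearing finalizers skips the cleanup they guard, so apply it only to jobs that are confirmed to be no longer needed.

```shell
#!/bin/sh
# Print (do not run) a kubectl merge patch that clears metadata.finalizers
# on one resource in the csdr namespace.
#   $1: resource kind, for example applicationbackup
#   $2: resource name
finalizer_patch_cmd() {
  printf "kubectl -n csdr patch %s %s --type=merge -p '%s'\n" \
    "$1" "$2" '{"metadata":{"finalizers":null}}'
}

# Usage: print the command, review it, then paste it into a shell:
#   finalizer_patch_cmd applicationbackup <backup-name>
```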

General job failures

Why does my job fail with "internal error"?

The component or an underlying cloud service encountered an unexpected exception. For example, the cloud service may not be available in the current region.

If the error is HBR backup/restore internal error, check the Cloud Backup console to verify that the container backup feature is available in your region.

Why does my job fail with "create cluster resources timeout"?

During a StorageClass conversion or restoration, the backup center creates temporary pods, persistent volume claims (PVCs), and persistent volumes (PVs). If these resources stay in an unavailable state for too long, this timeout error occurs.

Identify the stuck resource:

kubectl -n csdr describe <applicationbackup/converttosnapshot/applicationrestore> <task-name>

For example, an output like the following means that the PVC demo-pvc-for-convert202311151045 in the default namespace is not binding:

wait for created tmp pvc default/demo-pvc-for-convert202311151045 for convertion bound time out

Check the PVC status to find the root cause:

kubectl -n default describe pvc demo-pvc-for-convert202311151045

Common causes include:

Insufficient cluster or node resources.

The restore cluster is missing the required storage class. Use the StorageClass conversion feature to select an available storage class before restoring.

The underlying storage of the storage class is unavailable — for example, the specified disk type is not supported in the current zone.

The Container Network File System (CNFS) associated with alibabacloud-cnfs-nas is abnormal. See Use CNFS to manage NAS file systems (recommended).

A storage class with volumeBindingMode: Immediate was selected in a multi-zone cluster.

For storage troubleshooting guidance, see Troubleshoot storage issues.

Why does my job fail with "addon status is abnormal"?

The components in the csdr namespace are abnormal. Check their status:

kubectl get pod -n csdr
kubectl describe pod <pod-name> -n csdr

For resolution steps, see Why is my job stuck in InProgress?.
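To narrow down which pods to describe, the pod list can be filtered by its STATUS column. This is a minimal sketch that assumes the default kubectl get pod column order (NAME, READY, STATUS). The function name is hypothetical.

```shell
#!/bin/sh
# Read `kubectl get pod -n csdr` output on stdin and print "NAME STATUS"
# for every pod whose STATUS is neither Running nor Completed.
# Assumes the default column order: NAME READY STATUS RESTARTS AGE.
abnormal_pods() {
  awk 'NR > 1 && $3 != "Running" && $3 != "Completed" { print $1, $3 }'
}

# Usage (requires kubectl access to the cluster):
#   kubectl get pod -n csdr | abnormal_pods
```

The filter does not catch pods that report Running but are not ready (for example, READY 0/1), so the READY column still deserves a glance.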

Why does my job fail with "VaultError: xxx"?

This error means the backup vault cannot reach the Object Storage Service (OSS) bucket. Work through the following checks in order.

1. Verify the OSS bucket exists.

Log on to the OSS console and confirm the bucket associated with the backup vault exists. If it does not, create a new bucket and re-associate it. See Create buckets.

You cannot create a backup vault with the same name as a deleted one, nor associate a vault with an OSS bucket whose name does not follow the cnfs-oss-* format. If you have an existing vault associated with an incorrectly named bucket, create a new vault with a different name and associate it with a cnfs-oss-* bucket.

2. Verify OSS access permissions.

The required steps depend on your cluster type:

ACK Pro clusters: No OSS permission configuration is needed if the OSS bucket name starts with cnfs-oss-.

ACK dedicated clusters and registered clusters: Configure OSS permissions as described in Install migrate-controller and grant permissions.

ACK managed clusters where the component was installed or upgraded to v1.8.0 or later outside the console: Run the following command to check whether OSS permissions are configured:

kubectl get secret -n kube-system | grep addon.aliyuncsmanagedbackuprestorerole.token

Expected output:

addon.aliyuncsmanagedbackuprestorerole.token   Opaque   1   62d

If the output matches, permissions are in place. If not, grant permissions using one of these methods:

Click Authorize to authorize your Alibaba Cloud account. This only needs to be done once per account.

Follow the instructions for ACK dedicated clusters and registered clusters. See Install the backup service component and configure permissions.

3. Check the network configuration.

kubectl get backuplocation <backuplocation-name> -n csdr -o yaml | grep network

network: internal means the vault accesses OSS over the internal network.

network: public means the vault accesses OSS over the Internet. If this causes a timeout, verify that the cluster can access the Internet. See Enable an existing ACK cluster to access the Internet.

The backup vault must use public network access in these scenarios:

The cluster and the OSS bucket are in different regions.

The cluster is an ACK Edge cluster.

The cluster is a registered cluster that is not connected to a VPC via Cloud Enterprise Network (CEN), Express Connect, or VPN — or a route to the internal OSS CIDR block of the region is not configured.

To switch to public network access, run:

kubectl patch -n csdr backuplocation/<backuplocation-name> --type='json' -p \
  '[{"op":"add","path":"/spec/config","value":{"network":"public","region":"<region-id>"}}]'
kubectl patch -n csdr backupstoragelocation/<backuplocation-name> --type='json' -p \
  '[{"op":"add","path":"/spec/config","value":{"network":"public","region":"<region-id>"}}]'

Replace <region-id> with the region of the OSS bucket, such as cn-hangzhou.

Why does my job fail with "HBRError: check HBR vault error"?

Cloud Backup is not activated or lacks the required permissions.

Activate the Cloud Backup service. See Enable Cloud Backup.

For clusters in China (Ulanqab), China (Heyuan), or China (Guangzhou), also authorize Cloud Backup to access API Gateway after activation. See (Optional) Step 3: Authorize the Cloud Backup service to access API Gateway.

For ACK dedicated clusters and registered clusters, verify that Cloud Backup RAM (Resource Access Management) permissions are granted. See Install the migrate-controller backup service component and configure permissions.

Why does my job fail with "HBRError: ... code: 400, Illegal request. Please modify the parameters"?

The ack-backup-data Cloud Backup repository in the region was deleted.

When you first create a backup in a region, the backup center automatically creates an ack-backup-data repository to store backups. If this repository is deleted, subsequent jobs fail with this error.

After the repository is deleted, backups created before the deletion cannot be restored. The following steps only create a new repository for future backups.

In all clusters using the backup center in the affected region, clear the backup vault records:

kubectl -n csdr delete backuplocation --all
kubectl -n csdr delete backupstoragelocation --all

Return to the cluster and create a new backup. The component automatically creates a new ack-backup-data repository and associates it with the backup vault.

Why does my job fail with "hbr task finished with unexpected status: FAILED, errMsg ClientNotExist"?

The Cloud Backup client (the hbr-client DaemonSet) on a node in the csdr namespace is not running correctly.

Check for abnormal hbr-client pods:

kubectl -n csdr get pod -lapp=hbr-client

If any pods are in an abnormal state, check whether the cause is insufficient pod IP addresses, memory, or CPU. For pods in the CrashLoopBackOff state, view the logs:

kubectl -n csdr logs -p <hbr-client-pod-name>

If the logs contain SDKError: StatusCode: 403, Code: MagpieBridgeSlrNotExist, follow (Optional) Step 3: Authorize Cloud Backup to access API Gateway to grant the required permissions.

For other SDK errors, use the EC error code to troubleshoot. See Troubleshoot issues using EC error codes.

Why is my job stuck in InProgress?

Cause 1: Components in the csdr namespace are abnormal

Check whether the components are restarting or failing to start:

kubectl get pod -n csdr
kubectl describe pod <pod-name> -n csdr

If the cause is out-of-memory (OOM):

If the affected pod is csdr-velero-* and your restore cluster runs many production namespaces, Velero's Informer Cache may be consuming too much memory. To disable it, add --disable-informer-cache=true to the migrate-controller args:

kubectl -n kube-system edit deploy migrate-controller

Add the parameter to the container's args:

name: migrate-controller
args:
- --disable-informer-cache=true

Disabling the Informer Cache reduces memory usage but may affect job performance. Monitor job performance after making this change.

To increase the memory limit without disabling the cache, run:

kubectl -n csdr patch deploy <deploy-name> -p '{"spec":{"template":{"spec":{"containers":[{"name":"<container-name>","resources":{"limits":{"memory":"<new-limit-memory>"}}}]}}}}'

For <deploy-name>, use csdr-controller for csdr-controller-* pods and csdr-velero for csdr-velero-* pods. Replace <container-name> with the name of the container in that deployment whose limit you want to change.

If the cause is missing Cloud Backup permissions:

Confirm Cloud Backup is activated. If not, activate it at Cloud Backup.

For ACK dedicated clusters and registered clusters, confirm that Cloud Backup permissions are configured. See Install migrate-controller and grant permissions.

Check whether the token required by the Cloud Backup client exists:

Find the node where hbr-client-* is running:

kubectl get pod <hbr-client-***> -n csdr -owide

Change the node label from true to false:

kubectl label node <node-name> csdr.alibabacloud.com/agent-enable=false --overwrite

Describe the hbr-client pod:

kubectl -n csdr describe pod <hbr-client-***>

If the events show couldn't find key HBR_TOKEN, the token is missing.

Important: The token is automatically recreated the next time you run a backup or restore. If you copy a token from another cluster, the hbr-client pod started with it will not be active. Delete the copied token and the associated hbr-client-* pod, then repeat the steps above.

Cause 2: Disk snapshot permissions are not configured

If an application has disk volumes mounted and the backup job stays in InProgress, check the VolumeSnapshot resources:

kubectl get volumesnapshot -n <backup-namespace>

If the READYTOUSE field stays false for all VolumeSnapshots, check the following:

In the ECS console, verify that the disk snapshot feature is enabled in the region. If not, enable it. See Enable Snapshots.

Check that the CSI provisioner pod is running:

kubectl -n kube-system get pod -l app=csi-provisioner

Verify that disk snapshot permissions are configured. The steps depend on your cluster type:

ACK managed clusters: In the RAM console, check that the AliyunCSManagedBackupRestoreRole policy includes permissions for hbr:*, ecs:CreateSnapshot, and oss:* actions on acs:oss:*:*:cnfs-oss*. If the role is missing, go to the RAM Quick Authorization page to grant it. The required policy is:

{
  "Statement": [
    {
      "Effect": "Allow",
      "Action": [
        "hbr:CreateVault",
        "hbr:CreateBackupJob",
        "hbr:DescribeVaults",
        "hbr:DescribeBackupJobs2",
        "hbr:DescribeRestoreJobs",
        "hbr:SearchHistoricalSnapshots",
        "hbr:CreateRestoreJob",
        "hbr:AddContainerCluster",
        "hbr:DescribeContainerCluster",
        "hbr:CancelBackupJob",
        "hbr:CancelRestoreJob",
        "hbr:DescribeRestoreJobs2"
      ],
      "Resource": "*"
    },
    {
      "Effect": "Allow",
      "Action": [
        "ecs:CreateSnapshot",
        "ecs:DeleteSnapshot",
        "ecs:DescribeSnapshotGroups",
        "ecs:CreateAutoSnapshotPolicy",
        "ecs:ApplyAutoSnapshotPolicy",
        "ecs:CancelAutoSnapshotPolicy",
        "ecs:DeleteAutoSnapshotPolicy",
        "ecs:DescribeAutoSnapshotPolicyEX",
        "ecs:ModifyAutoSnapshotPolicyEx",
        "ecs:DescribeSnapshots",
        "ecs:DescribeInstances",
        "ecs:CopySnapshot",
        "ecs:CreateSnapshotGroup",
        "ecs:DeleteSnapshotGroup"
      ],
      "Resource": "*"
    },
    {
      "Effect": "Allow",
      "Action": [
        "oss:PutObject",
        "oss:GetObject",
        "oss:DeleteObject",
        "oss:GetBucket",
        "oss:ListObjects",
        "oss:ListBuckets",
        "oss:GetBucketStat"
      ],
      "Resource": "acs:oss:*:*:cnfs-oss*"
    }
  ],
  "Version": "1"
}

ACK dedicated clusters: In the ACK console, go to the cluster's Cluster Information page, find Master RAM Role, and check the Permission Management tab. If the k8sMasterRolePolicy-Csi-* policy is missing or incomplete, grant the same policy shown above to the Master RAM role.

Registered clusters: Only registered clusters where all nodes are Alibaba Cloud Elastic Compute Service (ECS) instances can use the disk snapshot feature. Check whether the required permissions were configured when you installed the CSI storage plug-in. See Configure RAM permissions for the CSI component.

Cause 3: Non-disk volume type

Cross-region restore is only supported for disk volumes (migrate-controller 1.7.7 and later). For other volume types, if you are using a storage service that supports Internet access — such as OSS — create a statically provisioned PV and PVC and restore the application without StorageClass conversion. See Use an ossfs 1.0 statically provisioned volume.

Backup failures

Why does my backup fail with "backup already exists in OSS bucket"?

A backup with the same name already exists in the OSS bucket associated with the backup vault. Create the backup with a new name.

A backup may be invisible in the current cluster for several reasons: it belongs to an in-progress or failed job (which are not synchronized across clusters), it was deleted in a different cluster (the file is marked but not removed from OSS), or the current cluster is not associated with the vault that stored it.

Why does my backup fail with "get target namespace failed"?

This usually occurs in scheduled backup jobs. The namespace selection is invalid:

If you selected Include, all the selected namespaces have been deleted.

If you selected Exclude, the excluded namespaces no longer exist in the cluster.

Update the backup plan to fix the namespace selection.

Why does my backup fail with "velero backup process timeout"?

Two common causes:

OSS bucket storage class: If the bucket's storage class is Archive, Cold Archive, or Deep Cold Archive, the backup center cannot update metadata files (archived files must be restored first). Change the bucket's storage class to Standard. To keep archived data at lower cost, configure a lifecycle rule to automatically convert the storage class. See Convert storage classes.

Subtask timeout: The default timeout for backup subtasks is 60 minutes (migrate-controller 1.7.7 and later). If your cluster has many resources or the API server has high latency, increase this value in the csdr-config ConfigMap:

kubectl edit -n csdr cm csdr-config

Add velero_timeout_minutes to the applicationBackup section. For example, to set a 100-minute timeout:

apiVersion: v1
data:
  applicationBackup: |
    ...
    velero_timeout_minutes: 100

Restart the controller for the change to take effect:

kubectl -n csdr delete pod -l control-plane=csdr-controller

Why does my backup fail with "HBR backup request failed"?

Three possible causes:

Incompatible storage plug-in: If your cluster uses a non-Alibaba Cloud CSI storage plug-in, or the PV is not a standard Kubernetes volume type (such as NFS or LocalVolume), submit a ticket for assistance.

Block mode volume: Cloud Backup does not support volumes whose VolumeMode is Block. If your cluster uses CSI, disk snapshots are used for data backup by default, and disk snapshots support Block mode volumes. If the storage plug-in type is incorrect, switch to CSI, reinstall the backup component, and run the backup again.

Cloud Backup client issue: For file system volumes (OSS, NAS, CPFS, or local), the Cloud Backup client may have timed out or failed. To investigate:

Log on to the Cloud Backup console.

Go to Backup > Container Backup and click the Backup Jobs tab.

Select the region in the top navigation bar.

Search for <backup-name>-hbr to view the job status and failure reason. See Back up ACK clusters.

To query a StorageClass conversion or backup job, search for the backup name.

Why does my backup fail with "hbr task finished with unexpected status: FAILED, errMsg SOURCE_NOT_EXIST"?

For third-party CSI or self-managed storage types (NFS, Ceph):

The backup center uses the standard Kubernetes volume mount path as the data backup path. For standard CSI, the default path is /var/lib/kubelet/pods/<pod-uid>/volumes/kubernetes.io~csi/<pv-name>/mount. If the kubelet root path in your cluster has been changed, Cloud Backup may not find the data.

Log on to the node where the volume is mounted and troubleshoot:

Find the kubelet root path. Run:

ps -elf | grep kubelet

If the startup command includes --root-dir, that value is the kubelet root path.

If it includes --config, check the config file for the root-dir field.

If neither is present, check /etc/systemd/system/kubelet.service for an EnvironmentFile reference, then check that file for ROOT_DIR.

If nothing is found, the kubelet root path is the default /var/lib/kubelet.

Check whether the root path is a symbolic link:

ls -al <root-dir>

If the output shows something like kubelet -> /var/lib/container/kubelet, the actual root path is /var/lib/container/kubelet.

Confirm that the target volume's data exists under the root path at <root-dir>/pods/<pod-uid>/volumes.

Set the KUBELET_ROOT_PATH environment variable in the csdr/csdr-controller deployment to the actual kubelet root path.
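The first check in the steps above, reading --root-dir from the kubelet startup command, can be sketched as a small helper. It covers only that one case: it does not read a --config file or an EnvironmentFile, and it does not resolve symbolic links, so a default result still needs the remaining checks. The function name is hypothetical.

```shell
#!/bin/sh
# Print the value of --root-dir found in a kubelet command line, accepting
# both "--root-dir=/path" and "--root-dir /path". If the flag is absent,
# print the default /var/lib/kubelet.
#   $1: the kubelet command line, for example taken from `ps -elf`
kubelet_root_dir() {
  dir=$(printf '%s\n' "$1" | sed -n 's/.*--root-dir[= ]\([^ ]*\).*/\1/p')
  if [ -n "$dir" ]; then
    echo "$dir"
  else
    echo "/var/lib/kubelet"
  fi
}

# Usage on the node:
#   kubelet_root_dir "$(ps -elf | grep '[k]ubelet' | head -n 1)"
```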

For HostPath storage:

HostPath does not create a mount path under the kubelet root path. The backup component cannot read data from the node path by default. Submit a ticket for assistance.

Why does my backup fail with "check backup files in OSS bucket failed", "upload backup files to OSS bucket failed", or "download backup files from OSS bucket failed"?

The OSS server returned an error during a file operation on the backup vault bucket. Three possible causes:

KMS permissions missing: If you enabled server-side encryption with a Key Management Service (KMS) customer master key (CMK) on the OSS bucket, the backup center needs additional permissions. See Does the backup center support KMS encryption for the associated OSS bucket?.

Incomplete OSS permissions: For ACK dedicated clusters and registered clusters, check the permission policy of the RAM user used during component installation. See Step 1: Configure permissions.

Revoked authentication credentials: For ACK dedicated clusters and registered clusters, confirm that the RAM user's authentication credentials are still valid. If they were revoked, get new credentials, update the alibaba-addon-secret Secret in the csdr namespace, and restart the component:

kubectl -n kube-system delete pod -l app=migrate-controller

Why does my backup show PartiallyFailed with "PROCESS velero partially completed"?

Some cluster resources failed to back up. Run the following command to see which resources failed and why:

kubectl -n csdr exec -it $(kubectl -n csdr get pod -l component=csdr | tail -n 1 | cut -d ' ' -f1) -- ./velero describe backup <backup-name>

Check the Errors and Warnings fields in the output and fix the issues. For additional logs:

kubectl -n csdr exec -it $(kubectl -n csdr get pod -l component=csdr | tail -n 1 | cut -d ' ' -f1) -- ./velero backup logs <backup-name>

Why does my backup show PartiallyFailed with "PROCESS hbr partially completed"?

Cloud Backup failed to back up some file system volumes (OSS, NAS, CPFS, or local volumes). This can occur because:

The storage plug-in used by some volumes is not supported.

Files were deleted during the backup, causing a consistency failure.

To investigate, search for <backup-name>-hbr on the Backup Jobs tab of the Cloud Backup console. Select the correct region and review the job status and failure reason. See Back up ACK clusters.

StorageClass conversion failures

Why does my StorageClass conversion fail with "storageclass xxx not exists"?

The target storage class selected for conversion does not exist in the current cluster.

Reset the StorageClass conversion task:

cat << EOF | kubectl apply -f -
apiVersion: csdr.alibabacloud.com/v1beta1
kind: DeleteRequest
metadata:
  name: reset-convert
  namespace: csdr
spec:
  deleteObjectName: "<backup-name>"
  deleteObjectType: "Convert"
EOF

Create the required storage class in the cluster.

Run the restore job again with StorageClass conversion configured.

Why does my StorageClass conversion fail with "only support convert to storageclass with CSI diskplugin or nasplugin provisioner"?

StorageClass conversion only supports Alibaba Cloud CSI disk and NAS volume types as targets. For other requirements, submit a ticket.

If you are using a storage service that supports public network access (such as OSS), create a statically provisioned PV and PVC and restore the application without StorageClass conversion. See Use an ossfs 1.0 statically provisioned volume.

Why does my StorageClass conversion fail with "current cluster is multi-zoned"?

In a multi-zone cluster, if you convert to a disk-type StorageClass whose volumeBindingMode is Immediate, CSI creates the PV in a fixed zone. Pods cannot be scheduled to a different zone, leaving them in Pending.

Reset the StorageClass conversion task (use the same command as above).

Select the correct storage class:

Console: Select alicloud-disk. The default is alicloud-disk-topology-alltype.

Command line: Use alicloud-disk-topology-alltype. Alternatively, set volumeBindingMode to WaitForFirstConsumer to ensure the PV is created in the same zone as the pod.

Run the restore job again.

Restore failures

Why does my restore fail with "multi-node writing is only supported for block volume"?

The application to be restored has a volume whose AccessMode is ReadWriteMany or ReadOnlyMany. When restoring to Alibaba Cloud disk storage (which does not support multiple mounts by default), CSI blocks the mount.

This occurs in three scenarios:

Older CSI version or FlexVolume in the backup cluster: Earlier CSI versions did not check AccessModes during mounting. The volume fails when restored to a cluster with a newer CSI version. Starting from v1.8.4, the backup component automatically converts disk volume AccessModes to ReadWriteOnce. Upgrade the component and restore again.

Missing storage class in the restore cluster: The volume is matched to an Alibaba Cloud disk volume by default. Create a storage class with the same name in the restore cluster before restoring, or use StorageClass conversion to specify the target.

Manual StorageClass conversion to disk: Add the convertToAccessModes parameter to convert AccessModes to ReadWriteOnce. See convertToAccessModes.

Why does my restore fail with "only disk type PVs support cross-region restore in current version"?

Cross-region restore is only supported for disk volumes (migrate-controller 1.7.7 and later). For other storage types that support Internet access (such as OSS), create a statically provisioned PV and PVC and restore the application. See Use an ossfs 1.0 statically provisioned volume.

Why does my restore fail with "ECS snapshot cross region request failed"?

Cross-region restore for disk volumes requires ECS disk snapshot permissions, which are not granted by default for all cluster types.

For ACK dedicated clusters and registered clusters connected to self-managed Kubernetes deployed on ECS instances, grant ECS disk snapshot permissions. See Registered cluster.

Why does my restore fail with "accessMode of PVC xxx is xxx"?

The disk volume being restored has an AccessMode of ReadOnlyMany or ReadWriteMany. CSI enforces the following rules:

Only volumes with multiAttach enabled can be mounted to multiple instances.

Volumes with VolumeMode: Filesystem (ext4 or xfs) can only be mounted to multiple instances in read-only mode.

Two recommended approaches:

If you are converting a multi-mount volume (such as OSS or NAS) to disk storage, create a new restore job and select alibabacloud-cnfs-nas as the target for StorageClass conversion. This uses a CNFS-managed NAS volume, which supports multiple mounts. See Use CNFS to manage NAS file systems (recommended).

If the backed-up disk PV does not meet current CSI requirements (it was backed up when the CSI version was lower and AccessMode detection was not enforced), migrate your workloads to use dynamically provisioned disk volumes to avoid forced disk detachment during scheduling.

My restore status is Completed, but some resources are missing. Why?

A Completed status does not guarantee all resources were restored. Check each possible cause:

The resource was not backed up. Run the following command to inspect the backup:

kubectl -n csdr exec -it $(kubectl -n csdr get pod -l component=csdr | tail -n 1 | cut -d ' ' -f1) -- ./velero describe backup <backup-name> --details

Cluster-level resources of pods running in namespaces not selected for backup are not backed up by default. For cluster-level backup configuration, see Cluster-level backup.

The resource was excluded during restoration. Check whether the namespace, resource type, or other filters in the restore job excluded the resource, and re-run the restore.

The restore subtask partially failed. Run the following command to identify failures:

kubectl -n csdr exec -it $(kubectl -n csdr get pod -l component=csdr | tail -n 1 | cut -d ' ' -f1) -- ./velero describe restore <restore-name>

Fix the issues in the Errors and Warnings fields.

The resource was recycled after creation. Check the audit logs for the resource to determine whether it was deleted after being created, due to ownerReferences or business logic.

Other questions

The migrate-controller component in a FlexVolume cluster cannot start

The migrate-controller component does not support FlexVolume clusters. Migrate to CSI before using the backup center:

Migrate from FlexVolume to CSI using the csi-compatible-controller component

Migrate statically provisioned NAS volumes from FlexVolume to CSI

Migrate statically provisioned OSS volumes from FlexVolume to CSI

For other cases, join the DingTalk user group (group ID: 35532895) for consultation.

To back up applications in a FlexVolume cluster and restore them to a CSI cluster during migration, see Use the backup center to migrate applications in a Kubernetes cluster that runs an older version.

Can I modify a backup vault?

No. To make changes, delete the current backup vault and create a new one with a different name.

Because a backup vault is a shared resource that may be in active use at any time, modifying its parameters risks data inaccessibility during ongoing backups or restores. You also cannot create a new vault with the same name as a deleted one.

Can I use an OSS bucket whose name is not in the "cnfs-oss-\*" format?

For clusters other than ACK dedicated clusters and registered clusters, the backup center has read/write access to OSS buckets named in the cnfs-oss-* format by default. Using a differently named bucket requires additional configuration.

Configure OSS permissions for the component. See ACK dedicated cluster.

Restart the backup service component:

kubectl -n csdr delete pod -l control-plane=csdr-controller

kubectl -n csdr delete pod -l component=csdr

After creating a vault with a non-standard bucket name, wait for the connectivity check to complete (approximately five minutes) before starting backup or restore operations. Check the vault status:

kubectl -n csdr get backuplocation

Expected output:

NAME                    PHASE       LAST VALIDATED   AGE
a-test-backuplocation   Available   7s               6d1h

How do I specify the backup schedule when creating a backup plan?

The backup schedule supports two formats:

Cron expression: For example, 1 4 * * * runs a backup at 4:01 AM every day.

Interval: For example, 6h30m runs a backup every 6 hours and 30 minutes.

Cron format reference:

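* * * * *
| | | | |
| | | | ·----- day of week (0 - 6, Sun to Sat)
| | | ·-------- month (1 - 12)
| | ·----------- day of month (1 - 31)
| ·-------------- hour (0 - 23)
·----------------- minute (0 - 59)

Example: 0 2 15 * * runs a backup at 2:00 AM on the 15th of each month. Leave the day-of-week field as * here: in standard cron semantics, restricting both day of month and day of week makes the job run when either field matches.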
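To make the interval format concrete, the following sketch converts an interval string into seconds. It assumes the interval is a sequence of integer-plus-unit pairs that use only the units h, m, and s, as in 6h30m. The function name interval_to_seconds is illustrative and is not part of the backup center.

```shell
# Convert an interval such as 6h30m into seconds.
# Assumption: only the units h, m, and s appear, each preceded by an integer
# without leading zeros.
interval_to_seconds() {
  rest=$1
  total=0
  [ -n "$rest" ] || { echo "empty interval" >&2; return 1; }
  while [ -n "$rest" ]; do
    num=${rest%%[hms]*}              # digits before the next unit letter
    case "$num" in
      ''|*[!0-9]*) echo "invalid interval: $1" >&2; return 1 ;;
    esac
    rest=${rest#"$num"}              # drop the digits
    unit=${rest%"${rest#?}"}         # first remaining character is the unit
    rest=${rest#?}                   # drop the unit
    case "$unit" in
      h) total=$((total + num * 3600)) ;;
      m) total=$((total + num * 60)) ;;
      s) total=$((total + num)) ;;
      *) echo "invalid interval: $1" >&2; return 1 ;;
    esac
  done
  echo "$total"
}
```

For example, interval_to_seconds 6h30m prints 23400, which is 6 hours and 30 minutes expressed in seconds. A value without a unit, such as 30, is rejected.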

What changes does a restore job make to backed-up YAML resources?

Restore jobs make the following automatic adjustments:

Disk volume size: If a disk volume is smaller than 20 GiB, the size is increased to 20 GiB.

Services: Restored based on the Service type:

NodePort Services: Service ports are retained by default during cross-cluster restoration.

LoadBalancer Services:

When ExternalTrafficPolicy is Local, HealthCheckNodePort uses a random port. To retain the original port, set spec.preserveNodePorts: true in the restore job.

If the Service uses an existing Server Load Balancer (SLB) instance from the backup cluster, the restored Service uses the same SLB instance but disables its listeners. Configure the listeners in the SLB console.

If the SLB instance is managed by Cloud Controller Manager (CCM) in the backup cluster, CCM creates a new SLB instance. See Considerations for configuring a LoadBalancer Service.

How do I view the resources in a backup?

Cluster application backups:

Run the following commands in a cluster that has the backup files synchronized. The first command prints the name of the csdr-velero pod; use that name in place of csdr-velero-xxx in the second command:

kubectl -n csdr get pod -l component=csdr | tail -n 1 | cut -d ' ' -f1

kubectl -n csdr exec -it csdr-velero-xxx -c velero -- ./velero describe backup <backup-name> --details

Or use the ACK console: go to your cluster > Operations > Application Backup > Backup Records, then click a backup record.

Disk volume backups:

In the ECS console, go to Storage & Snapshots > Snapshots and query snapshots by disk ID.

Non-disk volume backups:

In the Cloud Backup console, go to Backup > Container Backup and select the region. The Clusters tab lists backed-up clusters and their PVCs. The Backup Jobs tab shows job status.

If Client Status is abnormal, Cloud Backup is not running correctly in the ACK cluster. Go to the DaemonSets page in the ACK console to troubleshoot.

Can I back up from an older Kubernetes version and restore to a newer version?

Yes. By default, all API versions supported by a resource are backed up. For example, a Deployment in Kubernetes 1.16 supports extensions/v1beta1, apps/v1beta1, apps/v1beta2, and apps/v1. All four versions are stored in the backup vault regardless of which version was used to create it. The KubernetesConvert feature handles API version conversion during restore.

When restoring, the API version recommended by the restore cluster is used. For example, restoring to Kubernetes 1.28 uses apps/v1 for Deployments.

If no API version is shared between the source and target clusters, deploy the resource manually. For example, Ingresses in Kubernetes 1.16 clusters use extensions/v1beta1 and networking.k8s.io/v1beta1, which are not supported in Kubernetes 1.22 and later (only networking.k8s.io/v1 is supported). For API version migration details, see the Kubernetes deprecation guide. Avoid migrating from newer to older Kubernetes versions, and avoid migrating from versions earlier than 1.16 to newer versions.
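The version selection described above can be sketched as a simple intersection. This is a simplified model for illustration, not the actual KubernetesConvert implementation; the function name pick_api_version and its two space-separated list arguments are assumptions.

```shell
# Pick the API version to restore with.
# $1: space-separated API versions stored in the backup
# $2: space-separated API versions served by the restore cluster, preferred first
pick_api_version() {
  for candidate in $2; do
    case " $1 " in
      *" $candidate "*) echo "$candidate"; return 0 ;;
    esac
  done
  return 1   # no shared version: deploy the resource manually
}
```

With the Deployment example, pick_api_version "extensions/v1beta1 apps/v1beta1 apps/v1beta2 apps/v1" "apps/v1" prints apps/v1. With the Ingress example, pick_api_version "extensions/v1beta1 networking.k8s.io/v1beta1" "networking.k8s.io/v1" prints nothing and returns a non-zero status, which corresponds to the manual deployment case.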

Is traffic automatically switched to SLB instances during restoration?

No. After restoration, SLB listeners are either disabled or a new SLB instance is created (depending on the original configuration). Traffic is not automatically switched.

If you use other service discovery mechanisms and want to control when traffic switches, exclude Service resources during backup and deploy them manually when you are ready to switch.

Why aren't resources in csdr, ack-csi-fuse, kube-system, kube-public, and kube-node-lease backed up by default?

csdr: This is the backup center's own namespace. Backing it up directly would cause components to fail in the restore cluster. Backup synchronization is handled automatically — you do not need to migrate backups manually.

ack-csi-fuse: This namespace runs FUSE client pods maintained by CSI. The CSI in the new cluster automatically synchronizes these clients during storage restoration.

kube-system, kube-public, kube-node-lease: These are Kubernetes system namespaces. Due to differences in cluster parameters and configurations, restoring these namespaces across clusters is not supported. Before running a restore job, manually install and configure system components in the restore cluster, for example the Container Registry password-free image pulling component (acr-configuration) and the ALB Ingress component (ALBConfig).

Does the backup center use ECS disk snapshots for disk volumes? What is the default snapshot type?

The backup center uses ECS disk snapshots by default in the following scenarios:

The cluster is an ACK managed or dedicated cluster.

The cluster runs Kubernetes 1.18 or later and uses CSI 1.18 or later.

In other scenarios, Cloud Backup is used for disk data backup.

ECS disk snapshots created by the backup center have the instant access feature enabled by default. The snapshot validity period matches the validity period specified in the backup configuration. Starting from October 12, 2023, 11:00, Alibaba Cloud no longer charges for snapshot instant access storage or operations in any region. See Use the instant access feature.

Why is the ECS disk snapshot validity period different from what I specified in the backup configuration?

The validity period configuration depends on the csi-provisioner component. If csi-provisioner is older than version 1.20.6, VolumeSnapshots are created without the validity period or instant access settings — so the backup configuration does not affect disk snapshots.

Upgrade csi-provisioner to 1.20.6 or later to ensure the validity period is applied correctly.

If upgrading is not possible, configure a default snapshot validity period instead:

Update migrate-controller to v1.7.10 or later.

Check whether a VolumeSnapshotClass with the 30-day retention setting exists:

kubectl get volumesnapshotclass csdr-disk-snapshot-with-default-ttl

If it does not exist, or if it exists but retentionDays is not set to 30, apply the following:

apiVersion: snapshot.storage.k8s.io/v1
deletionPolicy: Retain
driver: diskplugin.csi.alibabacloud.com
kind: VolumeSnapshotClass
metadata:
  name: csdr-disk-snapshot-with-default-ttl
parameters:
  retentionDays: "30"

All disk volume backups in the cluster will then create snapshots with the retention period set in retentionDays.
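When checking the version thresholds in this answer (csi-provisioner 1.20.6, migrate-controller v1.7.10), compare versions numerically field by field, because a plain string comparison would rank 1.7.9 above 1.7.10. The helper below is a minimal sketch. The name version_ge is hypothetical, and the accepted tag shape (an optional leading v and an optional suffix after the first hyphen) is an assumption about how the component versions are written.

```shell
# Succeed if version $1 is greater than or equal to version $2.
# Assumption: versions look like v1.20.7-<suffix> or 1.20.6; a leading "v" and
# anything after the first "-" are ignored. Missing fields count as 0.
version_ge() {
  have=${1#v}; have=${have%%-*}.0.0
  want=${2#v}; want=${want%%-*}.0.0
  for field in 1 2 3; do
    h=$(printf '%s\n' "$have" | cut -d. -f"$field")
    w=$(printf '%s\n' "$want" | cut -d. -f"$field")
    [ "$h" -eq "$w" ] && continue
    if [ "$h" -gt "$w" ]; then return 0; else return 1; fi
  done
  return 0   # all three fields are equal
}
```

For example, version_ge 1.18.8 1.20.6 fails, which indicates that csi-provisioner should be upgraded, while version_ge v1.7.10 v1.7.9 succeeds.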

What is volume data backup, and when do I need it?

What it does: Volume data backup copies volume data to cloud storage using ECS disk snapshots or Cloud Backup. When you restore the application, a new disk or NAS file system is created from this copy. The restored application and the original application have independent data — changes in one do not affect the other.

If you do not need data isolation or if your storage already provides cross-zone or cross-region access (such as OSS), skip volume data backup. PVC and PV YAML files are still included in the application backup by default.

When to use it:

Disaster recovery or versioned data records.

The application uses disk volumes (basic disks can only be mounted to a single node).

Cross-region backup and restore is required (most storage types other than OSS do not support cross-region access).

Data isolation between the original and restored applications is required.

The storage plug-ins or versions differ significantly between the backup and restore clusters, making direct YAML restoration impractical.

Risks of not backing up volumes for stateful applications:

Volumes with `Delete` reclaim policy: CSI creates a new, empty PV during restore. Statically provisioned volumes without a matching storage class stay in Pending until you manually create a PV or storage class.

Volumes with `Retain` reclaim policy: Resources are restored in PV-first order. For multi-mount storage (NAS, OSS), the original file system or bucket is reused. For disks, there is a risk of forced disk detachment.

To check the reclaim policy of your volumes:

kubectl get pv -o=custom-columns=CLAIM:.spec.claimRef.name,NAMESPACE:.spec.claimRef.namespace,NAME:.metadata.name,RECLAIMPOLICY:.spec.persistentVolumeReclaimPolicy

How do I select nodes for file system backups in data protection?

By default, Cloud Backup jobs can run on any node except virtual nodes. Only one volume backup job runs on a node at a time.

Three node scheduling policies are available:

| Policy | Behavior |
|---|---|
| exclude (default) | All nodes are eligible. Add csdr.alibabacloud.com/agent-excluded="true" to exclude specific nodes. |
| include | Only labeled nodes are eligible. Add csdr.alibabacloud.com/agent-included="true" to enable specific nodes. |
| prefer | All nodes are eligible. Nodes with csdr.alibabacloud.com/agent-included="true" are preferred; nodes with csdr.alibabacloud.com/agent-excluded="true" are used last. |
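The three policies can be summarized as a small decision function. This is a sketch of the behavior described in the table, not the scheduler's actual code. The function name classify_node and the result words (preferred, eligible, last, skip) are illustrative, and the handling of a node that carries both labels under prefer is an assumption.

```shell
# Classify a node for Cloud Backup scheduling.
# $1: policy (exclude | include | prefer)
# $2: value of the csdr.alibabacloud.com/agent-included label ("true" or empty)
# $3: value of the csdr.alibabacloud.com/agent-excluded label ("true" or empty)
# Prints one of: preferred, eligible, last, skip.
classify_node() {
  policy=$1 included=$2 excluded=$3
  case "$policy" in
    exclude)
      if [ "$excluded" = "true" ]; then echo skip; else echo eligible; fi ;;
    include)
      if [ "$included" = "true" ]; then echo eligible; else echo skip; fi ;;
    prefer)
      # Assumption: if a node carries both labels, agent-included wins.
      if [ "$included" = "true" ]; then echo preferred
      elif [ "$excluded" = "true" ]; then echo last
      else echo eligible; fi ;;
    *)
      echo "unknown policy: $policy" >&2; return 1 ;;
  esac
}
```

For example, classify_node include "" "" prints skip, because under the include policy an unlabeled node never runs backup jobs, whereas classify_node exclude "" "" prints eligible.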

To label a node:

# Exclude a node
kubectl label node <node-name> csdr.alibabacloud.com/agent-excluded="true"

# Include a node (for the include policy)
kubectl label node <node-name> csdr.alibabacloud.com/agent-included="true"

To change the policy, edit the csdr-config ConfigMap:

kubectl -n csdr edit cm csdr-config

Add node_schedule_policy to the applicationBackup section:

apiVersion: v1
data:
  applicationBackup: |
    backup_max_worker_num: 15
    restore_max_worker_num: 5
    delete_max_worker_num: 30
    schedule_max_worker_num: 20
    convert_max_worker_num: 15
    node_schedule_policy: include # Valid values: include, exclude, prefer
  pvBackup: |
    batch_snapshot_max_num: 20
    enable_ecs_snapshot: "true"
kind: ConfigMap

Restart the controller for the change to take effect:

kubectl -n csdr delete pod -l app=csdr-controller

What are the differences between application backup and data protection?

Application backup backs up Kubernetes workloads — including applications, Services, and configuration files in namespaces. Optionally include volume data for mounted volumes. Use application backup to migrate applications between clusters or restore applications for disaster recovery.

Application backup does not back up volumes that are not mounted to pods. To back up all volumes, create data protection backup jobs.

Data protection backs up storage volumes — PVCs and PVs — independently of application workloads. Use data protection to restore a deleted PVC as a standalone operation, or to implement data replication and disaster recovery at the storage layer.

How do I exclude specific persistent volumes from backup and recovery?

Some volumes, such as log storage or high-availability storage (OSS), do not need to be backed up. Here is a workflow for backing up a namespace that contains Volume A (no backup needed) and Volume B (backup needed):

Backup flow:

Use data protection to back up Volume B — this backs up both the YAML and the data.

Use application backup to select the namespace with Backup Volume set to Disable. This backs up the YAML files for both Volume A and Volume B without copying data.

If you do not want Volume A restored in the target cluster at all, add pvc, pv to Excluded Resources in the advanced configuration.

Restore flow:

Restore the data protection backup in the target cluster. This restores the YAML and data of Volume B.

Restore the application backup in the target cluster. This restores the YAML of Volume A and all other application resources. CSI then creates a new storage source or reuses the existing one based on Volume A's reclaim policy.

After both restores complete, the application, Volume A, and Volume B (with its data) are all running in the target cluster.

Does the backup center support KMS encryption for the associated OSS bucket?

The backup center supports server-side encryption for OSS buckets. Enable it in the OSS console. See Server-side encryption.

If you use a KMS-managed CMK (bring your own key, or BYOK) with a specified CMK ID, grant the backup center permissions to access KMS:

Create a custom permission policy:

{
  "Version": "1",
  "Statement": [
    {
      "Effect": "Allow",
      "Action": [
        "kms:List*",
        "kms:DescribeKey",
        "kms:GenerateDataKey",
        "kms:Decrypt"
      ],
      "Resource": [
        "acs:kms:*:141661496593****:*"
      ]
    }
  ]
}

This grants access to all KMS keys under the Alibaba Cloud account. For more granular resource control, see Authorization information.

Attach the policy:

For ACK dedicated clusters and registered clusters: attach it to the RAM user used during installation. See Grant permissions to a RAM user.

For other clusters: attach it to the AliyunCSManagedBackupRestoreRole role. See Grant permissions to a RAM role.

If you use a KMS key managed by OSS or a key fully managed by OSS, no additional permissions are needed.

How do I change the container images used during restoration?

Change the image registry address:

For hybrid cloud deployments or migrations from on-premises to cloud, use the imageRegistryMapping field to remap image registry addresses. For example, to change docker.io/library/ to registry.cn-beijing.aliyuncs.com/my-registry/:

docker.io/library/: registry.cn-beijing.aliyuncs.com/my-registry/

Change the image repository or version:

Create a ConfigMap in the csdr namespace before running the restore:

apiVersion: v1
kind: ConfigMap
metadata:
  name: <configuration-name>
  namespace: csdr
  labels:
    velero.io/plugin-config: ""
    velero.io/change-image-name: RestoreItemAction
data:
  "case1": "app1:v1,app2:v2"
  # Change only the repository: "case1": "app1,app2"
  # Change only the version: "case1": "v1,v2"
  # Change a specific registry image: "case1": "docker.io/library/app1:v1,registry.cn-beijing.aliyuncs.com/my-registry/app2:v2"

Each value has the form old,new, with a comma separating the part of the image reference to match from its replacement. For multiple changes, add case2, case3, and so on to the data field. After creating the ConfigMap, run the restore job with the imageRegistryMapping field left blank.

These changes apply to all restore jobs in the cluster. Use specific patterns (such as limiting to a particular registry) to avoid unintended changes. Delete the ConfigMap when it is no longer needed.
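To preview what a rule would do before running a restore, the two rewriting styles can be modeled locally. The sketch below is not the backup center's code. It assumes that imageRegistryMapping rewrites a leading registry prefix, and that each ConfigMap entry of the form old,new replaces every occurrence of old in the image reference. The function names remap_registry and change_image are hypothetical.

```shell
# Prefix remap, modeling imageRegistryMapping.
# $1: image, $2: old registry prefix, $3: new registry prefix
remap_registry() {
  case "$1" in
    "$2"*) printf '%s%s\n' "$3" "${1#"$2"}" ;;
    *)     printf '%s\n' "$1" ;;
  esac
}

# Substring replacement, modeling one ConfigMap entry.
# $1: image, $2: rule in the form "old,new"
# Assumption: every occurrence of old in the image reference is replaced.
change_image() {
  old=${2%%,*}
  new=${2#*,}
  rest=$1
  result=
  [ -n "$old" ] || { printf '%s\n' "$1"; return 0; }
  while :; do
    case "$rest" in
      *"$old"*)
        result=$result${rest%%"$old"*}$new   # text before the match, then the replacement
        rest=${rest#*"$old"} ;;              # continue after the match
      *) break ;;
    esac
  done
  printf '%s%s\n' "$result" "$rest"
}
```

For example, change_image docker.io/library/app1:v1 "app1:v1,app2:v2" prints docker.io/library/app2:v2. Under the same assumption, a short pattern such as "v1,v2" also rewrites app-v1-api:v1 to app-v2-api:v2, which illustrates why narrowly scoped patterns are safer.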