This topic covers common questions about node auto scaling in ACK, including scale-out and scale-in behavior, scheduling policies, and add-on management.

Known limitations

Available node resources may not match instance type specifications

The available resources on a newly provisioned node are always slightly less than the instance type's advertised specifications. The underlying OS and system daemons on the Elastic Compute Service (ECS) instance consume a portion of CPU, memory, and storage before any pod is scheduled. For details, see Why is the memory size different from the instance type specification after I purchase an instance?

Because of this overhead, keep the following in mind when configuring pod resource requests:

-

Keep total requests below the full instance capacity. As a general guideline, a pod's total resource requests should not exceed 70% of the node's capacity.

-

Account for static pods not managed as DaemonSets. The cluster-autoscaler only considers the resource requests of Kubernetes pods (including pending pods and DaemonSet pods) when evaluating node capacity. Manually reserve resources for any static pods outside this scope.

-

Test resource-heavy pods before deploying at scale. If a pod requests more than 70% of a node's resources, test and confirm in advance that the pod can be scheduled to a node of the same instance type.
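As a rough illustration of the 70% guideline, the sketch below assumes a hypothetical node pool built on a 4 vCPU, 16 GiB instance type, where 70% of capacity is about 2.8 vCPU and 11.2 GiB. The pod name, label, image, and node size are illustrative assumptions, not values taken from ACK.

```yaml
# Hypothetical pod sized for an assumed 4 vCPU / 16 GiB instance type.
# 70% of that capacity is roughly 2800m CPU and 11.2 GiB of memory,
# so the requests below stay under both figures.
apiVersion: v1
kind: Pod
metadata:
  name: heavy-worker          # hypothetical name
  labels:
    app: heavy-worker
spec:
  containers:
  - name: worker
    image: nginx              # placeholder image
    resources:
      requests:
        cpu: "2500m"          # below 2800m (70% of 4 vCPU)
        memory: "10Gi"        # below about 11.2Gi (70% of 16 GiB)
```

A pod that needs more than this would fall under the third point above and should be test-scheduled on the same instance type first.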

Scheduling policy support is limited

The cluster-autoscaler supports only a limited set of scheduling policies when determining whether an unschedulable pod fits a node pool with Auto Scaling enabled. For details, see What scheduling policies does cluster-autoscaler use?

Only resource type policies are supported in ResourcePolicy

When using ResourcePolicy to customize the priority of elastic resources, only resource type policies are supported. For details, see Customize elastic resource priority scheduling.

apiVersion: scheduling.alibabacloud.com/v1alpha1
kind: ResourcePolicy
metadata:
  name: nginx
  namespace: default
spec:
  selector:
    app: nginx
  units:
  - resource: ecs
  - resource: eci

Scaling out a specific instance type in a multi-type node pool is not supported

If a node pool is configured with multiple instance types, you cannot direct the cluster-autoscaler to provision a specific instance type during scale-out. The autoscaler models the entire node pool's capacity using the minimum value of each resource dimension (such as CPU and memory) across all configured instance types, so it plans as if every new node had only those minimum resources. For details, see How does the autoscaler calculate capacity for a node pool with multiple instance types?

Pods with zone-specific constraints may not trigger scale-out in multi-zone node pools

If a node pool spans multiple availability zones, a pod with a zone dependency may not trigger a scale-out. This applies to pods that require a specific zone because of:

-

A persistent volume claim (PVC) bound to a volume in that zone.

-

A nodeSelector, nodeAffinity, or another scheduling rule targeting the zone.

In these cases, the cluster-autoscaler may fail to provision a node in the required zone. For more scenarios, see Why does my node pool fail to provision new nodes?
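For reference, a zone-pinned pod of the kind described above might look like the following sketch. It uses the standard topology.kubernetes.io/zone node label; the pod name, image, and the zone ID cn-hangzhou-i are hypothetical placeholders.

```yaml
# A pod that can only run in one availability zone (zone ID is a placeholder).
# In a multi-zone node pool, a pending pod like this may not trigger scale-out.
apiVersion: v1
kind: Pod
metadata:
  name: zone-pinned           # hypothetical name
spec:
  affinity:
    nodeAffinity:
      requiredDuringSchedulingIgnoredDuringExecution:
        nodeSelectorTerms:
        - matchExpressions:
          - key: topology.kubernetes.io/zone
            operator: In
            values:
            - cn-hangzhou-i   # placeholder zone
  containers:
  - name: app
    image: nginx              # placeholder image
```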

Storage constraints are invisible to the autoscaler

The autoscaler has no awareness of pod-level storage constraints, such as:

-

Needing to run in a specific availability zone to access a persistent volume (PV).

-

Requiring a node that supports a specific disk type (such as ESSD).

Solution: Configure a dedicated node pool for applications with storage dependencies before enabling Auto Scaling. Preset the availability zone, instance type, and disk type in the node pool configuration to ensure newly provisioned nodes meet the pod's storage requirements.

Also make sure your pods do not reference a PVC in a Terminating state. A pod that cannot schedule because its PVC is terminating will fail continuously, which can cause the cluster-autoscaler to make incorrect scaling decisions.

Scale-out behavior

What scheduling policies does cluster-autoscaler use to determine whether an unschedulable pod can be scheduled to a node pool with Auto Scaling enabled?

The cluster-autoscaler evaluates the following scheduling policies:

-

PodFitsResources

-

GeneralPredicates

-

PodToleratesNodeTaints

-

MaxGCEPDVolumeCount

-

NoDiskConflict

-

CheckNodeCondition

-

CheckNodeDiskPressure

-

CheckNodeMemoryPressure

-

CheckNodePIDPressure

-

CheckVolumeBinding

-

MaxAzureDiskVolumeCount

-

MaxEBSVolumeCount

-

ready

-

NoVolumeZoneConflict

What resource types can cluster-autoscaler simulate during scheduling analysis?

The cluster-autoscaler can simulate and evaluate the following resource types:

cpu

memory

sigma/eni

ephemeral-storage

aliyun.com/gpu-mem (shared GPUs only)

nvidia.com/gpu

To scale based on other resource types, see How do I configure custom resources for a node pool with Auto Scaling enabled?

Why does my node pool fail to provision new nodes?

Check the following common causes:

Auto Scaling is not enabled on the node pool

Node auto scaling works only for node pools with Auto Scaling configured. Make sure the cluster-level auto scaling feature is enabled and the node pool's scaling mode is set to Auto. For details, see Enable node auto scaling.

Pod resource requests exceed allocatable capacity

The advertised specifications of an ECS instance represent total capacity, not allocatable capacity. ACK reserves a portion of CPU, memory, and storage for the OS kernel, system services, and Kubernetes daemons (kubelet, kube-proxy, Terway, and the container runtime). The standard cluster-autoscaler calculates scaling decisions using the resource reservation policy from Kubernetes 1.28 and earlier.

To use a more accurate reservation policy, switch to node instant scaling, which uses the updated algorithm. Alternatively, define custom resource reservations in the node pool configuration.

For details about resource consumption:

-

System resources: Why does a purchased instance have a memory size different from the instance type?

-

Add-on resources: Resource reservation policy

A pod's zone constraint is preventing scale-out

If a pod has a scheduling dependency on a specific availability zone—due to a PVC bound to a zonal volume or a node affinity rule—the cluster-autoscaler may not be able to provision a node in that zone, especially in a multi-zone node pool.

Required permissions are missing

The cluster-autoscaler requires cluster-scoped permissions granted on a per-cluster basis. Complete all authorization steps described in Enable node auto scaling for the cluster.

The autoscaler is temporarily paused due to unhealthy nodes

If provisioned nodes fail to join the cluster or stay in NotReady for an extended period, the autoscaler temporarily pauses further scaling to prevent repeated failures. Resolve the unhealthy node issues—scaling resumes automatically once the condition clears.

The cluster has no worker nodes

The cluster-autoscaler runs as a pod inside the cluster. With no worker nodes, the autoscaler pod cannot run and cannot provision new nodes. Configure node pools with a minimum of two nodes to ensure high availability for core cluster add-ons. For scaling from zero or down to zero nodes, use node instant scaling.

If a scaling group is configured with multiple instance types, how does the autoscaler calculate the group's capacity for scaling decisions?

For a scaling group with multiple instance types, the cluster-autoscaler models the group's capacity using the minimum value for each resource dimension across all configured types.

For example, with two instance types:

-

Instance type A: 4 vCPU, 32 GiB memory

-

Instance type B: 8 vCPU, 16 GiB memory

The autoscaler calculates:

-

Minimum CPU: min(4, 8) = 4 vCPU

-

Minimum memory: min(32, 16) = 16 GiB

The entire scaling group is treated as if it can only provision nodes with 4 vCPU and 16 GiB. A pending pod requesting more than 4 vCPU or more than 16 GiB will not trigger a scale-out for this group, even though instance type B could satisfy the CPU request.
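To make this concrete, a pending pod like the following sketch would not trigger a scale-out for the group in the example, because its CPU request exceeds the modeled 4 vCPU even though its memory request fits within 16 GiB. The pod name and image are hypothetical.

```yaml
# Requests 6 vCPU and 8 GiB. Instance type B (8 vCPU, 16 GiB) could host it,
# but the group is modeled as 4 vCPU / 16 GiB, so this pod does not fit.
apiVersion: v1
kind: Pod
metadata:
  name: cpu-heavy             # hypothetical name
spec:
  containers:
  - name: app
    image: nginx              # placeholder image
    resources:
      requests:
        cpu: "6"              # greater than min(4, 8) = 4 vCPU
        memory: "8Gi"         # within min(32, 16) = 16 GiB
```

One way to avoid this, under the same assumptions, is to place instance types with similar specifications in the same node pool so that the per-dimension minimum stays close to what each type actually provides.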

If multiple node pools with Auto Scaling enabled are available, how does cluster-autoscaler choose which one to scale out?

When a pod is unschedulable, the cluster-autoscaler simulates which node pools can accommodate it. The simulation evaluates each node pool's labels, taints, and available instance types.

If multiple node pools qualify, the autoscaler defaults to the least-waste strategy: it selects the node pool that would leave the least unused CPU and memory after the pod is scheduled.

How do I configure custom resources for a node pool with Auto Scaling enabled?

Add ECS tags with the following prefix to the node pool:

k8s.io/cluster-autoscaler/node-template/resource/{resource_name}:{resource_size}

Example:

k8s.io/cluster-autoscaler/node-template/resource/hugepages-1Gi:2Gi

Why can't I enable Auto Scaling for a node pool?

Auto Scaling cannot be enabled in the following cases:

-

It is the default node pool. The node auto scaling feature does not support the cluster's default node pool.

-

The node pool contains manually added nodes. Remove the manually added nodes first, or create a new dedicated node pool with Auto Scaling enabled from the start.

-

The node pool uses subscription-based instances. Node auto scaling only works with pay-as-you-go instances.

Scale-in behavior

Why won't cluster-autoscaler scale in a node?

The cluster-autoscaler skips a node during scale-in if any of the following conditions apply:

-

Pod utilization exceeds the threshold. The total resource requests of pods on the node are above the configured scale-in threshold.

-

The node runs `kube-system` pods. By default, the cluster-autoscaler does not remove nodes running pods from the kube-system namespace.

-

A pod has strict scheduling constraints. If a pod uses a nodeSelector or nodeAffinity that prevents it from being rescheduled to any other node, the node cannot be scaled in.

-

A pod is protected by a PodDisruptionBudget (PDB). If evicting a pod would violate the PDB's minAvailable or maxUnavailable setting, the node is kept. For details, see PodDisruptionBudget.

-

Pods are not managed by a native Kubernetes controller. Pods not created by a Deployment, ReplicaSet, Job, or StatefulSet block node removal by default.

For a full list of conditions that can block a node scale-in, see the cluster-autoscaler FAQ.
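As an illustration of the PDB condition above, consider the following sketch. It assumes a hypothetical workload labeled app: nginx that runs exactly two replicas. With minAvailable set to 2, no eviction is allowed, so the nodes hosting those pods are kept during scale-in.

```yaml
# Hypothetical PDB: with exactly two matching replicas, evicting either pod
# would drop availability below minAvailable, so eviction is refused.
apiVersion: policy/v1
kind: PodDisruptionBudget
metadata:
  name: nginx-pdb             # hypothetical name
  namespace: default
spec:
  minAvailable: 2
  selector:
    matchLabels:
      app: nginx
```

Lowering minAvailable or adding replicas would leave room for at least one eviction and let the autoscaler drain the node.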

How do I enable or disable eviction for a specific DaemonSet?

The Evict DaemonSet Pods setting in cluster configuration controls DaemonSet eviction globally. For more information, see Step 1: Enable node auto scaling for the cluster.

Override this setting per DaemonSet by adding an annotation to the DaemonSet's pods (in the pod template, not on the DaemonSet object itself):

-

Enable eviction for a specific DaemonSet's pods:

cluster-autoscaler.kubernetes.io/enable-ds-eviction: "true"

-

Disable eviction for a specific DaemonSet's pods:

cluster-autoscaler.kubernetes.io/enable-ds-eviction: "false"

If the global Evict DaemonSet Pods setting is disabled, enable-ds-eviction: "true" only applies to DaemonSet pods on non-empty nodes. To evict DaemonSet pods from empty nodes, enable the global setting first.

By default, the cluster-autoscaler evicts DaemonSet pods in a non-blocking manner and proceeds without waiting for eviction to complete. To make the autoscaler wait for a specific DaemonSet pod to be fully evicted before proceeding, add the following annotation alongside enable-ds-eviction:

cluster-autoscaler.kubernetes.io/wait-until-evicted: "true"

These annotations have no effect on pods that are not part of a DaemonSet.
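The following sketch shows where the annotations go: under spec.template.metadata.annotations, so that they are applied to the DaemonSet's pods and not to the DaemonSet object. The DaemonSet name, label, image, and command are hypothetical placeholders.

```yaml
apiVersion: apps/v1
kind: DaemonSet
metadata:
  name: log-agent             # hypothetical name
  namespace: default
spec:
  selector:
    matchLabels:
      app: log-agent
  template:
    metadata:
      labels:
        app: log-agent
      annotations:
        # Pod-level annotations: evict these pods during scale-in,
        # and wait until eviction completes before proceeding.
        cluster-autoscaler.kubernetes.io/enable-ds-eviction: "true"
        cluster-autoscaler.kubernetes.io/wait-until-evicted: "true"
    spec:
      containers:
      - name: agent
        image: busybox        # placeholder image
        command: ["sh", "-c", "while true; do sleep 3600; done"]
```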

What types of pods can prevent cluster-autoscaler from removing a node?

For a complete list of conditions that block node scale-in, see What types of pods can prevent CA from removing a node? in the upstream cluster-autoscaler FAQ.

Extension support

Does cluster-autoscaler support CustomResourceDefinitions (CRDs)?

No. The cluster-autoscaler supports only standard Kubernetes objects and does not support CRDs.

Pod-level scaling behavior control

How do I delay scale-out for a specific pod?

Add the cluster-autoscaler.kubernetes.io/pod-scale-up-delay annotation to the pod. The cluster-autoscaler will not consider the pod for scale-out until it remains unschedulable for longer than the specified delay. This gives the Kubernetes scheduler extra time to place the pod on existing nodes before triggering a scale-up.

Example:

cluster-autoscaler.kubernetes.io/pod-scale-up-delay: "600s"

How do I use pod annotations to control scale-in behavior?

Use the cluster-autoscaler.kubernetes.io/safe-to-evict annotation to explicitly mark a pod as safe or unsafe to evict during scale-in:

-

Prevent scale-in for the node: Add "cluster-autoscaler.kubernetes.io/safe-to-evict": "false" to a pod running on the node. The autoscaler will not terminate the node while this pod is present.

-

Allow scale-in for the node: Add "cluster-autoscaler.kubernetes.io/safe-to-evict": "true" to explicitly mark the pod as safe to evict.

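A minimal sketch of the first case, assuming a hypothetical single-replica Deployment whose pod should not be interrupted by scale-in. The annotation is set in the pod template so that every pod the Deployment creates carries it. The names and image are placeholders.

```yaml
apiVersion: apps/v1
kind: Deployment
metadata:
  name: batch-coordinator     # hypothetical name
spec:
  replicas: 1
  selector:
    matchLabels:
      app: batch-coordinator
  template:
    metadata:
      labels:
        app: batch-coordinator
      annotations:
        # Keeps the hosting node from being scaled in while this pod runs.
        cluster-autoscaler.kubernetes.io/safe-to-evict: "false"
    spec:
      containers:
      - name: coordinator
        image: nginx          # placeholder image
```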
Node-level scaling behavior control

How do I prevent cluster-autoscaler from scaling in a specific node?

Add the cluster-autoscaler.kubernetes.io/scale-down-disabled: "true" annotation to the node. Replace <nodename> with the target node name:

kubectl annotate node <nodename> cluster-autoscaler.kubernetes.io/scale-down-disabled=true

Add-on management

How do I upgrade cluster-autoscaler to the latest version?

-

Log on to the ACK console. In the left navigation pane, click Clusters.

-

On the Clusters page, find the cluster and click its name. In the left navigation pane, choose Nodes > Node Pools.

-

Click Edit to the right of Node Scaling. In the panel that appears, click OK to upgrade to the latest version.

What actions trigger an automatic update of cluster-autoscaler?

The cluster-autoscaler is updated automatically when:

-

The auto scaling configuration is modified.

-

A node pool with Auto Scaling enabled is created, deleted, or updated.

-

The cluster's Kubernetes version is successfully upgraded.

Node scaling isn't working on my ACK managed cluster even though role authorization is complete

This usually means the token addon.aliyuncsmanagedautoscalerrole.token is missing from a Secret in the kube-system namespace. ACK uses the cluster's Worker Role to enable auto scaling, and this token is required for authentication.

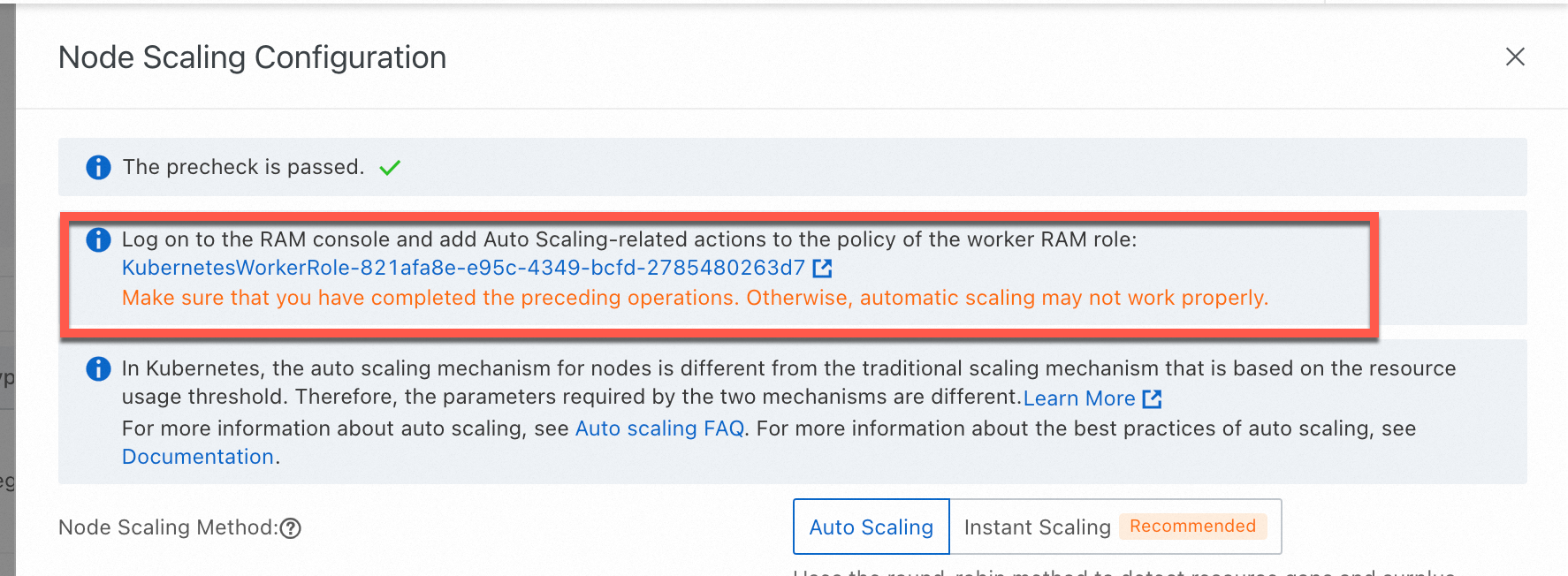

Re-apply the required policy to the Worker Role using the ACK console:

-

On the Clusters page, find the cluster and click its name. In the left navigation pane, choose Nodes > Node Pools.

-

On the Node Pools page, click Enable to the right of Node Scaling.

-

Follow the on-screen instructions to authorize the KubernetesWorkerRole and attach the AliyunCSManagedAutoScalerRolePolicy system policy.

-

Manually restart the cluster-autoscaler Deployment (node auto scaling) or the ack-goatscaler Deployment (node instant scaling) in the kube-system namespace for the permissions to take effect immediately.