Scale multiple applications with NGINX Ingress traffic metrics

Running multiple application instances improves stability, but idle replicas raise cluster costs. Horizontal Pod Autoscaler (HPA) adjusts pod replica counts based on live traffic, which reduces both under-provisioning and idle capacity. This tutorial shows how to drive HPA for multiple applications at the same time using the nginx_ingress_controller_requests metric exposed by the NGINX Ingress Controller in your ACK cluster. The NGINX Ingress Controller in ACK clusters is an enhanced version of the community edition and is easier to use.

Each application gets its own HPA that responds only to its own traffic. The selector.matchLabels.service field in each HPA spec acts as a filter, passing per-service label matchers to the adapter rule so that scaling decisions stay isolated between applications.

Prerequisites

Before you begin, ensure that you have:

- Alibaba Cloud Prometheus deployed in your cluster. For setup instructions, see Use Alibaba Cloud Prometheus for monitoring.
- The ack-alibaba-cloud-metrics-adapter component deployed, with its prometheus.url field configured.
- Apache Benchmark (ab) installed for load testing.

How it works

An Ingress forwards external requests to a Service, which routes them to the matching pod. The NGINX Ingress Controller records per-service request counts in the nginx_ingress_controller_requests Prometheus metric.

HPA cannot consume Prometheus metrics directly; it requires metrics exposed through the Kubernetes external metrics API. The ack-alibaba-cloud-metrics-adapter bridges this gap by processing nginx_ingress_controller_requests in four steps:

| Step | Field | Role | Value used here |
|---|---|---|---|
| 1. Discovery | seriesQuery | Identifies which Prometheus metric to expose | nginx_ingress_controller_requests |
| 2. Association | resources | Specifies whether this metric is namespace-scoped | namespaced: false |
| 3. Naming | name | Renames the metric in the external API | Strips _requests, appends _per_second → nginx_ingress_controller_per_second |
| 4. Querying | metricsQuery | Defines the PromQL template used to compute the value | sum(rate(<<.Series>>{<<.LabelMatchers>>}[2m])) |

The template variables in metricsQuery are filled in automatically by the adapter at query time:

- <<.Series>>: replaced with the matched Prometheus series name (nginx_ingress_controller_requests).
- <<.LabelMatchers>>: replaced with the label selectors from the HPA spec (for example, service="sample-app").

Each HPA uses the selector.matchLabels.service field to filter this metric to a single application, so the scaling of sample-app and test-app remains independent.
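To make the substitution concrete, the fragment below sketches how the selector in an HPA spec could map onto the query template. It is an illustrative fragment rather than a complete manifest: the autoscaling/v2 field layout is an assumption about the API version in use, and the expanded query in the trailing comment is what the template would be expected to produce for service="sample-app".

```yaml
# Illustrative fragment of an HPA spec (assumes the autoscaling/v2 layout).
metrics:
- type: External
  external:
    metric:
      # Name exposed by the adapter after the renaming step.
      name: nginx_ingress_controller_per_second
      selector:
        matchLabels:
          # Passed to the adapter and substituted for <<.LabelMatchers>>.
          service: sample-app
# Expected expansion of the metricsQuery template for this selector:
#   sum(rate(nginx_ingress_controller_requests{service="sample-app"}[2m]))
```

An HPA for test-app would differ only in the service label value, which is why the two applications can share one adapter rule.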

Step 1: Create applications and services

Create two Deployments and their corresponding Services using the YAML files below.

- Create nginx1.yaml and apply the manifest:

      kubectl apply -f nginx1.yaml

- Create nginx2.yaml and apply the manifest:

      kubectl apply -f nginx2.yaml
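As a minimal sketch of what nginx1.yaml could contain, the manifest below defines a Deployment and a Service that are both named sample-app. The container image, the app label, and port 80 are assumptions made for illustration. Only the sample-app name comes from this tutorial.

```yaml
# nginx1.yaml: illustrative sketch, not the original manifest.
apiVersion: apps/v1
kind: Deployment
metadata:
  name: sample-app
  labels:
    app: sample-app          # assumed label key and value
spec:
  replicas: 1
  selector:
    matchLabels:
      app: sample-app
  template:
    metadata:
      labels:
        app: sample-app
    spec:
      containers:
      - name: sample-app
        image: nginx:stable  # assumed image; any HTTP server would do
        ports:
        - containerPort: 80  # assumed port
---
apiVersion: v1
kind: Service
metadata:
  name: sample-app           # referenced later by the Ingress backend and the HPA selector
spec:
  selector:
    app: sample-app
  ports:
  - name: http
    port: 80
    targetPort: 80
```

Under the same assumptions, nginx2.yaml would follow the same shape with every occurrence of sample-app replaced by test-app.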

Step 2: Create an Ingress

- Create ingress.yaml. The key fields in this Ingress:

  | Field | Description |
  |---|---|
  | host | The domain name used to access the Services. This example uses test.example.com. |
  | path | The URL path. Incoming requests are matched against this path and forwarded to the corresponding Service. |
  | backend | The target Service name and port for each path. / routes to sample-app; /home routes to test-app. |

  Apply the manifest:

      kubectl apply -f ingress.yaml

- Verify the Ingress is running:

      kubectl get ingress -o wide

  Expected output:

      NAME           CLASS   HOSTS              ADDRESS       PORTS   AGE
      test-ingress   nginx   test.example.com   10.XX.XX.10   80      55s

The NGINX Ingress Controller now routes requests arriving at test.example.com/ to sample-app and requests at test.example.com/home to test-app. Both request streams are recorded in the nginx_ingress_controller_requests metric in Alibaba Cloud Prometheus, labeled by service name.
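The routing described in this step can be sketched as the following ingress.yaml. The Ingress name, class, host, paths, and backend Service names are taken from the tutorial. The networking.k8s.io/v1 API version, the Prefix path type, and Service port 80 are assumptions.

```yaml
# ingress.yaml: illustrative sketch, not the original manifest.
apiVersion: networking.k8s.io/v1
kind: Ingress
metadata:
  name: test-ingress
spec:
  ingressClassName: nginx
  rules:
  - host: test.example.com
    http:
      paths:
      - path: /
        pathType: Prefix     # assumed path type
        backend:
          service:
            name: sample-app
            port:
              number: 80     # assumed Service port
      - path: /home
        pathType: Prefix
        backend:
          service:
            name: test-app
            port:
              number: 80
```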

Step 3: Convert Prometheus metrics to HPA-compatible metrics

HPA reads metrics from the Kubernetes external metrics API, not from Prometheus directly. The ack-alibaba-cloud-metrics-adapter translates Prometheus metrics into this format using a rule you define in the adapter-config ConfigMap.

Modify the adapter-config file

-

Log on to the ACK console. In the left navigation pane, click Clusters.

-

On the Clusters page, find the cluster you want and click its name. In the left-side pane, choose Applications > Helm.

-

On the Helm page, click ack-alibaba-cloud-metrics-adapter. In the Resource section, click adapter-config, then click Edit YAML in the upper-right corner.

-

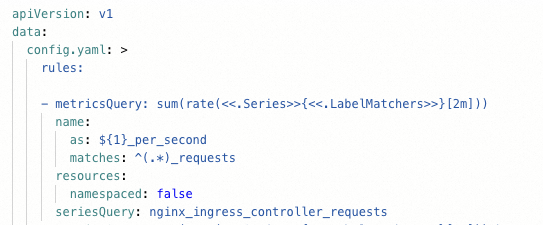

Replace the existing rules with the following, then click OK:

For the full set of adapter configuration options, see Horizontal pod autoscaling based on Alibaba Cloud Prometheus metrics.

rules: - metricsQuery: sum(rate(<<.Series>>{<<.LabelMatchers>>}[2m])) name: as: ${1}_per_second matches: ^(.*)_requests resources: namespaced: false seriesQuery: nginx_ingress_controller_requests

Verify the metric is available

Run the following command to confirm the adapter is serving the converted metric:

    kubectl get --raw "/apis/external.metrics.k8s.io/v1beta1/namespaces/*/nginx_ingress_controller_per_second" | jq .

Expected output:

    {
      "kind": "ExternalMetricValueList",
      "apiVersion": "external.metrics.k8s.io/v1beta1",
      "metadata": {},
      "items": [
        {
          "metricName": "nginx_ingress_controller_per_second",
          "metricLabels": {},
          "timestamp": "2025-07-25T07:56:04Z",
          "value": "0"
        }
      ]
    }

A value of "0" is expected because there is no traffic yet.

Step 4: Create HPAs

Both HPAs use the same nginx_ingress_controller_per_second external metric but filter it to their respective service using selector.matchLabels.service. The adapter passes these labels as <<.LabelMatchers>> into the PromQL query, keeping each application's scaling decisions independent.

- Create hpa.yaml and apply the manifest:

      kubectl apply -f hpa.yaml

- Verify both HPAs are ready:

      kubectl get hpa

  Expected output:

      NAME         REFERENCE               TARGETS      MINPODS   MAXPODS   REPLICAS   AGE
      sample-hpa   Deployment/sample-app   0/30 (avg)   1         10        1          74s
      test-hpa     Deployment/test-app     0/30 (avg)   1         10        1          59m

Both HPAs show 0/30 (avg), meaning the current request rate is below the scale-out threshold of 30 requests per second per pod.
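A minimal sketch of an hpa.yaml consistent with the output above might look like the following. The HPA names, target Deployments, replica bounds, metric name, and the average target of 30 come from this tutorial. The autoscaling/v2 API version and the exact field layout are assumptions, so adjust them to the API versions your cluster serves.

```yaml
# hpa.yaml: illustrative sketch, not the original manifest.
apiVersion: autoscaling/v2   # assumed API version
kind: HorizontalPodAutoscaler
metadata:
  name: sample-hpa
spec:
  scaleTargetRef:
    apiVersion: apps/v1
    kind: Deployment
    name: sample-app
  minReplicas: 1
  maxReplicas: 10
  metrics:
  - type: External
    external:
      metric:
        name: nginx_ingress_controller_per_second
        selector:
          matchLabels:
            service: sample-app   # only traffic for this Service counts
      target:
        type: AverageValue
        averageValue: "30"        # requests per second, averaged per pod
---
apiVersion: autoscaling/v2
kind: HorizontalPodAutoscaler
metadata:
  name: test-hpa
spec:
  scaleTargetRef:
    apiVersion: apps/v1
    kind: Deployment
    name: test-app
  minReplicas: 1
  maxReplicas: 10
  metrics:
  - type: External
    external:
      metric:
        name: nginx_ingress_controller_per_second
        selector:
          matchLabels:
            service: test-app
      target:
        type: AverageValue
        averageValue: "30"
```

The two objects are identical apart from their names, scale targets, and the service label, which is the only thing separating one application's traffic from the other's.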

Step 5: Verify autoscaling

Use Apache Benchmark to generate traffic and watch each HPA respond independently.

- Send 5,000 requests to the /home path (routed to test-app):

      ab -c 50 -n 5000 test.example.com/home

- Watch the HPA status update in real time:

      kubectl get hpa --watch

  After the load is applied, you will see test-hpa scale out while sample-hpa remains at one replica. The output transitions from the initial state to the scaled-out state:

      NAME         REFERENCE               TARGETS           MINPODS   MAXPODS   REPLICAS   AGE
      sample-hpa   Deployment/sample-app   0/30 (avg)        1         10        1          22m
      test-hpa     Deployment/test-app     22096m/30 (avg)   1         10        3          80m

  Press Ctrl+C to stop watching.

- Send 5,000 requests to the root path (routed to sample-app):

      ab -c 50 -n 5000 test.example.com/

- Check the HPA status again:

      kubectl get hpa

  Expected output:

      NAME         REFERENCE               TARGETS           MINPODS   MAXPODS   REPLICAS   AGE
      sample-hpa   Deployment/sample-app   27778m/30 (avg)   1         10        2          38m
      test-hpa     Deployment/test-app     0/30 (avg)        1         10        1          96m

sample-hpa scaled out in response to the new load, while test-hpa scaled back in after the traffic to /home stopped. Each application scaled independently based on its own traffic.

What's next

- Multi-zone autoscaling: For data-intensive, high-availability services that need to scale across availability zones simultaneously, see Implement rapid and simultaneous elastic scaling across multiple zones.
- Custom OS images for elastic scaling: To simplify autoscaling in environments with specific node requirements, see Elastic optimization with custom images.