Data Transmission Service (DTS) continuously replicates data from an ApsaraDB RDS for PostgreSQL instance to an ApsaraDB for ClickHouse cluster using logical replication. This lets you run analytics on ClickHouse without impacting your PostgreSQL workload.

Prerequisites

Before you begin, make sure you have:

An ApsaraDB for ClickHouse cluster with storage space larger than the data volume to synchronize. See Create a cluster.

The

wal_levelparameter on the source ApsaraDB RDS for PostgreSQL instance set tological. See Set instance parameters.

For supported source and destination database versions, see Synchronization solution overview.

Billing

| Synchronization type | Pricing |

|---|---|

| Schema synchronization and full data synchronization | Free |

| Incremental data synchronization | Charged. See Billing overview. |

Supported SQL operations for incremental synchronization

| Operation type | SQL statements |

|---|---|

| DML | INSERT, UPDATE, DELETE |

| DDL | CREATE TABLE, DROP TABLE, TRUNCATE TABLE, ADD COLUMN, MODIFY COLUMN, DROP COLUMN |

Required permissions

| Database | Required permissions | How to configure |

|---|---|---|

| Source ApsaraDB RDS for PostgreSQL | Privileged account that is the owner of the database to synchronize | Create an account and create a database |

| Destination ApsaraDB for ClickHouse cluster (v22.8 or later) | Read and write permissions on the destination database. A privileged account meets this requirement. | Community-Compatible Edition account management |

| Destination ApsaraDB for ClickHouse cluster (v21.8) | Read/write And Set Permissions and Allow DDL | Community-Compatible Edition account management |

Create a synchronization task

Step 1: Go to the synchronization task list

Use one of the following methods:

Go to the page from the DTS console

From the DTS console:

Log on to the Data Transmission Service (DTS) console.Data Transmission Service (DTS) console

In the left navigation pane, click Data Synchronization.

In the upper-left corner, select the region where the synchronization instance resides.

Go to the page from the DMS console

From the DMS console:

Log on to Data Management (DMS).Data Management (DMS)

On the top menu bar, choose Data + AI > Data Transmission (DTS) > Data Synchronization.

To the right of Data Synchronization Tasks, select the region where the synchronization instance is located.

The actual operations may vary based on the mode and layout of the DMS console. See Simple mode console and Customize the layout and style of the DMS UI.

Step 2: Configure source and destination databases

Click Create Task.

Configure the source and destination databases.

Task settings:

Parameter Description Task Name DTS auto-generates a name. Specify a descriptive name for easy identification. The name does not need to be unique. Source database (PostgreSQL):

Parameter Value Select Existing Connection Select a pre-added instance from the drop-down list, or configure the connection manually. Database Type PostgreSQL Access Method Alibaba Cloud Instance Instance Region Region of the source ApsaraDB RDS for PostgreSQL instance Replicate Data Across Alibaba Cloud Accounts No (same account) Instance ID ID of the source instance Database Name Name of the database containing the objects to synchronize Database Account Database account. See Required permissions. Database Password Password for the database account Destination database (ClickHouse):

Parameter Value Select Existing Connection Select a pre-added instance from the drop-down list, or configure the connection manually. Database Type ClickHouse Access Method Alibaba Cloud Instance Instance Region Region of the destination ApsaraDB for ClickHouse cluster Replicate Data Across Alibaba Cloud Accounts No (same account) Cluster Type Select based on your needs Cluster ID ID of the destination cluster Database Account Database account. See Required permissions. Database Password Password for the database account Click Test Connectivity and Proceed.

DTS server IP address blocks must be added to the security settings of both databases before the connectivity test passes. This can be done automatically or manually. See Add the IP address blocks of DTS servers to a whitelist. If the source or destination is a self-managed database, also click Test Connectivity in the CIDR Blocks of DTS Servers dialog box.

Step 3: Configure task objects

On the Configure Objects page, configure the synchronization settings.

Synchronization types:

| Option | Description |

|---|---|

| Schema Synchronization | DTS creates the target table schema in ClickHouse, including the _sign, _is_deleted, and _version fields. Select this if you want DTS to manage the target table structure. |

| Full Data Synchronization | DTS initializes the destination with a full copy of the source data before starting incremental sync. |

| Incremental Data Synchronization | Selected by default. DTS continuously replicates changes from the source. |

Select all three types for a complete initial load followed by ongoing synchronization.

Processing mode for conflicting tables:

| Mode | Behavior |

|---|---|

| Precheck and Report Errors | Fails the precheck if a table with the same name already exists in the destination. Use this to avoid accidental overwrites. To resolve name conflicts without deleting the destination table, see Map table and column names. |

| Ignore Errors and Proceed | Skips the duplicate table check. If schemas match and primary keys conflict: full sync retains the destination record; incremental sync overwrites it with the source record. If schemas differ, partial or complete sync failure may occur. |

Capitalization of Object Names in Destination Instance:

Configure the case sensitivity policy for database, table, and column names synchronized to the destination. The default is DTS default policy. You can also select the default policies of the source or destination database. See Case sensitivity policy for destination object names.

Object selection:

In the Source Objects box, click an object to synchronize, then click ![]() to move it to Selected Objects. You can select objects at the schema or table level.

to move it to Selected Objects. You can select objects at the schema or table level.

In the Selected Objects box:

Right-click an object to rename it or map it to a different destination object. See Map table and column names.

Right-click a table to set a WHERE clause filter. See Set filter conditions.

Right-click an object to select specific SQL operations for incremental synchronization.

Click an object and then click

to remove it from the selection.

to remove it from the selection.

Click Next: Advanced Settings and configure the options below.

Advanced settings:

| Parameter | Description |

|---|---|

| Dedicated Cluster for Task Scheduling | By default, DTS schedules tasks on a shared cluster. For more stable performance, purchase a dedicated cluster. See What is a DTS dedicated cluster? |

| Retry Time for Failed Connections | Duration DTS retries after a connection failure. Default: 720 minutes. Range: 10–1,440 minutes. Set to 30 minutes or more. If multiple DTS instances share the same source or destination, the shortest retry duration applies to all of them. |

| Retry Time for Other Issues | Duration DTS retries after non-connection errors such as DDL or DML exceptions. Default: 10 minutes. Range: 1–1,440 minutes. Set to 10 minutes or more. Must be less than Retry Time for Failed Connections. |

| Enable Throttling for Full Data Synchronization | Limits the full sync rate to reduce load on the source and destination. Configure Queries per second (QPS) to the source database, RPS of Full Data Migration, and Data migration speed for full migration (MB/s). Available only when Full Data Synchronization is selected. You can also adjust the rate after the task starts. |

| Enable Throttling for Incremental Data Synchronization | Limits the incremental sync rate. Configure RPS of Incremental Data Synchronization and Data synchronization speed for incremental synchronization (MB/s). |

| Environment Tag | Optional tag to identify the instance. |

| Configure ETL | Enable the extract, transform, and load (ETL) feature to apply data processing logic during sync. See What is ETL? and Configure ETL in a data migration or data synchronization task. |

| Monitoring and Alerting | Configure alerts for task failures or latency exceeding a threshold. See alert notificationsConfigure monitoring and alerting during task configuration. |

Click Next: Configure Database and Table Fields to configure ClickHouse-specific table settings.

ClickHouse table structure settings:

DTS provides a default configuration. Set Definition Status to All to review and modify all tables.

| Setting | Description |

|---|---|

| Type | Table engine type |

| Primary Key Column | Supports composite keys. Select one or more fields. See CREATE TABLE. |

| Sort Key | Supports composite keys. Select one or more fields. |

| Distribution Key | Single field only. |

| Partition Key | Optional. Must not be a nullable field, or the task will fail. Supports BIGINT, INT, TIMESTAMP, DATETIME, and DATE types only. Select one or more columns from Primary Key Column as the partition key. For the calculation logic, see Partition key calculation logic. |

Step 4: Run the precheck

Click Next: Save Task Settings and Precheck.

To view API parameters for the task configuration, hover over the button and click Preview OpenAPI parameters.

DTS runs a precheck before starting the synchronization task. The task starts only after all precheck items pass.

If the precheck fails, click View Details next to the failed item, fix the issue, then rerun the precheck.

If the precheck generates a warning for an item that cannot be ignored, click View Details, fix the issue, then rerun the precheck.

If the precheck generates a warning for an ignorable item, click Confirm Alert Details > Ignore > OK > Precheck Again to skip the warning. Skipping warnings may cause data inconsistency.

Step 5: Purchase the instance

When the Success Rate reaches 100%, click Next: Purchase Instance.

On the Purchase page, configure the instance.

Parameter Description Billing Method Subscription: Pay upfront for 1–9 months or 1, 2, 3, or 5 years. More cost-effective for long-term use. Pay-as-you-go: Billed hourly. Release the instance when no longer needed to avoid charges. Resource Group Configuration Resource group for the instance. Default: default resource group. See What is Resource Management? Link Specification Determines synchronization throughput. Choose based on your data volume and latency requirements. See Data synchronization link specifications. Read the Data Transmission Service (Pay-as-you-go) Service Terms and click Buy and Start. In the confirmation dialog box, click OK.

You can monitor task progress on the Data Synchronization page.

Limitations

Source database requirements

Bandwidth: The source database server must have outbound bandwidth of at least 100 Mbit/s. Lower bandwidth reduces synchronization speed.

Table structure: Tables to synchronize must have primary keys or UNIQUE constraints with unique fields. Otherwise, duplicate data may appear in the destination. If the destination table is not created by DTS (that is, Synchronization Types does not include Schema Synchronization), the destination table must have the same primary key or non-null UNIQUE constraint as the source table.

Database name: Database names cannot contain hyphens (-). For example,

dts-testdatacannot be synchronized.Unsupported objects: DTS does not synchronize TimescaleDB extension tables, tables with cross-schema inheritance, or tables with expression-based unique indexes.

Large table-level tasks: If table-level synchronization with object editing covers more than 5,000 tables in a single task, split them into multiple tasks or synchronize at the database level instead.

WAL retention:

For incremental-only tasks: retain WAL logs for more than 24 hours.

For full + incremental tasks: retain WAL logs for at least 7 days. After the initial full sync completes, you can reduce the retention to more than 24 hours.

If the retention period is shorter than required and the task fails or data inconsistency occurs, the issue is not covered by the DTS Service-Level Agreement (SLA).

WAL configuration: Set

wal_leveltologicaland enable Logical Replication Slot Failover for ApsaraDB RDS for PostgreSQL. This prevents logical replication interruptions during a failover.DDL restrictions: Do not run DDL operations that change the schema of databases or tables during schema synchronization or full data synchronization. This causes the task to fail.

During full data synchronization, DTS queries the source database and acquires metadata locks, which may block DDL operations on the source.

Operation restrictions:

Non-standard DDL syntax on synchronization objects may cause task failure or data loss.

A major engine version upgrade on the source while the synchronization instance is running causes the instance to fail permanently. Reconfigure the synchronization instance.

If a single incremental change exceeds 256 MB, the synchronization instance may fail permanently. Reconfigure the synchronization instance.

Long-running transactions: Long-running transactions combined with incremental synchronization prevent WAL logs from being cleared until the transactions commit. This may cause insufficient disk space on the source.

Partitioned tables: Include both the parent table and all child tables as synchronization objects. The parent table of a PostgreSQL partitioned table does not store data directly — all data is in the child tables. Missing child tables causes data inconsistency.

ClickHouse-specific requirements

Database limit: A single synchronization task can synchronize data from one database only. Configure separate tasks for each database. The total number of databases must not exceed the ClickHouse limit of 256.

Naming conventions: Database, table, and column names must comply with ClickHouse naming conventions. See Object naming convention limits.

Time-type data range: ClickHouse enforces range limits on time-type data. Values outside the supported range will be incorrect after synchronization. See Time data range limits.

Partition key: The partition key cannot be a nullable field. Only BIGINT, INT, TIMESTAMP, DATETIME, and DATE fields are supported.

Additional fields: During schema synchronization, DTS adds

_sign,_is_deleted, and_versionfields to the destination table. If you create the destination table manually (without Schema Synchronization), add these fields yourself. See Destination table and field structure.REPLICA IDENTITY FULL: In the following three cases, run

ALTER TABLE schema.table REPLICA IDENTITY FULL;on the tables to synchronize before writing data. This ensures data consistency. Do not run this command while the table is locked. If you skip the related precheck items, DTS runs this command automatically during instance initialization.When the synchronization instance starts for the first time.

When the synchronization granularity is schema, and a new table is created in the schema or an existing table is rebuilt using RENAME.

When you use the Modify Objects feature.

Replace

schemaandtablewith the actual schema and table names. Run this command during off-peak hours.

DTS internal behavior

Temporary tables: DTS creates the following temporary tables in the source database to track DDL statements, table structures, and heartbeat information. Do not delete these tables during synchronization — doing so will break the task. The tables are deleted automatically when the DTS instance is released.

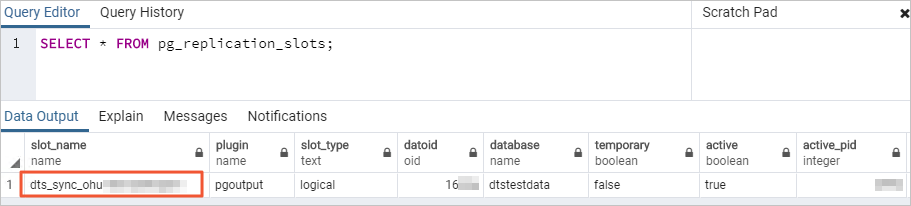

public.dts_pg_class,public.dts_pg_attribute,public.dts_pg_type,public.dts_pg_enum,public.dts_postgres_heartbeat,public.dts_ddl_command,public.dts_args_session,public.aliyun_dts_instanceReplication slot: DTS creates a replication slot with the prefix

dts_sync_in the source database to read incremental logs. DTS reads from this slot within 15 minutes. When the task fails or the instance is released, DTS attempts to clean up the replication slot automatically. If you change the database account password or remove the DTS IP address whitelist during synchronization, the replication slot cannot be cleaned up automatically. Clean it up manually to prevent disk space accumulation. If a failover occurs on the source, log on to the secondary database and clean up the replication slot manually.

Resource usage during full sync: Initial full data synchronization consumes read and write resources on both source and destination databases. Run the synchronization during off-peak hours when the CPU load on both databases is below 30%. Initial full sync runs concurrent INSERT operations, so the destination storage may be larger than the source due to table fragmentation.

External writes to destination: If data is written to the destination from a source other than DTS while the synchronization instance is running, data inconsistency may occur and the instance may fail.

Metadata validation: DTS validates data content but not metadata such as sequences. Validate metadata manually.

Task recovery: If the task fails, DTS technical support attempts recovery within 8 hours. This may involve restarting the task or modifying DTS task parameters. Database parameters are not changed. For parameters that may be modified, see Modify instance parameters.

Special cases

ApsaraDB RDS for PostgreSQL source: Do not change the endpoint or zone of the source instance during synchronization. This causes the task to fail.

Self-managed PostgreSQL source: The values of

max_wal_sendersandmax_replication_slotsmust each be greater than the sum of currently active replication slots and the number of DTS instances to be created for this source.Google Cloud SQL for PostgreSQL source: The database account must have the

cloudsqlsuperuserpermission. Select only objects that this account is authorized to manage, or grant the Owner permission for the objects by running:An account with

cloudsqlsuperuserpermission cannot manage data owned by another account withcloudsqlsuperuserpermission.GRANT <owner_of_the_object_to_be_synchronized> TO <source_database_account_used_by_the_task>;

Appendix

Destination table and field structure

Table requirements

If you create the destination table manually (without using Schema Synchronization):

If the destination table specifies an ENGINE, it must be ENGINE = ReplicatedReplacingMergeTree(_version, _is_deleted). Otherwise, data inconsistency may occur.

ClickHouse Community Edition: Create one local table and one distributed table. The distributed table name must match the source table name. The local table name must be

<distributed_table_name>_local.ClickHouse Enterprise Edition: Create a table with the same name as the source table.

DTS control fields

DTS adds three fields to the destination table to track row versions and deletion state. Use these fields in queries to get consistent results:

SELECT * FROM table_name FINAL WHERE _sign > 0;The FINAL modifier deduplicates rows with the same sort key. The WHERE _sign > 0 condition filters out deleted rows.

How CDC operations map to ClickHouse rows:

ClickHouse uses an append-only model. DTS writes all change data capture (CDC) operations — including UPDATEs and DELETEs — as new versioned rows rather than modifying existing ones. The following example shows how three source operations appear in ClickHouse:

| Source operation | Row ID | _sign | _is_deleted | _version | Notes |

|---|---|---|---|---|---|

| Initial INSERT (row 5) | 5 | 1 | 0 | 1000 | Row written at sync time |

| UPDATE row 5 | 5 | 1 | 0 | 1001 | New row with higher _version; previous row retained |

| DELETE row 5 | 5 | -1 | 1 | 1002 | New row marking deletion; previous rows retained |

Use FINAL in queries to merge these versioned rows and return the latest state of each row. The WHERE _sign > 0 filter excludes deleted rows from results.

Fields added by DTS:

For Community Edition earlier than 23.8:

| Field | Data type | Default | Description |

|---|---|---|---|

_sign | Int8 | 1 | DML operation type: INSERT = 1, UPDATE = 1, DELETE = -1 |

_version | UInt64 | 1 | Timestamp when the row was written to ClickHouse |

For Enterprise Edition and Community Edition 23.8 and later:

| Field | Data type | Default | Description |

|---|---|---|---|

_sign | Int8 | 1 | DML operation type: INSERT = 1, UPDATE = 1, DELETE = -1 |

_is_deleted | UInt8 | 0 | Deletion marker: INSERT = 0, UPDATE = 0, DELETE = 1 |

_version | UInt64 | 1 | Timestamp when the row was written to ClickHouse |

Time data range limits

ClickHouse enforces the following limits on time-type data. Values outside these ranges will be incorrect after synchronization.

| Data type | Minimum value | Maximum value |

|---|---|---|

| Date | 1970-01-01 00:00:00 | 2149-06-06 00:00:00 |

| Date32 | 1925-01-01 00:00:00 | 2283-11-11 00:00:00 |

| DateTime | 1970-01-01 08:00:00 | 2106-02-07 14:28:15 |

| DateTime64 | 1925-01-01 08:00:00 | 2283-11-12 07:59:59 |

Partition key calculation logic

DTS calculates partition key values based on the source field type:

| Source field type | Calculation |

|---|---|

| BIGINT | intDiv(tablePartKey, 18014398509481984) |

| INT | intDiv(tablePartKey, 4194304) |

| TIMESTAMP | toYYYYMM(tablePartKey) |

| DATETIME | toYYYYMM(tablePartKey) |

| DATE | toYYYYMM(tablePartKey) |

Data type mappings

ApsaraDB RDS for PostgreSQL and ApsaraDB for ClickHouse do not have a one-to-one data type mapping. During initial schema synchronization, DTS maps types based on what the destination supports. See Data type mappings for schema synchronization.