Policy Lab provides tools to investigate, test, and improve your risk control policies. It includes four features: policy restore, policy replay, variable recommendation, and variable and model customization.

Policy restore

Policy restore reconstructs the exact computation the decision engine performed for a historical request. Use it to understand why a specific request was flagged or cleared, and to diagnose policy behavior at the individual request level.

Each rule in the restored result shows whether it matched and how it contributed to the final decision. Use this information to identify which rules caused the outcome and which rules had no effect.

Prerequisites

Before you begin, make sure you have:

The request ID returned by the decision engine for the target request

Log Service enabled. The decision engine writes the logs required for policy restore to the Log Service project under the current account.

Restore a request

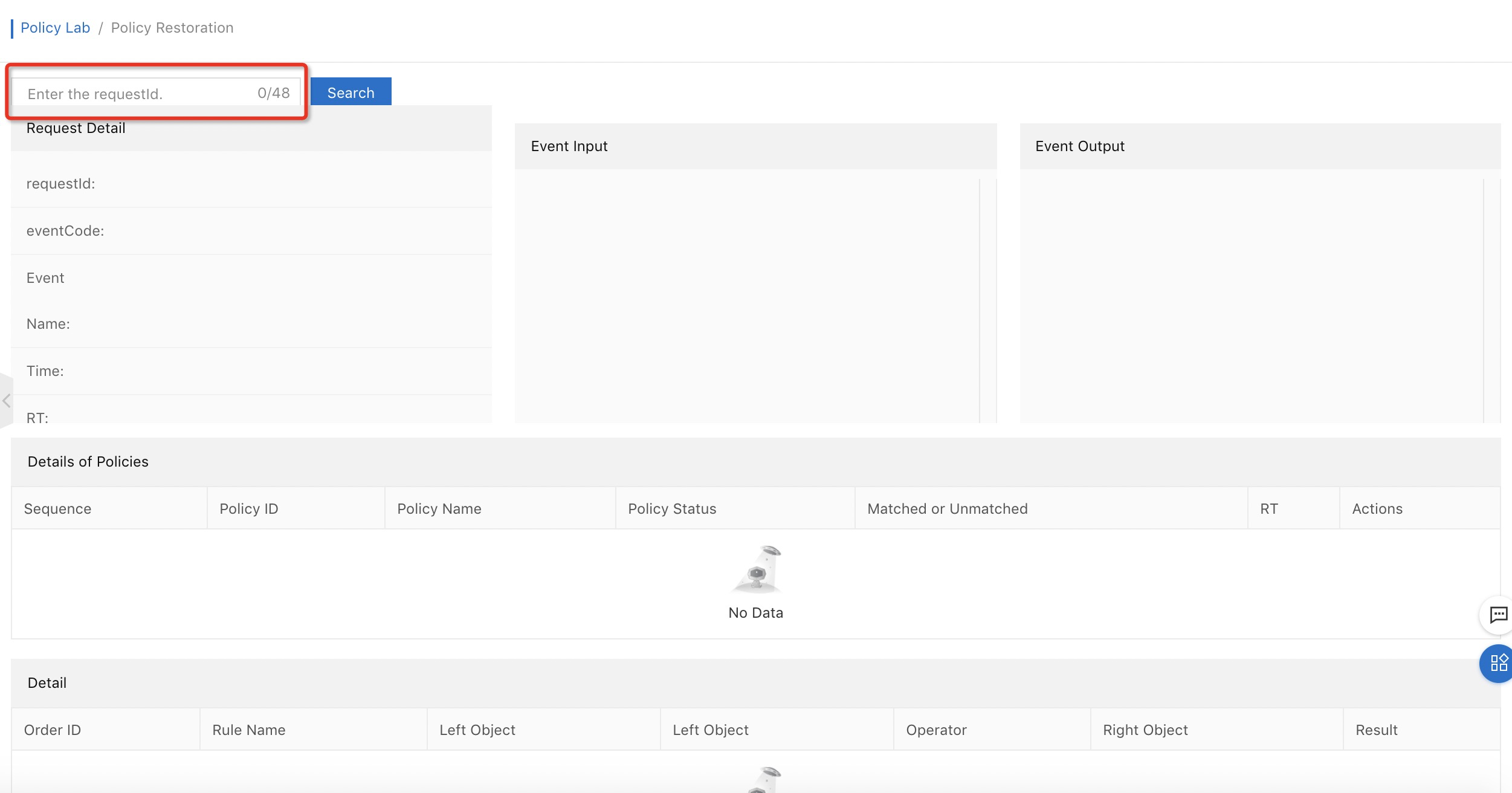

Enter the request ID in the policy restore interface.

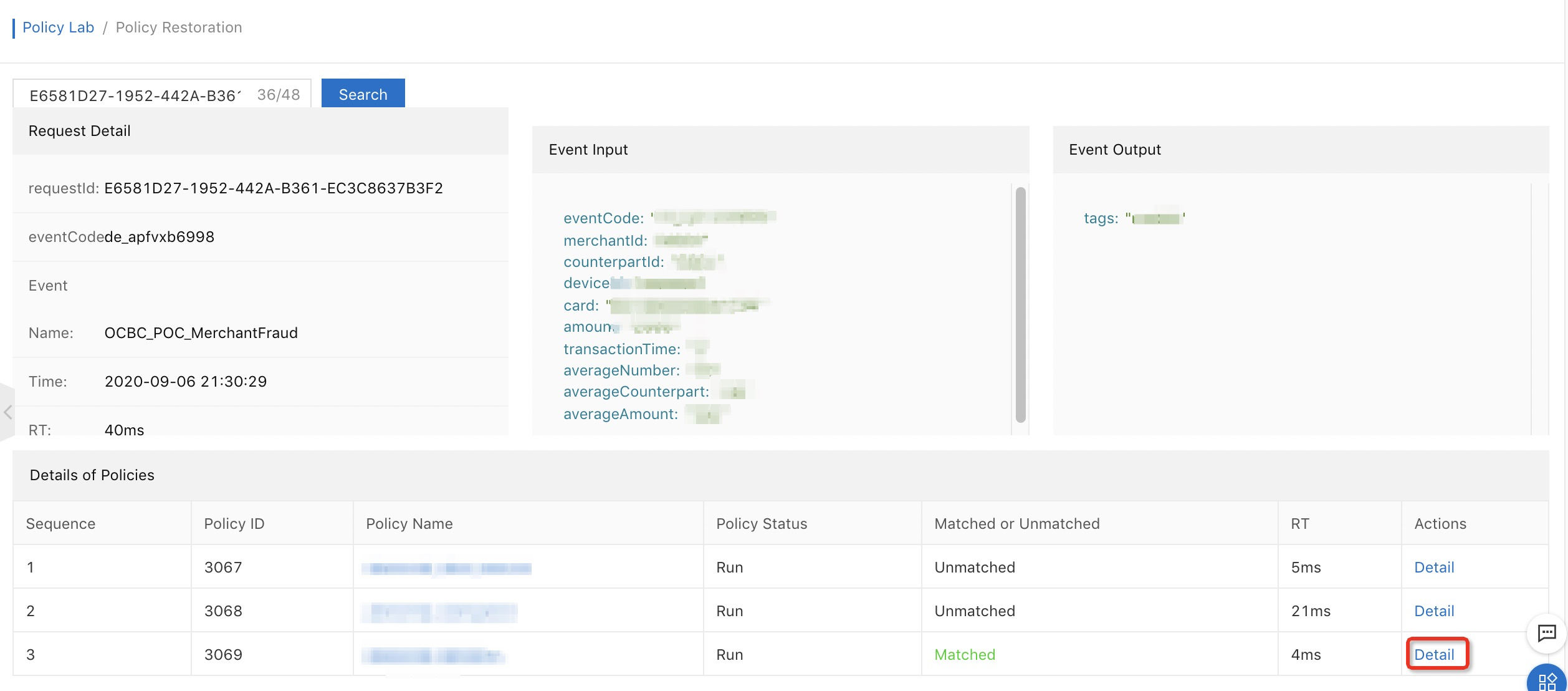

Review the restored request details, which include:

Request details

Event input and output parameters

Policies hit by the request

Click Detail in the Actions column of a hit policy to view its full computation process.

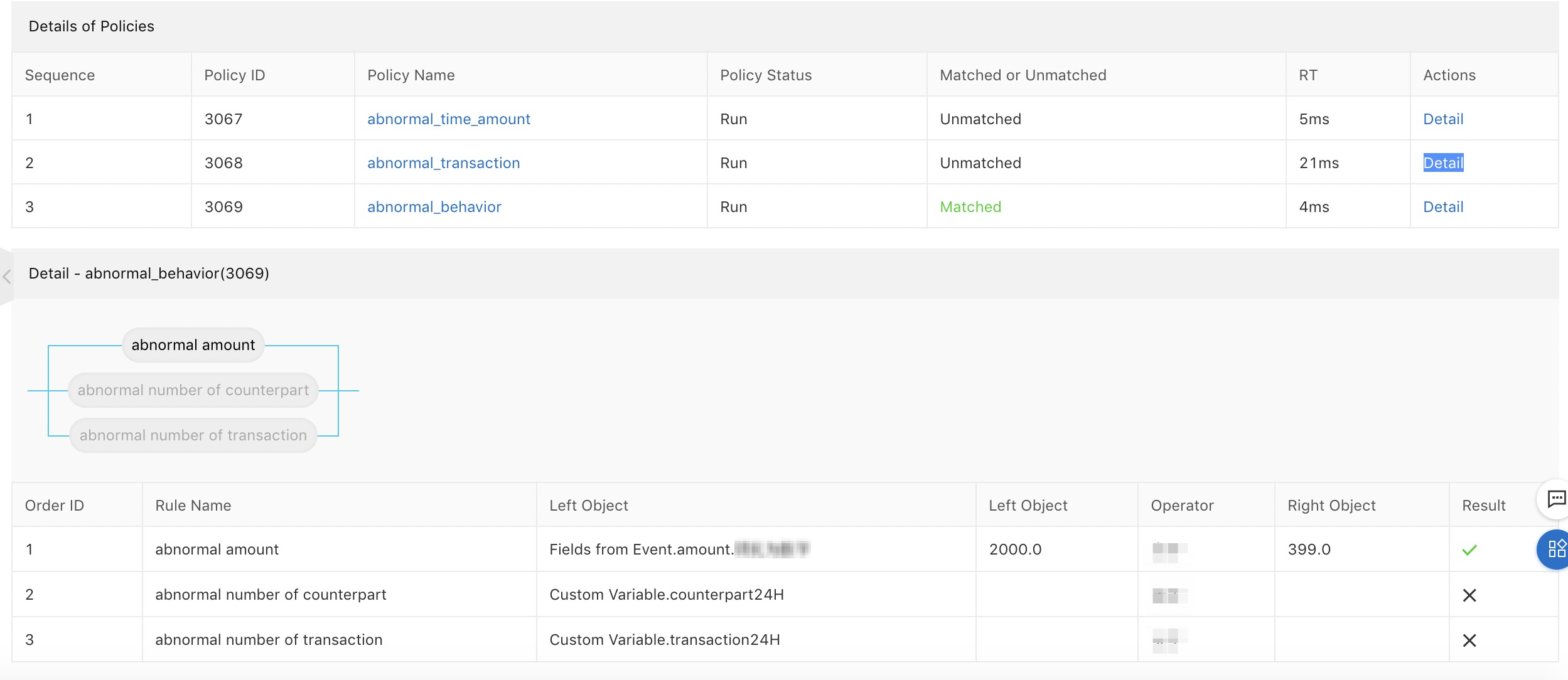

Review the execution result of each rule in the policy.

Each rule shows whether it matched and how it contributed to the final policy decision. Use this to identify which rules drove the outcome and which rules had no effect.
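The review step above can be sketched in a few lines of Python. This is an illustration only: the `RuleResult` shape, the `score` field, and the rule names are hypothetical, and the restored result in the console may expose different fields. It assumes each rule reports whether it matched and a numeric contribution to the decision.

```python
from dataclasses import dataclass


@dataclass
class RuleResult:
    # Hypothetical shape; not the product's actual data model.
    name: str
    matched: bool
    score: int  # assumed contribution to the policy decision when the rule matches


def contributed(rule):
    """A rule affects the outcome only if it matched and carries a non-zero score."""
    return rule.matched and rule.score != 0


def split_rules(results):
    """Separate rules that drove the decision from rules that had no effect."""
    drivers = [r for r in results if contributed(r)]
    inert = [r for r in results if not contributed(r)]
    # List the largest contribution first.
    drivers.sort(key=lambda r: abs(r.score), reverse=True)
    return drivers, inert


results = [
    RuleResult("ip_velocity_high", True, 25),
    RuleResult("device_on_blocklist", True, 60),
    RuleResult("new_account", False, 30),
]
drivers, inert = split_rules(results)
print("drove the outcome:", [r.name for r in drivers])
print("no effect:", [r.name for r in inert])
```

Sorting the matched rules by contribution puts the rule most responsible for the decision first, which is usually the one to inspect when a request was flagged unexpectedly.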

Policy replay

Policy replay runs an updated policy against historical request data to measure its impact before deploying it. Use it to catch missed risks or reduce service interruptions by comparing what the updated policy would have decided against what the original policy actually decided.

Prerequisites

Before you begin, make sure you have:

An updated policy ready to test

Historical event data in the time range you want to replay

Create a replay task

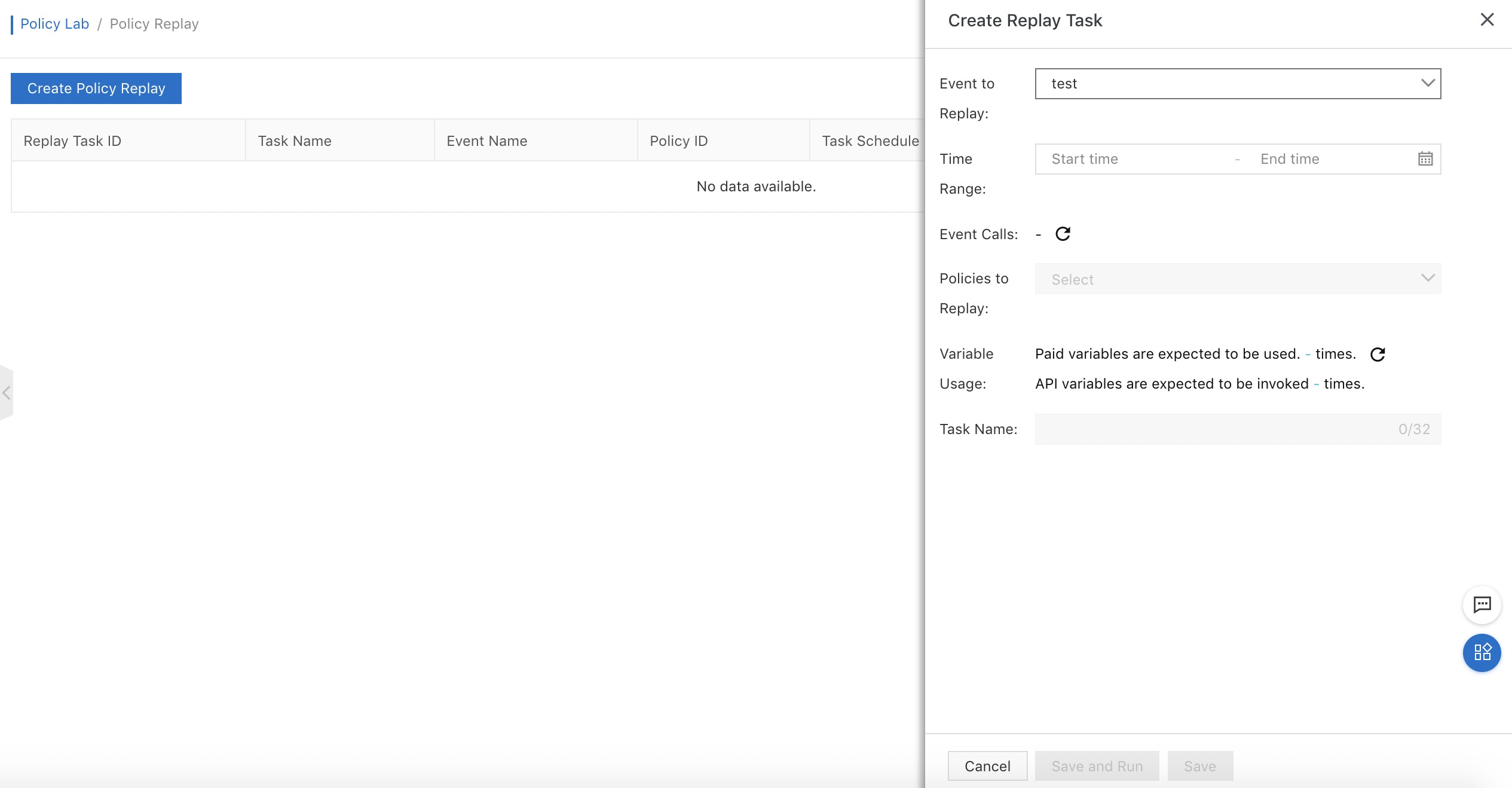

Select an event, specify a time range, and select the policy to replay.

Submit the task. The system queues the task and begins computing once the task starts running.

Wait for the task to complete. Computing time depends on data volume. A task with up to 10,000 event requests typically completes within 1 hour.

Review results

After the task completes, the system delivers logs to the Log Service project and generates a comparison report. Go to the Log Service console for deeper log analysis.

The report shows how the updated policy's decisions differ from the original decisions. Focus on two dimensions when evaluating the results:

| Dimension | What to look for | When to consider deploying |
|---|---|---|
| Coverage change | Did the updated policy catch risks that the original missed? | The new policy flags significantly more genuine risks |
| False positive change | Did it block or flag requests that the original passed? | The increase in blocked legitimate requests is acceptable for your business |

A policy change is generally worth deploying when it catches more genuine risks without significantly increasing blocked legitimate requests.
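If you export the replay logs and can label each request as a genuine risk or a legitimate request, the two dimensions can be quantified with a short script. The sketch below is an assumption-laden illustration: the record layout `(original_flagged, updated_flagged, is_genuine_risk)` and the metric names are hypothetical and are not the format of the comparison report.

```python
def compare_decisions(records):
    """Summarize how an updated policy differs from the original.

    records: iterable of (original_flagged, updated_flagged, is_genuine_risk)
    tuples. This layout is hypothetical, not the replay report format.
    """
    newly_caught = 0   # genuine risks the original passed and the update flags
    newly_blocked = 0  # legitimate requests the original passed and the update flags
    risks = 0
    legit = 0
    for original, updated, is_risk in records:
        newly_flagged = updated and not original
        if is_risk:
            risks += 1
            newly_caught += newly_flagged
        else:
            legit += 1
            newly_blocked += newly_flagged
    return {
        "newly_caught": newly_caught,
        "newly_blocked": newly_blocked,
        # Share of all genuine risks that only the updated policy catches.
        "coverage_gain": newly_caught / risks if risks else 0.0,
        # Share of all legitimate requests that only the updated policy flags.
        "false_positive_increase": newly_blocked / legit if legit else 0.0,
    }


records = [
    (False, True, True),    # missed before, caught now
    (True, True, True),     # caught by both
    (False, False, True),   # still missed
    (False, True, False),   # legitimate request newly flagged
    (False, False, False),
    (False, False, False),
    (False, False, False),
]
summary = compare_decisions(records)
print(summary)
```

The sketch counts only requests that the updated policy newly flags. A fuller comparison would also count requests the original flagged and the update now passes.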

Usage notes

If the policy uses charged variables, the replay task may deduct from your resource plans. The system estimates the maximum deduction based on event request volume; actual consumption is always lower than the estimate because the system only runs the computing logic specified by the rule combination in the policy.

Variable recommendation

Variable recommendation automates policy creation by learning from your labeled samples. Provide a set of risky and non-risky samples, and the system trains a model, selects the most predictive variables, and generates a candidate policy — without requiring you to build or train a model manually.

Prerequisites

Before you begin, make sure you have:

More than 2,000 labeled samples uploaded to the My Samples page

A sample ratio of approximately 1 risky sample to every 2 non-risky samples

Samples are stored in an Object Storage Service (OSS) bucket under your account and may incur storage fees. See the OSS documentation for pricing details.

Note: Sample quality directly affects model accuracy. Too few samples (2,000 or fewer) or a heavily skewed ratio may produce a policy with poor coverage or a high false positive rate. The 1:2 ratio is a starting point for a reasonably balanced training set. If the actual proportion of risky requests in your business differs significantly, adjust the ratio to match.
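Before uploading, you can check a labeled sample set against these prerequisites locally. The following is a minimal sketch, assuming labels are encoded as 1 (risky) and 0 (non-risky). The function name and the `tolerance` value are illustrative assumptions, since the product does not define how close to 1:2 counts as "approximately".

```python
def check_samples(labels, min_count=2000, target=0.5, tolerance=0.25):
    """Return a list of warnings for a labeled sample set.

    labels: sequence of 1 (risky) or 0 (non-risky).
    target: desired risky / non-risky ratio (1:2 -> 0.5).
    tolerance: assumed acceptable deviation from the target ratio.
    """
    warnings = []
    total = len(labels)
    risky = sum(1 for label in labels if label == 1)
    non_risky = total - risky

    # The prerequisite asks for more than min_count samples.
    if total <= min_count:
        warnings.append(f"only {total} samples; more than {min_count} are required")

    if risky == 0 or non_risky == 0:
        warnings.append("both risky and non-risky samples are needed")
    else:
        ratio = risky / non_risky
        if abs(ratio - target) > tolerance:
            warnings.append(
                f"risky:non-risky ratio is {ratio:.2f}; target is about {target:.2f}"
            )
    return warnings


balanced = [1] * 1000 + [0] * 2000
skewed = [1] * 100 + [0] * 1000
print(check_samples(balanced))
print(check_samples(skewed))
```

If your business has a very different real-world risk rate, pass a different `target` instead of widening the tolerance.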

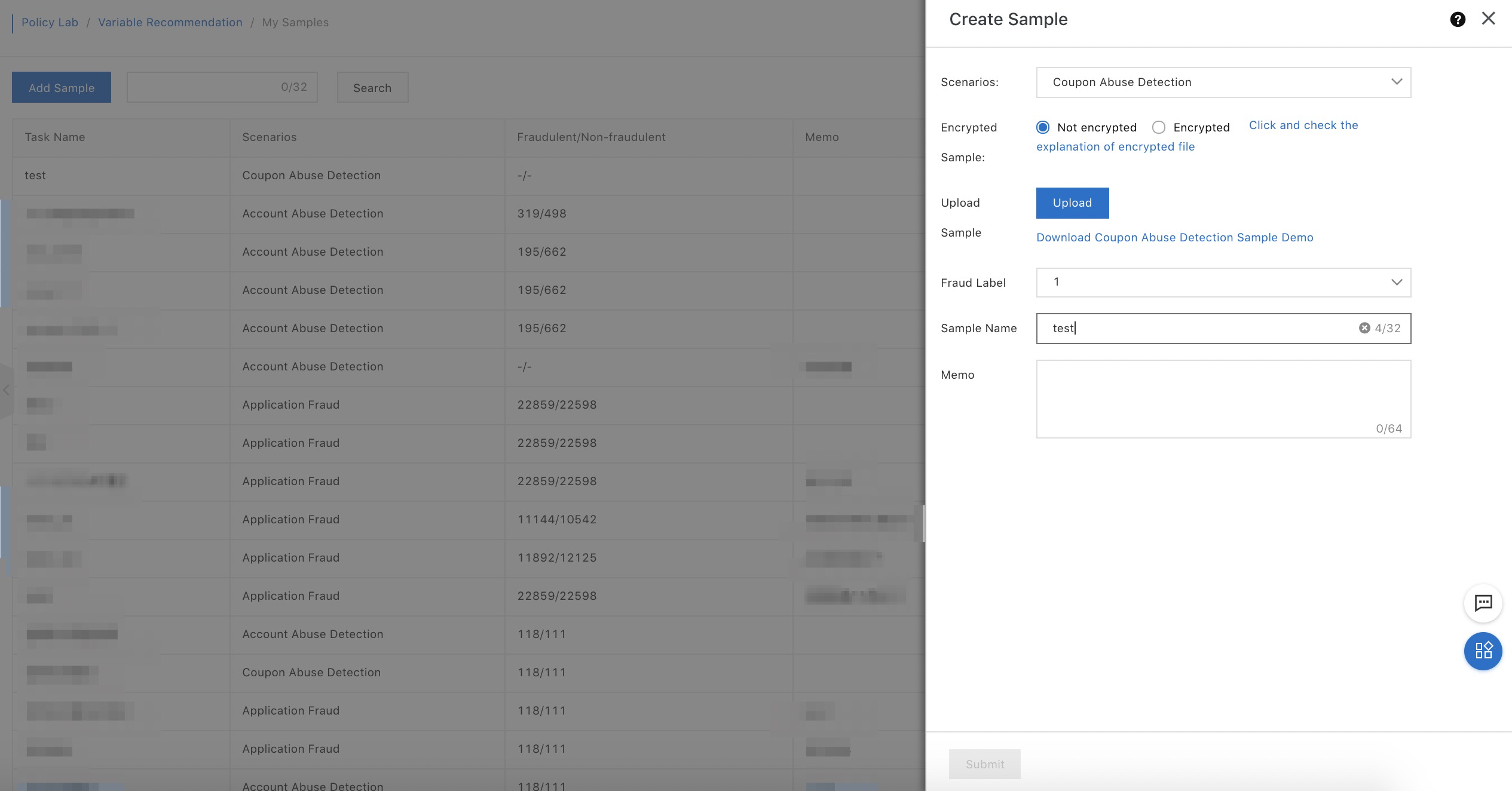

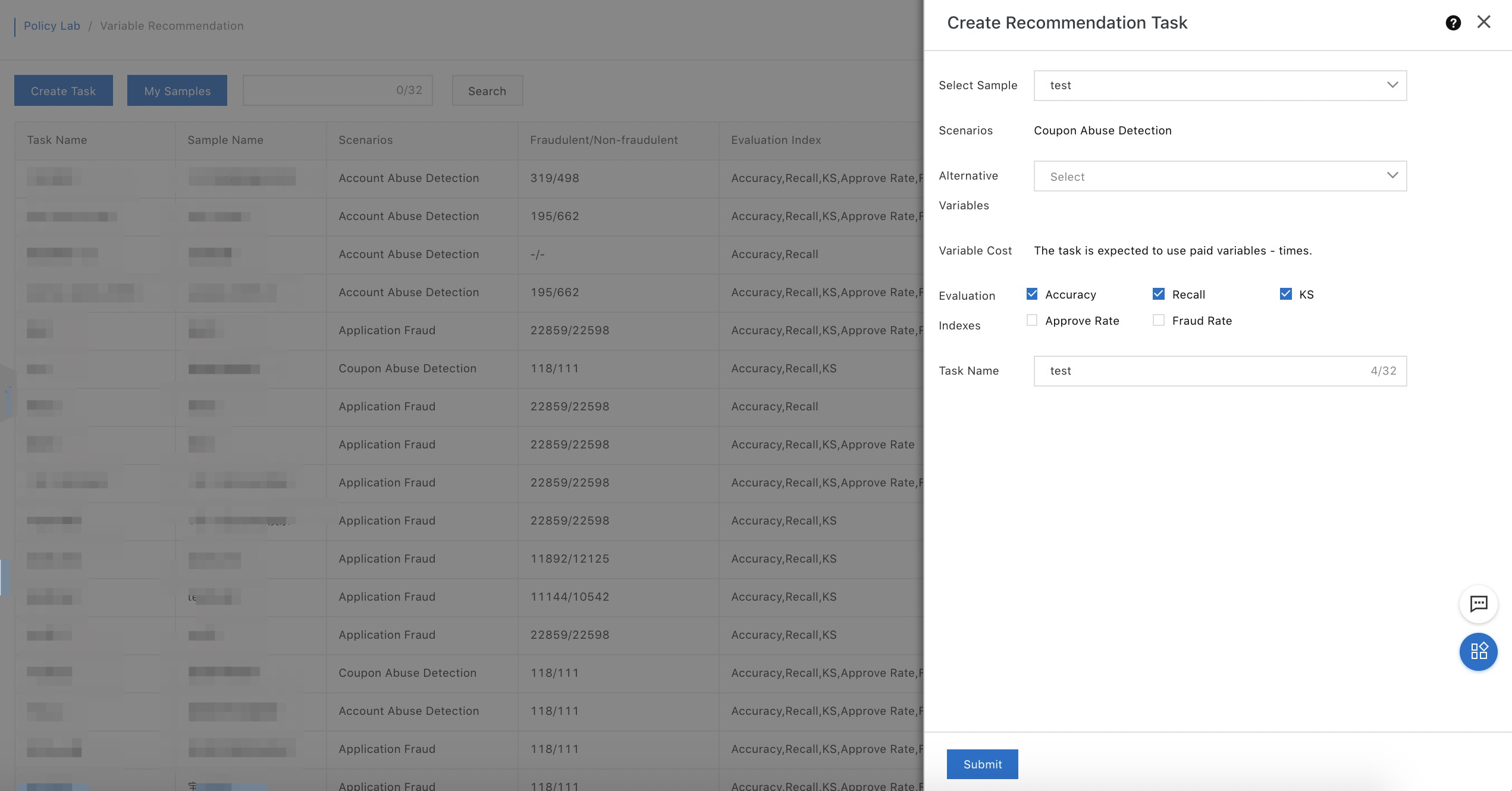

Create a variable recommendation task

Go to the variable recommendation page and create a task by selecting:

Samples

Variables to evaluate

Evaluation metrics

A task name

Submit the task. The system starts computing. Tasks typically complete in minutes to hours, depending on sample size and the number of variables evaluated.

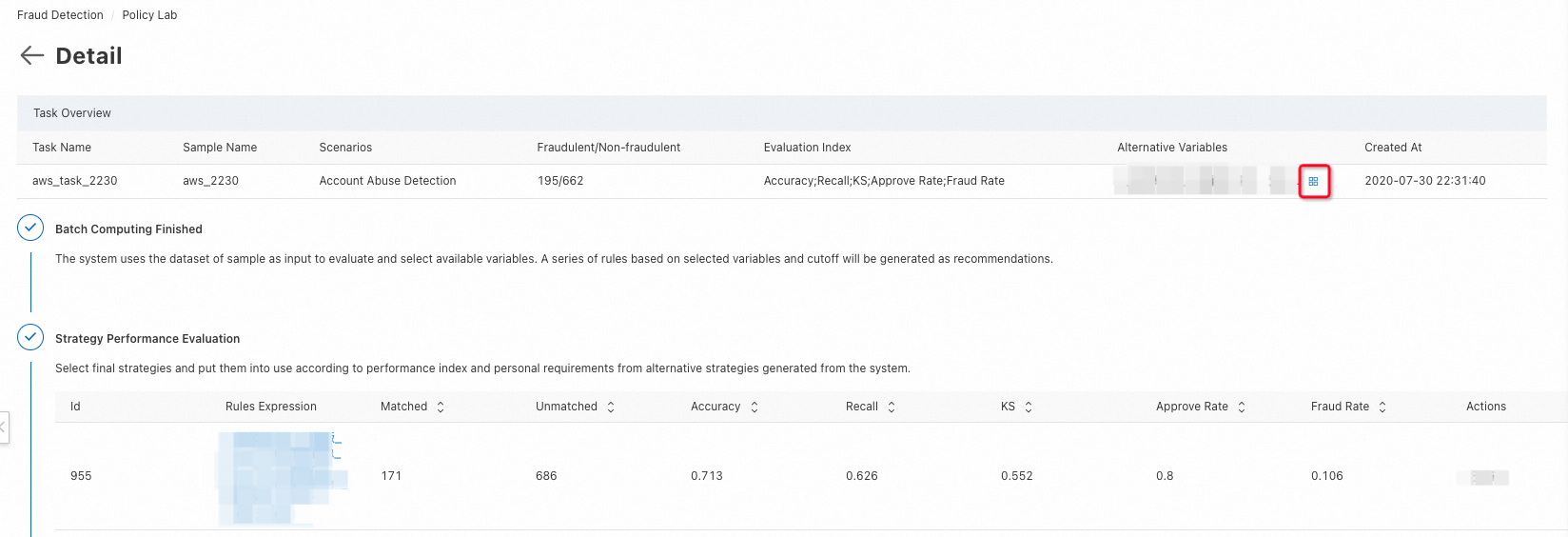

Monitor progress and review results

While the task runs, open the task details page to view staged results. The details page shows:

Independent variables selected so far

Details and evaluation metrics of policies recommended by the system

Add policies that meet your expectations as candidate policies.

Apply a candidate policy

Select a candidate policy and apply it to an event.

If the policy uses variables whose input parameters are missing from the event, the system automatically adds those parameters to the event. Make sure your requests pass values for the added parameters when you call the operation.

Review the policy's default state before going live:

State: Draft

Output tag: test

These defaults prevent accidental impact on production traffic. Change the state and output tag on the policy details page when you are ready to deploy.
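Before you change the state, it can help to confirm which input parameters the applied policy added to the event, so that callers supply them. The sketch below illustrates that check with plain Python sets. The parameter and variable names are hypothetical, and the mapping from variables to inputs is something you would assemble yourself from the policy details page.

```python
def missing_parameters(event_params, variable_inputs):
    """Return parameters required by the policy's variables but absent from the event.

    event_params: names of the parameters the event already defines.
    variable_inputs: mapping of variable name -> list of input parameter names.
    """
    required = set()
    for inputs in variable_inputs.values():
        required.update(inputs)
    return sorted(required - set(event_params))


# Hypothetical event definition and policy variables.
event_params = {"accountId", "ip"}
variable_inputs = {
    "ip_velocity_1h": ["ip"],
    "device_risk_score": ["deviceToken"],
    "mobile_risk": ["mobile", "ip"],
}

to_add = missing_parameters(event_params, variable_inputs)
print("parameters callers must start sending:", to_add)
```

Running a check like this against your integration code highlights requests that would otherwise reach the policy with empty inputs.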