You can use Spark on MaxCompute to access instances in an Alibaba Cloud Virtual Private Cloud (VPC), such as Elastic Compute Service (ECS), ApsaraDB for HBase, and ApsaraDB RDS for MySQL (RDS). By default, the underlying network of MaxCompute is isolated from the Internet. Spark on MaxCompute lets you access HBase in a VPC by configuring `spark.hadoop.odps.cupid.vpc.domain.list`. HBase Standard Edition and HBase Enhanced Edition require different configurations. This article describes the configuration for each edition.

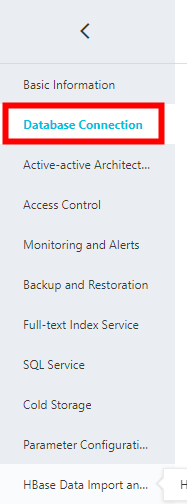

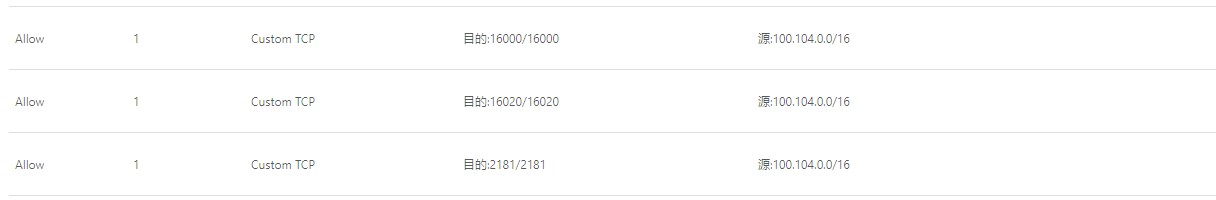

For HBase Standard Edition, the HBase cluster resides in a VPC. Therefore, you must add security group rules that open ports 2181, 16000, and 16020. You must also add the IP addresses of MaxCompute to the HBase whitelist.

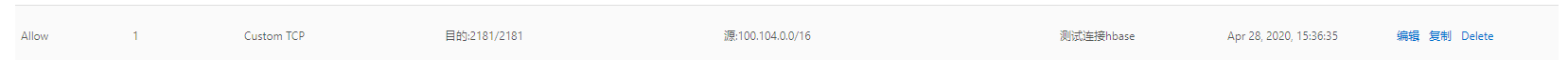

Find the security group through the VPC in which the HBase cluster resides. Then, add security group rules that open these ports.
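Before submitting the job, it can help to confirm from a machine in the same VPC (for example, an ECS instance) that the opened ports accept TCP connections. The following is a minimal sketch assuming Scala 2.12 or later. `PortCheck`, `isReachable`, and `parseQuorum` are hypothetical helpers, not part of MaxCompute or HBase. The check only opens a TCP connection, so a failure can mean a closed port, a whitelist that does not include the machine running the check, or a wrong host name.

```scala
import java.io.IOException
import java.net.{InetSocketAddress, Socket}

// Hypothetical pre-flight helper; not part of MaxCompute or HBase.
object PortCheck {
  // Returns true if a TCP connection to host:port succeeds within timeoutMs.
  // Unknown hosts, refused connections, and timeouts all count as unreachable.
  def isReachable(host: String, port: Int, timeoutMs: Int = 3000): Boolean = {
    val socket = new Socket()
    try {
      socket.connect(new InetSocketAddress(host, port), timeoutMs)
      true
    } catch {
      case _: IOException => false
    } finally {
      socket.close()
    }
  }

  // Splits a quorum string such as "host1:2181,host2:2181" into (host, port) pairs.
  def parseQuorum(quorum: String): Seq[(String, Int)] =
    quorum.split(",").toSeq.map(_.trim).filter(_.nonEmpty).map { hostPort =>
      val idx = hostPort.lastIndexOf(':')
      require(idx > 0, s"expected host:port, got '$hostPort'")
      (hostPort.substring(0, idx), hostPort.substring(idx + 1).toInt)
    }

  // Usage: pass one or more "host:port,host:port" strings as arguments.
  def main(args: Array[String]): Unit =
    for ((host, port) <- args.toSeq.flatMap(parseQuorum)) {
      val status = if (isReachable(host, port)) "reachable" else "NOT reachable"
      println(s"$host:$port -> $status")
    }
}
```

For example, pass the ZooKeeper quorum string used in the Spark job (with the real host names) as an argument to check all ZooKeeper hosts at once.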

Add the following CIDR block to the HBase whitelist:

`100.104.0.0/16`

Create the test table in the HBase shell:

`create 'test','cf'`

Define the required HBase dependencies.

<dependency>

<groupId>org.apache.hbase</groupId>

<artifactId>hbase-mapreduce</artifactId>

<version>2.0.2</version>

</dependency>

<dependency>

<groupId>com.aliyun.hbase</groupId>

<artifactId>alihbase-client</artifactId>

<version>2.0.5</version>

</dependency>object App {

def main(args: Array[String]) {

val spark = SparkSession

.builder()

.appName("HbaseTest")

.config("spark.sql.catalogImplementation", "odps")

.config("spark.hadoop.odps.end.point","http://service.cn.maxcompute.aliyun.com/api")

.config("spark.hadoop.odps.runtime.end.point","http://service.cn.maxcompute.aliyun-inc.com/api")

.getOrCreate()

val sc = spark.sparkContext

val config = HBaseConfiguration.create()

val zkAddress = "hb-2zecxg2ltnpeg8me4-master*-***:2181,hb-2zecxg2ltnpeg8me4-master*-***:2181,hb-2zecxg2ltnpeg8me4-master*-***:2181"

config.set(HConstants.ZOOKEEPER_QUORUM, zkAddress);

val jobConf = new JobConf(config)

jobConf.setOutputFormat(classOf[TableOutputFormat])

jobConf.set(TableOutputFormat.OUTPUT_TABLE,"test")

try{

import spark. _

spark.sql("select '7', 88 ").rdd.map(row => {

val name= row(0).asInstanceOf[String]

val id = row(1).asInstanceOf[Integer]

val put = new Put(Bytes.toBytes(id))

put.addColumn(Bytes.toBytes("cf"), Bytes.toBytes(id), Bytes.toBytes(name))

(new ImmutableBytesWritable, put)

}).saveAsHadoopDataset(jobConf)

} finally {

sc.stop()

}

}

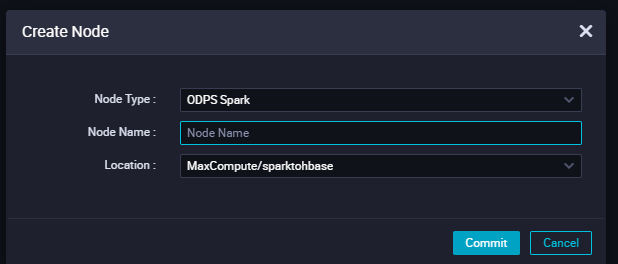

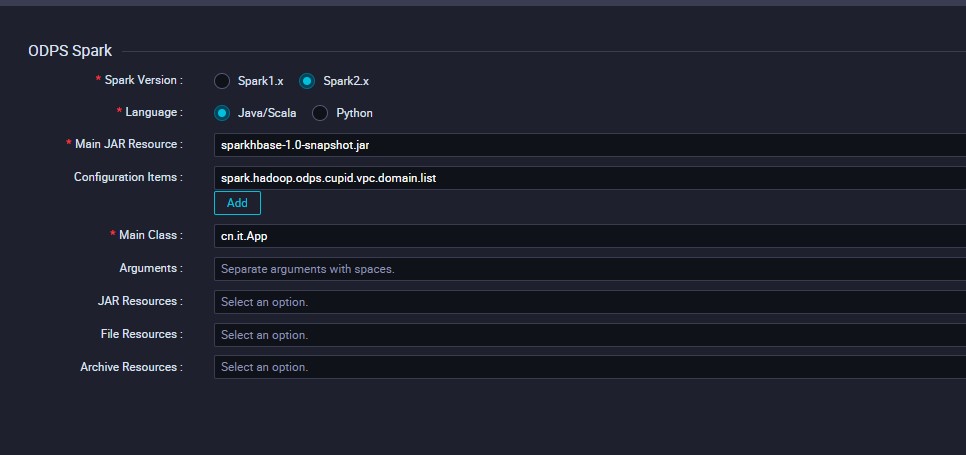

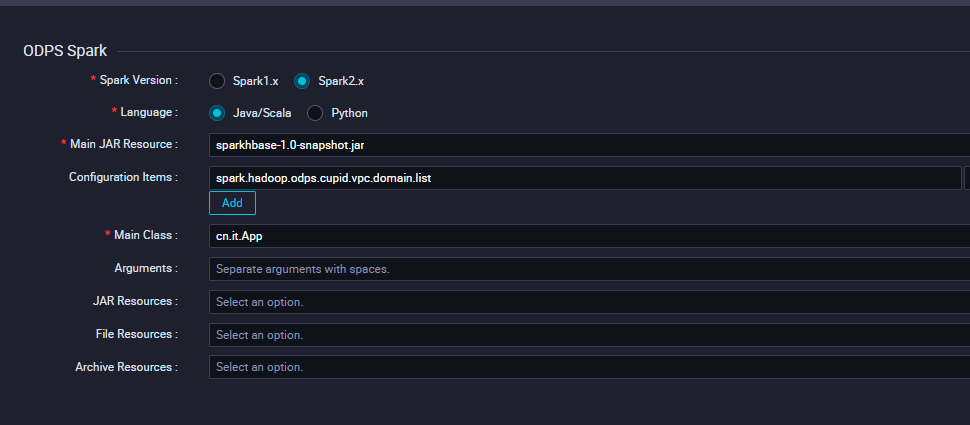

}The size of the program is greater than 50 MB, so you will need to submit it through the MaxCompute client.

`add jar SparkHbase-1.0-SNAPSHOT -f;`

Configure `spark.hadoop.odps.cupid.vpc.domain.list`. The domain list must include every HBase host (all master and core nodes) so that the Spark job can reach the entire cluster.

```json
{
  "regionId":"cn-beijing",
  "vpcs":[
    {
      "vpcId":"vpc-2zeaeq21mb1dmkqh0exox",
      "zones":[
        {
          "urls":[
            {"domain":"hb-2zecxg2ltnpeg8me4-master*-***.hbase.rds.aliyuncs.com","port":2181},
            {"domain":"hb-2zecxg2ltnpeg8me4-master*-***.hbase.rds.aliyuncs.com","port":16000},
            {"domain":"hb-2zecxg2ltnpeg8me4-master*-***.hbase.rds.aliyuncs.com","port":16020},
            {"domain":"hb-2zecxg2ltnpeg8me4-master*-***.hbase.rds.aliyuncs.com","port":2181},
            {"domain":"hb-2zecxg2ltnpeg8me4-master*-***.hbase.rds.aliyuncs.com","port":16000},
            {"domain":"hb-2zecxg2ltnpeg8me4-master*-***.hbase.rds.aliyuncs.com","port":16020},
            {"domain":"hb-2zecxg2ltnpeg8me4-master*-***.hbase.rds.aliyuncs.com","port":2181},
            {"domain":"hb-2zecxg2ltnpeg8me4-master*-***.hbase.rds.aliyuncs.com","port":16000},
            {"domain":"hb-2zecxg2ltnpeg8me4-master*-***.hbase.rds.aliyuncs.com","port":16020},
            {"domain":"hb-2zecxg2ltnpeg8me4-cor*-***.hbase.rds.aliyuncs.com","port":16020},
            {"domain":"hb-2zecxg2ltnpeg8me4-cor*-***.hbase.rds.aliyuncs.com","port":16020},
            {"domain":"hb-2zecxg2ltnpeg8me4-cor*-***.hbase.rds.aliyuncs.com","port":16020}
          ]
        }
      ]
    }
  ]
}
```
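Writing this list by hand makes it easy to miss a host. The following sketch, assuming Scala 2.12 or later, builds the same JSON structure from the lists of master and core hosts: each master host gets the ZooKeeper port plus 16000 and 16020, and each core host gets 16020, matching the example above. `VpcDomainList` and `build` are hypothetical names. Host names are inserted without JSON escaping, which is safe only for plain domain names and IP addresses.

```scala
// Hypothetical helper that builds the value of spark.hadoop.odps.cupid.vpc.domain.list.
object VpcDomainList {
  private def entry(domain: String, port: Int): String =
    s"""{"domain":"$domain","port":$port}"""

  // masterHosts: every master node; coreHosts: every core (RegionServer) node.
  // zkPort defaults to the Standard Edition ZooKeeper port 2181.
  def build(regionId: String, vpcId: String,
            masterHosts: Seq[String], coreHosts: Seq[String],
            zkPort: Int = 2181): String = {
    val masterUrls = masterHosts.flatMap(h => Seq(zkPort, 16000, 16020).map(p => entry(h, p)))
    val coreUrls = coreHosts.map(h => entry(h, 16020))
    val urls = (masterUrls ++ coreUrls).mkString(",")
    // Compact, single-line JSON with the same nesting as the example above.
    s"""{"regionId":"$regionId","vpcs":[{"vpcId":"$vpcId","zones":[{"urls":[$urls]}]}]}"""
  }
}
```

Passing `zkPort = 30020` yields the master and core entries used for HBase Enhanced Edition; that edition additionally needs the Java API IP entry, which this helper does not add.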

For HBase Enhanced Edition, the security group must open ports 30020, 16000, and 16020. You must also add the IP addresses of MaxCompute to the HBase whitelist.

Find the security group through the VPC in which the HBase cluster resides. Then, add security group rules that open these ports.

Add the following CIDR block to the HBase whitelist:

`100.104.0.0/16`

Create the test table in the HBase shell:

`create 'test','cf'`

Define the required HBase dependencies. HBase Enhanced Edition requires its own client package:

```xml
<dependency>
    <groupId>com.aliyun.hbase</groupId>
    <artifactId>alihbase-client</artifactId>
    <version>2.0.8</version>
</dependency>
```

Write the Spark job:

```scala
import java.util.ArrayList

import org.apache.hadoop.hbase.{HBaseConfiguration, TableName}
import org.apache.hadoop.hbase.client.{ConnectionFactory, Put}
import org.apache.hadoop.hbase.util.Bytes
import org.apache.spark.sql.SparkSession

object McToHbase {
  def main(args: Array[String]): Unit = {
    val spark = SparkSession
      .builder()
      .appName("spark_sql_ddl")
      .config("spark.sql.catalogImplementation", "odps")
      .config("spark.hadoop.odps.end.point", "http://service.cn.maxcompute.aliyun.com/api")
      .config("spark.hadoop.odps.runtime.end.point", "http://service.cn.maxcompute.aliyun-inc.com/api")
      .getOrCreate()

    val sc = spark.sparkContext

    try {
      spark.sql("select '7', 'long'").rdd.foreachPartition { iter =>
        // HBase client objects are not serializable, so create them inside foreachPartition.
        val config = HBaseConfiguration.create()
        // You can retrieve the cluster endpoint (VPC internal endpoint) on the Database Connection page in the console.
        config.set("hbase.zookeeper.quorum", ":30020")
        // Set the username and password of HBase Enhanced Edition.
        config.set("hbase.client.username", "")
        config.set("hbase.client.password", "")

        val tableName = TableName.valueOf("test")
        val conn = ConnectionFactory.createConnection(config)
        val table = conn.getTable(tableName)
        try {
          val puts = new ArrayList[Put]()
          iter.foreach { row =>
            val id = row.getString(0)
            val name = row.getString(1)
            val put = new Put(Bytes.toBytes(id))
            put.addColumn(Bytes.toBytes("cf"), Bytes.toBytes(id), Bytes.toBytes(name))
            puts.add(put)
          }
          // Write the whole partition in one batch instead of resending the list for every row.
          table.put(puts)
        } finally {
          table.close()
          conn.close()
        }
      }
    } finally {
      sc.stop()
    }
  }
}
```

Problem: The HBase client reports `org.apache.spark.SparkException: Task not serializable`.

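Cause: Spark serializes the functions passed to RDD operations, together with every object they reference, before sending them to worker nodes. HBase client objects such as connections and tables are not serializable.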
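The cause can be reproduced without Spark, because Spark serializes closures with Java serialization. In this sketch (assuming Scala 2.12 or later, where Scala function literals are serializable), `NotSerializable` is a hypothetical stand-in for an HBase client object, and `ClientHolder` and `ClosureDemo` are illustrative names. A closure that captures an instance created outside it cannot be serialized, while a closure that creates the instance itself, or looks it up through a Scala `object`, can.

```scala
import java.io.{ByteArrayOutputStream, NotSerializableException, ObjectOutputStream}

// Hypothetical stand-in for a client object such as an HBase Connection:
// it does not extend java.io.Serializable.
class NotSerializable {
  def handle(s: String): String = s.toUpperCase
}

// Per-JVM holder: a lazy val in a Scala object is created once per JVM
// (that is, once per executor) on first use, and is never captured by closures.
object ClientHolder {
  lazy val client: NotSerializable = new NotSerializable
}

object ClosureDemo {
  // True if Java serialization (which Spark uses for closures) accepts obj.
  def canSerialize(obj: AnyRef): Boolean =
    try {
      val out = new ObjectOutputStream(new ByteArrayOutputStream())
      try out.writeObject(obj) finally out.close()
      true
    } catch {
      case _: NotSerializableException => false
    }

  // Fails: the closure captures a client created outside it (on the driver).
  def capturingClosure(): Iterator[String] => List[String] = {
    val client = new NotSerializable
    iter => iter.map(s => client.handle(s)).toList
  }

  // Works: the client is created inside the closure, once per call (per partition).
  def perPartitionClosure(): Iterator[String] => List[String] =
    iter => {
      val local = new NotSerializable
      iter.map(s => local.handle(s)).toList
    }

  // Works: the closure looks up the per-JVM instance instead of capturing one.
  def holderClosure(): Iterator[String] => List[String] =
    iter => iter.map(s => ClientHolder.client.handle(s)).toList
}
```

`perPartitionClosure` mirrors the `foreachPartition` approach used in the job above, and `holderClosure` mirrors the static, per-host approach in the solutions that follow.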

Solution:

- Make the class serializable.
- Declare the instance only inside the lambda function passed to `map`.
- Make the NotSerializable object static, so that each host creates its own instance.
- Call `rdd.foreachPartition` and create the non-serializable object inside it, once per partition, as follows:

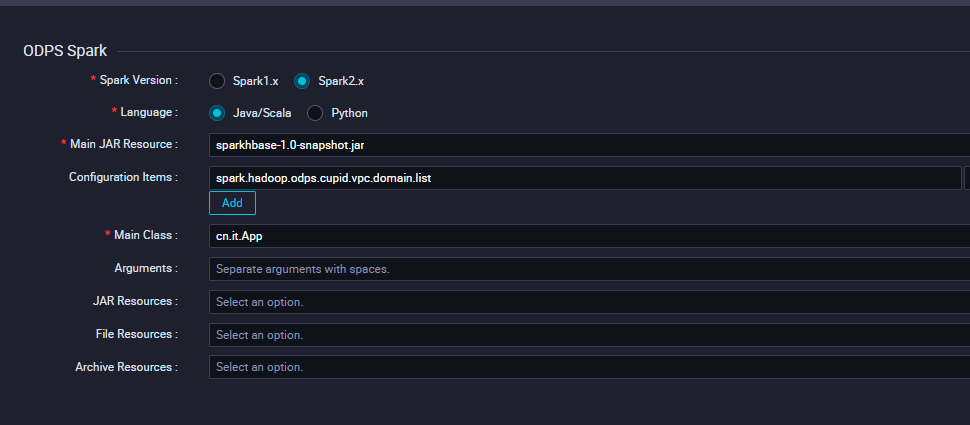

```java
rdd.foreachPartition(iter -> {
    NotSerializable notSerializable = new NotSerializable();
    // ... handle iter
});
```

Because the JAR package is larger than 50 MB, submit it through the MaxCompute client:

`add jar SparkHbase-1.0-SNAPSHOT -f;`

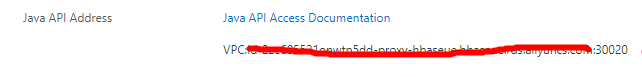

Configure `spark.hadoop.odps.cupid.vpc.domain.list` as follows:

1. Add the endpoint of the HBase Enhanced Edition Java API as an IP address. Ping the endpoint to obtain its IP address (172.16.0.10 in this example), and add that IP address with port 16000.
2. The domain list must include every HBase host (all master and core nodes) so that the Spark job can reach the entire cluster.

```json
{
  "regionId":"cn-beijing",
  "vpcs":[
    {
      "vpcId":"vpc-2zeaeq21mb1dmkqh0exox",
      "zones":[
        {
          "urls":[
            {"domain":"hb-2zecxg2ltnpeg8me4-master*-***.hbase.rds.aliyuncs.com","port":30020},
            {"domain":"hb-2zecxg2ltnpeg8me4-master*-***.hbase.rds.aliyuncs.com","port":16000},
            {"domain":"hb-2zecxg2ltnpeg8me4-master*-***.hbase.rds.aliyuncs.com","port":16020},
            {"domain":"hb-2zecxg2ltnpeg8me4-master*-***.hbase.rds.aliyuncs.com","port":30020},
            {"domain":"hb-2zecxg2ltnpeg8me4-master*-***.hbase.rds.aliyuncs.com","port":16000},
            {"domain":"hb-2zecxg2ltnpeg8me4-master*-***.hbase.rds.aliyuncs.com","port":16020},
            {"domain":"hb-2zecxg2ltnpeg8me4-master*-***.hbase.rds.aliyuncs.com","port":30020},
            {"domain":"hb-2zecxg2ltnpeg8me4-master*-***.hbase.rds.aliyuncs.com","port":16000},
            {"domain":"hb-2zecxg2ltnpeg8me4-master*-***.hbase.rds.aliyuncs.com","port":16020},
            {"domain":"hb-2zecxg2ltnpeg8me4-cor*-***.hbase.rds.aliyuncs.com","port":16020},
            {"domain":"hb-2zecxg2ltnpeg8me4-cor*-***.hbase.rds.aliyuncs.com","port":16020},
            {"domain":"hb-2zecxg2ltnpeg8me4-cor*-***.hbase.rds.aliyuncs.com","port":16020},
            {"domain":"172.16.0.10","port":16000}
          ]
        }
      ]
    }
  ]
}
```
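The ping step can also be done in code: resolving the endpoint's domain name returns the same IP address that ping prints. This is a minimal sketch assuming Scala 2.12 or later; `EndpointEntry`, `resolveIp`, and `entryFor` are hypothetical helpers. The endpoint is a private domain name, so the code must run where that name resolves, typically an ECS instance in the same VPC.

```scala
import java.net.InetAddress

// Hypothetical helper: resolves the Java API endpoint of HBase Enhanced Edition
// and formats the extra domain-list entry (port 16000 by default).
object EndpointEntry {
  // DNS lookup; an IP literal such as "172.16.0.10" is returned unchanged.
  def resolveIp(endpoint: String): String =
    InetAddress.getByName(endpoint).getHostAddress

  def entryFor(endpoint: String, port: Int = 16000): String =
    s"""{"domain":"${resolveIp(endpoint)}","port":$port}"""

  // Usage: pass the Java API endpoint(s) shown in the console as arguments.
  def main(args: Array[String]): Unit =
    args.foreach(endpoint => println(entryFor(endpoint)))
}
```

Note that the resolved address can change if the endpoint is remapped, so the entry may need to be regenerated in that case.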

If you have any further inquiries or suggestions regarding MaxCompute, please comment below or reach out to your nearest Alibaba Cloud sales representative!
