Catch the replay of the Apsara Conference 2020 at this link!

By ELK Geek and Luo Tao, Senior Technical Expert of Alibaba Group

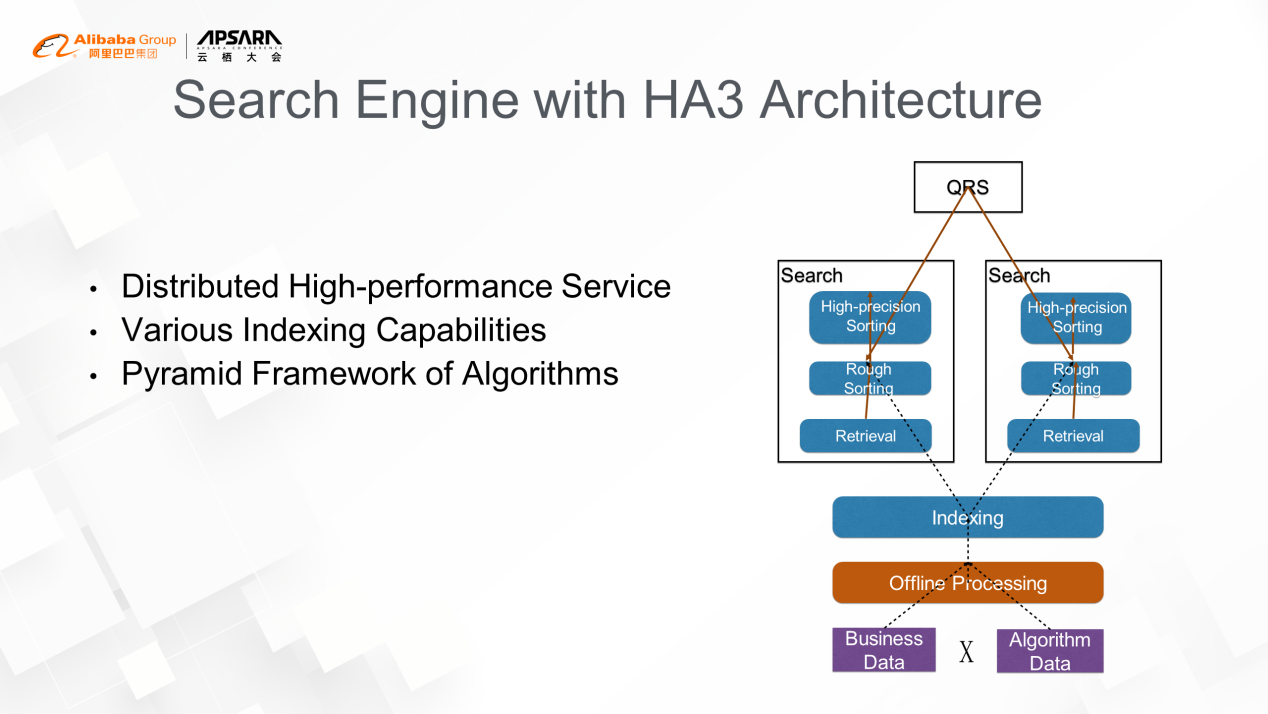

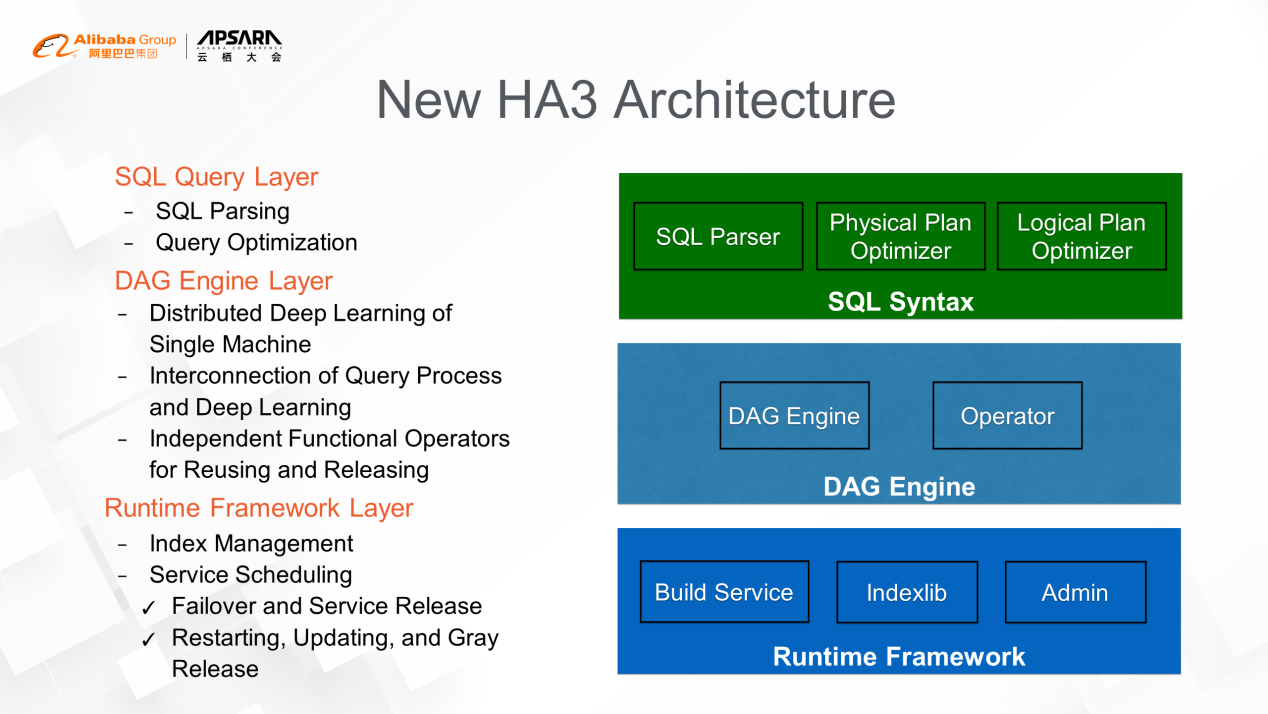

1. There are two parts of HA3 architecture:

2. There are three main features of HA3 architecture:

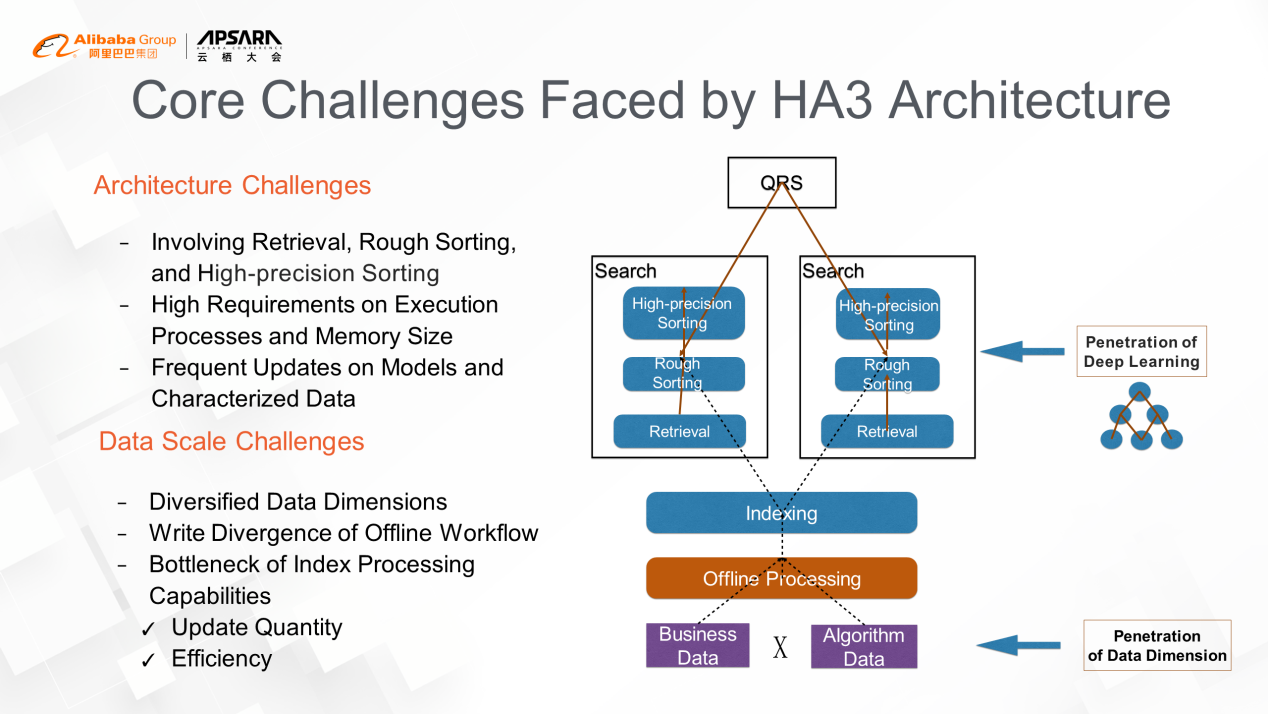

As Alibaba Group's business has grown over the past few years, this architecture, once viewed as an advantage, has gradually turned into a barrier to further development.

The main challenges are the extension of deep learning and the expansion of data dimensions.

1. The Extension of Deep Learning

The application scope of deep learning has extended from its early use in high-precision sorting to rough sorting and retrieval, such as vector index recall. The introduction of deep learning also causes two problems. First, the network structures of deep learning models are usually complex and place high demands on the execution process and model size. As a result, the traditional pipeline work mode can no longer meet these demands. Second, real-time updates of models and feature data challenge the indexing capabilities, with tens of billions of updates online.

2. The Expansion of Data Dimensions

In the e-commerce field, the main data dimensions used to be buyers and sellers. Now, data on locations, distribution, stores, and fulfillment is also taken into consideration. Taking distribution as an example, there is distribution within 3 kilometers, within 5 kilometers, intra-city, and cross-city. In the offline workflow of the search engine, the data of all dimensions is converted into one large wide table, which results in data expansion in the form of a Cartesian product. Therefore, it is difficult to meet the requirements of new scenarios for update scale and timeliness.
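The expansion can be illustrated with a minimal sketch. The table contents and helper names below are hypothetical toy data, not taken from the HA3 offline workflow, and the sketch assumes the worst case in which every combination of dimension values produces a row.

```python
from itertools import product

# Hypothetical toy data: one list per business dimension.
products = [f"product_{i}" for i in range(4)]
stores = [f"store_{i}" for i in range(3)]
delivery_ranges = ["3km", "5km", "intra_city", "cross_city"]


def wide_table_rows(*dimensions):
    """Flatten all dimensions into one wide table (worst case: full Cartesian product)."""
    return list(product(*dimensions))


def rows_touched_by_update(wide_rows, value):
    """Count wide-table rows that must be rewritten when one dimension value changes."""
    return sum(1 for row in wide_rows if value in row)


wide = wide_table_rows(products, stores, delivery_ranges)
separate_total = len(products) + len(stores) + len(delivery_ranges)

print(len(wide))                                # 48 rows in the wide table
print(separate_total)                           # 11 rows if each dimension is kept separately
print(rows_touched_by_update(wide, "store_1"))  # 16 rows rewritten for a single store change
```

With separate tables, a change in one store's status touches one row. In the wide table, the same change fans out to every row that contains that store.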

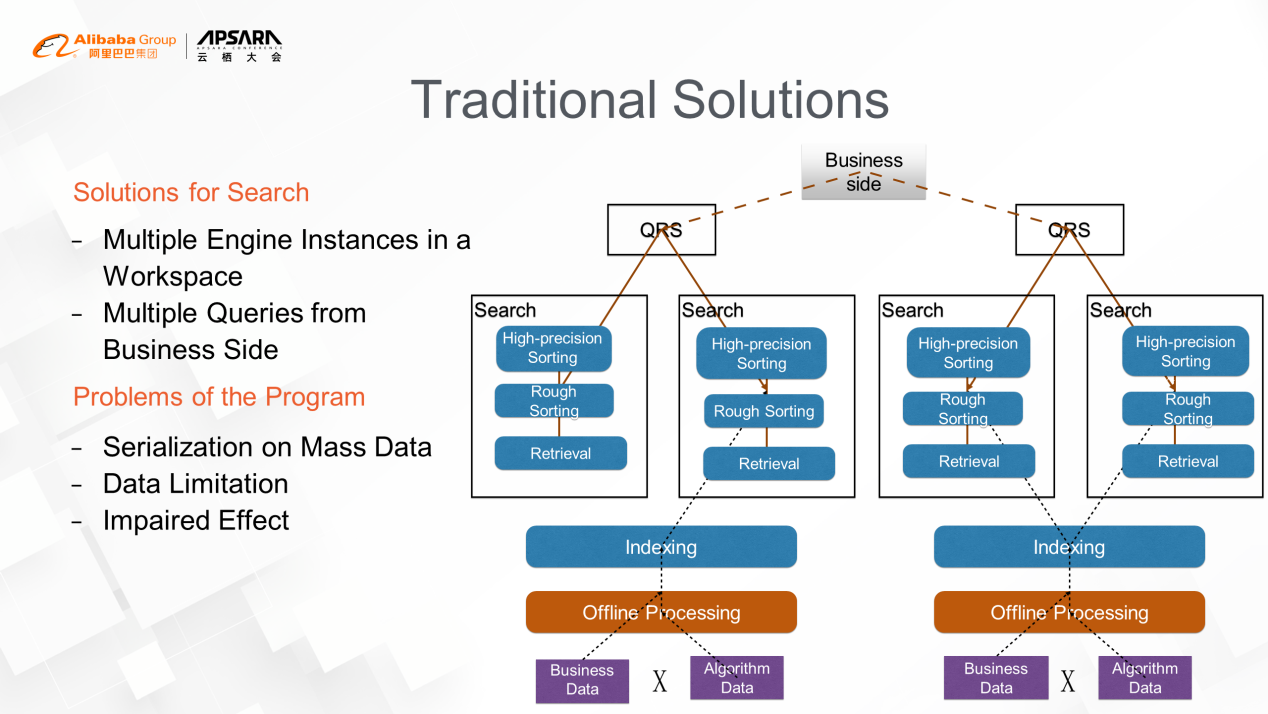

The traditional search engine solution splits the engine into different instances according to the dimension features of the business data. The business layer then obtains results by querying the different engine instances. For example, the Eleme search engine has data in the store and product dimensions. To reduce the impact of real-time changes in store status on the index, two search engine instances can be deployed: one searches for appropriate stores, and the other searches for appropriate products. The business side queries the store engine first and then the product engine. However, this solution has an obvious disadvantage. When many stores match the user's intention, the store data must be serialized in the store engine and sent to the product engine through the business side. The cost of this serialization is very high, so a limit is usually placed on the number of stores sent from the store engine. However, stores beyond the limit may well match the user's intention better, so the truncation has a major impact on business performance. This is a big loss for both users and sellers, especially in popular business areas.
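The effect of the store limit can be sketched as follows. The two functions stand in for the store engine and the product engine. All names, scores, and data are hypothetical and only illustrate how truncation between the two queries can drop the best result.

```python
from dataclasses import dataclass


@dataclass
class Store:
    store_id: int
    store_score: float  # relevance as judged by the store engine alone


@dataclass
class Product:
    store_id: int
    name: str
    product_score: float  # relevance as judged by the product engine


def query_store_engine(stores, limit):
    """First hop: return the ids of the top `limit` stores."""
    ranked = sorted(stores, key=lambda s: s.store_score, reverse=True)
    return [s.store_id for s in ranked[:limit]]


def query_product_engine(products, store_ids):
    """Second hop: best product among the stores passed in by the business layer."""
    allowed = set(store_ids)
    candidates = [p for p in products if p.store_id in allowed]
    return max(candidates, key=lambda p: p.product_score, default=None)


stores = [Store(1, 0.9), Store(2, 0.8), Store(3, 0.7)]
products = [
    Product(1, "noodles A", 0.50),
    Product(2, "noodles B", 0.60),
    Product(3, "noodles C", 0.95),
]

truncated = query_product_engine(products, query_store_engine(stores, limit=2))
full = query_product_engine(products, query_store_engine(stores, limit=3))

print(truncated.name)  # noodles B: store 3 was cut off before the second hop
print(full.name)       # noodles C: the best product overall
```

Raising the limit recovers the better result, but only at the price of serializing and shipping more store data between the two engines.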

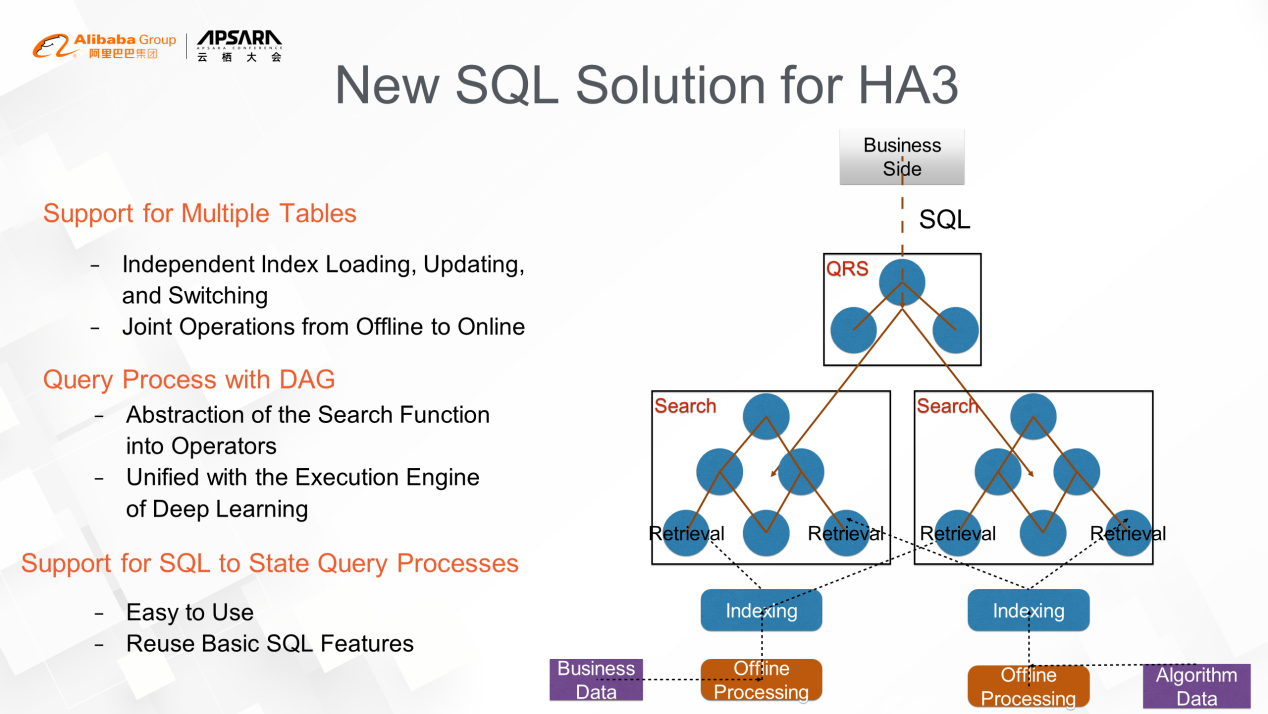

The following three key points describe how to reshape the search process using the SQL database:

It is mainly divided into three layers:

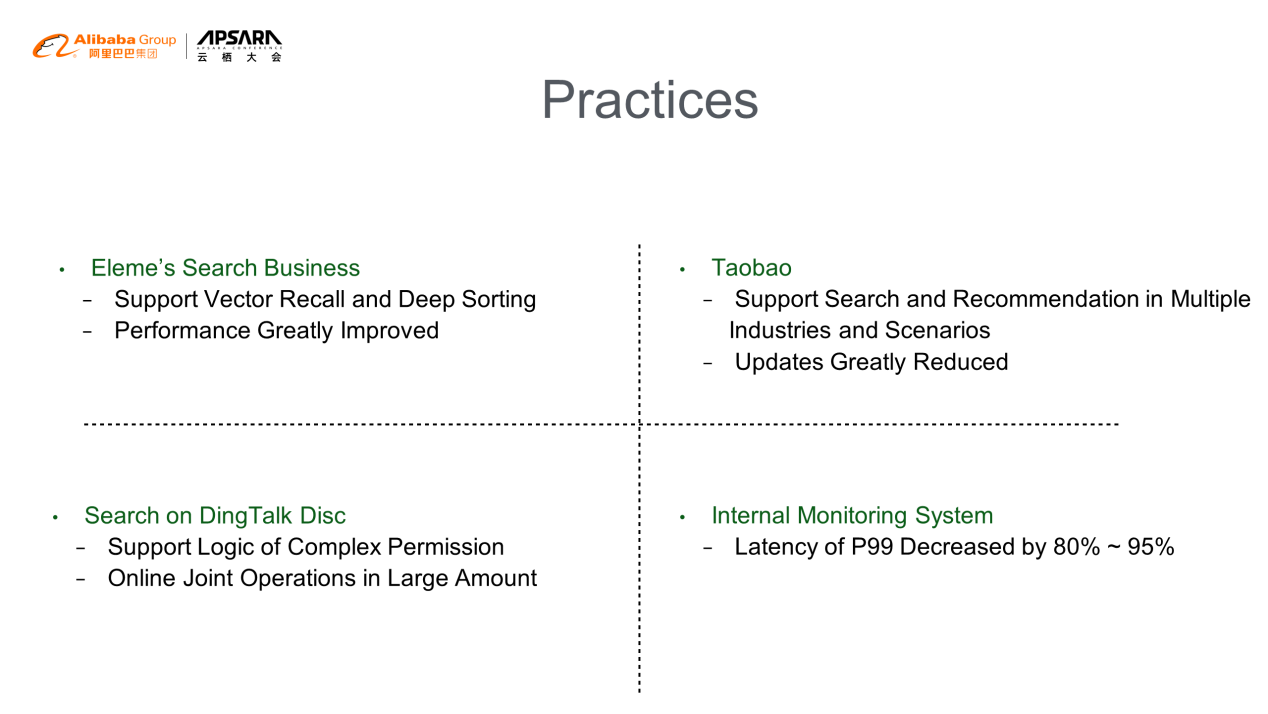

In a takeaway search scenario, imagine that a user enters the keyword "beef noodles" in the search box. The search engine works in three steps behind the scenes. First, it finds stores that are currently open and sell beef noodles. Then, it selects the best-matching products in those stores. Lastly, it returns the stores and products that best meet the user's needs. In this case, the data on store business status, distribution capacity, and product inventory needs to be updated in real time. Matching the query against massive data also requires various indexing technologies, such as spatial indexing, inverted indexing, and vector indexing. The sorting of stores and products also relies on deep models since user preferences, discount information, and distance are all important. In the past, Elasticsearch was used to query tables in the store dimension and the product dimension, but it had problems with the limit on query results and with introducing deep learning. These problems can be solved easily within the HA3 architecture. After the migration to HA3, the long tail problem of the service disappeared, and performance improved substantially. Moreover, the HA3 architecture leaves room for subsequent iterations of algorithms. The performance improvements mainly come from the index structure and query optimization.
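The three steps can be sketched as a single SQL join. The example below uses Python's built-in `sqlite3` module as a stand-in engine. The schema, data, and query are assumptions made for illustration and do not reflect HA3's actual SQL dialect, indexes, or ranking models.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
CREATE TABLE store (store_id INTEGER PRIMARY KEY, name TEXT, is_open INTEGER);
CREATE TABLE product (product_id INTEGER PRIMARY KEY, store_id INTEGER,
                      title TEXT, stock INTEGER, score REAL);
INSERT INTO store VALUES
  (1, 'Noodle House', 1), (2, 'Late Night Diner', 0), (3, 'Lanzhou Kitchen', 1);
INSERT INTO product VALUES
  (10, 1, 'beef noodles', 5, 0.80),
  (11, 1, 'beef noodles large', 2, 0.70),
  (20, 2, 'beef noodles', 9, 0.99),
  (30, 3, 'beef noodles', 0, 0.95),
  (31, 3, 'spicy beef noodles', 4, 0.90);
""")

# Step 1: keep open stores with matching, in-stock products.
# Step 2: keep the best-scoring product per store.
# Step 3: order stores by that best score.
# Note: taking p.title from the row that holds MAX(p.score) relies on
# SQLite's documented handling of bare columns in aggregate queries.
QUERY = """
SELECT s.name, p.title, MAX(p.score) AS best_score
FROM store AS s
JOIN product AS p ON p.store_id = s.store_id
WHERE s.is_open = 1 AND p.stock > 0 AND p.title LIKE ?
GROUP BY s.store_id
ORDER BY best_score DESC
"""

rows = conn.execute(QUERY, ("%beef noodles%",)).fetchall()
for row in rows:
    print(row)
```

Because the join runs inside one engine, no intermediate store list has to be serialized or truncated. A closed store or an out-of-stock product is simply filtered by the current row values.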

Taobao's core demand is to introduce local services, such as Tmall Supermarket and Freshippo's one-hour distribution, into its search business. By splitting the data into store and commodity dimensions, the update capability has been greatly improved, and many search functions of Eleme are also reused.

The permission management of documents on DingTalk Disk is difficult to support with a traditional search engine because the data in both the document and permission dimensions is large in scale and frequently updated. This problem is solved through the real-time local join operation of HA3's SQL with low latency.
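A minimal sketch of the idea, assuming documents and permissions are kept as two separate in-memory tables that are joined at query time. The table contents and the `search` helper are hypothetical and do not describe the DingTalk or HA3 implementation.

```python
# Hypothetical toy tables; field names are illustrative only.
documents = {
    101: "Q3 budget plan",
    102: "budget review notes",
    103: "team offsite agenda",
}
# (doc_id, user_id) pairs, kept apart from the documents so that a
# permission change never requires rewriting a document entry.
permissions = {(101, "alice"), (102, "bob")}


def search(keyword, user_id):
    """Return ids of documents that match the keyword and that the user may read."""
    matched = (doc_id for doc_id, title in documents.items() if keyword in title)
    # The join happens at query time against the current permission table.
    return sorted(doc_id for doc_id in matched if (doc_id, user_id) in permissions)


before = search("budget", "alice")
permissions.add((102, "alice"))  # a permission grant applied in real time
after = search("budget", "alice")

print(before)  # [101]
print(after)   # [101, 102]
```

The grant becomes visible to the next query without touching the document table, which is the property that frequent permission updates depend on.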

This system was previously built on Druid, which can no longer meet the demands of the business scale, and errors frequently require manual handling. Therefore, time series data indexes are added on top of HA3, and the parallel operation capability of SQL is also applied. As a result, latency has decreased and stability has improved substantially.
