This article covers the following topics and technical points:

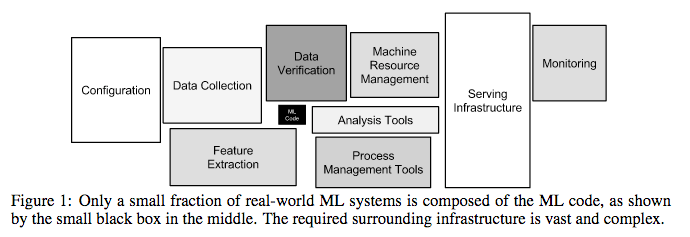

As the commercial value of machine learning and deep learning has grown, ML software technologies have evolved rapidly. Concepts such as training, models, algorithms, prediction, and inference, together with frameworks such as Spark MLlib and TensorFlow, come up constantly. On a local machine, a Jupyter notebook can call TensorFlow to train a model on tens of thousands of images, and after repeated parameter tuning, the model's inference/prediction results become accurate. However, a figure in the NIPS paper Hidden Technical Debt in Machine Learning Systems makes an important point very clearly: when it comes to generating commercial value, the work on MLOps, that is, building the infrastructure surrounding machine learning, is far larger than the core machine learning development itself. MLOps in production varies with the business scenario and involves many modules, too many to cover in one article.

This article focuses on one of these modules: the machine learning pipeline. It shows how to use Alibaba Cloud Serverless services to improve R&D and O&M efficiency and to automatically turn algorithms into trained models. After passing testing and approval, these models can then generate business value through prediction/inference in production.

Note: This is a figure from Hidden Technical Debt in Machine Learning Systems.

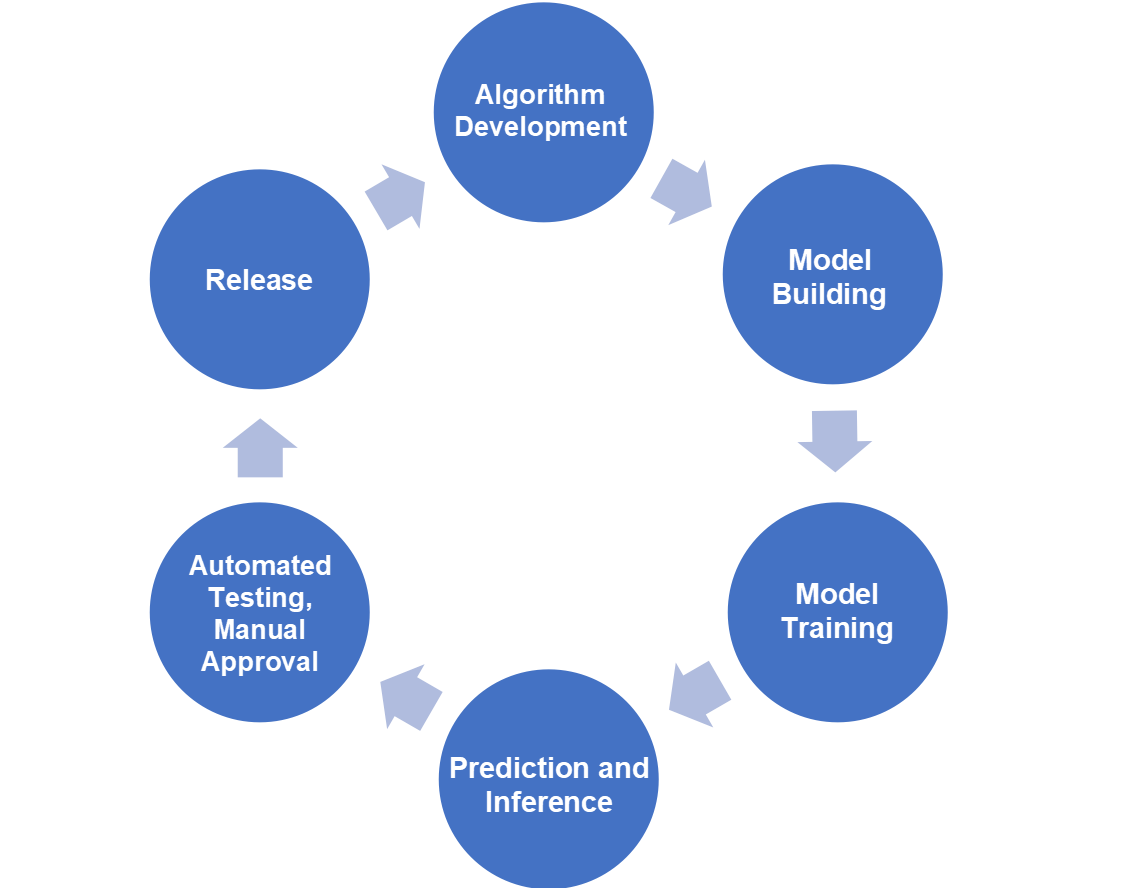

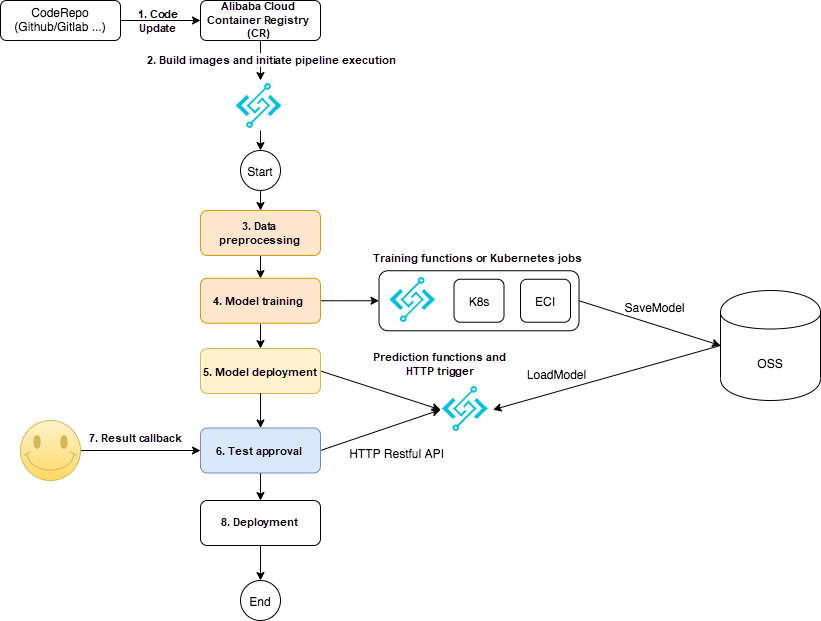

As shown in the following figure, the complex MLOps process is abstracted and simplified into a closed feedback loop consisting of algorithm development, model build, training, serving, testing and approval, and release.
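The closed loop can be expressed as a small driver that runs the stages in order and, when a stage fails (for example, testing and approval rejects the model), starts another round from algorithm development. This is a minimal local sketch under stated assumptions: the restart-from-the-beginning rule, the stage callables, and `max_rounds` are hypothetical, and in the solution described here the orchestration is done by FnF and FC rather than by a Python loop.

```python
from typing import Callable, Dict, List

# Stage names follow the closed loop described above. The callables passed
# in are hypothetical placeholders for real build/train/serve/test steps.
STAGES: List[str] = [
    "algorithm development",
    "model build",
    "training",
    "serving",
    "testing and approval",
    "release",
]


def run_pipeline(
    steps: Dict[str, Callable[[dict], bool]],
    max_rounds: int = 3,
) -> List[str]:
    """Run the stages in order; if any stage returns False, start a new
    round from algorithm development (the feedback edge of the loop).

    Returns the log of executed stages, ending with "release" on success.
    Raises RuntimeError if no round reaches release within max_rounds.
    """
    log: List[str] = []
    context: dict = {"round": 0}
    for round_no in range(1, max_rounds + 1):
        context["round"] = round_no
        for stage in STAGES:
            log.append(stage)
            if not steps[stage](context):
                break  # feedback: abandon this round and loop back
        else:
            return log  # every stage succeeded, including release
    raise RuntimeError(f"pipeline did not reach release in {max_rounds} rounds")
```

For example, if every stage succeeds except testing and approval in the first round, the log shows two passes through training before the model is released.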

Although the logic is clear and simple, the pipeline system must meet the following requirements before it can be used in production:

The concept of a pipeline and the requirements above are not new. Several open source solutions are widely used, such as Jenkins (common in CI/CD systems), the Airflow workflow engine, and Uber Cadence. However, there is no widely adopted ML pipeline solution on Alibaba Cloud. This article introduces a solution that combines Alibaba Cloud Serverless services with Container Service for Kubernetes (ACK).

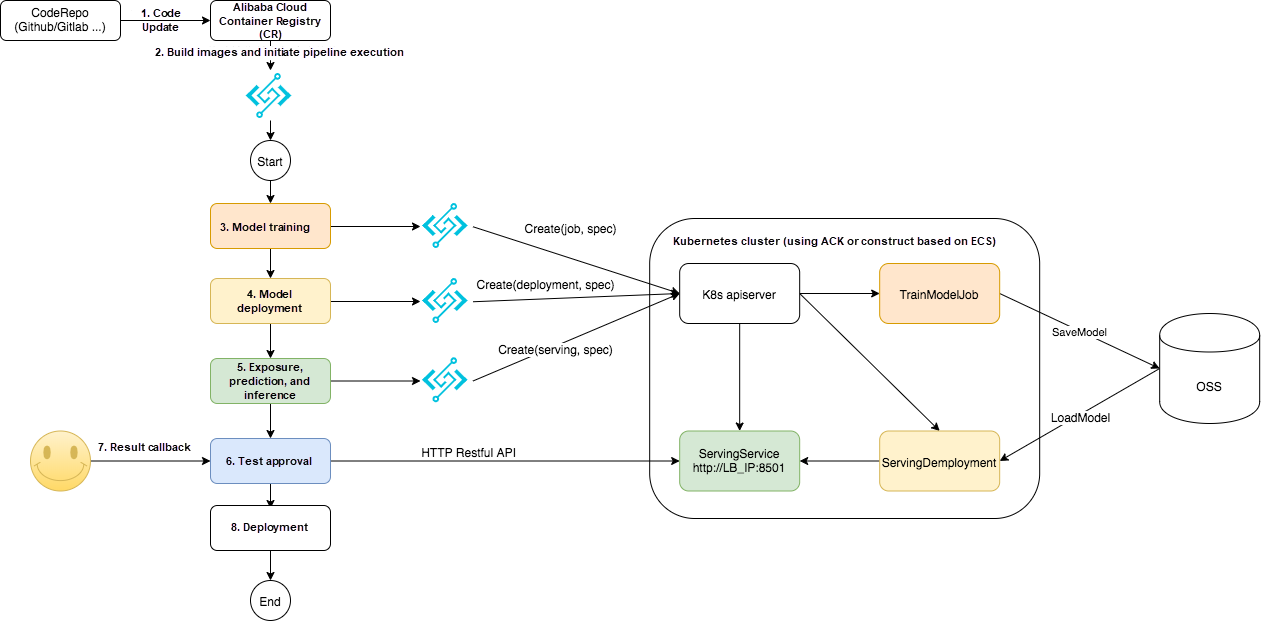

This article assumes that training and prediction/inference run on ACK clusters or on self-built Kubernetes clusters based on Elastic Compute Service (ECS). Training uses the Fashion MNIST dataset. The prediction service accepts an image and returns the predicted category, such as T-shirt/top, trouser, sneaker, or ankle boot. Training and serving use different Docker images. The pipeline is orchestrated with Function Flow (FnF) and Function Compute (FC). Both are Serverless cloud services: they are fully managed, require no maintenance, are billed by usage, and scale out on demand. The pipeline logic diagram is shown below:
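The prediction service has to turn the model's raw outputs into a category name. Here is a minimal post-processing sketch, assuming the model returns ten raw scores (logits) per image in the standard Fashion MNIST label order. The function names are illustrative and not part of the original solution.

```python
import math
from typing import List, Tuple

# The ten Fashion MNIST classes, indexed by label id 0-9.
FASHION_MNIST_LABELS = [
    "T-shirt/top", "Trouser", "Pullover", "Dress", "Coat",
    "Sandal", "Shirt", "Sneaker", "Bag", "Ankle boot",
]


def softmax(logits: List[float]) -> List[float]:
    """Numerically stable softmax: subtract the max before exponentiating."""
    m = max(logits)
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def decode_prediction(logits: List[float]) -> Tuple[str, float]:
    """Map one image's 10 logits to (class name, probability)."""
    if len(logits) != len(FASHION_MNIST_LABELS):
        raise ValueError(f"expected 10 logits, got {len(logits)}")
    probs = softmax(logits)
    best = max(range(len(probs)), key=probs.__getitem__)
    return FASHION_MNIST_LABELS[best], probs[best]
```

For instance, `decode_prediction([0.1] * 9 + [5.0])` returns `"Ankle boot"` with a probability of about 0.94.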

As mentioned earlier, FC and FnF are used to orchestrate the ML pipeline. What advantages do they offer over existing open source workflow engines? The answer lies in the capabilities that ML pipelines require. The following table compares the capabilities listed in the "Question Definition" section:

Compared with open source workflow/pipeline solutions, the FnF and FC solution meets more of the production requirements for ML pipelines listed above. It also has the following notable features:

In this solution, the training and prediction stages are implemented as Kubernetes Jobs and Deployments, respectively. Kubernetes clusters often hold a relatively large pool of resources, and low utilization is a common problem. One advantage of the FnF and FC solution is its high flexibility, as shown in the following section:
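To make the Job/Deployment split concrete, here is a minimal sketch of what a pipeline step running in Function Compute might do: build a Kubernetes Job manifest for training or a Deployment manifest for prediction, and optionally submit it with the official `kubernetes` Python client. The event fields (`stage`, `name`, `image`, `epochs`, `replicas`, `submit`), the `--epochs` container argument, the namespace, and the numeric settings are hypothetical assumptions, not the article's actual implementation.

```python
import json

# Hypothetical namespace; adjust for your cluster.
NAMESPACE = "default"


def training_job_manifest(name: str, image: str, epochs: int) -> dict:
    """batch/v1 Job: runs one training run to completion, then stops."""
    return {
        "apiVersion": "batch/v1",
        "kind": "Job",
        "metadata": {"name": name, "namespace": NAMESPACE},
        "spec": {
            "backoffLimit": 2,  # retry a failed training Pod at most twice
            # Delete the finished Job and its Pods after 10 minutes so the
            # cluster does not keep objects around for completed runs.
            "ttlSecondsAfterFinished": 600,
            "template": {
                "spec": {
                    "restartPolicy": "Never",
                    "containers": [{
                        "name": "train",
                        "image": image,
                        "args": [f"--epochs={epochs}"],  # hypothetical flag
                    }],
                }
            },
        },
    }


def serving_deployment_manifest(name: str, image: str, replicas: int) -> dict:
    """apps/v1 Deployment: keeps the prediction service running."""
    labels = {"app": name}
    return {
        "apiVersion": "apps/v1",
        "kind": "Deployment",
        "metadata": {"name": name, "namespace": NAMESPACE, "labels": labels},
        "spec": {
            "replicas": replicas,
            "selector": {"matchLabels": labels},
            "template": {
                "metadata": {"labels": labels},
                "spec": {"containers": [{"name": "serve", "image": image}]},
            },
        },
    }


def handler(event, context):
    """Function Compute-style entry point; event may arrive as bytes.

    Builds the manifest for the requested stage and returns it as JSON.
    The cluster is only contacted when the payload sets "submit": true.
    """
    if isinstance(event, (bytes, bytearray)):
        event = event.decode("utf-8")
    payload = json.loads(event)
    if payload["stage"] == "train":
        manifest = training_job_manifest(
            payload["name"], payload["image"], payload.get("epochs", 1))
    elif payload["stage"] == "serve":
        manifest = serving_deployment_manifest(
            payload["name"], payload["image"], payload.get("replicas", 1))
    else:
        raise ValueError(f"unknown stage: {payload['stage']}")

    if payload.get("submit"):
        # Imported lazily so the manifest builders work without the client.
        from kubernetes import client, config
        config.load_kube_config()  # assumes a kubeconfig is available
        if manifest["kind"] == "Job":
            client.BatchV1Api().create_namespaced_job(NAMESPACE, manifest)
        else:
            client.AppsV1Api().create_namespaced_deployment(NAMESPACE, manifest)
    return json.dumps(manifest)
```

Running training as a Job that ends (and is cleaned up via `ttlSecondsAfterFinished`), while only the serving Deployment stays up, is one way to address the low-utilization concern described above.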

We look forward to developers building more features on top of this solution.

More Posts by Alibaba Cloud Serverless
