
By ApsaraDB

The 2020 Double 11 Global Shopping Festival has come and gone, but the exploration of technology will never stop. Each year's Double 11 is not only a carnival for shopaholics but also a big test for personnel working with data. It is a stage for testing the technical level and innovation practices of the Alibaba Cloud Database Technology Team.

Every year, the Double 11 Global Shopping Festival is a touchstone for AnalyticDB for MySQL, a cloud-native data warehouse. This year, AnalyticDB entered more core transaction procedures in the Alibaba Digital Economy and fully supported Double 11. It also fully embraced Cloud-Native technologies, built ultimate elasticity, reduced costs, and provided technical benefits. A variety of enterprise-level features have been released to provide users with a cost-effective cloud-native data warehouse in a timely manner.

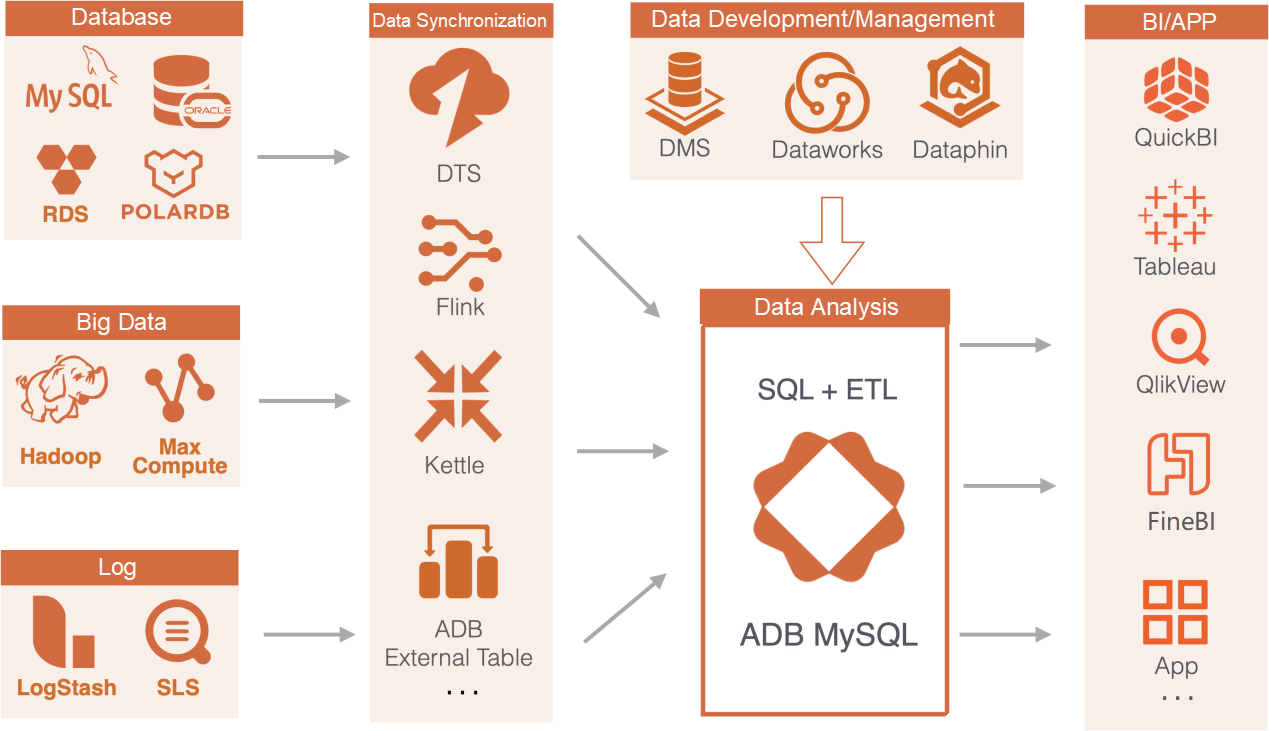

AnalyticDB is a next-generation cloud-native data warehouse that supports high-concurrency and low-latency queries. It is highly compatible with the MySQL protocol and the SQL:2003 standard. It provides instant multi-dimensional analysis and business exploration over large volumes of data, and it helps enterprises quickly build a cloud data warehouse to realize the value of their data online.

AnalyticDB can be used in all data warehouse scenarios, including report querying, online analysis, real-time data warehouses, and extract, transform, load (ETL) operations. It is compatible with MySQL and traditional data warehouse ecosystems, which keeps the barrier to adoption low.

During the 2020 Double 11 Global Shopping Festival, AnalyticDB supported most of the business units in the Alibaba Digital Economy. Cainiao, the new retail supply chain, Data Technology (DT) product series, databank, business consultants, Renqunbao, Damo Academy Xiaomi, AE data, Hema Fresh, Tmall marketing platform, and many other major businesses benefited from AnalyticDB. It performed stably in many scenarios of the core transaction procedure, such as high-concurrency online querying and complex real-time analysis. Various indicators hit new records on November 11. Peak write throughput into AnalyticDB that day reached 214 million TPS. Through the online-offline unification architecture, online ETL operations and real-time query jobs reached 174,571 per second. Offline ETL import and export tasks reached 570,267, and the volume of real-time data processed reached 7.7 trillion rows.

During the 2020 Double 11 Global Shopping Festival, AnalyticDB supported core services of enterprises, such as Jushuitan, 4PX EXPRESS, and EMS, on the public cloud. On the private cloud, it supported various services of China Post Group. AnalyticDB provided ETL for data processing, real-time online analysis, core reports, big screens, and monitoring for these enterprises. It also provided stable offline and online data services for tens of thousands of merchants and tens of millions of consumers.

To achieve amazing progress during the 2020 Double 11 Global Shopping Festival, AnalyticDB faced many challenges, mainly shown in the following aspects:

AnalyticDB officially entered Alibaba Group's core transaction procedure and served the buyer analysis library, which belongs to the Group's core transaction business. This places high demands on AnalyticDB's real-time high-concurrency write and online search capabilities. There were more than 60 billion orders during the 2020 Double 11 Global Shopping Festival. The peak of balance payments between 00:00 and 00:30 on November 1 was 5 million TPS, 100 times the usual level. The 95th percentile query response time (RT) stayed within 10 ms.
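To make the latency target concrete, here is a minimal sketch of how a 95th percentile response time can be checked against a 10 ms budget. The nearest-rank method, the function name `percentile`, and the sample latencies are illustrative assumptions, not AnalyticDB's monitoring code or measured data.

```python
import math

def percentile(samples, pct):
    """Nearest-rank percentile: smallest sample with at least pct% of samples <= it."""
    if not samples:
        raise ValueError("samples must not be empty")
    ordered = sorted(samples)
    rank = math.ceil(pct * len(ordered) / 100)  # 1-based rank
    return ordered[max(rank, 1) - 1]

# Hypothetical query latencies in milliseconds (made-up values).
latencies_ms = [2, 3, 3, 4, 4, 5, 5, 5, 6, 6, 6, 7, 7, 7, 8, 8, 9, 9, 10, 42]

p95 = percentile(latencies_ms, 95)
meets_target = p95 <= 10  # the 10 ms budget quoted in the text
```

A single slow outlier (42 ms here) does not break the target, because the 95th percentile ignores the slowest 5% of queries.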

The newly developed row storage engine for AnalyticDB performed excellently in its first production use. The storage engine supports high-concurrency online search and analysis at tens of millions of QPS. Key technologies include the high-concurrency query path, brand-new row storage, TopN push-down on any column, combined indexing, and intelligent index selection. A single node supports over 10,000 QPS and scales linearly. With the same resources, the performance of point query, aggregation, and TopN on a single table is 2-5 times higher than open-source Elasticsearch. The storage engine also reduces storage space by 50%, and its writing performance is 5-10 times higher. Moreover, real-time visibility and high reliability of data are guaranteed.

Over the past year, AnalyticDB has been applied to the core operation stages of Cainiao warehouses. Warehouse operators rely on its high-concurrency real-time writing, real-time query, and related data analysis capabilities for data presentation, data verification, delivery, and many other operations. Peak order volume reached 6,000 orders per second. As the data warehouse engine of Cainiao, AnalyticDB monitors the status of hundreds of millions of parcels in real time during storage, collection, transportation, and delivery, ensuring that each order is fulfilled on time and improving the user experience. During the first wave of traffic peaks on November 1, a single Cainiao warehouse instance surpassed 400,000 TPS with over 200 QPS. A single supply chain fulfillment instance reached 1.6 million TPS and 1,200 QPS.

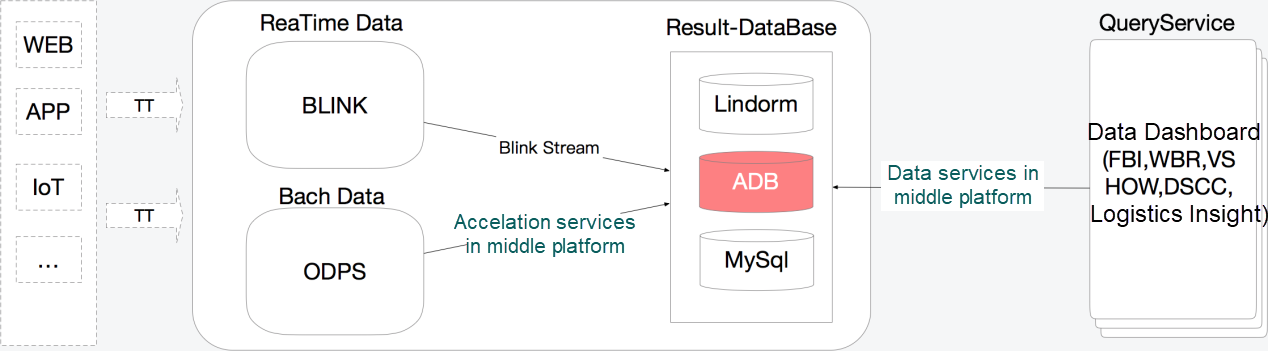

The architecture of the Cainiao data warehouse is shown below:

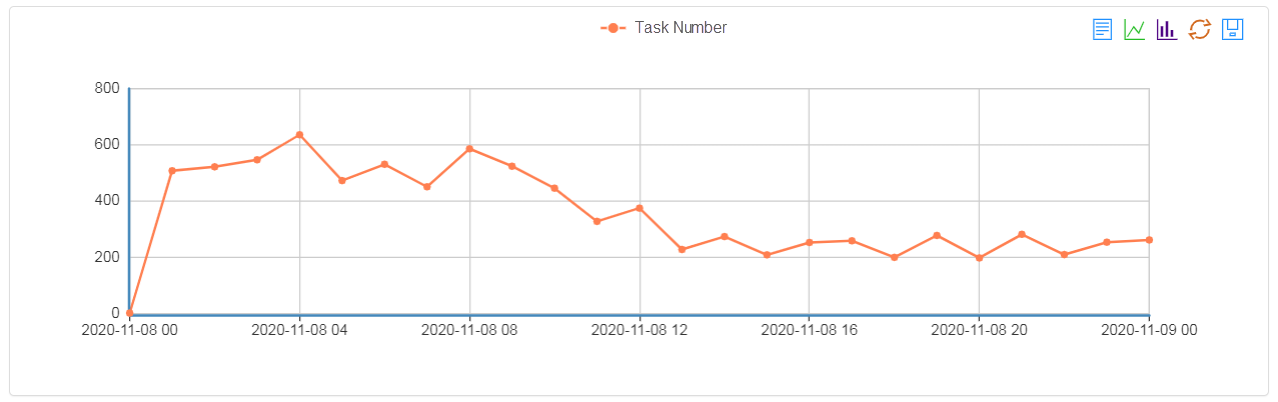

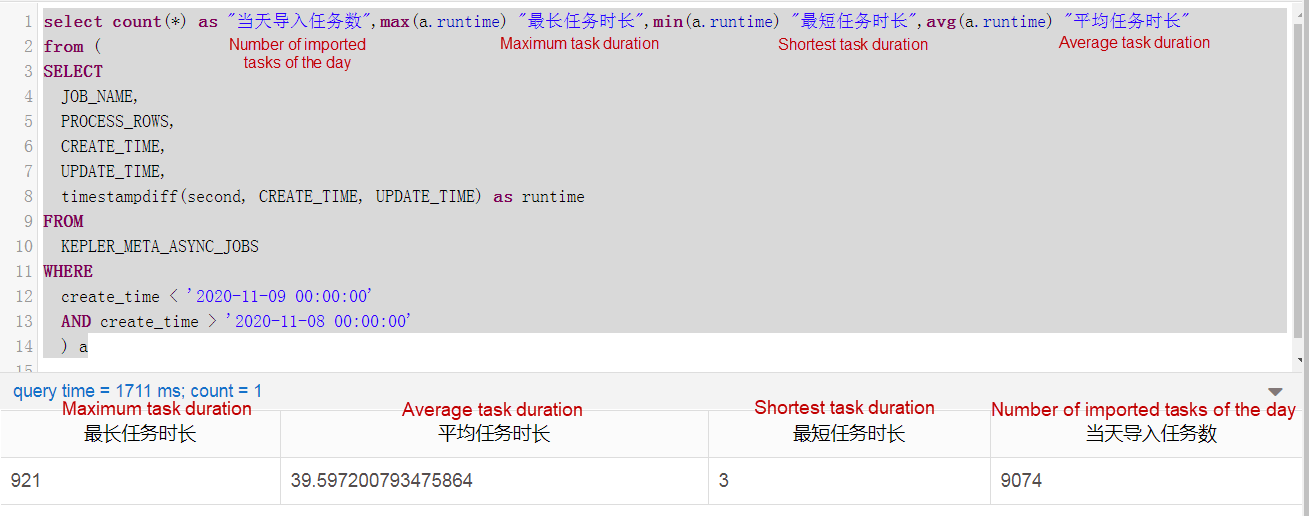

Some businesses are themselves data insight platforms (similar to DeepInsight). They run a large number of import tasks every day, and each task has a baseline requirement: it must start at a specified time and finish within a required window. Meeting these baselines with MapReduce-based import in AnalyticDB 2.0 was impractical, but it is easy in AnalyticDB 3.0, which supports lightweight, real-time task import. Take the tasks on November 8 as an example: across 9,074 tasks, the longest import took 921 seconds, the shortest took only 3 seconds, and the average was 39 seconds.

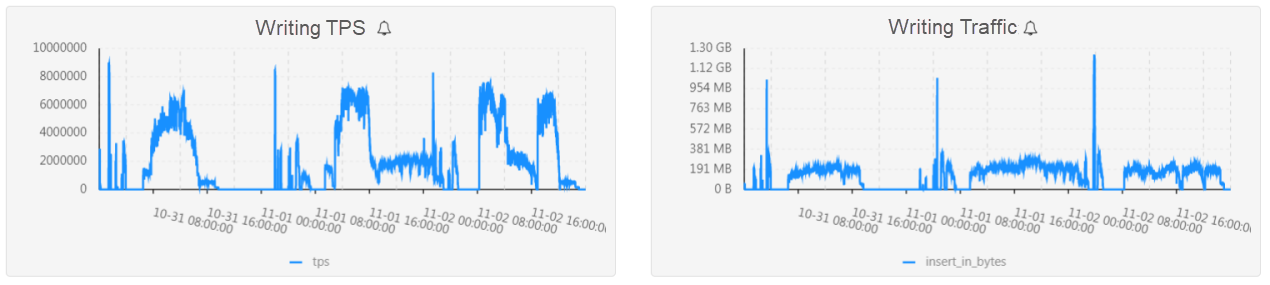

For businesses, such as databank, a large amount of data is imported each day, which is extremely challenging for the writing throughput of AnalyticDB. The AnalyticDB TPS peak for databank before and after the 2020 Double 11 Global Shopping Festival was nearly 10 million, and the writing traffic reached 1.3 GB/s. Databank uses AnalyticDB to implement crowd profiling, custom analysis, triggered computing, real-time engine, and offline acceleration. A single database stores more than 6 PB of data, using a large number of complex SQL statements, such as UNION, INTERSECT, EXCEPT, GROUP BY, COUNT, DISTINCT, and JOIN for multiple trillion-level tables.

Based on hybrid load capabilities of online analysis and offline extract-transform-load (ETL), AnalyticDB supports multiple Double 11 businesses in the AE middle platform. The merchant-side business achieved 100 QPS of crowd prediction based on detailed events and reduced complex profiling time from 10 seconds to within 3 seconds on average. Compared with the traditional way of processing merchant events into materialized tags, filtering tables through detailed events reduces the time for the new event-based crowds to go online. This also reduces the previous data development time from one week to half a day. AnalyticDB allows AE users to obtain real-time crowd clustering and upgrades original 20-minute offline clustering to the minute-level online clustering. In addition, the rights and interests subcontracting algorithm is implemented in a firm real-time manner. The offline 20-minute packet subcontracting has been updated to online minute-level packet subcontracting. Subsequently, the online algorithms can be achieved in AE and Lazada's online crowd scale-in and fine sorting based on the online AnalyticDB algorithm. This can help improve the overall efficiency of crowd and commodity operations for internationalization.

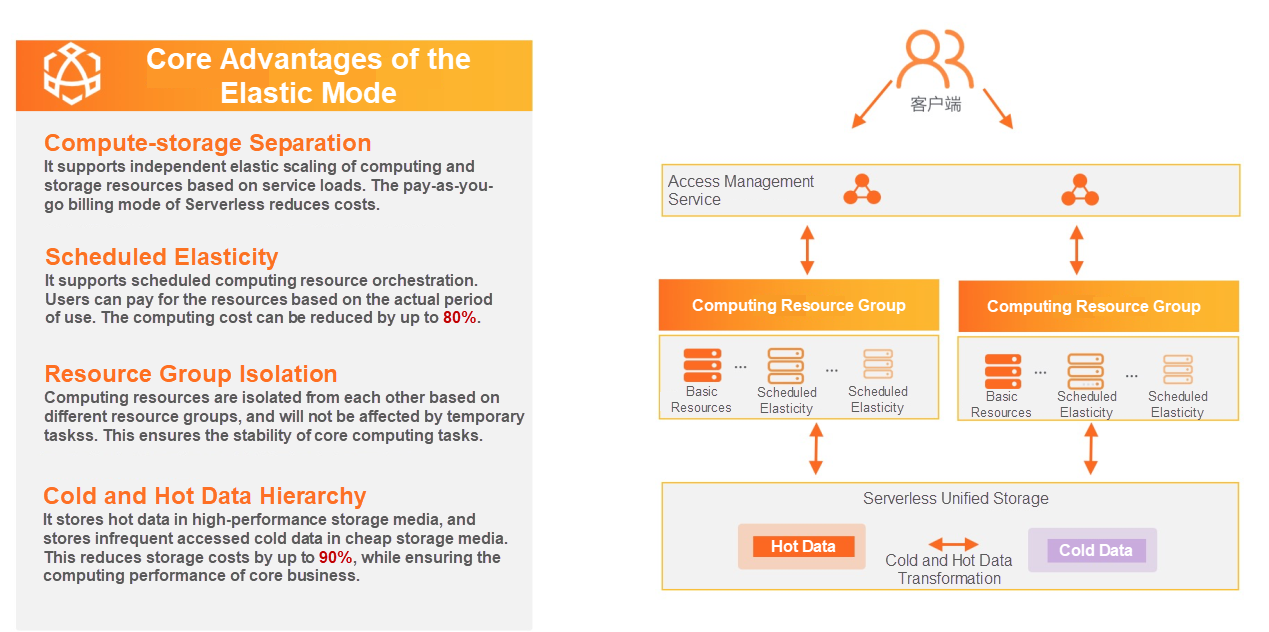

Over the past year, AnalyticDB perfectly supported the business of the Alibaba digital economy and the business of Alibaba Cloud's public and private clouds. AnalyticDB architecture fully embraced cloud-native technologies and completed major architecture upgrades. A new version with elastic mode was also released on the public cloud, giving users a cost-effective and elastic next-generation data warehouse. The key technologies of the new elastic mode are introduced below:

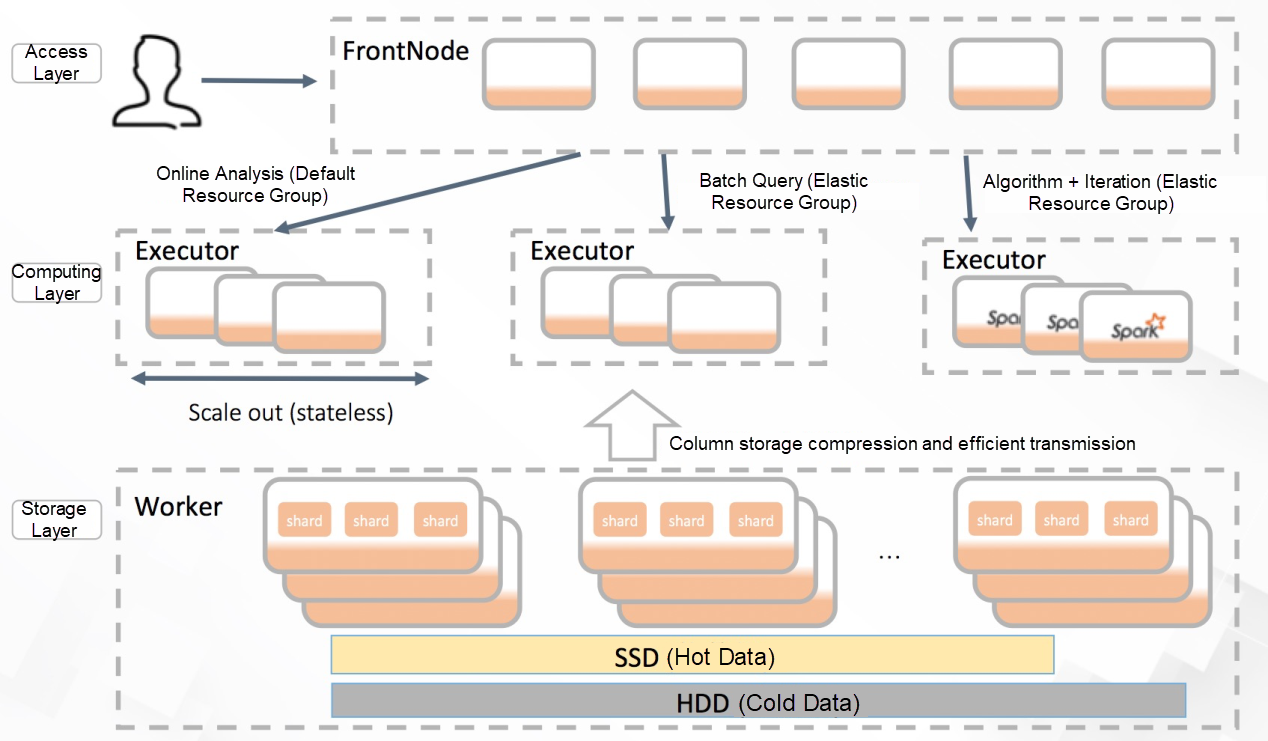

Compute-Storage Separation

Products under the AnalyticDB reserved mode are based on the Shared-Nothing architecture, which offers good scalability and concurrency. The backend couples compute and storage: both modules share the same node resources, so storage capacity and computing capability are both tied to the number of nodes. Users can adjust resources by adding or removing nodes, but they cannot size computing and storage resources independently to match different business loads, which hurts cost-effectiveness. In addition, changing the number of nodes usually involves a large amount of data migration, which takes a long time and affects the loads currently running on the system.

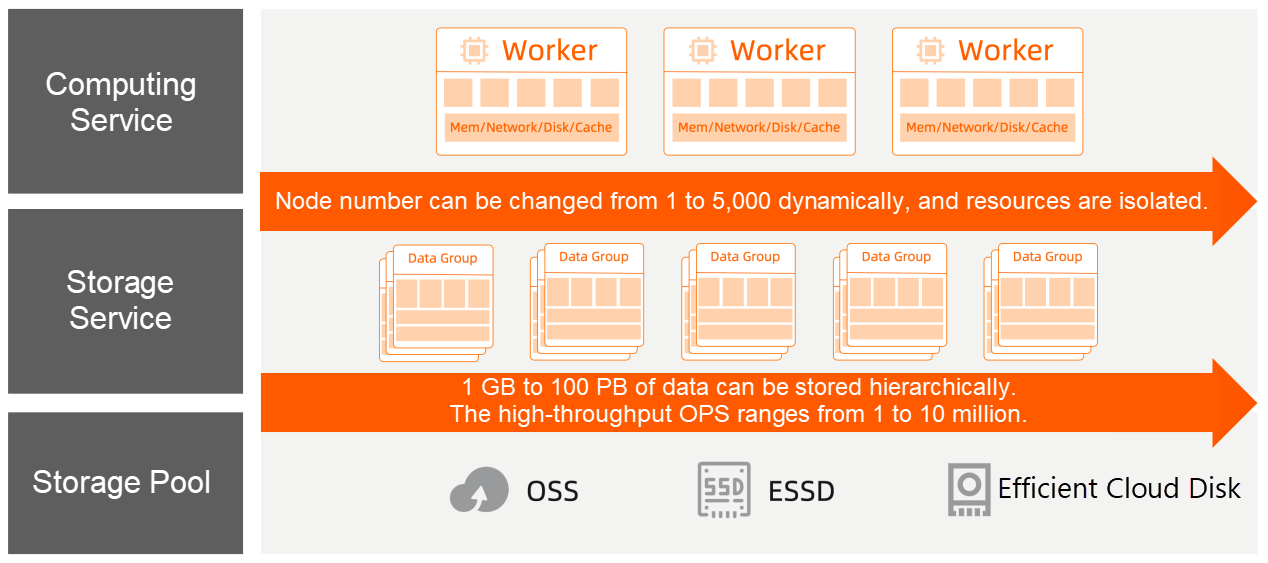

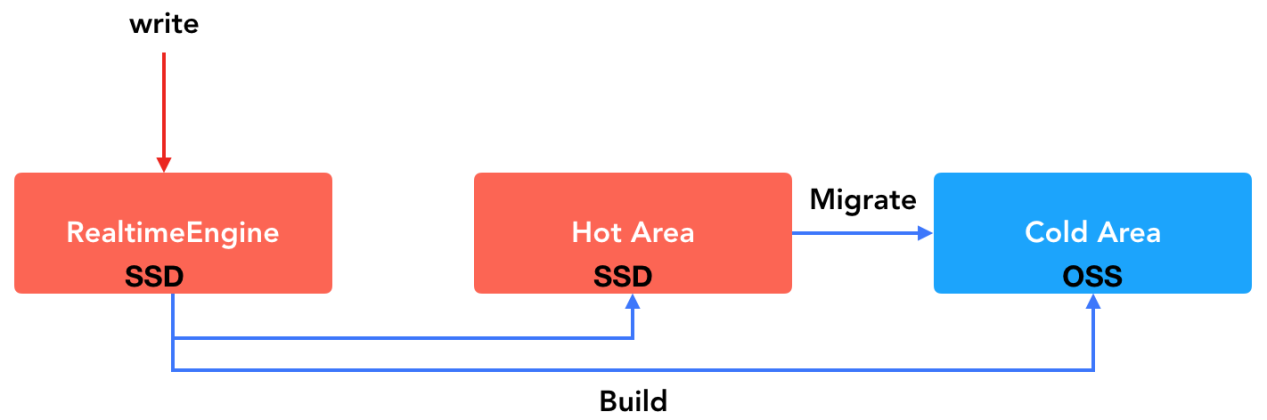

Embracing the elastic capabilities of cloud platforms, AnalyticDB introduced a new elastic mode built on a compute-storage separation architecture at the backend. AnalyticDB provides a service-oriented Serverless storage layer, and its computing layer can be scaled independently and elastically while maintaining the performance of the reserved mode. With compute decoupled from storage, users can flexibly scale computing resources and storage capacity to control total costs. Scaling computing resources requires no data migration, which gives users the ultimate elastic experience.
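The migration cost of resizing a coupled cluster can be illustrated with a small simulation. This is a sketch under a loud assumption: rows are placed on nodes by a simple modulo hash, which is a stand-in chosen for illustration and not AnalyticDB's actual distribution scheme. All function names are hypothetical.

```python
def owner(row_id, node_count):
    """Node that stores a row under modulo hash placement (an assumed scheme)."""
    return row_id % node_count

def rows_moved_when_coupled(row_ids, old_nodes, new_nodes):
    """Count rows whose owning node changes when a coupled cluster is resized."""
    return sum(1 for r in row_ids if owner(r, old_nodes) != owner(r, new_nodes))

def rows_moved_when_separated(row_ids, old_nodes, new_nodes):
    """With a shared storage layer, compute nodes hold no table data,
    so changing the number of compute nodes relocates nothing."""
    return 0

rows = range(1_000_000)
moved_coupled = rows_moved_when_coupled(rows, old_nodes=4, new_nodes=6)
moved_separated = rows_moved_when_separated(rows, old_nodes=4, new_nodes=6)
```

Under this toy placement, growing from 4 to 6 nodes relocates about two thirds of the rows in the coupled design, while the separated design relocates none.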

Hot/Cold Data Hierarchy

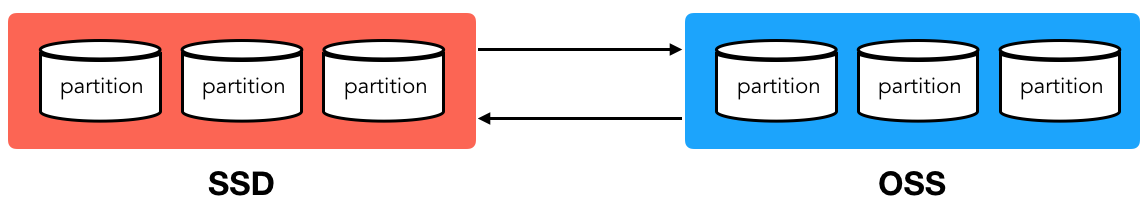

Cost-effective data storage is one of the core competitive strengths of a cloud data warehouse. AnalyticDB provides enterprise-level tiering of hot and cold data. Storage media for hot and cold data can be selected independently at the granularity of tables and of dual partitions within a table. For example, all of a table's data can be stored on SSDs or on HDDs. Users can choose storage types according to business requirements and can switch between hot and cold data policies at will. In addition, the space used by hot and cold data in the Serverless storage layer is billed in pay-as-you-go mode. In the future, AnalyticDB will implement intelligent hot and cold data partitioning, that is, automatic pre-heating of cold data based on users' business access patterns.

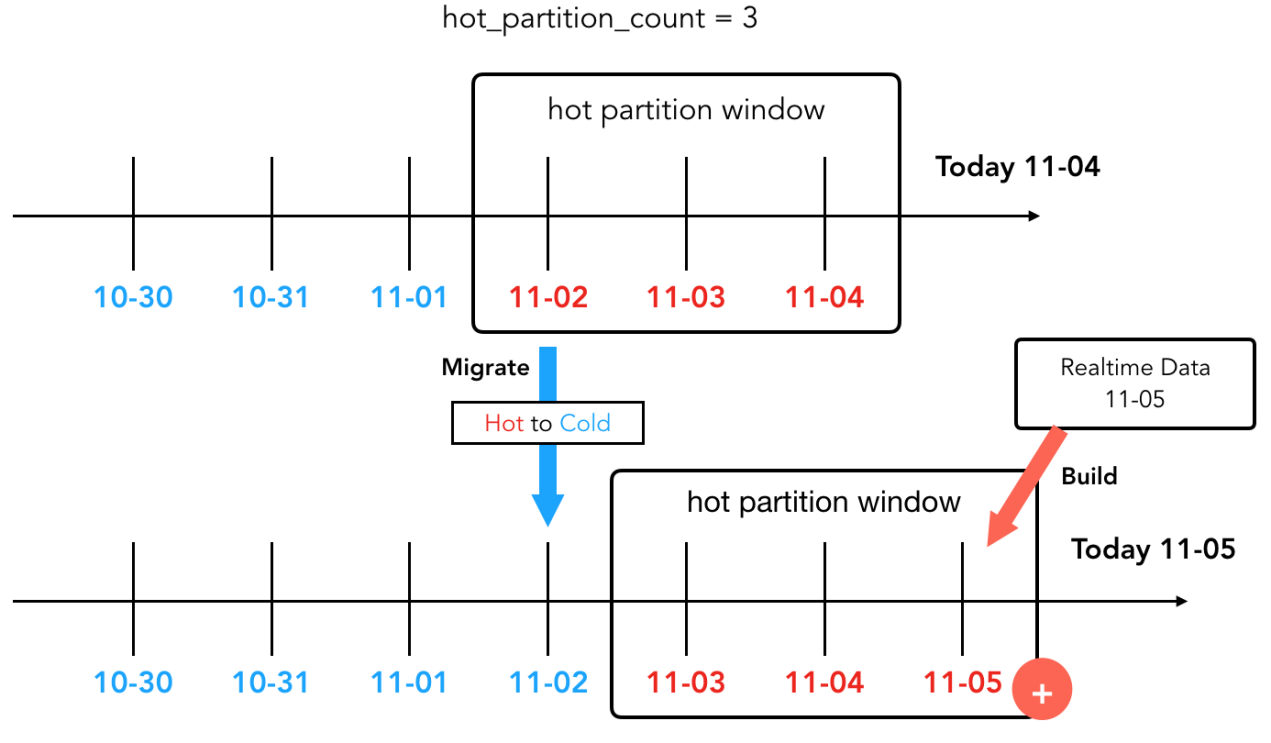

The first step of hot and cold data hierarchy is to determine the storage granularity and boundary of hot and cold data. The hot and cold data hierarchy technology of AnalyticDB uses the existing dual partition mechanism, which means that partitions are the basic units of hot and cold data storage. Hot partitions are stored on node SSDs for the best performance, while cold partitions are stored on OSS for the lowest storage cost.

A full hot-data table (all partitions in the SSD), a full cold-data table (all partitions in the OSS), and a hybrid table (some partitions in the SSD and some in the OSS) can be flexibly defined by users. Thus, the balance between performance and cost can be realized. The following is an example of a game log table. With the hybrid partitioning policy, the data of the latest seven days is stored in hot partitions, and the data before that is stored in cold partitions.

    create table event(
      id bigint auto_increment,
      dt datetime,
      event varchar,
      goods varchar,
      package int
      ...
    ) distribute by hash(id)
    partition by value(date_format(dt, '%Y%m%d')) lifecycle 365
    storage_policy = 'MIXED' hot_partition_count = 7;

When data is written to AnalyticDB, it first enters the hot area, that is, the SSDs. When hot data has accumulated to a certain extent or users have specified a cold table policy, the backend build task is automatically scheduled and migrates the data to the cold area. The migration is completely transparent to users' writes and queries.

The design follows the concept of write-optimized and read-optimized storage. AnalyticDB uses the adaptive full index by default to support efficient combined analysis in any dimension. Each column has a column-level index, which ensures that AnalyticDB can run queries out of the box. However, this also challenges write performance. Therefore, AnalyticDB uses an LSM-like architecture and divides the storage into two parts: real-time and historical partitions. The real-time partition uses block-level rough indexes of row storage and hybrid storage, and the historical partition uses the full index to ensure extremely fast querying. In addition, AnalyticDB transforms real-time partitions into historical partitions through the backend data build task. The automatic transformation mechanism of hot and cold partitions is listed below:

The cold area reduces storage costs but increases data access expenses. Although AnalyticDB has implemented optimizations, such as partition cutting and computing push-down, it still requires random scanning and throughput scanning for historical partitions. To accelerate the query performance for cold partitions, AnalyticDB takes a part of the SSD storage space in storage nodes as Cache. Using this SSD Cache, AnalyticDB has made the following optimizations:

1. Full Hot-Data Table: It applies when all of the table's data is frequently accessed and high access performance is required. The Data Definition Language (DDL) statement for this table is listed below:

    create table t1(
      id int,
      dt datetime
    ) distribute by hash(id)
    partition by value(date_format(dt, '%Y%m'))
    lifecycle 12
    storage_policy = 'HOT';

2. Full Cold-Data Table: It applies when all of the table's data is infrequently accessed and high access performance is not required. The DDL statement for this table is listed below:

    create table t2(
      id int,
      dt datetime
    ) distribute by hash(id)
    partition by value(date_format(dt, '%Y%m'))
    lifecycle 12
    storage_policy = 'COLD';

3. Hybrid Table: It applies when hot and cold data are separated by age. For example, the data of the last month is frequently accessed and requires high access performance, while the data of earlier months is cold data with infrequent access. The DDL statement for this table is listed below:

    create table t3(
      id int,
      dt datetime
    ) distribute by hash(id)
    partition by value(date_format(dt, '%Y%m'))
    lifecycle 12
    storage_policy = 'MIXED' hot_partition_count=1;

Dual partitions are created for the entire table by month, and the table keeps 12 months of data in total. Data of the last month is stored in the SSDs, and data before that is stored in HDDs. By doing so, a good balance between performance and cost is achieved.
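The effect of the three storage policies on partition placement can be sketched as follows. This is a simplified model, not AnalyticDB's implementation: it assumes partition values sort chronologically, that `lifecycle` keeps only the newest N partitions, and that under `MIXED` the newest `hot_partition_count` partitions stay hot. The function name `place_partitions` is hypothetical.

```python
def place_partitions(partitions, storage_policy, lifecycle, hot_partition_count=0):
    """Return (hot, cold, expired) lists of partition values.

    Assumptions: values such as '202011' sort chronologically, and only the
    newest `lifecycle` partitions are retained.
    """
    ordered = sorted(partitions, reverse=True)  # newest first
    kept, expired = ordered[:lifecycle], ordered[lifecycle:]
    if storage_policy == "HOT":
        return kept, [], expired
    if storage_policy == "COLD":
        return [], kept, expired
    if storage_policy == "MIXED":
        return kept[:hot_partition_count], kept[hot_partition_count:], expired
    raise ValueError(f"unknown storage_policy: {storage_policy!r}")

# Thirteen monthly partitions: December 2019 through December 2020.
months = ["201912"] + [f"2020{m:02d}" for m in range(1, 13)]

# Mirrors table t3: lifecycle 12, MIXED policy, one hot partition.
hot, cold, expired = place_partitions(months, "MIXED", lifecycle=12, hot_partition_count=1)
```

With these inputs, only `202012` is hot, the eleven earlier months of 2020 are cold, and `201912` falls outside the lifecycle.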

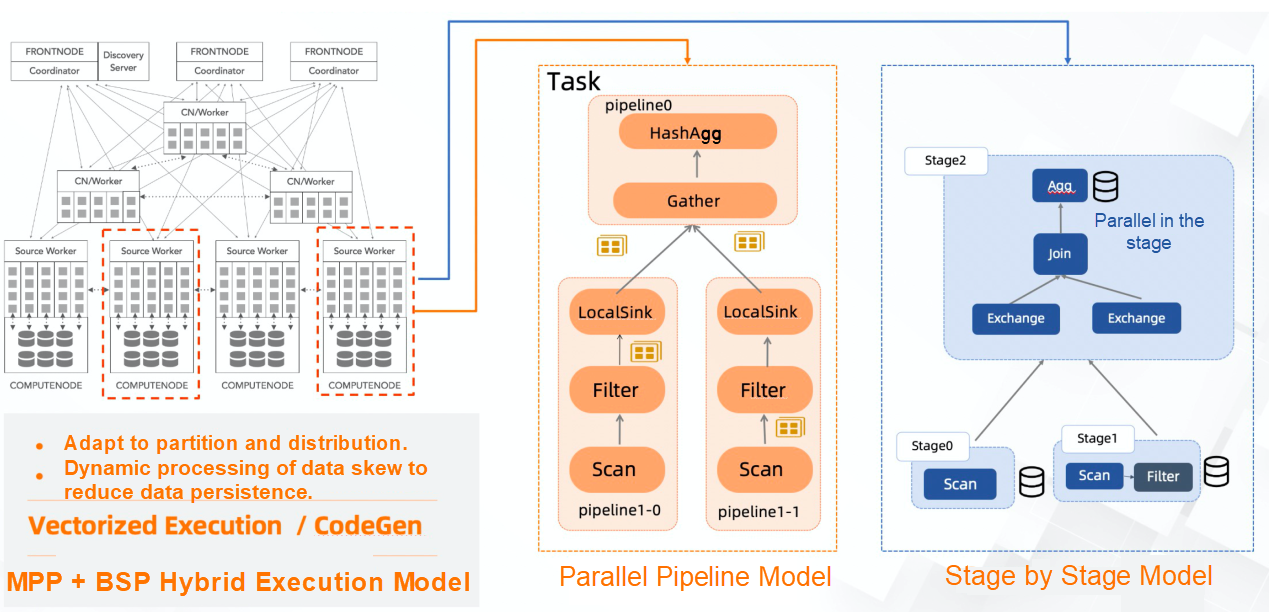

AnalyticDB uses a single set of engines to support both low-latency online analysis and high-throughput complex ETL.

With the compute-storage separation architecture, AnalyticDB has greatly released its computing capabilities and supported rich and powerful hybrid computing load capabilities. In addition to online and interactive query mode, it also supports offline and batch query mode. Meanwhile, open source computing engines (such as Spark) can be integrated to support iterative computing, machine learning, and other complex computing scenarios.

The online query mode is based on the MPP architecture. In this architecture, the intermediate results and operator states are all-in-memory, and the computing process is fully pipelined. This mode, with low query RT, applies to low-latency and high-concurrency scenarios, such as BI reports, data analysis, and online decision-making.

The batch mode is based on the DAG execution model. The entire DAG is divided into several stages that execute stage by stage, and the intermediate results and operator states can be persisted. The batch mode supports high-throughput data computing and can run with fewer computing resources at lower computing cost. It therefore suits scenarios that involve large amounts of data or limited computing resources, such as ETL and data warehousing.

AnalyticDB provides an open and extensible computing architecture. It also provides users with complex computing capabilities by integrating and staying compatible with open-source computing engines (currently Spark). Users can write more complex computing logic based on Spark's programming interfaces, such as DataFrame, SparkSQL, RDD, and DStream. This logic can then be applied to more intelligent and real-time data application scenarios in business, such as iterative computing and machine learning.

In the new elastic mode of AnalyticDB, the resource group function elastically divides computing resources. Computing resources in different resource groups are physically isolated. After AnalyticDB accounts are bound to resource groups, SQL queries are automatically routed to the corresponding resource group for execution based on the binding relationships. Users can also set a different query execution mode for each resource group to meet the needs of multiple tenants and hybrid loads within an instance.
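The difference between the two execution modes can be illustrated with a toy query of three operators. This is only a conceptual sketch: Python generators stand in for pipelined operators, and a dictionary stands in for persisted intermediate results. None of the names come from AnalyticDB.

```python
def scan(rows):
    for row in rows:
        yield row

def filter_positive(rows):
    for row in rows:
        if row > 0:
            yield row

def double(rows):
    for row in rows:
        yield row * 2

def run_interactive(rows):
    """Pipelined: operators are chained generators, so each row flows through
    all operators without any intermediate result being stored."""
    return list(double(filter_positive(scan(rows))))

def run_batch(rows, spill):
    """Staged: each stage finishes and persists its output before the next starts."""
    spill["stage1"] = list(filter_positive(scan(rows)))  # materialized intermediate result
    spill["stage2"] = list(double(spill["stage1"]))
    return spill["stage2"]

data = [3, -1, 4, -5, 9]
spill = {}  # stands in for persistent storage of stage outputs
interactive_result = run_interactive(data)
batch_result = run_batch(data, spill)
```

Both modes return the same rows. The staged version trades latency for the ability to keep only one stage's working set in memory and to restart from a persisted stage.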

Resource Group-Related Commands

    -- Create a resource group.
    CREATE RESOURCE GROUP group_name
      [QUERY_TYPE = {interactive, batch}] -- Specify the execution mode of the resource group query.
      [NODE_NUM = N] -- Number of resource group nodes.

    -- Bind a resource group.
    ALTER RESOURCE GROUP BATCH_RG ADD_USER = batch_user

    -- Resize a resource group.
    ALTER RESOURCE GROUP BATCH_RG NODE_NUM = 10

    -- Delete a resource group.
    DROP RESOURCE GROUP BATCH_RG

Resource groups support the following query execution modes:

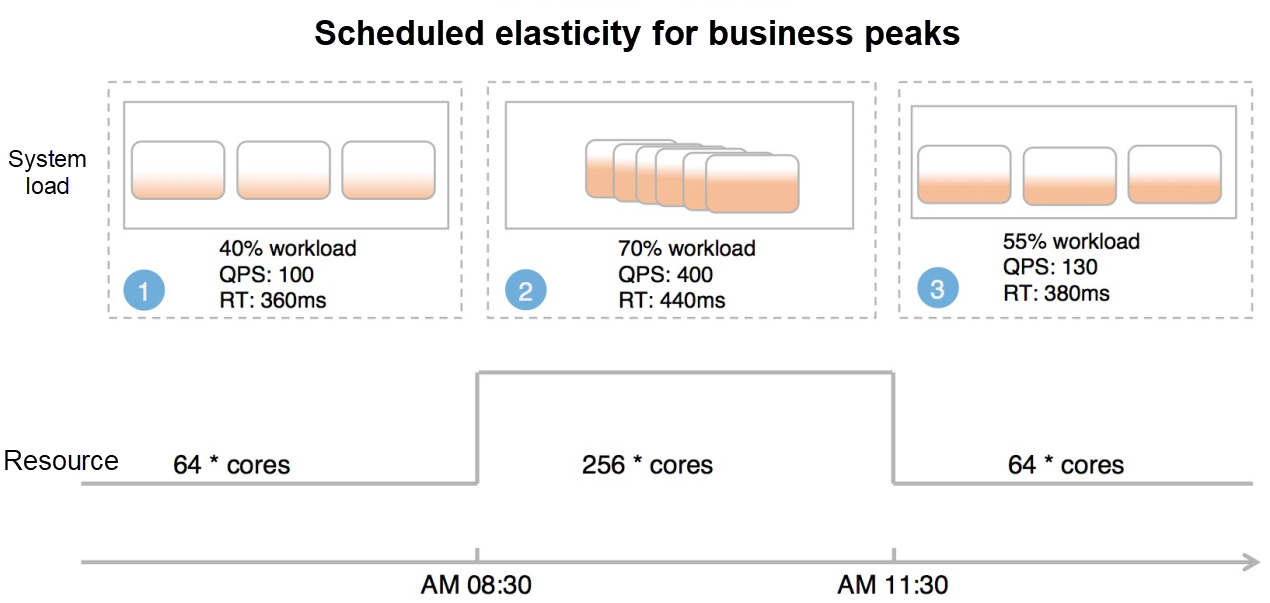
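The routing of queries by account binding can be sketched with a small in-memory model. The classes below are hypothetical and only mirror the create, bind, and route steps described above. The default group name `DEFAULT_RG`, the one-group-per-account rule, and the fallback behavior are assumptions made for this example.

```python
from dataclasses import dataclass, field

@dataclass
class ResourceGroup:
    name: str
    query_type: str  # "interactive" or "batch"
    node_num: int
    users: set = field(default_factory=set)

class Router:
    """Toy router that maps an account to its bound resource group."""

    def __init__(self, default_group):
        self.default = default_group
        self.groups = {default_group.name: default_group}

    def create(self, group):
        self.groups[group.name] = group

    def add_user(self, group_name, user):
        # Assumption: an account is bound to at most one group at a time.
        for group in self.groups.values():
            group.users.discard(user)
        self.groups[group_name].users.add(user)

    def route(self, user):
        for group in self.groups.values():
            if user in group.users:
                return group
        return self.default  # unbound accounts fall back to the default group

router = Router(ResourceGroup("DEFAULT_RG", "interactive", node_num=4))
router.create(ResourceGroup("BATCH_RG", "batch", node_num=10))
router.add_user("BATCH_RG", "batch_user")
```

Here queries from `batch_user` run in `BATCH_RG` in batch mode, while any other account runs in the default interactive group.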

Business traffic generally has significant peaks and valleys, and resources are underused during off-peak periods. AnalyticDB scheduled elasticity allows users to define elastic plans that run on a daily or weekly schedule, so that resource groups are automatically scaled out before peak hours. Scheduled elastic plans meet the requirements of business traffic during peak hours while reducing AnalyticDB usage costs. Combined with the resource group function, users can even scale a resource group down to zero nodes during off-peak periods, which is very cost-effective.
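The cost effect of a daily elastic plan can be estimated with a few lines of arithmetic. The sketch below is illustrative only: the `ScalePlan` structure, the hours, and the node counts are made-up assumptions, and node-hours are used as a rough proxy for cost.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class ScalePlan:
    start_hour: int  # inclusive, 0-23
    end_hour: int    # exclusive, 1-24
    node_num: int

def nodes_at(hour, plans, baseline=0):
    """Node count of a resource group at a given hour of the day."""
    active = [p.node_num for p in plans if p.start_hour <= hour < p.end_hour]
    return max(active, default=baseline)

def node_hours(plans, baseline=0):
    """Total node-hours consumed over one day."""
    return sum(nodes_at(h, plans, baseline) for h in range(24))

# Hypothetical daily plan: scale out for a morning and an evening peak,
# and fall back to zero nodes otherwise.
plans = [
    ScalePlan(start_hour=9, end_hour=12, node_num=8),
    ScalePlan(start_hour=20, end_hour=23, node_num=16),
]

elastic_cost = node_hours(plans)  # 3 * 8 + 3 * 16 node-hours
always_on_cost = 16 * 24          # peak-sized group running all day
```

In this made-up schedule the elastic plan uses 72 node-hours per day, compared with 384 for a group that stays at peak size around the clock.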

Intelligent Optimization

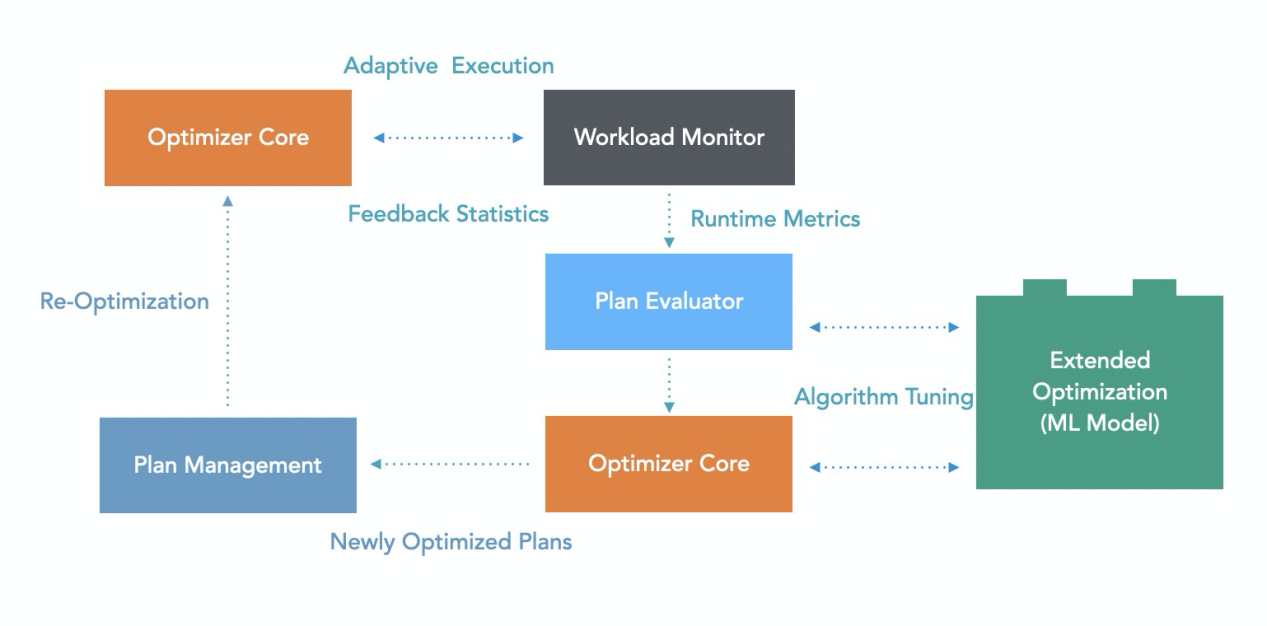

Query optimization is a key factor that affects the performance of a data warehouse system. Questions such as how to generate better execution plans, how to partition data, how to configure indexes, and when to update statistics often trouble data developers. AnalyticDB has invested heavily in intelligent optimization technology. By monitoring running queries in real time, AnalyticDB dynamically adjusts the execution plan and the statistics it depends on and automatically improves query performance. The built-in intelligent algorithms dynamically adjust engine parameters according to the real-time operating status of the system so that they fit the current query load.

Intelligent adjustment is a continuous monitoring and analysis process. It constantly analyzes the characteristics of the current workload to identify potential optimizations and make adjustments. It also decides whether to roll back or further analyze according to the performance benefits after adjustments.
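The adjust, measure, and roll back cycle can be sketched as a simple loop. This is a generic illustration, not AnalyticDB's algorithm: the parameter names, the 5% gain threshold, and the synthetic latency model are all assumptions.

```python
def tune(params, candidates, measure, min_gain=0.05):
    """Try each candidate change and keep it only if latency improves by min_gain."""
    best = measure(params)
    history = []
    for name, value in candidates:
        trial = {**params, name: value}
        latency = measure(trial)
        if latency <= best * (1 - min_gain):
            params, best = trial, latency  # keep the adjustment
            history.append((name, value, "kept"))
        else:
            history.append((name, value, "rolled back"))  # params stay unchanged
    return params, best, history

def fake_latency_ms(p):
    """Synthetic workload model used only for this example."""
    return 100 / p["parallelism"] + 2 * p["batch_size"] / 100

start = {"parallelism": 2, "batch_size": 100}
candidates = [("parallelism", 4), ("batch_size", 500), ("parallelism", 8)]
final_params, final_latency, history = tune(start, candidates, fake_latency_ms)
```

In this run the two parallelism increases are kept because each lowers the measured latency, while the larger batch size is rolled back because it makes latency worse.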

Query plans often suffer performance regressions due to factors such as inaccurate statistics and cost models. AnalyticDB makes full use of execution information collected during and after query runs to adjust execution plans both on the fly and after the fact. By performing machine learning on historical execution plans and the corresponding metrics, AnalyticDB adjusts the cost estimation algorithms for execution plans so that the plans better fit the current data characteristics and workloads. With continuous learning and adjustment, optimization becomes automatic, making execution plans easier for users to work with.
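The idea of feeding execution results back into cost estimation can be shown with a deliberately simple stand-in. Instead of a machine learning model, the sketch below learns one correction ratio per operator type from historical estimated and actual row counts. The function names and the sample history are hypothetical.

```python
from collections import defaultdict

def learn_corrections(history):
    """history: iterable of (operator, estimated_rows, actual_rows) tuples.

    Returns a per-operator ratio of total actual rows to total estimated rows.
    """
    estimated_total = defaultdict(float)
    actual_total = defaultdict(float)
    for operator, estimated, actual in history:
        estimated_total[operator] += estimated
        actual_total[operator] += actual
    return {
        op: actual_total[op] / estimated_total[op]
        for op in estimated_total
        if estimated_total[op] > 0
    }

def corrected_estimate(operator, estimated_rows, corrections):
    """Scale a new estimate by the learned ratio; unknown operators are unchanged."""
    return estimated_rows * corrections.get(operator, 1.0)

# Made-up feedback: join cardinalities were underestimated by 4x.
history = [("join", 1000, 4000), ("join", 500, 2000), ("scan", 10000, 10000)]
corrections = learn_corrections(history)
new_join_estimate = corrected_estimate("join", 200, corrections)
```

A real optimizer would condition on much richer features than the operator type, but the feedback loop has the same shape: compare estimates with observed results, then bias future estimates accordingly.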

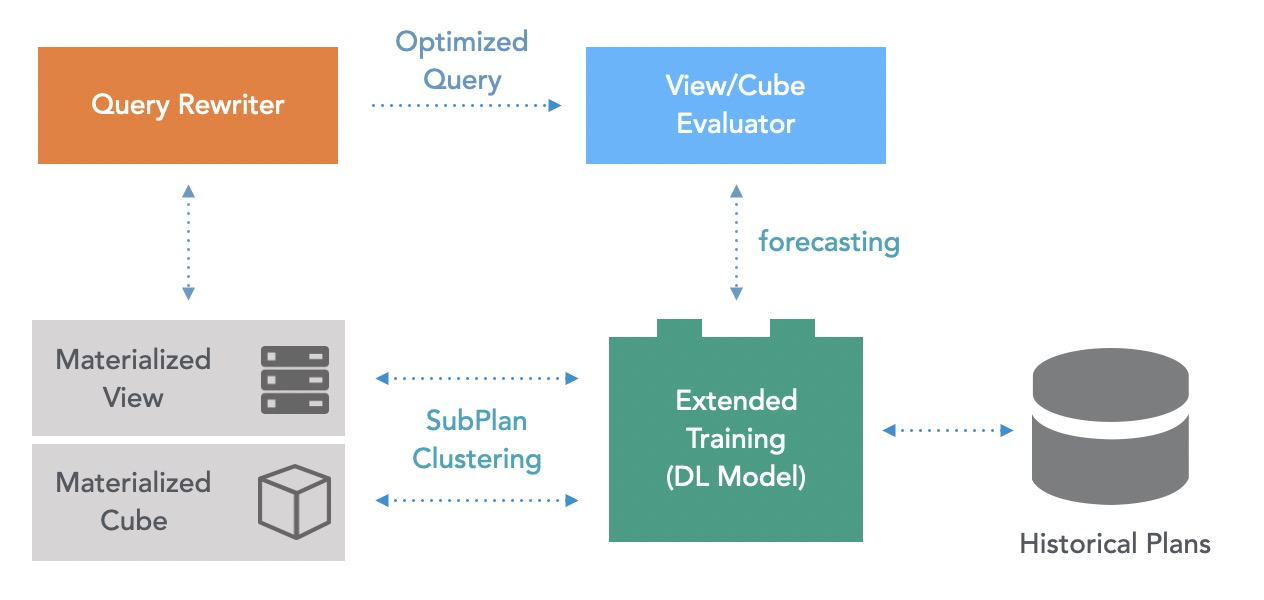

The materialized view is one of the core features in the data warehouse field. It helps users accelerate analysis and simplify the data ETL process, and it covers a wide range of application scenarios. For example, users can combine materialized views with BI tools to accelerate big screen business, or cache common intermediate result sets to speed up slow queries. AnalyticDB has supported materialized views since version 3.0. It maintains them efficiently and provides automatic update mechanisms.
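The caching behavior of a materialized view can be modeled in a few lines. This toy class is an illustration of the concept only: the explicit `refresh()` call is an assumption of the sketch and does not describe AnalyticDB's refresh mechanisms, and the order data is made up.

```python
class MaterializedView:
    """Stores the result of a query function until refresh() is called."""

    def __init__(self, query, source):
        self.query = query
        self.source = source
        self.refresh()

    def refresh(self):
        # Recompute and store the result so reads do not re-run the query.
        self.rows = self.query(self.source)

def sales_by_city(orders):
    totals = {}
    for city, amount in orders:
        totals[city] = totals.get(city, 0) + amount
    return totals

orders = [("Hangzhou", 30), ("Beijing", 20), ("Hangzhou", 12)]
mv = MaterializedView(sales_by_city, orders)

orders.append(("Beijing", 5))
stale = dict(mv.rows)  # still the precomputed result
mv.refresh()
fresh = dict(mv.rows)  # now includes the new order
```

Reads hit the stored result, which is why a dashboard backed by such a view stays fast. The price is that results lag behind the base table until the next refresh.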

AnalyticDB is a next-generation cloud-native data warehouse. It supports online-offline unification in one system and successfully empowered various services during the 2020 Double 11 Global Shopping Festival. AnalyticDB can withstand extreme business loads and improve the timeliness of data value exploring in business. With the business and technology evolution of platforms, AnalyticDB is continuously developing the capabilities of enterprise-level data warehouses. Recently, AnalyticDB provided the core capabilities of the new elastic mode to users. Together with ultimate elasticity and cost-effectiveness, AnalyticDB truly allows users to get what they need.
