The human body is like a machine. As people get older, their organs gradually age and health problems become more prevalent. Paying attention to self-care is one type of preventative care but, with the rise of big data, people may also be able to use real-time monitoring and alerts to nip problems in the bud before they become serious.

In the distant (and not so distant) past, if we wanted to check our health we had to go to a hospital or a professional physical examination clinic. This could be quite time consuming and troublesome. As technology develops, however, the monitoring of some health indicators has become increasingly convenient. Wearable technology for monitoring and detection wearables such as bracelets (which can record your heartbeat, temperature, activity levels, and various other indicators) have quickly gained in popularity. Based on current market trends and technological development, there is good reason to believe that there will continue to be more and more of these type of devices for conducting real-time health checks.

In recent years, professional institutions, such as hospitals or nursing homes, have seen a trend in the continued use of smart wearables for civilian health monitoring as well as health checks. This means that wearables may soon be produced with more sensors and more capabilities, including video and spatial analysis. Some common uses of these smart wearables include:

1.Monitoring a person's recorded movements, and raising an alert if the monitored person remains static for an extended period of time.

2.Raising an alert if the monitored person stays in bed for over 12 hours.

3.Raising an alert if the monitored person kneels, stoops, or falls over and remains at a height less than 40 cm for a period greater than 5 minutes (no alert given if there are people around). This may indicate the presence of hypertension in patients.

4.Raising an alert if a person or persons stays in a small, confined space (such as a restroom stall) for over 30 minutes.

Multiple sensors are provided for collecting data about the above situations.

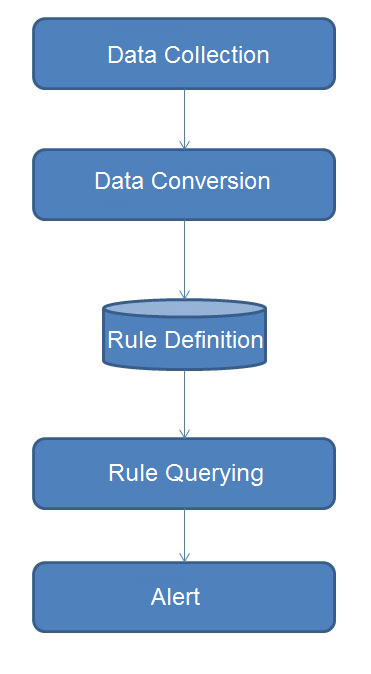

Data traffic is divided into 5 parts:

1.Data collection (from sensors)

2.Data conversion (formatting, stateful conversion)

Optional step, data conversion is aimed at making the identification and processing of data easier, and there are three methods available for conducting real time data conversion.

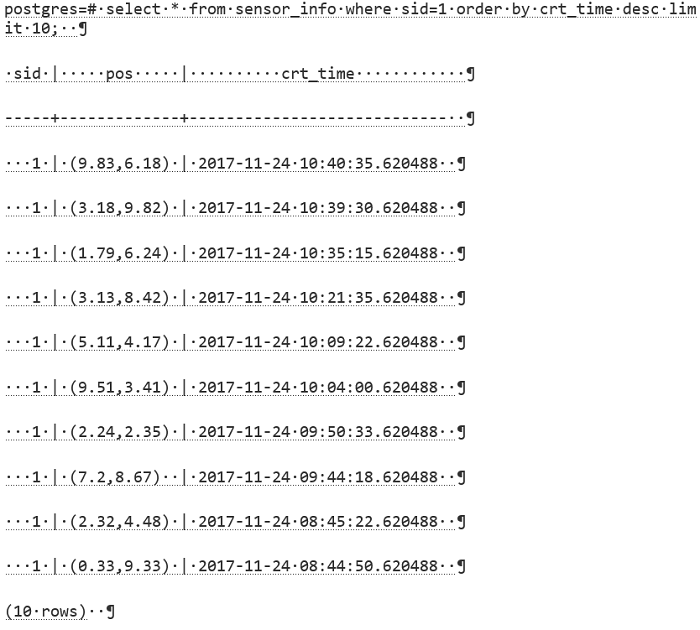

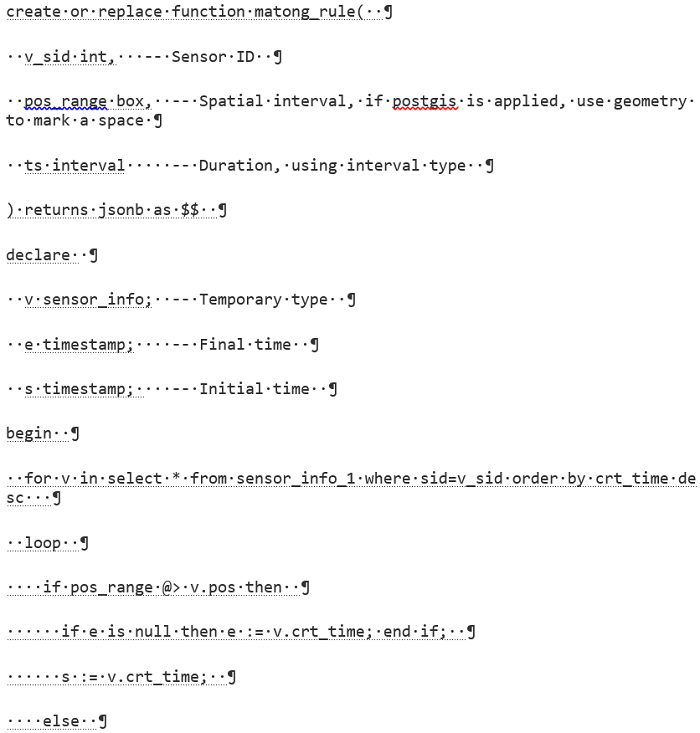

a.Define a conversion rule in a database's UDF, and execute real-time conversion according to a trigger, or when the rule is being loaded.

b.Define a conversion rule in a database's UDF, and execute conversion by means of pipeline Transforms.

c.Execute conversion in an application layer, and re-load the converted data.

3.Define rules.

Alert rules are defined, for example, as mentioned above: An alert will be given when a man stays in a small space (such as the restroom) for over 30 minutes.

In order to simplify access interfaces, the alert rules may be defined in a database's UDF and presented via a view.

4.Real-time rule query.

Inquire the defined rules and give alerts.

5.Alert.

1.A data record (one record may be comprised of multidimensional attributes such as height, location and heartbeat) may be calculated on multiple rule dimensions. For example, an alert rule regarding heart rate and an alert rule regarding location.

The most common optimization method is to reduce the amount of data scanning, and a similar optimization method is also applied to the 9.6 kernel layer.

However, this case is more complicated because different rules can result in different recording ranges. For example, an alert (probably containing thousands of records) may be given after a person stays in bed for 12 hours in the spatial dimension, but as for the height dimension, an alert is required after only 5 minutes.

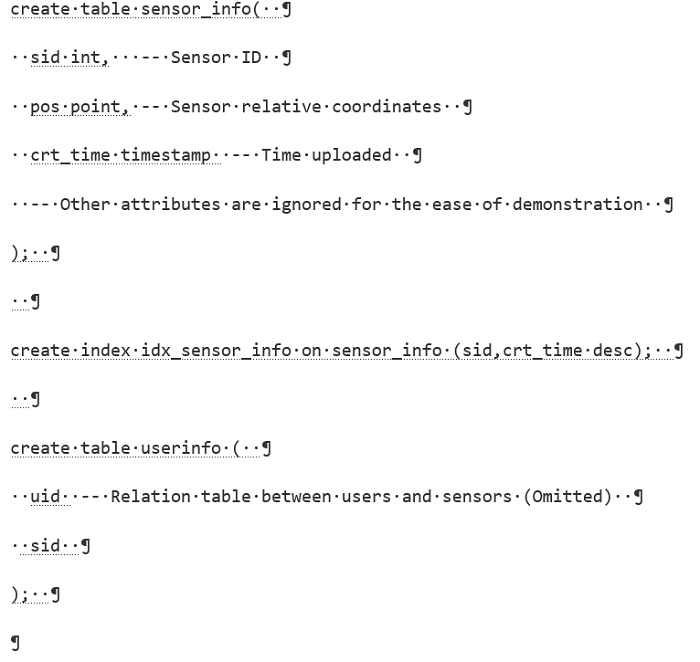

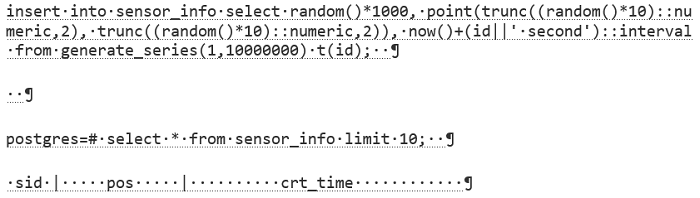

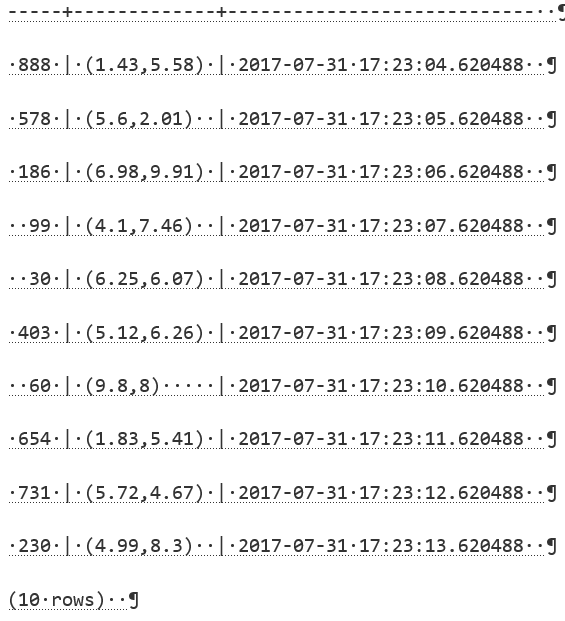

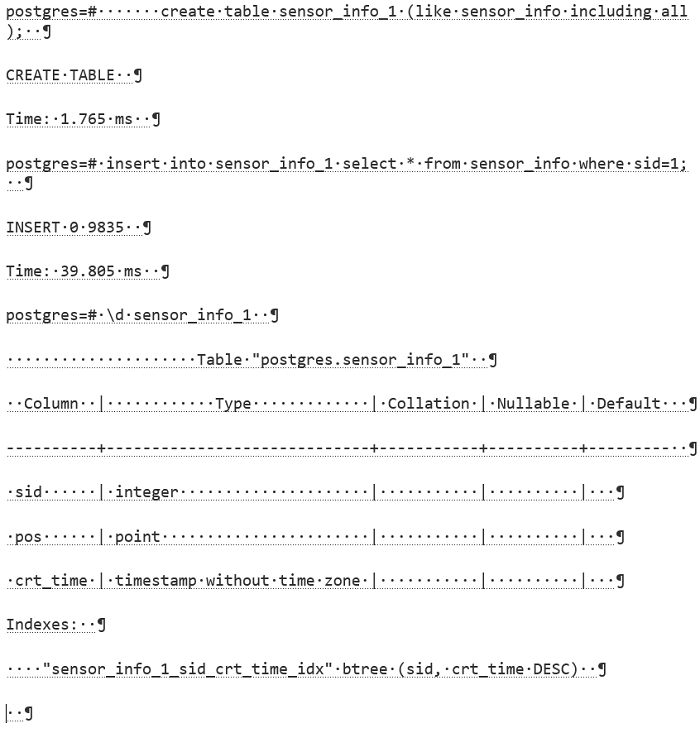

2.Data from the same sensor is stored separately in order to reduce data scanning costs. That is, each sensor has a table. For similar optimization methods, you can refer to

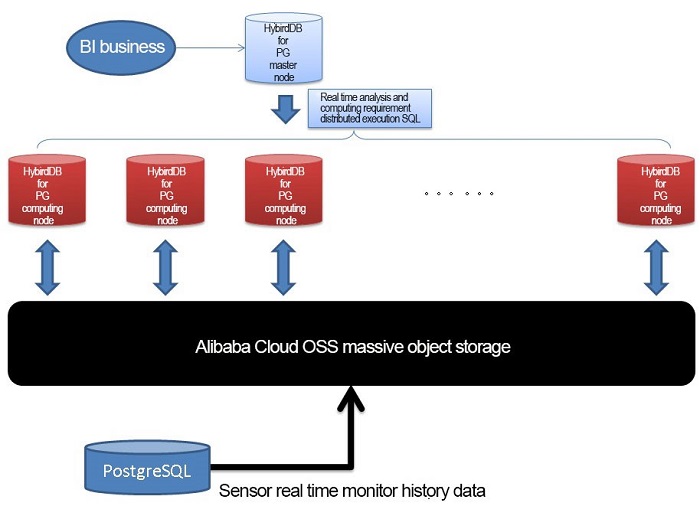

3.Because there is no need to keep all the records for the alert, a rotation method can be used to export the history data to the OSS external table. (You can make a query when you need to check details, or you can directly connect to HybridDB for PostgreSQL to conduct analysis when a huge number of analyses are needed)

PostgreSQL Data rotate Use Introduction - Cover History Data by Time

4.Use Dynamic StreamCompute rules to reduce calculated amount. For example, triggering a corresponding rule when the user enters a certain status, such as "Trigger operation of a StreamCompute rule for a restroom space after the user enters a restroom."

Taking this for our example, we'll explain how an alert is given:

An alert will be given when a man stays in a small space (such as the restroom) for over 30 minutes.

The DEMO is not comprehensive and does not take optimization factors into consideration.

An alert will be given when a person stays in a small, confined space (such as the restroom stall) for over 30 minutes.

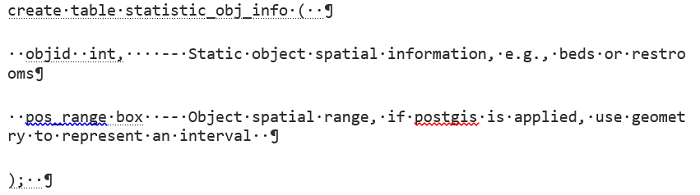

Define a coordinate interval system for each restroom stall and monitor the users. When a user enters a stall, start measuring the time spent in that particular coordinate.

For geometric operations, you can refer to

https://www.postgresql.org/docs/10/static/functions-geometry.html

or

http://postgis.net/documentation/

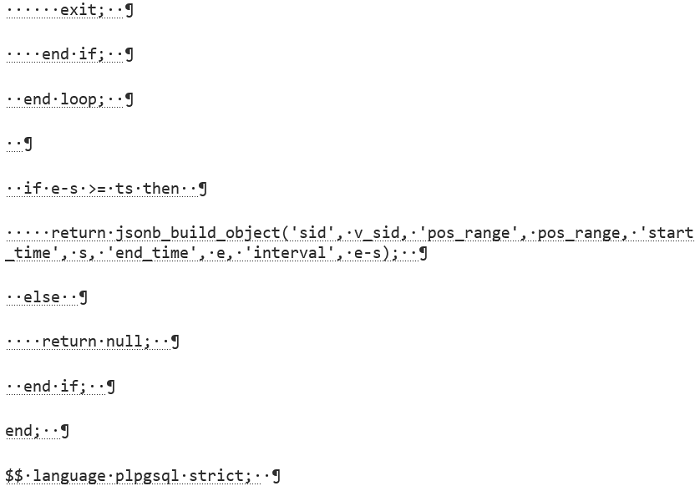

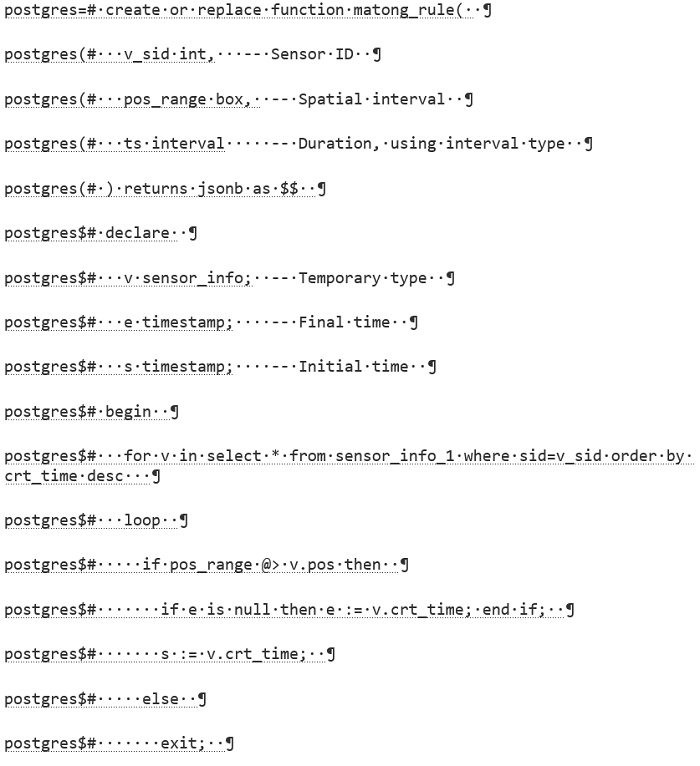

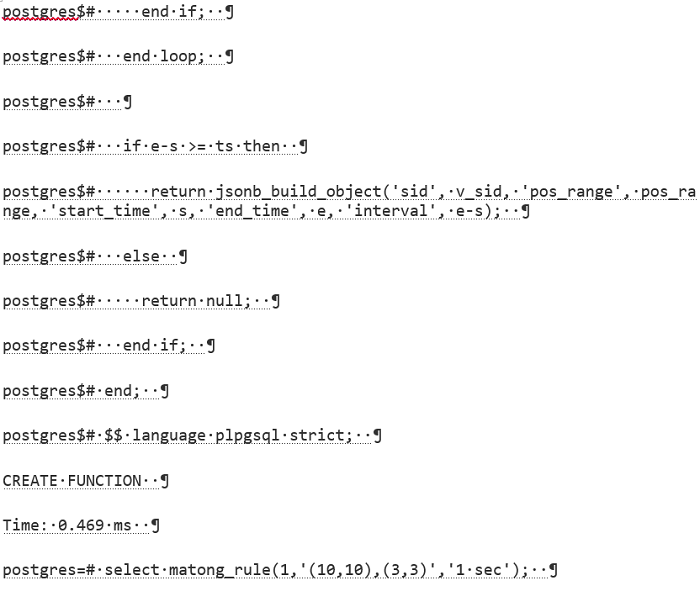

Use UDF to define rules, and output a JSON.

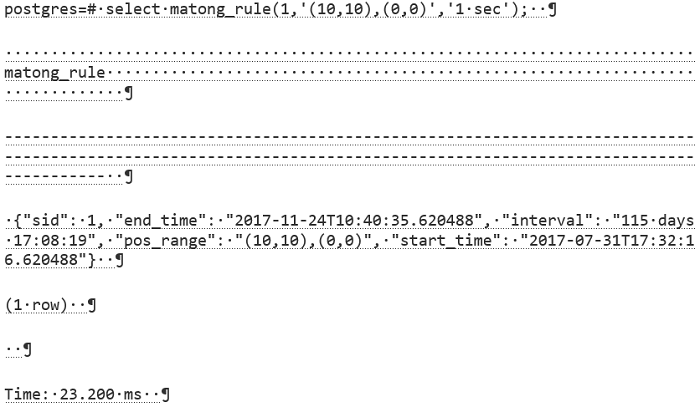

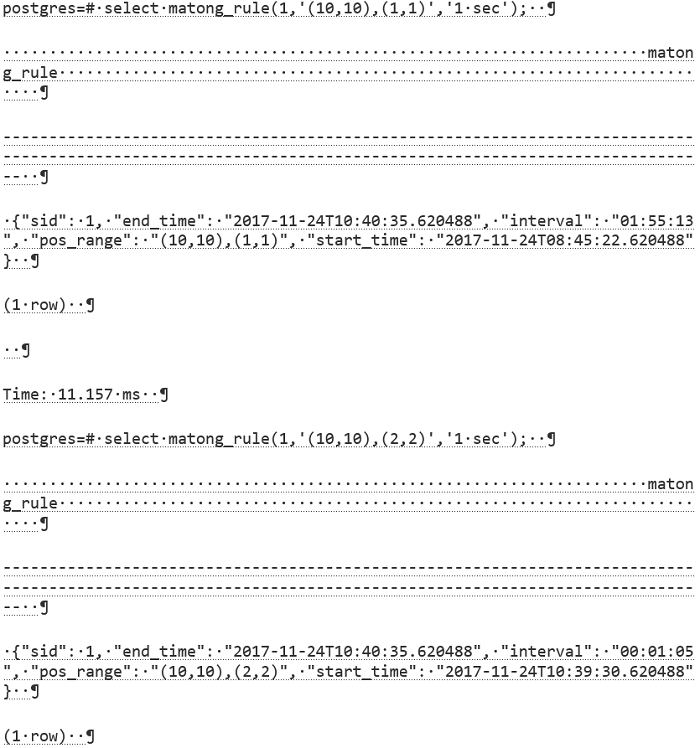

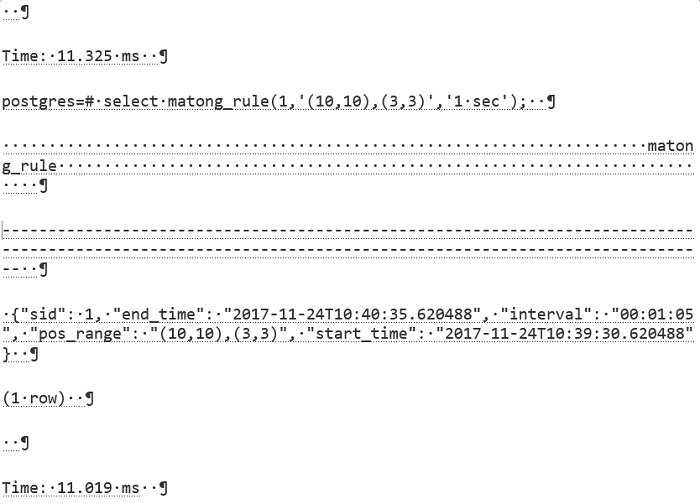

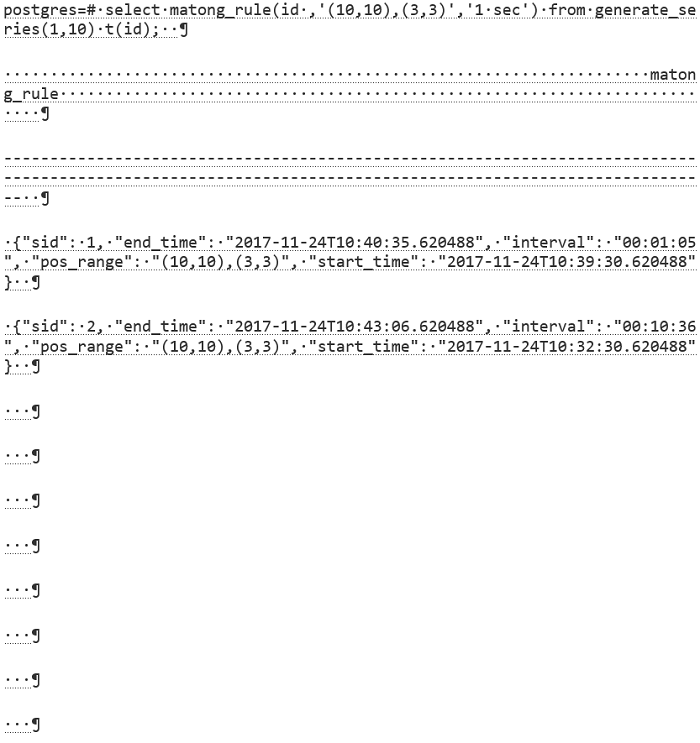

For example, 1 represents an elderly resident's sensor ID, the Box in the middle represents the interval range of a restroom, and the third parameter is duration.

When it is detected that the person enters a static object space requiring monitoring (e.g., bed or restroom), the rule above is triggered and a query is executed according to the rule.

In fact, the sensor may not upload relative coordinates, but directly upload the unique identifier of the static object (restroom, bed,...). All static objects are numbers that are provided with corresponding sensors, capable of sensing the existence of monitored patients in their space and real time reporting their monitoring data to staff or nurses.

This is an even more simple, and also more elegant practice, because even something as simple as interval judgments will not be needed. The disadvantage, however, is that the resident or patient cannot be monitored after he or she leaves the monitored location, and monitoring can only rely on wearable sensors on the person's body after this time.

Summary for the two models:

The terminal capability is weak and basically only involves data collection. All computation is completed at the server.

The terminal capability is strong and involves data collection and some data computing capabilities. Also, a layer of static terminals (e.g., beds and restrooms) is added in the building and is linked with the patients (sensors) in this space so as to report more precise judgment data.

Output statuses of all users.

For example, inquire motion statuses of sensor equipped people 1-100 in the restroom space.

If there is a record returned, give an alert.

For PostgreSQL, asynchronous messages are a very interesting feature which can be used for asynchronous alerts.

From WeChat Mini Apps to Database "Mini Apps" - God Knows What I've Been Through

[Transport] Postgres+Socket.io+nodejs Real-Time Map App Practices

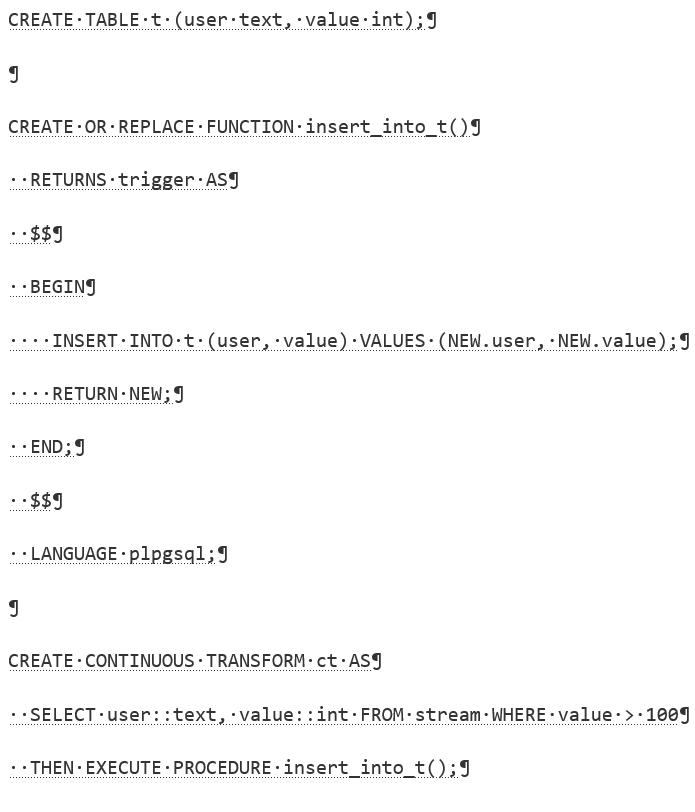

If you use pipelinedb, you may also choose the real-time alert function of transform.

http://docs.pipelinedb.com/continuous-transforms.html#built-in-transform-triggers

Track query, involving the length of time the elderly residents stay in each and every monitored place. If there are multiple records in a same place, only retain two pieces of the records (the arrival time and departure time) when drawing a dashboard.

The method is as follows:

An optimization to IO amplification is mentioned above, where data of all sensors is stored separately in order to completely eliminate the problem of IO amplification. Let's see the performance after IO is eliminated:

Compared to a previous time of 11ms, the time required for a query is decreased to 0.6ms.

As a typical case in larger health care, nursing homes involve a huge amount of spatial data and time data, and a large number of rules. A powerful database is needed to support the nursing homes, otherwise all the data must be transported to an APP layer for processing, which is not efficient.

PostgreSQL can well support applications in real-time detection, health reporting, track query and other scenarios for nursing home residents, and historical sensor data is linked to HybridDB for PostgreSQL by means of OSS, to satisfy the requirements for one-stop alert and analysis.

Pan Jinlian Changes the World - PostgreSQL News and Views Event Analysis Application

Real-Time Conversion in Data Loading - Trigger, Rule

PostgreSQL Data rotate Use Introduction - Cover History Data by Time

2,593 posts | 794 followers

Followdigoal - December 23, 2020

Alibaba Clouder - June 11, 2018

Alibaba Clouder - December 12, 2017

Neel_Shah - August 27, 2025

Data Geek - February 27, 2025

Alibaba Cloud Indonesia - January 12, 2024

2,593 posts | 794 followers

Follow Big Data Consulting for Data Technology Solution

Big Data Consulting for Data Technology Solution

Alibaba Cloud provides big data consulting services to help enterprises leverage advanced data technology.

Learn More Big Data Consulting Services for Retail Solution

Big Data Consulting Services for Retail Solution

Alibaba Cloud experts provide retailers with a lightweight and customized big data consulting service to help you assess your big data maturity and plan your big data journey.

Learn More AnalyticDB for PostgreSQL

AnalyticDB for PostgreSQL

An online MPP warehousing service based on the Greenplum Database open source program

Learn More ApsaraDB for HBase

ApsaraDB for HBase

ApsaraDB for HBase is a NoSQL database engine that is highly optimized and 100% compatible with the community edition of HBase.

Learn MoreMore Posts by Alibaba Clouder

Raja_KT March 15, 2019 at 11:54 am

PostgreSQL seems to be eating big chunk from Big data space too.