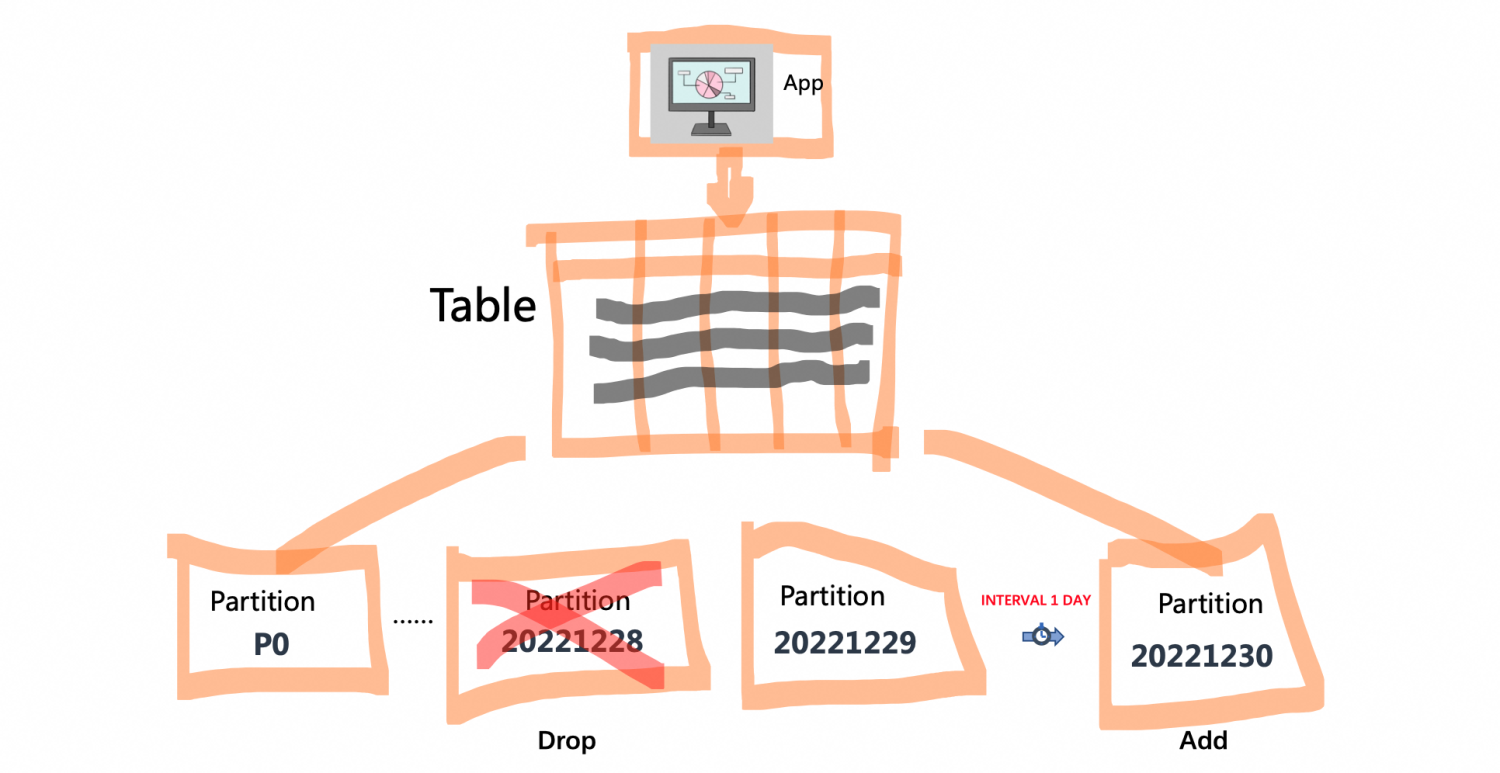

Assuming that the PolarDB MySQL is now used as a data transfer station, the data generated in the business every day will be stored in a new partition of the database, and then the data will be synchronized to the data warehouse for analysis at the same time. After the synchronization, the data in the partition can be clean up. During the O&M time(Maintenance Window) of the next day, the partition where the data of the previous day is located can be dropped to save disk space, which can achieve the purpose of reducing costs and increasing efficiency.

In this scenario, the advantage of using the PolarDB MySQL partition table is that, due to the support of the new features of partition level mdl and interval partitioning, droppping old partitions and adding new ones will not block the DML operation of the current partition.

On the PolarDB console, select an instance whose cluster version must be PolarDB MySQL engine version 8.0.2 and whose Revision version is 8.0.2.2.0 or above. If there is no suitable instance, please purchase a new instance or upgrade the instance version.

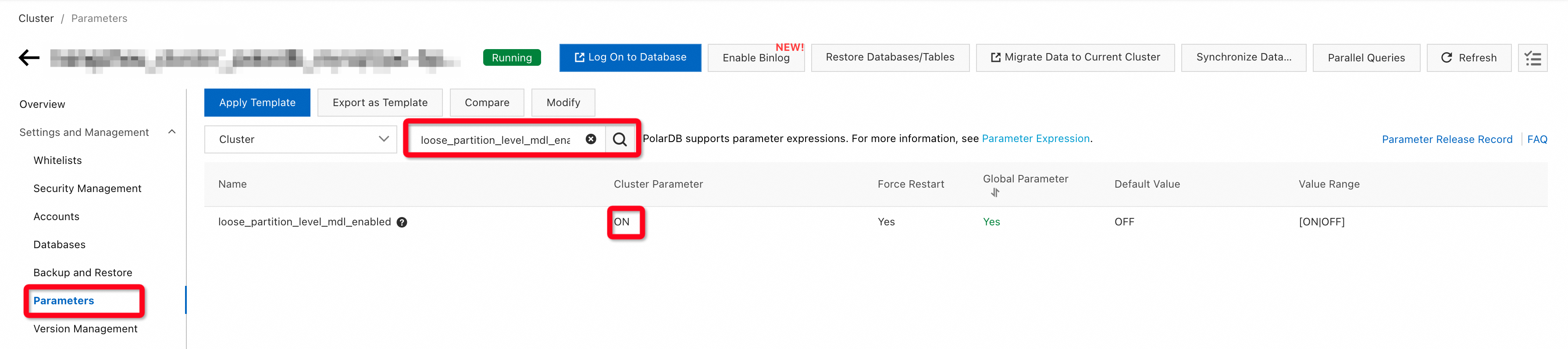

PolarDB MySQL has a new feature of partition level MDL (Metadata Lock), which can reduce the granularity of MDL to optimize some scenarios where DDL and DML are mutually blocked. Enable this function to better experience automatic partition management.

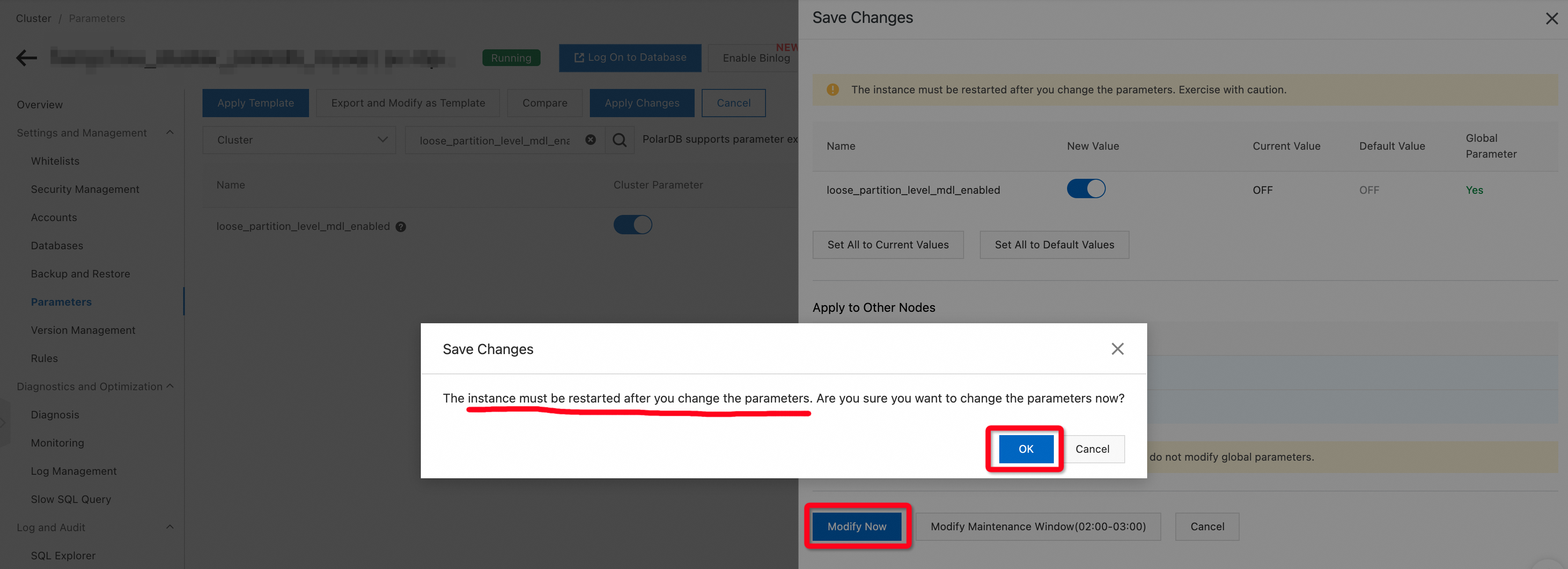

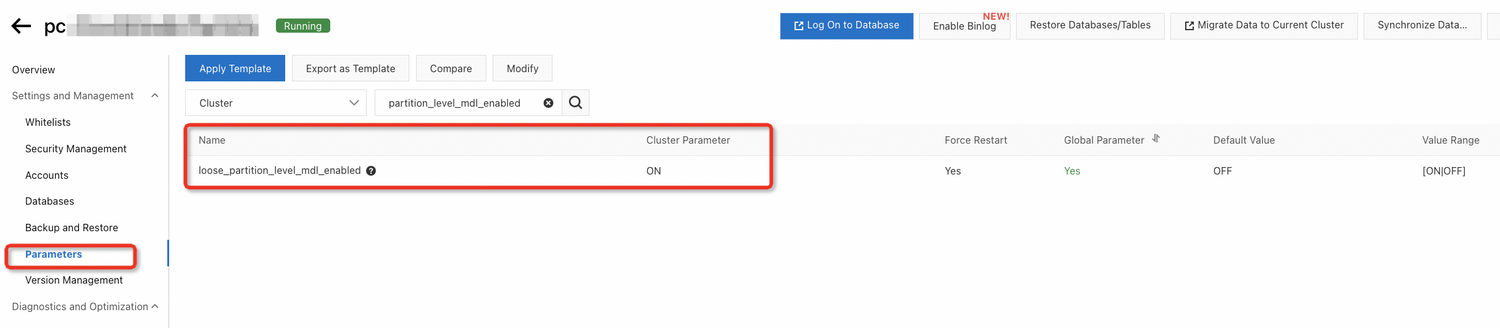

On the navigation interface of the instance, click Parameters, enter loose_partition_level_mdl_enabled in the input box, and check the value of this parameter. If the current value is not ON, you need to modify the parameter to ON on the console.

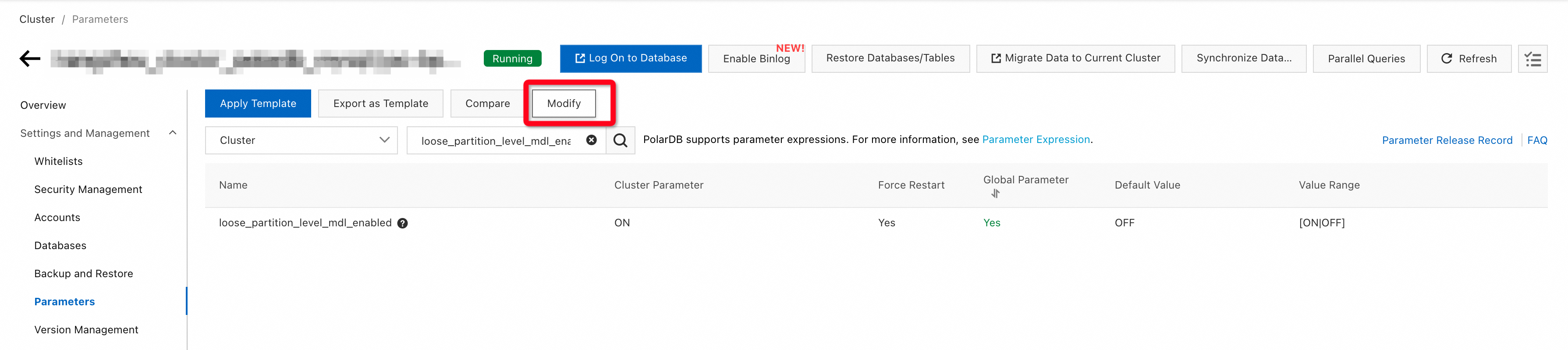

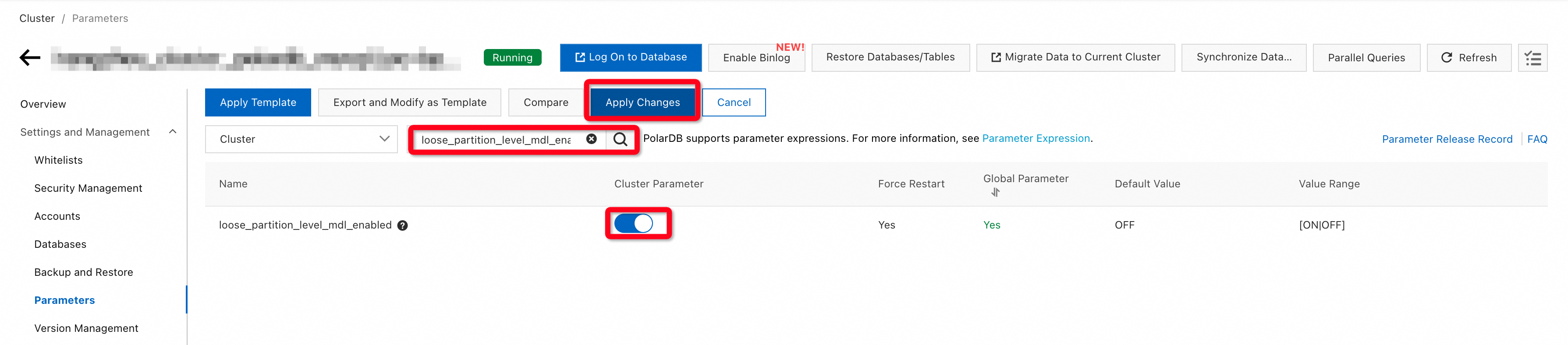

1. Click Modify.

2. Search loose_partition_level_mdl_enabled.

3. If the Cluster Parameter column shows that the current value is OFF, click the button to change it to ON.

4. Click Apply Changes.

5. Click Modify Now.

Modifying this parameter requires restarting the instance. After the instance restarts, click Log On to Database to create events on the DMS console.

Adding/dropping partitions periodically through events can reduce the workload of the DBA.

First create an Interval partition table, gmt_create as the partition key, the interval is 1 day, and there is a partition p0 in the table.

CREATE TABLE `event_metering` (

`id` bigint(20) NOT NULL AUTO_INCREMENT COMMENT 'primary key',

`gmt_create` datetime NOT NULL COMMENT 'create time',

`uid` varchar(128) NOT NULL COMMENT 'uid',

`gmt_modified` datetime DEFAULT NULL ON UPDATE CURRENT_TIMESTAMP COMMENT 'modified time',

`count` int(10) unsigned DEFAULT NULL,

`type` varchar(32) DEFAULT NULL COMMENT '(Normal, Warning)',

PRIMARY KEY (`id`, `gmt_create`),

KEY `idx_gmt_create` (`gmt_create`),

KEY `idx_gmt_modified` (`gmt_modified`)

) ENGINE=InnoDB AUTO_INCREMENT=1 DEFAULT CHARSET=utf8

PARTITION BY RANGE COLUMNS(`gmt_create`) INTERVAL(DAY,1)

(

PARTITION p0 VALUES LESS THAN('2022-12-29')

);At present, the largest partition range is '2022-12-29', insert some data beyond the partition range, and the interval partition will automatically add partitions.

INSERT INTO event_metering VALUES(0, '2022-12-29', '0', '2022-12-29', 1, 'Normal');

INSERT INTO event_metering VALUES(0, '2022-12-30', '0', '2022-12-30', 1, 'Normal');Insert some data into the table through a procedure to simulate data writing.

DELIMITER ||

CREATE PROCEDURE batch_insert(IN start_rec INTEGER, IN end_rec INTEGER)

BEGIN

declare a int;

declare b int;

set a=start_rec;

set b=end_rec;

while a<end_rec do

INSERT INTO event_metering VALUES(a, now(), convert(a, char), now(), 1, 'Normal');

set a=a+1;

end while;

end||

DELIMITER ;

CALL batch_insert(1, 10000);Assume that the O&M time(Maintenance Window) is 14:00pm-15:00pm every day, events are set to be triggered at 14:00pm.

The O&M time is generally 2:00-3:00 by default, and the O&M time of the instance can be modified in the Maintenance Window of the Overview interface.

Create a event for adding a new partition.

CREATE EVENT IF NOT EXISTS add_partition ON SCHEDULE

EVERY 1 DAY STARTS '2022-12-30 14:00:00'

ON COMPLETION PRESERVE

DO INSERT INTO event_metering VALUES(0, DATE_ADD(NOW(), INTERVAL 1 DAY), 0, DATE_ADD(NOW(), INTERVAL 1 DAY), 1, 'Normal');Create a event for dropping a partition.

DELIMITER ||

CREATE EVENT IF NOT EXISTS drop_partition ON SCHEDULE

EVERY 1 DAY STARTS '2022-12-30 14:00:00'

ON COMPLETION PRESERVE

DO

BEGIN

set @pname = concat('alter table event_metering drop partition _p', date_format(curdate(), '%Y%m%d000000'));

prepare stmt_drop_partition from @pname;

execute stmt_drop_partition;

deallocate prepare stmt_drop_partition;

END ||

DELIMITER ;At 2022-12-30 14:00:00, events will be triggered for the first time, drop yesterday's partition (gmt_create between '2022-12-29' and '2022-12-30'), add partition to store tomorrow's data (gmt_create between '2022-12-31' and '2023-01-01'). Every day thereafter, events will be triggered at 14:00:00 to periodically manage partitions.

PolarDB Hands-on | PolarDB for MySQL: List Default Hash Partitioning

PolarDB Hands-on | Build a High Reliable Website with PolarDB

ApsaraDB - May 31, 2023

ApsaraDB - April 26, 2023

Morningking - September 26, 2023

ApsaraDB - April 20, 2023

ApsaraDB - April 27, 2023

ApsaraDB - August 9, 2024

PolarDB for MySQL

PolarDB for MySQL

Alibaba Cloud PolarDB for MySQL is a cloud-native relational database service 100% compatible with MySQL.

Learn More PolarDB for PostgreSQL

PolarDB for PostgreSQL

Alibaba Cloud PolarDB for PostgreSQL is an in-house relational database service 100% compatible with PostgreSQL and highly compatible with the Oracle syntax.

Learn More PolarDB for Xscale

PolarDB for Xscale

Alibaba Cloud PolarDB for Xscale (PolarDB-X) is a cloud-native high-performance distributed database service independently developed by Alibaba Cloud.

Learn More AnalyticDB for MySQL

AnalyticDB for MySQL

AnalyticDB for MySQL is a real-time data warehousing service that can process petabytes of data with high concurrency and low latency.

Learn MoreMore Posts by ApsaraDB