As an appealing objective in the information security field, a trusted system refers to a system that achieves a certain degree of trustworthiness by implementing specific security policies.

For computers, the Trusted Platform Module (TPM) is already in use and complies with the TPM specification published by the Trusted Computing Group (TCG). A TPM is a secure chip designed for implementing trusted systems. As the root of trust, it is the core module of trusted computing and provides solid protection for computer security.

In web systems, building a trusted system may seem like a pseudo-proposition, since "never trust client input" is the fundamental security guideline. In fact, a trusted system does not imply absolute security. Wikipedia explains it as follows: for users, "trusted" does not necessarily mean "trustworthy". More precisely, it means that you can be sure that the behavior of a trusted system will follow its design and will not include behaviors prohibited by its designers and software developers.

From this perspective, a trusted web system is an appealing vision: the goal is to build an equivalent of the TPM into a web system, reduce malicious behavior to a low probability, and arrive at a relatively trusted web system.

Trusted front-end

In a trusted system, one of the key functions of the TPM is to verify the authenticity of messages to ensure that the terminal is trusted. In web systems, users are the source of messages. With the spread of database exploitation, malicious registration and churning, verifying that request data comes from authentic users is becoming increasingly necessary in various scenarios to protect user data.

To build a TPM equivalent in a web system, the first step is to ensure that input data is secure, which requires a relatively trusted front-end environment. However, web systems are open by nature, so JavaScript code is always exposed, and the front-end serves as the frontline for data collection. In this situation, preventing malicious forgery is difficult and implementing a trusted front-end is challenging.

This is why JavaScript obfuscation is introduced: to protect front-end code logic.

In the early days of web development, JavaScript did not play a major role in web systems and did little more than submit forms. JavaScript files were very simple and required no special protection.

As JavaScript files grew in size, several compression tools, such as UglifyJS, YUI Compressor and Closure Compiler, were released to reduce file size and speed up HTTP transfer. These tools can:

· Merge multiple JavaScript files;

· Remove spaces and line breaks from JavaScript code;

· Compress variable names in JavaScript files;

· Remove comments.

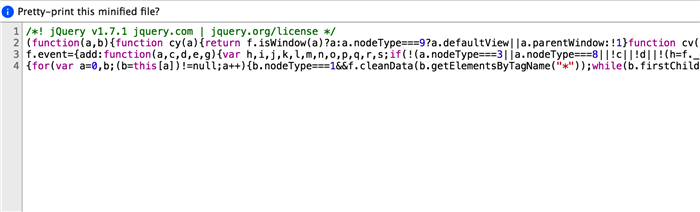

Although compression tools are designed to reduce the size of JavaScript files, compressed code is also much less readable, which protects the code as a side effect. For this reason, JavaScript compression has become a standard step in front-end publication. However, this method no longer provides robust protection against malicious users, because mainstream browsers such as Chrome and Firefox now support JavaScript formatting, which quickly rearranges compressed code into a readable layout, and provide powerful debugging functions.

As more web applications emerge and browser performance and network speeds improve, JavaScript takes on a larger share of the workload, and much back-end logic has migrated to the front-end. Meanwhile, more and more attackers try to take advantage of this. In the web model, JavaScript is often their point of entry: by studying front-end logic, attackers can disguise their malicious behavior as that of ordinary users. In this context, the JavaScript code for key services and risk control systems, such as the code on login, signup, payment and transaction pages, requires strong protection against attack. This is where JavaScript obfuscation comes in.

Whether JavaScript obfuscation is robust enough to be worthwhile has been a hot topic for some time. Code obfuscation itself dates back to the desktop software era, when programmers protected most software with code obfuscation and shell encryption (packing). Java and .NET both have their own obfuscators. Many viruses are also heavily obfuscated to evade antivirus software. However, many people see JavaScript obfuscation as redundant: JavaScript is a dynamic scripting language whose source code is transferred over HTTP, so reverse engineering it is much easier than unpacking compiled software.

On the one hand, obfuscation is required precisely because JavaScript is delivered as source code, and exposed code is always at risk. On the other hand, carefully obfuscated code is hard for malicious users to analyze and buys developers more time. Compared with the effort of cracking, obfuscation is cheap, and in a sustained contest over the code it dramatically increases crackers' workload, which gives it a protective role. From this perspective, obfuscating key code is indispensable.

JavaScript obfuscators generally fall into two classes:

· Obfuscators implemented by regular expression replacement

· Obfuscators implemented by syntax tree replacement

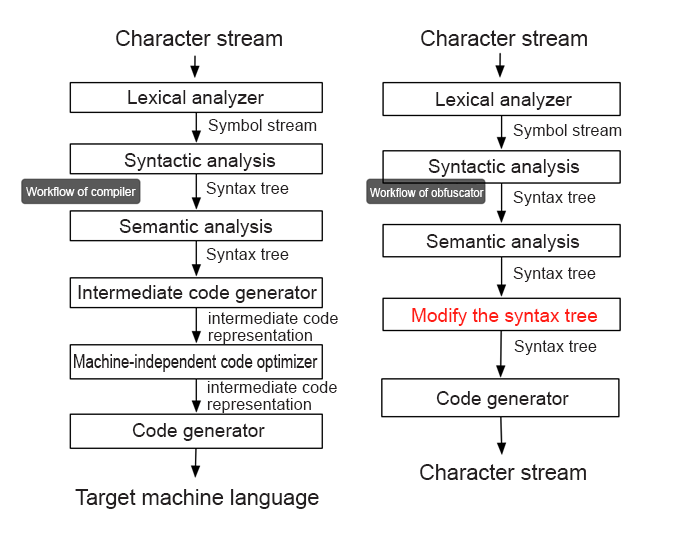
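As a rough illustration of why the two classes differ in capability, the sketch below renames a variable with a plain regular expression. The function `renameWithRegex` and the sample source are hypothetical and exist only for this illustration. Because a regular expression has no notion of syntax, it also rewrites the text inside the string literal, which changes what the program prints. A syntax-tree-based tool can tell an identifier from a string and avoid this.

```javascript
// Hypothetical sample: rename the variable `price` to `_0x1`.
const source = 'var price = 10; console.log("price: " + price);';

// Regex-based renaming: cheap to write, but blind to syntax.
function renameWithRegex(code, from, to) {
  return code.replace(new RegExp("\\b" + from + "\\b", "g"), to);
}

const renamed = renameWithRegex(source, "price", "_0x1");

// The identifier was renamed, but so was the text inside the string
// literal, so the renamed program prints a different message.
console.log(renamed);
```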

The former type is cheap to build but offers only moderate functionality, so it suits scenarios with low obfuscation requirements. The latter type costs more but offers better flexibility and security, so it is ideal for adversarial scenarios. The following figure details the latter type. Syntax-based obfuscators are much like compilers and share the same fundamental principles, so we first look at how compilers work.

Token: A lexical unit (also known as a lexical marker). Tokens are produced by the lexical analyzer and are the smallest units into which the text stream is split.

AST: The abstract syntax tree, produced by the syntax analyzer. It is a tree representation of the abstract syntactic structure of the source code.

Workflow of compilers:

In brief, when the system reads a piece of string text (source code), the lexical analyzer splits it into small units (tokens). For example, the digit 1 is a token and the string "abc" is another token. Then, the syntax analyzer organizes those units into a tree (the AST), which represents how the tokens are composed. For example, it can represent "1 + 2" as an addition tree in which the left and right child nodes are the tokens 1 and 2 and the parent node indicates addition. The compiler then produces intermediate code from the AST and eventually converts it to machine code.
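The first two stages can be sketched in a few lines. This is a minimal sketch that only understands non-negative integers joined by "+" and "-"; the functions `tokenize` and `parse` and the node shapes (`Number`, `Binary`) are assumptions made for this illustration and do not correspond to any real parser's API.

```javascript
// Lexical analysis: split the source text into tokens.
// Characters other than digits, "+" and "-" are simply skipped in this sketch.
function tokenize(text) {
  const tokens = [];
  const pattern = /\d+|[+-]/g;
  let match;
  while ((match = pattern.exec(text)) !== null) {
    const value = match[0];
    tokens.push(/\d/.test(value)
      ? { type: "Number", value: Number(value) }
      : { type: "Operator", value: value });
  }
  return tokens;
}

// Syntax analysis: organize the tokens into a left-associative tree.
function parse(tokens) {
  let position = 0;

  function parseNumber() {
    const token = tokens[position++];
    if (!token || token.type !== "Number") {
      throw new SyntaxError("Expected a number");
    }
    return { type: "Number", value: token.value };
  }

  let tree = parseNumber();
  while (position < tokens.length) {
    const operator = tokens[position++];
    if (operator.type !== "Operator") {
      throw new SyntaxError("Expected an operator");
    }
    tree = {
      type: "Binary",
      operator: operator.value,
      left: tree,
      right: parseNumber(),
    };
  }
  return tree;
}

// "1 + 2" becomes an addition node whose children are the numbers 1 and 2.
const additionTree = parse(tokenize("1 + 2"));
console.log(JSON.stringify(additionTree, null, 2));
```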

Workflow of obfuscators:

Whereas compilers need to translate source code into intermediate code or machine code, the output of an obfuscator is still JavaScript code, so the steps after syntax analysis are not required. Instead, the objective is to change the structure of the original JavaScript code. To what does this structure correspond? It corresponds to the AST: any syntactically valid JavaScript code can be parsed into an AST, and, because the AST represents the logical relationships between tokens, an AST can in turn be used to generate JavaScript code. Thus, you can generate any JavaScript code simply by constructing the corresponding AST. The right-hand side of the figure above shows the obfuscation process.

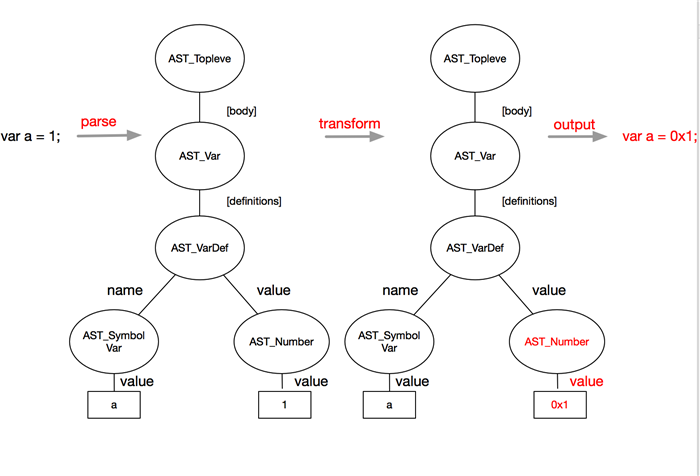

By modifying the AST, you can create a new AST, which corresponds to new JavaScript code.
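The idea can be sketched with a hand-written tree for the expression `1 + 2`. The node shapes and the functions `generateCode` and `padNumbers` are hypothetical, and the rule used here (replacing each number n with (n + 7) - 7) is only an example of a structure-changing rewrite that keeps the result the same.

```javascript
// A hand-written AST for the expression 1 + 2 (node shapes are assumptions).
const originalTree = {
  type: "Binary",
  operator: "+",
  left: { type: "Number", value: 1 },
  right: { type: "Number", value: 2 },
};

// Code generation: turn a tree back into JavaScript source text.
function generateCode(node) {
  if (node.type === "Number") {
    return String(node.value);
  }
  return "(" + generateCode(node.left) + " " + node.operator + " " +
    generateCode(node.right) + ")";
}

// Transformation: build a new tree in which every number n is replaced
// by the equivalent sub-expression (n + 7) - 7.
function padNumbers(node) {
  if (node.type === "Number") {
    return {
      type: "Binary",
      operator: "-",
      left: {
        type: "Binary",
        operator: "+",
        left: node,
        right: { type: "Number", value: 7 },
      },
      right: { type: "Number", value: 7 },
    };
  }
  return {
    type: "Binary",
    operator: node.operator,
    left: padNumbers(node.left),
    right: padNumbers(node.right),
  };
}

const codeBefore = generateCode(originalTree);
const codeAfter = generateCode(padNumbers(originalTree));

console.log(codeBefore); // (1 + 2)
console.log(codeAfter);  // (((1 + 7) - 7) + ((2 + 7) - 7))
```

The two strings differ, yet both evaluate to 3, which is the property every obfuscation rule has to preserve.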

Planning and designing

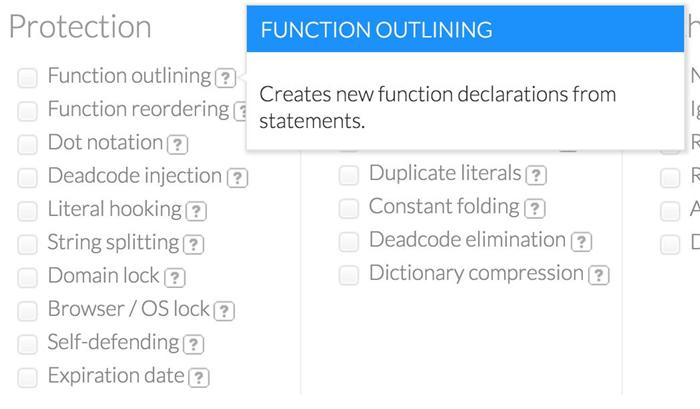

Once you understand the obfuscation process, planning and design become the most important part of the work. As mentioned above, modifying the AST produces JavaScript code that differs from the original. However, obfuscation must not change the execution result of the original code. Therefore, obfuscation rules must make the code less readable while keeping its execution result the same.

Developers can customize obfuscation rules to suit their specific requirements, for example, by splitting strings and arrays or by adding junk code.
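As one possible rule of this kind, the sketch below splits a string into a concatenation of shorter string literals. The function `splitStringLiteral` and the chunk size are assumptions for illustration; a real obfuscator would apply such a rule to string nodes in the AST rather than to raw values.

```javascript
// Rewrite a string value as source code that concatenates shorter literals.
// JSON.stringify is used to produce a correctly escaped string literal.
function splitStringLiteral(text, chunkSize) {
  const parts = [];
  for (let i = 0; i < text.length; i += chunkSize) {
    parts.push(JSON.stringify(text.slice(i, i + chunkSize)));
  }
  return parts.length > 0 ? parts.join(" + ") : '""';
}

const splitExpression = splitStringLiteral("password", 3);
console.log(splitExpression); // "pas" + "swo" + "rd"

// The rewritten expression still evaluates to the original string.
const evaluated = new Function("return " + splitExpression + ";")();
console.log(evaluated === "password"); // true
```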

Implementation

This step may be daunting for many users, because lexical and syntax analysis require an in-depth understanding of compilation principles. Fortunately, you can rely on existing tools for those stages and go directly to the last step, modifying the AST.

Many JavaScript lexical and syntax analyzers are readily available, such as V8, Mozilla's SpiderMonkey and Esprima. The following describes the recommended tool, UglifyJS, which is a Node.js-based parser. It provides the following features:

· Parser - which can parse JavaScript code into an AST

· Code generator - which can generate code from an AST

· Scope analyzer - which can analyze definitions of variables

· Tree walker - which can traverse tree nodes

· Tree transformer - which can change tree nodes

As the obfuscator design sketch above shows, with these tools in place the only part you have to implement yourself is the modification of the syntax tree.

Examples

To help you understand how to design an obfuscator, the following simple example shows how an obfuscation rule converts the number "1" in "var a = 1" to its hexadecimal form. First, perform lexical and syntax analysis on the source code and generate the syntax tree by using UglifyJS's parse method. Then, locate the number in the syntax tree and convert it to its hexadecimal form, as shown below:

Code of the example:

    var UglifyJS = require("uglify-js");

    var code = "var a = 1;";

    var toplevel = UglifyJS.parse(code); // toplevel is the root of the syntax tree.

    var transformer = new UglifyJS.TreeTransformer(function (node) {
        if (node instanceof UglifyJS.AST_Number) { // Locate the leaf node that needs to be modified.
            node.value = '0x' + Number(node.value).toString(16);
            return node; // The returned node replaces the original leaf node.
        }
    });

    toplevel.transform(transformer); // Traverse and transform the AST.

    var ncode = toplevel.print_to_string(); // Convert the AST back into a string.

    console.log(ncode); // var a = 0x1;

As the code above shows, you first construct the syntax tree by using the parse method and then traverse the tree with TreeTransformer. When the traversal reaches a node of type UglifyJS.AST_Number (see the UglifyJS AST documentation for the complete list of AST types), the node has a "value" attribute that stores the numeric value. The transformer changes that value to its hexadecimal form and runs "return node" to replace the original node with the new node.

Result

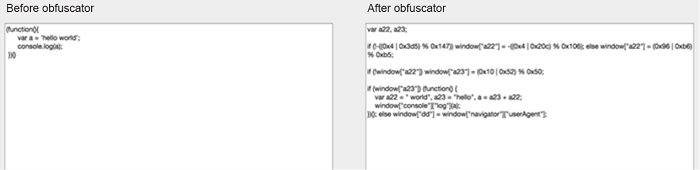

The following shows the codes before and after obfuscation:

Because obfuscation adds junk code and changes the original AST, it inevitably affects performance. However, you can minimize the impact through the choice of obfuscation rules:

· Reduce obfuscation of loops, as too much obfuscation there can hurt code execution efficiency

· Avoid too many concatenated strings, as string concatenation causes performance issues in earlier versions of IE

· Control code volume - Specifically, control the proportion of inserted junk code, as an oversized file degrades both network transfer and code execution performance.

With well-chosen obfuscation rules, you can keep the performance impact within a reasonable range. In fact, some obfuscation rules can even speed up code execution. For example, shortening variable names and attribute names reduces the file size, while copying global variables into local variables reduces scope chain lookups. In modern browsers, obfuscation has little impact on code performance, so once reasonable obfuscation rules are in place you can use obfuscation with confidence.
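The global-variable rule mentioned above can be sketched as follows. The function names and the renamed identifiers are hypothetical. The rewritten version passes the global `Math` into a closure once, so the loop body refers to a short local alias instead of looking up the global on each iteration. Any speed gain is likely small in modern engines, so treat this as an illustration of the rule rather than a measured optimization.

```javascript
// Before: the loop body resolves the global `Math` on every iteration.
function sumOfRootsOriginal(values) {
  var total = 0;
  for (var i = 0; i < values.length; i++) {
    total += Math.sqrt(values[i]);
  }
  return total;
}

// After: the global is copied into the local parameter `_m`, and the
// remaining names are shortened. The behavior is unchanged.
var sumOfRootsObfuscated = (function (_m) {
  return function (_v) {
    var _t = 0;
    for (var _i = 0; _i < _v.length; _i++) {
      _t += _m.sqrt(_v[_i]);
    }
    return _t;
  };
})(Math);

console.log(sumOfRootsOriginal([1, 4, 9]));   // 6
console.log(sumOfRootsObfuscated([1, 4, 9])); // 6
```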

The objective of obfuscation is to protect the code without compromising its original functionality.

The post-obfuscation AST differs from the original AST, yet the execution results of the obfuscated and original files must be the same. How can you ensure sufficient obfuscation strength without breaking code execution? The answer is testing with high coverage:

· Develop detailed unit tests for obfuscators

· Perform high-coverage functional tests on obfuscation target code to ensure that the execution results of the pre- and post-obfuscation code are consistent

· Perform multi-sample tests by obfuscating libraries that ship with their own unit tests, such as jQuery and AngularJS. Then, run those unit tests again against the obfuscated code and ensure that the results are the same before and after obfuscation.
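A consistency check of this kind can be sketched as a small harness that runs the original and obfuscated versions of a function on the same inputs and reports any inputs whose results differ. The helpers `loadFunction` and `findMismatches` and both source strings are hypothetical. The obfuscated sample deliberately rewrites `a + b` as `a - -b`, which is equivalent for numbers but not for strings, to show why input coverage matters.

```javascript
// Evaluate a source string and return the named function it defines.
function loadFunction(source, name) {
  return new Function(source + "; return " + name + ";")();
}

// Return the argument lists for which the two versions disagree.
function findMismatches(originalSource, obfuscatedSource, name, inputs) {
  const original = loadFunction(originalSource, name);
  const obfuscated = loadFunction(obfuscatedSource, name);
  return inputs.filter(function (args) {
    return !Object.is(original.apply(null, args), obfuscated.apply(null, args));
  });
}

const originalSource = "function add(a, b) { return a + b; }";
const obfuscatedSource = "function add(_0x1, _0x2) { return _0x1 - -_0x2; }";

// With numeric inputs only, the rewrite looks safe.
const numericInputs = [[1, 2], [0, 0], [-5, 5]];
console.log(findMismatches(originalSource, obfuscatedSource, "add", numericInputs));

// Adding a string case exposes the difference: "ab" versus NaN.
const mixedInputs = numericInputs.concat([["a", "b"]]);
console.log(findMismatches(originalSource, obfuscatedSource, "add", mixedInputs));
```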

For any enterprise, building a trusted web system is part of its vision, and a trusted front-end environment is a prerequisite for a trusted web system. Achieving it requires JavaScript obfuscation to resist code analysis, and implementing your own obfuscator is entirely feasible. It is also recommended, because the impact of obfuscation on performance is controllable, which makes it easier to build a trusted web system.

https://en.wikipedia.org/wiki/Trusted_Platform_Module

https://en.wikipedia.org/wiki/Trusted_system

http://lisperator.net/uglifyjs

http://esprima.org
