By Denghui Dong

At the Apsara Conference 2021, the Alibaba JVM Team open-sourced its in-house fastFFI project. The fastFFI code and samples are now available on GitHub.

fastFFI is a modern, efficient foreign function interface (FFI) framework designed to make communication between programming languages easier and faster. The current implementation targets Java code that accesses C++ code and data. Because different programming languages are good at solving different problems, the need for cross-language calls keeps growing in modern software development.

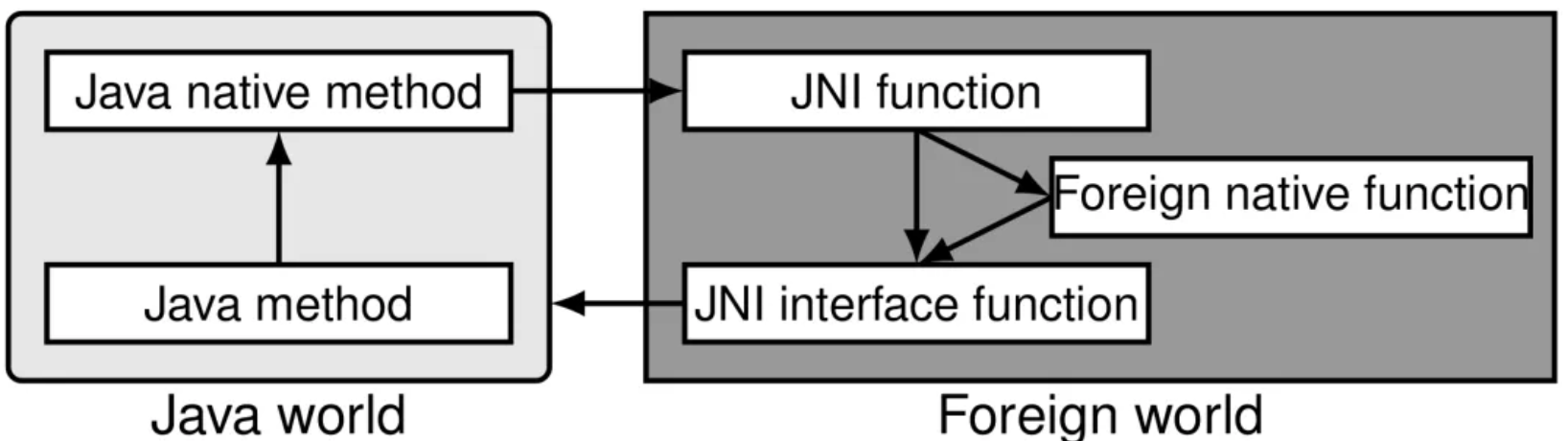

Java Native Interface (JNI) is the standard FFI of the JVM. Developing JNI applications is time-consuming and error-prone, and frequent calls to JNI functions through Java native methods can cause serious performance problems. To use an external function, a developer first declares a Java native method and then writes a JNI function on the native side that calls the external function. Because Java and native types differ, the JNI function must also perform the corresponding type conversions.
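The manual workflow described above can be sketched as follows. This is a minimal illustration, not code from the fastFFI project: the class `NativeAdder`, the method `add`, the external function `external_add`, and the library name are all hypothetical. The native side appears only as a comment, and the library is deliberately not loaded so that the Java part runs on its own.

```java
import java.lang.reflect.Method;
import java.lang.reflect.Modifier;

// Hypothetical example: all names here are illustrative assumptions.
final class NativeAdder {
    // Step 1: declare the native method on the Java side.
    static native int add(int a, int b);

    // Step 2 (native side, written by hand in C or C++ and compiled into a
    // shared library) would be a JNI function that follows the JNI naming
    // convention and forwards to the external function:
    //
    //   JNIEXPORT jint JNICALL
    //   Java_NativeAdder_add(JNIEnv* env, jclass cls, jint a, jint b) {
    //       return (jint) external_add((int) a, (int) b);  // type conversion
    //   }
    //
    // Step 3: load the library before the first call, for example with
    // System.loadLibrary("nativeadder"). That step is omitted here, so
    // calling add() would throw UnsatisfiedLinkError.

    // Reports whether add is declared native, without invoking it.
    static boolean addIsNative() {
        try {
            Method m = NativeAdder.class.getDeclaredMethod("add", int.class, int.class);
            return Modifier.isNative(m.getModifiers());
        } catch (NoSuchMethodException e) {
            return false;
        }
    }
}
```

Even for a two-argument function, the developer maintains a declaration, a matching native symbol, and the type conversions between them, which is the boilerplate that FFI frameworks try to remove.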

Compared with calls to ordinary Java methods, calls to Java Native methods have additional performance overhead. This overhead mainly comes from two parts:

Most existing Java FFI frameworks can be described as abstraction-layer FFI frameworks: they add type support on top of JNI and mainly try to solve the problem of poor FFI usability. Popular tools such as Java Native Access (JNA) and Java Native Runtime (JNR) rely on a common JNI stub and dlsym, pursuing extreme ease of use at the expense of performance.

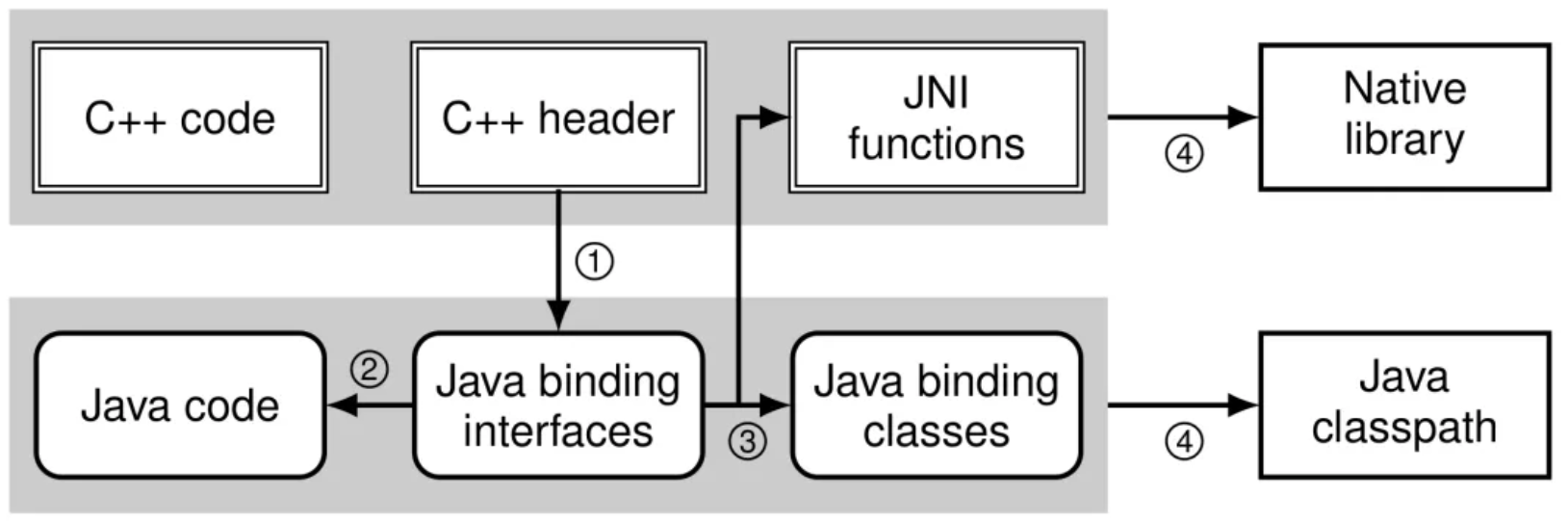

fastFFI is equally easy to use. Like JavaCPP, fastFFI generates JNI code automatically. Unlike JNR and JNA, fastFFI additionally needs to invoke a C++ compiler to compile the generated JNI code. fastFFI also provides a tool (under internal testing) that extracts C++ definitions from C++ header files and generates the corresponding Java bindings.

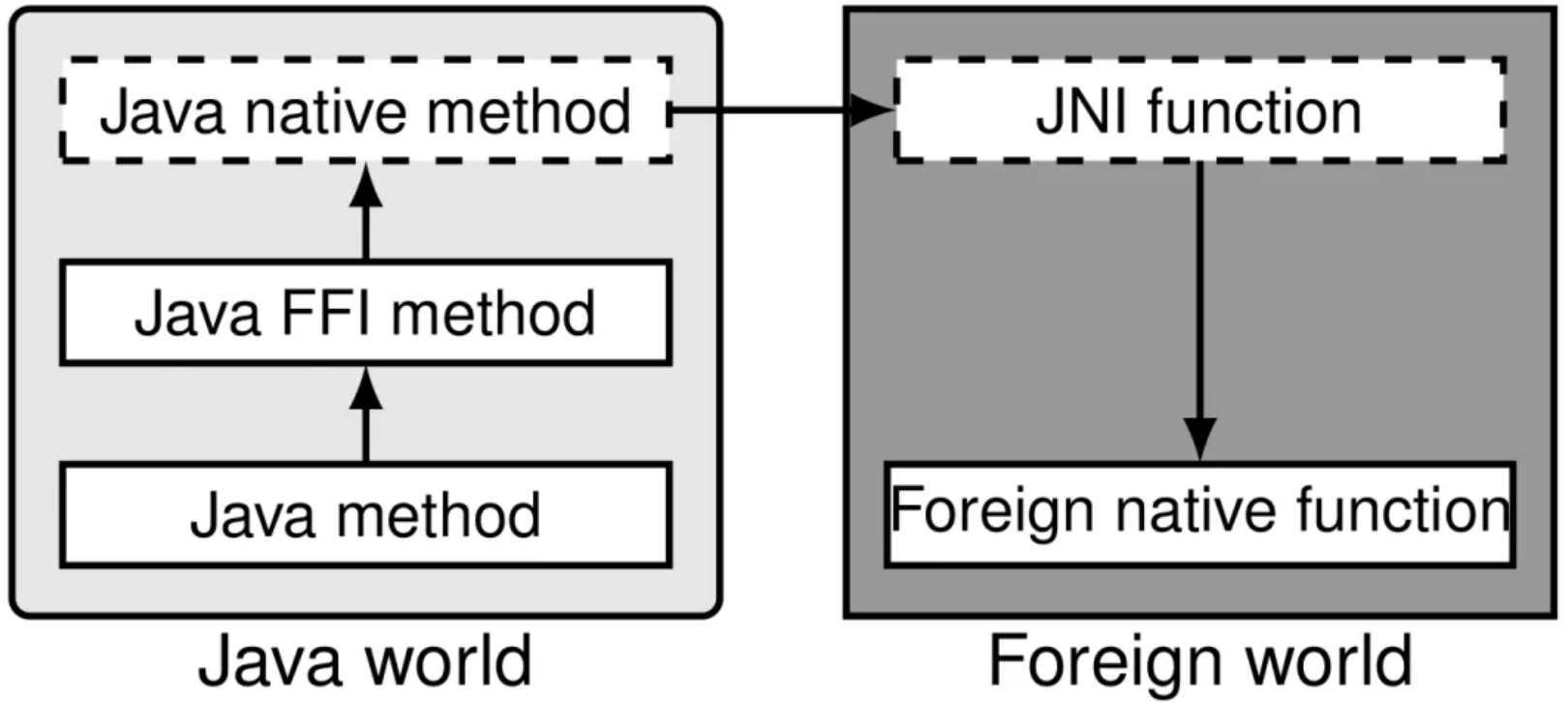

In fastFFI, a binding is a Java interface that maps a C++ definition. Since an interface cannot be executed, fastFFI generates a binding class that implements it and accesses the C++ functions through JNI by defining native methods in that class. We chose Java interfaces to retain as much flexibility as possible: if a future JVM introduces new FFI interfaces, fastFFI can switch to them seamlessly, as long as the generation of binding classes is adapted accordingly. With fastFFI, users no longer need to write Java native methods and the corresponding JNI functions by hand.
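The split between a binding interface and a generated binding class might look roughly like the sketch below. It does not use the real fastFFI annotations or generator output. `Counter`, `CounterJniBinding`, and `InMemoryCounter` are hypothetical names, and the pure-Java stand-in exists only so the example runs without a native library.

```java
final class BindingSketch {
    // Written by the user: a Java interface mirroring a hypothetical C++ class
    //   class Counter { public: int get(); void increment(); };
    interface Counter {
        int get();
        void increment();
    }

    // Roughly what a generator could emit: the binding class holds the address
    // of the C++ object and forwards each call to a native method. The native
    // methods are only declared here; calling them would require the generated
    // and compiled JNI library.
    static final class CounterJniBinding implements Counter {
        private final long address;

        CounterJniBinding(long address) { this.address = address; }

        @Override public int get() { return nativeGet(address); }
        @Override public void increment() { nativeIncrement(address); }

        private static native int nativeGet(long address);
        private static native void nativeIncrement(long address);
    }

    // Pure-Java stand-in that keeps this sketch runnable without native code.
    static final class InMemoryCounter implements Counter {
        private int value;

        @Override public int get() { return value; }
        @Override public void increment() { value++; }
    }

    // User code depends only on the interface, so the implementation behind
    // it can be swapped without touching this method.
    static int incrementTimes(Counter counter, int times) {
        for (int i = 0; i < times; i++) {
            counter.increment();
        }
        return counter.get();
    }
}
```

Because `incrementTimes` sees only `Counter`, replacing the JNI-backed class with one built on a different FFI mechanism would not require changes to user code, which is the flexibility the paragraph above refers to.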

Java's cross-platform advantage is no longer important in modern cloud deployment environments, where the infrastructure can compile, build, package, and deploy both the Java code and the generated JNI code. In addition, fastFFI and JavaCPP support C++, whereas JNR and JNA support only dynamic link libraries built from C. Libraries written in C++ require an additional C API.

As the name implies, a key feature of fastFFI compared with established FFI tools and frameworks is its speed. Because fastFFI is based on JNI code, its worst-case performance matches that of hand-written JNI. fastFFI can do better, though: it provides faster access to C++ data than JNI does. This is due to llvm4jni, a tool built into fastFFI that converts LLVM bitcode into Java bytecode, breaking the barrier between the JVM's JIT compiler and the C++ compiler that builds the JNI code.

fastFFI is also a modern FFI framework: among existing FFI frameworks, it is the only one that can map C++ templates to Java generics. Given some basic type information, the fastFFI code generator uses type derivation to generate a binding class for each instantiation of a C++ template. This binding class can be used for type checking when parameters are passed, so some type errors are detected early on the Java side. In addition, we map C++ language constructs to Java language constructs wherever possible. For example, because fastFFI uses binding interfaces rather than binding classes, it can support C++ multiple inheritance through multiple inheritance of Java interfaces. Support for other high-level constructs common to Java and C++, such as exception handling and lambdas, is under development.
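As a rough illustration of the mapping described above, the sketch below models a hypothetical C++ template `Box<T>` as a generic Java interface with one binding class per instantiation, and a C++ class with two bases as a Java interface that extends two interfaces. All names are assumptions made for this example, and plain Java fields stand in for the C++ objects that real bindings would reach through JNI.

```java
final class TemplateSketch {
    // Generic binding interface for a hypothetical C++ template:
    //   template <typename T> struct Box { T value; };
    interface Box<T> {
        T get();
        void set(T value);
    }

    // One binding class per template instantiation, here for Box<int32_t>.
    static final class BoxOfInt implements Box<Integer> {
        private int value;

        @Override public Integer get() { return value; }
        @Override public void set(Integer v) { value = v; }
    }

    // Binding class for the instantiation Box<double>.
    static final class BoxOfDouble implements Box<Double> {
        private double value;

        @Override public Double get() { return value; }
        @Override public void set(Double v) { value = v; }
    }

    // Accepts only Box<Integer>. Passing a BoxOfDouble is rejected by the
    // Java compiler, so the type error surfaces before any native call.
    static int sum(Box<Integer> a, Box<Integer> b) {
        return a.get() + b.get();
    }

    // C++ multiple inheritance, for example
    //   struct ReadWriteChannel : Readable, Writable { ... };
    // can map to a Java interface that extends several interfaces.
    interface Readable { int read(); }
    interface Writable { void write(int v); }
    interface ReadWriteChannel extends Readable, Writable {}
}
```

A call such as `TemplateSketch.sum(new BoxOfInt(), new BoxOfDouble())` does not compile, which is the kind of early, Java-side type checking that per-instantiation binding classes make possible.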

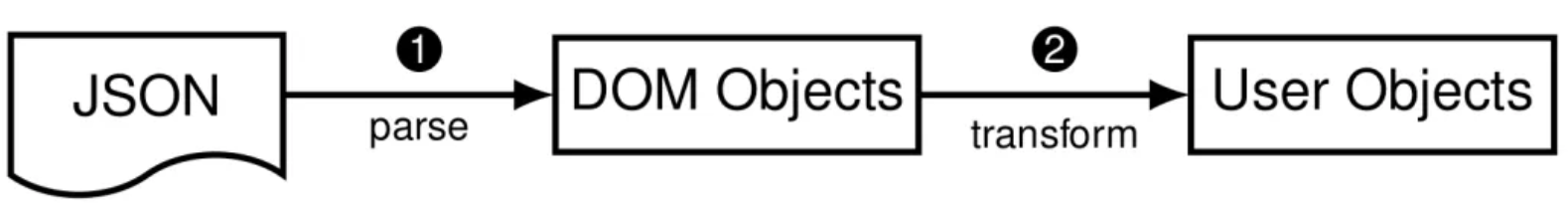

The first application scenario of fastFFI is to let Java users benefit from high-quality C++ frameworks. JSON parsing is an example. The following figure shows a typical JSON parsing scenario: a JSON parser converts the input JSON file or byte stream into a DOM object, which is then converted into the final user object. The whole process is therefore divided into two phases: parsing (resolution) and transformation.

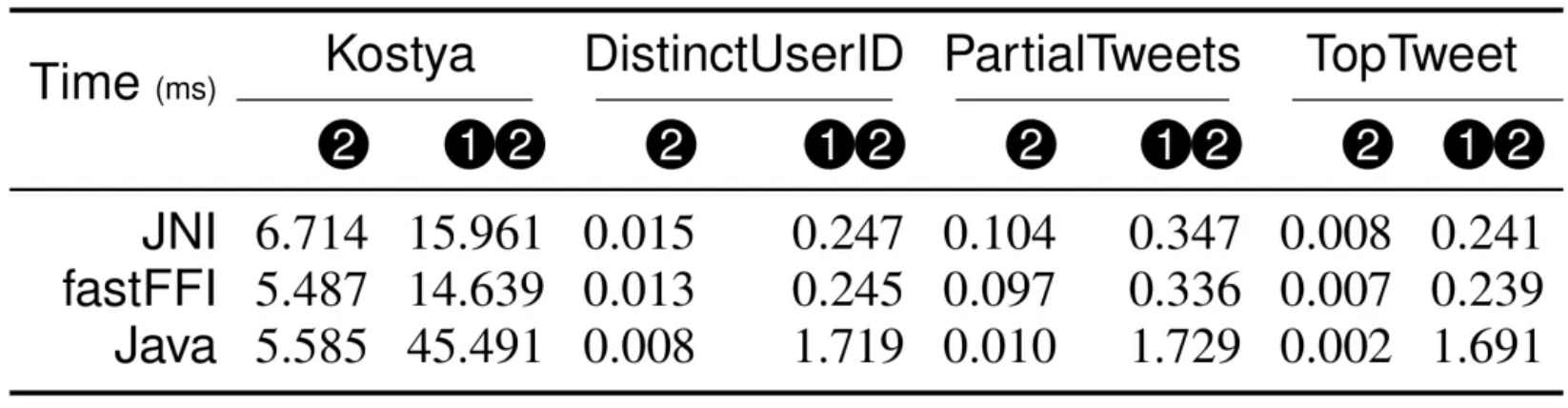

We compare simdjson (the link is at the end of the article) with Jackson. simdjson is a modern JSON parser written in C++, for which we created a Java SDK with fastFFI. Jackson is a JSON parsing framework written in Java. We use the four benchmarks in simdjson for the comparison. The performance of converting DOM objects through FFI differs from that of Java, but simdjson parses faster than Jackson. simdjson with fastFFI is therefore a good fit, especially for large JSON files. Because parsing (resolution) is a single complex JNI call, llvm4jni cannot optimize it, so fastFFI shows only a slight advantage over JNI.

If llvm4jni is not enabled, fastFFI performs the same as JNI. In the headers of all tables in this article, JNI refers to the Java SDK developed with fastFFI with llvm4jni disabled, while fastFFI refers to the Java SDK developed with fastFFI with llvm4jni enabled.

One of the most important scenarios for fastFFI is replacing existing in-memory cross-platform data formats (such as Apache Arrow and Google FlatBuffers) in the big data field. A big data application is typically divided into a data management platform and data processing algorithms. C++ is better suited to the data management platform because it offers flexible memory management, whereas data processing algorithms are developed more efficiently in a high-level programming language.

We use Grape (the link is at the end of the article), Alibaba's graph computing engine, as an example. A Java SDK created with fastFFI reduces the performance problems caused by JNI.

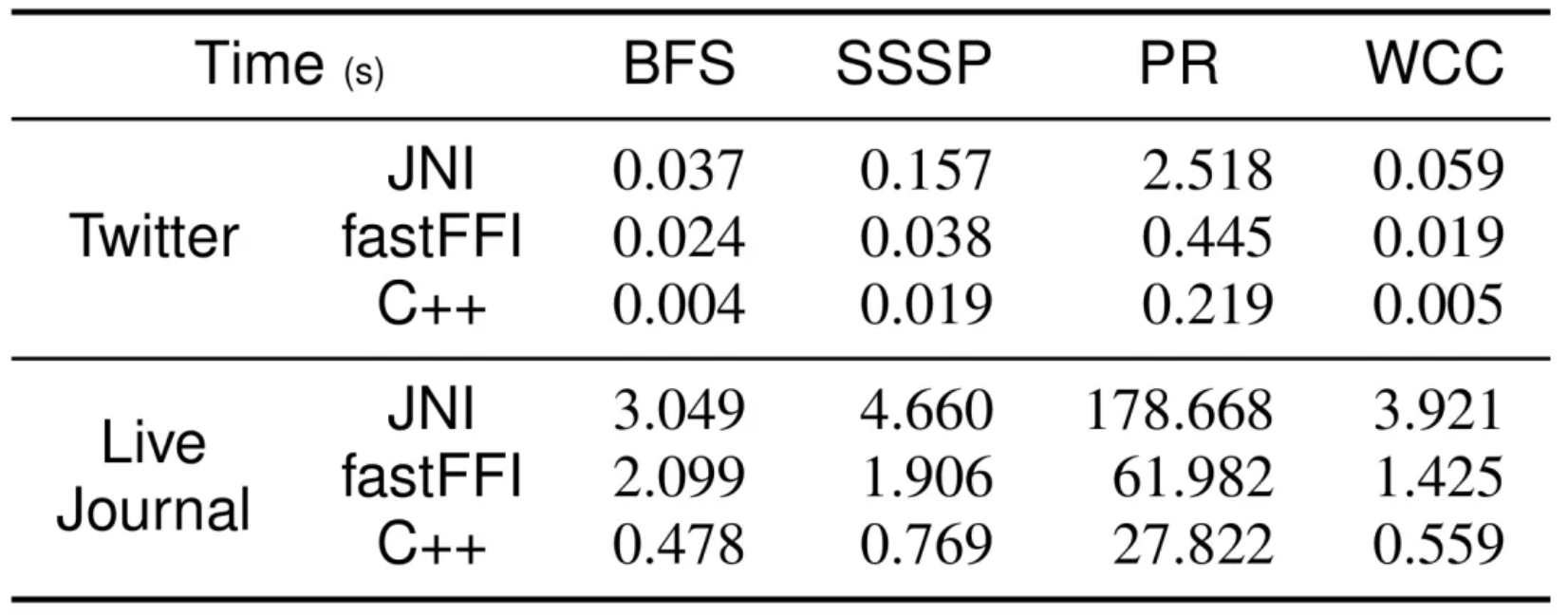

We selected four algorithms (BFS, SSSP, PageRank, and WCC) and implemented them in C++ and Java on top of the Grape C++ and Java APIs. As shown in the following table, although fastFFI still lags well behind C++, it reduces the overhead of JNI, most notably for SSSP and PageRank, which makes it feasible to develop algorithms on Grape in Java.

fastFFI was originally intended to replace cross-platform in-memory data formats (such as Apache Arrow and Google FlatBuffers) in cross-language calls. In programs where Java and C++ call each other, the best in-memory data format is the C++ object, which C++ code can create and access flexibly. However, the memory layout of C++ objects is not readily available to Java, so Java cannot access the members of C++ objects quickly.

To address these challenges, fastFFI generates the Java binding interface, the binding class, and the JNI code from the definition of a C++ object, so that Java can access C++ members through fastFFI. To eliminate the JNI overhead, llvm4jni converts the JNI functions that access members into Java bytecode methods, which the JIT compiler can then optimize. In other words, fastFFI uses the C++ compiler (clang) to generate LLVM bitcode for the member accessors of C++ objects. This bitcode contains the accesses to the C++ objects and their layout information. llvm4jni encodes this information in Java bytecode, and the JIT compiler ultimately delivers efficient access to C++ members.
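The sketch below illustrates the idea behind this conversion and is not actual llvm4jni output. Once the layout of a C++ object is known, a member accessor can be written as a plain Java read at a fixed offset instead of a JNI call. It assumes a hypothetical `struct Point { int32_t x; double y; }` with `x` at offset 0 and `y` at offset 8, as on a typical 64-bit ABI, and uses a direct `ByteBuffer` as a stand-in for the C++ object's memory.

```java
import java.nio.ByteBuffer;
import java.nio.ByteOrder;

final class LayoutSketch {
    // Assumed layout of a hypothetical C++ struct on a typical 64-bit ABI:
    //   struct Point { int32_t x; double y; };
    // x occupies bytes 0..3, bytes 4..7 are padding, y occupies bytes 8..15.
    static final int X_OFFSET = 0;
    static final int Y_OFFSET = 8;
    static final int POINT_SIZE = 16;

    // A direct buffer in native byte order stands in for the C++ object.
    static ByteBuffer allocatePoint() {
        return ByteBuffer.allocateDirect(POINT_SIZE).order(ByteOrder.nativeOrder());
    }

    // Each accessor is an ordinary Java method doing an absolute read or
    // write at a constant offset, so no JNI transition is involved.
    static int getX(ByteBuffer point) { return point.getInt(X_OFFSET); }
    static double getY(ByteBuffer point) { return point.getDouble(Y_OFFSET); }
    static void setX(ByteBuffer point, int x) { point.putInt(X_OFFSET, x); }
    static void setY(ByteBuffer point, double y) { point.putDouble(Y_OFFSET, y); }
}
```

Accessors of this shape are ordinary bytecode, so the JIT compiler is free to inline them into hot loops, which it cannot do across a JNI boundary.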

To remove the need to write C++ data types by hand, fastFFI provides annotations that generate C++ object definitions automatically from a Java interface. This mechanism is called fastFFI mirror.
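To make the direction of this generation concrete, here is a toy generator that derives a C++ struct definition from an annotated Java interface. It is only a sketch of the concept: the `@CxxStruct` annotation, the type mapping, and the output format are assumptions made for this example and are not the fastFFI mirror annotations or its generator. Fields are emitted in alphabetical order because Java reflection does not guarantee declaration order.

```java
import java.lang.annotation.ElementType;
import java.lang.annotation.Retention;
import java.lang.annotation.RetentionPolicy;
import java.lang.annotation.Target;
import java.lang.reflect.Method;
import java.util.Arrays;
import java.util.Comparator;

final class MirrorSketch {
    // Hypothetical annotation naming the C++ struct to generate.
    @Retention(RetentionPolicy.RUNTIME)
    @Target(ElementType.TYPE)
    @interface CxxStruct {
        String name();
    }

    // The Java interface from which the C++ definition is derived.
    @CxxStruct(name = "Point")
    interface Point {
        int x();
        double y();
    }

    // Maps a small set of Java primitive types to C++ types.
    static String cxxType(Class<?> javaType) {
        if (javaType == int.class) return "int32_t";
        if (javaType == long.class) return "int64_t";
        if (javaType == double.class) return "double";
        throw new IllegalArgumentException("unsupported type: " + javaType);
    }

    // Emits one C++ field per accessor method, sorted by name.
    static String generate(Class<?> iface) {
        CxxStruct annotation = iface.getAnnotation(CxxStruct.class);
        if (annotation == null) {
            throw new IllegalArgumentException("missing @CxxStruct on " + iface);
        }
        Method[] methods = Arrays.stream(iface.getDeclaredMethods())
                .filter(m -> !m.isSynthetic())
                .sorted(Comparator.comparing(Method::getName))
                .toArray(Method[]::new);
        StringBuilder out = new StringBuilder("struct " + annotation.name() + " {\n");
        for (Method m : methods) {
            out.append("  ").append(cxxType(m.getReturnType()))
               .append(' ').append(m.getName()).append(";\n");
        }
        return out.append("};\n").toString();
    }
}
```

Calling `MirrorSketch.generate(MirrorSketch.Point.class)` yields a `struct Point` with an `int32_t x` and a `double y` field. A real mirror mechanism also has to generate the matching binding class and JNI accessors.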

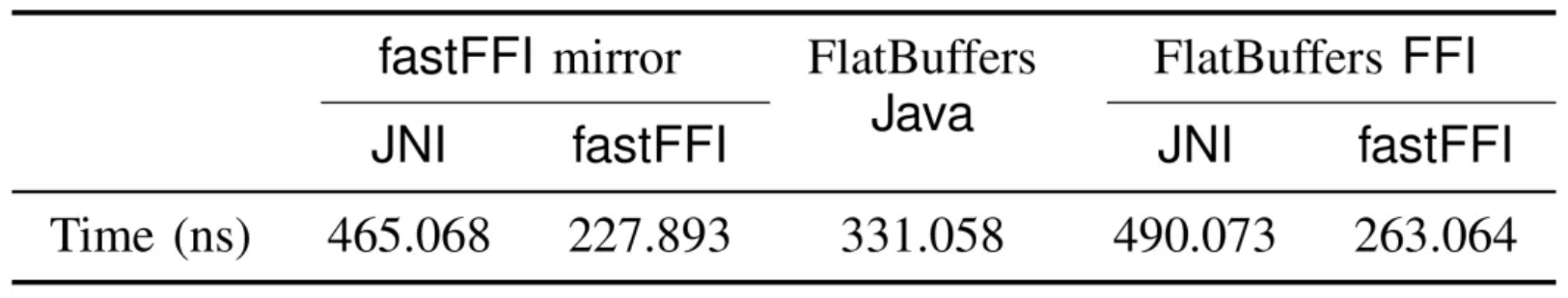

In addition, some cross-platform data formats, such as Protocol Buffers and FlatBuffers, provide both C++ and Java APIs. According to previous evaluations, the C++ parser is faster than the Java parser in most scenarios. We therefore used fastFFI to create a new Java SDK for FlatBuffers, called FlatBuffers FFI. According to the table, using fastFFI mirror (interacting through C++ objects) is the most efficient approach. Note that C++ objects are suitable only for exchanging data between Java and C++ within the same process. If cross-platform interaction is involved, the data may need to be converted to the FlatBuffers format. In that case, the Java SDK obtained by wrapping the C++ API with fastFFI is the most efficient.

The fastFFI project is still evolving, and we look forward to more developers using and discussing it.

Denghui Dong has been working on Java since 2015. In 2017, he joined the Alibaba JVM team, mainly focusing on RAS. He is an OpenJDK committer and the project lead of Eclipse Jifa.
