At the Alibaba Cloud MaxCompute session of the 2017 Computing Conference, held on October 14, Lin Wei, a computing platform architect at Alibaba, delivered a speech titled "MaxCompute 2.0: The Evolution of NewSQL", in which he shared the work done to optimize NewSQL for MaxCompute 2.0.

In the DT (data technology) era, as a growing number of companies move their application data to the cloud, NewSQL has become a hot topic in the industry because it offers an effective way for users to access and store data through APIs. This article discusses the background of Alibaba Cloud MaxCompute's adoption of NewSQL and the key technologies involved.

When it comes to NewSQL, SQL is an unavoidable topic. In the 1980s and 1990s, "data processing" and "databases" generally meant relational databases. A relational database has strong structure and semantics, so anyone who can write a query language can quickly run interactive queries. However, with the rapid growth of the Internet and the massive amounts of data it generates, traditional databases now face a series of challenges.

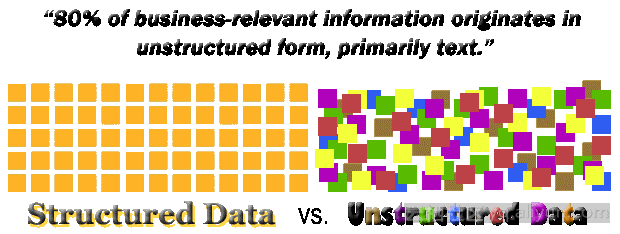

The first challenge is poor horizontal scalability. The second is a lack of flexibility: in an Internet environment, a traditional database struggles to support structured and unstructured data, including voice and video. The third is weak fault tolerance: in a distributed environment, data centers must carry large volumes of data, which demands a high level of fault tolerance. As a result, SQL databases became inadequate for the surge of big data applications, which led to the birth of NoSQL for processing unstructured data.

A NoSQL database is a non-relational database with weak semantics and good flexibility. It can handle unstructured, semi-structured, and structured data, and it scales well horizontally. NoSQL lets users write powerful user-defined functions (UDFs), such as map and reduce functions that process data as key-value pairs, and it offers a flexible API for non-relational computations. Because the nodes involved in a computation are independent of one another, it also has excellent fault tolerance. For big data processing, NoSQL moved far ahead of SQL, which led to a new wave of big data offerings such as Google's BigTable and MapReduce.
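To make the map/reduce UDF model concrete, here is a minimal, single-process word-count sketch. The names `map_fn`, `reduce_fn`, and `run_mapreduce` are hypothetical, and the driver loop only stands in for a distributed runtime, which would run each phase on independent nodes.

```python
from collections import defaultdict
from typing import Iterable, Iterator, Tuple

# Hypothetical user-defined map function: emits (word, 1) for every word in a line.
def map_fn(line: str) -> Iterator[Tuple[str, int]]:
    for word in line.split():
        yield word.lower(), 1

# Hypothetical user-defined reduce function: sums all counts for one key.
def reduce_fn(key: str, values: Iterable[int]) -> Tuple[str, int]:
    return key, sum(values)

def run_mapreduce(lines: Iterable[str]) -> dict:
    """Single-process stand-in for a distributed MapReduce runtime."""
    groups = defaultdict(list)
    # Map phase: apply the map UDF to every input record.
    for line in lines:
        for key, value in map_fn(line):
            groups[key].append(value)  # "shuffle": group values by key
    # Reduce phase: apply the reduce UDF to each key group.
    return dict(reduce_fn(k, vs) for k, vs in groups.items())

counts = run_mapreduce(["big data big compute", "Big data"])
print(counts)  # {'big': 3, 'data': 2, 'compute': 1}
```

The programmer has spelled out how the data moves (emit pairs, group by key, sum), which is exactly the kind of detail the relational model hides.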

Alibaba Cloud has now launched its own version of NewSQL, aiming to solve problems that neither SQL nor NoSQL can solve on its own.

The idea behind NewSQL is to return to the relational model. With a NoSQL database, programmers must explicitly define map and reduce functions, value types, and many other parameters, so it is hard for anyone to tell what a job does without reading every coding detail. Returning to the relational model lets programmers describe what to do instead of how to do it, so anyone reading a NewSQL query can immediately understand its purpose.
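To illustrate "what" versus "how", the sketch below computes total salary per department twice: once with a hand-written grouping loop, and once as a SQL query. SQLite (Python's built-in `sqlite3` module) is only a stand-in for a declarative engine here, not MaxCompute, and the `emp` table and its rows are hypothetical.

```python
import sqlite3
from collections import defaultdict

# Hypothetical sample data: (employee name, department number, salary).
EMP = [("alice", 10, 5000), ("bob", 10, 4000), ("carol", 20, 6000)]

def total_salary_imperative(rows):
    """'How': the programmer spells out grouping and summing step by step."""
    totals = defaultdict(int)
    for _name, deptno, salary in rows:
        totals[deptno] += salary
    return sorted(totals.items())

def total_salary_declarative(rows):
    """'What': the query states the desired result; the engine picks the plan."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE emp (ename TEXT, deptno INTEGER, salary INTEGER)")
    conn.executemany("INSERT INTO emp VALUES (?, ?, ?)", rows)
    result = conn.execute(
        "SELECT deptno, SUM(salary) FROM emp GROUP BY deptno ORDER BY deptno"
    ).fetchall()
    conn.close()
    return result

print(total_salary_imperative(EMP))   # [(10, 9000), (20, 6000)]
print(total_salary_declarative(EMP))  # [(10, 9000), (20, 6000)]
```

Both return the same rows, but only the SQL version leaves the engine free to decide how to group, for example by hashing or by sorting.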

NewSQL depends on strong system optimization. With a powerful optimizer, NewSQL can combine a variety of functions and adaptively choose an efficient physical execution plan. At the same time, it must keep NoSQL's distinctive strengths: the ability to process unstructured data, a rich collection of UDFs, and distributed execution.

If users have to write efficient execution plans by hand, as they do with NoSQL, the following problems arise. First, programmers cannot notice changes in the data and environment in time, which easily leads to data skew. Second, as computations grow more complex and span barriers both upstream and downstream, programmers cannot quickly work out the best execution plan. Third, sharing knowledge about computations is hindered by the lack of a high-level language with strong semantics. In an environment where resources are shared, it is also hard for a single programmer to see the big picture. Hence, we need to turn to NewSQL, where programmers describe what they want done and the system produces an efficient execution plan through optimization.
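A hand-written plan fixes its partitioning up front, so it cannot react when the data changes. The sketch below, using hypothetical function names and made-up keys, shows how a single hot key under hash partitioning sends most rows to one worker, which is the data skew problem described above.

```python
import zlib
from collections import Counter

def hash_partition(key: str, num_partitions: int) -> int:
    # crc32 is deterministic across runs, unlike Python's salted str hash().
    return zlib.crc32(key.encode("utf-8")) % num_partitions

def partition_loads(keys, num_partitions: int) -> list:
    """Count how many rows each partition (worker) would receive."""
    loads = Counter(hash_partition(k, num_partitions) for k in keys)
    return [loads.get(p, 0) for p in range(num_partitions)]

# Hypothetical input: one "hot" key accounts for 900 of 1,000 rows.
keys = ["hot_user"] * 900 + [f"user_{i}" for i in range(100)]
loads = partition_loads(keys, num_partitions=4)
print(loads, "max/avg =", max(loads) / (len(keys) / 4))
```

However the 100 other keys spread out, the partition that owns `hot_user` receives at least 900 rows, so one worker does over 3.6 times the average load. An optimizer that sees such statistics at run time can react, for example by splitting the hot key; a fixed hand-written plan cannot.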

NewSQL shows great adaptability in the three scenarios shown in the figure. The goal is to let programmers describe their tasks at a higher level. Because high-level semantics alone lack flexibility, UDFs are used to balance them, while the system still delivers high-performance, intelligent, and adaptive optimization.

In fact, the whole industry is moving in this direction. For instance, Microsoft provides Scope on top of its Dryad engine to perform optimization. Databricks offers Spark SQL on top of Spark to accelerate iteration. Hadoop has evolved from MapReduce to Hive, and then to Hive 2.0. Google is promoting Spanner, which supports SQL semantics, in addition to MapReduce. Alibaba Cloud is moving from MaxCompute 1.0 to MaxCompute 2.0, where system optimization comes into play.

Striking a balance between SQL and NoSQL requires several key technologies.

First, the system must be able to handle unstructured, semi-structured, and structured data.

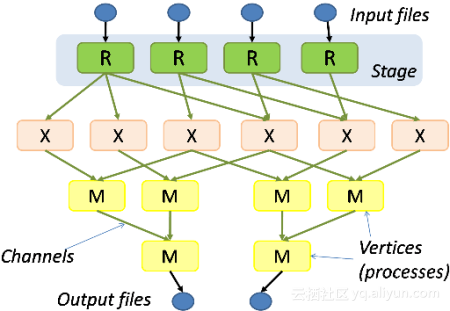

In an Internet environment, users need to provide serialize and deserialize functions that dynamically convert unstructured data into structured data, so that structured records can be extracted for computation. To overcome the limitations of traditional databases, the system must support a richer variety of UDFs and allow better interoperation between programming languages. Users also need to define partitioning to connect upstream and downstream stages, and to connect the inputs and outputs with other Internet applications.
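As an illustration of the serialize/deserialize pair, the sketch below extracts structured rows from unstructured access-log lines and writes them back out as CSV text. The log format, the `AccessRecord` type, and the `deserialize`/`serialize` signatures are all hypothetical; they are not MaxCompute's extractor interface.

```python
from dataclasses import dataclass
from typing import Iterator, Optional

@dataclass(frozen=True)
class AccessRecord:
    """Structured row extracted from an unstructured log line."""
    ip: str
    method: str
    path: str
    status: int

def deserialize(line: str) -> Optional[AccessRecord]:
    """Hypothetical deserializer: parse 'ip method path status', skip bad lines."""
    parts = line.split()
    if len(parts) != 4 or not parts[3].isdigit():
        return None  # malformed input is dropped rather than failing the job
    ip, method, path, status = parts
    return AccessRecord(ip, method, path, int(status))

def serialize(record: AccessRecord) -> str:
    """Hypothetical serializer: write a structured row back out as CSV text."""
    return f"{record.ip},{record.method},{record.path},{record.status}"

def extract(lines) -> Iterator[AccessRecord]:
    for line in lines:
        record = deserialize(line)
        if record is not None:
            yield record

raw = ["10.0.0.1 GET /index.html 200", "garbage", "10.0.0.2 POST /api 500"]
rows = list(extract(raw))
print([serialize(r) for r in rows])
# ['10.0.0.1,GET,/index.html,200', '10.0.0.2,POST,/api,500']
```

Once `deserialize` has produced typed rows, the rest of the job can be expressed relationally, and the optimizer can reason about columns such as `status`.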

The execution model must also overcome the limitations of MapReduce, so that the system can support loops and iteration on top of DAG execution. Asymmetric graphs are also needed to support complex physical execution plans. Only then can the optimizer produce efficient execution plans and the language become complete.
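To show why a DAG is more general than a fixed map-then-reduce pipeline, the sketch below models a hypothetical physical plan in which one stage fans out into two branches that later rejoin, a shape a single map/reduce pair cannot express. It uses Python's standard `graphlib.TopologicalSorter` (Python 3.9+) to group stages into waves that could be scheduled together; the stage names are made up.

```python
from graphlib import TopologicalSorter  # Python 3.9+

# Hypothetical physical plan: each stage maps to the stages it depends on.
# "filter_recent" fans out to two aggregations that rejoin in "join_stats".
plan = {
    "scan_orders": set(),
    "filter_recent": {"scan_orders"},
    "agg_by_user": {"filter_recent"},
    "agg_by_item": {"filter_recent"},
    "join_stats": {"agg_by_user", "agg_by_item"},
    "write_output": {"join_stats"},
}

sorter = TopologicalSorter(plan)
sorter.prepare()
waves = []
while sorter.is_active():
    ready = sorted(sorter.get_ready())  # stages whose inputs are all complete
    waves.append(ready)
    sorter.done(*ready)                 # pretend the whole wave finished

print(waves)
# [['scan_orders'], ['filter_recent'], ['agg_by_item', 'agg_by_user'],
#  ['join_stats'], ['write_output']]
```

The two aggregation stages land in the same wave, so a scheduler could run them in parallel on different workers.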

A complete collection of UDFs lets the relational model be expressed as a functional language that can build different DAG execution plans. To support flexible interaction at the language level, functions such as serialize/deserialize, join, aggregate, and processor (supporting hash, range, and direct hash) are provided.
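Aggregate functions benefit most from being explicit to the system: if a user-defined aggregate exposes separate accumulate and merge steps, the engine can pre-aggregate on each worker and shuffle only small partial states. The sketch below uses a hypothetical interface (`new_state`, `accumulate`, `merge`, `finalize`) to compute a mean; it is not MaxCompute's actual UDAF API.

```python
from dataclasses import dataclass
from typing import Iterable

@dataclass
class MeanState:
    """Partial aggregation state: enough to merge results from many workers."""
    total: float = 0.0
    count: int = 0

class MeanUDAF:
    """Hypothetical user-defined aggregate with accumulate/merge/finalize steps."""

    def new_state(self) -> MeanState:
        return MeanState()

    def accumulate(self, state: MeanState, value: float) -> None:
        state.total += value
        state.count += 1

    def merge(self, left: MeanState, right: MeanState) -> MeanState:
        return MeanState(left.total + right.total, left.count + right.count)

    def finalize(self, state: MeanState) -> float:
        return state.total / state.count if state.count else float("nan")

def aggregate_partition(udaf: MeanUDAF, values: Iterable[float]) -> MeanState:
    """Runs on one worker, over that worker's partition only."""
    state = udaf.new_state()
    for v in values:
        udaf.accumulate(state, v)
    return state

udaf = MeanUDAF()
# Each worker aggregates its own partition locally before anything is shuffled.
partials = [aggregate_partition(udaf, p) for p in ([1, 2, 3], [4, 5])]
merged = partials[0]
for p in partials[1:]:
    merged = udaf.merge(merged, p)
print(udaf.finalize(merged))  # 3.0
```

Because `merge` is declared separately, the optimizer is free to decide where partial aggregation happens, instead of shipping every raw value to a single reducer.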

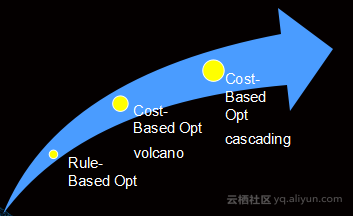

A powerful optimizer can handle everything from a single statement to stored procedures containing thousands of statements. NoSQL uses a functional programming style that can build rather complex graphs. With traditional databases, however, statements are submitted one at a time, which makes it hard to share work between them. A powerful optimizer lets users write more complex stored procedures with many queries, which in turn produces a huge logical execution plan with more room for optimization. A more advanced optimizer is therefore needed, one that moves from rule-based optimization to cost-based optimization.
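The difference between a fixed rule and cost-based optimization can be sketched with join ordering. The toy optimizer below enumerates left-deep join orders and picks the one with the smallest total intermediate result size. The table names, row counts, selectivities, and the cost formula itself are hypothetical simplifications; real cost models also account for CPU, I/O, and network.

```python
from itertools import permutations

# Hypothetical statistics an optimizer might collect.
ROWS = {"orders": 1_000_000, "users": 10_000, "regions": 10}
# Join selectivity between table pairs; pairs not listed have no join
# predicate, so they form a cross product (selectivity 1.0).
SELECTIVITY = {
    frozenset({"orders", "users"}): 1 / 10_000,
    frozenset({"users", "regions"}): 1 / 10,
}

def plan_cost(order):
    """Cost of a left-deep join order = sum of intermediate result sizes."""
    joined = [order[0]]
    size = ROWS[order[0]]
    cost = 0.0
    for table in order[1:]:
        sel = 1.0
        for prev in joined:
            sel *= SELECTIVITY.get(frozenset({prev, table}), 1.0)
        size = size * ROWS[table] * sel  # estimated rows after this join
        cost += size
        joined.append(table)
    return cost

def cost_based_order(tables):
    """Exhaustively pick the cheapest order (fine for a handful of tables)."""
    return min(permutations(tables), key=plan_cost)

fixed_order = ("orders", "regions", "users")  # a fixed order with no statistics
best = cost_based_order(ROWS)
print(fixed_order, plan_cost(fixed_order))
print(best, plan_cost(best))
```

With these made-up statistics, the fixed order builds a 10-million-row cross product first, while the cost-based choice joins `users` with the tiny `regions` table first and touches the large `orders` table last.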

In addition, the optimizer must take the distributed setting into account. In a non-SQL scenario, for example, the many UDF extensions, whether they concern data, users, or computation, can be exploited to produce better execution plans.

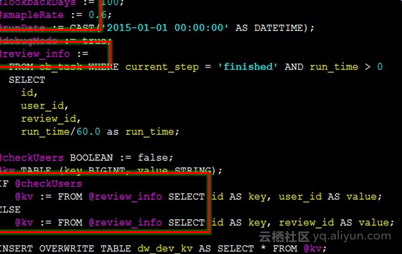

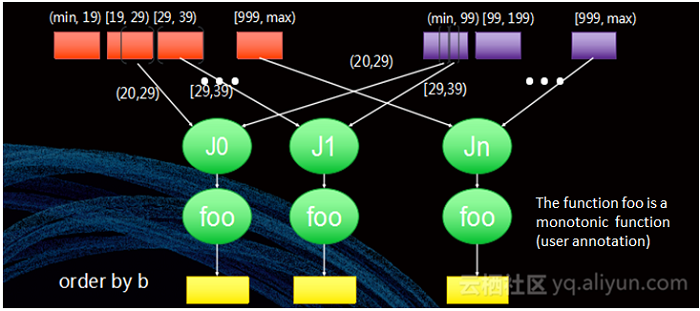

The following figure shows an interesting example of how an optimizer and UDFs work together.

The left side shows what happens when the optimizer does not understand the UDFs: it cannot sense the UDFs' output properties, so optimization is unsatisfactory and the physical execution plan is inefficient. The right side shows a healthy interaction between the UDFs and the optimizer: the optimizer learns the UDF properties from the user and achieves global optimization. This is a shift from black-box optimization to gray-box optimization.

In the use case shown in the figure, the cost of distributed execution is dramatically reduced, and both flexibility and optimization are achieved. In practice, the following UDF properties are worth considering:

A. Is the UDF row-wise? Is it monotonic?

B. Does it leave some columns unchanged (for example, pass-through columns)?

C. Does it keep the "clustered by" columns unchanged? Does it keep the "sorted by" columns unchanged?

D. What is its selectivity, and how is its output data distributed?
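Properties B and C are the ones that let an optimizer skip expensive data movement. The sketch below plans "input clustered by a key, then a UDF, then GROUP BY on that key", and inserts a shuffle only when the UDF has not declared that it preserves the clustering column. `UdfProperties`, `parse_ua`, and `user_id` are hypothetical names, and the rule is a deliberately conservative simplification.

```python
from dataclasses import dataclass, field
from typing import FrozenSet, List

@dataclass(frozen=True)
class UdfProperties:
    """Hypothetical properties a user could declare for a UDF."""
    row_wise: bool = False
    pass_through: FrozenSet[str] = frozenset()  # columns copied unchanged

@dataclass
class Plan:
    steps: List[str] = field(default_factory=list)

def plan_udf_then_group_by(clustered_by: str, udf: str, props: UdfProperties,
                           group_key: str) -> Plan:
    """Plan 'input (clustered by X) -> UDF -> GROUP BY key'."""
    plan = Plan([f"scan (clustered by {clustered_by})", f"apply {udf}"])
    # Conservative rule: clustering survives only if the UDF works row by row
    # and copies the clustering column through unchanged.
    clustering_kept = props.row_wise and clustered_by in props.pass_through
    if not (clustering_kept and group_key == clustered_by):
        plan.steps.append(f"shuffle by {group_key}")  # expensive network step
    plan.steps.append(f"aggregate by {group_key}")
    return plan

# Black box: nothing is known about the UDF, so the optimizer must reshuffle.
black_box = plan_udf_then_group_by("user_id", "parse_ua", UdfProperties(), "user_id")
# Gray box: the user declares that parse_ua is row-wise and passes user_id through.
gray_box = plan_udf_then_group_by(
    "user_id", "parse_ua",
    UdfProperties(row_wise=True, pass_through=frozenset({"user_id"})), "user_id")
print(black_box.steps)
print(gray_box.steps)
```

The only difference between the two plans is the declared metadata, yet the gray-box plan drops an entire shuffle stage.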

Optimization in a distributed scenario works differently from optimization in a stand-alone SQL database. Because of the large number of NoSQL-style UDFs and the many dynamic conditions in a distributed environment (such as the topology used to assign workers and the distribution of failure regions), balancing run-time and compile-time optimization requires a powerful engine that can optimize at run time. Run-time optimization includes determining the number of partitions and their boundaries, selecting the join method, and choosing an efficient data shuffle approach.
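Here is a minimal sketch of the three run-time decisions listed above, made from statistics observed while the job runs rather than guessed at compile time. The thresholds and function names are hypothetical, and broadcast versus shuffle hash join are common strategies in distributed engines generally, not necessarily the exact methods MaxCompute chooses between.

```python
import math
from typing import List, Sequence

# Hypothetical tuning knobs; a real engine would derive these from its config.
TARGET_BYTES_PER_PARTITION = 256 * 1024 * 1024
BROADCAST_THRESHOLD_BYTES = 32 * 1024 * 1024

def choose_num_partitions(observed_bytes: int) -> int:
    """Pick a partition count from the data size actually observed upstream."""
    return max(1, math.ceil(observed_bytes / TARGET_BYTES_PER_PARTITION))

def choose_range_boundaries(sample: Sequence[int], num_partitions: int) -> List[int]:
    """Derive range-partition boundaries from quantiles of a runtime sample."""
    ordered = sorted(sample)
    return [ordered[len(ordered) * i // num_partitions] for i in range(1, num_partitions)]

def choose_join_method(left_bytes: int, right_bytes: int) -> str:
    """Broadcast the smaller side if it is small enough, else shuffle both sides."""
    if min(left_bytes, right_bytes) <= BROADCAST_THRESHOLD_BYTES:
        return "broadcast_hash_join"
    return "shuffle_hash_join"

n = choose_num_partitions(observed_bytes=1_000 * 1024 * 1024)
print(n)                                              # 4
print(choose_range_boundaries(range(100), n))         # [25, 50, 75]
print(choose_join_method(10 * 1024**3, 8 * 1024**2))  # broadcast_hash_join
```

Deferring these choices until the upstream stage has produced real sizes and samples is what lets the plan adapt to data that a compile-time estimate would have gotten wrong.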

NewSQL aims to help developers write programs more efficiently and compute interactively by solving problems that neither NoSQL nor SQL can solve on its own. With its powerful system optimization capability, NewSQL is expected to be highly available, interpretable, high-performance, and adaptive, and to drive the growth of the entire MaxCompute ecosystem.

