By Taosu

The four major families of NoSQL databases:

Aerospike (AS) is a distributed, scalable key-value NoSQL database designed for T-level big data and high-concurrency structured data storage. It utilizes a hybrid architecture, with indexes stored in memory while data can be stored on either Mechanical Hard Disk Drive (HDD) or Solid State Drive (SSD). This enables read and write operations within microseconds, with 99% of responses achieved within 1 millisecond.

| Aerospike | Redis | |

|---|---|---|

| Type | NoSQL database | Cache |

| Number of Thread | Multiple threads | Single thread |

| Data Sharding | Automatic processing (equivalent to sharding) | A provided sharding algorithm to balance various partition data |

| Data Expansion | Dynamically increase data volume to balance traffic | Shutdown is required |

| Data Synchronization | Failover can be completed transparently after setting the replication factor (RF) | Manual failover and data synchronization |

| Carrier | Index are stored in memory and data are stored in SSD | Memory |

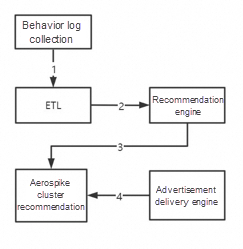

Aerospike, as a large-capacity NoSQL solution, is suitable for scenarios where large capacity is required and queries per second (QPS) is relatively low. It is mainly used in the advertising industry. The personalized recommendation advertisement is based on the knowledge of the unique preferences and habits of customers. Therefore, it makes accurate predictions or guidance on the purchase needs of customers and presents advertisements that are highly consistent with their needs in the right place, at the right time, and in the appropriate form to promote consumption.

ETL Data Warehouse Technology: Extract, Transform, and Load

HDFS and HBASE are used to analyze logs offline and in real time. Then, the tag results of user profiles are stored in the high-performance NoSQL database Aerospike, and the data is backed up to a remote data center. Through the decision engine (delivery engine), the frontend advertisement delivery request reads the corresponding user profile data from the user profile database, and then bids according to the bidding algorithm. After the bidding is successful, the advertisement can be displayed. As for what kind of advertisement is displayed to users, it is determined by the personalized recommendation advertisement mentioned above.

| Aerospike | Mysql | |

|---|---|---|

| Database Name | Namespace | Database |

| Table Name | Set | Table |

| Record | Bin | Column |

| Field | Record | Row |

| Index | key, pk, and kv | pk |

Neo4j is a Java-based open-source NoSQL graph database, that uniquely stores structured data in graphs rather than traditional tables. It functions as an embedded, disk-based Java persistence engine, offering complete transactional capabilities. The program data is structured within a flexible network framework, rather than strictly within tables, while retaining the benefits of a fully transactional and enterprise-level database.

Based on a graph data structure, Neo4j encompasses nodes (entities identified by globally unique IDs) and edges (relationships connecting two nodes). In essence, a knowledge graph forms a relationship network, connecting diverse sets of information and providing an analytical perspective oriented towards relationships.

Graph database refers to a database that stores and queries data in the form of graph data structure. In a relationship graph, relationships are organized in a graph structure, so they are suitable for storage in the graph database.

Advantages:

| S.No. | Function | Description |

|---|---|---|

| 1 | STARTNODE | It is used to know the starting node of the relationship. |

| 2 | ENDNODE | It is used to know the ending node of the relationship. |

| 3 | ID | It is used to know the ID of the relationship. |

| 4 | TYPE | It is used to know the TYPE of a relationship in the string representation. |

// Query the three-level relationship nodes as follows: "with" can use the previous query result as the condition of the following query match (na:Person)-[re]-(nb:Person) where na.name="林婉儿" WITH na,re,nb match (nb:Person)- [re2:Friends]->(nc:Person) return na,re,nb,re2,nc// Directly concatenate relational nodes to query match data=(na:Person{name:"范闲"})-[re]->(nb:Person)-[re2]->(nc:Person) return data // It is cumbersome to use the above method by using deep operators. For variable number of relationships -> nodes, use -[:TYPE*minHops..maxHops]-。match data=(na:Person{name:"范闲"})-[*1..2]-(nb:Person) return dataMongoDB is a database based on distributed file storage. It has the richest features and is most like a relational database among all the non-relational databases. In the case of high load, server performance can be guaranteed by adding more nodes. Written in C++, it can provide scalable, high-performance, and easy-to-deploy data storage solutions for WEB applications.

| Mysql | MongoDB |

|---|---|

| database | Database |

| Table | Collection |

| Row | BSON document |

| Column | Field |

| Index (unique index and primary key index) | Index (geo-location index, full-text index, and hash index supported) |

| Join primary and foreign keys | Embedded document |

| Primary key (specify 1 to N columns as primary keys) | Primary key (specify_id field as the primary key) |

{key:value,key2:value2} is similar to JSON. It is a binary storage format and supports embedded document objects and array objects. However, BSON has some data types that JSON does not have. For example, value includes string, double, array, and date. BSON can be used as a storage form for network data exchange. It is of high flexibility, but the space utilization is not ideal.

BSON has three characteristics: lightweight, traversability, and high efficiency.

/* The query find() method can specify multiple keys and each key is separated with a comma. */db.collection.find({key1:value1, key2:value2}).pretty()/* Update $set: set the field value $unset: delete the specified field $inc: perform auto-increment on the modified value */db.collection.update({ where },{ $set :{field name: value }},{ mti true })/* Delete justOne : if it is set to true, only one document will be deleted. The default false is to delete all documents matching the condition */db.collection.remove({ where }, {justOne: <boolean>, writeConcern: <receipt> } )For a hierarchical data structure, it is very difficult to use a flat, table-like structure to query and save data.

For tens of millions of document objects and nearly 10 GB of data, the query on the indexed ID will not be slower than MySQL, while the query on the non-indexed fields will win in an all-around way. MySQL is not capable of queries on any field under a large number of data, while the query performance of MongoDB is amazing. The write performance is also very satisfactory. For the level of millions of data write, MongoDB takes less than 10 minutes.

MySQL 8.0

| OSS | Self-Developed Cloud Storage | |

|---|---|---|

| Reliability | The availability is no less than 99.995 percent. The data durability is no less than 99.9999999999 percent (the number of 9 is twelve). |

It is prone to errors due to low hardware reliability. If a bad sector appears, the data loss may be irreversible. Manual data restoration can be complex, time-consuming, and labor-intensive. |

| Security | Server-side encryption, client-side encryption, hotlink protection, and IP address blacklist and whitelist are supported. It applies a resource isolation mechanism for multiple users and supports geo-disaster recovery. | Additional cleansing devices and blackhole policy-related services are required. It requires a separate security mechanism. |

| Cost | It supports multi-line Border Gateway Protocol (BGP) backbone networks without bandwidth limits. Upstream traffic is free of charge. Investments in hiring O&M personnel or managing databases are not required. | The bandwidth is limited due to slow single-line or double-line access to backbone networks. You need to manually expand bandwidth capacity during peak hours. It requires dedicated O&M personnel, which adds to costs. |

You can use Object Storage Service (OSS) and Alibaba Cloud Content Delivery Network (CDN) together to optimize the scenario that accelerates the static and hot object downloads, that is, a large number of users in the same region download the same static object at the same time. You can configure your OSS Bucket as the origin and use CDN to publish the data in the Bucket to edge nodes. When a large number of users frequently access the same object, the users can retrieve the cached data from edge nodes. This shortens the response time.

FastDFS is an open-source lightweight distributed file system (DFS). It is used to manage files including file storing, file syncing, and file accessing (file upload and download), and solves the problems of mass storage and load balancing. FastDFS makes it easy to build a high-performance file server cluster to provide services such as file upload and download. For example, photo album websites and video websites.

Scalability: It supports horizontal scaling and dynamic scaling.

High availability: It refers to the availability of the entire file system and the integrity and consistency of data.

Elastic storage: You can add or delete resources in the storage pool based on your business requirements without interrupting the system.

| Metrics | Suitable Type | File Distribution | Complexity | FUSE | POSIX | Backup Policy | Communication Protocol Interface | Community Support | Programming Language |

|---|---|---|---|---|---|---|---|---|---|

| FastDFS | 4KB-500MB | Small files are merged and stored without sharding | Simple | Not supported | Not supported | In-group redundant backup | API http | Domestic user groups | C |

| TFS | All files | Small files are merged and sharded by block organizations | Complex | Not supported | Not supported | Blocks store multiple backups with primary and secondary disaster recovery | API http | Few supports | C++ |

| MFS | Files greater than 64 KB | The files are sharded for storage | Complex | Supported | Supported | Multi-point backup and dynamic redundancy | Use FUSE | Many supports | Perl |

| HDFS | Large files | Large files are stored by shards and blocks | Simple | Supported | Supported | Multiple replicas | Native APIs | Many supports | Java |

| Ceph | Object file blocks | Object-based Storage Device (OSD) with one primary file and multiple secondary files | Complex | Supported | Supported | Multiple replicas | Native APIs | Few supports | C++ |

| MogileFS | Massive small pictures | Files are stored without sharding | Complex | Supported | Not supported | Dynamic redundancy | HTTP native APIs | Few documentation supports | Perl |

Features

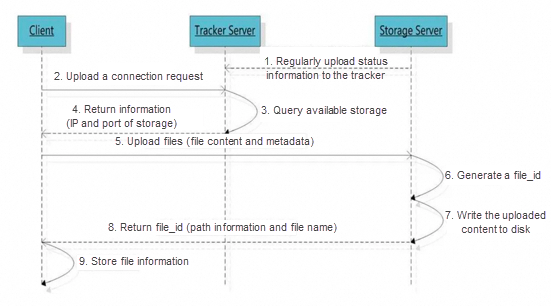

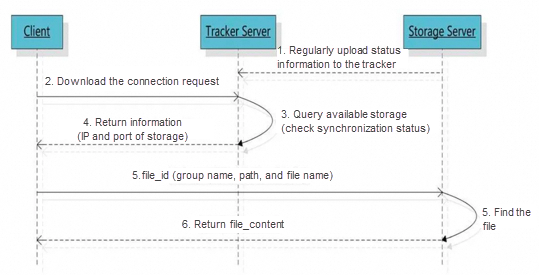

Data interacts with the tracker server or storage nodes by using the TCP/IP protocol over a proprietary interface.

The tracker server is used for load balancing and scheduling. By tracker server, the address where files are uploaded can be found according to the policy. Tracker plays a load balancing role in accessing.

The storage server is used for file storage. Files uploaded by clients are finally stored on the storage server. The storage server does not implement its own file system. Instead, it manages files by using the file system of the operating system. Servers on the storage nodes can be added or taken offline at any time without affecting online services.

The size and MD5 hash value of the file involved in the resumable upload does not change. The process of resumable upload is similar to that of uploading a file. First, locate the source storage, finish complete or partial upload. Then, use binary logs to synchronize server files in the same group.

Configuration files: tracker.conf and storage.conf

// FastDFS uses the memory pool. // FastDFS 5.04 uses incremental pre-allocation. Tracker pre-allocates 1,024 at a time and storage pre-allocates 256 at a time. max_connections=10240 // Set the max_connections to a relatively large value based on actual needs, such as 10,240 or larger. // At the same time, you need to increase the maximum number of files that can be opened by a process. vi /etc/security/limit .conf. It takes effect after restarting the system. * soft nofile 65535 * hard nofile 65535.work_threads =4 // Note: To avoid the overhead of CPU context switching and unnecessary resource consumption, we do not recommend that you set this parameter to a too-large number. // The formula is: work_threads + (reader_threads + writer_threads) = Number of CPUs// For the single disk mounting method, set the disk reader and writer threads to 1 respectively // If the disks are used to create a Redundant Array of Independent Disks (RAID), the number of read and write threads needs to be increased as appropriate to maximize the disk performance disk_rw_separated: whether disk read and write are separated disk_reader_threads: number of read threads of a single disk disk_writer_threads: number of write threads of a single diskThe solution to avoiding repeated file upload: Calculate the corresponding MD5 hash value of the file after the file is uploaded and then store it in MySQL. When you add a file, compare the MD5 hash value of the file with the previous information stored in MySQL. DigestUtils.md5DigestAsHex(bytes).

Transaction characteristics: atomicity, consistency, isolation, and durability (ACID)

Atomicity: A transaction is the smallest execution unit and cannot be split. The atomicity of transactions ensures that the action is either completed in full or not executed at all.

Consistency: Before and after the transaction is executed, the data remains consistent, and the read results of multiple transactions on the same data are the same.

Isolation: During the concurrent access to the database, the transactions of an account are not disturbed by the other transactions. The database of each concurrent transaction is isolated.

Durability: After a transaction is committed, the change it brings to the data in the database is durable. Even if the database fails, it should not have any impact.

Implementation guarantee: The InnoDB storage engine of MySQL uses redo logs to ensure consistency and durability, rollback logs to ensure atomicity, and various locks to ensure isolation.

Read uncommitted: The lowest isolation level that allows you to read uncommitted data changes. This may result in dirty reads, phantom reads, or non-repeatable reads.

Read committed (RC): It allows you to read data that has been committed by concurrent transactions. Dirty reads can be blocked. However, phantom reads or non-repeatable reads may still appear.

Repeatable read (RR): Multiple reading results of the same field are consistent, except that the data is modified by its transaction. Dirty reads and non-repeatable reads can be blocked. However, phantom reads still appear.

Serializable: The highest isolation level that is fully compliant with the ACID isolation level. All transactions are executed one by one in turn so that transactions cannot interfere with each other.

| Isolation Level | Concurrency Issues |

|---|---|

| Read uncommitted | Possibly result in dirty reads, phantom reads, or non-repeatable reads |

| Read committed | Possibly result in phantom or non-repeatable reads |

| Repeatable read | Possibly result in phantom reads |

| Serializable | No interference |

The multiple reads of the same field are consistent, except that the data is modified by its transaction.

RR may result in phantom reads. To ensure absolute security, you can only set the isolation level to serializable. In this way, all transactions can only be executed sequentially, which will not be affected by concurrency, but the performance will be much lower.

The second method is to use multi-version concurrency control (MVCC) to solve the problem of snapshot phantom reads (such as simple SELECT). It does not read the latest data. By maintaining a field as a version, only one person can update one version each time.

select id from table_xx where id = ? and version = Vupdate id from table_xx where id = ? and version = V+1The third method is to use GapLock + Next-KeyLock to solve the current problem of phantom reads if you need to read the latest data.

select id from table_xx where id > 100 for update;select id from table_xx where id > 100 lock in share mode;Transaction isolation levels RC (read commit) and RR (repeatable read) are implemented based on MVCC (multi-version concurrency control).

| RC | RR | |

|---|---|---|

| Implementation | Multiple query statements create different ReadViews | Only one version of ReadView is required |

| Granularity | Statement-level read consistency | Transaction-level read consistency |

| Accuracy | Data at the time point of each statement execution | Data at the time point of the first statement execution |

InnoDB locks according to different classifications:

Shared and exclusive locks: row-level locks

Intention locks: table-level locks

Gap locks: to lock an interval

Record locks: to lock a row record

It is the lock with the largest lock granularity in MySQL. It locks the entire table that is being operated. It is easy to implement, consumes few resources, and locks quickly without deadlocks. It has the largest lock granularity, the highest probability of triggering lock conflicts, and the lowest concurrency. Both MyISAM and InnoDB engines support table-level locks.

It is the lock with the smallest lock granularity in MySQL. It only locks the row that is being operated. Row-level locks can greatly reduce conflicts in database operations. It has the smallest lock granularity and high concurrency, but it also has the largest lock overhead. It locks slowly and deadlocks occur. InnoDB supports the following types of row-level locks:

Record locks: a record lock is a lock on an index record, locking rows that meet the conditions. Other transactions cannot modify or delete locked items.

Gap locks: a gap lock is a lock on a gap between index records, locking the range of records, excluding the index itself. Other transactions cannot insert data into the locked range.

Next-key locks: A next-key lock locks both the index record itself and the range of records. It is the combination of a record lock and a gap lock. It can solve the problem of phantom reads.

InnoDB supports multiple granularity locking, which allows row-level locks and table-level locks to coexist, and intention locks are one of these table locks.

Shared locks (S) allow the lock holder to read rows. When locking, add S locks to all current and child nodes, and add intention shared (IS) locks to all parent nodes until the table header.

Exclusive locks (X) allow the lock holder to modify rows. When locking, add X locks to all current and child nodes, and add intention exclusive (IX) locks to all parent nodes until the table header.

IS locks (intention shared locks): The transaction intends to acquire an S lock on certain rows in the table.

IX locks (intention exclusive locks): The transaction intends to acquire an X lock on certain rows in the table.

| Mutual Exclusion | S | X | IS | IX |

|---|---|---|---|---|

| S | ✅ | ❌ | ✅ | ❌ |

| X | ❌ | ❌ | ❌ | ❌ |

| IS | ✅ | ❌ | ✅ | ✅ |

| IX | ❌ | ❌ | ✅ | ✅ |

MVCC is a multi-version concurrency control mechanism, which can reduce the system overhead by seeing the expected data through the visibility of transactions. (RC and RR level work)

InnoDB MVCC saves the system version number which can be understood as the transaction ID after each row of records. Each time a new transaction is started, the system version number will be automatically incremented, and the system version number at the beginning of the transaction will be used as the transaction ID. This ensures that the rows read by the transaction have existed before the start of the transaction or have been inserted or modified by the transaction itself to prevent phantom reads.

The reason is that only after the transaction is committed will the creation and deletion versions of MVCC be generated. Objectively, MySQL uses a whole implementation of optimistic locks, that is, each line has a version number, and whether the saving is successful is determined according to the version number. The snapshots used by InnoDB MVCC are stored in undo logs which use rollback pointers to connect all snapshots of a data row.

In the InnoDB engine table, there are two necessary hidden columns in its clustered index record:

This ID is used to store the transaction ID each time a clustered index record is modified.

Each time a clustered index record is modified, the previous version is written to the undo log. This roll_pointer saves a pointer that points to the location of the previous version of this clustered index record. It is used to obtain the record information of the previous version. (Note: The undo log of the insert operation does not have this attribute because it does not have a previous version.)

Each modification is recorded in the version chain. SELECT allows you to retrieve records from the version chain. This implements read-write and write-read concurrent execution and improves system performance.

MyISAM: It supports table locks and is suitable for read-intensive scenarios. It does not support foreign keys or transactions. Indexes and data are in different files.

InnoDB: It supports row and table locks. The default is row locks. It is suitable for concurrent scenarios. It supports foreign keys and transactions. Indexes and data are in the same file.

The hash index uses the value of the index column to calculate the hashCode of the value and then stores the physical location of the row data where the value is located in the corresponding position of the hashCode. With the hash algorithm, the access can be very fast. However, a value can only correspond to one hashCode, and it is a hash distribution method, so the hash index does not support range search or sorting.

The B+tree has lower disk read-write costs, fewer queries, and stabler query efficiency, which is conducive to database scanning.

The B+tree is an upgraded version of the B-tree. The B+tree only uses leaf nodes to store data and the remaining nodes are used for indexes. All index nodes can be added to the memory to increase query efficiency. Leaf nodes can be used as doubly linked lists to improve range search efficiency and increase the index range.

When large-scale data is stored, the red-black tree is often inefficient because the excessive tree depth leads to frequent disk I/O reads and writes. Therefore, we can try to improve the tree structure to reduce its height. B-tree and B+tree can have multiple children from tens to thousands, reducing the height of the tree.

Disk pre-read principle: It sets the size of a node equal to one page, so each node can be fully loaded with only one I/O. The following technique should be used for the actual B-tree implementation to achieve this goal: each time a new node is created, directly apply the space of one page, thus ensuring that a node is physically stored on a page. In addition, the computer storage allocation is aligned by page, thus realizing that a node only needs one I/O.

CREATE [UNIQUE | FULLTEXT] INDEX index name ON table name (field name) [USING index method]; Description: UNIQUE: optional. It indicates that the index is unique. FULLTEXT: optional. It indicates that the index is full-text. INDEX and KEY: They are used to specify the field as an index. You just need to select one of the two because they achieve the same effect. Index name: optional. Give the created index a new name. Field name 1: It specifies the field name corresponding to the index. The field must be previously defined. Note: The index method uses B+tree by default. Clustered index: Data is stored together with indexes. The leaf nodes of the index structure store row data (primary key indexes).

Non-clustered index: Data is stored separately from the index. The leaf nodes of the index structure point to the corresponding position of the data (secondary index).

A leaf node of a clustered index is a data node, while a leaf node of a non-clustered index is still an index node, but it has a pointer to the corresponding data block.

The leftmost prefix principle is mainly used in the joint index. The B+tree of the joint index is indexed and sorted based on the first keyword.

The bottom of the joint index is a B+tree, but the B+tree nodes of the joint index store the key value. Since building a B+tree can only determine the index relationship based on one value, the database building relies on the leftmost field of the joint index.

Matching with the greater-than sign (>) and less-than sign (<) will cause the index failure of the following columns, because the data matched by this method is unknowable.

select * from student A where A.age= '18' and A.name= '张三';

Based on the above description, we will analyze the execution process of this statement:

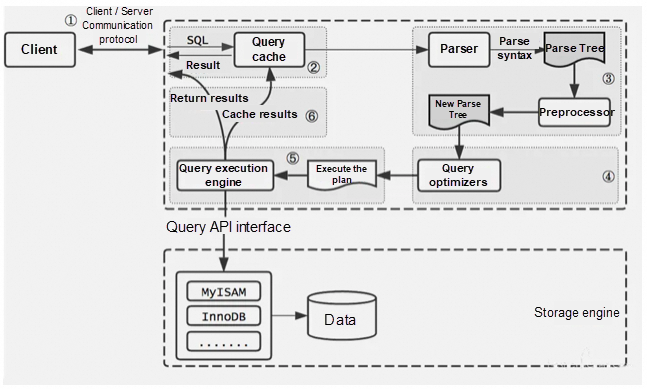

① Establish a connection with MySQL through a client/server communication protocol. Query whether you have the permission.

② Before running MySQL 8.0, check whether caching is enabled. If the query cache is enabled and the same SQL statement is hit, the query result will be directly returned to the client.

③ Parse syntax and semantics by the parser and generate a parse tree. For example, the query is SELECT, the table name is tb_student, and the condition is id='1'.

④ The query optimizer generates an execution plan. Check whether it can be optimized according to the index.

⑤ Query the execution engine to execute SQL statements and obtain the query results based on the storage engine type. If query cache is enabled, cache the query. Otherwise, directly return the query.

For common indexes such as unique indexes, joint indexes, and full-text indexes, the index tree needs to be scanned twice.

(1) Use a common index to locate the primary key value id=5.

(2) Locate the row record through the clustered index.

This is the back-to-table query, which first locates the primary key value and then locates the row record. Its performance is lower than that of scanning the index tree once.

Covering index: primary key index == clustered index == covering index

If the column of the WHERE condition and the returned data are in the same index, then there is no need to query back to the table, then it is called the covering index.

Covering index implementation: A common method is to build the fields to be queried into the joint index.

mysql> explain select * from staff;+----+-------------+-------+------+---------------+------+---------+------+------+-------+| id | select_type | table | type | possible_keys | key | key_len | ref | rows | Extra |+----+-------------+-------+------+---------------+------+---------+------+------+-------+| 1 | SIMPLE | staff | ALL | NULL | Index | NULL | NULL | 2 | NULL |+----+-------------+-------+------+---------------+------+---------+------+------+-------+1 row in set① Leftmost prefix index: like is only used for 'string%', and the = and in in the statements will dynamically adjust their order.

② Unique index: The differentiation degree of the unique key is greater than 0.1.

③ Unable to use index: !=, is null, or, > <, (automatically determined according to the quantity since MySQL 5.7) in, not in.

④ Joint index: Avoid SELECT * and use covering indexes for column queries.

SELECT uid From user Where gid = 2 order by ctime asc limit 10ALTER TABLE user add index idx_gid_ctime_uid(gid,ctime,uid) # Create a joint covering index to avoid back-to-table queries.① CHAR fixed-length query is of high efficiency, and the first byte of VARCHAR records data length.

② Add indexes for Rows in EXPLAIN.

③ Indexes are involved in both GROUP BY and ORDER BY fields.

④ The paging query in Limit becomes slower as the start value increases, which can be solved by sub-query and table connection.

select * from mytbl order by id limit 100000,10 the improved SQL statements are as follows: select * from mytbl where id >= ( select id from mytbl order by id limit 100000,1 ) limit 10select * from mytbl inner ori join (select id from mytbl order by id limit 100000,10) as tmp on tmp.id=ori.id;⑤ COUNT performs a full table scan. You can use EXPAIN for estimating.

⑥ When you delete a table by DELETE, a large number of undo and redo logs are added. If you decide to delete a table, you can use TRUNCATE.

① No more than 200 tables in a single database

② No more than 5,000,000 pieces of data in a single table

③ No more than 40 columns in a single table

④ No more than 5 indexes in a single table

① First Normal Form (1NF): Columns are indivisible

② Second Normal Form (2NF): Attributes completely depend on the primary key [eliminating some sub-function dependence]

③ Third Normal Form (3NF): Attributes do not depend on other non-primary attributes [eliminating transitive dependencies]

Configure connections, disenable Swap, increase memory, and upgrade SSD hard disks

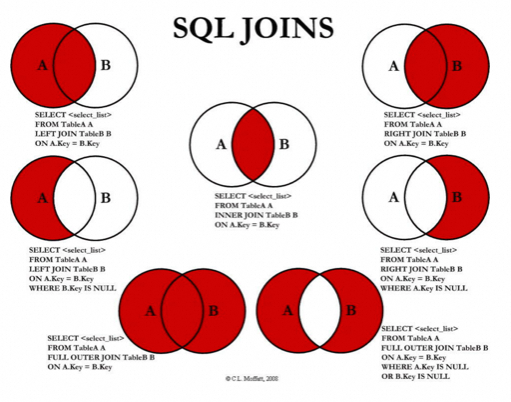

LEFT JOIN: It returns all data records in the left table and data records that match the specified join field in the two tables.

RIGHT JOIN: It returns all data records in the right table and data records that match the specified join field in the two tables.

INNER JOIN: It returns only the data records that match specific join fields in the two tables.

Advantages:

Binary log format: statement, row, and mixed

Statement-based replication, row-based replication, and statement- and row-based (mixed) replication. Among them, the row-based replication method can better ensure the data consistency of the primary-secondary database. However, the method has a large number of logs, so the disk space should be considered when setting.

"The primary-secondary replication has a delay." If you read the secondary database during the delay, you may read inconsistent data.

Record the occurred write requests in the cache to route the primary database read or the secondary database read.

In asynchronous replication, after the primary database completes the operation and writes the binary log, it returns to the client. This action ends without verifying whether the secondary database has received the binary log or whether the log is complete, thus causing data inconsistency.

After the primary database commits a transaction, it does not return immediately. Instead, it returns to the client only after one of the secondary databases receives the binary log and successfully writes the binary log to the relay log. Data security and consistency can be ensured through the binary log of the primary database and the relay log of the secondary database.

When the primary database executes a transaction, all the secondary databases execute the transaction before returning to the client. Because it returns after all the secondary databases finish executing the transaction, the performance of fully synchronous replication is severely affected.

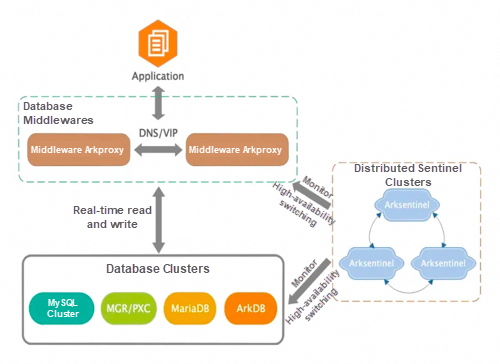

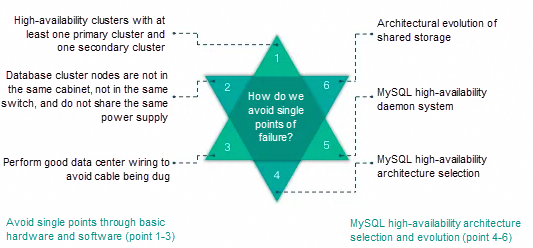

When the write node Master db1 fails, the switchover script is triggered by MMM Monitor or Keepalived to drift the VIP to the available Master db2. When network jitter or network partitions occur, MMM Monitor misjudges. In severe cases, frequent switching to write the VIP will cause double writes to the cluster. When data replication is delayed, the application fails due to data disorder or data conflict. An architecture that effectively avoids the failure of single points is to use shared storage. The failure switching of single points can be monitored by the distributed sentinel system.

1. Virtual IP or DNS service (Keepalived + VIP/DNS and MMM architecture)

During virtual IP O&M, a problem that one VIP is bound to multiple servers to provide connections at the same time sometimes occurs during ARP refresh. This is one of the reasons why you should avoid using the Keepalived + VIP and MMM architecture because it cannot handle such problems and causes multi-point write to the cluster.

2. Upgrade the secondary database to the primary database (MHA or QMHA).

Try to set read_only to on for the original master to avoid multiple writes to the cluster. Use the binary log server to retain the binary logs of the master. If data delays, you must complete the data before promoting the slave to the new master. Otherwise, data loss occurs.

Disclaimer: The views expressed herein are for reference only and don't necessarily represent the official views of Alibaba Cloud.

Interview Questions We've Learned Over the Years: MySQL – Part 2

1,407 posts | 493 followers

FollowAlibaba Cloud Community - May 3, 2024

Alibaba Cloud Community - May 8, 2024

Alibaba Cloud Community - May 1, 2024

Alibaba Cloud Community - May 6, 2024

Alibaba Cloud Community - May 7, 2024

Alibaba Cloud Community - July 29, 2024

1,407 posts | 493 followers

Follow ApsaraDB for HBase

ApsaraDB for HBase

ApsaraDB for HBase is a NoSQL database engine that is highly optimized and 100% compatible with the community edition of HBase.

Learn More PolarDB for MySQL

PolarDB for MySQL

Alibaba Cloud PolarDB for MySQL is a cloud-native relational database service 100% compatible with MySQL.

Learn More ApsaraDB for MongoDB

ApsaraDB for MongoDB

A secure, reliable, and elastically scalable cloud database service for automatic monitoring, backup, and recovery by time point

Learn More AnalyticDB for MySQL

AnalyticDB for MySQL

AnalyticDB for MySQL is a real-time data warehousing service that can process petabytes of data with high concurrency and low latency.

Learn MoreMore Posts by Alibaba Cloud Community