By Zhihao Xu and Yang Che

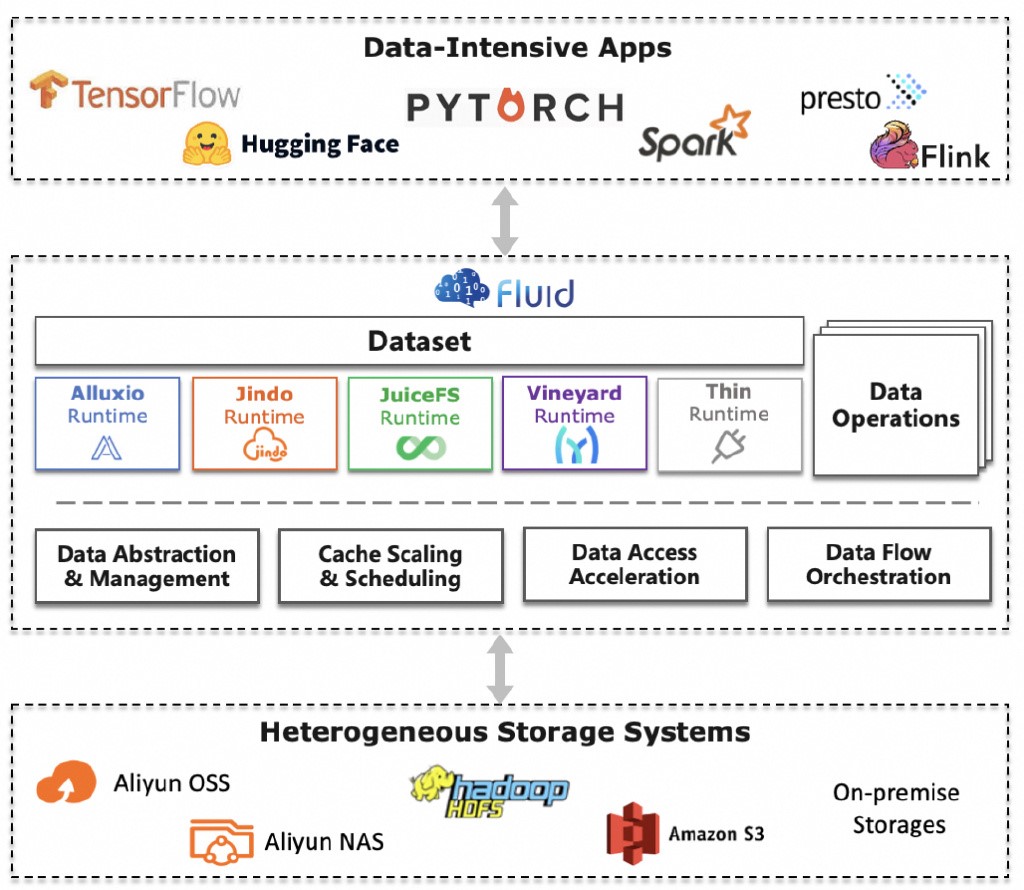

In the era of large models, the rapid development of Artificial Intelligence Generated Content (AIGC) and Large Language Model (LLM) technologies is driving innovations across industries. However, this swift advancement also presents significant technical challenges, particularly in data management and processing. Data acceleration has been a critical issue in many scenarios such as LLM training, complex inference calculations, and big data analytics.

When it comes to data acceleration, many people intuitively think that simply adding a layer of cache can solve the problem. However, in complex customer environments and diverse business scenarios, adding a cache does not necessarily lead to performance improvements. Instead, the key lies in tailoring the cache to specific contexts and leveraging its full potential.

In fact, data acceleration involves more than just speeding up the processing and transmission of massive data. It also requires balancing performance, stability, and consistency. In this article, we will delve into how to enhance performance while ensuring system stability and data consistency by using Alibaba Cloud Container Service for Kubernetes (ACK) Fluid as an example. Through specific scenario analysis and technical details, we aim to help you better address data management needs in the large model era.

You can use cache optimization policies of Fluid to improve data access efficiency for applications within Kubernetes clusters. In Kubernetes clusters that use an architecture where computing is decoupled from storage, you can use the caching capabilities of Fluid to effectively reduce access latency and resolve bandwidth throttling when you access the storage system. Data caching technologies accelerate data access based on the principle of locality, so they are suitable for data-intensive applications that feature data locality. Typical scenarios include:

• AI model training applications. During the training procedure of an AI model, training datasets are read per epoch. The datasets are used for model iteration and convergence.

• AI model inference startups. When you deploy an AI model as an inference service or update an inference service, a large number of inference service instances are concurrently launched. Each instance reads the same model file from the storage system and loads the model file to the GPU memory allocated to the instance.

• Big data analytics scenarios. Data may be shared by multiple data analysis tasks. For example, when you use SparkSQL to analyze user profiles and product information, order data is used by both analysis tasks.

When you use Fluid to build a cache system to accelerate data access, you can configure the following settings based on your business requirements and budget: Elastic Compute Service (ECS) instance types, cache media, cache system parameters, and cache management policies. In addition, you need to consider the access mode of clients, which may impact the performance of data reads. This section introduces best practices for cache optimization policies to improve the performance of Fluid.

A distributed cache system can aggregate the storage and bandwidth resources on different nodes and provide larger cache capacity and higher bandwidth for upper-layer applications. You can calculate the cache capacity of the cache system, the cache bandwidth, and the maximum bandwidth of a pod based on the following formulas:

• Cache capacity of the cache system = Cache capacity of each cache system worker pod × Number of cache system worker pods

• Cache bandwidth = Number of cache system worker pods × min{Maximum ECS bandwidth of worker pods, I/O throughput of the cache media used by worker pods}

The bandwidth of a distributed cache cluster depends on the maximum bandwidth of ECS instances in the cluster and the cache media used by the cluster. To improve the performance of the distributed cache system, we recommend that you select ECS instances that provide high bandwidth and use memory, local hard disk drives (HDDs), or local SSDs as the cache media.

Note: The cache bandwidth is not equal to the actual bandwidth for cache access. The actual bandwidth depends on the bandwidth of the client ECS instance and the access mode, such as sequential read or random read.

Maximum bandwidth for a pod = min{Bandwidth of the hosting ECS instance, Cache bandwidth}. When multiple pods concurrently access the cache, the cache bandwidth is shared by the pods.

We recommend that you select the following ECS instance types.

| Instance family | Instance type | Instance specification |
| --- | --- | --- |
| g7nex, network-enhanced general-purpose instance family | ecs.g7nex.8xlarge | 32 vCPUs, 128 GiB of memory, and 40 Gbit/s of bandwidth |
| g7nex, network-enhanced general-purpose instance family | ecs.g7nex.16xlarge | 64 vCPUs, 256 GiB of memory, and 80 Gbit/s of bandwidth |
| g7nex, network-enhanced general-purpose instance family | ecs.g7nex.32xlarge | 128 vCPUs, 512 GiB of memory, and 160 Gbit/s of bandwidth |
| i4g, instance family with local SSDs | ecs.i4g.16xlarge | 64 vCPUs, 256 GiB of memory, 2 local SSDs that are 1,920 GB in size, and 32 Gbit/s of bandwidth |
| i4g, instance family with local SSDs | ecs.i4g.32xlarge | 128 vCPUs, 512 GiB of memory, 4 local SSDs that are 1,920 GB in size, and 64 Gbit/s of bandwidth |
| g7ne, network-enhanced general-purpose instance family | ecs.g7ne.8xlarge | 32 vCPUs, 128 GiB of memory, and 25 Gbit/s of bandwidth |
| g7ne, network-enhanced general-purpose instance family | ecs.g7ne.12xlarge | 48 vCPUs, 192 GiB of memory, and 40 Gbit/s of bandwidth |
| g7ne, network-enhanced general-purpose instance family | ecs.g7ne.24xlarge | 96 vCPUs, 384 GiB of memory, and 80 Gbit/s of bandwidth |
| g8i, general-purpose instance family | ecs.g8i.24xlarge | 96 vCPUs, 384 GiB of memory, and 50 Gbit/s of bandwidth |
| g8i, general-purpose instance family | ecs.g8i.16xlarge | 64 vCPUs, 256 GiB of memory, and 32 Gbit/s of bandwidth |


Example

Assume that two ECS instances of the ecs.g7nex.8xlarge type are added to your Container Service for Kubernetes (ACK) cluster to build a distributed cache system. The cache system consists of two worker pods each of which provides 100 GiB of memory cache. The pods run on different ECS instances. An application pod is deployed on an ECS instance of the ecs.gn7i-c8g1.2xlarge type. The instance provides 8 vCPUs, 30 GiB of memory, and 16 Gbit/s of bandwidth. The cache capacity of the cache system, cache bandwidth, and maximum pod bandwidth can be calculated based on the following formulas:

• Cache capacity of the cache system = 100 GiB × 2 = 200 GiB

• Cache bandwidth = 2 × min{40 Gbit/s, Memory I/O throughput} = 80 Gbit/s

• When a cache hit occurs, the maximum pod bandwidth = min{80 Gbit/s, 16 Gbit/s} = 16 Gbit/s
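The three formulas can be checked with a few lines of Python. This is only a sketch of the arithmetic in the example: the function names are ours and not part of Fluid, and memory I/O throughput is assumed to be far higher than the 40 Gbit/s network bandwidth, so it is modeled as unbounded.

```python
def cache_capacity_gib(per_worker_gib, workers):
    """Cache capacity = capacity of each worker pod x number of worker pods."""
    return per_worker_gib * workers


def cache_bandwidth_gbps(workers, ecs_gbps, media_gbps):
    """Cache bandwidth = workers x min(ECS bandwidth, cache media throughput)."""
    return workers * min(ecs_gbps, media_gbps)


def max_pod_bandwidth_gbps(host_gbps, cache_gbps):
    """Maximum pod bandwidth = min(bandwidth of the hosting ECS instance, cache bandwidth)."""
    return min(host_gbps, cache_gbps)


# Assumption: memory throughput never becomes the bottleneck in this example.
MEMORY_GBPS = float("inf")

capacity = cache_capacity_gib(100, 2)                 # two workers, 100 GiB each
bandwidth = cache_bandwidth_gbps(2, 40, MEMORY_GBPS)  # two ecs.g7nex.8xlarge workers
pod_bandwidth = max_pod_bandwidth_gbps(16, bandwidth) # client on a 16 Gbit/s instance

print(capacity, bandwidth, pod_bandwidth)  # prints: 200 80 16
```

The result shows that in this setup the client instance, not the cache cluster, is the bottleneck: adding more worker pods would not raise the bandwidth of a single application pod.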

The bandwidth of a distributed cache cluster depends on the maximum bandwidth of ECS instances in the cluster and the cache media used by the cluster. To improve the performance of the distributed cache system, we recommend that you select ECS instances that provide high bandwidth and use memory or local SSDs as the cache media. Fluid allows you to use the spec.tieredstore parameter of the cache runtime to configure different cache media and corresponding cache capacities.

Note: In most cases, Enterprise SSDs (ESSDs) cannot meet the data access requirements of data-intensive applications. For example, a disk of performance level 2 (PL2) provides a throughput of up to 750 MB/s, which is about 6 Gbit/s. If you attach a PL2 ESSD to an ECS instance to cache data, the cache bandwidth of that instance is capped at 750 MB/s, even if the instance type provides higher network bandwidth.

If you use memory to cache data, configure the spec.tieredstore parameter of the cache runtime based on the following code block:

    spec:
      tieredstore:
        levels:
          - mediumtype: MEM
            volumeType: emptyDir
            path: /dev/shm
            quota: 30Gi # The cache capacity provided by each worker pod of the distributed cache system.
            high: "0.95"
            low: "0.7"

• tieredstore.levels.quota is the maximum cache capacity provided by each worker of the distributed cache system.

If you use a local SSD to cache data, configure the spec.tieredstore parameter of the cache runtime based on the following code block:

    spec:
      tieredstore:
        levels:
          - mediumtype: SSD
            volumeType: hostPath
            path: /mnt/disk1 # /mnt/disk1 is the path of the local SSD on the host.
            quota: 100Gi # The cache capacity provided by each worker pod of the distributed cache system.
            high: "0.95"
            low: "0.7"

If you want to use multiple local disks to cache data, configure the spec.tieredstore parameter based on the following code block:

    spec:
      tieredstore:
        levels:
          - mediumtype: SSD
            volumeType: hostPath
            path: /mnt/disk1,/mnt/disk2 # /mnt/disk1 and /mnt/disk2 are the paths of the data disks on the host.
            quota: 100Gi # The cache capacity provided by each worker pod of the distributed cache system. The cache capacity is evenly distributed across multiple paths.
            high: "0.95"
            low: "0.7"

When a cache hit occurs, application pods read data from the cache system. In this case, if the pod and the cache system are deployed in different zones, the pod needs to access the cache across zones. Network jitters may occur during cross-zone access. To avoid network jitters, you can configure affinity rules between the application pod and the cache system pods. Details:

• Configure affinity rules to deploy the worker pods of the cache system in the same zone.

• Configure affinity rules to deploy application pods in the zone where the worker pods of the cache system are deployed.

Note: Deploying multiple application pods and the worker pods of the cache system in a single zone may weaken the disaster recovery capability of the application. We recommend that you balance service performance and service availability based on the service-level agreement (SLA) of your service.

Fluid allows you to use the spec.nodeAffinity parameter of a dataset to specify an affinity rule for the worker pods of the cache system. Sample code:

    apiVersion: data.fluid.io/v1alpha1
    kind: Dataset
    metadata:
      name: demo-dataset
    spec:
      ...
      nodeAffinity:
        required:
          nodeSelectorTerms:
            - matchExpressions:
                - key: topology.kubernetes.io/zone
                  operator: In
                  values:
                    - <ZONE_ID> # e.g. cn-beijing-i

The preceding code block schedules the worker pods of the cache system onto ECS instances in the specified zone.

In addition, Fluid automatically injects the cache affinity rule into the application pod specification. Based on the affinity rule, the system attempts to schedule the application pods to the zone where the worker pods of the cache system are deployed. For more information, see Schedule pods based on cache affinity.

Full data sequential reads on large files are used in data-intensive scenarios. For example, full data sequential reads on large files are required when you use datasets of the TFRecord or TAR format to train models, load one or more model files during the startup of an inference service, or read data from Parquet files to implement distributed data analytics. In the preceding scenarios, you can enable prefetching to accelerate data access. For example, you can increase the prefetching concurrency and the size of prefetched data.

The parameters that you can use to configure prefetching vary based on the cache runtime that you use in Fluid.

JindoRuntime:

    kind: JindoRuntime
    metadata:
      ...
    spec:
      fuse:
        properties:
          fs.oss.download.thread.concurrency: "200"
          fs.oss.read.buffer.size: "8388608" # 8 MiB
          fs.oss.read.readahead.max.buffer.count: "200"
          fs.oss.read.sequence.ambiguity.range: "2147483647" # Approximately 2 GiB

• fs.oss.download.thread.concurrency: The number of concurrent threads created for the Jindo client to prefetch data. The data prefetched by each thread is stored in a buffer.

• fs.oss.read.buffer.size: The buffer size.

• fs.oss.read.readahead.max.buffer.count: The maximum number of buffers for one prefetching thread.

• fs.oss.read.sequence.ambiguity.range: The range based on which the Jindo client determines whether the process performs sequential reads.
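The size-related properties above are plain byte counts passed as strings, which are easy to mistype. The following sketch shows one way to derive them from human-readable sizes. The helper `size_to_bytes` is hypothetical and exists only for illustration; it is not part of Fluid or JindoSDK.

```python
# Binary units only: the sample values above are powers of two.
UNITS = {"K": 1024, "M": 1024**2, "G": 1024**3}


def size_to_bytes(size):
    """Convert a size such as "8M" into a byte-count string such as "8388608"."""
    number, unit = size[:-1], size[-1].upper()
    return str(int(number) * UNITS[unit])


buffer_size = size_to_bytes("8M")     # value used for fs.oss.read.buffer.size
ambiguity_range = str(2**31 - 1)      # value used for fs.oss.read.sequence.ambiguity.range

print(buffer_size)       # prints: 8388608
print(ambiguity_range)   # prints: 2147483647
```

Note that 2147483647 is one byte less than 2 GiB, which is why the sample configuration describes it as approximately 2 GiB.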

For more information about how to optimize the performance and the relevant parameters, see JindoSDK advanced parameter configuration.

When you use JuiceFSRuntime, you can use the spec.fuse.options parameter to configure the FUSE component and the spec.worker.options parameter to configure the worker component.

    kind: JuiceFSRuntime
    metadata:
      ...
    spec:
      fuse:
        options:
          buffer-size: "2048"
          cache-size: "0"
          max-uploads: "150"
      worker:
        options:
          buffer-size: "2048"
          max-downloads: "200"
          max-uploads: "150"

• buffer-size: The size of the read/write buffer.

• max-downloads: The concurrency of downloads in the prefetching process.

• cache-size (FUSE component): The size of the local cache that the FUSE component can use. In this example, the parameter is set to 0.

You can set the size of the local cache that can be used by the JuiceFS FUSE component to 0 and the memory request of the FUSE component (the requests parameter) to a small value, such as 2 GiB. In this case, the FUSE component automatically uses the page cache provided by the Linux kernel on the node. When no page cache hit occurs, the JuiceFS worker distributed cache is used to accelerate data access. For more information about how to optimize the performance and the relevant parameters, see JuiceFS official documentation.

The cache system maintains the metadata of all files in the directories mounted to the cache system and records additional information about the files, such as the cache status. If the cache system is mounted with large directories, such as the root directories of large storage systems, the cache system consumes a large amount of memory resources to store the metadata of the files in the mounted directories and consumes additional CPU resources to process access to the metadata.

Fluid defines the dataset concept. A dataset is a collection of logically related data intended for upper-layer applications. A dataset corresponds to a distributed cache system. We recommend that you use a separate dataset to accelerate data access for a data-intensive application or relevant tasks. If you need to run multiple applications, we recommend that you create separate datasets for the applications and mount different subdirectories of the underlying data source to different datasets. A mounted subdirectory contains the data required by the application to which the subdirectory is mounted. This provides improved data isolation when different applications use the cache systems of the mounted datasets to access data. This also ensures the stability and performance of the cache systems. Sample dataset configurations:

    apiVersion: data.fluid.io/v1alpha1
    kind: Dataset
    metadata:
      name: demo-dataset
    spec:
      ...
      mounts:
        - mountPoint: oss://<BUCKET>/<PATH1>/<SUBPATH>/
          name: sub-bucket

Multiple cache systems may increase the complexity of maintenance. You can select flexible cache system architectures based on your business requirements:

• If the datasets that you want to use are small, closely related, stored in the same storage system, and contain a small number of files, we recommend that you create one Fluid dataset and one distributed cache system.

• If the datasets that you want to use are large in size and contain a large number of files, we recommend that you create multiple Fluid datasets and multiple distributed cache systems. You can mount one or multiple Fluid datasets to your application pods.

• If the datasets that you want to use belong to different storage systems of different users and data isolation between users is required, we recommend that you create separate short-lived Fluid datasets for each user or the relevant data processing jobs. You can develop Kubernetes operators to manage multiple Fluid datasets.

In some distributed cache systems, the master pod is responsible for maintaining the metadata of all files in the directories mounted to the cache system and recording the cache status of the files. When an application uses such a cache system to access a file, the application needs to first obtain the metadata of the file from the master pod and then use the metadata to retrieve the file from the underlying storage or the worker pod of the cache system. Therefore, the stability of the master pod is critical to the high availability of the cache system.

If you use JindoRuntime, you can use the following configurations to improve the availability of the master pod:

    apiVersion: data.fluid.io/v1alpha1
    kind: JindoRuntime
    metadata:
      name: sd-dataset
    spec:
      ...
      volumes:
        - name: meta-vol
          persistentVolumeClaim:
            claimName: demo-jindo-master-meta
      master:
        resources:
          requests:
            memory: 4Gi
          limits:
            memory: 8Gi
        volumeMounts:
          - name: meta-vol
            mountPath: /root/jindofs-meta
        properties:
          namespace.meta-dir: "/root/jindofs-meta"

The preceding YAML file uses an existing persistent volume claim (PVC) named demo-jindo-master-meta, which is used to mount an ESSD as a volume. This means that the metadata maintained by the master pod is persisted and can be migrated together with the pod. For more information, see Use JindoRuntimes to persist storage for the JindoFS master.

The cache system client runs in the FUSE pod, which mounts the FUSE file system to the specified path in the application pod on the host and uses POSIX (Portable Operating System Interface) to expose data. This way, you can accelerate data access from application pods without the need to modify the application code. The access to remote storage is as fast as the access to local storage. The FUSE pod is responsible for maintaining the data tunnel between the application and the cache system. We recommend that you properly allocate resources to the FUSE pod.

Fluid allows you to use the spec.fuse.resources parameter of the cache runtime to allocate resources to the FUSE pod. If out of memory (OOM) errors occur in the FUSE pod, the FUSE file system may be unmounted from the application pod. To prevent this issue, we recommend that you do not specify a memory limit for the FUSE pod or specify a high memory limit for the FUSE pod to minimize the business impact caused by OOM. For example, you can set the memory limit to a value close to the amount of memory provided by the ECS instance.

    spec:
      fuse:
        resources:
          requests:
            memory: 8Gi
          # limits:
          #   memory: <ECS_ALLOCATABLE_MEMORY>

The FUSE pod is responsible for maintaining the data tunnel between the application and the cache system. By default, if the FUSE pod crashes due to unexpected reasons, the application pod can no longer use the FUSE file system to access data, even after the FUSE pod is restarted and runs as normal. To resolve this issue, you must restart the application pod to restore data access. When the pod is restarted, the FUSE file system is remounted to the pod. However, this may affect the availability of the application.

Fluid provides the auto repair feature for FUSE. This feature can automatically restore data access to the FUSE file system for the application pod within a specific time period after the FUSE pod is restarted. In addition, you do not need to restart the application pod.

Note: If the FUSE pod crashes, the FUSE file system remains inaccessible before the FUSE pod is restarted. Therefore, you must manually handle I/O errors before the FUSE pod is restarted to prevent the application from crashing. You can retry I/O operations until you succeed.
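The retry advice in the note can be sketched as follows. This is a minimal illustration rather than a Fluid API: the function name, the number of attempts, and the delays are our own choices, and we assume that a broken FUSE mount surfaces in the application as an OSError.

```python
import time


def read_with_retry(path, attempts=5, base_delay=0.5, sleep=time.sleep):
    """Read a file, retrying with exponential backoff when an I/O error occurs."""
    for attempt in range(attempts):
        try:
            with open(path, "rb") as f:
                return f.read()
        except OSError:
            # Give up after the last attempt and let the caller handle the error.
            if attempt == attempts - 1:
                raise
            # Wait longer after each failure: base_delay, 2x, 4x, ...
            sleep(base_delay * 2 ** attempt)
```

For example, `read_with_retry("/data/A/train.tfrecord", attempts=8)` (a hypothetical path) keeps retrying while the FUSE pod restarts. Size the total retry window to cover the time that the auto repair feature needs in your cluster.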

For more information about how to enable the auto repair feature of FUSE, see How to enable auto repair for FUSE. The auto repair feature of FUSE has certain limits. Therefore, we recommend that you enable this feature only in suitable scenarios. For example:

• Interactive program development scenarios where you develop applications by using online notebooks or other interactive programming environments. After enabling this feature, you do not need to restart the entire environment to restore data access through the FUSE file system.

A cache system accelerates data access for your application. However, read-write consistency issues may occur in cache. This section describes how to configure the read-write consistency of cache in different scenarios. If you want to ensure a high read-write consistency of cache, the performance of data access will be compromised or the maintenance of the cache system will become complex. We recommend that you select proper policies to improve the read-write consistency of cache based on your business scenarios. The following describes common scenarios, corresponding use cases, and the Fluid configurations for each scenario.

Use cases: Each time you train an AI model, the model reads data from a static dataset and then runs iterative training jobs. After the training jobs are completed, the cached data is deleted.

Solutions: Create a Fluid dataset and use the default dataset configurations or explicitly set the dataset to read-only.

Examples:

    apiVersion: data.fluid.io/v1alpha1
    kind: Dataset
    metadata:
      name: demo-dataset
    spec:
      ...
      # accessModes: ["ReadOnlyMany"] # ReadOnlyMany is the default value.

Use cases: A cache system is deployed in a Kubernetes cluster and business data is collected to the backend storage on a daily basis. Data analysis tasks are run in the early morning every day to analyze and aggregate incremental business data. The aggregated data is directly written to the backend storage and is not cached.

Solutions: Create a Fluid dataset and use the default dataset configurations or explicitly set the dataset to read-only. Periodically prefetch data from the backend storage so that data changes in the backend storage are synchronized to the cache.

Examples:

    apiVersion: data.fluid.io/v1alpha1
    kind: Dataset
    metadata:
      name: demo-dataset
    spec:
      ...
      # accessModes: ["ReadOnlyMany"] # ReadOnlyMany is the default value.
    ---
    apiVersion: data.fluid.io/v1alpha1
    kind: DataLoad
    metadata:
      name: demo-dataset-warmup
    spec:
      ...
      policy: Cron
      schedule: "0 0 * * *" # Prefetch data at 00:00:00 (UTC+8) each day.
      loadMetadata: true # Synchronize data changes in the backend storage to the cache when you prefetch data.
      target:
        - path: /path/to/warmup

Use cases: A model inference service allows you to submit a custom AI model and use the model to run inference tasks. In this case, the custom model is directly written to the backend storage and is not cached. After the model is submitted, you can use the model to run inference tasks and view the inference results.

Solutions: Create a Fluid dataset and use the default dataset configurations or explicitly set the dataset to read-only. Set the timeout period of metadata to a small value for the FUSE pod of the cache runtime. Disable caching for server-side metadata in the cache system.

Examples:

Sample code block for JindoRuntime:

    apiVersion: data.fluid.io/v1alpha1
    kind: Dataset
    metadata:
      name: demo-dataset
    spec:
      ...
      # accessModes: ["ReadOnlyMany"] # ReadOnlyMany is the default value.
    ---
    apiVersion: data.fluid.io/v1alpha1
    kind: JindoRuntime
    metadata:
      name: demo-dataset
    spec:
      fuse:
        args:
          - -oauto_cache
          # Set the timeout period of metadata to 30 seconds. If you specify xxx_timeout=0, strong data consistency is ensured. However, the data access efficiency is greatly downgraded.
          - -oattr_timeout=30
          - -oentry_timeout=30
          - -onegative_timeout=30
          - -ometrics_port=0
        properties:
          fs.jindofsx.meta.cache.enable: "false"

Use cases: In large-scale distributed AI training scenarios, training jobs read data from datasets in Directory A and write the model checkpoint to Directory B each time an epoch is completed. The checkpoint may be large in size. In this case, you can write data to cache to improve write efficiency.

Solutions: Create a read-only Fluid dataset and a read/write Fluid dataset. Mount the read-only dataset to Directory A and the read/write dataset to Directory B. This way, read/write splitting is achieved.

Examples:

    apiVersion: data.fluid.io/v1alpha1
    kind: Dataset
    metadata:
      name: train-samples
    spec:
      ...
      # accessModes: ["ReadOnlyMany"] # ReadOnlyMany is the default value.
    ---
    apiVersion: data.fluid.io/v1alpha1
    kind: Dataset
    metadata:
      name: model-ckpt
    spec:
      ...
      accessModes: ["ReadWriteMany"]

Sample code block for the application:

    apiVersion: v1
    kind: Pod
    metadata:
      ...
    spec:
      containers:
        ...
          volumeMounts:
            - name: train-samples-vol
              mountPath: /data/A
            - name: model-ckpt-vol
              mountPath: /data/B
      volumes:
        - name: train-samples-vol
          persistentVolumeClaim:
            claimName: train-samples
        - name: model-ckpt-vol
          persistentVolumeClaim:
            claimName: model-ckpt

Use cases: In interactive program development scenarios, you need to use a workspace directory. The workspace directory stores datasets and code. You may need to frequently add, delete, or modify files in the workspace directory.

Solutions: Create a read/write Fluid dataset and use a backend storage that is fully compatible with POSIX.

Examples:

    apiVersion: data.fluid.io/v1alpha1
    kind: Dataset
    metadata:
      name: myworkspace
    spec:
      ...
      accessModes: ["ReadWriteMany"]

This article provides a detailed overview of using ACK Fluid in the ACK environment, covering multiple optimization policies. In terms of performance improvements, you can accelerate data access by selecting appropriate ECS instance types and cache media, scheduling pods based on cache affinity, and optimizing prefetching policies. In terms of stability improvements, you can improve system reliability by preventing the cache system from being overloaded, persisting metadata, properly allocating resources to the FUSE pod, and enabling the auto repair feature of the FUSE component. In terms of data consistency, you can choose a policy that fits your business scenario: read-only access to static data, periodic prefetching when backend data changes on a schedule, short metadata timeouts with server-side metadata caching disabled when changes must become visible quickly, read/write splitting, or a read/write dataset on POSIX-compatible backend storage.

In summary, ACK Fluid provides a comprehensive set of policies to help users achieve optimal cache effects in various scenarios. Through policy selection and configuration, users can effectively balance data processing performance, stability, and data consistency, thereby accelerating data access in production environments.
