For those using cloud, timeout issues reported in the service logs are often difficult to handle, because, for one, the crux of the problem may be in the cloud infrastructure layer or in the service software layer, and so there is a relatively large scope for troubleshooting. And, for two, such problems are often non-reproducible and therefore it is often difficult to pinpoint what is actually going on. This blog article looks into how to identify and troubleshoot the root causes of timeout issues reported in the service logs. Specifically, this blog will focus on service timeouts from the cloud infrastructure layer and service software layer.

Often timeouts can be hard to understand and troubleshoot. This can be due to there being different service forms, or different software implementation languages and frameworks, or the fact that the information printed in the service log may be different. Keywords could be in one of several different forms. Some of these may be:

"SocketTimeOut", "Read timed out", and "Request timeout".

In terms of form, they all belong to the category of network timeout, but when it comes to looking at these, there's one concept needs to be clarified. That concept is that this kind of problem occurs because the request exceeds the set timeout time, and this setting may come from the client, server or intermediate network node, which is the direct cause. Network packet loss may cause timeout, but it is not a sufficient condition. The relationship between the service timeout and the network packet loss can be summarized as follows:

Network packet-dropping may cause service timeout, but the cause for service timeout is not necessarily packet loss.

After clarifying this causal relationship, let's look at how to analyze the service timeout. If the service timeout is arbitrarily equated with packet loss due to network jitter, then the troubleshooting and analysis process completely misses that the cause could be the service software layer itself. In fact, often the service software layer is where the whole dilemma begins.

Given this issue, this blog will try to focus on service timeouts from the cloud infrastructure layer and service software layer. In general, the reasons for packet loss at the basic settings layer are relatively easy to troubleshoot in all actuality. Alibaba Cloud has a complete underlying monitoring system. Therefore, given the corresponding time period when the service log reports an error, the monitoring data can provide the necessary information to determine whether a problem has occurred in the infrastructure network. However, the timeout of the service layer is usually results from the setting of the software layer, and is related to the software implementation and service form. This is often more difficult to troubleshoot.

Network jitter can cause service timeout. This is mainly because network jitter can lead to different, varying degrees of latency. Consider TCP, which has been used in most Internet applications to this date, as an example. Dropping packets does not affect the integrity of data transmission, because the TCP protocol has already been designed to handle issues such as packet loss, out-of-order packet exceptions, and other exceptions. In addition, all retransmission processes are completed in the kernel TCP protocol stack. The user space process of the operating system is actually not aware of this process. The only side effect of packet loss is that the delay increases. If the delay is long enough that it reaches a Timeout time set by the application process, then a service timeout occurs on the service application side.

Now, whether a timeout occurs when packets are dropped is dependent on the Timeout setting of the application process. For example, if only one TCP packet is dropped during data transmission, the timeout retransmission after 200 ms is triggered:

What are the quantitative values that can be referenced when setting the Timeout time of an application process? Although RTT/RTO in TCP changes dynamically, the impact of TCP packet loss can be summarized quantitatively.

The delays caused by packet loss are mainly divided into the following two categories:

In addition to packet loss, another common delay is caused by the TCP Delayed ACK. This is related to the protocol design and has nothing to do with packet loss actually. Here, it can be also summarized into the delay quantification section. It is easily triggered in the interactive data stream and Nagle algorithm scenario. The minimum value TCP_DELACK_MIN of the Delayed ACK defined in Linux is 40 ms.

Therefore, to sum up, the following quantitative time can be used for reference:

Packet loss in infrastructure networks can be caused by a variety of reasons, but these reasons can mainly be summarized into the following three categories discussed below.

Hardware and software problems may occur in the host virtualization network where network links, physical network devices, and ECS/RDS are located. Given this, the cloud infrastructure has been made fully redundant so to ensure rapid isolation, switching, and recovery when problems arise.

Many network cloud products, such as ECS, SLB, and NAT gateway, have specifications and bandwidth options, which are made so that, when the traffic or the total connections of the cloud product exceeds the specification or bandwidth limit set given whichever option was chosen, packet loss occurs. This type of packet loss is not caused by the failure of the cloud vendor, but rather by the deviation between the actual service traffic scale and the selection of the cloud product specification or service threshold. This problem can usually be identified through the monitoring services, which should be provided by the specific cloud product itself, or an associated monitoring service.

In public network scenarios, the packet interaction between the client and the server often goes through multiple autonomous systems (ASs). If a problem occurs with the intermediate link of the vendor, end-to-end packet loss may occur as a result.

The previous section is an analysis on application timeout caused by packet loss due to exceptions or speed-limitations in several infrastructures. In general, as long as the point of dropping packets can be identified, the root cause can be found. However, in some cases, network monitoring shows no signs of packet loss. At this time, we need to continue troubleshooting from the application side. Due to the diversity of application forms, two typical examples are given below to illustrate why timeouts are also reported in the service logs without network packet loss.

This is an example of an access timeout that occurred between an ECS host and a third-party API.

Troubleshooting methods:

After the packets are captured, the "Analysis - Expert Information" in Wireshark or the tcp.analysis.retransmission expression can be used to filter out whether a retransmission exists.

The result does not show any signs of packet loss, which proves that this problem is not caused by dropping packets. If it is not caused by packet loss, then why? At this time, we need to further analyze the captured packets.

We can notice that the keyword "Request Timeout" actually appears in the service logs, which means that the outgoing HTTP request timed out. In terms of the HTTP protocol, it can be understood as: "The HTTP request has been sent to the peer end, but the end did not return a complete response within a set period of time". Along this line of thought, we can check if the packet has only HTTP requests and no HTTP responses. In Wireshark, HTTP requests without HTTP responses can be filtered by the following method:

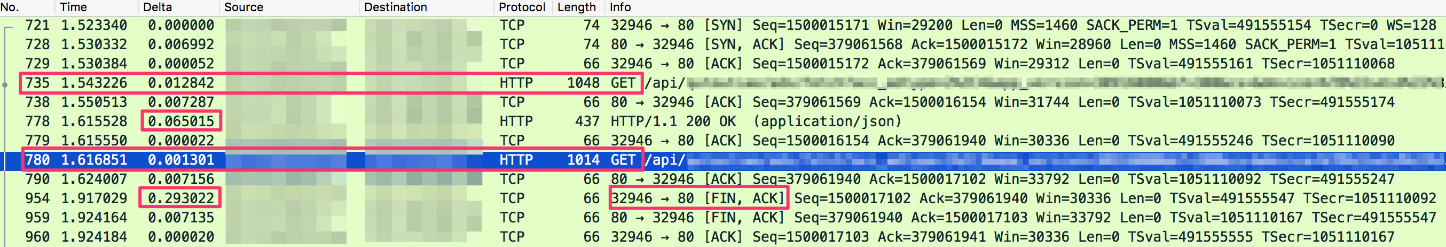

http.request.method == GET && !http.response_inWe found some such HTTP requests. For this method, you can select an HTTP request, and then track the TCP stream to see the whole process of packet interaction, as you can see from the following:

According to the captured packets, the following are some findings:

So far, we can infer that the ECS server actively terminates (FIN) the TCP connection 300 ms after sending an HTTP request to a third-party API. This may be the timeout time set by the client in the application. After the service application times out, it may have its own retry logic.

The user finally confirmed that the timeout setting does exist in the service software.

One question is whether the 300 ms timeout reasonable. Well, to answer this question. From the captured packets, we can see that the RTT of the ECS peer API server is about 7 ms, and it is assumed that it is a access within the same metropolitan. For the single-digit millisecond level RTT, the timeout of 300 ms has actually had a margin, and may even allow a timeout retransmission (200 ms).

Next, another problem we need to analyze is what is the root cause of the problem. Based on our analysis it seems that it is mainly caused by unstable request processing speed on the API server. Some requests are processed and returned in dozens of milliseconds, while some requests are not processed in 300 ms. This instability may be related to the resource usage, and pressure of the API server, but it is a black box and requires peer analysis.

Given our analysis, you can consider one of the following solutions. Either you can contact the owner of the peer API server to find the root cause and eradicate it, which is the best solution, as it is a more prominent solution. Or a temporary solution is to adjust and increase the client timeout value set on the ECS.

This section discusses an example access timeout between an ECS intranet and Redis server or a self-hosted Redis server. The access timeout from the ECS to the cloud service RDS/Cache or a self-hosted database/Cache is another type of problem. Below, an access timeout from the ECS intranet to the byte Redis is used to illustrate this type of problem.

redis.clients.jedis.exceptions.JedisConnectionException: java.Net.SocketTimeoutException: Read timed outAfter the packets are captured, similarly, the system check whether Negative Acknowledgment (NACK) is found, and, the result is that no NACK is found. Unlike the previous example, this example cannot filter data packets by certain features. Therefore, we can only find the location of the corresponding packet based on the time of the reported error in the Jedis log to see if any clues can be found.

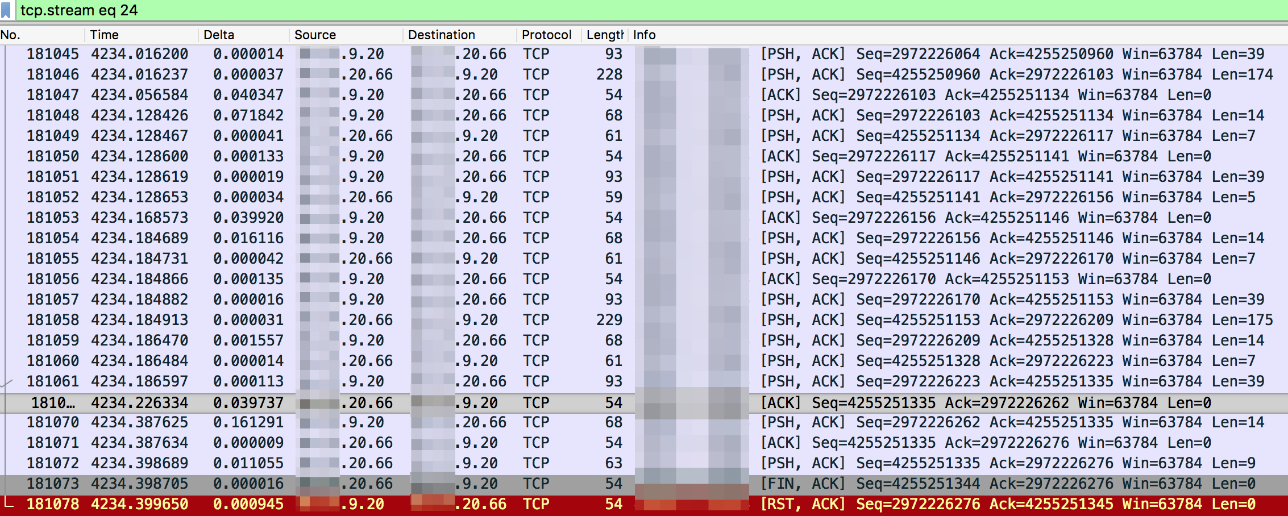

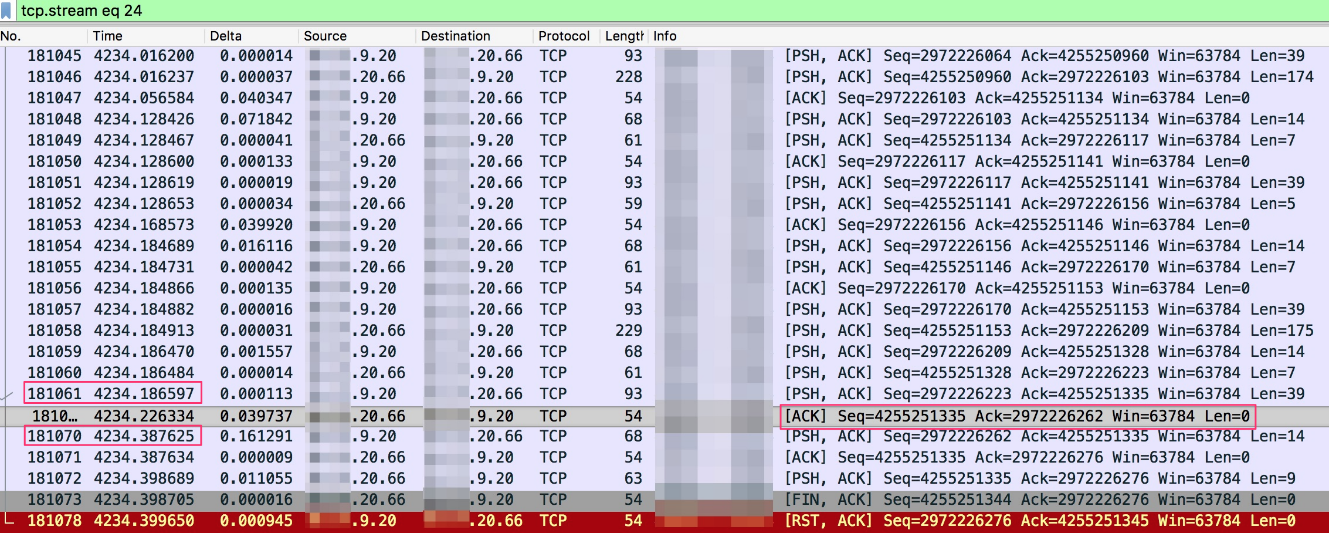

Find the corresponding packet based on the time of the reported error in the Jedis log, and track the TCP stream to see the whole process of packet interaction, as follows (Jedis client is 9.20, and Redis server is 20.66):

According to the captured packets, the following are some of our findings:

The above information is not enough to explain why the error "Read timed out" is reported. The last TCP Reset initiated from the client may be an interference item. We can see that this TCP Reset is sent when the FIN from the server is received. For the normal TCP Four-Way Wavehand (FIN ACK) process, the client should also send a FIN to the server to terminate the connection after receiving the FIN from the server. However, TCP has a Linger option to control this behavior. After the Linger option is set, the client can directly return the Reset, so that both parties can quickly close this set of sockets, thus preventing these sockets from actively closing and entering a 60-second TIME_WAIT status. It looks like the problem was caused by the Linger setting of the client. After searching in the Jedis code, this setting is found in Connection.java (as shown below), which explains why the client executes the Reset command for TCP connection. This behavior is logical.

socket = new Socket();

socket.setReuseAddress(true);

socket.setKeepAlive(true); // Will monitor the TCP connection is valid

socket.setTcpNoDelay(true); // Socket buffer Whether closed, to ensure timely delivery of data

socket.setSoLinger(true, 0); // Control calls close () method, the underlying socket is closed immediatelyNext, let's see if the Redis command in the packet interaction is normal. After tracking the TCP stream, we can see data in ASCII form, as follows:

The client sends the DEL command and the QUIT command, which can be checked against the packets.

The key to the disconnection of this connection is that the client sends the QUIT command to the Redis server. As for the reason why QUIT is sent, and why it is sent 200 ms after the previous command was sent and no response was returned, it is likely that a timeout was set. After checking several other TCP streams, we found that basically all TCP persistent connections ended in a similar way, and basically we can draw this conclusion.

This case is similar to the first case. The difference is that in the captured packets, after the timeout time, the client does not directly terminate (FIN) the connection, but sends the Redis QUIT command, and the connection will not be closed until the preceding command is executed. Compared with the first method, this is a more elegant method, provided that the Redis has the QUIT command, and Jedis internalizes this operation. This case more clearly illustrates the impact of specific services on connection behavior. Packets need to be used to reverse the interaction between the Redis client and the server.

In this bloog article, we have introduce the two layers that should be considered when handling timeout issues reported in the service logs: the cloud infrastructure layer and the service software layer. A considerable number of problems may be caused by network packet loss in the infrastructure. It is important to identify the point of packet loss through network monitoring, and the CloudMonitor network product. Do not equate service timeout with packet loss. The other type of timeout caused by the Timeout setting in the service software layer occurs in a relatively small proportion, but requires more extensive troubleshooting. Do not ignore the timeout caused by such reasons carelessly.

7 posts | 5 followers

FollowAlibaba Clouder - January 31, 2019

Alibaba Cloud MaxCompute - December 18, 2018

Alibaba Clouder - July 25, 2019

Alibaba Clouder - March 25, 2021

Alibaba Clouder - July 1, 2018

Alibaba Clouder - January 24, 2019

7 posts | 5 followers

Follow Networking Overview

Networking Overview

Connect your business globally with our stable network anytime anywhere.

Learn More Global Network Solution

Global Network Solution

Alibaba Cloud's global infrastructure and cloud-native SD-WAN technology-based solution can help you build a dedicated global network

Learn More Edge Network Acceleration

Edge Network Acceleration

Establish high-speed dedicated networks for enterprises quickly

Learn More Accelerated Global Networking Solution for Distance Learning

Accelerated Global Networking Solution for Distance Learning

Alibaba Cloud offers an accelerated global networking solution that makes distance learning just the same as in-class teaching.

Learn MoreMore Posts by William Pan

5371000810218051 February 1, 2021 at 9:08 pm

Hi! I'm not really good in networking stuff, I've built an embeddable chat tool, and that is being hosted in my GoDaddy VPS Centos 7 Server, and I also created a script that will be embedded to a website that wants to use the chat tool. It is actually been already deployed to several websites now, and we're having a problem with this intermittent connection timeout error. the script is embedded in the website's header section ,and whenever a load event happens, the script will submit a http request to my vps server requesting for the chat tool body, sometimes I get connection timeout error and sometimes I don't, this is happening in all websites the script is embedded into, can you help me with this? I tried to look for logs in my server but I can't find it, it would be greatly appreciated if you could help me with this one, thanks!