By Qianyi

1. I/O Models and Their Problems

2. Resource Contention and Distributed Locks

3. Redis Snap-Up System Instances

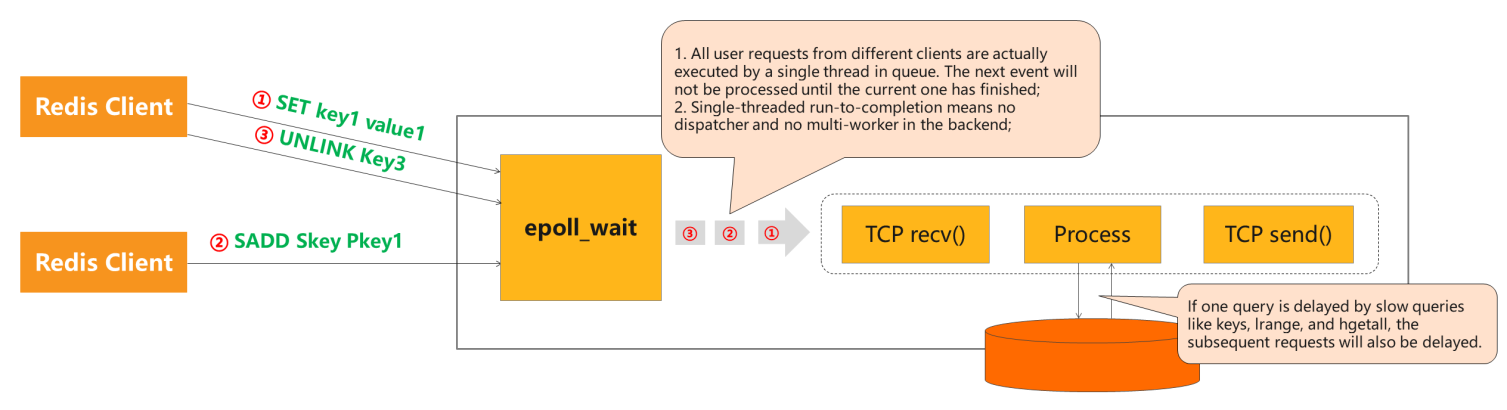

The I/O model of Redis Community Edition is simple. Generally, all commands are parsed and processed by one I/O thread.

However, if there is a slow query command, other queries have to queue up. In other words, when a client runs a command too slowly, subsequent commands are blocked. Recovery through Sentinel cannot help, because the ping command that Sentinel relies on is also delayed by slow queries. If the engine gets stuck, ping cannot reliably determine whether the service is currently available, so there is a risk of misjudgment.

If the service does not respond and the Master is switched to the Slave, the same slow queries will slow down the Slave as well, and the ping command may misjudge its state again. As a result, it is difficult to monitor service availability.

If one request is held up by a slow query, such as keys, lrange, or hgetall, all subsequent requests are delayed as well.
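The queuing effect can be illustrated with a small calculation. The sketch below is not Redis code: it assumes that all commands arrive at time 0 and are served in order by one thread, and the costs in milliseconds are invented for illustration.

```python
from typing import List, Tuple


def completion_times(commands: List[Tuple[str, int]]) -> List[Tuple[str, int]]:
    """Return (name, finish_time_ms) for commands served FIFO by one thread."""
    clock = 0
    finished = []
    for name, cost_ms in commands:
        clock += cost_ms  # the single thread is busy until this command ends
        finished.append((name, clock))
    return finished


# Hypothetical costs: one slow `keys *` followed by two cheap `get` calls.
queue = [("keys *", 500), ("get a", 1), ("get b", 1)]
timeline = completion_times(queue)
for name, done_at in timeline:
    print(f"{name!r} finishes at {done_at} ms")
```

Although each `get` needs only 1 ms of work, it cannot finish before the slow command ahead of it has completed.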

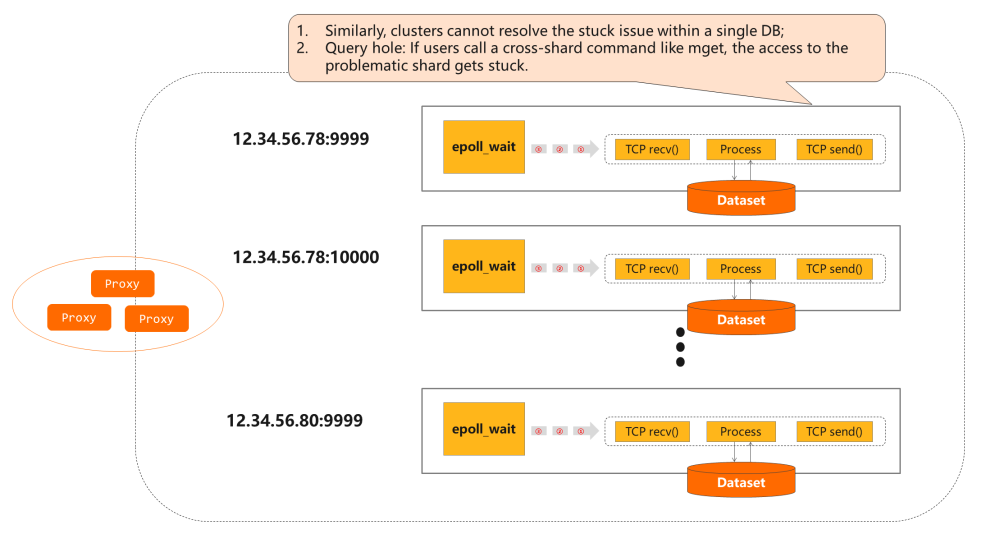

This problem also exists in a cluster containing multiple shards. If a slow query stalls one shard, a cross-shard command such as mget that touches the problematic shard gets stuck, and all subsequent commands are blocked.

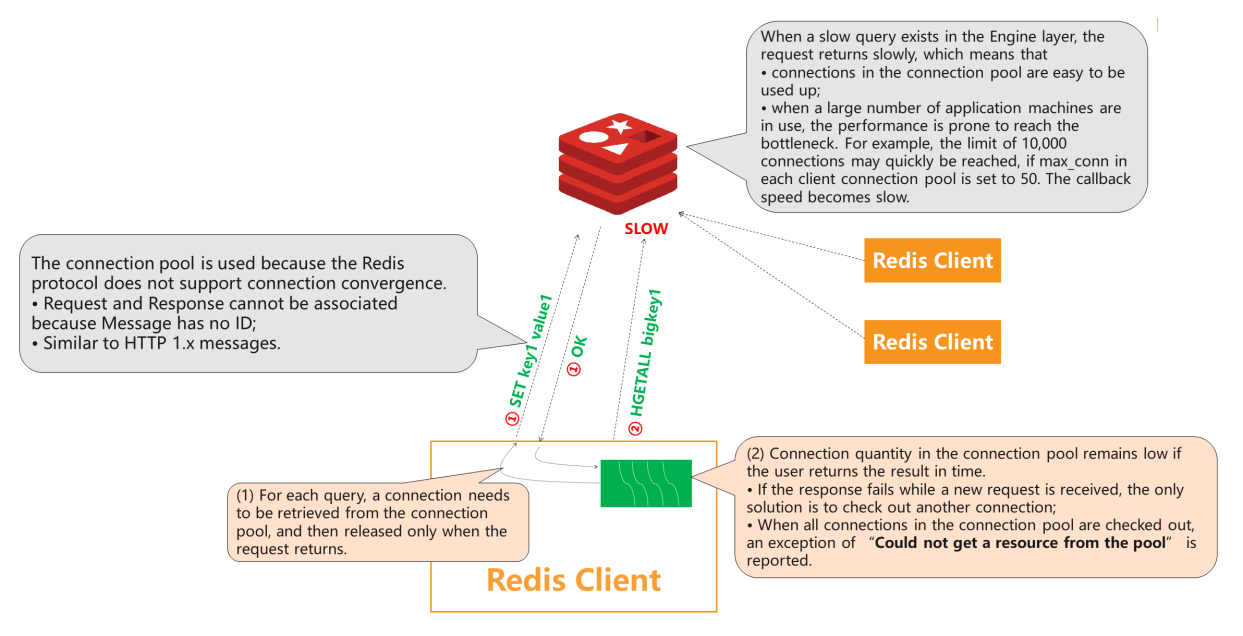

Common Redis clients like Jedis provide connection pools. When a service thread accesses Redis, it takes a persistent connection from the pool for each query. If the query is processed slowly, the connection is returned late, because it cannot be used by other threads until the request returns a result.

If all queries are processed slowly, each service thread takes a new persistent connection, and the pool is gradually drained. Because the Redis server is single-threaded, once one client connection is blocked by a slow query, requests on the other connections are not processed in time either, and none of these connections can be released back to the pool. Eventually, the client reports a “no resource available in the connection pool” exception.

The Redis protocol does not support connection convergence, because a Message carries no ID and a Request therefore cannot be associated with its Response. This is why a connection pool is used. To implement asynchronization, the callback is put into a queue unique to each connection when a request is sent, and it is retrieved and executed after the request returns. This is the FIFO model. The server cannot return responses out of order on a connection, because the client would be unable to match them. Generally, the client uses BIO, which blocks a connection and only allows other threads to use it after the request returns.
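The FIFO model can be sketched as follows. This is an illustration only: `FifoConnection` and its methods are hypothetical names, and the "socket" is a plain list rather than a real network connection.

```python
from collections import deque
from typing import Callable, Deque, List


class FifoConnection:
    """One connection whose replies are matched to requests purely by order."""

    def __init__(self) -> None:
        self.sent: List[str] = []  # stand-in for bytes written to the socket
        self.pending: Deque[Callable[[str], None]] = deque()

    def send(self, command: str, callback: Callable[[str], None]) -> None:
        self.sent.append(command)
        self.pending.append(callback)  # remember who is waiting, in send order

    def on_reply(self, reply: str) -> None:
        # No message ID exists, so the oldest pending callback owns this reply.
        callback = self.pending.popleft()
        callback(reply)


results: List[str] = []
conn = FifoConnection()
conn.send("get a", lambda r: results.append(f"a={r}"))
conn.send("get b", lambda r: results.append(f"b={r}"))

# Replies must arrive in request order; if they were swapped, the value of b
# would silently be handed to the caller waiting for a.
conn.on_reply("1")
conn.on_reply("2")
print(results)  # ['a=1', 'b=2']
```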

However, asynchronization cannot improve efficiency, which is limited by the single-threaded server. Even if the client modifies the access method to allow multiple connections to send requests at the same time, these requests still have to queue up in the server. Slow queries still block other persistent connections.

Another serious problem comes from the Redis thread model. Performance drops when the I/O thread handles more than 10,000 connections. For example, with 20,000 to 30,000 persistent connections, the performance is unacceptable for the business. On the business side, 300 to 500 machines that each hold 50 persistent connections are enough to reach this bottleneck.
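The arithmetic behind this estimate is simple. The helper below is only an illustration that uses the machine counts and the per-machine connection count from the text.

```python
def total_connections(machines: int, per_machine: int) -> int:
    """Persistent connections that one Redis instance has to hold."""
    return machines * per_machine


for machines in (300, 500):
    total = total_connections(machines, 50)
    print(f"{machines} machines x 50 connections = {total}")
```

Both results, 15,000 and 25,000, are above the 10,000 connections at which the text says performance starts to drop.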

The connection pool is used because the Redis protocol does not support connection convergence.

When a slow query exists in the Engine layer, the request returns slowly, which means:

When max_conn in each client connection pool is set to 50, callbacks return slowly.

Here are some solutions for implementing asynchronous interfaces based on the Redis protocol:

Use Multi-Exec and ping to encapsulate a request such as the set k v command in the following form:

multi

ping {id}

set k v

exec

The server will return the following code:

{id}

OK

This tricky method uses the atomicity of Multi-Exec and the fact that the ping command returns its parameter unmodified to “attach” message IDs to the protocol. However, in practice, essentially no client uses this method.
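A minimal sketch of the wrapping and unwrapping steps, assuming the reply has the two-element shape shown above. `wrap_with_id` and `unwrap_reply` are hypothetical helper names, and no server is contacted: the reply is written by hand.

```python
from typing import List, Tuple


def wrap_with_id(message_id: str, command: str) -> List[str]:
    """Wrap one command in MULTI/EXEC with a PING that carries the ID."""
    return ["multi", f"ping {message_id}", command, "exec"]


def unwrap_reply(exec_reply: List[str]) -> Tuple[str, str]:
    """Split the EXEC result into (message_id, command_result)."""
    message_id, result = exec_reply
    return message_id, result


request = wrap_with_id("42", "set k v")
print(request)  # ['multi', 'ping 42', 'set k v', 'exec']

# Hand-written reply mirroring the text: PING echoes its argument and SET
# answers OK, and both are returned together by EXEC.
message_id, result = unwrap_reply(["42", "OK"])
print(message_id, result)  # 42 OK
```

With the ID echoed back, a client could in principle match replies to requests by ID instead of by order.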

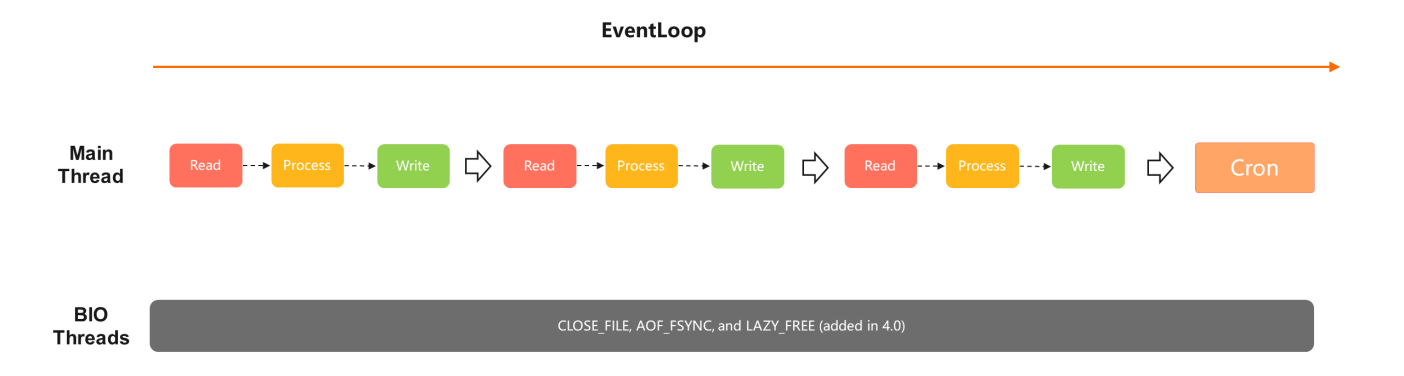

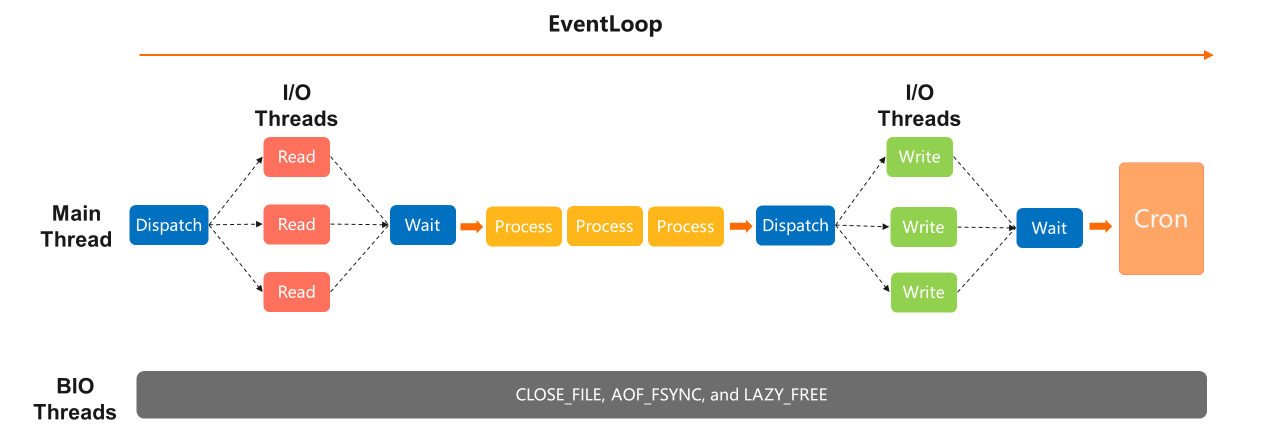

The model of the well-known versions up to Redis 5.x remains the same. All commands are processed by a single thread, and all reading, processing, and writing run in the same main I/O thread. Several BIO threads close or flush files in the background.

Redis 4.0 and later add LAZY_FREE, which allows certain big keys to be released asynchronously so that they do not block synchronous task processing. In contrast, Redis 2.8 gets stuck when evicting or expiring large keys. Therefore, Redis 4.0 or later is recommended.

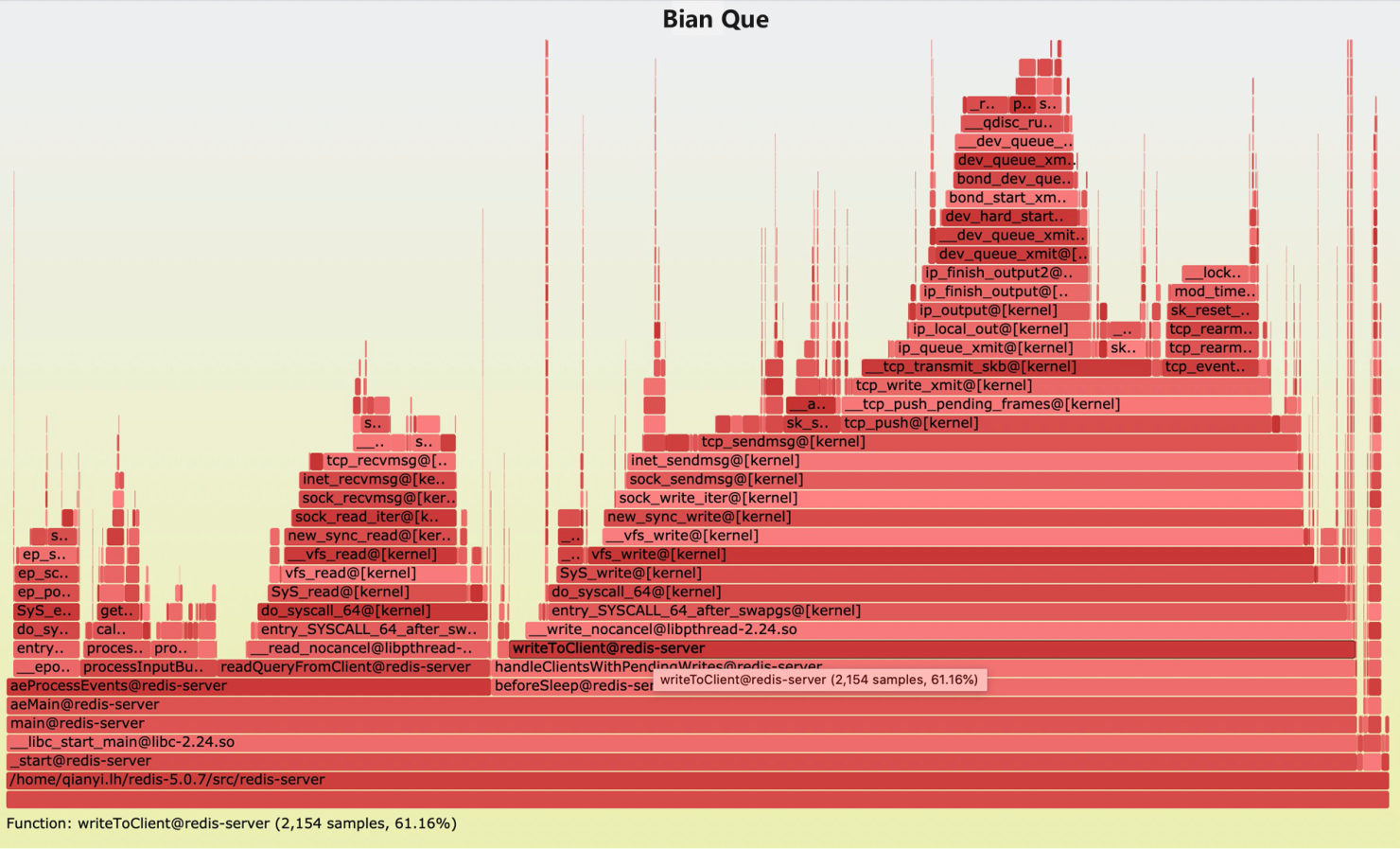

The figure shows the result of a performance analysis. The two parts on the left are command processing, the middle part is “reading,” and the rightmost part is “writing,” which accounts for 61.16%. Thus, we can tell that most of the time is spent on network I/O.

With the improved model of Redis 6.x, the “reading” task can be delegated to the I/O thread for processing after readable events are triggered in the main thread. After the reading task finishes, the result is returned for further processing. The “writing” task can also be distributed to the I/O thread, which improves the performance.

With only one thread executing commands, the improvement is impressive for O(1) commands such as simple reads and writes. If the commands are complex, the single execution thread in the DB remains the bottleneck, and the improvement is rather limited.

Another problem lies in time consumption. After distributing each “reading” and “writing” task, the main thread has to wait for the result. It therefore idles for long periods during which it cannot serve requests, so further improvements to the Redis 6.x model are expected.

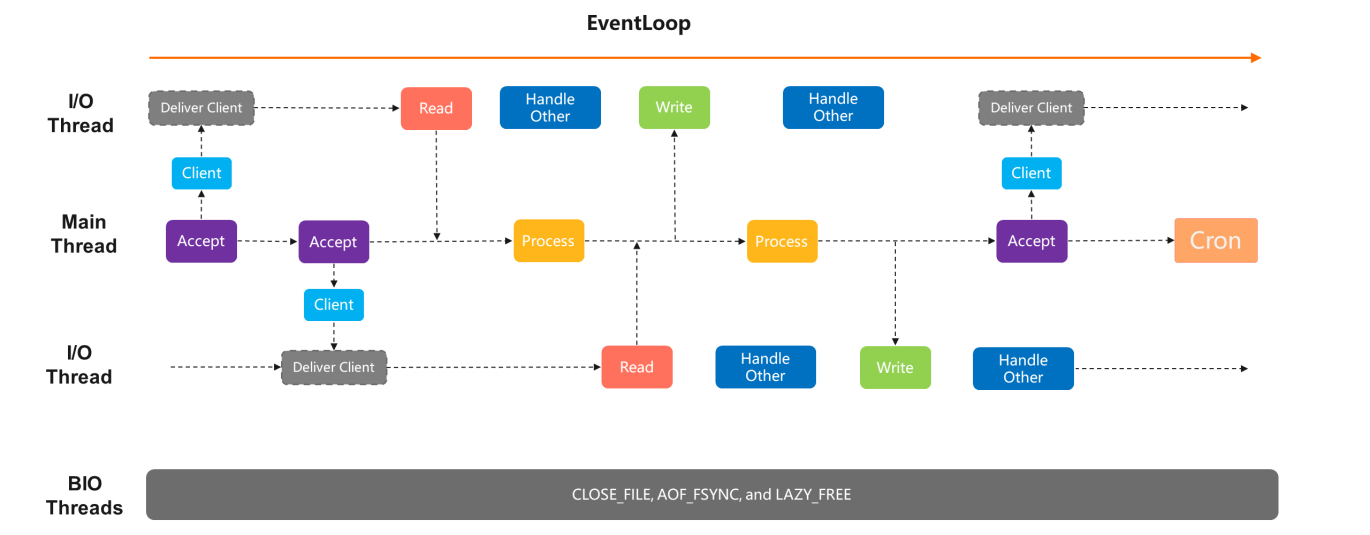

The model of Alibaba Cloud Redis Enterprise Edition splits the entire event into parts. The main thread is only responsible for command processing, while all reading and writing tasks are processed by the I/O thread. Connections are no longer retained only in the main thread. The main thread only needs to read once after the event starts. After the client is connected, the reading tasks are handed over to other I/O threads. Thus, the main thread does not care about readable and writable events from clients.

When a command arrives, the I/O thread will forward the command to the main thread for processing. Later, the processing result will be passed to the I/O thread through notification for writing. By doing so, the waiting time of the main thread is reduced as much as possible to enhance the performance further.
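The division of labor can be sketched with ordinary threads and queues. This is a toy model under several assumptions, not the Enterprise Edition implementation: "reading" and "writing" are reduced to queue operations, each I/O thread waits for one reply at a time instead of being event-driven, and all names are invented for illustration.

```python
import queue
import threading
from typing import Dict, List, Tuple

STOP = None  # sentinel that tells the main thread loop to exit
Command = Tuple[str, str, str]  # (operation, key, value)


def main_thread(commands: queue.Queue, reply_queues: List[queue.Queue]) -> None:
    """Single command-processing thread: the only code that touches the data."""
    data: Dict[str, str] = {}
    while True:
        item = commands.get()
        if item is STOP:
            break
        io_id, conn_id, (op, key, value) = item
        if op == "set":
            data[key] = value
            result = "OK"
        else:  # "get"
            result = data.get(key, "(nil)")
        reply_queues[io_id].put((conn_id, result))  # hand the write back


def io_thread(io_id: int, requests: List[Tuple[int, Command]],
              commands: queue.Queue, replies: queue.Queue,
              written: List[Tuple[int, str]]) -> None:
    """Reads requests for its own connections and writes their replies."""
    for conn_id, command in requests:
        commands.put((io_id, conn_id, command))  # "read", then forward
        written.append(replies.get())            # get the result, then "write"


commands: queue.Queue = queue.Queue()
reply_queues = [queue.Queue(), queue.Queue()]
written: List[List[Tuple[int, str]]] = [[], []]
workloads = [
    [(100, ("set", "k0", "v0")), (100, ("get", "k0", ""))],
    [(200, ("set", "k1", "v1")), (200, ("get", "k1", ""))],
]

main = threading.Thread(target=main_thread, args=(commands, reply_queues))
ios = [
    threading.Thread(target=io_thread,
                     args=(i, workloads[i], commands, reply_queues[i], written[i]))
    for i in range(2)
]
main.start()
for thread in ios:
    thread.start()
for thread in ios:
    thread.join()
commands.put(STOP)
main.join()
print(written)
```

Only `main_thread` accesses `data`, so command execution stays single-threaded while connection handling is spread across the I/O threads.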

The same disadvantage applies. Only one thread is used in command processing. The improvement is great for O(1) commands but not enough for commands that consume many CPU resources.

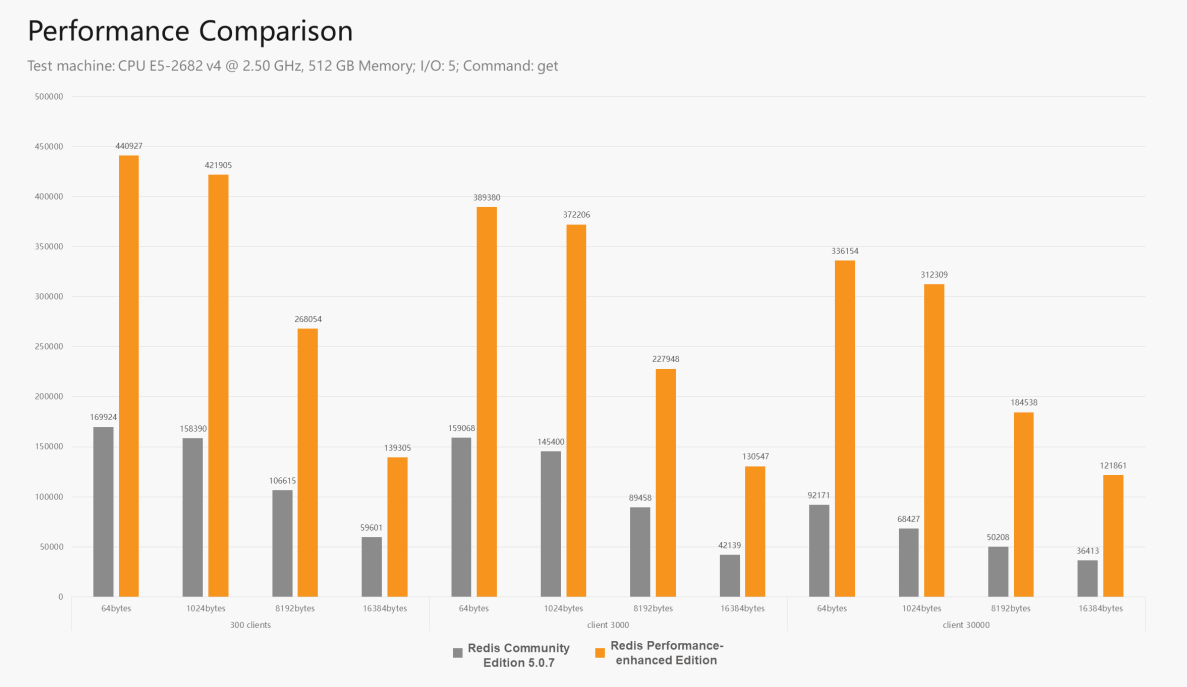

In the figure, the gray color on the left stands for Redis Community Edition 5.0.7, and the orange color on the right stands for Redis Performance-Enhanced Edition. The multi-thread performance of Redis 6.x is in between. The test uses a read command, which is bound by I/O rather than CPU, so the performance improvement is great. If the command consumes many CPU resources, the difference between the two editions shrinks to nothing.

It is worth mentioning that the Redis Community Edition 7 is in the planning phase. Currently, it is being designed to adopt a modification scheme similar to the one used by Alibaba Cloud. With this scheme, it can gradually approach the performance bottleneck of a single main thread.

Performance improvement is just one of the benefits. Another benefit of distributing connections to I/O threads is that the number of supported connections scales linearly: you can add I/O threads to handle more connections. Redis Enterprise Edition supports tens of thousands of connections by default. It can support even more, such as 50,000 or 60,000 persistent connections, which solves the problem of insufficient connections when the business layer scales out to a large number of machines.

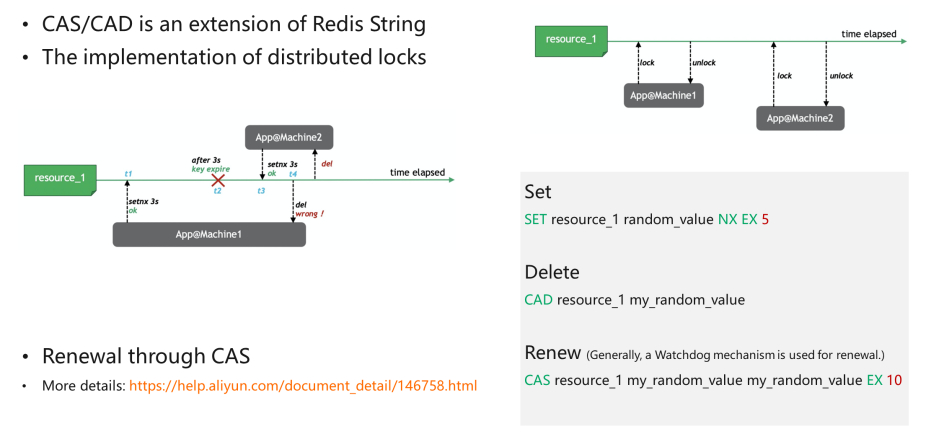

The string write command in Redis has a parameter called NX, which specifies that the string is written only if the key does not already exist. This naturally behaves like a lock and makes locking easy: take a random value and set it with the NX parameter, and atomicity is ensured.

The “EX” parameter sets an expiration time so that the lock is released if the business machine fails. Without it, a lock that is not released after the machine fails or is disabled for some reason would never be unlocked.

The parameter “5” is only an example. It does not have to be 5 seconds. The right value depends on the specific tasks performed by the business machine.
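The locking rule can be sketched without a server. `FakeRedis` below is an in-memory stand-in written for this example, not a real client: `set_nx_ex` mimics what `SET key value NX EX seconds` does, and the clock is advanced by hand. The key name `lock:order` is hypothetical.

```python
import uuid
from typing import Dict, Optional, Tuple


class FakeRedis:
    """In-memory stand-in for the string commands used here (not a client)."""

    def __init__(self) -> None:
        self.now = 0.0  # manual clock in seconds, advanced by the example
        self.data: Dict[str, Tuple[str, float]] = {}  # key -> (value, expires_at)

    def get(self, key: str) -> Optional[str]:
        entry = self.data.get(key)
        if entry is None or entry[1] <= self.now:
            self.data.pop(key, None)  # lazily drop an expired key
            return None
        return entry[0]

    def set_nx_ex(self, key: str, value: str, ttl: float) -> bool:
        """Mimics `SET key value NX EX ttl`: write only if the key is absent."""
        if self.get(key) is not None:
            return False
        self.data[key] = (value, self.now + ttl)
        return True


redis = FakeRedis()
token_a = uuid.uuid4().hex  # random value identifying holder A
token_b = uuid.uuid4().hex  # random value identifying holder B

a_locked = redis.set_nx_ex("lock:order", token_a, 5)   # A takes the lock
b_blocked = redis.set_nx_ex("lock:order", token_b, 5)  # B is refused
redis.now += 5                                         # A crashed; TTL runs out
b_locked = redis.set_nx_ex("lock:order", token_b, 5)   # B can take over
print(a_locked, b_blocked, b_locked)  # True False True
```

The random token matters later: it is what allows a holder to prove that the lock it wants to delete or renew is still its own.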

Removing a distributed lock can be troublesome. Here is a case. A machine holding the lock stalls or loses contact because of a sudden incident. Five seconds later, the lock has expired and another machine has acquired it, and then the once-failed machine becomes available again. If it finishes processing and simply deletes the Key, it removes a lock that does not belong to it. Therefore, deletion requires a check: the lock can be removed only when its value equals the value previously written. Currently, Redis does not have such a command, so Lua is usually used.

With the CAD (“Compare And Delete”) command, the Key is deleted only when the given value equals the value in the engine. For open-source CAS/CAD commands and TairString in Module form, please see this GitHub page. Users can load the modules directly to use these APIs on all Redis versions that support the Module mechanism.
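The rule that CAD enforces can be written in a few lines. The function below only illustrates the comparison on a plain dictionary. In Redis the check and the delete must run atomically on the server (through a CAD command or a script), which this sketch does not attempt to model. The tokens and key name are hypothetical.

```python
from typing import Dict


def compare_and_delete(store: Dict[str, str], key: str, expected: str) -> bool:
    """Delete key only if it still holds the value this caller wrote."""
    if store.get(key) == expected:
        del store[key]
        return True
    return False


# Machine A's lock expired and machine B now holds it with its own token.
store = {"lock:order": "token-b"}

late_release = compare_and_delete(store, "lock:order", "token-a")
print(late_release, store)  # False {'lock:order': 'token-b'}

owner_release = compare_and_delete(store, "lock:order", "token-b")
print(owner_release, store)  # True {}
```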

When locking, we set an expiration time, such as “5 seconds.” If a thread has not finished processing as the expiration approaches (for example, the transaction is still not completed after 3 seconds), a mechanism is required to renew the lock. As with deletion, we cannot renew unconditionally. Only when the value equals the value in the engine, that is, only when the lock is held by the current thread, can we renew it. This is a CAS operation. Similarly, if there is no such API, a Lua script is needed to renew the lock.
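Renewal follows the same compare-first rule. The sketch below keeps each lock as a (value, deadline) pair in a dictionary and passes the current time explicitly. `compare_and_renew` is a hypothetical helper, and the real operation would again have to be atomic on the server.

```python
from typing import Dict, Tuple


def compare_and_renew(store: Dict[str, Tuple[str, float]], key: str,
                      expected: str, now: float, ttl: float) -> bool:
    """Extend the deadline only if the caller still holds an unexpired lock."""
    entry = store.get(key)
    if entry is None or entry[1] <= now or entry[0] != expected:
        return False
    store[key] = (expected, now + ttl)
    return True


# Holder A locked at t=0 with a 5-second expiration.
store = {"lock:order": ("token-a", 5.0)}

renewed = compare_and_renew(store, "lock:order", "token-a", now=3.0, ttl=5.0)
stolen = compare_and_renew(store, "lock:order", "token-b", now=4.0, ttl=5.0)
too_late = compare_and_renew(store, "lock:order", "token-a", now=9.0, ttl=5.0)
print(renewed, stolen, too_late)  # True False False
```

After the successful renewal at t=3 the deadline moves to t=8, so the attempt at t=9 fails because the lock has already expired.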

Distributed locks are not perfect. As mentioned above, if the machine holding the lock is lost, the lock passes to another holder. When the lost machine suddenly becomes available again, its code does not re-check whether the lock is still held by the current thread, which may lead to re-entry into the protected section. Therefore, Redis distributed locks, as well as other distributed locks, are not 100% reliable.

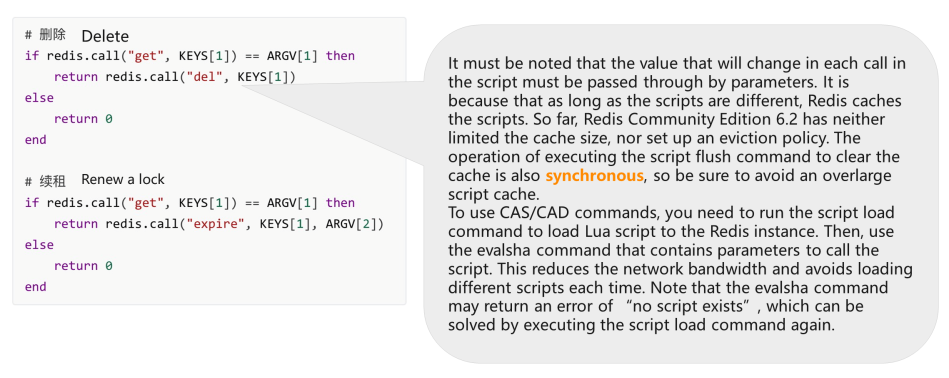

If no CAS/CAD command is available, a Lua script is needed to read the Key and renew the lock only if the two values are the same.

Note: Any value that changes from call to call must be passed to the script as a parameter, because Redis caches every distinct script. So far, Redis Community Edition 6.2 neither limits the cache size nor provides an eviction policy. Executing the script flush command to clear the cache is also a synchronous operation, so be sure to avoid an overlarge script cache. Alibaba Cloud engineers have contributed asynchronous cache deletion to the Redis Community Edition: the script flush async command is supported in Redis 6.2 and later versions.
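The effect on the cache can be estimated offline, since Redis identifies a cached script by the SHA1 digest of its text. The sketch below only hashes strings and contacts no server. The two script bodies are illustrative fragments, not scripts from the original article.

```python
import hashlib


def script_sha1(script: str) -> str:
    """Digest under which a script with this exact text would be cached."""
    return hashlib.sha1(script.encode("utf-8")).hexdigest()


# Anti-pattern: the changing value is pasted into the script text.
inlined = {
    script_sha1(f"return redis.call('get', 'lock') == 'token-{n}'")
    for n in range(1000)
}

# Preferred: the text is constant and values travel through KEYS and ARGV.
parameterized = {
    script_sha1("return redis.call('get', KEYS[1]) == ARGV[1]")
    for _ in range(1000)
}

print(len(inlined), len(parameterized))  # 1000 1
```

A thousand calls with inlined values would leave a thousand cache entries, while the parameterized form leaves one.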

To use the Lua implementation of CAS/CAD, first run the script load command to load the Lua script into the Redis instance. Then, call the script with the evalsha command and pass the parameters. This reduces network bandwidth and avoids loading different scripts each time. Note: The evalsha command may return a “no script exists” error, which can be solved by executing the script load command again.

More information about the Lua implementation of CAS/CAD is listed below:
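The original listing is not reproduced here. As a stand-in, the sketch below shows two commonly used Lua bodies for compare-and-delete and compare-and-renew, held as Python strings, together with the load-then-evalsha flow and the retry after a “no script exists” error. `FakeScriptServer` is a hypothetical in-memory stub that only tracks the script cache. It does not execute Lua, and its method names merely mirror the commands mentioned above.

```python
import hashlib
from typing import Dict, List

# Delete KEYS[1] only if it still holds ARGV[1].
CAD_LUA = """
if redis.call('get', KEYS[1]) == ARGV[1] then
    return redis.call('del', KEYS[1])
else
    return 0
end
"""

# Reset the expiration of KEYS[1] to ARGV[2] seconds only if it holds ARGV[1].
CAS_RENEW_LUA = """
if redis.call('get', KEYS[1]) == ARGV[1] then
    return redis.call('expire', KEYS[1], ARGV[2])
else
    return 0
end
"""


class NoScriptError(Exception):
    """Raised by the stub when a digest is not in its script cache."""


class FakeScriptServer:
    """Tracks cached scripts by SHA1; it does not run them."""

    def __init__(self) -> None:
        self.cache: Dict[str, str] = {}

    def script_load(self, script: str) -> str:
        sha = hashlib.sha1(script.encode("utf-8")).hexdigest()
        self.cache[sha] = script
        return sha

    def evalsha(self, sha: str, keys: List[str], args: List[str]) -> str:
        if sha not in self.cache:
            raise NoScriptError(sha)
        return f"ran {sha[:8]} keys={keys} args={args}"


def call_script(server: FakeScriptServer, script: str,
                keys: List[str], args: List[str]) -> str:
    """Try evalsha first and reload the script once if the cache lost it."""
    sha = hashlib.sha1(script.encode("utf-8")).hexdigest()
    try:
        return server.evalsha(sha, keys, args)
    except NoScriptError:
        server.script_load(script)  # cache was empty, e.g. after a restart
        return server.evalsha(sha, keys, args)


server = FakeScriptServer()
first = call_script(server, CAD_LUA, ["lock:order"], ["token-a"])
server.cache.clear()  # simulate a restart that wipes the script cache
second = call_script(server, CAD_LUA, ["lock:order"], ["token-a"])
print(first == second, len(server.cache))  # True 1
```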

Before executing a Lua script, Redis needs to parse and translate it first. Generally, using Lua in Redis is not recommended, for two reasons.

First, using Lua in Redis means calling Lua from C and then calling C from Lua. Each returned value is converted twice: from a value compatible with the Redis protocol to a Lua object, and then to C data.

Second, execution involves a lot of Lua script parsing and VM processing (including the memory used by the Lua VM), so it takes longer than ordinary commands. If Lua is used, keeping scripts simple, such as a single if statement, is highly recommended. Loops, duplicate operations, and massive data access and acquisition should be avoided as much as possible. Remember that the engine has only one thread. If most of the CPU resources are consumed by Lua script execution, few are left for business command processing.

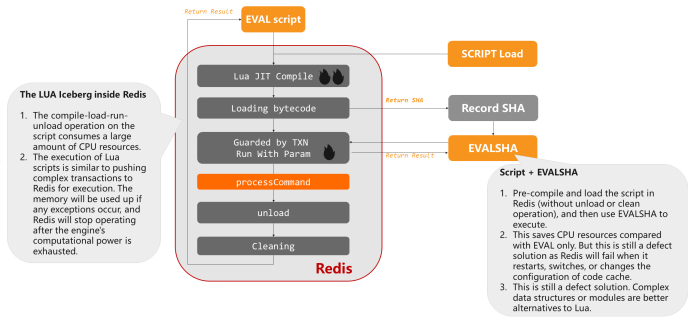

The compile-load-run-unload cycle of a script consumes a large amount of CPU resources. Executing Lua scripts amounts to pushing complex transactions into Redis for execution. If an exception occurs, memory can be depleted, and once the engine's computational power is exhausted, Redis stops operating.

Pre-compiling and loading the script into Redis (without an unload or clean operation) and executing it with EVALSHA saves CPU resources compared with using EVAL alone. However, this is still a flawed solution: the code cache is lost when Redis restarts, switches over, or changes its configuration, and the scripts then need to be reloaded. Complex data structures or modules are better alternatives to Lua.

A flash sale scenario is divided into three phases:

Snap-up/flash sale scenarios fundamentally concern highly concurrent reading and writing of hot spot data.

Snap-up/flash sale is a process of continuously pruning requests:

1. Less Data (Staticizing, CDN, frontend resource merge, dynamic and static data separation on page, and LocalCache)

Reduce the page's demand for dynamic content in every way possible. If the frontend page is mostly static, use CDN or other mechanisms to keep those requests from reaching the server. Thus, the requests on the server are greatly reduced in both number and size.

2. Short Path (Shorten frontend-to-end path as much as possible, minimize the dependency on different systems, and support throttling and degradation.)

On the path from the user side to the final backend, depend on as few business systems as possible. Bypass systems should compete for resources as little as possible, and each layer must support throttling and degradation. The prompts that the frontend shows after throttling or degradation also need to be optimized.

3. Single Point Prohibition (Achieve stateless application services scaling horizontally and avoid hot spots for storage services.)

Stateless scale-out must be supported everywhere in the service. Stateful storage services must avoid hot spots, generally some reading and writing hot spots.

The third method is commonly selected, as the first two both have defects. With the first one, it is difficult to prevent malicious orders that are never paid. With the second one, payment can fail because of insufficient inventory. The user experience of the first two methods is therefore very poor. Usually, the inventory is deducted in advance and released when the order times out. The TV framework will be used, and a security and anti-fraud mechanism will also be established.
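Deducting in advance and releasing on timeout can be sketched as a small in-memory model. The class name, the method names, and the 10-second hold are assumptions made for illustration. A real system would keep this state in shared storage and update it atomically.

```python
from typing import Dict


class WithheldInventory:
    """Stock is deducted when an order is placed and returned on timeout."""

    def __init__(self, stock: int, hold_seconds: float) -> None:
        self.stock = stock
        self.hold_seconds = hold_seconds
        self.holds: Dict[str, float] = {}  # order_id -> payment deadline

    def place_order(self, order_id: str, now: float) -> bool:
        if self.stock <= 0:
            return False
        self.stock -= 1  # deduct in advance
        self.holds[order_id] = now + self.hold_seconds
        return True

    def pay(self, order_id: str, now: float) -> bool:
        deadline = self.holds.get(order_id)
        if deadline is None or now > deadline:
            return False
        del self.holds[order_id]  # the deduction becomes permanent
        return True

    def release_expired(self, now: float) -> int:
        expired = [o for o, deadline in self.holds.items() if deadline < now]
        for order_id in expired:
            del self.holds[order_id]
            self.stock += 1  # give the unit back to other buyers
        return len(expired)


inventory = WithheldInventory(stock=1, hold_seconds=10)
first_order = inventory.place_order("o1", now=0)    # takes the only unit
second_order = inventory.place_order("o2", now=1)   # refused: nothing left
released = inventory.release_expired(now=11)        # o1 was never paid
retry_order = inventory.place_order("o2", now=12)   # succeeds after release
paid = inventory.pay("o2", now=15)
print(first_order, second_order, released, retry_order, paid)
```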

1. String Structure

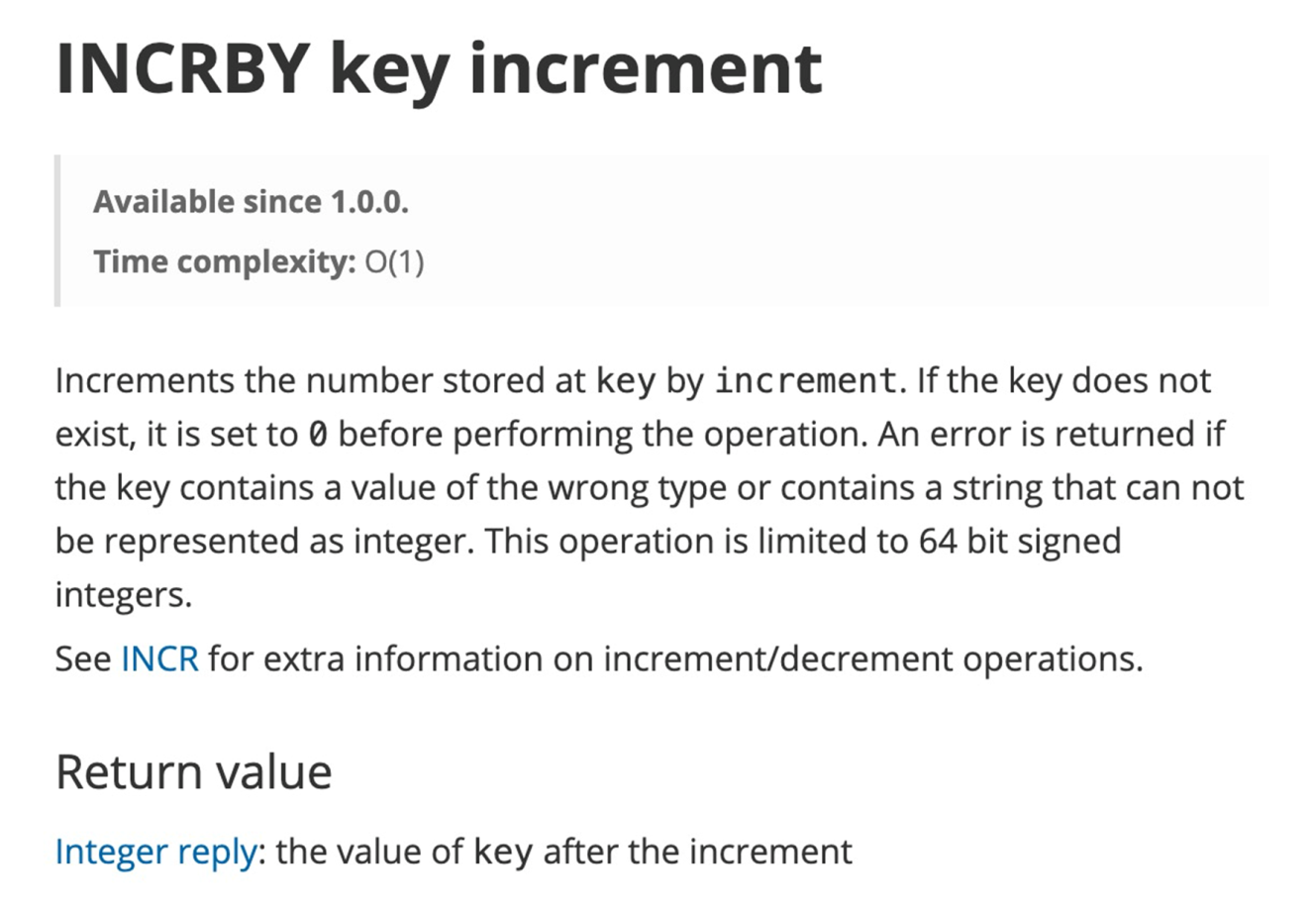

Use incr/decr/incrby/decrby directly. Note: Currently, Redis does not support upper and lower bound limits.

2. List Structure

Use the lpop or rpop command to deduct inventory until nil (key not exist) is returned.

The List structure has some disadvantages. For example, it occupies more memory, and if multiple inventory units are deducted at a time, lpop needs to be called multiple times, which affects the performance.

3. Set/Hash Structure

Use hincrby to count and hget to judge the purchased quantity.

4. (If Service Scenarios Allow) Multiple Keys (key_1, key_2, key_3...) for Hot Commodities
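One way to picture the multiple-key approach is to split the stock of a hot commodity across several counters and send each buyer to one of them. The sketch below is an assumption-laden illustration on a plain dictionary: the even split, the CRC32 routing by user ID, and the fallback to neighboring keys are choices made for this example, not details taken from the original article.

```python
import zlib
from typing import Dict


def split_stock(item: str, total: int, shards: int) -> Dict[str, int]:
    """Spread total units over item_1 .. item_N as evenly as possible."""
    base, extra = divmod(total, shards)
    return {
        f"{item}_{i + 1}": base + (1 if i < extra else 0)
        for i in range(shards)
    }


def deduct(stock: Dict[str, int], item: str, user_id: str, shards: int) -> bool:
    """Take one unit, starting from the key chosen by the user's hash."""
    start = zlib.crc32(user_id.encode("utf-8")) % shards
    for offset in range(shards):
        key = f"{item}_{(start + offset) % shards + 1}"
        if stock[key] > 0:
            stock[key] -= 1
            return True
    return False  # every key is empty: sold out


stock = split_stock("key", total=10, shards=3)
print(stock)  # {'key_1': 4, 'key_2': 3, 'key_3': 3}

outcomes = [deduct(stock, "key", f"user-{n}", 3) for n in range(11)]
print(outcomes.count(True), outcomes[-1])  # 10 False
```

Because the keys can live on different shards, writes to one hot commodity no longer hit a single key, at the cost of an extra lookup when the first key is empty.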

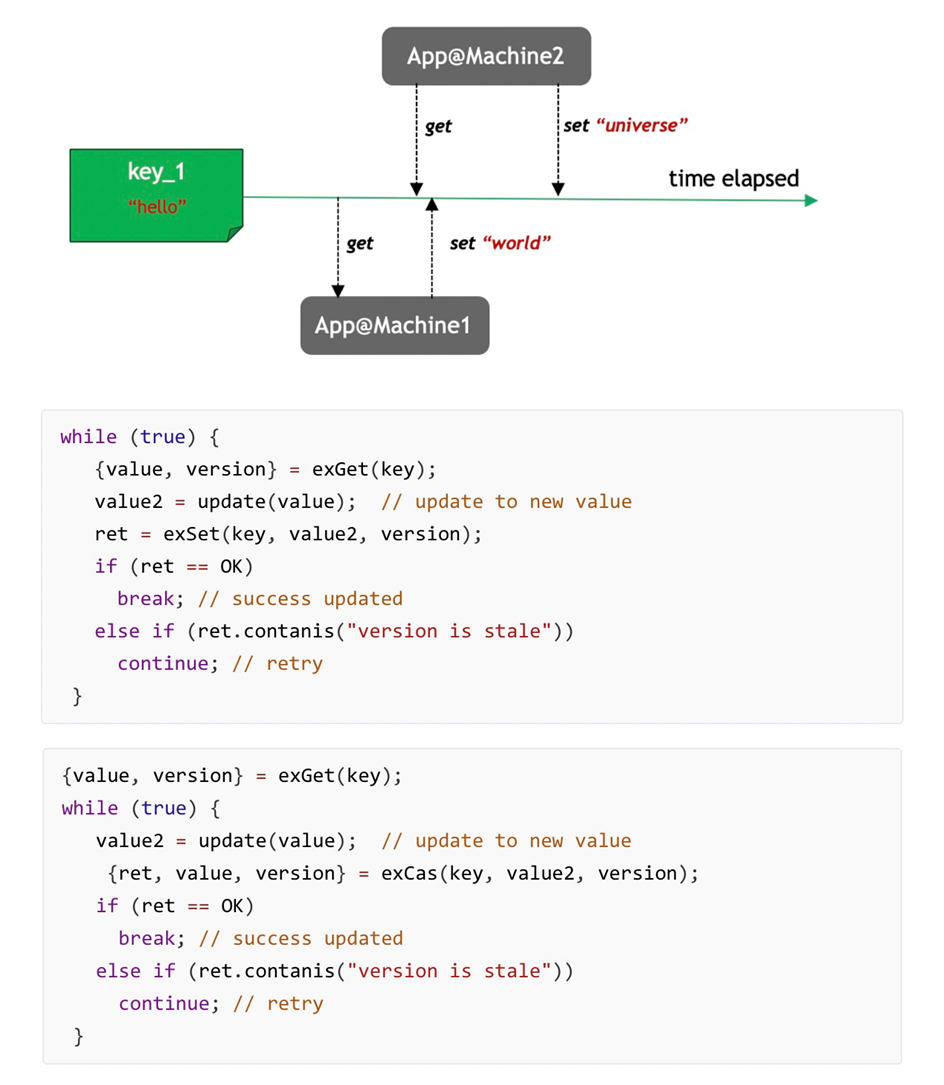

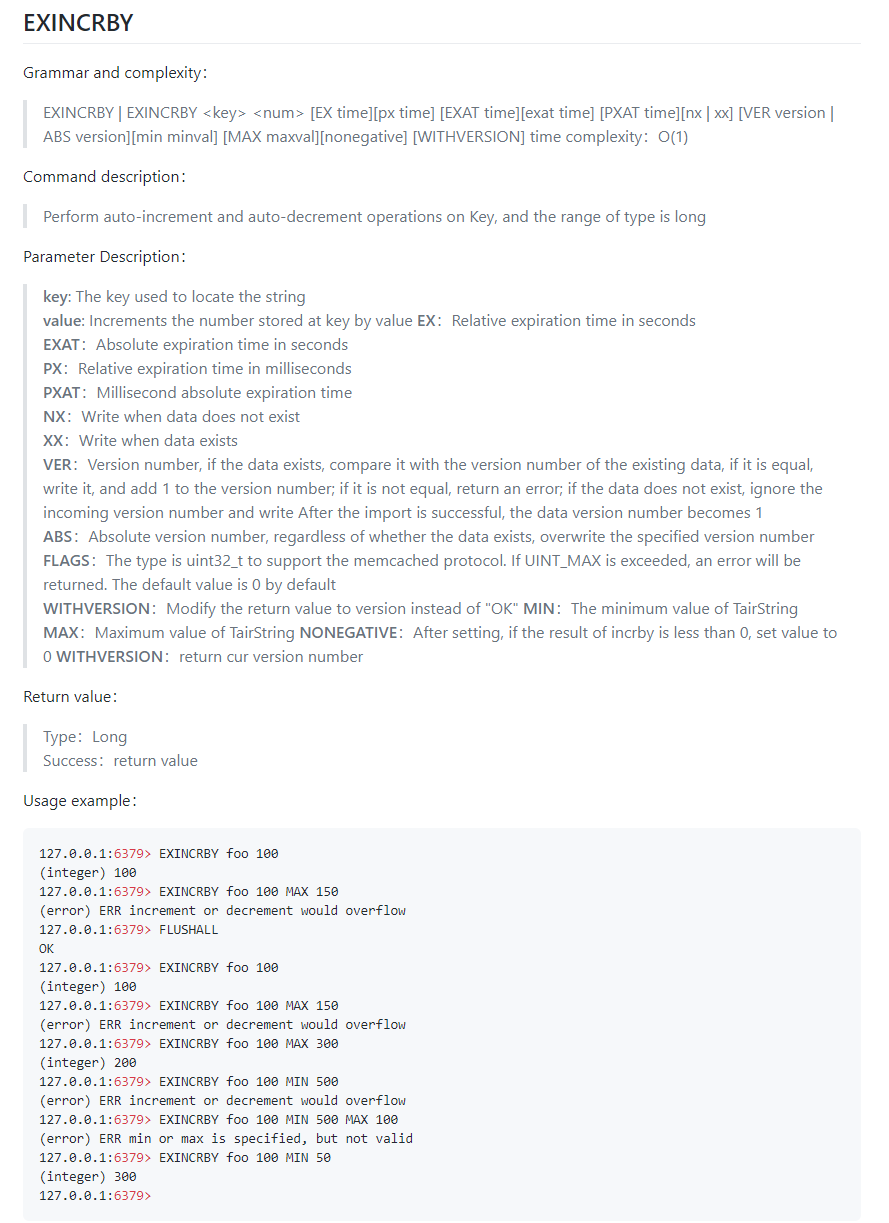

TairString, another structure in the module, extends Redis String with a Version and supports high-concurrency CAS. The Version value enables optimistic locking during reads and writes. Note: This String structure is a different type and cannot be used together with the ordinary String structure in Redis.

As shown in the above figure, the client first calls exGet on a TairString, which returns (value, version). It then operates on the value and writes it back with the version it read. If the versions match, the update succeeds. Otherwise, the client re-reads and modifies the value before updating again, which implements the CAS operation. This is called an optimistic lock on the server.

To optimize the scenario above, you can apply the exCAS interface. Similar to exSet, exCAS fails on a version conflict, but it returns the version mismatch error together with the latest value and its version, which saves another API call. By applying exCAS after exSet, and performing exSet -> exCAS again when the API call fails, network interaction is reduced. Thus, the access volume to Redis is reduced.
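The version check and the retry loop can be sketched with an in-memory class. `VersionedStore` is a stand-in written for this example and is not the TairString API: the method names only echo exSet, exGet, and exCAS, and the return shapes are assumptions.

```python
from typing import Dict, Tuple


class VersionedStore:
    """In-memory stand-in for a string that carries a version number."""

    def __init__(self) -> None:
        self.data: Dict[str, Tuple[str, int]] = {}  # key -> (value, version)

    def ex_set(self, key: str, value: str) -> int:
        _, version = self.data.get(key, ("", 0))
        self.data[key] = (value, version + 1)  # every write bumps the version
        return version + 1

    def ex_get(self, key: str) -> Tuple[str, int]:
        return self.data[key]

    def ex_cas(self, key: str, new_value: str, version: int) -> Tuple[bool, str, int]:
        current_value, current_version = self.data[key]
        if version != current_version:
            # Conflict: report the latest value and version to the caller.
            return False, current_value, current_version
        self.data[key] = (new_value, current_version + 1)
        return True, new_value, current_version + 1


def decrement_stock(store: VersionedStore, key: str) -> int:
    """Optimistic decrement: retry with the data returned by a failed CAS."""
    value, version = store.ex_get(key)
    while True:
        ok, value, version = store.ex_cas(key, str(int(value) - 1), version)
        if ok:
            return int(value)
        # The failed reply already carries the latest value and version,
        # so no extra read is needed before the next attempt.


store = VersionedStore()
store.ex_set("stock", "10")              # version 1
_, stale_version = store.ex_get("stock")
store.ex_set("stock", "9")               # another writer gets in: version 2

conflict = store.ex_cas("stock", "9", stale_version)
print(conflict)                          # (False, '9', 2)
print(decrement_stock(store, "stock"))   # 8
print(store.ex_get("stock"))             # ('8', 3)
```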

TairString is a string that supports high-concurrency CAS.

Version-Carried String

More Semantics

exIncr/exIncrBy: Snap-up/flash sale (with upper and lower bounds)

exSet -> exCAS: Reduce network interactions

The String is based on the INCRBY method with no upper or lower bound, and the exString is based on the EXINCRBY method, which provides various parameters including upper and lower bounds. For example, if the minimum value is set to 0, the value cannot be reduced once it equals 0. exString also supports specifying an expiration time. For example, a product can only be snapped up within a specified period and not after it expires. The business system also restricts the cache so that it is cleared after a specified time. If the inventory is limited, the goods are removed after ten seconds if no order is placed. If new orders keep coming, the cache is renewed. To achieve this, a parameter needs to be included in EXINCRBY to renew the cache each time INCRBY or the API is called. Thus, the hit rate can be improved.

As shown in the figure, a Lua script is suitable for Redis String. If the value returned by “get” on KEYS[1] is larger than “0,” the script runs “decrby” to subtract “1.” Otherwise, it returns the “overflow” error, so a value that has been reduced to “0” cannot be decreased further. In the example, ex_item is set to “3.” It can be decremented 3 times, and the “overflow” error is returned once the value has reached “0.”

exString is very simple to use. Users just need to exset a value and execute “exincrby k -1.” Remember that String and TairString are different. Their APIs cannot be used together.
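The bounded behavior described above can be mimicked on a dictionary. `bounded_incrby` is a hypothetical helper that illustrates the rule only. It is neither the EXINCRBY command nor the Lua script from the article, and raising `ValueError("overflow")` is a choice made for this example.

```python
from typing import Dict, Optional


def bounded_incrby(store: Dict[str, int], key: str, delta: int,
                   min_value: Optional[int] = None,
                   max_value: Optional[int] = None) -> int:
    """Apply delta only if the result stays within the given bounds."""
    new_value = store.get(key, 0) + delta
    if min_value is not None and new_value < min_value:
        raise ValueError("overflow")
    if max_value is not None and new_value > max_value:
        raise ValueError("overflow")
    store[key] = new_value
    return new_value


store = {"ex_item": 3}
outputs = []
for _ in range(4):
    try:
        outputs.append(str(bounded_incrby(store, "ex_item", -1, min_value=0)))
    except ValueError as error:
        outputs.append(str(error))
print(outputs)  # ['2', '1', '0', 'overflow']
```

The fourth attempt leaves the counter at 0, which is the property a flash sale needs: inventory can never go negative.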
