In the big data session of the 2017 Computing Conference Beijing Summit, Li Xuefeng, a senior technical expert from Alibaba Cloud, talked about multi-tenant isolation on a financial big data platform. He started his speech with the problems of tenant isolation in a traditional single-tenant IaaS architecture and then talked about the multi-tenant PaaS architecture of Alibaba Cloud MaxCompute and how MaxCompute implemented secure isolation. We will discuss these architecture details in this article.

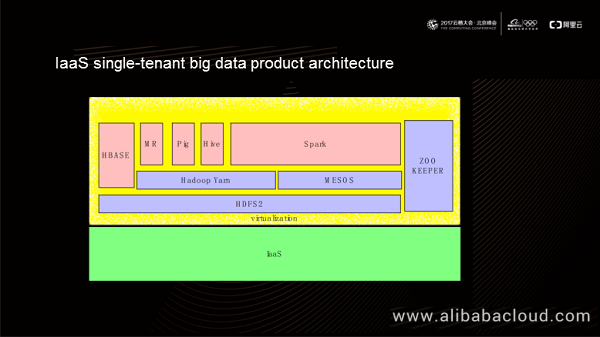

As shown in this figure, the bottom layer of the single-tenant big data product architecture is HDFS2, on which resource control platforms such as Hadoop YARN and Mesos reside. Specific computing models, such as MR, Hive, HBase, and Spark, run on top of the resource control platforms. In this ecosystem, an IaaS platform generally serves a single tenant. When new business requirements arise, the tenant applies for a batch of VM clusters on the IaaS platform and then deploys open-source products on those clusters. From the perspective of isolation, this ecosystem runs into the following problems:

First, the IaaS single-tenant big data product architecture has no unified logical model. Users must learn the specific logic of each product they use for data analysis: Hive's logic when using SQL, and Spark's logic when using Spark. The learning cost stays low when only a few products are involved, but it rises sharply when multiple products must be used together. In addition, different open-source products usually cannot recognize each other's logical models, which makes the problem worse in authentication scenarios.

Second, each open-source product has its own priority definition for job execution. When we use only one open-source product, jobs are executed according to that product's priority system. Higher-priority jobs obtain more resources than lower-priority ones, so their running durations are easier to estimate. However, when we have to use multiple open-source products together, the IaaS single-tenant big data architecture cannot optimize job execution priorities globally.

Lastly, open-source products often allow user-defined logic, such as UDFs in MR or Hive. Running user-defined code in big data products brings security risks. For example, Hadoop YARN isolates user-defined code only with Linux Containers. In this isolation mechanism, the user-defined code runs in the same kernel as the Hadoop process. If malicious code in the user-defined logic compromises the kernel, the big data product processes running in the same kernel are affected as well. A job of a big data product generally runs on most or even all machines in a cluster, depending on how widely its data is sharded, so the entire cluster becomes exposed. In an extreme case, if an attacker successfully exploits a kernel vulnerability on one machine and the job is sharded across enough machines, the entire computing cluster may break down.

To address these problems, MaxCompute offers PaaS multi-tenancy capability using a proprietary system architecture.

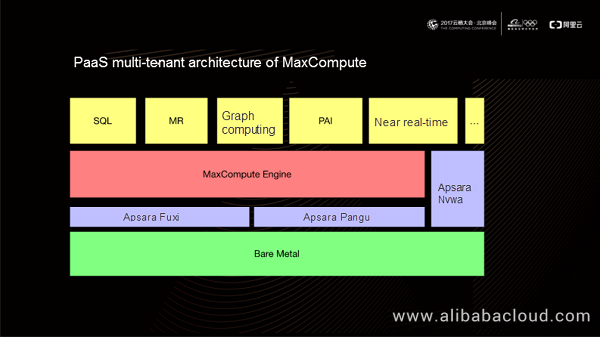

In the above PaaS multi-tenant architecture diagram, MaxCompute runs over the Apsara operating system. It depends on the Apsara Fuxi module to provide unified resource control, the Apsara Pangu module to provide unified storage, and the Apsara Nvwa module to provide consistency service. MaxCompute uses the same computing engine to offer multiple computing models, including SQL, MR, graph computing, PAI, and near real-time.

Now, this computing engine offers qualified computing capabilities for financial users on the public cloud.

MaxCompute uses the following methods to implement multi-tenancy:

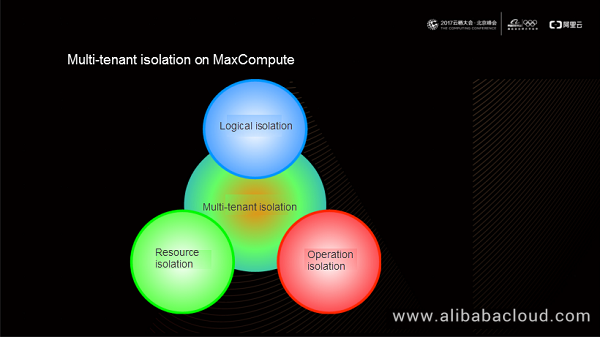

We will now discuss these three isolation mechanisms offered by MaxCompute in detail.

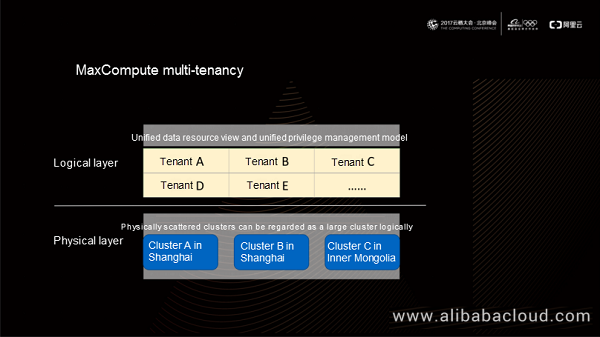

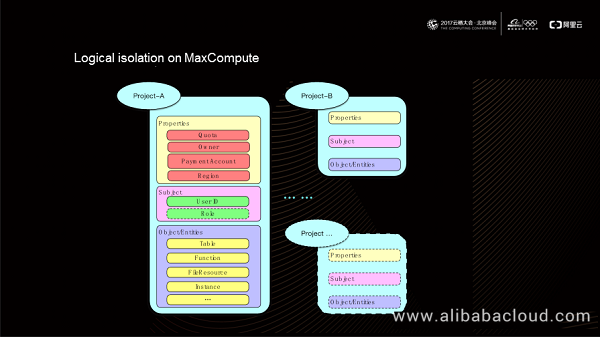

Currently, a MaxCompute instance provides a unified tenant system, no matter how many physical clusters the instance is running on. In this tenant system, the data resource view and privilege management model for the same tenant is unique and bound to the tenant model. In real-world applications, a tenant on MaxCompute maps to a project, which contains all resources, properties, and privileges of the tenant.

As shown in the preceding figure, a project consists of three parts: properties, subject, and object. Properties of a project include information such as quota, owner, payment account, and region. All authorized accesses in a project must use the user IDs as the subject, based on which MaxCompute offers a role model for authority aggregation. The resources we have used in the above-mentioned computing models (such as MR and Hive) are all mapped to a specific object in a project. For example, resources in the SQL model are table objects, and resources in the UDF model are function objects.

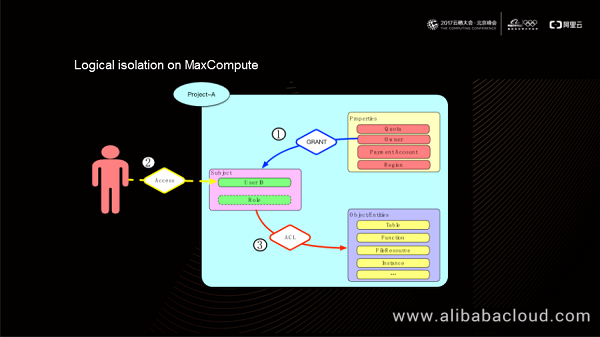

Based on the preceding logical model, MaxCompute offers a set of authentication and authorization mechanisms for privilege control. A project owner has all the privileges over the project. Any user who wants to use this project for computing must get authorization from the project owner first (using the GRANT statement). When accessing the project, a user submits its user ID as the user identity to perform operations such as reading and writing tables, creating functions, or adding and deleting resources. MaxCompute uses a unified ACL logic to determine whether the current user ID has the required privilege before it allows execution of such operations.
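The project model and ACL check described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the `Project` class, its fields, and the `grant` and `is_allowed` methods are hypothetical names invented here, not MaxCompute's API, and the real ACL logic is certainly richer.

```python
from dataclasses import dataclass, field


@dataclass
class Project:
    """Hypothetical stand-in for a tenant's project (not a MaxCompute API)."""
    name: str
    owner: str
    # role name -> set of (object, action) privileges aggregated by that role
    role_privileges: dict = field(default_factory=dict)
    # user ID -> set of role names assigned to that user
    user_roles: dict = field(default_factory=dict)
    # user ID -> set of (object, action) privileges granted directly
    user_privileges: dict = field(default_factory=dict)

    def grant(self, grantor, user_id, obj, action):
        """Grant one privilege to a user; only the owner may grant in this sketch."""
        if grantor != self.owner:
            raise PermissionError(f"{grantor} is not the owner of {self.name}")
        self.user_privileges.setdefault(user_id, set()).add((obj, action))

    def is_allowed(self, user_id, obj, action):
        """Unified check: owner, then direct grants, then role grants."""
        if user_id == self.owner:
            return True
        if (obj, action) in self.user_privileges.get(user_id, set()):
            return True
        return any(
            (obj, action) in self.role_privileges.get(role, set())
            for role in self.user_roles.get(user_id, set())
        )


project = Project(name="finance", owner="alice")
project.grant("alice", "bob", "table:orders", "select")
print(project.is_allowed("bob", "table:orders", "select"))  # granted by owner
print(project.is_allowed("bob", "table:orders", "update"))  # never granted
```

The point of the sketch is that every operation, whatever the computing model, goes through the same check against the same project-scoped objects.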

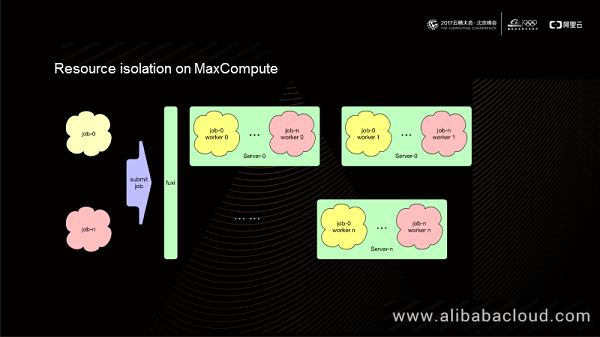

The computing engine of MaxCompute depends on the Apsara operating system to offer resource operation and isolation capabilities.

As shown in the preceding figure, when we submit Job-0 to Job-n to the Apsara Fuxi module, the Fuxi scheduling system assigns operation levels to these jobs based on operation levels of users. The operation levels correspond to properties in a project. The Fuxi module converts Job-0 to Job-n into Fuxi jobs and then dispatches them to nodes of a computing cluster. Finally, a server in the computing cluster runs jobs of multiple tenants simultaneously. All these jobs run as Fuxi workers.
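As a rough illustration of ordering jobs by a level taken from project properties, the sketch below uses a priority queue. The article does not describe how Fuxi actually schedules, so the function `dispatch_order`, the convention that a lower number means a higher level, and the first-in-first-out tie-breaking are all assumptions made for this example.

```python
import heapq
from itertools import count


def dispatch_order(jobs, project_level):
    """Return job names in dispatch order.

    jobs: list of (job_name, project_name) in submission order.
    project_level: project_name -> level, where a lower number is served first
    (an assumed convention). Jobs with equal levels keep submission order.
    """
    sequence = count()  # tie-breaker that preserves submission order
    heap = []
    for job_name, project_name in jobs:
        heapq.heappush(heap, (project_level[project_name], next(sequence), job_name))
    order = []
    while heap:
        _, _, job_name = heapq.heappop(heap)
        order.append(job_name)
    return order


jobs = [("Job-0", "batch"), ("Job-1", "risk"), ("Job-2", "batch")]
print(dispatch_order(jobs, {"risk": 0, "batch": 5}))
```

Because the level comes from the project rather than from each computing model, jobs from different models can be ordered in one global queue.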

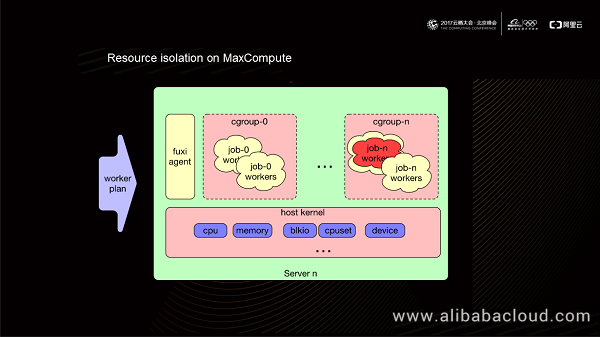

When the Fuxi engine on a machine in the cluster receives a worker plan, it sets the Cgroup parameter on this machine based on the quota of the user to which the worker belongs. In this way, jobs submitted by different users run with different Cgroup parameter settings on the physical machine. Currently, MaxCompute leverages the Cgroup capability of the Linux kernel to allocate CPU, memory, and other resources to a certain process on a physical machine.
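To make the quota-to-cgroup mapping concrete, here is a minimal sketch that turns a per-user quota into cgroup settings. It assumes the cgroup v2 interface files `cpu.max` and `memory.max`; the article does not say which cgroup version or controllers MaxCompute uses, and the function name and quota fields are hypothetical. The sketch only computes the values and does not write to the cgroup filesystem.

```python
def cgroup_v2_settings(cpu_cores, memory_mib, period_us=100_000):
    """Translate a quota into cgroup v2 file contents (assumed interface).

    cpu.max holds "<quota_us> <period_us>": the group may use quota_us of CPU
    time in every period_us window. memory.max holds a limit in bytes.
    """
    quota_us = int(cpu_cores * period_us)
    return {
        "cpu.max": f"{quota_us} {period_us}",
        "memory.max": str(memory_mib * 1024 * 1024),
    }


# A worker belonging to a user with a 2-core, 4 GiB quota (illustrative numbers).
print(cgroup_v2_settings(cpu_cores=2, memory_mib=4096))
```

On a real machine, an agent would write these values into the worker's cgroup directory before starting the process, so that workers of different users run under different limits.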

Finally, let's look at the operation isolation mechanism that MaxCompute provides to run user-defined logic securely. When the Fuxi module runs user-defined code, it launches an isolated environment and runs the code in an isolated process. To the Fuxi module, this process looks like any other process it manages, but the untrusted code inside it runs in an isolated system.

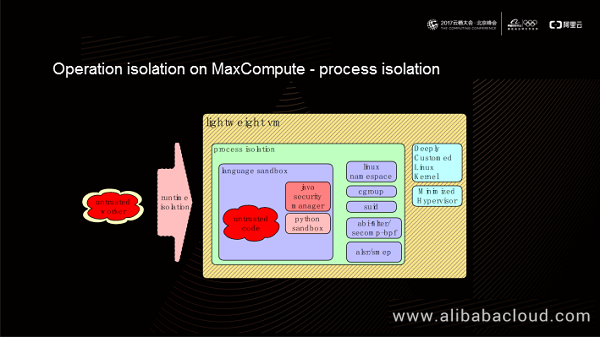

We can classify operation isolation further into process isolation, device isolation, and network isolation.

A single process running untrusted code (which may contain malicious code) may damage the computing platform. MaxCompute offers a nested isolation solution to prevent this potential security risk. The innermost layer consists of language-specific sandboxes, a Java sandbox and a Python sandbox. For example, the Java UDF sandbox can restrict which classes can be loaded, and the Python UDF sandbox can restrict specific functions. At the intermediate layer, MaxCompute isolates processes based on Linux kernel mechanisms, including namespaces, Cgroup, and seccomp-bpf. At the outermost layer, MaxCompute offers lightweight VMs (created in several hundred milliseconds) using a deeply customized Linux kernel and a minimized hypervisor. As a result, the untrusted code runs on a hypervisor over the physical machine. The Fuxi module treats the untrusted code as a hypervisor process, while the untrusted code itself runs in an isolated environment.
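The idea of a language-level restriction at the innermost layer can be illustrated with a small static check on Python source. This is not how MaxCompute's Python sandbox works (the article gives no implementation details); the function `find_violations` and the `FORBIDDEN_CALLS` list are assumptions made for this example. A check like this is easy to bypass on its own, which is one reason the kernel-level and VM layers sit outside it.

```python
import ast

# Illustrative deny-list of built-in calls; a real sandbox would be far stricter.
FORBIDDEN_CALLS = {"eval", "exec", "open", "compile", "__import__"}


def find_violations(source):
    """Return a sorted list of policy violations found in UDF source code."""
    violations = []
    for node in ast.walk(ast.parse(source)):
        if isinstance(node, (ast.Import, ast.ImportFrom)):
            violations.append(f"line {node.lineno}: import statement")
        elif (
            isinstance(node, ast.Call)
            and isinstance(node.func, ast.Name)
            and node.func.id in FORBIDDEN_CALLS
        ):
            violations.append(f"line {node.lineno}: call to {node.func.id}")
    return sorted(violations)


safe_udf = "def f(x):\n    return x + 1\n"
risky_udf = "import os\ndef f(p):\n    return open(p).read()\n"
print(find_violations(safe_udf))
print(find_violations(risky_udf))
```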

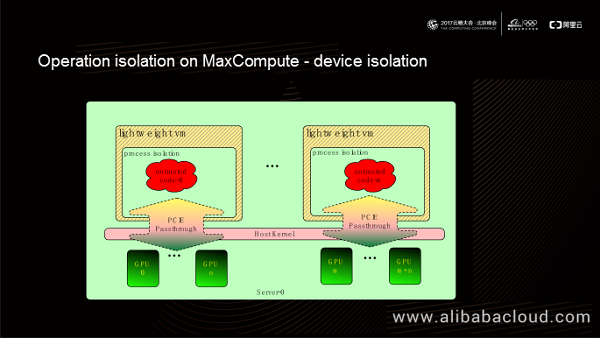

MaxCompute also supports hardware acceleration for user-defined code. For example, PAI supports direct GPU access. MaxCompute passes the GPU through to a VM in PCIe pass-through mode, which allows guest processes to access the GPU over the PCIe bus through the GPU driver in the guest kernel.

GPU access from a VM over the PCIe bus has a similar speed to GPU access from a physical machine. Also, this GPU access mode removes the need to install the GPU driver on a physical machine, thereby eliminating the impact of GPU driver on platform stability and reliability.

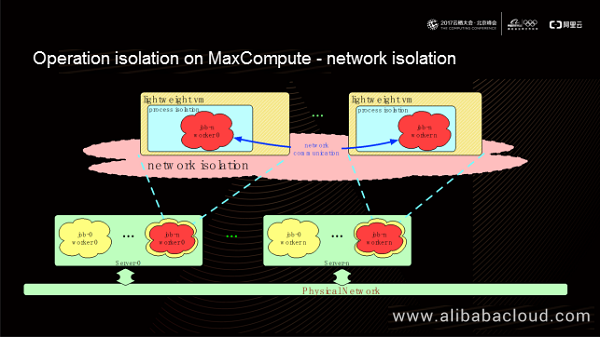

MaxCompute offers the network isolation capability for users' code logic on some products. It creates a virtual network between the VMs provisioned by the Fuxi module. These VMs can communicate directly through the virtual network, ensuring compatibility between open-source code running in the VMs. You can also see from the preceding figure that the user-defined code logic does not connect to the physical network directly, whereas the trusted code running on Fuxi, including the code in the MaxCompute framework, uses the physical network for communication. This guarantees a low communication latency in the MaxCompute framework.

We have discussed how Alibaba Cloud MaxCompute uses logical isolation, resource isolation, and operation isolation (covering process, device, and network isolation) to provide secure isolation for big data processing. You can learn more about MaxCompute and other Alibaba Cloud products and solutions at www.alibabacloud.com.
