By Zhou Zhengxi (Dianying), a technical expert at Alibaba Cloud who is interested in cloud native technologies and deeply involved in the OAM community

Open Application Model (OAM) classifies an application's workloads into three types: core, standard, and extended workloads. OAM platforms have different degrees of freedom in how they implement each type. The only core workload in OAM is the containerized workload (ContainerizedWorkload). It is container-based and can be regarded as a simplified Kubernetes Deployment, because it removes many fields that are irrelevant to business R&D, such as security-related Pod settings.
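For comparison with the Deployment used later in this article, a component built on the core containerized workload might look like the minimal sketch below. It assumes the core.oam.dev/v1alpha2 ContainerizedWorkload schema (the same API version as the ApplicationConfiguration later in this article) and reuses the zzxwill/foodtrucks-web:0.1.1 image; the component name food-trucks-web-component and the port name http are hypothetical.

```yaml
# Sketch only: assumes the OAM core.oam.dev/v1alpha2 ContainerizedWorkload schema.
# The component name and the port name are hypothetical.
apiVersion: core.oam.dev/v1alpha2
kind: Component
metadata:
  name: food-trucks-web-component
spec:
  workload:
    apiVersion: core.oam.dev/v1alpha2
    kind: ContainerizedWorkload
    metadata:
      name: food-trucks-web
    spec:
      containers:
        - name: food-trucks-web
          image: zzxwill/foodtrucks-web:0.1.1
          ports:
            - name: http          # ports are named in this schema
              containerPort: 5000
```

The developer only describes containers: there is no selector, label set, or Pod template to maintain, and the OAM runtime is expected to render the underlying Kubernetes objects.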

Many readers may wonder whether OAM supports Kubernetes built-in workloads. It does: as a Kubernetes-native application definition model, OAM supports them by default.

This article uses a Deployment as an example to show how to use OAM to define and manage cloud-native applications based on Kubernetes built-in workloads.

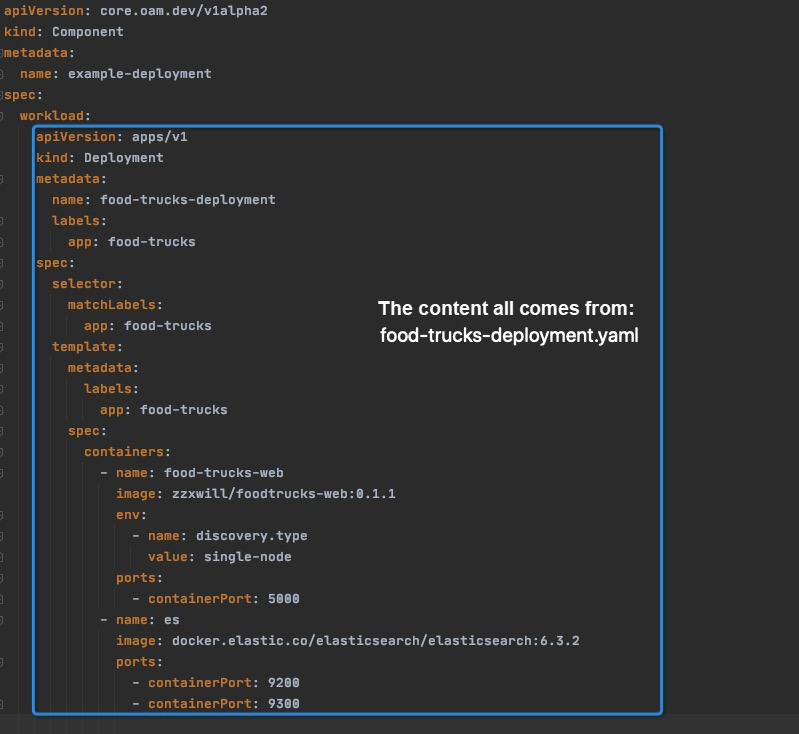

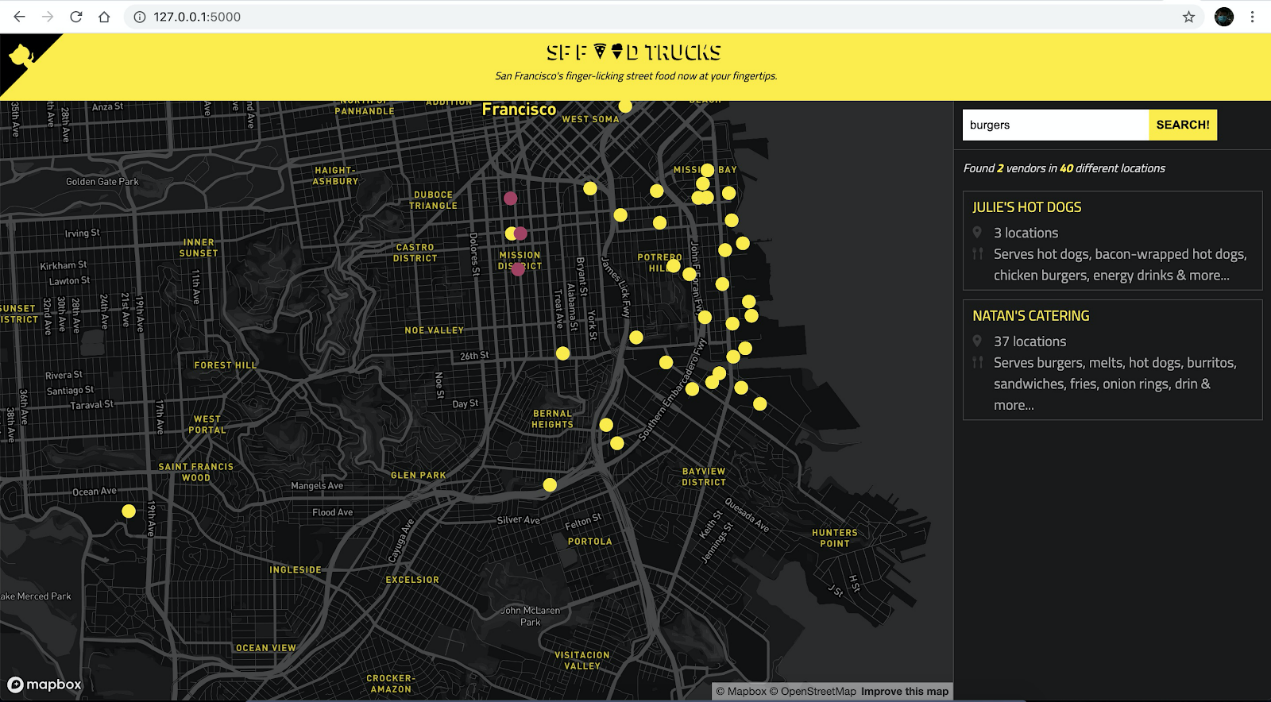

The example is based on the Food Trucks project on GitHub (a map of San Francisco street food). The web application is built into the zzxwill/foodtrucks-web:0.1.1 image and depends on an Elasticsearch image. Its default Deployment description file, food-truck-deployment.yaml, is as follows:

```yaml
apiVersion: apps/v1
kind: Deployment
metadata:
  name: food-trucks-deployment
  labels:
    app: food-trucks
spec:
  selector:
    matchLabels:
      app: food-trucks
  template:
    metadata:
      labels:
        app: food-trucks
    spec:
      containers:
        - name: food-trucks-web
          image: zzxwill/foodtrucks-web:0.1.1
          ports:
            - containerPort: 5000
        - name: es
          image: docker.elastic.co/elasticsearch/elasticsearch:6.3.2
          env:
            # Elasticsearch setting: run as a single-node cluster
            - name: discovery.type
              value: single-node
          ports:
            - containerPort: 9200
            - containerPort: 9300
```

After you submit the preceding YAML file to a Kubernetes cluster, you can run kubectl port-forward and view the application in a browser.

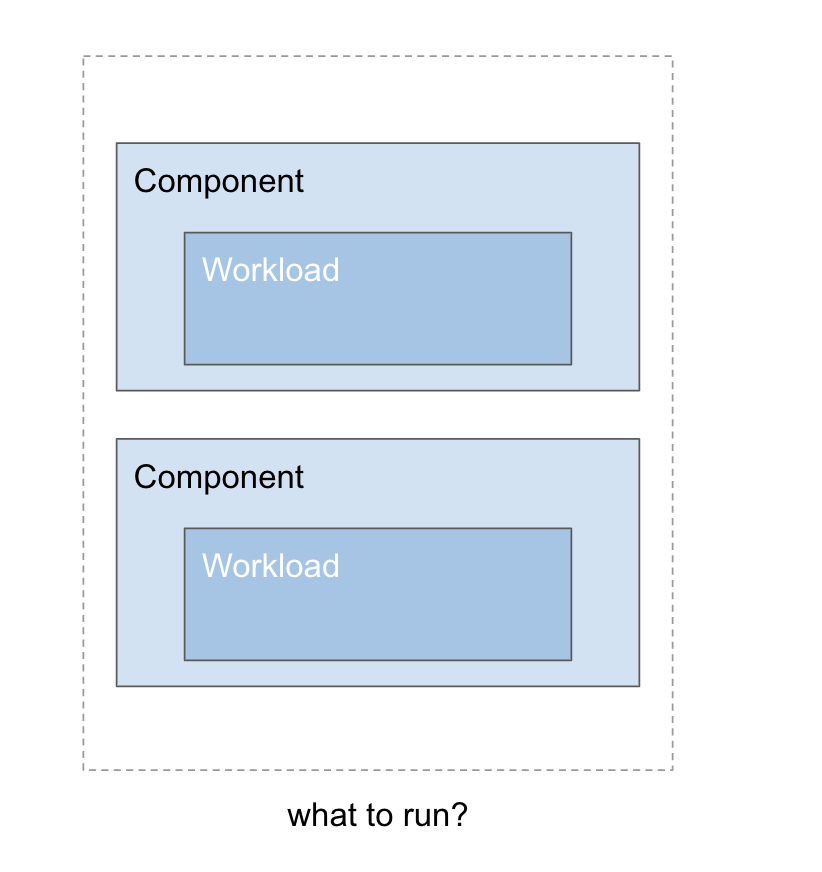

In OAM, an application consists of multiple components, and the core of a component is its workload.

Therefore, Kubernetes built-in workloads, such as Deployment and StatefulSet, can be used as the workload of an OAM component. In the sample-deployment-component.yaml file, the .spec.workload field contains a Deployment, specifically the one defined in the food-truck-deployment.yaml file.
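The content of sample-deployment-component.yaml is not reproduced in this text. A minimal sketch, assuming the core.oam.dev/v1alpha2 Component schema, embeds the Deployment under .spec.workload. The component name example-deployment matches the componentName in the ApplicationConfiguration and the kubectl output in this article; the discovery.type variable is placed on the es container, where Elasticsearch reads it.

```yaml
# Sketch of sample-deployment-component.yaml, assuming the OAM v1alpha2
# Component schema: a plain apps/v1 Deployment is embedded under .spec.workload.
apiVersion: core.oam.dev/v1alpha2
kind: Component
metadata:
  name: example-deployment        # referenced by componentName in the ApplicationConfiguration
spec:
  workload:
    apiVersion: apps/v1
    kind: Deployment
    metadata:
      name: food-trucks-deployment
      labels:
        app: food-trucks
    spec:
      selector:
        matchLabels:
          app: food-trucks
      template:
        metadata:
          labels:
            app: food-trucks
        spec:
          containers:
            - name: food-trucks-web
              image: zzxwill/foodtrucks-web:0.1.1
              ports:
                - containerPort: 5000
            - name: es
              image: docker.elastic.co/elasticsearch/elasticsearch:6.3.2
              env:
                - name: discovery.type   # Elasticsearch setting: single-node cluster
                  value: single-node
              ports:
                - containerPort: 9200
                - containerPort: 9300
```

Because the workload is an ordinary Deployment, everything Kubernetes accepts in a Deployment spec can be used here unchanged.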

Next, we submit this OAM component to a Kubernetes cluster for verification.

In OAM, an ApplicationConfiguration YAML file organizes all the components of an application. Because this example contains only one component, sample-applicationconfiguration.yaml is short:

```yaml
apiVersion: core.oam.dev/v1alpha2
kind: ApplicationConfiguration
metadata:
  name: example-deployment-appconfig
spec:
  components:
    - componentName: example-deployment
```

Submit the OAM component and the ApplicationConfiguration YAML files to Kubernetes:

```console
$ kubectl apply -f sample-deployment-component.yaml
component.core.oam.dev/example-deployment created
$ kubectl apply -f sample-applicationconfiguration.yaml
applicationconfiguration.core.oam.dev/example-deployment-appconfig created
```

If you check the status of example-deployment-appconfig, you will find the following error:

```console
$ kubectl describe applicationconfiguration example-deployment-appconfig
Name: example-deployment-appconfig
...
Status:
  Conditions:
    Message: cannot apply components: cannot apply workload "food-trucks-deployment": cannot get object: deployments.apps "food-trucks-deployment" is forbidden: User "system:serviceaccount:crossplane-system:crossplane" cannot get resource "deployments" in API group "apps" in the namespace "default"
    Reason: Encountered an error during resource reconciliation
...
```

The error occurs because the service account used by the OAM controller (system:serviceaccount:crossplane-system:crossplane) is not allowed to access Deployments in the apps API group. Therefore, do not forget to grant it the required permissions with a ClusterRole and a ClusterRoleBinding.
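The rbac.yaml file that follows grants every verb ("*") on Deployments, which is convenient for a proof of concept. If you prefer narrower permissions, a ClusterRole like the sketch below could replace the one in rbac.yaml. The verb list is an assumption about what the OAM controller needs in order to manage its workloads (read, watch, create, update, and delete), so check it against the controller version you run. Keeping the name deployment-clusterrole-poc lets the existing ClusterRoleBinding reference it unchanged.

```yaml
# Sketch: a narrower alternative to the "*" rule. The verb list is an
# assumption about what the OAM controller needs; verify it for your version.
apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRole
metadata:
  name: deployment-clusterrole-poc   # same name, so the ClusterRoleBinding still applies
rules:
  - apiGroups:
      - apps
    resources:
      - deployments
    verbs:
      - get
      - list
      - watch
      - create
      - update
      - patch
      - delete
```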

Submit the following authorization file, rbac.yaml. After it is applied, the ApplicationConfiguration can be executed successfully.

```yaml
apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRole
metadata:
  name: deployment-clusterrole-poc
rules:
  - apiGroups:
      - apps
    resources:
      - deployments
    verbs:
      - "*"
---
apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRoleBinding
metadata:
  name: oam-food-trucks
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: ClusterRole
  name: deployment-clusterrole-poc
subjects:
  - kind: ServiceAccount
    namespace: crossplane-system
    name: crossplane
```

Then view the Deployment and set up port forwarding:

✗ kubectl get deployments

NAME READY UP-TO-DATE AVAILABLE AGE

food-trucks-deployment 1/1 1 1 2m20s

✗ kubectl port-forward deployment/food-trucks-deployment 5000:5000

Forwarding from 127.0.0.1:5000 -> 5000

Forwarding from [::1]:5000 -> 5000

Handling connection for 5000

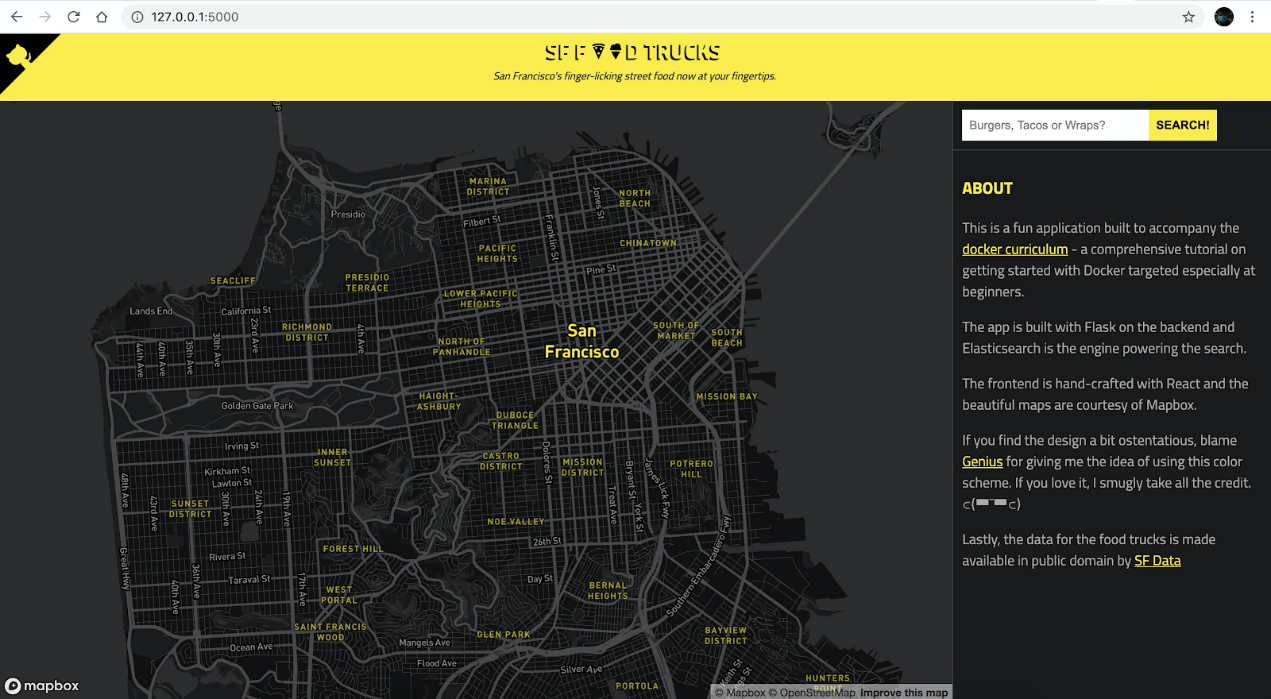

Handling connection for 5000Then, you can visit http://127.0.0.1:5000 to find hamburger shops on the San Francisco street food map.

If you've read this far, you may be wondering when to use a Deployment and when to use a containerized workload as the workload of an OAM component.

If your users want a simple Deployment without irrelevant fields, or if you want to hide fields that users should not touch (for example, you do not want R&D engineers to configure Pod security settings), you can expose a containerized workload to them. In this case, OAM traits, such as ManualScalerTrait, define the O&M operations and policies that the workload requires. This "separation of concerns" approach is also a best practice advocated by OAM.
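As an illustration of this split, the minimal sketch below attaches a ManualScalerTrait to a component that uses a containerized workload, so the replica count is set by O&M in the ApplicationConfiguration rather than by the developer in the component. It assumes the core.oam.dev/v1alpha2 schema; the ApplicationConfiguration, component, and trait names are hypothetical.

```yaml
# Sketch: O&M sets scaling through a trait, not in the component itself.
# Assumes the OAM v1alpha2 schema; all names below are hypothetical.
apiVersion: core.oam.dev/v1alpha2
kind: ApplicationConfiguration
metadata:
  name: food-trucks-appconfig
spec:
  components:
    - componentName: food-trucks-web-component   # a component using ContainerizedWorkload
      traits:
        - trait:
            apiVersion: core.oam.dev/v1alpha2
            kind: ManualScalerTrait
            metadata:
              name: food-trucks-web-scaler
            spec:
              replicaCount: 3                     # chosen by O&M
```

The developer-facing component stays unchanged when the replica count changes; only the ApplicationConfiguration is edited.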

If you do not need to hide O&M and security-related fields in a Deployment, you can expose the Deployment workload directly. OAM traits can still provide other O&M capabilities that the workload requires.

Since the workload in an OAM component can be any Kubernetes API object, you may wonder what benefits OAM brings to application definition.

When you build a Kubernetes-based application platform, you will encounter a series of difficulties, such as dependency management, version control, and phased release. In addition, if you use only Kubernetes native workloads, you cannot integrate the application platform with cloud resources.

OAM not only lets you describe cloud resources and applications in one place, but also helps you address dependency management, version control, and phased release. We will describe these solutions in detail in subsequent articles.


More Posts by Alibaba Cloud Native Community