The Alibaba Cloud 2021 Double 11 Cloud Services Sale is live now! For a limited time only you can turbocharge your cloud journey with core Alibaba Cloud products available from just $1, while you can win up to $1,111 in cash plus $1,111 in Alibaba Cloud credits in the Number Guessing Contest.

By Hanchao

In this internet era, personalized recommendations are common. Often, people are directed to links such as "You May Also Like" and "Related Products". Personalized recommendation algorithms and user-generated data are used to record and predict users' interests in real-time and provide recommendations based on their interests. Those algorithms have been introduced in a number of successful mobile apps such as news clients (ByteDance's Toutiao, NetEase News, and Alibaba's UC News) and e-commerce shopping clients (Pinduoduo, Taobao, and Tmall). In Alibaba Cloud, vector analysis provided by AnalyticDB for PostgreSQL helps implement personalized recommendation systems.

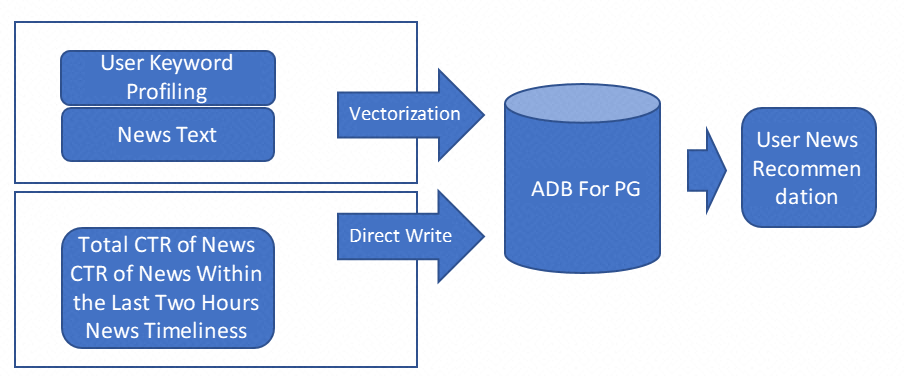

For example, a user reads a piece of news. A personalized news recommendation system uses the natural language processing (NLP) algorithm to extract keywords from the news title and body. Then the system uses the feature_extractor function in AnalyticDB for PostgreSQL to convert the keywords into news feature vectors and import the vectors to the vector library of AnalyticDB for PostgreSQL for news recommendations. Figure 1 shows the implementation process.

Figure 1. The overall framework of recommendation algorithms

1) Use the vector library in AnalyticDB for PostgreSQL to obtain user feature vectors: The system analyzes historical browsing data of users, constructs user profiles, and builds user preference models to obtain user feature vectors. Specifically, the system obtains details about the news read by users from their browsing logs and extracts keywords from each piece of the news to establish user profiles. If a user reads several pieces of National Basketball Association (NBA) playoff news, which contain keywords such as NBA, basketball, superstar, and sports, it indicates that the user is an NBA fan. The system uses the feature_extractor function in AnalyticDB for PostgreSQL to convert these keywords to vectors and import the vectors into the vector library of AnalyticDB for PostgreSQL to obtain the feature vector of the user.

2) Push news based on the vector library and the logistic regression prediction model of AnalyticDB for PostgreSQL: The system uses the vector library of AnalyticDB for PostgreSQL to retrieve the first 500 pieces of unread news which may interest the user. Then, it extracts the publish time and click-through rates (CTRs) of the 500 pieces of news and pushes news to the user based on the logistic regression prediction model. This model is obtained based on the browsing records of the user.

The feature_extractor function in AnalyticDB for PostgreSQL uses the Bidirectional Encoder Representations from Transformers (BERT) model. This model is trained based on a large number of corpora. It contains semantic information and has a higher query precision than the term frequency-inverse document frequency (TFIDF) algorithm.

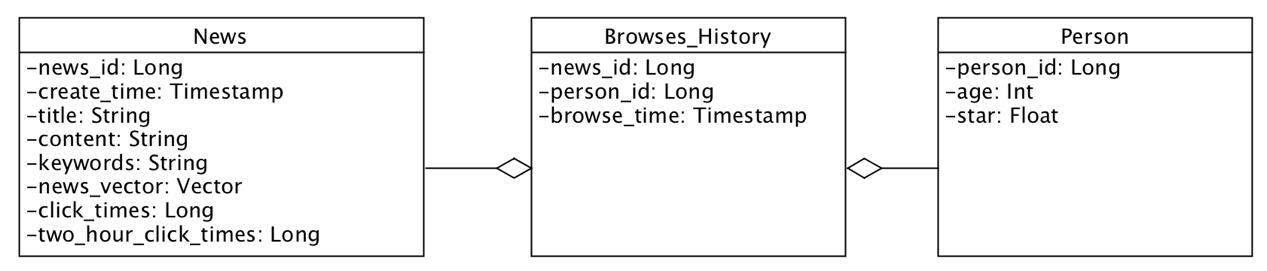

Figure 2 shows the schema of tables in AnalyticDB for PostgreSQL in the personalized news recommendation system. Note that the system contains three tables: news, person, and browses_history.

Figure 2. Schema of tables in AnalyticDB for PostgreSQL in a personalized recommendation system

Let's look at these three tables.

The news table stores information about news, including the news ID (news_id), publication time (create_time), title (title), body (content), total number of clicks (click_times), and number of clicks within two hours (two_hour_click_times). The personalized news recommendation system extracts keywords from the news title and the body and converts the keywords into a vector (news_vector). When data is inserted into the news table, the system automatically converts keywords into vectors and inserts the vectors and other news information into the news table.

CREATE TABLE news (

news_id bigint,

create_time timestamp,

title varchar(100),

content varchar(200),

keywords varchar(50),

click_times bigint,

two_hour_click_times bigint,

news_vector real[],

primary key (news_id)

) distributed by (news_id);The browses_history table stores information about news read by users, including the news ID (news_id), user ID (person_id), and reading time of the news (browse_time).

CREATE TABLE browses_history (

browse_id bigint,

news_id bigint,

person_id bigint,

browse_time timestamp,

primary key (browse_id)

) distributed by (browse_id);The person table stores user information, including the user ID (person_id), age (age), and star class (star).

CREATE TABLE person(

person_id bigint,

age bigint,

star float,

primary key (person_id)

) distributed by (person_id);The personalized news recommendation system uses the feature_extractor function in AnalyticDB for PostgreSQL to extract news feature vectors and import the vectors to the news table. For example, execute the SELECT statement to obtain the feature vector corresponding to the text "ADB For PG is very good!".

select feature_extractor('text', 'ADB For PG is very good!') ;The following figure shows a piece of news. The personalized news recommendation system stores the news information to the news table in two steps:

1) Extract news keywords. AnalyticDB for PostgreSQL does not support the extract_tags function. Instead, call the (jieba.analyse.extract_tags(title + content, 3)) function in the jieba NLP system to extract keywords.

2) Execute the INSERT statement to store news information, including the keywords and news feature vectors, to the news table.

insert into news(news_id, create_time, title, content,

keywords, click_times,two_hour_click_times)

values(1, now(),'South Korea military: North Korea launched a UFO eastward in the north Pyeongan Province','According to South Korea's Joint Staff Headquarters, at about 16:30 p.m local time today, North Korea launched a UFO eastward in the north Pyeongan Province.', 'South Korea, North Korea, UFO', 123, 3);Based on the news browsing logs of the users, their browsing keywords can be obtained easily. For example, by executing the SELECT statement, the return result shows that the value of person_id is 9527.

select keywords

from Person p, Browses_History bh, News n

where p.person_id = bh.person_id and bh.news_id = n.news_id and p.person_id = 9527;The system extracts all browsing keywords of users in the same way. For example, the user whose person_id is 9527 reads the news with the following keywords: NBA sports, finals, Miami Heat, and Houston Rockets. The system then uses the feature_extractor function to convert the keywords to vectors.

select feature_extractor('text', 'NBA sports,finals,Miami Heat,Houston Rockets'));The system uses the user feature vectors to search for relevant news information in the news table. For example, execute the SELECT statement to obtain the first 500 pieces of news that may interest the user. Meanwhile, the system filters out articles the user has read. After the news recommendations are obtained, the application pushes them to the user.

select news_id, title, content, (extract(epoch from (now()-create_time)) * w1 + click_times/extract(epoch from (now()-create_time)) * w2 + two_hour_click_times/extract(epoch from (now()-create_time)) * w3 + ann_distance * w4) as rank_score

from (select *, l2_distance(news_vector, feature_extractor('textf', 'NBA sports,finals,Miami Heat,Houston Rockets')) as ann_distance from news order by ann_distance desc limit 500) S

order by rank_score desc;The parameters are described as follows:

Intime Abandons Traditional Databases for PolarDB, Increasing Its ROI by More Than 200%

Alibaba Cloud MaxCompute - October 18, 2021

Alibaba Clouder - September 25, 2020

Alibaba Clouder - April 1, 2021

Alibaba Clouder - May 11, 2020

Alibaba Clouder - July 4, 2017

Kaiwai - September 9, 2019

Platform For AI

Platform For AI

A platform that provides enterprise-level data modeling services based on machine learning algorithms to quickly meet your needs for data-driven operations.

Learn More Epidemic Prediction Solution

Epidemic Prediction Solution

This technology can be used to predict the spread of COVID-19 and help decision makers evaluate the impact of various prevention and control measures on the development of the epidemic.

Learn More Online Education Solution

Online Education Solution

This solution enables you to rapidly build cost-effective platforms to bring the best education to the world anytime and anywhere.

Learn More Accelerated Global Networking Solution for Distance Learning

Accelerated Global Networking Solution for Distance Learning

Alibaba Cloud offers an accelerated global networking solution that makes distance learning just the same as in-class teaching.

Learn MoreMore Posts by ApsaraDB