By Eryu Guan

As the image acceleration standard solution of OpenAnolis, Dragonfly is a P2P-based intelligent image and file distribution tool that aims to improve the efficiency and rate of large-scale file transfer and maximize the use of network bandwidth. It is widely used in application distribution, cache distribution, log distribution, and image distribution.

The current Dragonfly evolved from Dragonfly1.x. While keeping the original core capabilities of Dragonfly1.x, it has been comprehensively upgraded in several aspects, such as system architecture design, product capabilities, and usage scenarios.

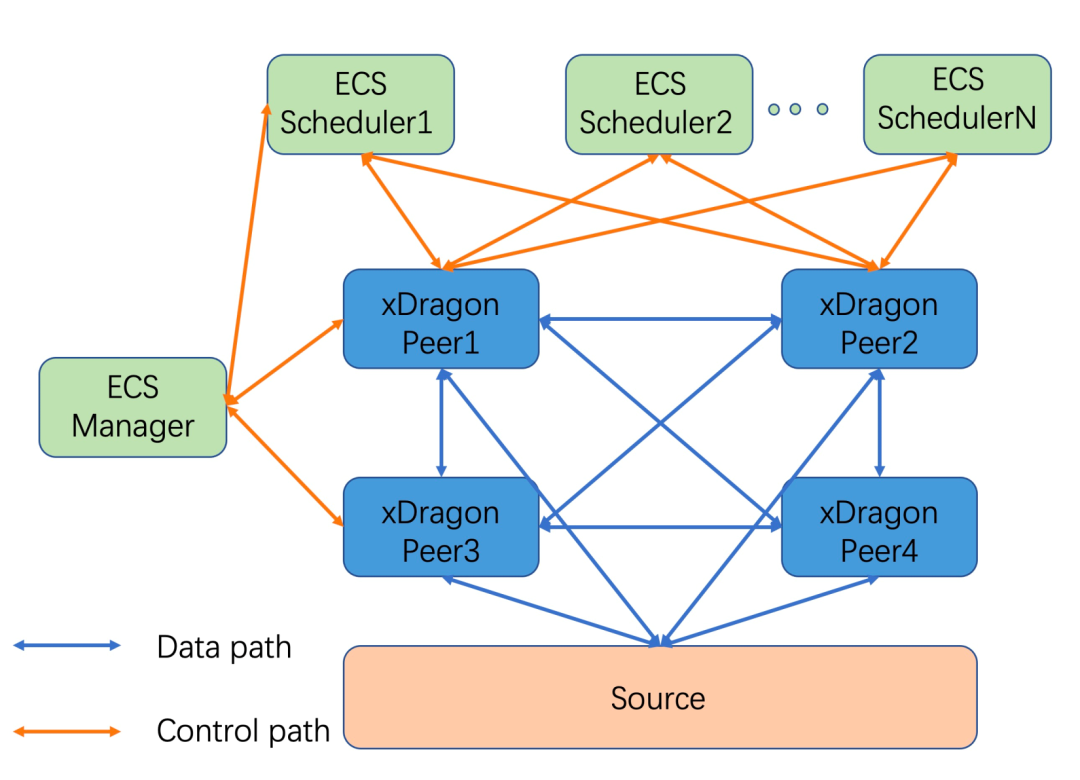

The Dragonfly architecture is mainly divided into three parts: Manager, Scheduler, and Seed Peer. Each performs its own duties, and together they form a P2P download network. Dfdaemon can act as either a Seed Peer or a Peer. Please see the architecture document at the end of this article for more information. The following are the functions of each module:

Please see the Dragonfly website at the end of this article for more information.

Although Dragonfly is known as a P2P-based file distribution system, the files it distributes must be downloadable from the network. Whether it is an rpm package or container image content, there is ultimately a source address. Users initiate a download request to dfdaemon through the dfget command, and the Dragonfly P2P system performs the download. If the data is not available on other Peers, the Peer or SeedPeer goes back to the source, downloads the data directly from it, and returns it to the user.
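The lookup order described above (local data, then other peers, then back to the source) can be illustrated with a toy model. This is only a sketch under stated assumptions: the `Peer` class, the `download` function, and the example URL are hypothetical names for illustration and are not part of Dragonfly's API, which also involves scheduling and piece-level transfer.

```python
from typing import Callable, Dict, List, Tuple


class Peer:
    """Hypothetical peer that stores downloaded content keyed by source URL."""

    def __init__(self, name: str) -> None:
        self.name = name
        self.store: Dict[str, bytes] = {}


def download(
    url: str,
    me: Peer,
    others: List[Peer],
    fetch_from_source: Callable[[str], bytes],
) -> Tuple[bytes, str]:
    """Return (data, origin); origin is 'local', a peer name, or 'source'."""
    if url in me.store:
        return me.store[url], "local"
    for peer in others:
        if url in peer.store:
            # Keep a copy so that later requests can be served from this peer.
            me.store[url] = peer.store[url]
            return me.store[url], peer.name
    # No peer holds the data: go back to the source address.
    data = fetch_from_source(url)
    me.store[url] = data
    return data, "source"


# Example: the first download hits the source, the second is served by peer "a".
source = {"https://example.com/pkg.rpm": b"rpm-bytes"}
a, b = Peer("a"), Peer("b")
first = download("https://example.com/pkg.rpm", a, [b], source.__getitem__)
second = download("https://example.com/pkg.rpm", b, [a], source.__getitem__)
print(first[1], second[1])
```

The sketch makes the limitation visible: every path ends in `fetch_from_source`, so data without a source address has no entry point into the network.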

However, in some scenarios, the data we need to distribute is generated on a certain node and has no remote source address. Dragonfly cannot distribute such data. Therefore, we want Dragonfly to support this scenario, that is, to serve as a distributed P2P-based cache and arbitrary data distribution system.

This is what we think the Dragonfly cache system architecture should be:

- A dfdaemon should be deployed on each compute node (such as X-Dragon) as a peer to join the P2P network.
- One or more managers act as cluster managers.

The original daemon interface:

pkg/rpc/dfdaemon/dfdaemon.proto

// Daemon Client RPC Service

service Daemon{

// Trigger client to download file

rpc Download(DownRequest) returns(stream DownResult);

// Get piece tasks from other peers

rpc GetPieceTasks(base.PieceTaskRequest)returns(base.PiecePacket);

// Check daemon health

rpc CheckHealth(google.protobuf.Empty)returns(google.protobuf.Empty);

}

Add 4 interfaces:

service Daemon {

// Check if given task exists in P2P cache system

rpc StatTask(StatTaskRequest) returns(google.protobuf.Empty);

// Import the given file into P2P cache system

rpc ImportTask(ImportTaskRequest) returns(google.protobuf.Empty);

// Export or download file from P2P cache system

rpc ExportTask(ExportTaskRequest) returns(google.protobuf.Empty);

// Delete file from P2P cache system

rpc DeleteTask(DeleteTaskRequest) returns(google.protobuf.Empty);

}

The original scheduler interface:

// Scheduler System RPC Service

service Scheduler{

// RegisterPeerTask registers a peer into one task.

rpc RegisterPeerTask(PeerTaskRequest)returns(RegisterResult);

// ReportPieceResult reports piece results and receives peer packets.

// when migrating to another scheduler,

// it will send the last piece result to the new scheduler.

rpc ReportPieceResult(stream PieceResult)returns(stream PeerPacket);

// ReportPeerResult reports downloading result for the peer task.

rpc ReportPeerResult(PeerResult)returns(google.protobuf.Empty);

// LeaveTask makes the peer leaving from scheduling overlay for the task.

rpc LeaveTask(PeerTarget)returns(google.protobuf.Empty);

}

Add 2 interfaces. Download reuses the existing RegisterPeerTask() interface, and delete reuses the existing LeaveTask() interface:

// Scheduler System RPC Service

service Scheduler{

// Checks if any peer has the given task

rpc StatTask(StatTaskRequest)returns(Task);

// A peer announces that it has the announced task to other peers

rpc AnnounceTask(AnnounceTaskRequest) returns(google.protobuf.Empty);

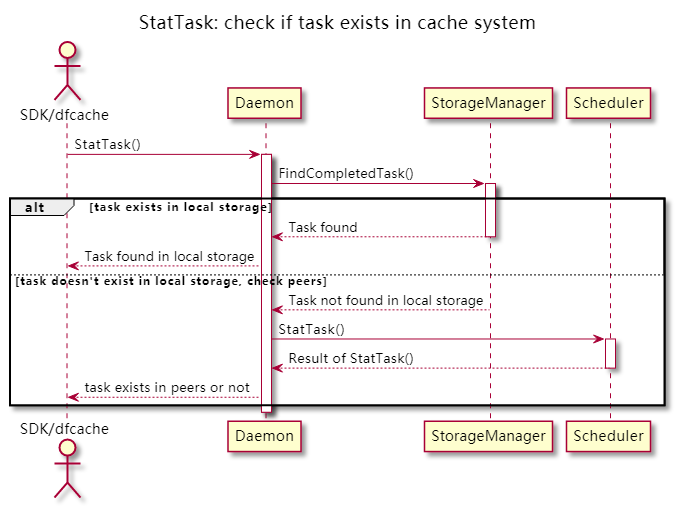

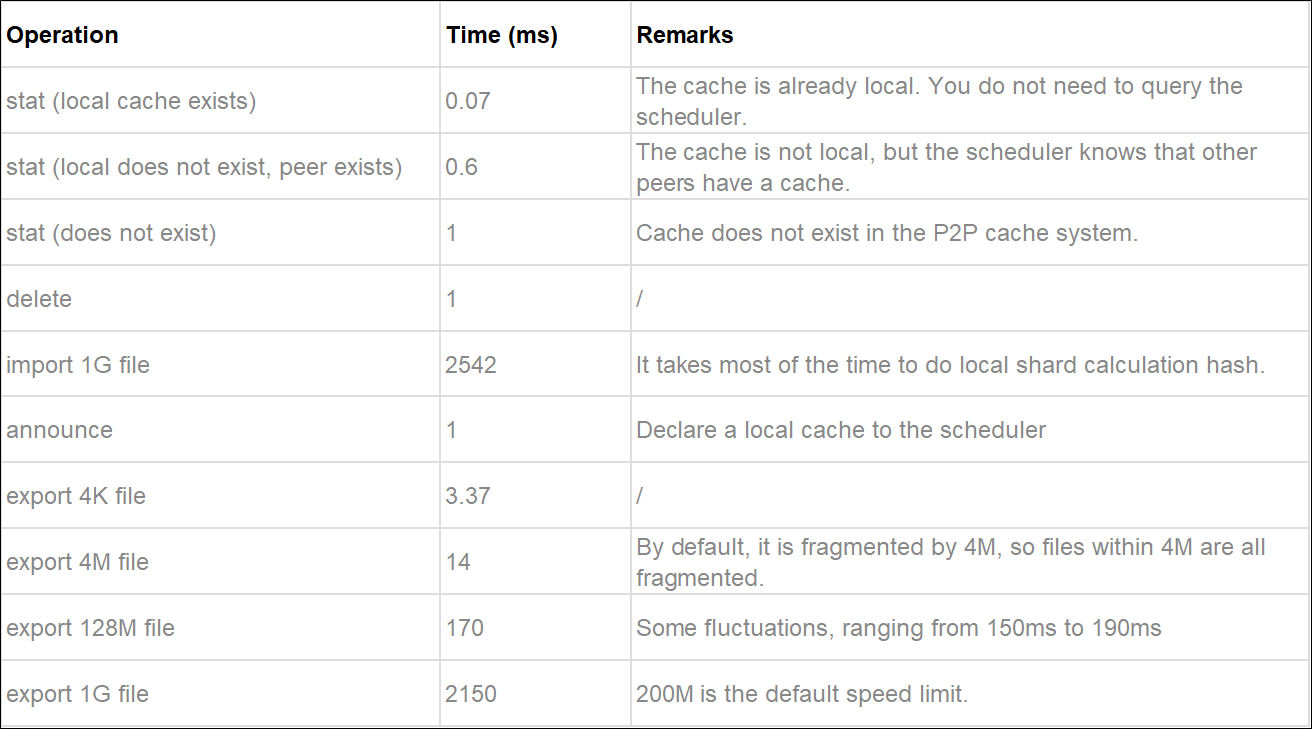

}StatTask

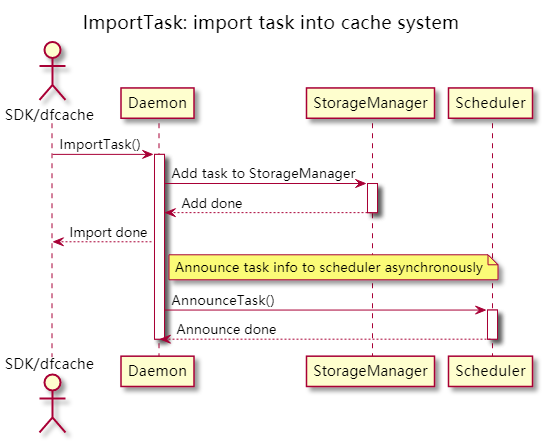

ImportTask

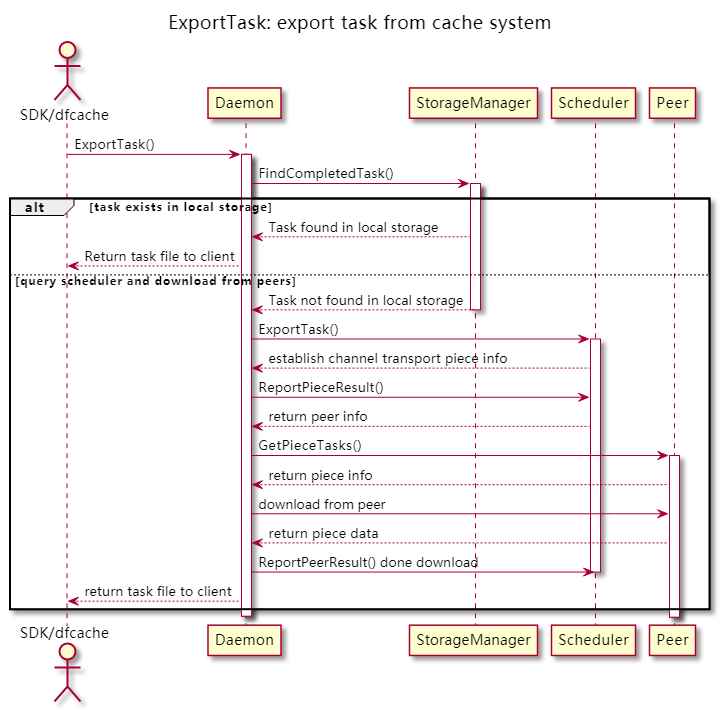

ExportTask

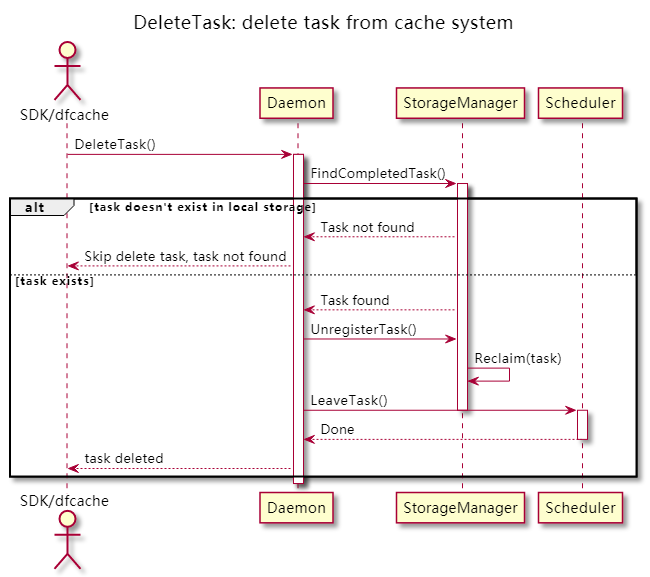

DeleteTask

The code has been merged and can be used in Dragonfly v2.0.3.

Upstream PR:

https://github.com/dragonflyoss/Dragonfly2/pull/1227
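To make the intended semantics of the new interfaces easier to follow, here is a minimal in-memory sketch of how a daemon and a scheduler could cooperate for stat, import, export, and delete. It is not the merged implementation: the `Scheduler` and `Daemon` classes, the `network` dictionary, and the whole-file copy are assumptions made for illustration, and details such as piece-level transfer and error handling are left out.

```python
from typing import Dict, Set


class Scheduler:
    """Toy scheduler that records which peers have announced which task."""

    def __init__(self) -> None:
        self.holders: Dict[str, Set[str]] = {}

    def announce_task(self, task_id: str, peer_id: str) -> None:
        self.holders.setdefault(task_id, set()).add(peer_id)

    def stat_task(self, task_id: str) -> bool:
        return bool(self.holders.get(task_id))

    def leave_task(self, task_id: str, peer_id: str) -> None:
        peers = self.holders.get(task_id)
        if peers is not None:
            peers.discard(peer_id)
            if not peers:
                del self.holders[task_id]


class Daemon:
    """Toy daemon; `network` stands in for the P2P network (assumption)."""

    def __init__(self, peer_id: str, scheduler: Scheduler,
                 network: Dict[str, "Daemon"]) -> None:
        self.peer_id = peer_id
        self.scheduler = scheduler
        self.network = network
        self.cache: Dict[str, bytes] = {}
        network[peer_id] = self

    def import_task(self, task_id: str, data: bytes) -> None:
        # Store locally, then tell the scheduler that this peer has the task.
        self.cache[task_id] = data
        self.scheduler.announce_task(task_id, self.peer_id)

    def stat_task(self, task_id: str, local_only: bool = False) -> bool:
        if task_id in self.cache:
            return True
        return False if local_only else self.scheduler.stat_task(task_id)

    def export_task(self, task_id: str) -> bytes:
        if task_id not in self.cache:
            holders = self.scheduler.holders.get(task_id)
            if not holders:
                # There is no source address to fall back to.
                raise KeyError(task_id)
            donor = self.network[next(iter(holders))]
            self.import_task(task_id, donor.cache[task_id])
        return self.cache[task_id]

    def delete_task(self, task_id: str) -> None:
        # Drop the local copy and leave the task on the scheduler.
        self.cache.pop(task_id, None)
        self.scheduler.leave_task(task_id, self.peer_id)


scheduler, network = Scheduler(), {}
node1 = Daemon("node1", scheduler, network)
node2 = Daemon("node2", scheduler, network)
node1.import_task("testid", b"payload")
print(node2.stat_task("testid", local_only=True), node2.stat_task("testid"))
print(node2.export_task("testid"))
```

In this model, the key difference from a normal download is that a missing task raises an error instead of falling back to a source, because imported data has no source address.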

In addition to the new interfaces, we added a command called dfcache for testing. Its usage is listed below:

- add a file into cache system

dfcache import --cid sha256:xxxxxx --tag testtag /path/to/file

- check if a file exists in cache system

dfcache stat --cid testid --local # only check local cache

dfcache stat --cid testid # check other peers as well

- export/download a file from cache system

dfcache export --cid testid -O /path/to/output

- delete a file from cache system, both local cache and P2P network

dfcache delete -i testid -t testtag

Add files of different sizes to the P2P cache system on a node using the added dfcache command and then query, download, and delete the files on another node. Example:

# dd if=/dev/urandom of=testfile bs=1M count=1024

# dfcache stat -i testid # Check a file that does not exist.

# dfcache import -i testid testfile

# on another node

# dfcache stat -i testid

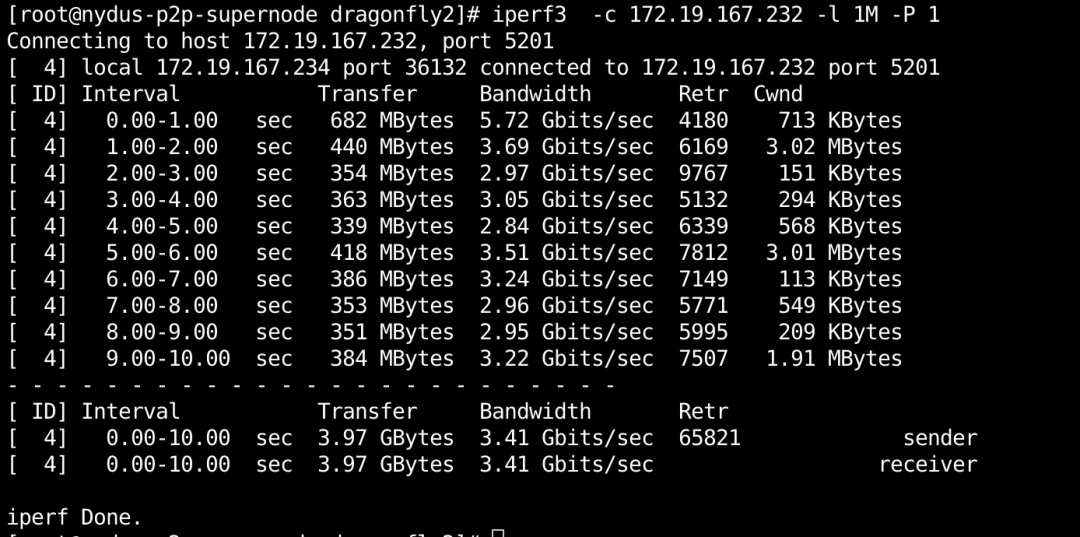

# dfcache export -i testid testfile.export

The test uses two ECS instances on a VPC network with a bandwidth of 3.45 Gbits/s (about 440MiB/s):

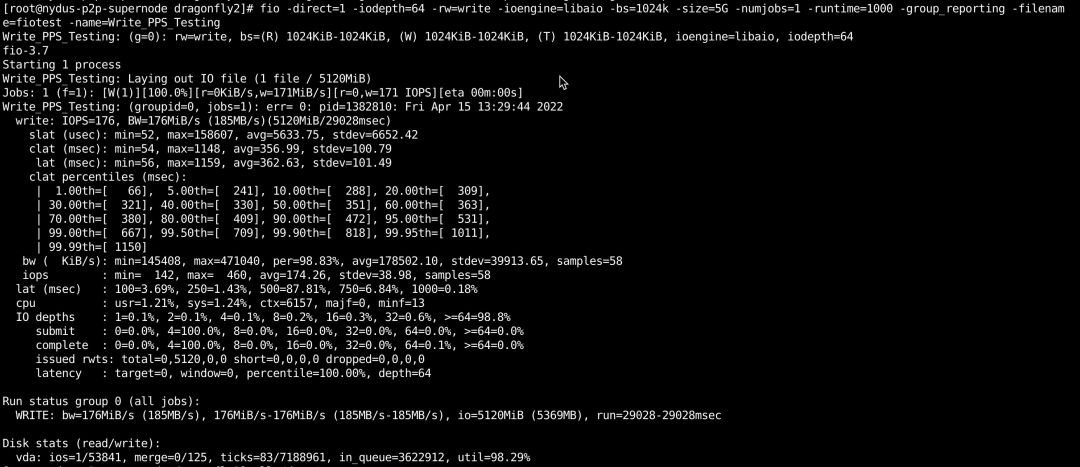

The disk bandwidth of the downloading ECS instance is about 180MiB/s.
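As a rough sanity check on these numbers, the following sketch converts the network figure to MiB/s and estimates how long the 1024 MiB test file from the dd example would take when the slower of the two resources is the bottleneck. The helper names are hypothetical. Note that the 440 MiB/s figure corresponds to reading "G" as 2^30; with a decimal prefix the same 3.45 Gbits/s is closer to 411 MiB/s. Either way, the network is well above the 180 MiB/s disk.

```python
MIB = 2**20  # bytes per MiB


def gbit_to_mib_per_s(gbit_per_s: float, binary_prefix: bool = False) -> float:
    """Convert Gbit/s to MiB/s, reading 'G' as 10**9 or 2**30 bits."""
    bits_per_s = gbit_per_s * (2**30 if binary_prefix else 10**9)
    return bits_per_s / 8 / MIB


def transfer_seconds(size_mib: float, *rates_mib_per_s: float) -> float:
    """Time to move size_mib when the slowest rate is the bottleneck."""
    return size_mib / min(rates_mib_per_s)


network_decimal = gbit_to_mib_per_s(3.45)
network_binary = gbit_to_mib_per_s(3.45, binary_prefix=True)
print(f"network: {network_decimal:.1f} MiB/s (decimal), "
      f"{network_binary:.1f} MiB/s (binary)")
print(f"1024 MiB file, disk-bound: {transfer_seconds(1024, 440, 180):.2f} s")
```

Under these assumptions, the disk rather than the network bounds the export time in this setup.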
