Programming in SQL is both exciting and challenging. Even experienced SQL programmers, developers, and database administrators (DBAs) sometimes struggle with the language. This article aims to help users identify the most critical mistakes and learn how to overcome them.

Let's dive into the eight worst SQL mistakes in the following sections.

Paged queries are one of the most common scenarios, and also one of the most common sources of problems. For example, for the following simple statement, DBAs often add a composite index on the type, name, and create_time fields. With such an index, both the filter and the sort can use the index, and performance improves quickly. More than 90% of DBAs solve the problem this way.

However, when the LIMIT clause is changed to "LIMIT 1000000,10", programmers often complain that it takes too long to retrieve merely 10 records. This happens because the database does not know where the 1,000,000th record starts, so even when an index is available, it must count from the beginning and discard the first 1,000,000 rows. This performance problem usually stems from programmers taking the easy way out. In scenarios such as frontend data browsing and paging or the batch export of big data, the maximum value on the previous page can be used as a query condition. Rewrite the SQL code as follows:
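The original statements are not reproduced here, so the following is only a minimal sketch of the two forms. The table name `operation`, the index name, and the literal values are hypothetical; the type, name, and create_time columns come from the text above.

```sql
-- Assumed composite index matching the filter and sort columns.
-- CREATE INDEX idx_type_name_time ON operation (type, name, create_time);

-- Offset paging: MySQL still walks past the first 1,000,000 index entries.
SELECT *
FROM operation
WHERE type = 'SQLStats'
  AND name = 'SlowLog'
ORDER BY create_time
LIMIT 1000000, 10;

-- Keyset paging: seek straight to the rows after the previous page.
-- '2017-03-16 14:00:00' stands for the largest create_time on the previous page.
SELECT *
FROM operation
WHERE type = 'SQLStats'
  AND name = 'SlowLog'
  AND create_time > '2017-03-16 14:00:00'
ORDER BY create_time
LIMIT 10;
```

This sketch assumes create_time values are unique within the filtered rows. If ties are possible, a tie-breaking column such as the primary key would need to be added to both the ORDER BY clause and the condition.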

With the new design, the query time is basically fixed and does not change as data volumes increase.

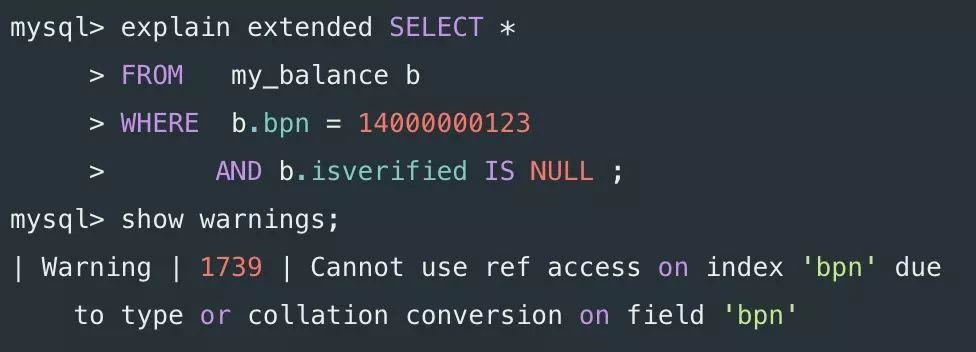

Another common error is implicit conversion, which occurs when query variables do not match the field definition types in SQL statements. The following statement is one such example:

The bpn field is defined as varchar(20), and MySQL's rule is to convert the string into a number before comparing the two. Because the conversion function is then applied to the table field, the index becomes unusable. This problem may be caused by parameters that the application framework fills in automatically rather than by a conscious mistake on the part of the programmer. Many application frameworks today are complicated. Although they are convenient to use, be aware of the potential problems they may cause.
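As a hedged illustration of the mismatch, assume a hypothetical table `my_balance` with an index on bpn. The table name and the literal value are illustrative only.

```sql
-- bpn is varchar(20) and indexed (assumed), but the literal is a number.
-- MySQL compares the two as numbers, so it has to convert bpn for every
-- row and cannot use the index for a lookup.
SELECT * FROM my_balance WHERE bpn = 14000000123;

-- Quoting the literal keeps the comparison string-to-string,
-- so the index on bpn stays usable.
SELECT * FROM my_balance WHERE bpn = '14000000123';
```

Running EXPLAIN on both forms is a quick way to confirm whether the index is used.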

Although subquery materialization was introduced in MySQL 5.6, note that at present it is applied only to query (SELECT) statements. UPDATE and DELETE statements must be rewritten manually as JOIN statements.

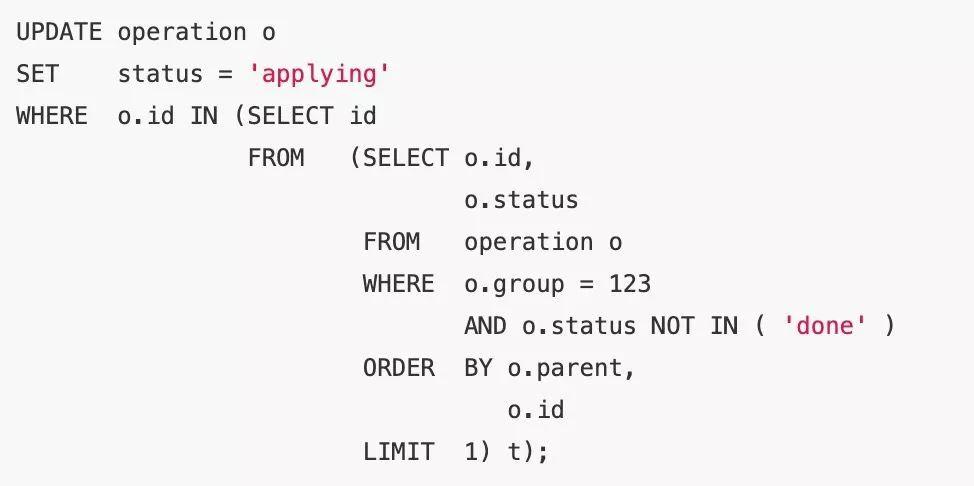

For example, in the following UPDATE statement, MySQL actually runs the subquery as a looped, nested subquery (DEPENDENT SUBQUERY), so the execution time is relatively long.

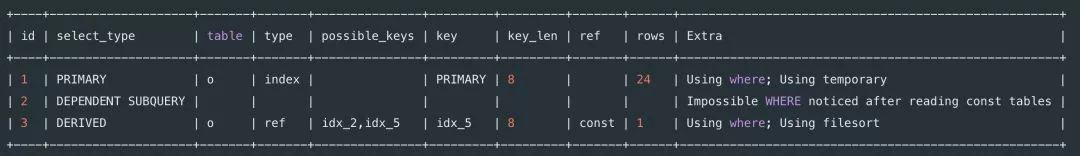

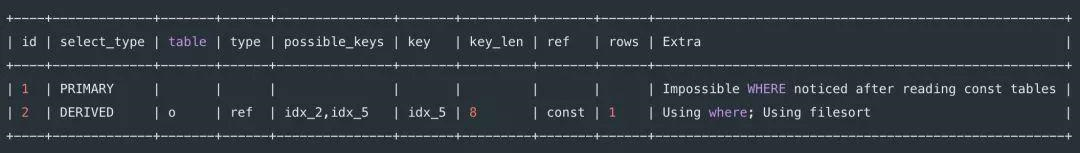

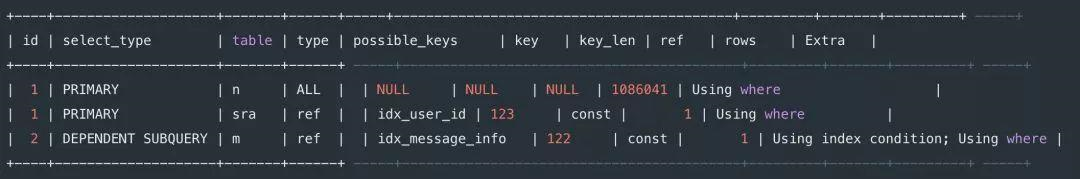

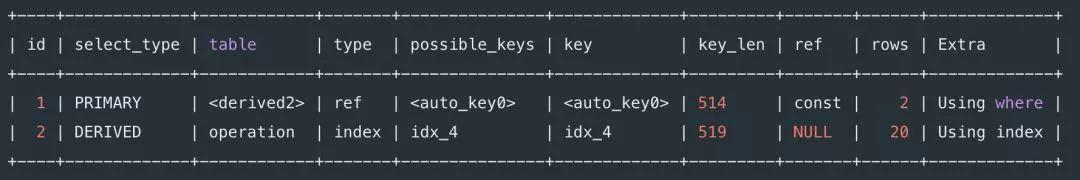

Consider the following execution plan.

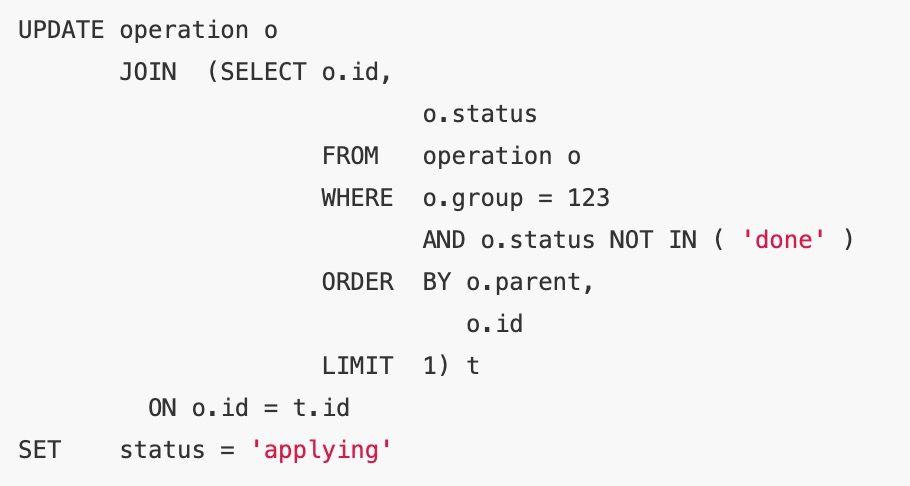

After the statement is rewritten as a JOIN statement, the select_type of the subquery changes from DEPENDENT SUBQUERY to DERIVED, which reduces the execution time from 7 seconds to 2 milliseconds.
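The following sketch shows the general shape of such a rewrite. The `operation` table and its columns (group_id, status, parent) are hypothetical and are not taken from the original example.

```sql
-- Subquery form. The inner SELECT is wrapped in a derived table (t)
-- because MySQL does not allow a subquery to read the UPDATE target directly.
UPDATE operation o
SET o.status = 'applying'
WHERE o.id IN (
    SELECT t.id
    FROM (SELECT id
          FROM operation
          WHERE group_id = 123
            AND status <> 'done'
          ORDER BY parent, id
          LIMIT 1) t
);

-- JOIN form: the derived table is computed once and joined to the target.
UPDATE operation o
JOIN (SELECT id
      FROM operation
      WHERE group_id = 123
        AND status <> 'done'
      ORDER BY parent, id
      LIMIT 1) t ON o.id = t.id
SET o.status = 'applying';
```

Comparing the EXPLAIN output of the two statements should show whether the subquery is still planned as DEPENDENT SUBQUERY on a given MySQL version.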

Refer to the following simplified execution plan.

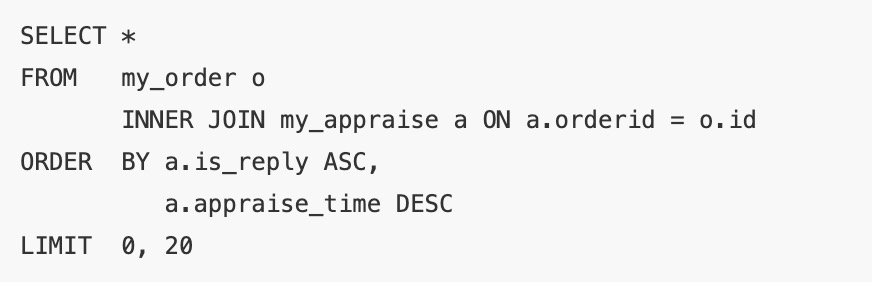

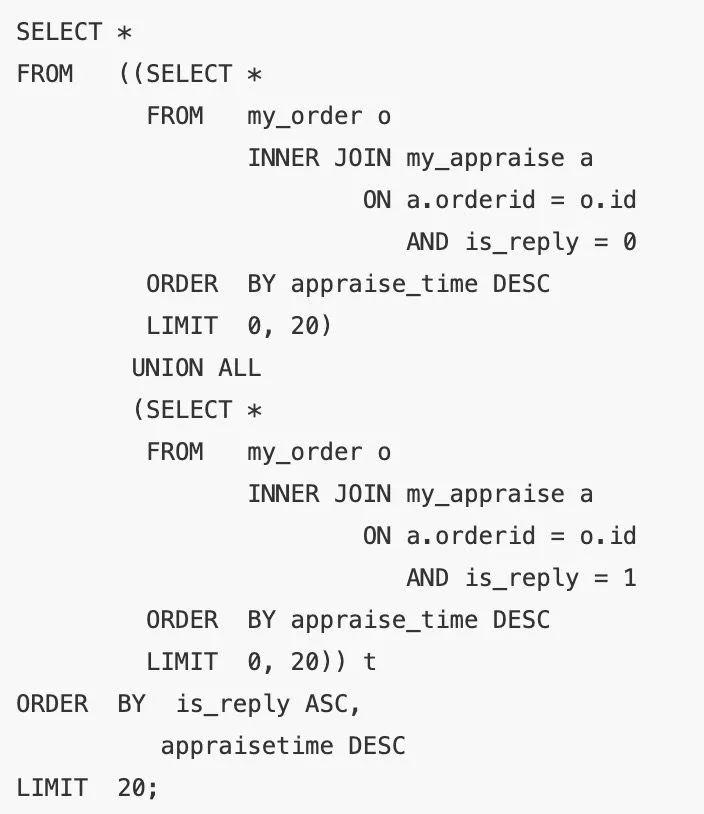

MySQL cannot use indexes for mixed sorting, that is, an ORDER BY clause that combines ascending and descending columns. However, in some scenarios, special rewrites can still improve performance.

The execution plan shows a full table scan.

Since is_reply has only two states, 0 and 1, rewriting the statement as follows reduces the execution time from 1.58 seconds to 2 milliseconds.
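One possible rewrite, sketched under assumptions: because is_reply takes only the values 0 and 1, each value can be queried separately with a single-direction sort, and the two small results can then be combined. The `my_appraise` table, the orderid and appraise_time columns, and an index on (is_reply, appraise_time) are all hypothetical.

```sql
-- Mixed sort: is_reply ascending, appraise_time descending.
SELECT o.id AS order_id, a.is_reply, a.appraise_time
FROM my_order o
JOIN my_appraise a ON a.orderid = o.id
ORDER BY a.is_reply ASC, a.appraise_time DESC
LIMIT 20;

-- Rewrite: one branch per is_reply value, each sorted in one direction only.
SELECT *
FROM ((SELECT o.id AS order_id, a.is_reply, a.appraise_time
       FROM my_order o
       JOIN my_appraise a ON a.orderid = o.id
       WHERE a.is_reply = 0
       ORDER BY a.appraise_time DESC
       LIMIT 20)
      UNION ALL
      (SELECT o.id AS order_id, a.is_reply, a.appraise_time
       FROM my_order o
       JOIN my_appraise a ON a.orderid = o.id
       WHERE a.is_reply = 1
       ORDER BY a.appraise_time DESC
       LIMIT 20)) t
ORDER BY t.is_reply ASC, t.appraise_time DESC
LIMIT 20;
```

Each branch returns at most 20 rows, so the final sort works on at most 40 rows regardless of table size.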

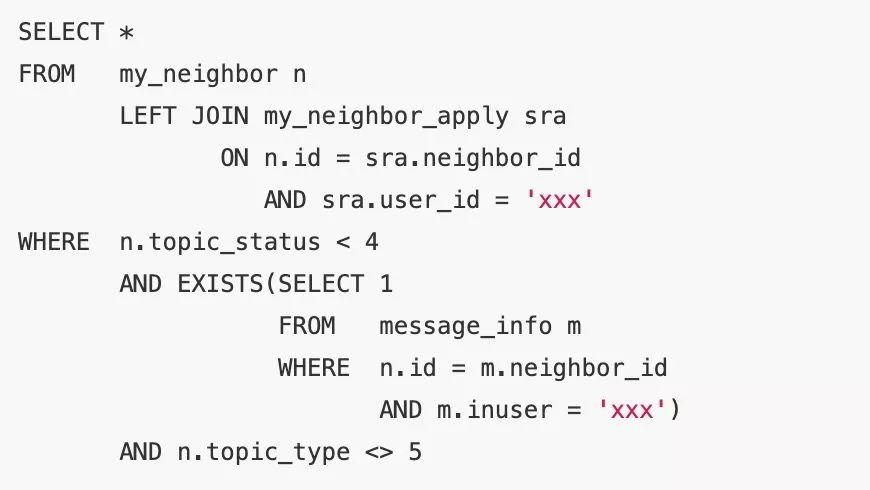

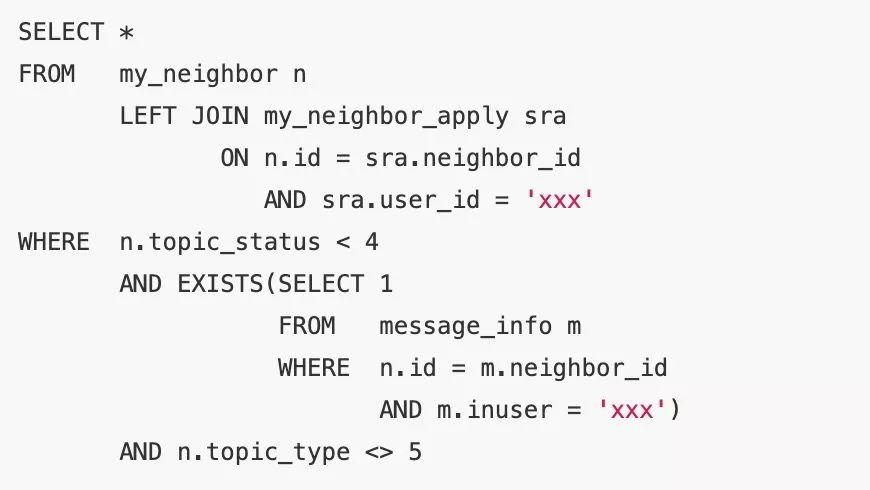

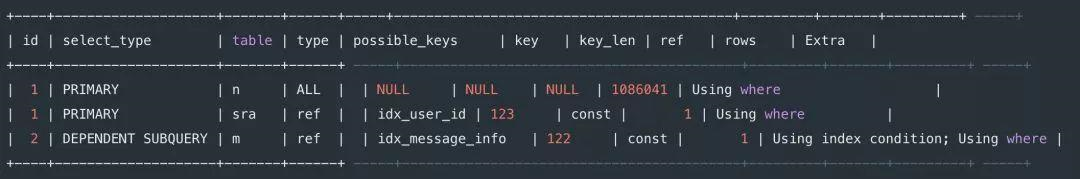

MySQL still uses nested subqueries to process EXISTS clauses. For example, consider the SQL statement below:

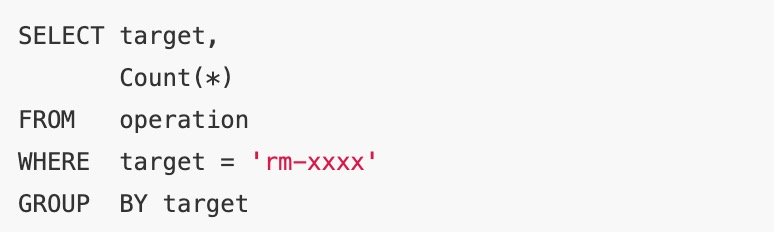

Refer to the following execution plan.

Change the EXISTS statement to a JOIN statement to avoid nested subqueries and reduce the execution time from 1.93 seconds to 1 millisecond.
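A minimal sketch of this kind of rewrite follows. The tables `my_neighbor` and `message_info` and their columns are hypothetical stand-ins, and 'xxx' is a placeholder value.

```sql
-- EXISTS form: may be executed as a dependent subquery, once per row of n.
SELECT n.*
FROM my_neighbor n
WHERE n.topic_status < 4
  AND EXISTS (SELECT 1
              FROM message_info m
              WHERE m.neighbor_id = n.id
                AND m.inuser = 'xxx');

-- JOIN form: the same filter expressed as an inner join.
SELECT n.*
FROM my_neighbor n
JOIN message_info m
  ON m.neighbor_id = n.id
 AND m.inuser = 'xxx'
WHERE n.topic_status < 4;
```

The two forms return the same rows only if each my_neighbor row matches at most one message_info row. If several matches are possible, the JOIN form would need SELECT DISTINCT to avoid duplicates.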

Consider the new execution plan below.

An external query condition cannot be pushed down to a complex view or subquery in the following situations:

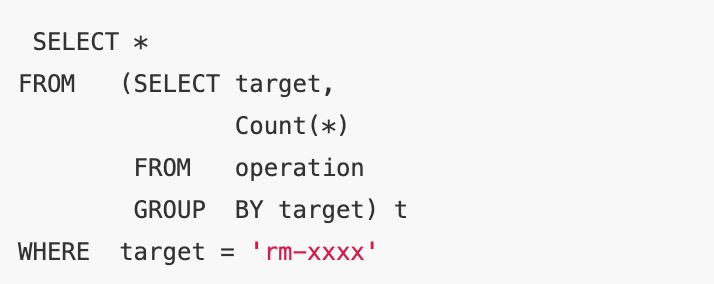

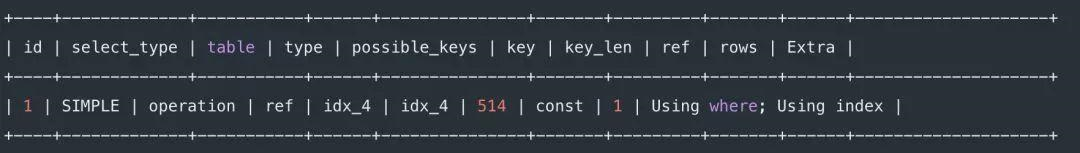

From the execution plan for the following statement, note that the condition acts after the aggregate subquery.

After confirming that the query condition can be pushed down directly without changing the semantics, rewrite the statement as follows:
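As an illustration of the idea, here is a small sketch with an aggregate subquery. The `operation` table, the target column, and the value 'rm-example' are hypothetical.

```sql
-- The outer condition is applied only after the whole table is aggregated.
SELECT *
FROM (SELECT target, COUNT(*) AS cnt
      FROM operation
      GROUP BY target) t
WHERE t.target = 'rm-example';

-- Pushed down by hand: filter first, then aggregate the matching rows only.
SELECT target, COUNT(*) AS cnt
FROM operation
WHERE target = 'rm-example'
GROUP BY target;
```

The rewrite is safe here because the filter is on the grouping column, so it removes whole groups and does not change the count of the remaining group.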

Refer to the following updated execution plan.

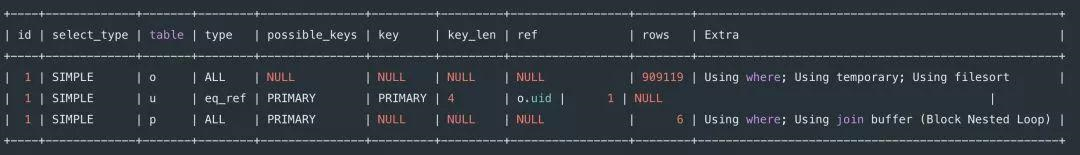

Start with the initial SQL statement as shown below.

The statement involves 900,000 rows, and the execution takes 12 seconds.

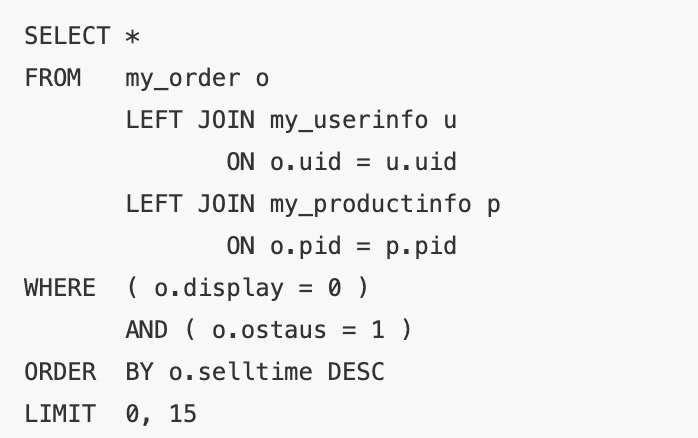

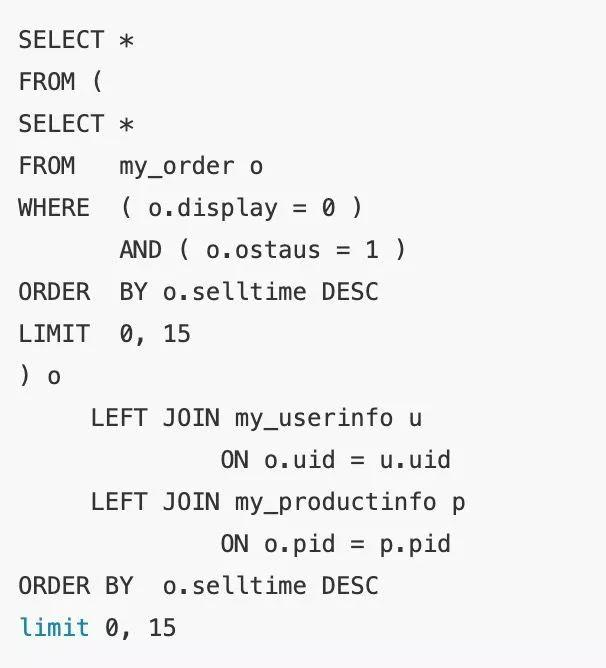

Since the last WHERE condition and sorting are performed on the leftmost primary table, first narrow down the data volume for my_order sorting before performing the left join. After the SQL statement is rewritten as follows, the execution time is reduced to about 1 ms.
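The following sketch shows the shape of this rewrite. Only my_order comes from the text; the joined tables `my_userinfo` and `my_productinfo` and all column names are hypothetical.

```sql
-- Before: join everything, then filter, sort, and keep 15 rows.
SELECT o.*, u.uname, p.pname
FROM my_order o
LEFT JOIN my_userinfo u ON o.uid = u.uid
LEFT JOIN my_productinfo p ON o.pid = p.pid
WHERE o.display = 0
  AND o.ostatus = 1
ORDER BY o.selltime DESC
LIMIT 0, 15;

-- After: pick the 15 my_order rows first, then join only those rows.
SELECT o.*, u.uname, p.pname
FROM (SELECT *
      FROM my_order
      WHERE display = 0
        AND ostatus = 1
      ORDER BY selltime DESC
      LIMIT 0, 15) o
LEFT JOIN my_userinfo u ON o.uid = u.uid
LEFT JOIN my_productinfo p ON o.pid = p.pid
ORDER BY o.selltime DESC
LIMIT 0, 15;
```

The sketch assumes that each order matches at most one row in each joined table. Otherwise the two statements can return different rows.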

Review the execution plan. After the subquery is materialized, the derived table (select_type=DERIVED) participates in the JOIN operation. Although the estimated number of rows to be scanned remains 900,000, the actual execution time drops once the index and the LIMIT clause are applied.

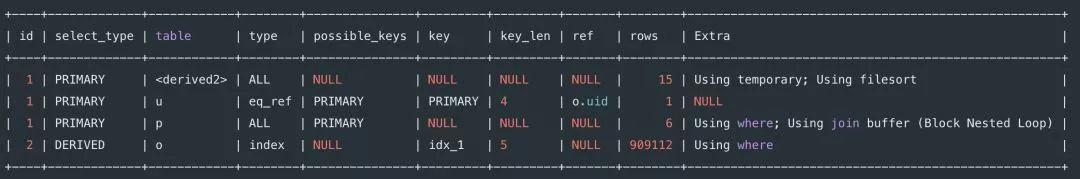

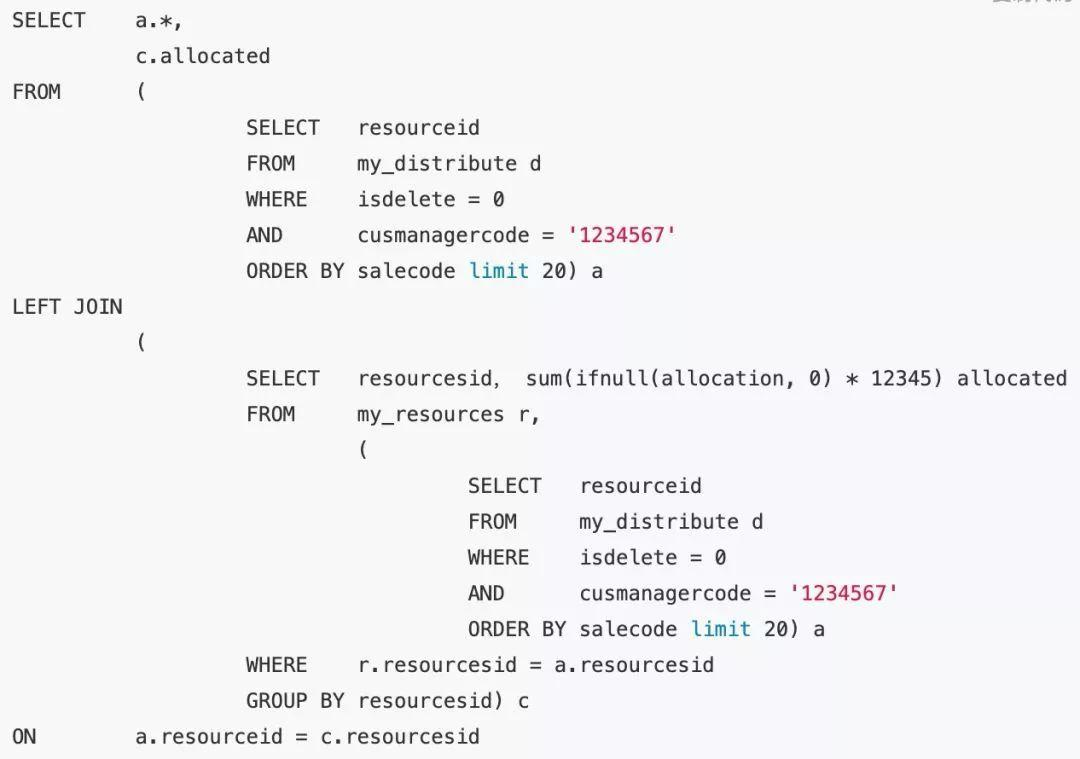

Let's take a look at the following initially optimized example (the query condition first acts on the primary table in the left join):

Are there any other problems with this statement? It is easy to see that subquery c is a full table aggregate query. Therefore, when the table is particularly large, the performance of the entire statement drops.

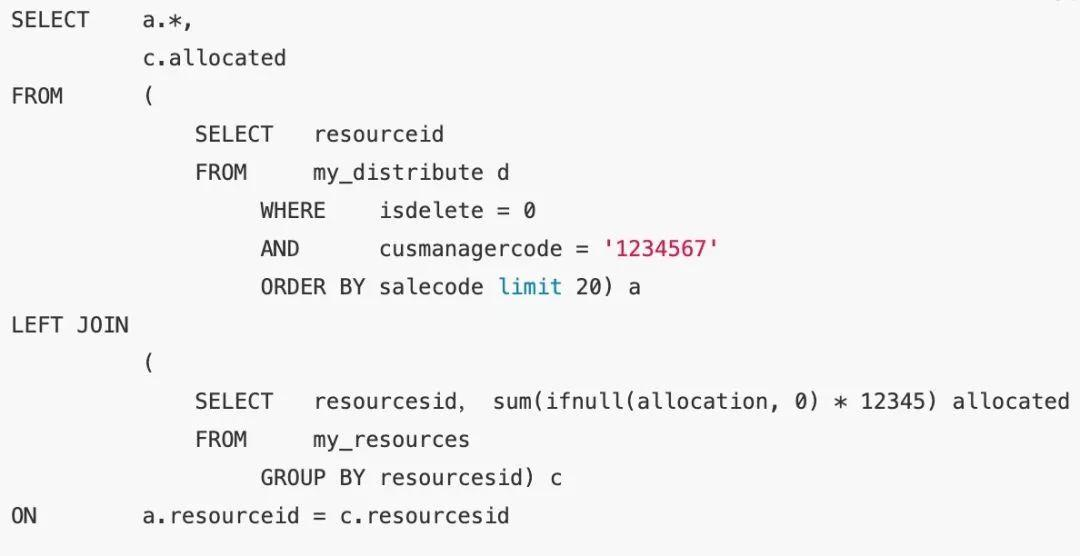

In fact, for subquery c, the final result set of the left join is only concerned with the data that matches the primary table resourceid. Therefore, rewrite the statement as follows to reduce the execution time from 2 seconds to 2 milliseconds.

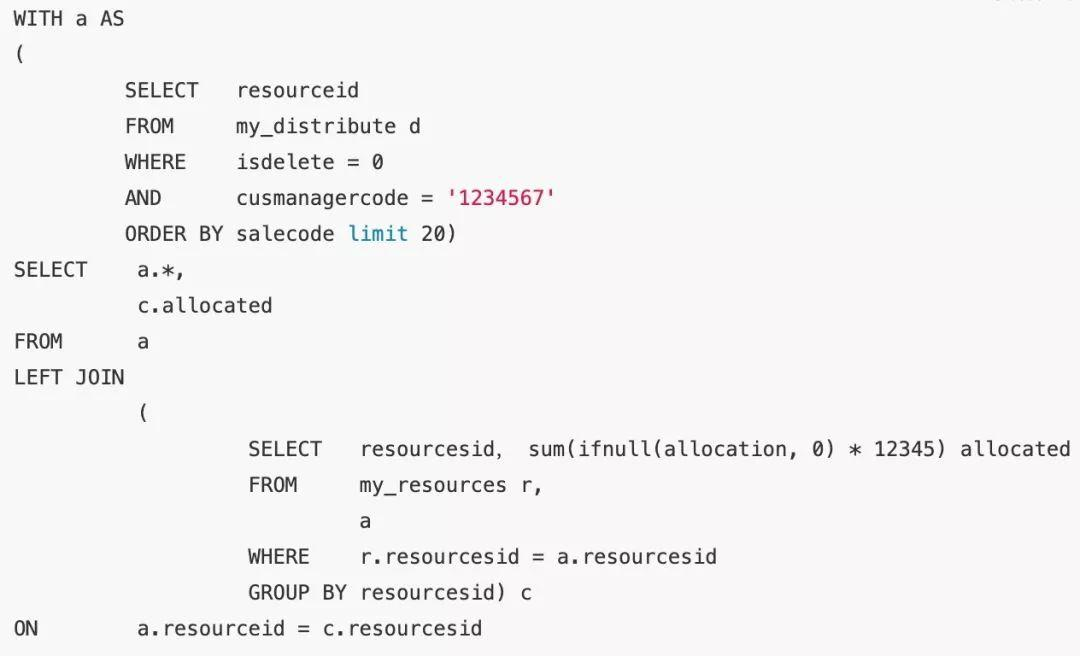

However, the same subquery now appears in the SQL statement multiple times. This not only incurs additional overhead but also makes the entire statement more complicated. Use a WITH statement to rewrite the statement again.
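A hedged sketch of such a WITH rewrite is shown below. It assumes MySQL 8.0 or later, which supports common table expressions. The tables `my_distribute` and `my_resources` and every column other than resourceid are hypothetical.

```sql
-- a: the narrowed-down primary result, defined once and reused twice.
WITH a AS (
    SELECT resourceid
    FROM my_distribute
    WHERE isdelete = 0
      AND cusmanagercode = '1234567'
    ORDER BY salecode
    LIMIT 20
)
SELECT a.resourceid, c.allocated
FROM a
LEFT JOIN (
    -- c: aggregate only the rows that match a, not the whole table.
    SELECT r.resourcesid, SUM(IFNULL(r.allocation, 0)) AS allocated
    FROM my_resources r
    JOIN a ON a.resourceid = r.resourcesid
    GROUP BY r.resourcesid
) c ON c.resourcesid = a.resourceid;
```

Naming the shared subquery once keeps the statement readable and makes it obvious that both references describe the same set of rows.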

The database compiler generates an execution plan, which determines how an SQL statement is actually executed. However, the compiler works on a best-effort basis, and no database compiler is perfect.

In most of the aforementioned scenarios, performance problems also occur in other databases. You must understand the features of the database compiler to avoid its shortcomings and write high-performance SQL statements.

When designing data models and writing SQL statements, bring algorithmic thinking and awareness to the task. For example, use WITH clauses when writing complex SQL statements, whenever possible. Simple and clear SQL statements also reduce the load on the database.
